New and Changed Information

The following table provides an overview of the significant changes up to this current release. The table does not provide an exhaustive list of all changes or of the new features up to this release.

| Cisco APIC Release Version | Feature | Description |
|---|---|---|
| 5.0(x) | Support for VMware teaming policy. | You can configure VMware teaming policy when link aggregation groups (LAGs) are used. For more information, see the section Provisioning Cisco ACI to Work with OpenShift. |
| 5.0(x) | Support for creating multiple clusters in a Cisco Application Centric Infrastructure (ACI) tenant. | This release decouples the Cisco ACI tenant name from the OpenShift cluster name (that is, the System ID provided in the acc-provision input file). The decoupling enables you to put one or more OpenShift clusters into one Cisco ACI tenant by deploying the cluster in a pre-existing tenant. For more information, see the section Provisioning Cisco ACI to Work with OpenShift. |
| 4.2(x) | Kubernetes ReplicationController and OpenShift DeploymentConfig resources. | Support for adding endpoint group (EPG) and SecurityGroup (SG) annotations to Kubernetes ReplicationController and OpenShift DeploymentConfig resources. For more information, see the section Network Policy and EPGs. |
| 4.1(x) | Service account hardening | Every cluster is now configured with a dedicated administrative account that enables you to control read and write permissions for the whole fabric. For more information, see the section Service Account Hardening. |
| 4.0(1) | -- | Added procedure on how to drain a node. For more information, see the procedure Draining a Node. |
| 4.0(1) | Added support for OpenShift with ACI CNI plug-in to run nested on Red Hat OpenStack. | For more information, see the procedure Preparing OpenShift Nodes Running as OpenStack Virtual Machines. |
| 3.1(1) | -- | The release supports integration of OpenShift on bare-metal servers and OpenShift nested on ESXi VMM-based VMs into the Cisco Application Centric Infrastructure (ACI). |
| 3.1(1) and later | Service subnet advertisement | You can configure Cisco Application Centric Infrastructure (ACI) to advertise service subnets externally through a dynamic routing protocol. For more information, see the section Service Subnet Advertisement. |
| 3.1(1) | -- | Added hardware dependency. For more information, see the section Hardware Requirements. |
| 3.1(1) | -- | This guide was released. |

Cisco ACI and OpenShift Integration

OpenShift is a container application platform built on top of Docker and Kubernetes. It makes it easy for developers to create applications, and it gives operators a platform that simplifies the deployment of containers for both development and production workloads. Beginning with Cisco APIC Release 3.1(1), OpenShift can be integrated with Cisco Application Centric Infrastructure (ACI) by using the ACI CNI Plugin.

To integrate Red Hat OpenShift with Cisco ACI you must perform a series of tasks. Some tasks are performed by the fabric administrator directly on APIC, while others are performed by the OpenShift Cluster administrator. Once you have integrated the Cisco ACI CNI Plugin for Red Hat OpenShift, you can use the APIC to view OpenShift endpoints and constructs in Cisco ACI.

This document provides the workflow for integrating OpenShift, Release 3.11, and specific instructions for setting up the Cisco APIC. However, it is assumed that you are familiar with OpenShift and containers and can install OpenShift. Specific instructions for installing OpenShift are beyond the scope of this document.

For documents related to OpenShift, Release 4.x, see the ACI Virtualization > Virtualization — Containers section on the Cisco APIC documentation landing page.

Hardware Requirements

This section provides the hardware requirements:

- Connecting the servers to Gen1 hardware of Cisco Fabric Extenders (FEXes) is not supported and results in a nonworking cluster.

- The use of the symmetric policy-based routing (PBR) feature for load balancing external services requires the use of Cisco Nexus 9300-EX or -FX leaf switches.

  For this reason, the Cisco ACI CNI Plug-in is only supported for clusters that are connected to switches of those models.

Note

UCS-B is supported as long as the UCS Fabric Interconnects are connected to Cisco Nexus 9300-EX or -FX leaf switches.

OpenShift Compatibility Matrix

Verify the compatibility matrix to review specific OpenShift and ACI release support:

Workflow for OpenShift Integration

This section provides a high-level description of the tasks required to integrate OpenShift into the Cisco ACI fabric.

- Prepare for the integration.

  Identify the subnets and VLANs that you will use in your network by following the instructions in the section Planning for OpenShift Integration.

- Fulfill the required day-0 fabric configurations.

  Make sure that you perform all the required fabric configurations before you deploy the OpenShift cluster, as described in the section Prerequisites for Integrating OpenShift with Cisco ACI.

- Configure the Cisco APIC for the OpenShift cluster.

  Many of the required fabric configurations are performed directly by a provisioning tool (acc-provision). The tool is embedded in the plug-in files from www.cisco.com. After you download and install the tool, modify the configuration file with the information from the planning phase and run the tool. For more information, see the section Provisioning Cisco ACI to Work with OpenShift.

- If you plan to deploy the OpenShift cluster running nested on Red Hat OpenStack, see the section Preparing OpenShift Nodes Running as OpenStack Virtual Machines.

- Prepare the OpenShift node network configuration.

  Set up networking for the node to support OpenShift installation. This includes configuring an uplink interface, subinterfaces, and static routes. See the section Preparing the OpenShift Nodes.

- Install OpenShift and Cisco ACI containers.

  Use the appropriate method for your setup. See the section Installing OpenShift and Cisco ACI Containers.

- Update the OpenShift router to use the ACI fabric.

  See the section Updating the OpenShift Router to Use the ACI Fabric.

- Verify the integration.

  Use the Cisco APIC GUI to verify that OpenShift has been integrated into the Cisco ACI. See the section Verifying the OpenShift Integration.

Planning for OpenShift Integration

The OpenShift cluster requires various network resources all of which will be provided by the ACI fabric integrated overlay. The OpenShift cluster will require the following subnets:

- Node subnet: The subnet used for OpenShift control traffic. This is where the OpenShift API services are hosted. The acc-provision tool configures a private subnet. Ensure that it has access to the Cisco APIC management address.

- Pod subnet: The subnet from which the IP addresses of OpenShift pods are allocated. The acc-provision tool configures a private subnet.

  Note

  This subnet specifies the starting address for the IP pool that is used to allocate IP addresses to pods, as well as your ACI bridge domain IP address. For example, if you define it as 192.168.255.254/16, which is a valid configuration from an ACI perspective, your containers do not get an IP address because there are no free IP addresses after 192.168.255.254 in this subnet. We recommend always using the first IP address in the pod subnet. In this example: 192.168.0.1/16.

- Node service subnet: The subnet used for internal routing of load-balanced service traffic. The acc-provision tool configures a private subnet.

  Note

  As with the pod subnet, configure it with the first IP address in the subnet.

- External service subnets: Pools from which load-balanced services are allocated as externally accessible service IPs.

  The externally accessible service IPs could be globally routable. Configure the next-hop router to send traffic to these IPs to the fabric. There are two such pools: one is used for dynamically allocated IPs, and the other is available for services to request a specific fixed external IP.

All of these subnets must be specified in the acc-provision configuration file. The node and pod subnets are provisioned on corresponding ACI bridge domains that the provisioning tool creates. The endpoints on these subnets are learned as fabric endpoints and can communicate directly with any other fabric endpoint without NAT, provided that contracts allow the communication. The node service subnet and the external service subnet are not seen as fabric endpoints. Instead, they are used to manage the ClusterIP and load balancer IP addresses respectively, which are programmed on Open vSwitch through OpFlex. As mentioned earlier, the external service subnet must be routable outside of the fabric.
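The pod subnet note can be checked before provisioning. The following is a minimal sketch, assuming (as the note describes) that pod addresses are allocated upward from the configured address. The function names are hypothetical and are not part of the acc-provision tool.

```python
import ipaddress


def free_addresses_after(gateway_cidr: str) -> int:
    """Count the host addresses that lie above the configured address."""
    iface = ipaddress.ip_interface(gateway_cidr)
    broadcast = int(iface.network.broadcast_address)
    # Addresses strictly between the configured address and the broadcast address.
    return max(broadcast - int(iface.ip) - 1, 0)


def is_first_host(gateway_cidr: str) -> bool:
    """Return True when the configured address is the first usable one in its subnet."""
    iface = ipaddress.ip_interface(gateway_cidr)
    return iface.ip == iface.network.network_address + 1


# The two values discussed in the note: the problematic one and the recommended one.
for cidr in ("192.168.255.254/16", "192.168.0.1/16"):
    print(cidr, "first host:", is_first_host(cidr), "free after:", free_addresses_after(cidr))
```

The same check applies to the node service subnet, which the note also recommends configuring with the first IP address in the subnet.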

OpenShift nodes must be connected to an EPG using VLAN encapsulation. Pods can connect to one or multiple EPGs and use either VLAN or VXLAN encapsulation. Additionally, PBR-based load balancing requires VLAN encapsulation to reach the OpFlex service endpoint IP address of each OpenShift node. The following VLAN IDs are therefore required:

- Node VLAN ID: The VLAN ID used for the EPG mapped to a physical domain for OpenShift nodes.

- Service VLAN ID: The VLAN ID used for delivery of load-balanced external service traffic.

- Fabric infra VLAN ID: The infra VLAN used to extend OpFlex to the OVS on the OpenShift nodes.
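A VLAN plan can be sanity-checked before it goes into the configuration file. This is a rough sketch under two assumptions that are not stated in this guide: that the three IDs should be distinct, and that only the general 802.1Q range of 1 to 4094 is checked (any fabric-specific reserved ranges are ignored). The function name and the service VLAN value are hypothetical. The node and infra values reuse the example IDs that appear later in this guide.

```python
from typing import Dict, List


def check_vlan_plan(vlans: Dict[str, int]) -> List[str]:
    """Return a list of human-readable problems; an empty list means the plan passed."""
    problems = []
    seen = {}  # VLAN ID -> first role that claimed it
    for role, vlan_id in vlans.items():
        if not 1 <= vlan_id <= 4094:
            problems.append(f"{role}: VLAN {vlan_id} is outside the range 1-4094")
        if vlan_id in seen:
            problems.append(f"{role} and {seen[vlan_id]} both use VLAN {vlan_id}")
        else:
            seen[vlan_id] = role
    return problems


plan = {"node": 2031, "service": 2032, "infra": 3085}  # service value is illustrative
print(check_vlan_plan(plan) or "VLAN plan looks consistent")
```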

In addition to providing network resources, read and understand the guidelines in the knowledge base article Cisco ACI and OpFlex Connectivity for Orchestrators.

Prerequisites for Integrating OpenShift with Cisco ACI

The following are required before you can integrate OpenShift with the Cisco ACI fabric:

- A working Cisco ACI fabric running a release that is supported for the desired OpenShift integration

- An attachable entity profile (AEP) set up with the interfaces desired for the OpenShift deployment

  When running in nested mode, this is the AEP for the VMM domain on which OpenShift will be nested.

- A Layer 3 Outside connection, along with a Layer 3 external network to serve as external access

- Virtual routing and forwarding (VRF) configured

  Note

  The VRF and L3Out in Cisco ACI that are used to provide outside connectivity to OpenShift external services can be in any tenant. The most common usage is to put the VRF and L3Out in the common tenant or in a tenant that is dedicated to the OpenShift cluster. You can also have separate VRFs, one for the OpenShift bridge domains and one for the L3Out, and you can configure route leaking between them.

- Any required route reflector configuration for the Cisco ACI fabric

- Ensure that the subnet used for external services is routed by the next-hop router that is connected to the selected ACI L3Out interface. This subnet is not announced by default, so you must configure either static routes or another appropriate routing configuration.

In addition, the OpenShift cluster must be up through the fabric-connected interface on all the hosts. The default route on the OpenShift nodes should point to the ACI node subnet bridge domain. This is not mandatory, but it simplifies the routing configuration on the hosts and is the recommended configuration. If you do not follow this design, ensure that the OpenShift node routing is configured so that all OpenShift cluster traffic is routed through the ACI fabric.

Provisioning Cisco ACI to Work with OpenShift

Use the acc-provision tool to provision the fabric for the OpenShift VMM domain and generate a .yaml file that OpenShift uses to deploy the required Cisco Application Centric Infrastructure (ACI) container components.

This tool requires a configuration file as input and performs two actions as output:

- It configures the relevant parameters on the Cisco ACI fabric.

- It generates a YAML file that OpenShift administrators can use to install the Cisco ACI CNI plug-in and containers on the cluster.

Note

When you use nested ESXi for OpenShift hosts, we recommend that you provision one OpenShift host for each OpenShift cluster on each ESXi server. Doing so ensures that, if an ESXi host fails, only a single OpenShift node is affected for each OpenShift cluster.

Procedure

| Step 1 |

Download the provisioning tool from cisco.com.

|

||

| Step 2 |

Generate a sample configuration file that you can edit. Example:

The command generates a configuration file that looks like the following example:

Notes:

|

||

| Step 3 |

Edit the sample configuration file with the relevant values for each of the subnets, VLANs, and so on, as appropriate to your planning, and then save the file. |

||

| Step 4 |

Provision the Cisco ACI fabric. Example:

This command generates the file aci-containers.yaml that you use after installing OpenShift. It also creates the files user-[system id].key and user-[system id].crt that contain the certificate that is used to access Cisco APIC. Save these files in case you change the configuration later and want to avoid disrupting a running cluster because of a key change. Notes:

|

||

| Step 5 |

(Optional): Advanced optional parameters can be configured to adjust to custom parameters other than the Cisco ACI default values or base provisioning assumptions:

|

Preparing OpenShift Nodes Running as OpenStack Virtual Machines

This section describes how to provision a Red Hat OpenStack installation that uses the Cisco Application Centric Infrastructure (ACI) OpenStack plug-in in order to work with a nested Red Hat OpenShift cluster using the Cisco ACI CNI Plug-in.

Before you begin

-

Ensure that you have a running Red Hat OpenStack installation using the required release of the Cisco ACI OpenStack plugin.

For more information, see the Cisco ACI Installation Guide for Red Hat OpenStack Using OSP Director.

When installing the Overcloud, the ACIOpflexInterfaceType and ACIOpflexInterfaceMTU should be set as follows:

    ACIOpflexInterfaceType: ovs
    ACIOpflexInterfaceMTU: 8000

Procedure

| Step 1 |

Create the neutron network that will be used to connect the OpenStack instances that will be running the OpenShift nodes: Notes:

Example: |

||

| Step 2 |

Launch the OpenStack VMs to host OpenShift. Sub-interfaces have to be created for the node network, infra network and the appropriate MTUs have to be set. Examples of the network-interface configuration files are shown in the following text. In the examples, we assume that the Cisco ACI infra VLAN is 3085, kube node VLAN is 2031, and VLAN 4094 is the VLAN used from OpenStack to provide IP and metadata to the VM.

The configurations in the previous text can be added or updated after the initial VM bringup. The DHCP request for eth0.4093 fails if the DHCP client is not configured. The following configuration needs to be added to the /etc/dhcp/dhcpclient.conf file: Example: |

||

| Step 3 |

For OpenShift to work correctly, the default route on the VM needs to be configured to point to the subinterface created for the Kubernetes node network. In the above example, the infra network is 10.0.0.0/16, the node network is 12.11.0.0/16 and the Neutron network is 1.1.1.0/24. Note that the metadata subnet 169.254.0.0/16 route is added to the eth0.4094 interface. Example: |

You are now ready to install OpenShift. Skip the next section and proceed to Installing OpenShift and Cisco ACI Containers.

Preparing the OpenShift Nodes

After you provision Cisco Application Centric Infrastructure (ACI), you prepare networking for the OpenShift nodes.

Procedure

| Step 1 |

Configure your uplink interface with or without NIC bonding, depending on how your AEP is configured. Set the MTU on this interface to at least 1600, preferably 9000.

|

||||

| Step 2 |

Create a subinterface on your uplink interface on your infra VLAN. Configure this subinterface to obtain an IP address using DHCP. Set the MTU on this interface to 1600. |

||||

| Step 3 |

Configure a static route for the multicast subnet 224.0.0.0/4 through the uplink interface that is used for VXLAN traffic. |

||||

| Step 4 |

Create a subinterface on your uplink interface on your node VLAN, which is called kubeapi_vlan in the configuration file. Configure an IP address on this interface in your node subnet. Then set this interface and the corresponding node subnet router as the default route for the node.

|

||||

| Step 5 |

Create the /etc/dhcp/dhclient-eth0.4093.conf file with the following content, inserting the MAC address of the Ethernet interface for each server on the first line of the file: Example:

The network interface on the infra VLAN requests a DHCP address from the Cisco APIC infrastructure network for OpFlex communication. The server must have a dhclient configuration for this interface to receive all the correct DHCP options with the lease. If you need information on how to configure a vPC interface for the OpenShift servers, see "Manually Configure the Host vPC" in the Cisco ACI with OpenStack OpFlex Deployment Guide for Red Hat on Cisco.com.

|

||||

| Step 6 |

If you have a separate management interface for the node being configured, configure any static routes required to access your management network on the management interface. |

||||

| Step 7 |

Ensure that Open vSwitch (OVS) is not running on the node. |

||||

| Step 8 |

Informational: Here is an example of the interface configuration (/etc/network/interfaces): |

||||

| Step 9 |

Add the following iptables rule to each host to allow IGMP traffic:

    $ iptables -A INPUT -p igmp -j ACCEPT

To make this change persistent across reboots, add the command either to /etc/rc.d/rc.local or to a cron job that runs after reboot. |

||||

| Step 10 |

Tune the igmp_max_memberships kernel parameter. The Cisco ACI Container Network Interface (CNI) plug-in gives you the flexibility to place namespaces, deployments, and pods into dedicated endpoint groups (EPGs). To ensure that broadcast, unknown unicast, and multicast (BUM) traffic is not flooded to all the EPGs, Cisco ACI allocates a dedicated multicast address for BUM traffic replication for every EPG that is created. Recent kernel versions set the default for the igmp_max_memberships parameter to 20, which limits the maximum number of EPGs that can be used to 20. To have more than 20 EPGs, you can increase igmp_max_memberships with the following steps:

|
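Before you change the parameter, it can help to compare the current limit with the number of EPGs you plan to use. The sketch below assumes a Linux host that exposes the value at the standard procfs path for net.ipv4.igmp_max_memberships, and it assumes one multicast membership per EPG, as described in the step above (other memberships on the host are ignored). The function names and the planned EPG count are hypothetical.

```python
from pathlib import Path

# Standard Linux procfs location for the net.ipv4.igmp_max_memberships sysctl.
IGMP_LIMIT_PATH = Path("/proc/sys/net/ipv4/igmp_max_memberships")


def read_igmp_limit(path: Path = IGMP_LIMIT_PATH) -> int:
    """Read the current igmp_max_memberships value."""
    return int(path.read_text().strip())


def limit_is_sufficient(limit: int, planned_epgs: int) -> bool:
    """One multicast group per EPG, so the limit must cover every planned EPG."""
    return planned_epgs <= limit


if __name__ == "__main__":
    planned_epgs = 30  # hypothetical number of EPGs for this cluster
    if IGMP_LIMIT_PATH.exists():
        limit = read_igmp_limit()
        print(f"limit={limit}, sufficient={limit_is_sufficient(limit, planned_epgs)}")
    else:
        print("igmp_max_memberships is not exposed on this system")
```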

Installing OpenShift and Cisco ACI Containers

After you provision Cisco Application Centric Infrastructure (ACI) and prepare the OpenShift nodes, you can install OpenShift and Cisco ACI containers. You can use any installation method appropriate to your environment. We recommend that you use this procedure to install the OpenShift and Cisco ACI containers.

Note |

When installing OpenShift, ensure that the API server is bound to the IP addresses on the node subnet and not to management or other IP addresses. Issues with node routing table configuration, API server advertisement addresses, and proxy are the most common problems during installation. If problems occur, check these issues first. |

Procedure

|

Install OpenShift, following the installation procedure documented in the article Planning your installation for the 3.11 release on the Red Hat OpenShift website. Use the configuration overrides for Cisco ACI CNI Ansible inventory configuration on the GitHub website: https://github.com/openshift/openshift-ansible/tree/release-3.11/roles/aci.

|

Updating the OpenShift Router to Use the ACI Fabric

This section describes how to update the OpenShift router to use the ACI fabric.

Procedure

| Step 1 |

To remove the old router, enter the following commands: Example: |

| Step 2 |

To create the container networking router, enter the following command: Example: |

| Step 3 |

To expose the router service externally, enter the following command: Example: |

Unprovisioning OpenShift from the ACI Fabric

This section describes how to unprovision OpenShift from the ACI fabric.

Procedure

|

To unprovision, enter the following command: Example:

This command unprovisions the resources that have been allocated for this OpenShift cluster. It also deletes the tenant, which is very dangerous if you are using a shared tenant. |

Uninstalling the CNI Plug-In

This section describes how to uninstall the CNI plug-in.

Procedure

|

Uninstall the CNI plug-in using the following command: Example: |

Verifying the OpenShift Integration

After you have performed the previous steps, you can verify the integration in the Cisco APIC GUI. The integration creates a tenant, three EPGs, and a VMM domain.

Procedure

| Step 1 |

Log in to the Cisco APIC. |

| Step 2 |

Go to . The tenant should have the name that you specified in the configuration file that you edited and used in installing OpenShift and the ACI containers. |

| Step 3 |

In the tenant navigation pane, expand the following: . You should see three folders inside the Application EPGs folder:

|

| Step 4 |

In the tenant navigation pane, expand the Networking and Bridge Domains folders. You should see two bridge domains:

|

| Step 5 |

If you deploy OpenShift with a load balancer, go to , expand L4-L7 Services, and perform the following steps:

|

| Step 6 |

Go to . |

| Step 7 |

In the Inventory navigation pane, expand the OpenShift folder. You should see that a VMM domain, with the name that you provided in the configuration file, is created and that the domain contains a folder called Nodes and a folder called Namespaces. |

Using Policy

Network Policy and EPGs

The Cisco ACI and OpenShift integration was designed to offer a highly flexible approach to policy. It was based on two premises: that OpenShift templates do not need to change when they run on Cisco ACI, and that developers are not forced to implement any APIC configuration to launch new applications. At the same time, the solution optionally exposes Cisco ACI EPGs and contracts to OpenShift users if they choose to leverage them for application isolation.

By default, Cisco plug-ins create an EPG and a bridge domain in APIC for the entire OpenShift cluster. All pods by default are attached to the new EPG, which has no special properties. Neither the container team nor the network team needs to take any further action for a fully functional OpenShift cluster, as one might find in a public cloud environment. Additionally, security enforcement can occur based on usage of the OpenShift NetworkPolicy API. NetworkPolicy objects are transparently mapped into Cisco ACI and enforced for containers within the same EPG and between EPGs.

The following is an example of NetworkPolicy in OpenShift:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: test-network-policy
      namespace: default
    spec:
      podSelector:
        matchLabels:
          role: db
      ingress:
      - from:
        - namespaceSelector:
            matchLabels:
              project: myproject
        - podSelector:
            matchLabels:
              role: frontend
        ports:
        - protocol: TCP
          port: 6379
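To make the effect of the example policy concrete, the following sketch evaluates it by hand for a few sources. It is only an illustration of the standard Kubernetes NetworkPolicy semantics for this one policy (traffic to pods labeled role: db in the default namespace). It does not model how Cisco ACI enforces the policy, and all function and constant names are hypothetical.

```python
from typing import Dict

Labels = Dict[str, str]

POLICY_NAMESPACE = "default"                    # metadata.namespace of the policy
NAMESPACE_SELECTOR = {"project": "myproject"}   # first entry under "from"
POD_SELECTOR = {"role": "frontend"}             # second entry under "from"
ALLOWED_PORT = ("TCP", 6379)                    # the single entry under "ports"


def labels_match(selector: Labels, labels: Labels) -> bool:
    """A matchLabels selector matches when every key/value pair is present."""
    return all(labels.get(key) == value for key, value in selector.items())


def ingress_allowed(src_namespace: str, src_namespace_labels: Labels,
                    src_pod_labels: Labels, protocol: str, port: int) -> bool:
    """Decide whether the example policy admits traffic to a role=db pod."""
    if (protocol, port) != ALLOWED_PORT:
        return False
    # The two "from" entries are alternatives: either one is enough.
    from_selected_namespace = labels_match(NAMESPACE_SELECTOR, src_namespace_labels)
    # A bare podSelector only selects pods in the policy's own namespace.
    from_selected_pod = (src_namespace == POLICY_NAMESPACE
                         and labels_match(POD_SELECTOR, src_pod_labels))
    return from_selected_namespace or from_selected_pod


print(ingress_allowed("default", {}, {"role": "frontend"}, "TCP", 6379))
print(ingress_allowed("other", {}, {"role": "frontend"}, "TCP", 6379))
```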

However, in many cases, you may want to leverage EPGs and contracts in a more flexible way to define policy. Additional EPGs and contracts may be created directly through the APIC.

To move namespaces, deployments, or pods into these EPGs, an OpenShift user simply applies an annotation to any of these objects specifying the application profile and EPG. The running pods automatically shift to the new EPG, and any configured contracts are applied. In this model, it is still possible to use OpenShift NetworkPolicy, which is honored regardless of how pods are mapped to EPGs.
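As an illustration of what such an annotation might carry, the sketch below builds an annotation that names a tenant, application profile, and EPG. The annotation key opflex.cisco.com/endpoint-group and the JSON field names are assumptions that do not appear in this guide, so verify them against the Cisco ACI CNI plug-in documentation for your release. The function name and the sample values are hypothetical.

```python
import json
from typing import Dict

# Assumed annotation key; this guide only confirms the opflex.cisco.com prefix.
EPG_ANNOTATION_KEY = "opflex.cisco.com/endpoint-group"


def epg_annotation(tenant: str, app_profile: str, epg: str) -> Dict[str, str]:
    """Build a one-entry annotation map that points an object at an EPG."""
    # Field names are an assumption about the plug-in's expected JSON shape.
    value = json.dumps({"tenant": tenant, "app-profile": app_profile, "name": epg})
    return {EPG_ANNOTATION_KEY: value}


# Sample values are placeholders, not names created by the provisioning tool.
print(epg_annotation("mytenant", "myapp", "frontend-epg"))
```

The resulting map could be merged into the metadata.annotations of a namespace, deployment, or pod.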

OpenShift supports a DeploymentConfig resource that defines the template for a pod and manages deploying new images or configuration changes. A single deployment configuration is usually analogous to a single microservice. It can support many different deployment patterns, including full restart, customizable rolling updates, and fully custom behaviors, as well as pre- and post-deployment hooks. Each individual deployment is represented as a ReplicationController. The same annotation support that was available earlier for Deployments is available for ReplicationController and—as a result—is available to OpenShift DeploymentConfig as well.

For details about the DeploymentConfig resource, see the page v1.DeploymentConfig in the REST API Reference section on the OpenShift website.

For details about the ReplicationController, see the concept ReplicationController in the Documentation section of the Kubernetes website.

Mapping to Cisco APIC

Each OpenShift cluster is represented by a tenant within Cisco APIC. By default, all pods are placed in a single EPG created automatically by the plug-ins. However, it is possible to map a namespace, deployment, or pod to a specific application profile and EPG in OpenShift through OpenShift annotations.

While this is a highly flexible model, there are three typical ways to use it:

- EPG=OpenShift Cluster: This is the default behavior and provides the simplest solution. All pods are placed in a single EPG, kube-default.

- EPG=Namespace: This approach can be used to add namespace isolation to OpenShift. While OpenShift does not dictate network isolation between namespaces, many users may find this desirable. Mapping EPGs to namespaces accomplishes this isolation.

- EPG=Deployment: An OpenShift deployment represents a replicated set of pods for a microservice. You can put that set of pods in its own EPG as a means of isolating specific microservices and then use contracts between them.

Creating and Mapping an EPG

Use this procedure to create an EPG and use annotations to map namespaces or deployments into it.

For information about EPGs and bridge domains, see the Cisco APIC Basic Configuration Guide.

Procedure

| Step 1 |

Log in to Cisco APIC. |

||

| Step 2 |

Create the EPG and add it to the bridge domain kube-pod-bd. |

||

| Step 3 |

Attach the EPG to the VMM domain. |

||

| Step 4 |

Configure the EPG to consume contracts in the OpenShift tenant:

|

||

| Step 5 |

Configure the EPG to consume contracts in the common tenant: Consume: kube-l3out-allow-all (optional) |

||

| Step 6 |

Create any contracts you need for your application and provide and consume them as needed. |

||

| Step 7 |

Apply annotations to the namespaces or deployments. You can apply annotations in three ways:

|

The acikubectl Command

The acikubectl command is a command-line utility that provides an abbreviated way to manage Cisco ACI policies for OpenShift objects and annotations. It also enables you to debug the system and collect logs.

The acikubectl command includes a --help option that displays descriptions of the command's supported syntax and options, as seen in the following example:

    acikubectl --help

    Available Commands:
      debug       Commands to help diagnose problems with ACI containers
      get         Get a value
      help        Help about any command
      set         Set a value

Load Balancing External Services

For OpenShift services that are exposed externally and need to be load balanced, OpenShift does not handle the provisioning of the load balancing. It is expected that the load balancing network function is implemented separately. For these services, Cisco ACI takes advantage of the symmetric policy-based routing (PBR) feature available in the Cisco Nexus 9300-EX or FX leaf switches in ACI mode.

On ingress, incoming traffic to an externally exposed service is redirected by PBR to one of the OpenShift nodes that hosts at least one pod for that particular service. Each node hosts a special service endpoint that handles the traffic for all external services hosted for that endpoint. Traffic that reaches the service endpoint is not rewritten by the fabric, so it retains its original destination IP address. It is not expected that the OpenShift pods handle traffic that is sent to the service IP address, so Cisco ACI performs the necessary network address translation (NAT).

If an OpenShift worker node contains more than one pod for a particular service, the traffic is load balanced a second time across all the local pods for that service.

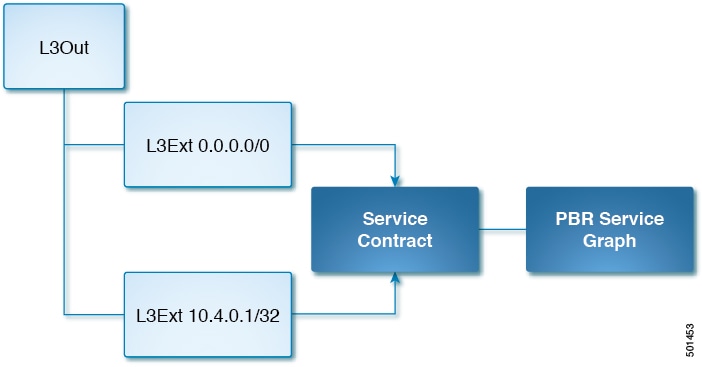

A simplified view of the Cisco ACI policy model required for the north-south load balancer is shown in the following illustration.

In the example, service IP 10.4.0.1 is exposed as an external service. It is presented as a /32 Layer 3 external network. This network provides a contract that is consumed by the default /0 Layer 3 external network. Traffic that comes into the fabric from the outside hits this contract and is redirected by the service graph to the correct set of OpenShift service endpoints.

The Cisco ACI OpenShift integration components are responsible for automatically writing the Cisco ACI policy that implements the external service into Cisco ACI.

Load Balancing External Services Across Multiple L3Out

ACI CNI supports load balancing external services across multiple L3Outs. However, the service contract must be configured manually on the external EPG in the consumer direction. Also, if the L3Out is in a different tenant or VRF, you need to change the contract scope accordingly. You can do this by annotating the service with "opflex.cisco.com/ext_service_contract_scope=<scope>". If the ext_service_contract_scope annotation is not set, or if it is set to an empty string (that is, opflex.cisco.com/ext_service_contract_scope=""), the contract scope is set to context (VRF). Setting any scope other than context also sets the "import-security" attribute for the subnet associated with the external EPG that consumes the contract. This allows the service to be reachable across the VRFs.
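The defaulting rule described above can be summarized in a few lines. This is a sketch of the documented behavior only, not the plug-in's implementation. The function names are hypothetical, and the scope value used in the usage lines is just an illustrative non-default value.

```python
from typing import Dict

SCOPE_ANNOTATION = "opflex.cisco.com/ext_service_contract_scope"
DEFAULT_SCOPE = "context"  # VRF scope, used when the annotation is unset or empty


def effective_contract_scope(annotations: Dict[str, str]) -> str:
    """Return the contract scope that results from a service's annotations."""
    scope = annotations.get(SCOPE_ANNOTATION, "")
    return scope if scope else DEFAULT_SCOPE


def sets_import_security(annotations: Dict[str, str]) -> bool:
    """Any scope other than context also sets import-security on the subnet."""
    return effective_contract_scope(annotations) != DEFAULT_SCOPE


print(effective_contract_scope({}))                            # annotation not set
print(effective_contract_scope({SCOPE_ANNOTATION: ""}))        # empty string
print(sets_import_security({SCOPE_ANNOTATION: "global"}))      # illustrative value
```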

Draining a Node

This section describes how to drain the pods on a node.

Procedure

| Step 1 |

Drain the node: Example: |

||

| Step 2 |

After the drain is finished, scale the controller down to 0 replicas: Example: |

||

| Step 3 |

Remove the old annotations from the node: edit the node description, remove the opflex.cisco.com annotations, and save the changes. Example: |

||

| Step 4 |

Find and delete the hostagent pod for the drained node. Example:

|

||

| Step 5 |

Bring the controller up again: Example: |

||

| Step 6 |

Bring back the node: Example: |

||

| Step 7 |

Verify annotations are present and that the hostagent pod is running: Example: |

Moving a Node Between ESXi Hosts in Nested Mode

Follow the procedure in this section to move a node from one ESXi host to another when you have nested ESXi hosts.

Note |

Using VMware vMotion to move nodes is not supported for nested ESXi hosts. Using VMware vMotion under these circumstances results in a loss of connectivity on the node that you are trying to move. |

Procedure

| Step 1 |

Create a new VM and add it to the cluster. |

| Step 2 |

Drain the old VM. |

| Step 3 |

Delete the old VM. |

Service Subnet Advertisement

By default, service subnets are not advertised externally, requiring that external routers be configured with static routes. However, you can configure Cisco Application Centric Infrastructure (ACI) to advertise the service subnets through a dynamic routing protocol.

To configure and verify service subnet advertisement, complete the procedures in this section.

Configuring Service Subnet Advertisement

Complete the following steps to configure service subnet advertisement.

Note |

Perform this procedure for all border leafs that are required to advertise the external subnets. |

Procedure

| Step 1 |

Add routes to null to the subnets: |

| Step 2 |

Create match rules for the route map and add the subnet.

|

| Step 3 |

Create a route map for the L3 Out, add a context to it, and choose the match rules.

|

What to do next

Verify that the external routers have been configured with the external routes. See the section Verifying Service Subnet Advertisement.

Verifying Service Subnet Advertisement

Use the NX-OS style CLI to verify that the external routers have been configured with the external routes. Run the commands on each of the border leafs.

Before you begin

fab2-apic1# fabric 203 show ip route vrf common:os | grep null0 -B1

10.3.0.1/24, ubest/mbest: 1/0

*via , null0, [1/0], 04:31:23, static
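When you repeat this check across several border leafs, it can help to extract the null0 prefixes programmatically. The following is a minimal sketch that assumes the output has the two-line shape shown above (a prefix line followed by a next-hop line). The function name `null0_static_prefixes` is hypothetical, and the sample text is copied from the output above.

```python
import re
from typing import List

# Matches a route header line such as "10.3.0.1/24, ubest/mbest: 1/0".
PREFIX_RE = re.compile(r"^\s*(\d{1,3}(?:\.\d{1,3}){3}/\d{1,2}),")


def null0_static_prefixes(route_output: str) -> List[str]:
    """Return prefixes that have a next-hop line mentioning both null0 and static."""
    prefixes = []
    current = None
    for line in route_output.splitlines():
        match = PREFIX_RE.match(line)
        if match:
            current = match.group(1)  # remember the prefix for the following via lines
        elif current and "null0" in line and "static" in line:
            prefixes.append(current)
            current = None            # report each prefix once
    return prefixes


SAMPLE = """\
10.3.0.1/24, ubest/mbest: 1/0
    *via , null0, [1/0], 04:31:23, static
"""

if __name__ == "__main__":
    print(null0_static_prefixes(SAMPLE))
```

Comparing the returned list with the subnets you configured shows whether any null0 route is missing on a given leaf.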

Procedure

| Step 1 |

Check that the route maps applied to your dynamic routing protocol permit the advertisement of the subnets. |

| Step 2 |

Find the specific route for each of the nodes, looking for entries that match the name of the match rule. |

| Step 3 |

Verify that the IP addresses are correct for each of the nodes. |

Service Account Hardening

Every time that you create a cluster, a dedicated user account is automatically created in Cisco Application Policy Infrastructure Controller (APIC). This account is an administrative account with read and write permissions for the whole fabric.

Read and write permissions at the fabric level can be a security concern in multitenant fabrics, where you do not want the cluster administrator to have administrative access to the Cisco Application Centric Infrastructure (ACI) fabric.

You can modify the dedicated user account limits and permissions. The level and scope of permission required for the cluster account depend on the location of the networking resources. (The networking resources include the bridge domain, virtual routing and forwarding (VRF), and Layer 3 outside (L3Out).)

- When cluster resources are in the cluster dedicated tenant, the account needs read and write access to the cluster tenant and the cluster container domain.

- When cluster resources are in the common tenant, the account needs read and write access to the common tenant, the cluster tenant, and the cluster container domain.
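The two cases above reduce to a small decision rule, sketched here for illustration. The function name `required_access_scopes` and the `tenant:` and `domain:` labels are hypothetical and do not correspond to Cisco APIC object names.

```python
from typing import List


def required_access_scopes(
    resources_in_common_tenant: bool,
    cluster_tenant: str,
    container_domain: str,
) -> List[str]:
    """List where the cluster account needs read and write access."""
    # The cluster tenant and the cluster container domain are needed in both cases.
    scopes = [f"tenant:{cluster_tenant}", f"domain:{container_domain}"]
    if resources_in_common_tenant:
        # The bridge domain, VRF, or L3Out live in the common tenant, so it is needed too.
        scopes.insert(0, "tenant:common")
    return scopes


if __name__ == "__main__":
    # Hypothetical tenant and domain names.
    print(required_access_scopes(True, "mycluster", "mycluster-domain"))
```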

Checking the Current Administrative Privileges

Complete the following procedure to see the current administrator privileges for the Cisco Application Centric Infrastructure (ACI) fabric.

Procedure

| Step 1 |

Log in to Cisco Application Policy Infrastructure Controller (APIC). |

| Step 2 |

Go to . |

| Step 3 |

Click the username associated with your cluster. |

| Step 4 |

Scroll to the security domain and verify the following:

|

Modifying Administrative Account Permissions

After you configure the fabric, you can see a new tenant and Cisco Application Centric Infrastructure (ACI) user. Its name is equal to the system_id parameter specified in the Cisco ACI Container Network Interface (CNI) configuration file. Complete the following procedure to modify administrative permissions:

Note |

This procedure works whether the cluster resources are all in the same tenant or the virtual routing and forwarding (VRF) and Layer 3 Outside (L3Out) are in the common tenant. However, if the VRF and L3Out are in the common tenant, you must give write permission to the common tenant in Step 3. |

Procedure

| Step 1 |

Log in to the Cisco Application Policy Infrastructure Controller (APIC). |

| Step 2 |

Create a new security domain by completing the following steps: |

| Step 3 |

Go to and complete the following steps: |

| Step 4 |

Create a custom role-based access control (RBAC) rule to allow the container account to write information into the container domain by completing the following steps: |

| Step 5 |

Map the security domain to the cluster tenant by completing the following steps:

|

Troubleshooting OpenShift Integration

This section contains instructions for troubleshooting the OpenShift integration with Cisco ACI.

For additional troubleshooting information, see Cisco ACI Troubleshooting Kubernetes and OpenShift.

Troubleshooting Checklist

This section contains a checklist to troubleshoot problems that occur after you integrate OpenShift with Cisco ACI.

Procedure

| Step 1 |

Check for faults on the fabric and resolve any that are relevant. |

| Step 2 |

Check that the API server advertisement addresses use the node subnet, and that the nodes are configured to route all OpenShift subnets over the node uplink. Typically, the API server advertisement address is pulled from the default route on the node during installation. If you put the default route on a network other than the one used by the node uplink interfaces, you must also configure routes for the subnets from the planning process and for the cluster IP subnet that OpenShift uses internally. |

| Step 3 |

Check the logs for the container aci-containers-controller for errors using the following command on the OpenShift master node: acikubectl debug logs controller acc |

| Step 4 |

Check these node-specific logs for errors using the following commands on the OpenShift master node:

|
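The address check in Step 2 can be done with the Python standard library. This is a minimal sketch; the function name `address_in_subnet` is hypothetical, and the addresses in the usage example are placeholders, not values from your fabric.

```python
import ipaddress


def address_in_subnet(address: str, subnet: str) -> bool:
    """Return True if the address belongs to the subnet.

    strict=False accepts gateway-style notation such as 10.1.0.1/16,
    where the host bits of the network address are set.
    """
    return ipaddress.ip_address(address) in ipaddress.ip_network(subnet, strict=False)


if __name__ == "__main__":
    # Placeholder values: an API server advertisement address and a node subnet.
    print(address_in_subnet("10.1.0.11", "10.1.0.1/16"))
```

A result of False for the API server advertisement address suggests that the address was taken from an interface outside the node subnet.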

Troubleshooting Specific Problems

This section describes how to troubleshoot specific problems.

Collecting and Exporting Logs

Procedure

|

Enter the following command to collect and export OpenShift logs: acikubectl debug cluster-report -o cluster-report.tar.gz |

Troubleshooting External Connectivity

Procedure

|

Check the configuration of the next-hop router.

|

Troubleshooting Pod EPG Communication

Follow the instructions in this section if communication between two pod EPGs is not working.

Procedure

|

Check the contracts between the pod EPGs. Verify that a contract allows Address Resolution Protocol (ARP) traffic. Because all pods are in the same subnet, ARP is required. |

Troubleshooting Endpoint Discovery

If an endpoint is not automatically discovered, either the EPG does not exist or the mapping of the annotation to the EPG is not in place. Follow the instructions in this section to troubleshoot the problem.

Procedure

| Step 1 |

Ensure that the EPG name, tenant, and application are spelled correctly. |

| Step 2 |

Make sure that the VMM domain is mapped to an EPG. |

Troubleshooting Pod Health Check

Follow the instructions in this section if the pod health check does not work.

Procedure

|

Ensure that the health check contract exists between the pod EPG and the node EPG. |

Troubleshooting aci-containers-host

Follow the instructions in this section if the mcast-daemon inside aci-containers-host fails to start.

Procedure

|

Check the mcast-daemon log messages. If the error message "Fatal error: open: Address family not supported by protocol" is present, ensure that IPv6 support is enabled in the kernel. IPv6 must be enabled in the kernel for the mcast-daemon to start. |
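"Address family not supported by protocol" is the message text for the EAFNOSUPPORT error, which the kernel returns when a program requests a socket of an address family that is not available. As a quick check on the node, the following sketch tries to open an IPv6 socket. The function name `kernel_supports_ipv6` is hypothetical, and this check is an illustration, not part of the Cisco tooling.

```python
import errno
import socket


def kernel_supports_ipv6() -> bool:
    """Return True if the kernel allows an AF_INET6 socket to be opened."""
    try:
        sock = socket.socket(socket.AF_INET6, socket.SOCK_DGRAM)
    except OSError as exc:
        if exc.errno == errno.EAFNOSUPPORT:
            # Same condition that the mcast-daemon reports in its log.
            return False
        raise  # any other failure is unrelated to IPv6 support
    sock.close()
    return True


if __name__ == "__main__":
    print("IPv6 available:", kernel_supports_ipv6())
```

A result of False on a node is consistent with the mcast-daemon error and points to IPv6 being disabled in the kernel.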

Contacting Support

If you need help with troubleshooting problems, generate a cluster report file and contact Cisco TAC for support.
