Industrial Automation for Process Control and Refineries Design Guide

Updated: April 8, 2020

Chapter: Industrial Automation for Process Control and Refineries Design Guide

- Overview of Industrial Wireless Architecture

- Cisco Industrial Wireless Network Components

- Adaptive Wireless Path Protocol (AWPP)

- IEEE 802.15.4 Wireless Networks

- Greenfield Deployment Architecture

- Bridge Group Name (BGN)

- Security

- Monitoring Overall Network Health

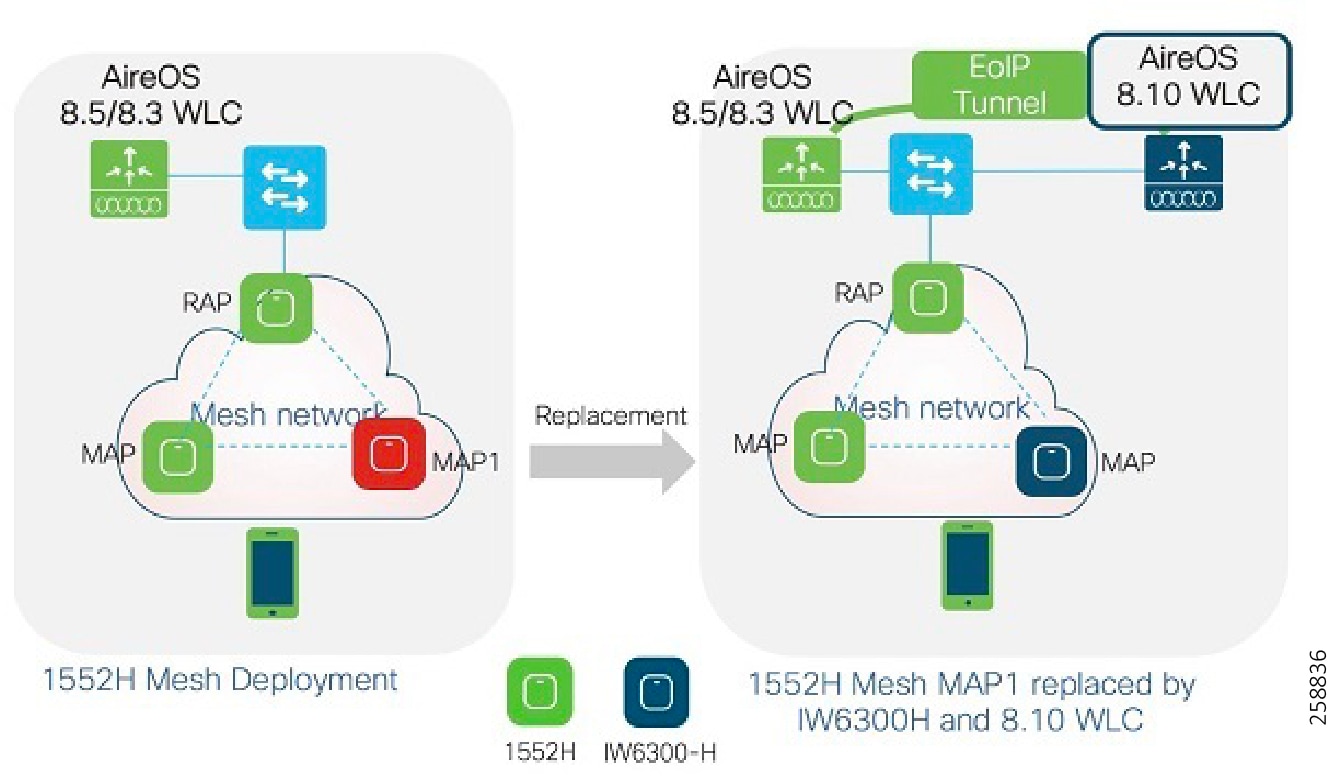

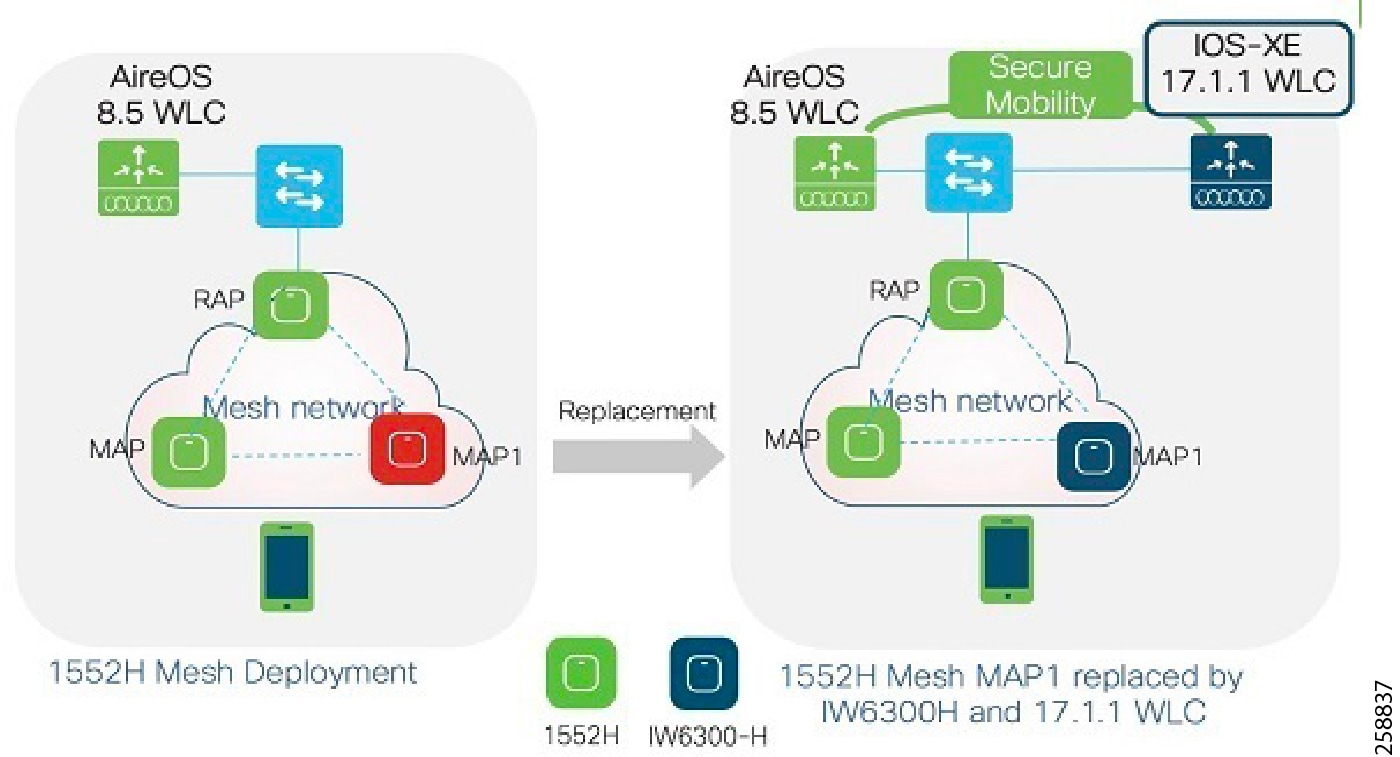

- Brownfield Deployment Architecture

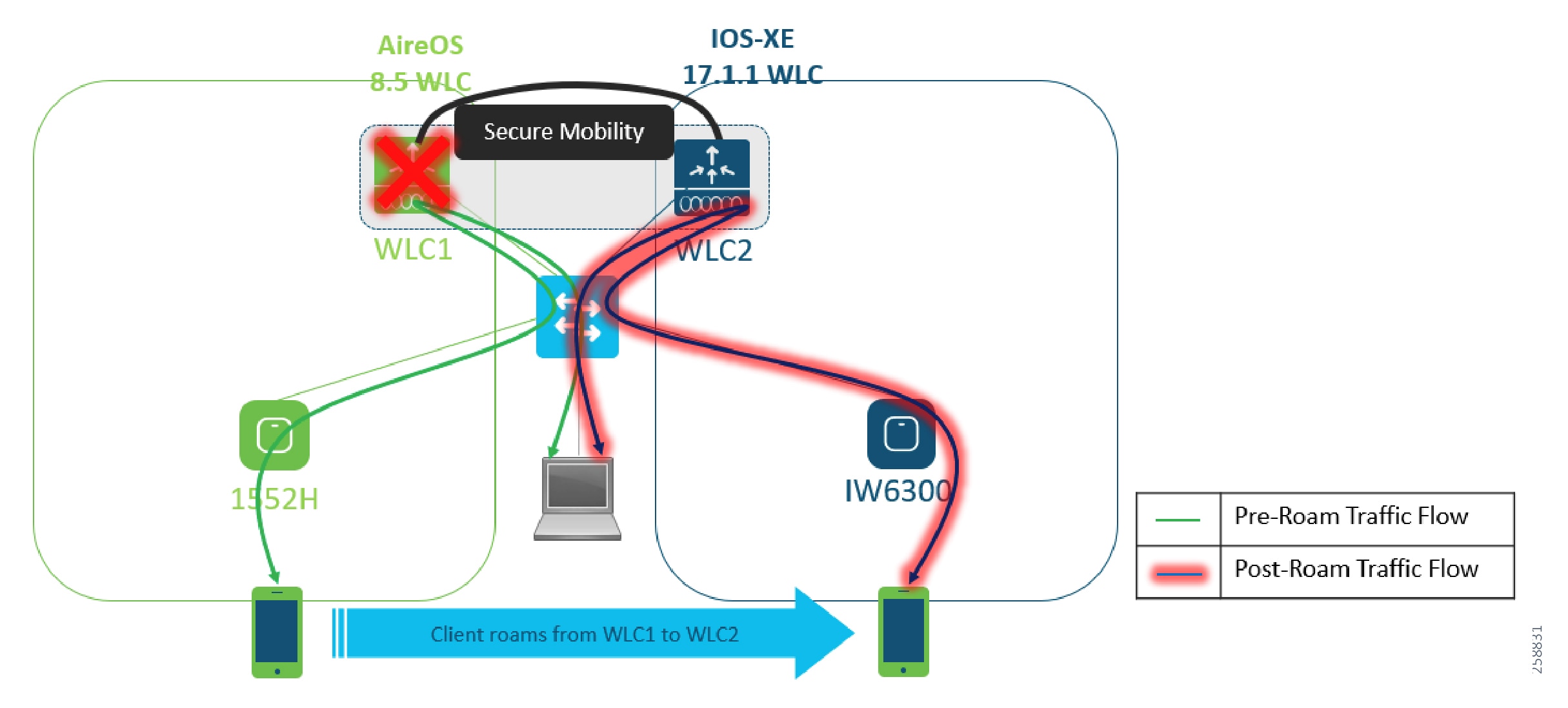

- Mixed Mesh Architecture and Data Flow

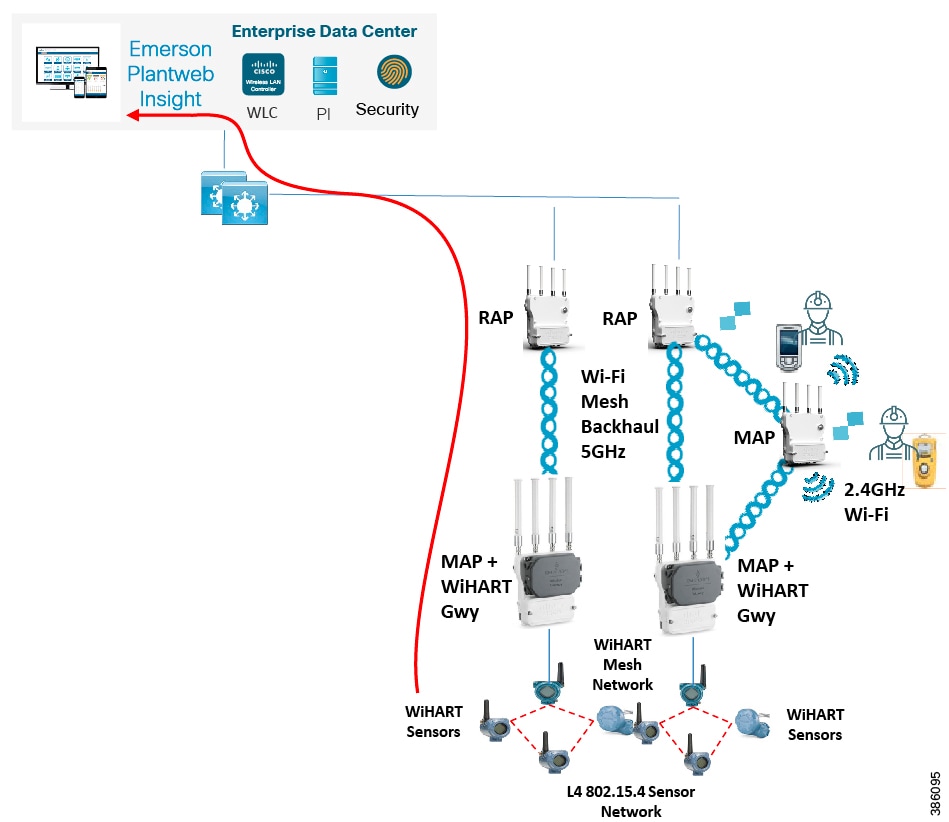

- Emerson WiHART Deployment for Condition Monitoring

- Emerson WiHART Gateways

- Emerson Rosemount (WiHART) Sensors

- Rosemount™ 3051S Series Pressure Transmitter and Rosemount 3051SF Series Flow Meter

- Emerson PlantWeb Insight

- Wi-HART Data flow over Wi-FI

- Installing and Connecting Wireless Instrumentation

- Security Considerations

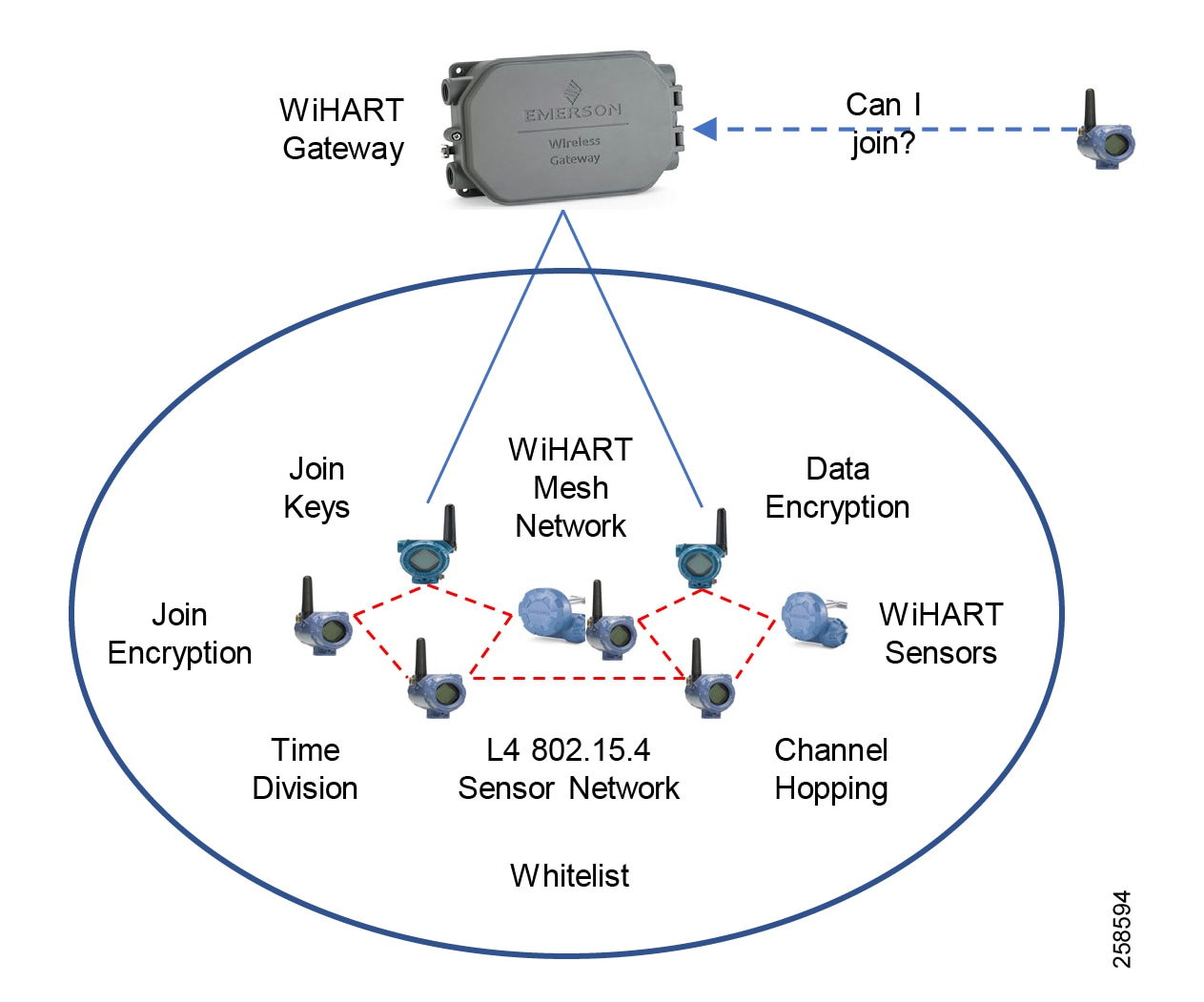

- WiHART Data Link Layer Security

- Data Security

- Network Security

Industrial Automation for Process Control and Refineries

Introduction

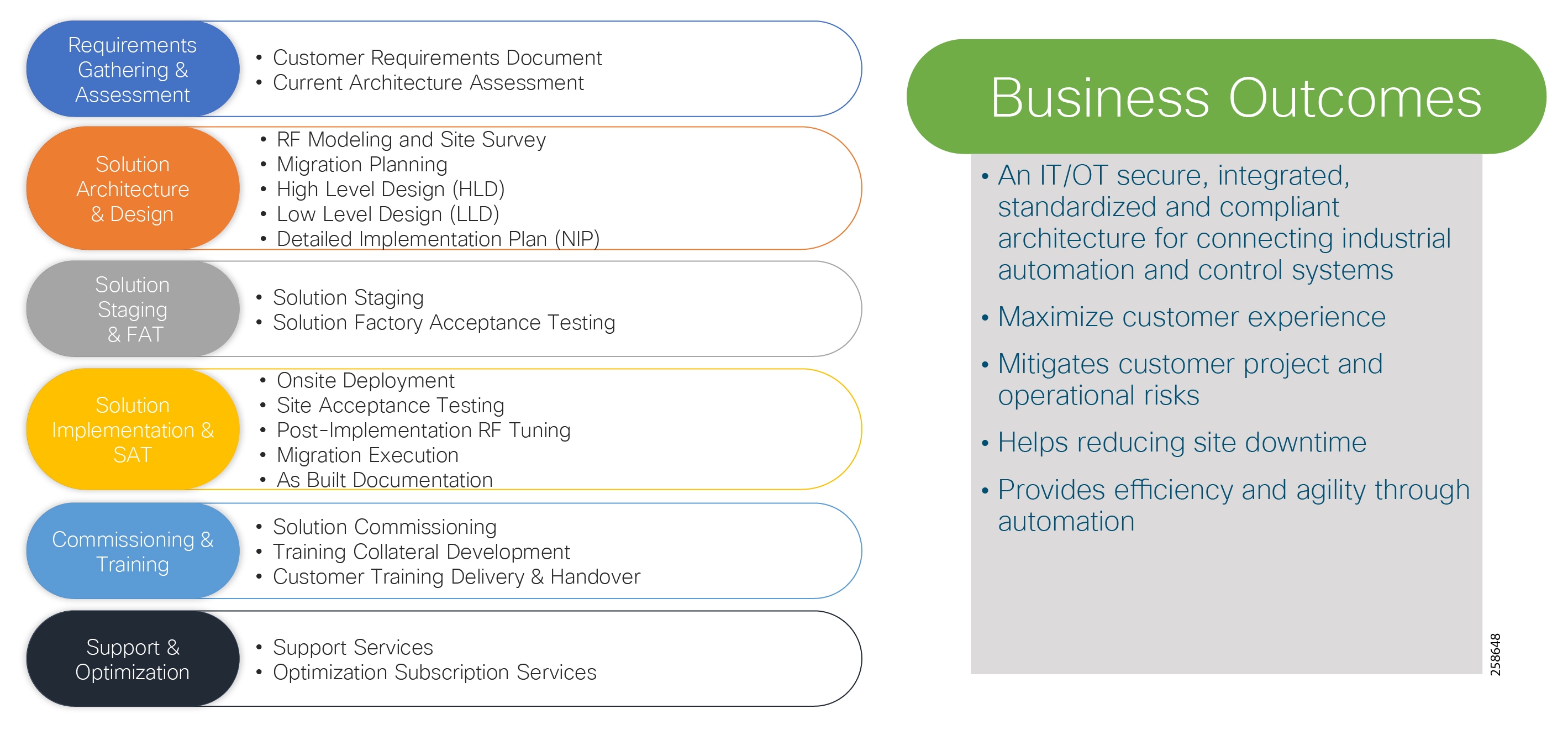

This design guide provides a comprehensive explanation of the Industrial Automation for Process Control and Refineries system design. It includes information about the industry challenges, primary use cases, architecture, and guidelines for implementation. The guide also recommends best practices and highlights potential issues when deploying the reference architecture. This document builds on the Connected Refineries and Processing Plant Cisco Reference Document (January 2016), available at: https://www.cisco.com/c/dam/en_us/solutions/industries/docs/manufacturing/connected-refineries-pocessing-plant.pdf

This release of this Cisco Validated Design (CVD):

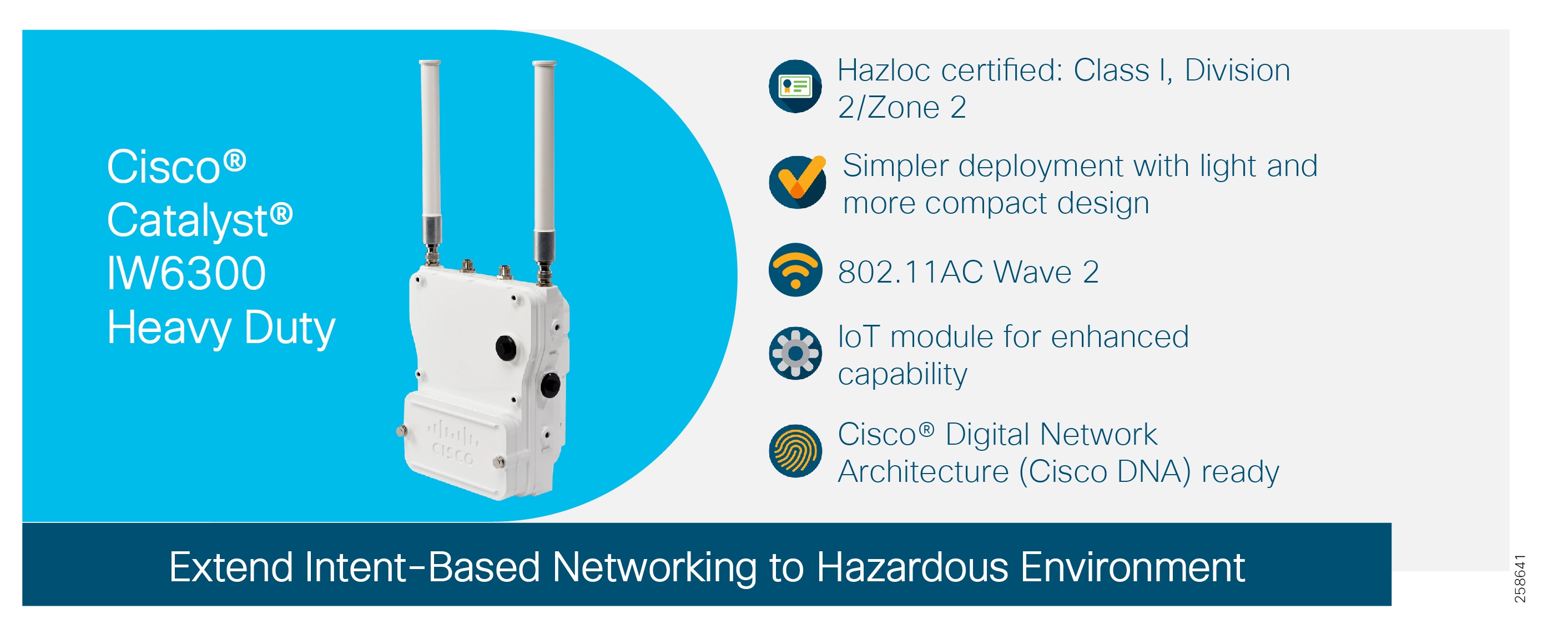

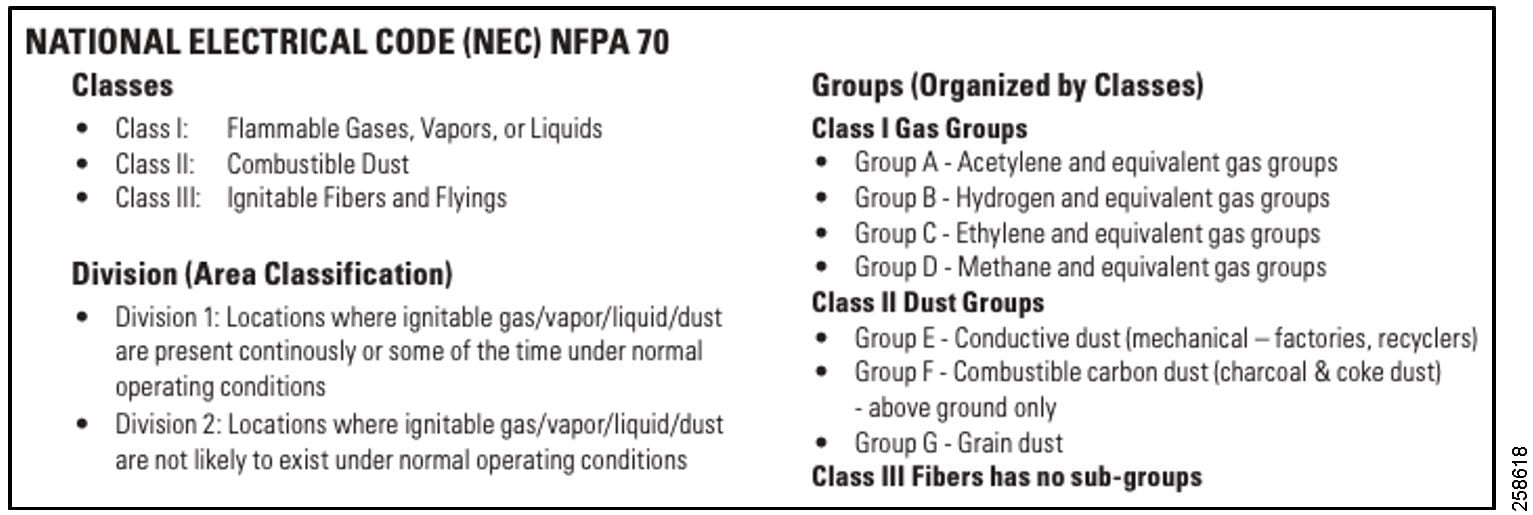

- Aligns with the release of the new Cisco Hazloc Class 1/Div 2/Zone 2 hazardous location-certified industrial access point, the IW 6300. This guide provides best practices and design recommendations for:

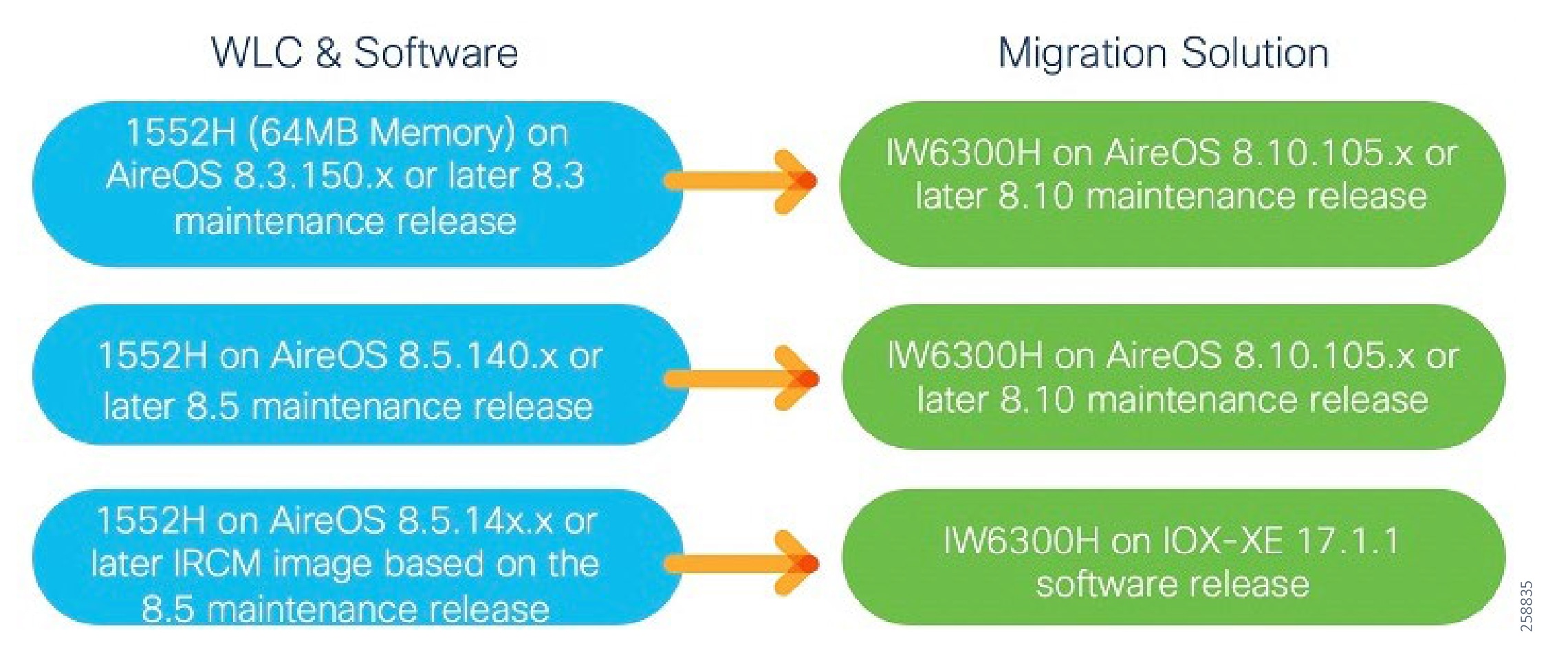

  - Migrating from the 1552 access point to the IW 6300 in existing Brownfield deployments where 1552 access points are currently installed.

  - Greenfield opportunities where wireless networking is being enabled in process plants and refineries.

- Describes a secure, scalable, and robust wired and wireless architecture that addresses key challenges and use cases for refinery and processing plant environments. This architecture supports capabilities for both workforce-enabled use cases and wireless instrumentation use cases. The focus of this release is primarily on the design and implementation of the wireless network supporting refineries and processing plants.

- Provides architecture and design guidance for integrating wireless instrumentation 802.15.4 WiHART networks, using Emerson 1410 gateways and Rosemount instrumentation, with Cisco Wi-Fi Mesh networks.

- Includes cyber security architecture and guidance aligned with key industry standards such as NIST and IEC 62443. This guide also introduces Cisco Cyber Vision for enhanced visibility of industrial devices and communications, with anomaly detection for OT assets and industrial protocols.

- Documents the suggested equipment and technologies, system-level configurations, and recommendations. This document also describes caveats and considerations that process control customers should understand as they implement best practices.

This document primarily focuses on addressing oil and gas refinery and processing plant issues through use cases and architectures. These solutions are also applicable to other areas of the oil and gas value chain, such as the oilfield, production platforms, and large midstream pipeline stations, including compressor or pump stations. The document includes:

- Process Plant and Refinery Overview provides an overview of the oil and gas value chain, industry challenges, and three areas of focus with high level use cases that address the challenges.

- Solution Overview and Use Cases addresses the high level design and low level use cases for the refinery and processing plant.

- Industrial Wireless Design provides low level design guidance for the wireless networks in the refinery.

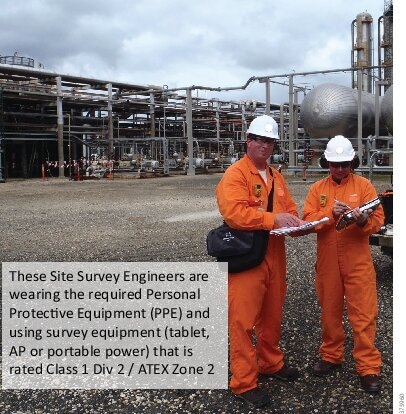

- Industrial Wireless Site Survey and Design Considerations details challenges and site survey considerations for deploying a wireless network in a refinery or processing plant.

Process Plant and Refinery Overview

This section describes the oil and gas value chain, industry challenges, and three high level use cases focused on addressing those challenges.

Oil and Gas Value Chain

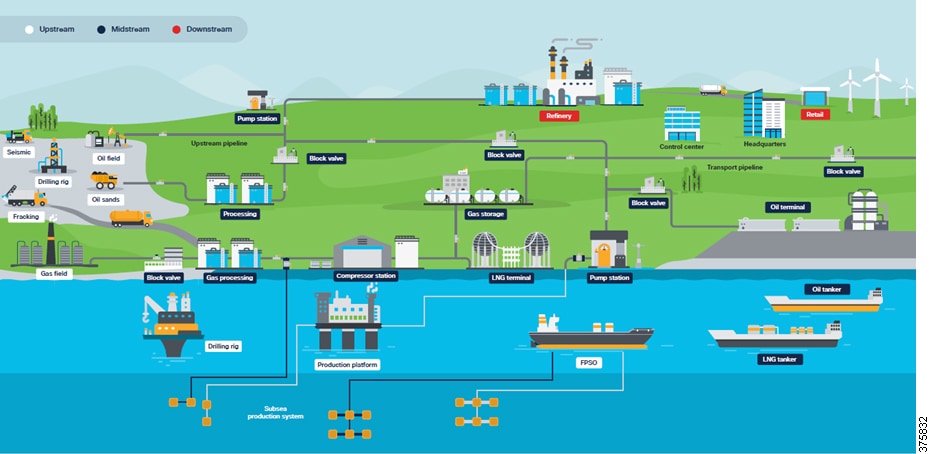

At a high level, the oil and gas value chain starts with exploration to discover resources, then transitions through development, production, processing, transportation/storage, refining, and marketing/retail of hydrocarbons. This value chain is typically grouped into three areas:

- Upstream: Includes the initial exploration, evaluation and appraisal, development, and production of oil and gas assets. This is referred to as Exploration and Production (E&P). These activities occur both onshore and offshore. Upstream focuses on wells and on determining how to operate individual wells or entire basins to achieve the best return on investment.

- Midstream: Primarily focuses on the transport and storage of hydrocarbons via pipelines, tankers, tank farms, and terminals, providing links between production and processing facilities, and between processing and the end customer. Crude oil is transported downstream to the refinery for processing into the final product.

Midstream also includes the processing of natural gas. Although some of the processing occurs in the field near the source, the majority of gas processing takes place at a processing plant or facility, with the gas typically arriving from the gathering pipeline network. For wholesale markets, natural gas must first be purified by removing Natural Gas Liquids (NGLs) such as butane, propane, ethane, and pentanes, before being transported via pipeline or turned into Liquefied Natural Gas (LNG) and shipped. The gas can be used immediately or stored. The NGLs are used downstream for petrochemicals or liquid fuels, or turned into final products at the refinery. Processed natural gas is transported to gas distribution utilities for delivery to their commercial, industrial, and residential customers.

- Downstream: Concerned with the final processing and delivery of product to wholesale, retail, or direct industrial customers. The refinery treats crude oil and NGLs and then converts them into consumer and industrial products through separation, conversion, and purification. Modern refinery and petrochemical technology can transform crude materials into thousands of useful products including gasoline, kerosene, diesel, lubricants, plastics, and asphalt.

Figure 1 highlights the value chain for oil and gas.

Figure 1 Value Chain for Oil and Gas

Refineries and processing plants are typically sprawling complexes with many piping systems running throughout, interconnecting the various processing and chemical units. Many large storage tanks also exist around the facility. The plant can cover multiple acres, and many of the buildings and processing units on site are multiple stories high. The processing units and extensive piping networks are generally metal, spanning long distances between buildings or treatment areas. Plant equipment at the sites is continually being upgraded to ensure efficient operation. Some plant areas are potentially hazardous, handling highly explosive gases from the chemical processes (see Industrial Wireless Site Survey and Design Considerations for details). Corrosion caused by steam and cooling water around the site is an ever-present challenge and can lead to deadly gas leaks.

The refinery is a complicated working environment with complex equipment and extensive piping networks. Products are continuously being produced, requiring the system to be continuously monitored via pressure, temperature, vibration, and flow. At any one time, hundreds of workers including company employees, contractors, and external company support staff may be onsite. Plant operators ensure the entire process is working correctly, engineers monitor process efficiency and optimize or redesign where necessary, and maintenance staff ensure equipment is maintained, repaired, and safe. The transportation requirement to move raw materials or finished product in and out means that multiple vehicle types such as cars, trucks, oil tankers, and trains are also part of the refinery environment.

Management and safety systems keep all of the processes and people operating effectively, efficiently, and safely. To ensure these systems can operate in coordination across the refinery or processing facility, a comprehensive and reliable communications system must be implemented.

Industry Challenges

Oil and gas companies are facing distinct challenges across the industry: reducing costs and improving operational efficiency, productivity, and employee safety. The market downturn in 2014 forced oil and gas companies to consider new and innovative ways to increase production, streamline operations across IT and OT, and explore new business and operating models. They found that “Digitalization” could be part of the answer. Many describe this as “Digital Transformation” or simply embracing “Digitization”. New and existing data sources need to be accessed, regulations must be complied with, and the safety of employees must be ensured. Digital technology is now viewed by many as the enabler, particularly through the adoption of wireless-based technology. The following are broad challenges and trends facing the industry.

- Worker Safety is of the utmost importance: The ultimate goal is to achieve zero worker injuries, minimize the human factor, and provide a risk lens for plant or facility safety and security. New technologies are being used to provide visibility of worker location, and network-connected life-safety wearables, such as mobile detectors, can provide a near real-time view into risk across the plant.

- Improve Productivity: With greater visibility into operational workflows, the effort to improve average worker productivity (wrench time) in the field, whether for employees or contractors, has increased dramatically. With average wrench time estimated at around 18% in oil and gas, fewer than two out of every ten hours are spent on productive work. The industry is looking to technology and digitization to increase and perhaps double productive time.

- Improve Operational Efficiency: With greater agility to react to production-impacting failures, market trends, industry fluctuations, and shifting demand, operations are more efficient. Digitizing the oil and gas supply chain from upstream to downstream will be transformative. The adoption of Industry 4.0 and Internet of Things (IoT) sensor connectivity, wireless instrumentation, and digital technologies such as analytics, machine learning, and Artificial Intelligence (AI) will help oil and gas companies make more informed decisions, improve productivity, and increase efficiency and safety.

- Aging Workforce: The oil and gas workforce is starting to age out and retire. The newer workforce is accustomed to connectivity and anywhere access to data, so companies need to innovate to meet this expectation among mobile workers and improve visibility into operations.

- Physical Security: The more devices that are connected to the network, the wider the attack surface. As oil and gas companies adopt new technologies and use cases to digitize and transform the business, new devices are connected to the network. This brings potential security attacks (intentional, unintentional, internal, and external) that can affect the business. In oil and gas this not only impacts production, but could also affect worker safety or result in environmental incidents and hefty fines. Cyber security is not the only concern; physical security solutions such as video surveillance and access control are also needed.
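To make the wrench-time figure in the Improve Productivity item concrete, the short Python sketch below converts a wrench-time percentage into productive hours per shift. The 10-hour shift length and the function name are assumptions chosen purely for illustration; only the 18% estimate comes from the text above.

```python
def productive_hours(shift_hours: float, wrench_time_pct: float) -> float:
    """Return hours of hands-on work in a shift for a given wrench-time percentage."""
    if not 0 <= wrench_time_pct <= 100:
        raise ValueError("wrench_time_pct must be between 0 and 100")
    return shift_hours * wrench_time_pct / 100.0


SHIFT_HOURS = 10.0  # assumed shift length (illustrative, not from the guide)

current = productive_hours(SHIFT_HOURS, 18.0)  # 18% estimate from the text
doubled = productive_hours(SHIFT_HOURS, 36.0)  # the "perhaps double" target
print(f"current: {current:.1f} h, doubled: {doubled:.1f} h per {SHIFT_HOURS:.0f} h shift")
```

Under these assumptions, doubling productive time still leaves well over half of the shift spent on non-wrench activities, which is why the guide treats productivity as an ongoing challenge.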

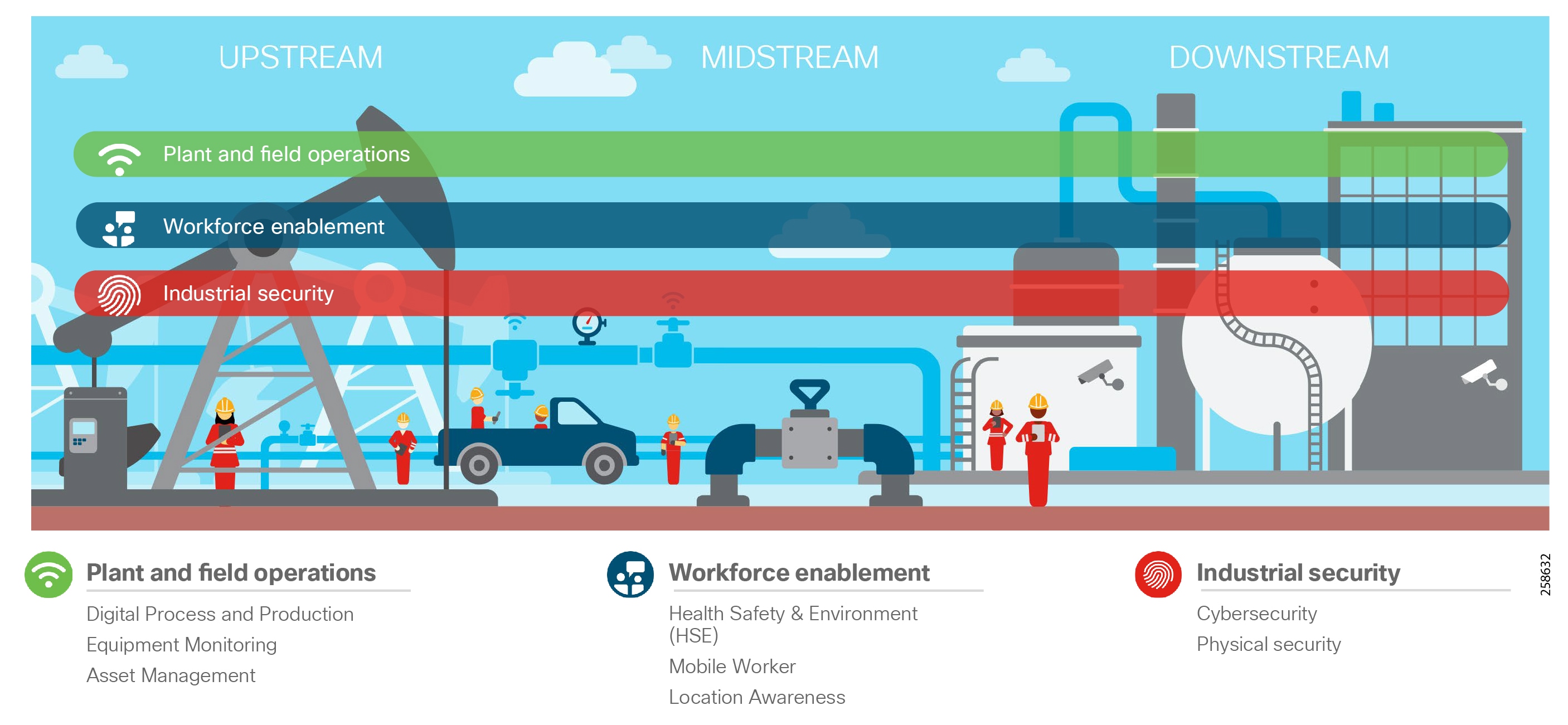

Refinery and Processing Plant High Level Use Cases

A refinery or processing facility deploys several systems to ensure uptime, continuous operations, and safety of workers. Fundamentally, a secure, robust wired and wireless infrastructure must be implemented to keep all the systems, processes, and workers operating efficiently and safely across the entire plant.

Communications must support process control applications from the instrument or sensor to the control room application. Communications must also give mobile workers access to the data and information they need to perform their roles. The systems can be categorized as:

- Operational: Directly involved with supporting refinery operations, such as the process control or safety systems.

- Non-operational: Multiservice applications that support the worker and operations within the refinery, but are not directly associated with process control. Examples of non-operational services include voice, video surveillance, condition-based monitoring, and remote expert services.

The operational and non-operational services fit into three core categories across the refinery and the oil and gas value chain: Plant and Field Operations, Workforce Enablement, and Industrial Security.

Plant and Field Operations

Plant and Field Operations provides operational and non-operational services supporting the process and equipment across oil and gas plants, facilities, or oil fields. The three high-level use cases are described below. They target uptime, operational efficiency, and improved safety through continuous monitoring of the process, equipment, and assets within an oil and gas installation.

Digital Process and Production

This use case applies robust wired and wireless network communications, together with security and data management technologies, to Industrial Automation and Control Systems (IACS) for real-time plant and field operations that are critical for production assets at the core of the business. Within the refinery this supports the Process Control Network (PCN), Safety, and Power networks, which at their core are focused on maintaining the stability, continuity, and integrity of industrial processes. Sensors, devices, controllers, and actuators must be available and managed to properly operate the industrial processes.

Equipment Monitoring

Collecting equipment data in near real-time provides the opportunity to optimize equipment performance and proactively detect issues before they cause failures. In most instances, applications such as condition-based monitoring or predictive maintenance are not considered part of the critical process control. The data can be processed at the edge and then published to the cloud or to an on-premises system where it can be consumed. Predictive or proactive analytics can be leveraged in a facility to better manage asset maintenance on plant equipment such as motors, valves, and pumps. Typically, the sensors are wireless, based on WirelessHART or ISA 100. Depending on the criticality or location, the wireless sensor data is wired directly into control networks or backhauled over Wi-Fi networks.

It is worth noting that some instances of equipment monitoring may be part of critical process control. Such monitoring then becomes part of the process or safety system under the scope of Digital Process and Production.
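As a rough illustration of condition-based monitoring at the edge, the sketch below flags a sensor reading that drifts well outside a rolling baseline before any data is published upstream. The class name, window size, threshold, and vibration values are hypothetical and are not taken from any Emerson or Cisco product; a real deployment would rely on the analytics provided by its monitoring application.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flag readings that deviate from a rolling baseline (illustrative only)."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # allowed deviation in standard deviations

    def update(self, value: float) -> bool:
        """Return True if value is anomalous versus the current baseline."""
        anomalous = False
        if len(self.history) >= 5:  # wait until a minimal baseline exists
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                anomalous = True
        if not anomalous:
            # Only normal readings are allowed to update the baseline
            self.history.append(value)
        return anomalous


# Hypothetical vibration readings (mm/s) from a pump-mounted sensor
readings = [2.0, 2.1, 1.9, 2.0, 2.2, 2.1, 2.0, 9.5, 2.1]
detector = RollingAnomalyDetector()
flags = [detector.update(r) for r in readings]
print(flags)
```

Keeping the check this small is a deliberate choice for the sketch: it can run next to the gateway and forward only flagged events or summaries, which is one way to interpret "processed at the edge" in the paragraph above.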

Asset Management

Across an oil and gas facility there are a number of non-critical assets that are not part of process control networks. Production Asset Management automates the collection of non-critical operational data and asset location using periodic, low-cost wireless communications. Examples include tank level monitoring that is unrelated to process control, or asset location awareness using LoRa technology in parts of the refinery where regular 802.11 wireless is not deployed.

Workforce Enablement

There are several challenges within oil and gas environments that affect worker productivity, efficiency, and safety. As noted earlier, attracting and retaining a younger workforce is a major challenge facing the industry as the educated workforce retires. Equipping workers with new technology, such as wirelessly connected ruggedized tablets, can give them real-time access to the information needed to perform simple or complex operational tasks in the field regardless of location. Remote expert applications are another example: an expert provides support services to onsite field engineers for training and improved worker productivity. Near real-time location services are also key. They provide near real-time visibility into assets and personnel throughout the workplace, with the latter contributing to improved health and safety.

Health, Safety and Environment (HSE)

Health and safety is paramount throughout the oil and gas industry: upstream, midstream, and downstream. Protection of people, property, and infrastructure is key to preventing personnel loss and injury, and environmental incidents, ultimately providing a smart, safe, and secure workplace. Multiple high level and low level use cases contribute to HSE. Existing safety systems deployed in the plants can be enhanced with the ability to proactively view potential health and safety threats through equipment monitoring or pipeline integrity monitoring within a refinery. Providing mobile gas detectors with location awareness can provide data for real-time visibility of gas detection and personnel location in the refinery or processing plant. These use cases require a secure, robust wired and wireless infrastructure to enhance HSE within the oil and gas industry.

Mobility

Mobility is a major contributor to optimized operations and efficiency in oil and gas. Typically, field personnel perform work using information from unconnected ruggedized laptops in the field or from paper-based documents. As companies strive to improve operations, they have deployed wireless networks to enable the workforce and gain access to data on demand. Workers with safe tablets and devices can now gain access to data and update information in real time. Access to equipment manuals on demand, updating work orders remotely, and access to advanced applications such as remote expert while out in the field ultimately provide huge improvements in worker productivity.

Location

Keep workers safe and optimize productivity by always knowing who is where and what they are doing—in the field, along the pipeline, or in the refinery. Asset location tracking technologies such as RFID tags across an IEEE 802.11 wireless network or GPS-enabled sensors connected to the network enable other use cases described in this CVD (see Solution Overview and Use Cases). Plant administrators, security personnel, users, asset owners, and health and safety staff have all expressed interest in location-based services to help them address several issues in the plant. Managing the location of assets and personnel throughout the plant is key to improving operational efficiency and improving safety and regulatory compliance. Location-based services and tracking:

- Improves safety for personnel and property

- Provides visibility into personnel behavior or presence in risk-associated areas with geo-fencing

- Provides insights into worker efficiency for the business to streamline workforce tasks and schedule the workforce efficiently

- Reduces nonproductive time and helps locate correct machinery assets

- Contributes to improved turnaround and cost savings during Shutdown/Turnaround and Outage (STO) events
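The geo-fencing item in the list above can be sketched as a point-in-polygon test. The minimal example below uses the standard ray-casting method on plant-local x/y coordinates. The zone outline, coordinates, and function name are hypothetical; a production location service would supply its own zone definitions and position data.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def inside_zone(point: Point, zone: List[Point]) -> bool:
    """Ray-casting test: True if point lies inside the polygon described by zone."""
    x, y = point
    inside = False
    n = len(zone)
    for i in range(n):
        x1, y1 = zone[i]
        x2, y2 = zone[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside  # each crossing to the right toggles the state
    return inside


# Hypothetical risk-associated area, in metres from an assumed site origin
HAZARD_ZONE = [(0.0, 0.0), (40.0, 0.0), (40.0, 25.0), (0.0, 25.0)]

worker_position = (10.0, 10.0)  # assumed position reported by a tag or device
if inside_zone(worker_position, HAZARD_ZONE):
    print("worker is inside the geo-fenced area")
```

Points that fall exactly on a boundary are not handled specially in this sketch; a real system would also need to account for location accuracy.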

Industrial Security

Historically, a security-by-obscurity approach was adopted for the process control domain. Networks were seen as standalone with no public access, proprietary protocols were assumed to be difficult to understand and compromise, and security incidents were more likely to be accidental. As oil and gas companies continue to adopt new technologies and use cases, new and diverse devices are being connected to the network. This brings with it a potentially wider set of security attack challenges (intentional, unintentional, external, and internal). To address these, companies need to adopt a comprehensive cyber security framework. As the Operational Technology (OT) and Information Technology (IT) domains converge, they must also align security strategies and coordinate to ensure end-to-end security. In addition to cyber security, physical safety and security solutions that include video surveillance, access control, and analytics are required. A foundation for security must include both cyber and physical elements to best protect the process control environment.

Cyber Security

IoT devices in the oil and gas environment collect a wealth of real-time, real-world data that can be crucial to achieving goals around improving operational efficiency, optimizing equipment operation and maintenance, safeguarding workers, and addressing compliance requirements. Commercial off-the-shelf (COTS) products are increasingly used in oil and gas to perform tasks that were originally assigned to purpose-built equipment. COTS products come with more potential vulnerabilities, and therefore greater security risk; they dramatically increase the size of the potential attack surface. Oil and gas companies have varying degrees of cyber security maturity, so a staged approach to implementing cyber security in alignment with key security standards such as IEC 62443 and NIST should be considered.

Initially, oil and gas companies can implement comprehensive visibility of the environment and create a baseline of connected assets. A risk assessment can then be made to measure and identify potential risks and establish a baseline of the environment. The company can further segment zones and conduits, develop and deploy consistent security policies to each segment, form mitigation strategies, and review the assessment regularly, making updates to reflect new equipment and new threats.
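The staged approach above (baseline the connected assets, segment them into zones and conduits, then apply a consistent policy per segment) can be sketched as a small data model. The zone names, asset names, and permitted conduit below are hypothetical examples only. They are not a recommended policy and are not drawn from IEC 62443, NIST guidance, or any Cisco product.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class SegmentationPolicy:
    """Maps baselined assets to zones and lists which zone pairs may communicate."""
    asset_zone: Dict[str, str] = field(default_factory=dict)
    conduits: Set[Tuple[str, str]] = field(default_factory=set)

    def add_asset(self, asset: str, zone: str) -> None:
        """Record an asset in the baseline and assign it to a zone."""
        self.asset_zone[asset] = zone

    def allow(self, src_zone: str, dst_zone: str) -> None:
        """Permit traffic from src_zone to dst_zone over an explicit conduit."""
        self.conduits.add((src_zone, dst_zone))

    def is_allowed(self, src_asset: str, dst_asset: str) -> bool:
        """Traffic is allowed within a zone or over an explicit conduit."""
        src = self.asset_zone.get(src_asset)
        dst = self.asset_zone.get(dst_asset)
        if src is None or dst is None:
            return False  # assets missing from the baseline are denied
        return src == dst or (src, dst) in self.conduits


# Hypothetical baseline and policy
policy = SegmentationPolicy()
policy.add_asset("wihart-gateway-1", "wireless-instrumentation")
policy.add_asset("historian-1", "operations")
policy.add_asset("tablet-17", "mobile-worker")
policy.allow("wireless-instrumentation", "operations")

print(policy.is_allowed("wihart-gateway-1", "historian-1"))  # conduit defined above
print(policy.is_allowed("tablet-17", "historian-1"))         # no conduit defined
```

Denying anything that is absent from the baseline reflects the ordering in the text: visibility comes first, and policy is then built on the known inventory.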

Physical Security

Physical security solutions provide broad capabilities for video surveillance, IP cameras, electronic physical access control, incident response and notifications, and personnel safety. Integrated solutions using connected cameras, electronic physical access control, geo-fencing perimeter protection solutions, and third-party intrusion can provide end-to-end support for safety and security detection, monitoring and management, and threat response across upstream, midstream, and downstream environments. Examples include video surveillance that provides perimeter security and is integrated with access control systems, for instance when an employee swipes an access card to gain entry, and video used during emergency incidents in the facility, such as gas leaks or man-down events, to assist safety staff and first responders.

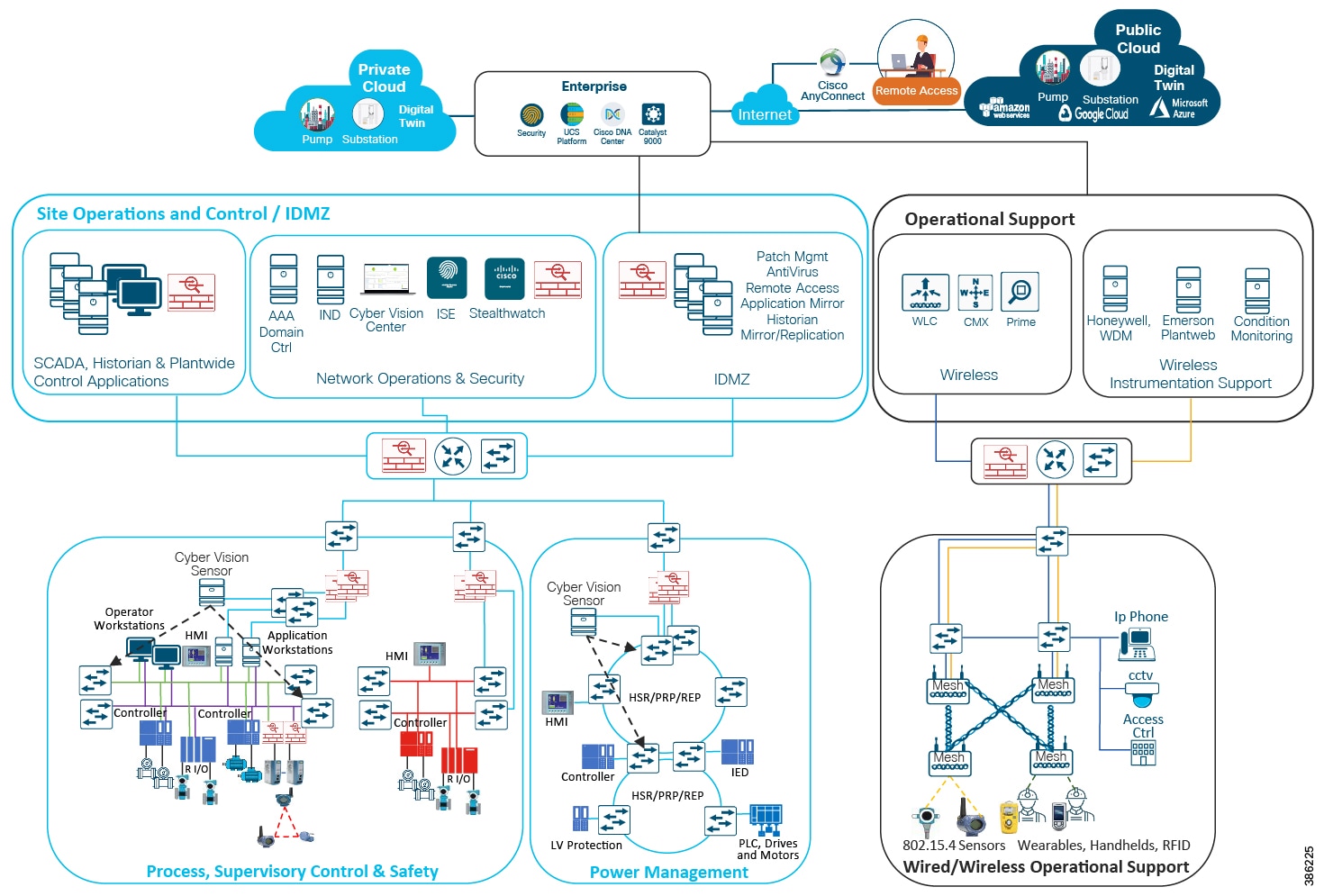

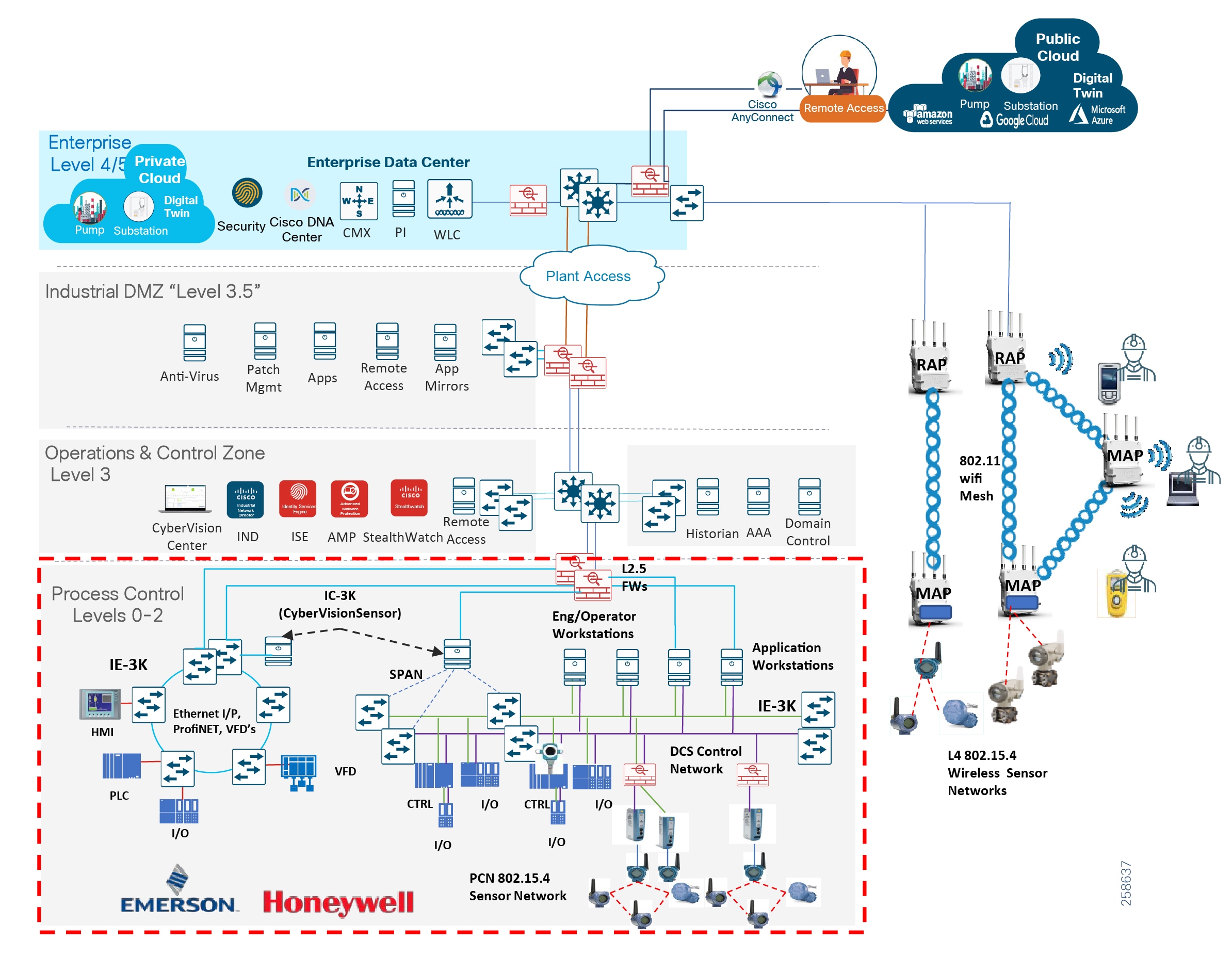

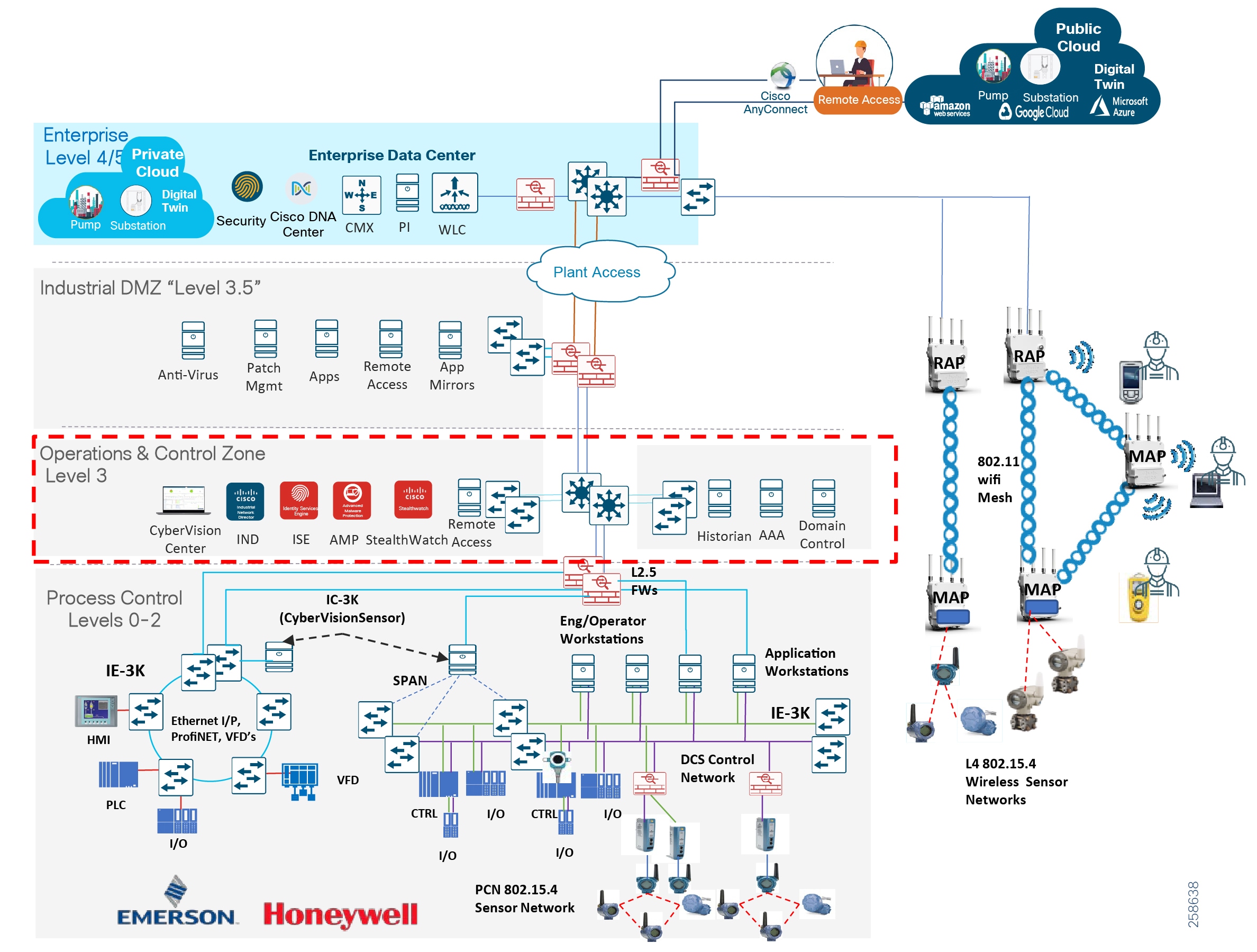

Solution Overview and Use Cases

Reference Architecture Overview

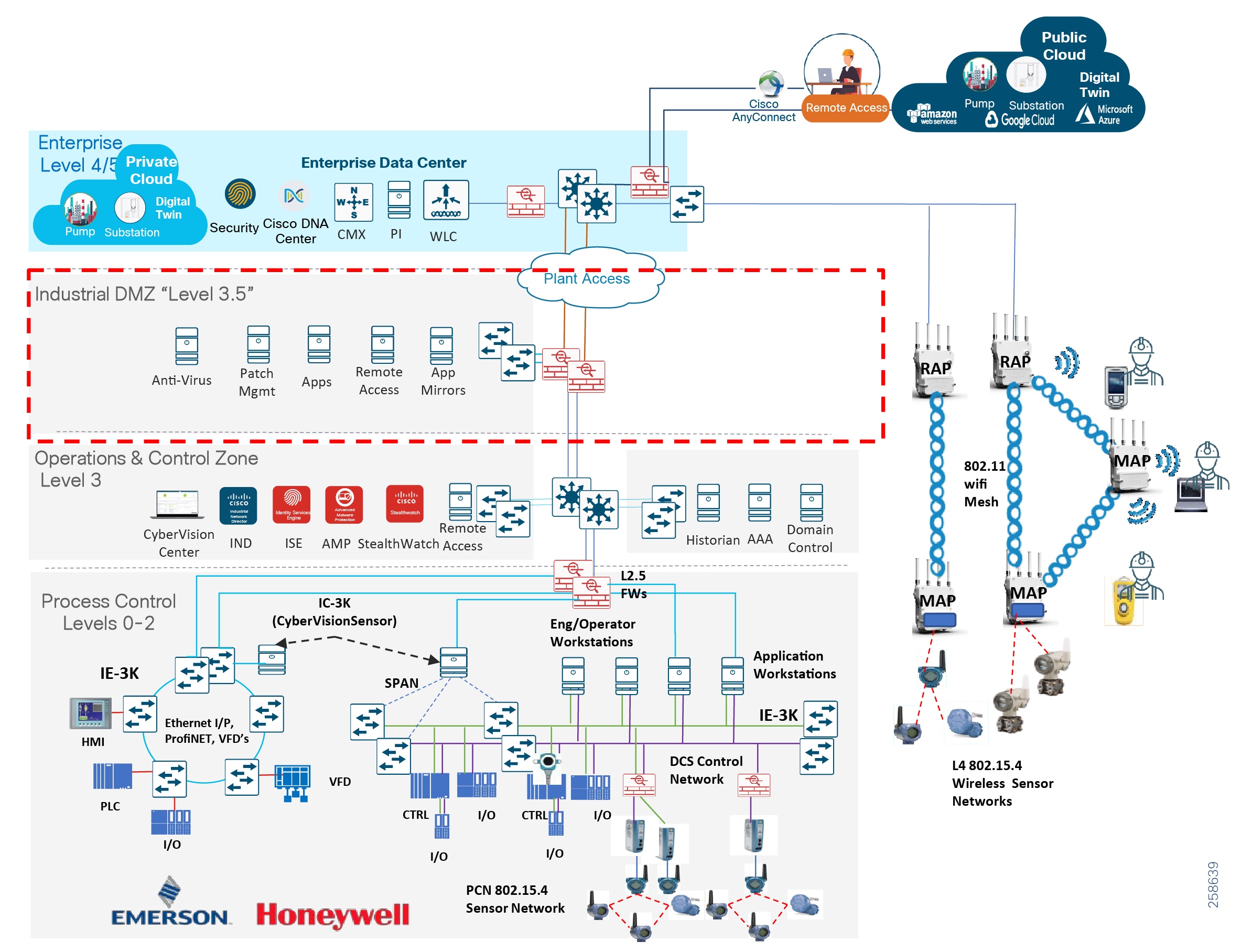

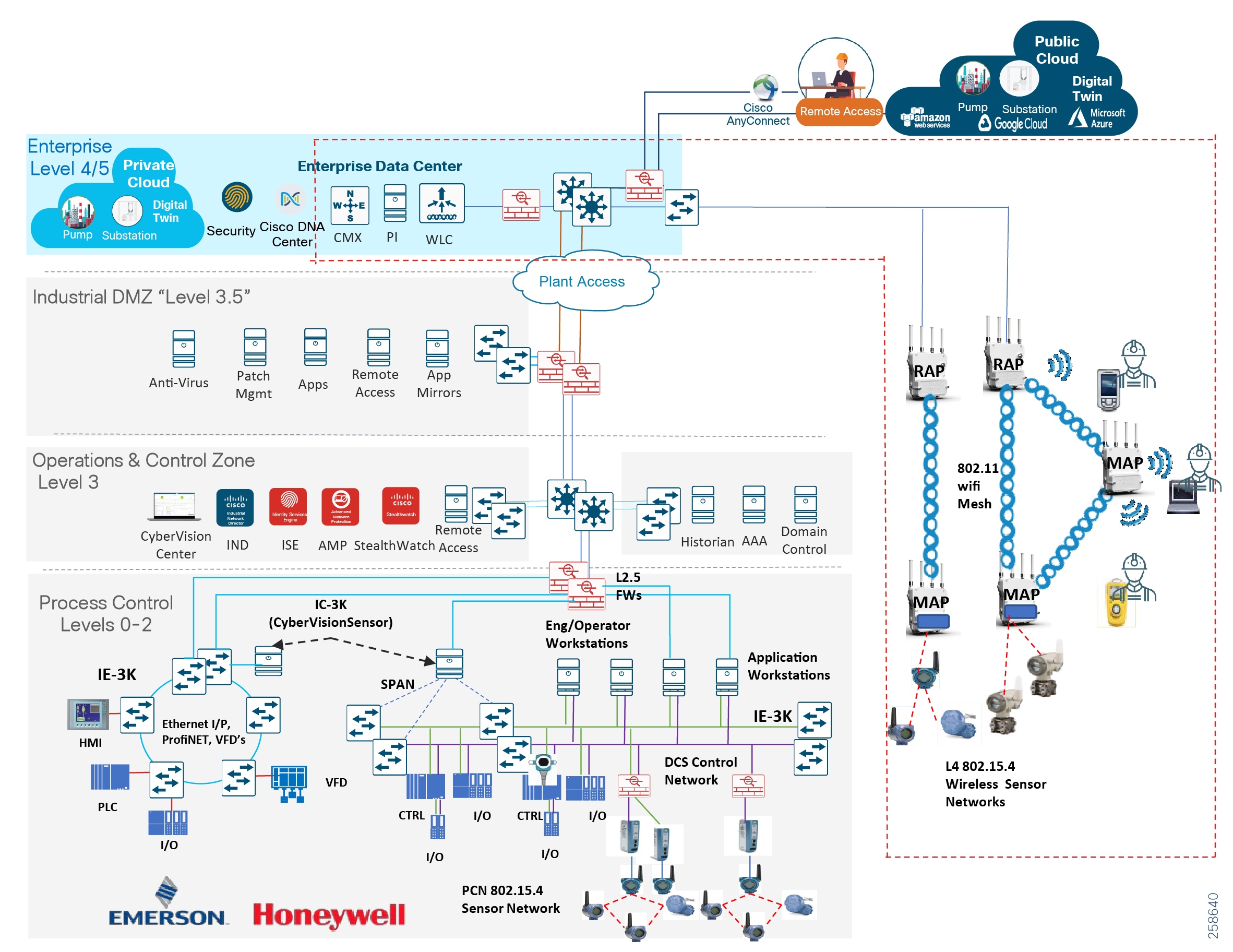

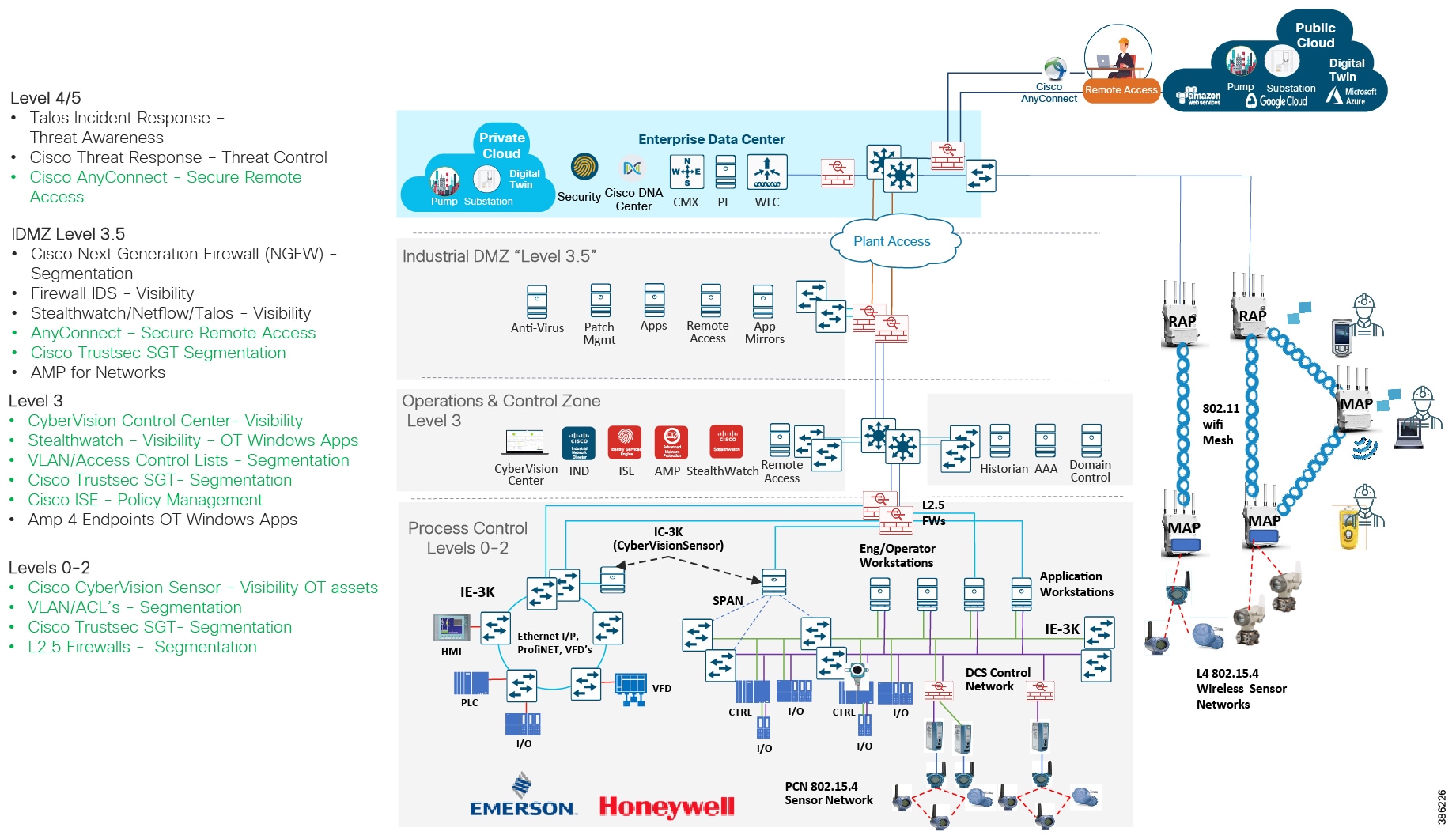

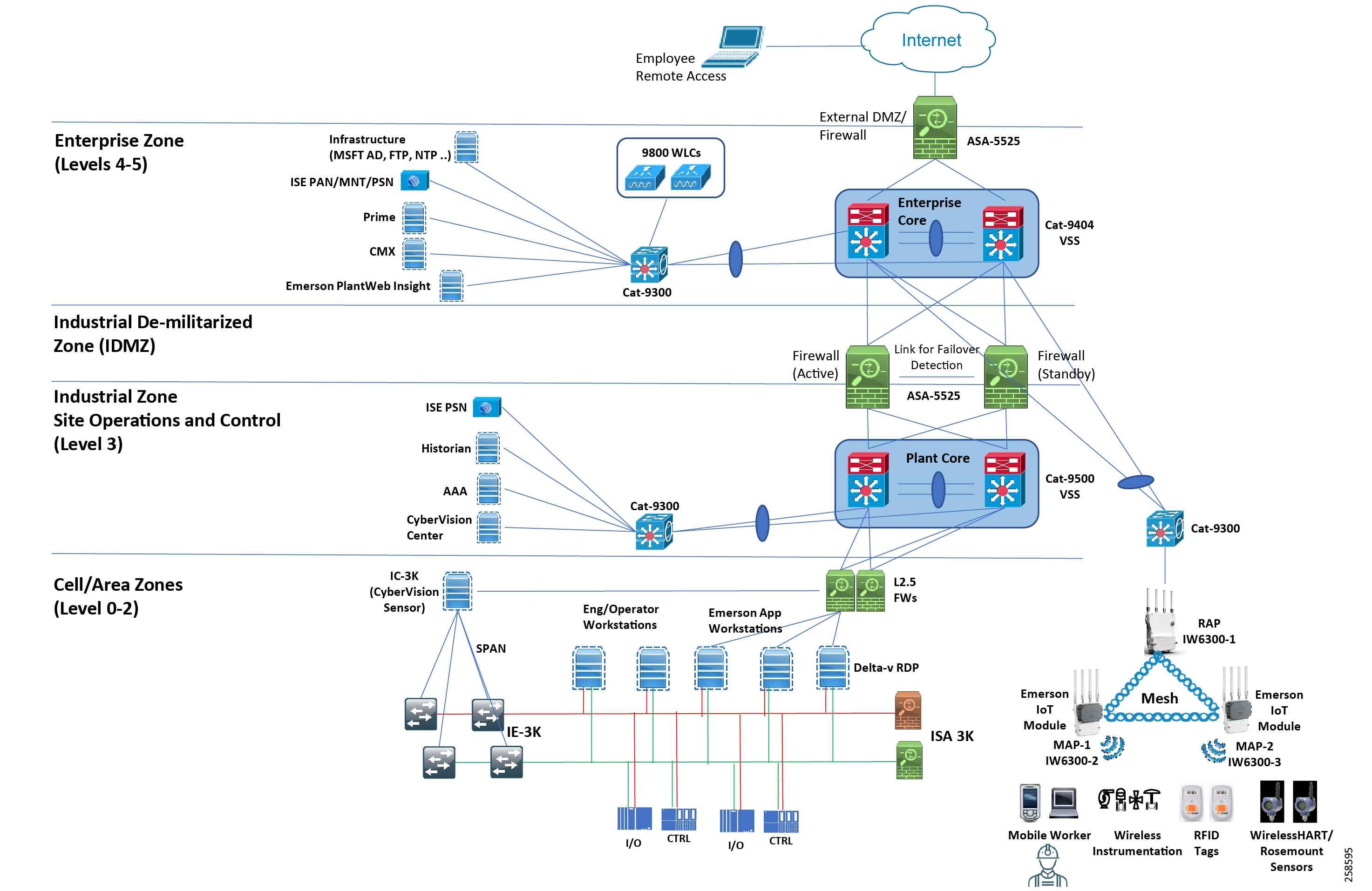

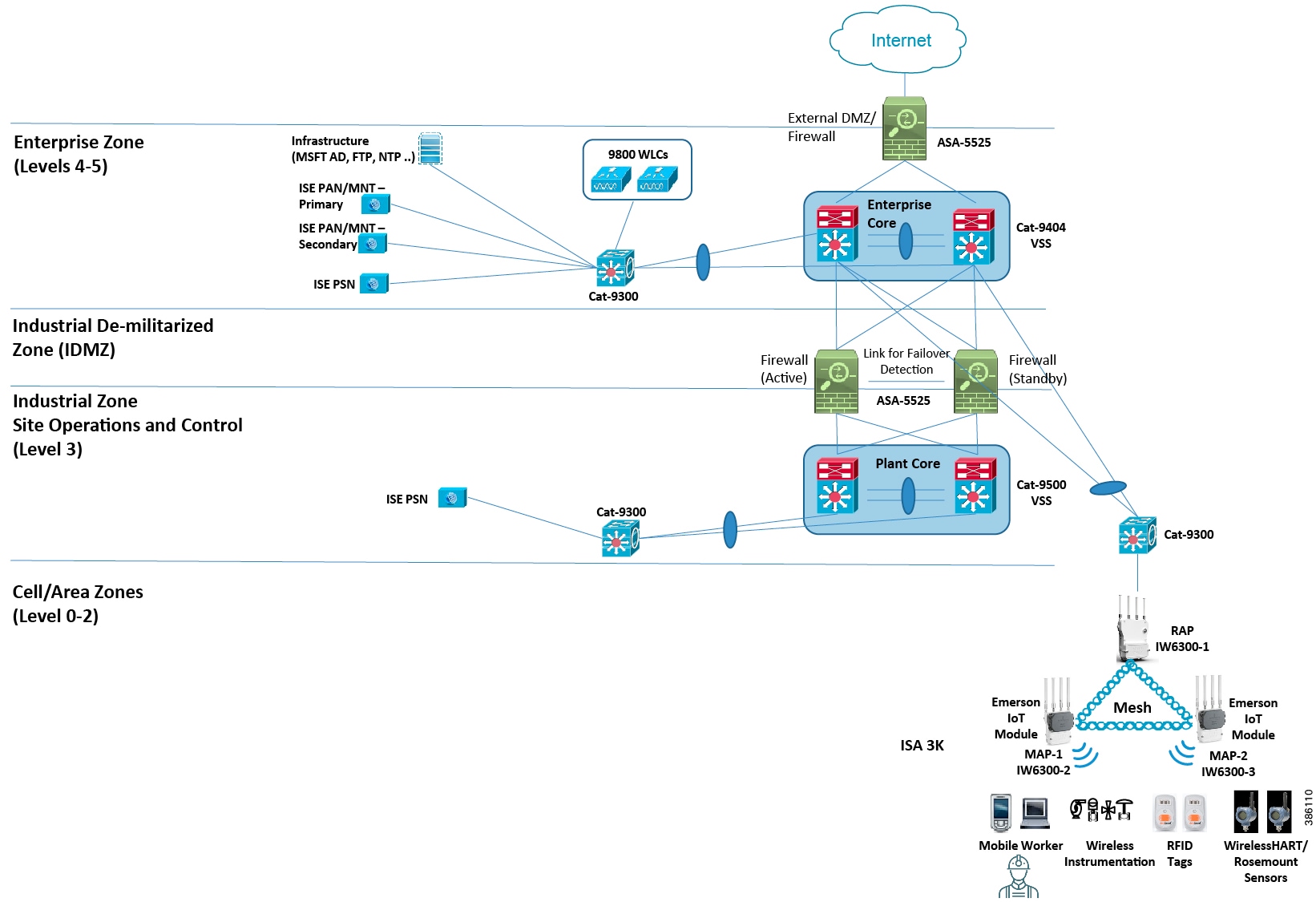

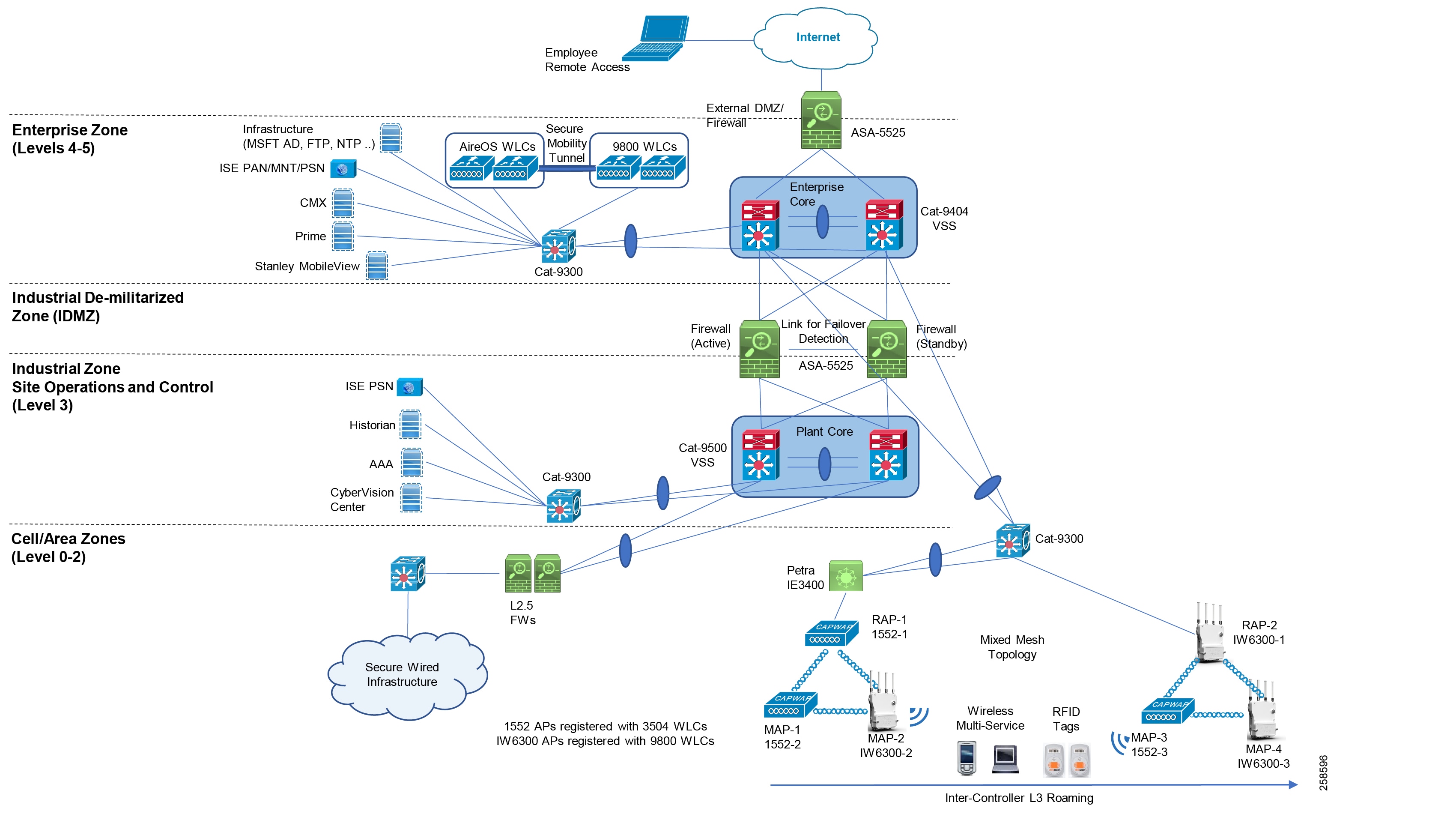

The Cisco oil and gas process control and refineries CVD defines a reference architecture to support multiple operational and non-operational services over a secure, robust communications infrastructure. The architecture applies wired and wireless network design, security, and data management technologies for process manufacturing environments. The reference architecture in Figure 3 is a blueprint for the security and connectivity building blocks required to deploy and implement digitized process control and refinery environments to significantly improve safety and business operation outcomes. The building blocks include:

- Wired process control networks with safety and energy management systems

- Industrial wireless network supporting the mobile worker and wireless instrumentation

- Industrial security throughout the plant including the Industrial DMZ

The use cases and building blocks described in this guide provide a complete end-to-end view of the reference architecture, although the focus of this guide is on the use cases and architecture enabled through 802.11 and 802.15.4 wireless technologies. The wired network and security architecture is built from the Industrial Automation CVD: https://www.cisco.com/c/en/us/td/docs/solutions/Verticals/Industrial_Automation/IA_Horizontal/DG/Industrial-AutomationDG.html. The reader should be familiar with the architectural guidance and design principles in that guide, although there are some slight differences between the oil and gas process control environment and the industrial automation design which are highlighted in Oil and Gas Process Control and Refineries Reference Design—Wired.

Figure 3 Reference Architecture

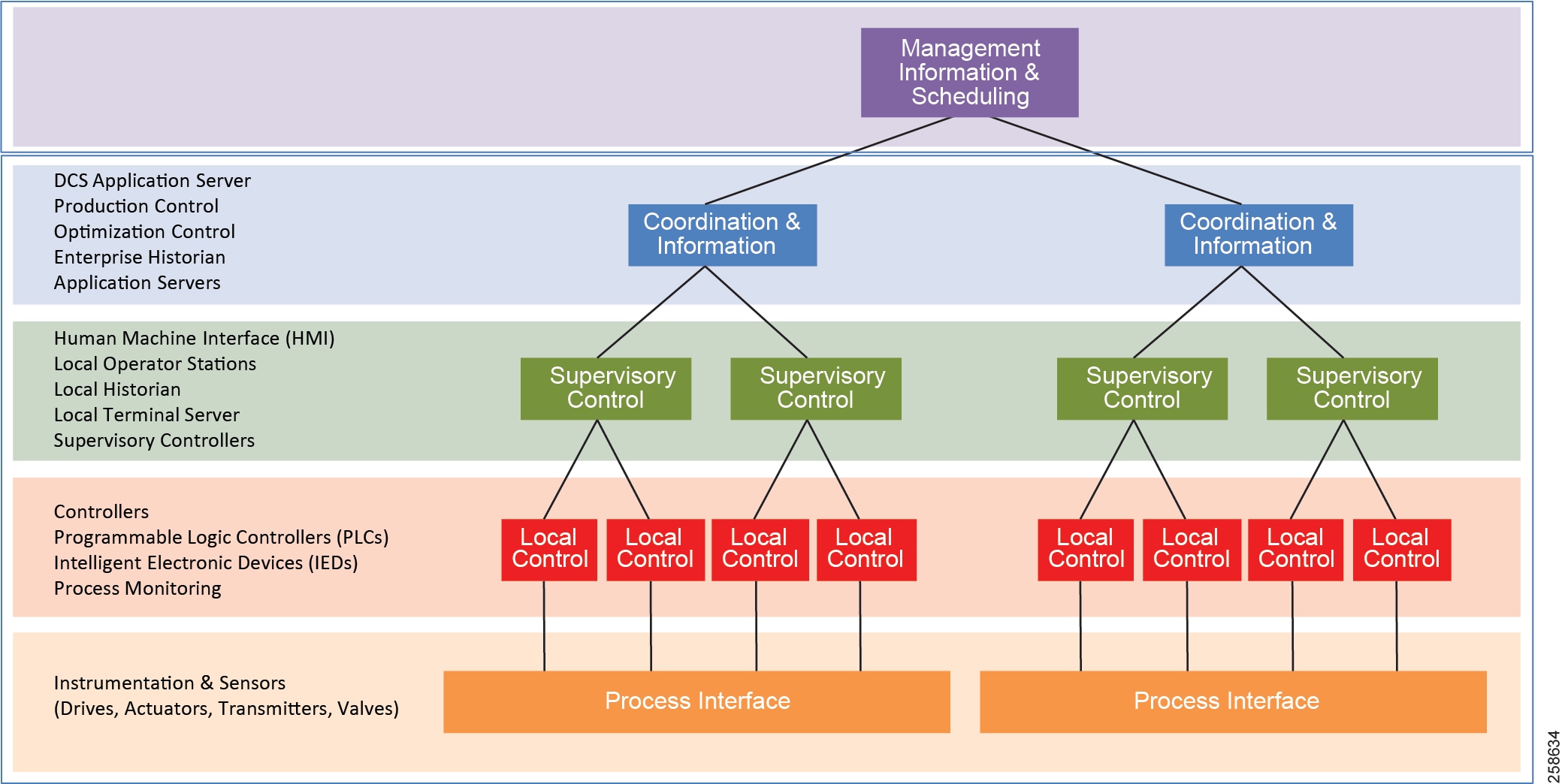

Process Control and Distributed Control Systems

A refinery or processing facility deploys many systems to ensure safety and reliability. Communications must support process control applications from the instrument or sensor to the control room applications. Historically, several of these systems would have been deployed independently; however, most process control vendors now offer integrated solutions incorporating many or all of the functions into common platforms. The typical plant will employ a Distributed Control System (DCS) that divides the control tasks into multiple distributed systems, so that if a part of the system fails, the other parts continue to operate independently. Figure 4 depicts the DCS model.

Figure 4 Distributed Control System Model

A DCS is process-driven rather than event-driven and typically produces a steady stream of process information with less reliance on determining the quality of data, as communication with control hardware is much more reliable. The DCS typically consists of multiple controllers or PLCs implementing multiple closed-loop controls. This makes a DCS suitable for highly interconnected local plants such as process facilities, refineries, and chemical plants.

Safety Systems

Ensuring safety and reducing risk in the production environment is key to any refinery operation, with priority on protecting workers and the surrounding environment from the accidental release of material. This is especially relevant in refineries and process manufacturing facilities where high temperatures, high pressures, and hazardous materials are handled. Safety-instrumented systems are deployed across the plant to maintain safe operations when an anomalous or dangerous condition occurs. These systems are independent of the process control systems that control the same equipment or processes, ensuring that safety systems are not compromised. Because safety systems are so critical, a robust, secure, and redundant network is required to ensure high availability and integrity of these systems.

Energy Management and Non-process Systems

Within refineries and processing plants there are many non-process control related systems and facilities, including power generation, energy monitoring systems, tank storage, flaring, and control rooms. Energy monitoring and control systems can play a substantial role within a refinery to manage and improve energy performance, energy re-use, and reduction in emissions. Most refineries and processing plants include energy-intensive processes with some form of power generation and co-generation or combined heat and power generation (CHP). CHP uses internally generated fuels for power production, which yields significant cost savings and improved energy efficiency in a refinery. Networks are required for energy management, power generation, and non-process control related systems within refineries or processing plants. These networks are mostly wired, but they may include wireless instrumentation in the plants, such as 802.15.4 WiHART or ISA100.11a sensors that relay equipment metrics like vibration and temperature.

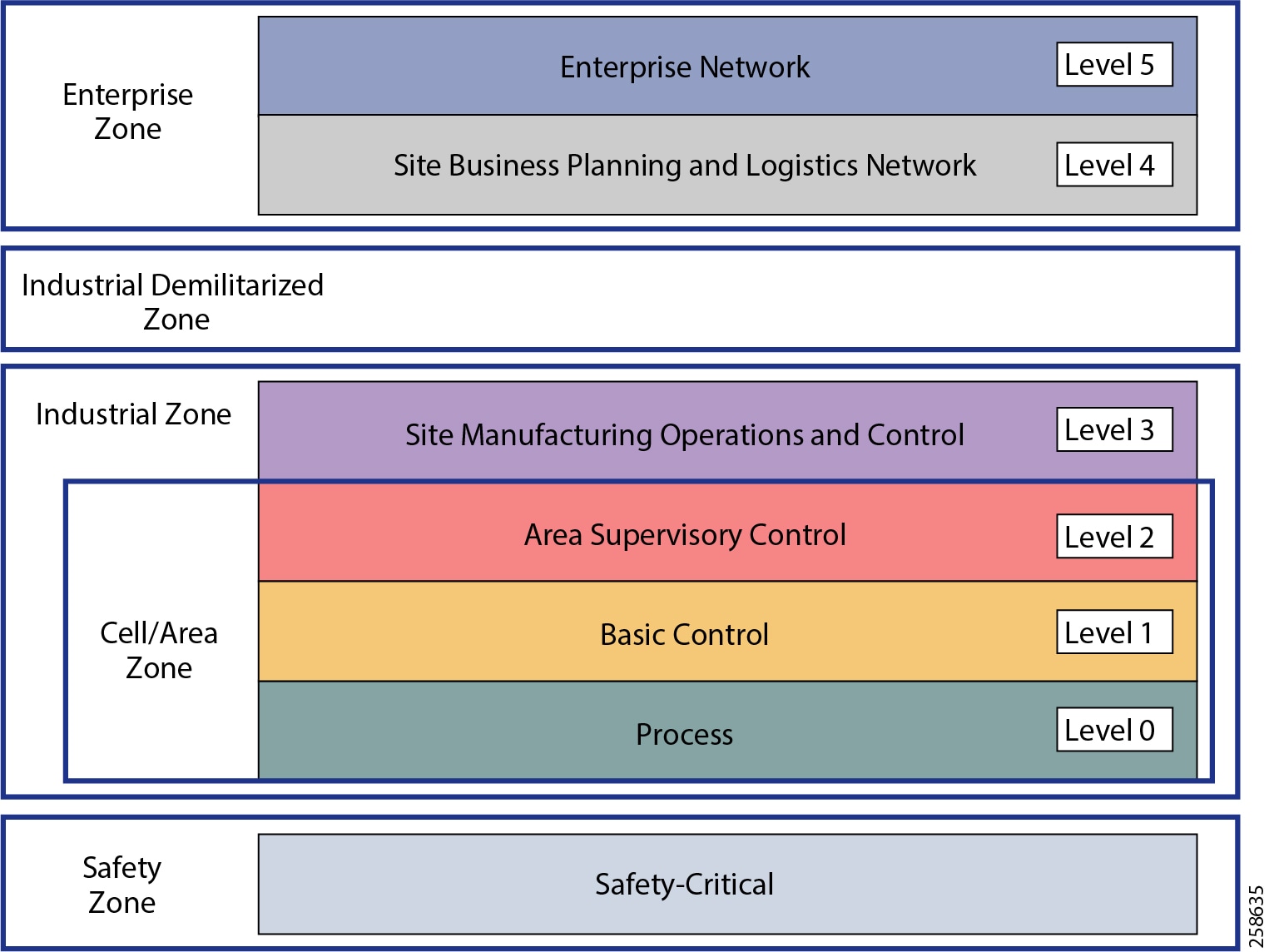

Plant Logical Framework

To understand the security and network system requirements of a PCN, this guide uses a logical framework to describe the basic functions and composition of an industrial system. The Purdue Model for Control Hierarchy (reference ISBN 1-55617-265-6) is a common and well-understood model in the industry that segments devices and equipment into hierarchical functions.

Figure 5 Plant Logical Framework

The model shown in Figure 5 identifies levels of operations and subsequent sections highlight their functions. More information about the model can be found in the Industrial Automation CVD:

https://www.cisco.com/c/en/us/td/docs/solutions/Verticals/Industrial_Automation/IA_Horizontal/DG/Industrial-AutomationDG.html

Safety Zone

Safety in process control systems is so important that safety networks are not only isolated from the rest of the process control network; they also typically have color-coded hardware and are subject to more stringent standards. In addition, Personal Protective Equipment (PPE) and physical barriers are required to promote safety.

Cell/Area Zone

The Cell/Area Zone is a functional area within a plant facility; many plants have multiple Cell/Area Zones. Larger plants can have zones designated for fairly broad processes that have smaller subsets of control within them where the process is broken down into multiple distributed subsets. This is typical of a distributed control system as defined earlier.

Level 0 Process

Level 0 consists of a wide variety of sensors and actuators involved in the basic industrial process. These devices perform the basic functions of the IACS as part of the physical process, such as driving a motor, measuring variables such as temperature and pressure, and setting an output.

Level 1 Basic Control

Level 1 consists of controllers that direct and manipulate the local process, primarily interfacing with the Level 0 devices (for example, I/O, sensors and actuators).

IACS controllers are the intelligence of the industrial control system, making decisions based on feedback from the devices found at Level 0. Controllers act alone or in conjunction with other controllers to manage the devices and the industrial process.

Level 2 Supervisory Control

Level 2 represents the applications and functions associated with the Cell/Area Zone runtime supervision and operation, including DCS, HMI, and supervisory control and data acquisition (SCADA) software. Depending on the size of the plant, some of these functions may reside at the site level (Level 3). An example is control room workstations monitoring processes site-wide.

Industrial Zone

The industrial zone comprises the Cell/Area Zones (Levels 0 to 2) and site-level (Level 3) activities. The industrial zone is important because all the IACS applications, devices, and controllers critical to monitoring and controlling the plant floor IACS operations are in this zone. To preserve smooth plant operations and functioning of the IACS applications and IACS network in alignment with standards such as IEC 62443, this zone requires clear logical segmentation and protection from Levels 4 and 5.

Level 3 Site Operations and Control

Level 3 is where the applications and systems reside that support plant-wide control and monitoring. A centralized control room with operator stations monitoring and controlling many systems within the plant is at this level. The Level 3 IACS network may communicate with Level 1 controllers and Level 0 devices, function as a staging area for changes into the industrial zone, and share data with the enterprise (Levels 4 and 5) systems and applications via the DMZ. Examples of services at this level are historians, control applications, network and IACS management software, and network security services. Control applications will vary greatly depending on the plant.

Enterprise Zone

Level 4 Site Business Planning and Logistics

Level 4 is where the functions and systems that need standard access to services provided by the enterprise network reside. This level is viewed as an extension of the enterprise network. The basic business administration tasks are performed here and rely on standard IT services. Although important, these services are not considered critical to the IACS and the plant floor operations. Because of the more open nature of the systems and applications within the enterprise network, this level is often viewed as a source of threats and disruptions to the IACS network. Examples of applications would include Internet access, email, non-critical plant systems such as Manufacturing Execution Systems (MES), and access to enterprise applications such as SAP.

Level 5 Enterprise

Level 5 is where the centralized IT systems and functions exist. Enterprise resource management, business-to-business, and business-to-customer services typically reside at this level. The IACS must communicate with the enterprise applications to exchange manufacturing and resource data. Direct access to the IACS is typically neither required nor recommended from this level.

Industrial DMZ

Although not part of the Purdue reference model, the oil and gas process control and refineries solution includes a DMZ between the industrial and enterprise zones. Industrial security standards such as ISA-99 (also known as IEC-62443), NIST 800-82, and Department of Homeland Security INL/EXT-06-11478 include an Industrial DMZ (IDMZ) as part of a security strategy. The IDMZ provides a buffer zone between the enterprise zone and the industrial/plant zone.

The Industrial DMZ is deployed within plant environments to separate the enterprise networks from the operational domain of the plant environment. Downtime in the IACS network can be costly and have a severe impact on revenue, so the operational zone cannot be impacted by any outside influences. Network access is not permitted directly between the enterprise and the plant. However, data and services must still be shared between the zones, so the Industrial DMZ provides the architecture for the secure transport of data. This unofficial zone is crucial in securing and isolating the mission-critical operations network from the corporation's own support services as well as from the outside world. Typical services deployed in the DMZ include remote access servers and mirrored services.
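The level-to-zone relationships described in this section can be summarized as a small lookup table. The following Python sketch is illustrative only: the class and function names are hypothetical, the "3.5" numbering for the Industrial DMZ follows the convention used later in this guide, and the short label given to Level 5 is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurdueLevel:
    number: float
    name: str
    zone: str                       # top-level zone the level belongs to
    in_cell_area_zone: bool = False  # Levels 0-2 also sit inside a Cell/Area Zone


# Level names follow the headings in this section; the Level 5 label is assumed.
LEVELS = {
    lvl.number: lvl
    for lvl in [
        PurdueLevel(0, "Process", "Industrial Zone", True),
        PurdueLevel(1, "Basic Control", "Industrial Zone", True),
        PurdueLevel(2, "Supervisory Control", "Industrial Zone", True),
        PurdueLevel(3, "Site Operations and Control", "Industrial Zone"),
        PurdueLevel(3.5, "Industrial DMZ", "Industrial DMZ"),
        PurdueLevel(4, "Site Business Planning and Logistics", "Enterprise Zone"),
        PurdueLevel(5, "Enterprise", "Enterprise Zone"),
    ]
}


def top_zone(level: float) -> str:
    """Return the top-level zone for a Purdue level number."""
    return LEVELS[level].zone


def must_traverse_idmz(level_a: float, level_b: float) -> bool:
    """True when one endpoint is industrial and the other is enterprise."""
    return {top_zone(level_a), top_zone(level_b)} == {
        "Industrial Zone",
        "Enterprise Zone",
    }


if __name__ == "__main__":
    print(top_zone(2))                # Industrial Zone
    print(must_traverse_idmz(1, 4))   # True
    print(must_traverse_idmz(0, 3))   # False
```

A table like this can be useful when labeling assets in an inventory, because it makes explicit which conversations are expected to be brokered by the IDMZ.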

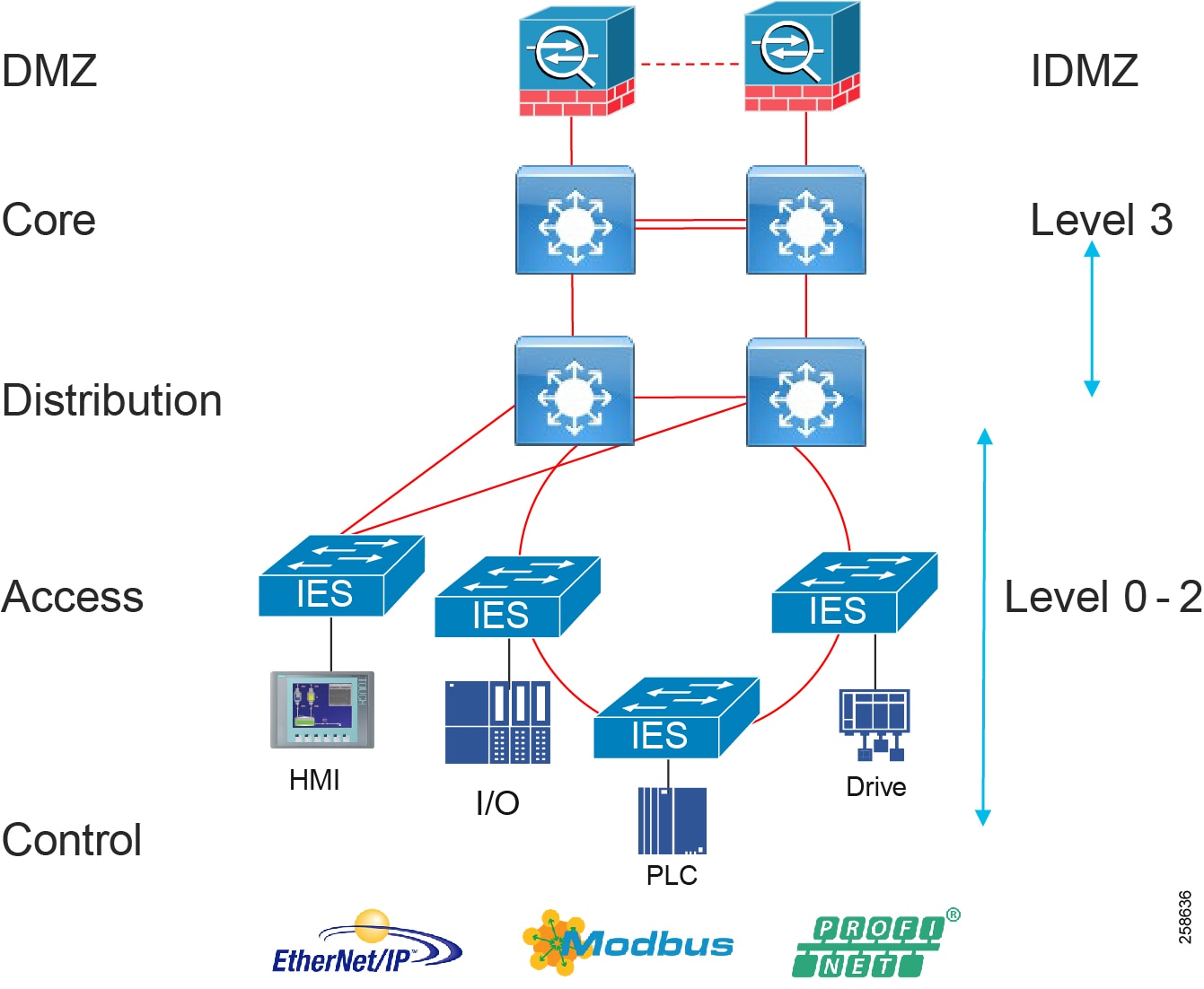

Oil and Gas Process Control and Refineries Reference Design—Wired

The wired network design supporting process control, safety, and power networks is very much aligned with the Industrial Automation CVD. The reference wired design is built on the foundation described in this CVD, though there are some slight differences. This section provides a high-level view of the architecture and identifies differences from the Industrial Automation design.

The typical enterprise campus network design is ideal for providing resilient, highly scalable, and secure connectivity for all network assets. The campus model is a proven hierarchical design that consists of three main layers: core, distribution, and access. The DMZ layer is added to provide a security interface outside of the operational plant domain. Figure 6 highlights the alignment between the Purdue model and the enterprise campus model. Although detailed design and product guidance is out of scope for this document, the following sections outline the wired reference design for the oil and gas process control and refinery reference architecture.

Figure 6 Oil and Gas Process Control and Refineries Reference Design

Process Control Levels 0-2

The process control network is where IACS devices and controllers execute the real-time control of an industrial process. This network connects sensors, actuators, drives, controllers, and any other IACS devices that exchange real-time I/O traffic. It is essentially the major building block within the process control network architecture.

Within process control Levels 0-2, there are key requirements and industrial characteristics that the networking platforms must align with and support. These are very much aligned with the Cell/Area Zone design and recommendations in the Industrial Automation CVD, in the section “Industrial Networking and Security Design for the Cell/Area Zone”.

Environmental conditions such as temperature, humidity, and invasive materials require networking platforms with different physical attributes. In addition, continuous availability is critical to ensure the uptime of industrial processes to minimize impact to revenue. Finally, industrial networks also differ from IT in that they may need IACS protocol support to integrate with IACS systems.

Figure 7 Process Control Levels 0-2

Availability and Access Switching

Availability of the critical IACS communications is a key factor and consideration when designing process control networks. Network topologies and resiliency design choices, such as QoS and segmentation, are critical in helping maintain availability of IACS applications, reducing the impact of a failure or security breach.

Within process control networks there are a number of factors that define the layout of the access network. The physical layout of the plant, cost of cabling, and desired availability are three important factors in plants. There are some differences in networking access between industrial automation and the oil and gas process control network when looking at typical DCS deployments. Automation vendors have preferred deployment models to support a process control network or DCS. Emerson and Honeywell both support dual LAN architectures to provide availability of their DCS at levels 0-3. The following provides a quick overview of access topologies for process control networks.

Within the classic dual LAN architecture in process environments, the LANs are kept physically separate as LAN A and LAN B. The LANs do not converge at any point in the architecture, from the controllers and I/O through to the workstations and application servers. Each end controller device will have two Network Interface Cards (NICs), or an A and B redundant pair of devices, independently connected to each LAN. Redundancy and availability are provided by always having at least one LAN available.
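The non-convergence rule can be checked mechanically against a cabling plan. The sketch below is a minimal illustration, assuming each LAN is described as a list of links between named nodes; the function name and the device names are hypothetical and do not correspond to any vendor tool.

```python
def shared_infrastructure(lan_a_links, lan_b_links, dual_homed):
    """Return nodes that appear in both LANs but are not dual-homed end devices.

    An empty result means LAN A and LAN B never converge on a common switch.
    """
    nodes_a = {node for link in lan_a_links for node in link}
    nodes_b = {node for link in lan_b_links for node in link}
    return (nodes_a & nodes_b) - set(dual_homed)


if __name__ == "__main__":
    # End devices with one NIC on each LAN (hypothetical names).
    dual_homed = {"controller1", "workstation1"}

    lan_a = [("controller1", "swA1"), ("swA1", "swA2"), ("swA2", "workstation1")]
    lan_b = [("controller1", "swB1"), ("swB1", "swB2"), ("swB2", "workstation1")]
    print(shared_infrastructure(lan_a, lan_b, dual_homed))  # set()

    # A cross-connect between the LANs violates the rule.
    bad_lan_a = lan_a + [("swA2", "swB2")]
    print(shared_infrastructure(bad_lan_a, lan_b, dual_homed))  # {'swB2'}
```

Only the dual-homed end devices are allowed to appear on both LANs; any other shared node indicates a point where the two LANs meet.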

The ring topology provides resiliency in that there is always a network path available even with a single link failure. Resilient Ethernet Protocol (REP) helps provide resiliency in rings. REP can offer a complete view of the state of the network ring, is deterministic and predictable during failures, is easy to configure, and can converge in under 200 ms. Newer resiliency protocols for Ethernet rings, such as High Availability Seamless Redundancy (HSR), are hitless and are supported on the industrial Ethernet line of switches. Rings can be used with the dual LAN if kept physically separate, again with no LAN A and LAN B convergence.
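The single-link-failure property of a ring can be illustrated with a simple connectivity check. This sketch models only the physical topology; it does not model REP or HSR behavior or convergence time, and the function and switch names are hypothetical.

```python
from collections import deque


def is_connected(nodes, links):
    """Breadth-first search: True if every node is reachable from the first."""
    adjacency = {node: set() for node in nodes}
    for a, b in links:
        adjacency[a].add(b)
        adjacency[b].add(a)
    start = nodes[0]
    seen = {start}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for neighbor in adjacency[current] - seen:
            seen.add(neighbor)
            queue.append(neighbor)
    return len(seen) == len(nodes)


def survives_any_single_link_failure(nodes, links):
    """True if the topology stays connected after removing any one link."""
    return all(
        is_connected(nodes, links[:i] + links[i + 1:])
        for i in range(len(links))
    )


if __name__ == "__main__":
    switches = ["sw1", "sw2", "sw3", "sw4"]
    ring = [("sw1", "sw2"), ("sw2", "sw3"), ("sw3", "sw4"), ("sw4", "sw1")]
    chain = ring[:-1]  # same switches without the closing link
    print(survives_any_single_link_failure(switches, ring))   # True
    print(survives_any_single_link_failure(switches, chain))  # False
```

The closed ring tolerates the loss of any one link, whereas the open chain is partitioned by a single failure.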

In a redundant star architecture, there are only two hops in the path between devices and there is redundancy to provide fast convergence. The classic star topology has a switch connected to a redundant pair of switches upstream. The network has an element of predictability because of the consistent number of hops in the path. Star topologies may be less utilized in these environments if dual LANs are deployed, because this topology breaks the fundamental dual LAN rule that the LANs must not converge.

Resiliency protocols for the access layer are described in detail in the Industrial Automation CVD in the section “Industrial Networking and Security Design for the Cell/Area Zone”. These include REP, HSR, Parallel Redundancy Protocol (rings or non-rings, dual LAN), and Media Redundancy Protocol (MRP-PROFINET deployments).

Note that a fair amount of wired instrumentation is connected over 4-20 mA loops to marshaling I/O cabinets and into the controllers, which are generally housed in controlled environments. The controllers are connected to Ethernet. For any harsher environments, or where DIN rail-mountable switches are mandated, the Cisco Industrial Ethernet (IE) series switches are recommended. Due to their ruggedized IP30-rated design, the Cisco IE switches are excellent access-layer switches for industrial applications.

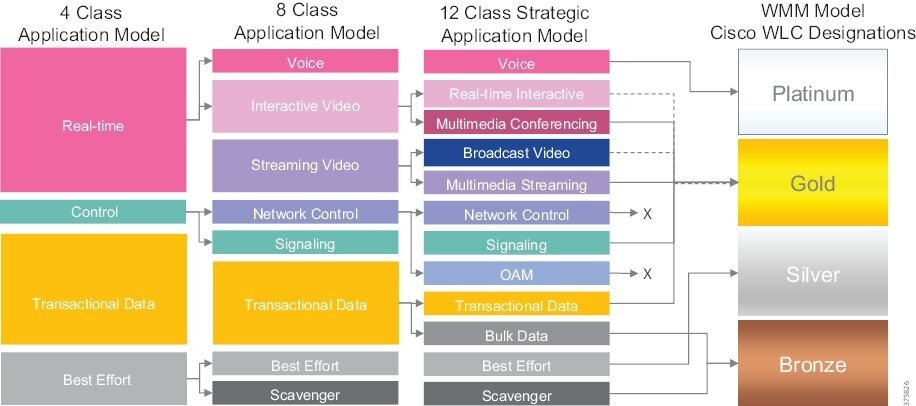

Performance and QoS

Depending on the industrial application, delay, variance, or a lack of determinism in the network can shut down an industrial process and impact its overall efficiency. Achieving predictable, reliable packet delivery is a fundamental requirement for a successful network design in process control networks. A design needs to factor in the number of network hops, bandwidth requirements, and network QoS and prioritization to provide a greater degree of determinism and performance for process control network applications. The Industrial Automation CVD section on the Cell/Area Zone highlights QoS models, traffic types, and IACS application requirements in its design guidance and can be leveraged for process control networks.

Security

When implementing industrial network security, customers are concerned with how to keep the environment safe and operational. It is recommended to follow an architectural approach to securing the control system and process domain. The Purdue Model of Control Hierarchy, International Society of Automation 95 (ISA95), IEC 62443, and NIST 800-82 are examples of such architectures. Key security requirements in the process control network are very much aligned with Industrial Automation. These include device and IACS asset visibility, secure access to the network, segmentation, group-based security policy, and Layer 2 hardening (control plane and data plane) to protect the infrastructure. Asset visibility, anomaly detection, and security policy and management are all provided with Cisco secure networking, Cyber Vision, ISE, and StealthWatch.

Management

Plant infrastructures are becoming more advanced and connected than ever before. Within the process control network there are two personas and skillsets taking on the responsibility of the network infrastructure, namely IT and OT staff. OT teams require an easy-to-use, lightweight, and intelligent platform that presents network information in the context of automation equipment. Key functions at this layer will include plug-and-play, easy switch replacement, and ease of use to maintain the network infrastructure. The Industrial Network Director supports this function. The management design and functions are aligned with Industrial Automation Cell/Area Zone design.

Recommended Cisco platforms for access and process control networks:

- Cisco IE 3200, Cisco IE 3300, Cisco IE 3400, Cisco IE 4000, Cisco IE 4010, and Cisco IE 5000

- Carpeted access with no industrial protocol support: Cisco Catalyst 9300 and Cisco Catalyst 9200

- Security: Cyber Vision, ISE, TrustSec, and StealthWatch

- Industrial Network Director providing OT network management support

Distribution Layer Switches

At the distribution layer, the process control and refinery architecture is very much aligned with the Industrial Automation CVD. The distribution layer facilitates connectivity between the access layer and other services. In smaller plants the distribution and core layers can converge onto the same platform. The distribution layer may also provide site-wide or plant-wide connectivity. These switches are generally housed in controlled environments such as control rooms and so may be more aligned with traditional enterprise switching. Where certain industrial protocols are required, such as in power networks, industrial switches may be used at this layer instead.

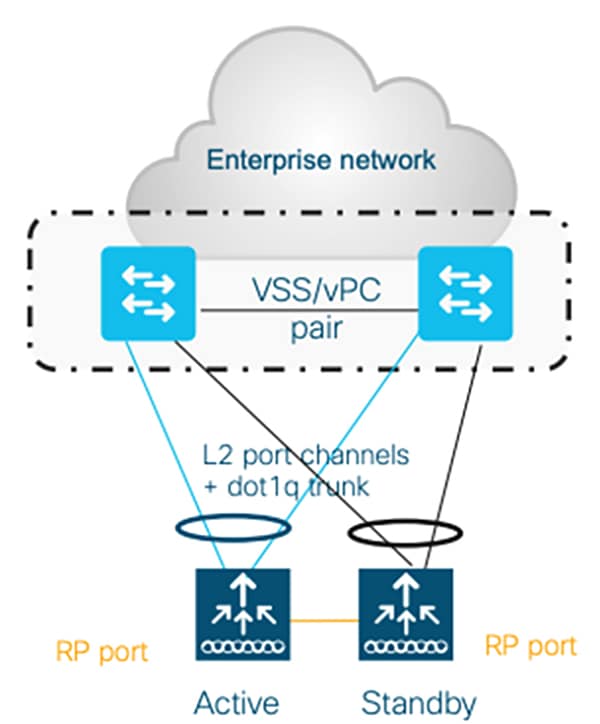

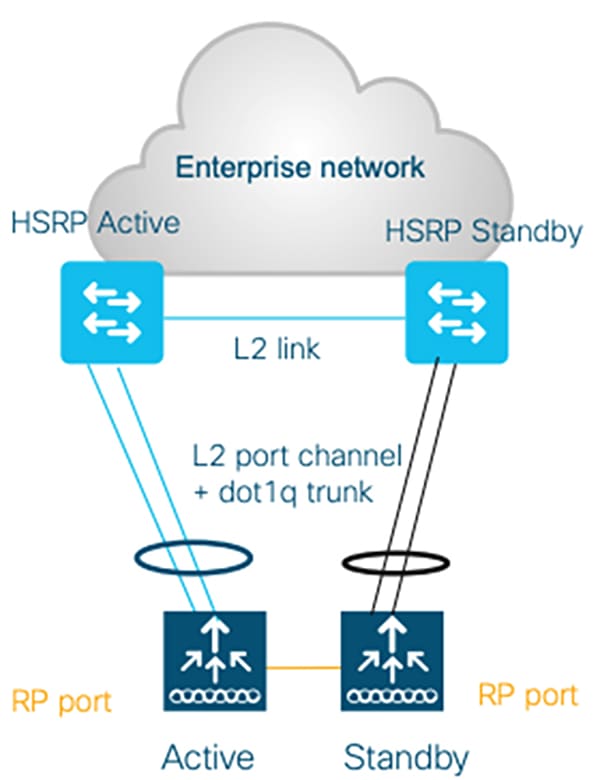

Resiliency is provided by physically redundant components, such as redundant switches, power supplies, and switch stacking, and by redundant logical control-plane mechanisms such as HSRP, VRRP, and stateful switchover.

Distribution Layer Cisco Platforms

- Cisco Catalyst 9300 and Cisco Catalyst 9500

- Cisco IE 5000, Cisco IE 4000, Cisco IE 4010, and Cisco IE 3400

Level 2.5 Firewalls

Segmentation may be required between systems within Levels 0-2 and between Levels 0-2 and Level 3. The Level 2.5 firewall isolates the PCN from other Level 0-2 networks across the plant, such as power or other control systems that are not part of the DCS. It essentially provides access control for the DCS and is seen in some automation vendor reference architectures such as Emerson Delta-V.

Site Operations and Control Level 3

Figure 8 Site Operations and Control Level 3

The majority of industrial plant facilities have a very different physical environment at this layer of the architecture compared to process control Level 2 and below. The networking characteristics are less intensive with respect to real-time performance for the industrial protocols, and equipment is physically situated in an environmentally controlled area, cabinet, or room. The core and distribution networking platforms and the data center are deployed at this layer. The data center houses the plant-wide applications such as historians, asset management, plant floor visualization, monitoring, and reporting. Network management and plant security services are also housed here, including IND, Cyber Vision Center, ISE, and StealthWatch. This level provides the networking functions to route traffic between the process control area/zones and the applications within site operations and control.

Core and Distribution Layer Cisco Platforms

- Cisco Catalyst 9300 and Cisco Catalyst 9500

- Cisco Catalyst management through DNA-C

- Security: ISE, Cyber Vision, StealthWatch, and AMP for endpoints

- Cisco Hyperflex and Cisco UCS data center and compute platforms

Industrial DMZ Level 3.5

Figure 9 Industrial DMZ Level 3.5

The Industrial Zone (Levels 0-3) contains all IACS network and automation equipment that is critical to controlling and monitoring plant-wide operations. Industrial security standards including IEC-62443 recommend strict separation between the industrial zone (Levels 0-3) and the enterprise/business domain (Levels 4-5). This segmentation and strict policy help to provide a secure industrial infrastructure and availability of the industrial processes. Data, such as ERP data, must still be shared between the two entities, and security networking services may need to be managed and applied throughout the enterprise and industrial zones. A zone and infrastructure is therefore required between the trusted industrial zone and the untrusted enterprise zone. The Industrial DMZ (IDMZ), commonly referred to as Level 3.5, provides a point of access and control for the exchange of data between these two entities.

The IDMZ architecture provides termination points for the enterprise and industrial domains and includes various servers, applications, and security policies to broker and police communications between the two domains. Key functions of the IDMZ include:

- Best practice is that no direct communications should occur between the enterprise and the industrial zone, although in some instances this may not be possible with enterprise systems being utilized in the industrial zone (ISE deployments).

- The IDMZ needs to provide secure communications between the enterprise and the industrial zone using mirrored or replicated servers and applications in the IDMZ.

- The IDMZ provides for remote access services from the external networks into the industrial zone.

- The IDMZ must provide a security barrier to prevent unauthorized communications into the industrial zone and therefore create security policies to explicitly allow authorized communications (ISE between enterprise and industrial zone).

- No IACS traffic will pass directly through the IDMZ (controller, I/O traffic).
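The key functions above amount to a default-deny policy with an explicit allow-list and a small set of documented exceptions. The following sketch shows one way to express that logic. It is an illustration only and not a firewall configuration: the zone labels, service names, and rule sets are all hypothetical.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src_zone: str
    dst_zone: str
    service: str


# Hypothetical allow-list: each zone talks only to servers in the IDMZ.
ALLOWED = {
    Flow("industrial", "idmz", "historian-replication"),
    Flow("enterprise", "idmz", "historian-mirror"),
    Flow("enterprise", "idmz", "remote-access"),
    Flow("idmz", "industrial", "remote-access"),
}

# Hypothetical documented exception for an enterprise system used in the plant.
DIRECT_EXCEPTIONS = {
    Flow("industrial", "enterprise", "ise-authentication"),
}


def evaluate(flow: Flow) -> str:
    """Return 'permit', 'permit-exception', or 'deny' for a proposed flow."""
    direct = {flow.src_zone, flow.dst_zone} == {"industrial", "enterprise"}
    if direct:
        # Direct enterprise-to-industrial traffic is denied unless documented.
        return "permit-exception" if flow in DIRECT_EXCEPTIONS else "deny"
    return "permit" if flow in ALLOWED else "deny"


if __name__ == "__main__":
    print(evaluate(Flow("enterprise", "industrial", "controller-io")))       # deny
    print(evaluate(Flow("enterprise", "idmz", "remote-access")))             # permit
    print(evaluate(Flow("industrial", "enterprise", "ise-authentication")))  # permit-exception
    print(evaluate(Flow("idmz", "industrial", "file-transfer")))             # deny
```

Keeping direct exceptions in a separate set makes them easy to audit, which reflects the guidance that direct communication is an exception and not the norm.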

The IDMZ design for process control is aligned with Industrial Automation. The design guidance is detailed in the Industrial DMZ reference section and detailed implementation can be found in the Converged Plantwide Ethernet CVD at:

https://www.cisco.com/c/en/us/td/docs/solutions/Verticals/CPwE/3-5-1/IDMZ/DIG/CPwE_IDMZ_CVD.html

- Cisco ASA and Cisco Firepower Firewalls

- Cisco Catalyst 9200 and Cisco Catalyst 9300

- Cisco Hyperflex and Cisco UCS compute and data center platforms

Multiservice Traffic (Non-Operational Applications)—Wired

Multiple services can be deployed in plants to support plant operation communications. These services are not part of the operational systems and applications running within the process control environment. They typically include physical security badge access, video surveillance, and business-enabling applications such as email, telephony, and voice systems. Segmentation of the multi-service applications from the industrial application and process control environment is a common requirement. Regulatory demands, security concerns, risk management, and the confidence of the business to maintain multi-service traffic on the same infrastructure as the IACS process and assets will drive the multi-service architecture. Generally, a separate physical infrastructure for the non-operational applications and services is acceptable. This is in essence an extended enterprise where the non-operational assets move into non-carpeted space. Hardened industrial switches are used in areas where more traditional enterprise switches cannot be deployed. Assets such as phones or video cameras may also require hardening as part of this architecture.

Oil and Gas Process Control and Refineries Design—Wireless

Industrial wireless and wireless instrumentation are the main focus of this version of the oil and gas process control and refinery guide. Detailed design guidance can be found in Industrial Wireless Design and Industrial Wireless Site Survey and Design Considerations. 802.11 wireless networks in refineries support both plant/field operations and worker enablement. In supporting plant field operations, data from 802.15.4 sensors and instrumentation used by equipment monitoring applications is backhauled over the 802.11 Wi-Fi mesh infrastructure. Mobility services are enabled to support the mobile worker with handheld devices, providing near-real-time access to data, location services, and visibility into worker and asset location.

The wireless network provides:

- Mobile worker: A secure, reliable Wi-Fi network built to enable the mobile worker within the process plant, providing anywhere access to data and improving the worker's operational productivity and safety.

- Digital transformation: Improved productivity leveraging sensor data with built-in security, enabling predictive maintenance and condition-monitoring applications.

- Worker safety: Visibility into worker location and enhanced safety through mobile safety devices such as mobile gas detection or hardened radio frequency identification (RFID) tags.

- Flexible implementation of advanced applications and reduced time to deployment of devices, avoiding expensive cabling with Cisco Mesh.

- Improved turnaround and location awareness, providing visibility into contractor productivity during STO events and improving worker safety.

- Remote expert: Access to centralized expert services to improve training and support for field engineers.

Figure 10 Refinery and Process Control Wireless

The IW6300 is Cisco's new Class 1, Div 2/Zone 2 hazardous location-certified access point, designed specifically for hazardous environments like oil and gas refineries, chemical plants, and processing plants. It is an integral component for enabling 802.11 wireless and the above use cases and outcomes in the process plant and refinery.

Figure 11 Cisco Catalyst IW6300 Heavy Duty Series Access Points

The reference architecture highlights the networks deployed outside of the Operations and Control Zone Level 3 and below. None of the services and data carried over Cisco wireless in this architecture are part of critical process control: equipment monitoring, predictive maintenance, mobile worker services, and location services are all non-process control applications. The other deployment model, not evaluated in this version of the CVD, places the wireless infrastructure at Level 3.5 or as an isolated network rather than at Level 4. In that model, the wireless sensing data can be used as part of process control. Both models have merits depending on the customer use cases and operating model (IT or OT owned and supported). Further details are provided in the Wireless Instrumentation section.

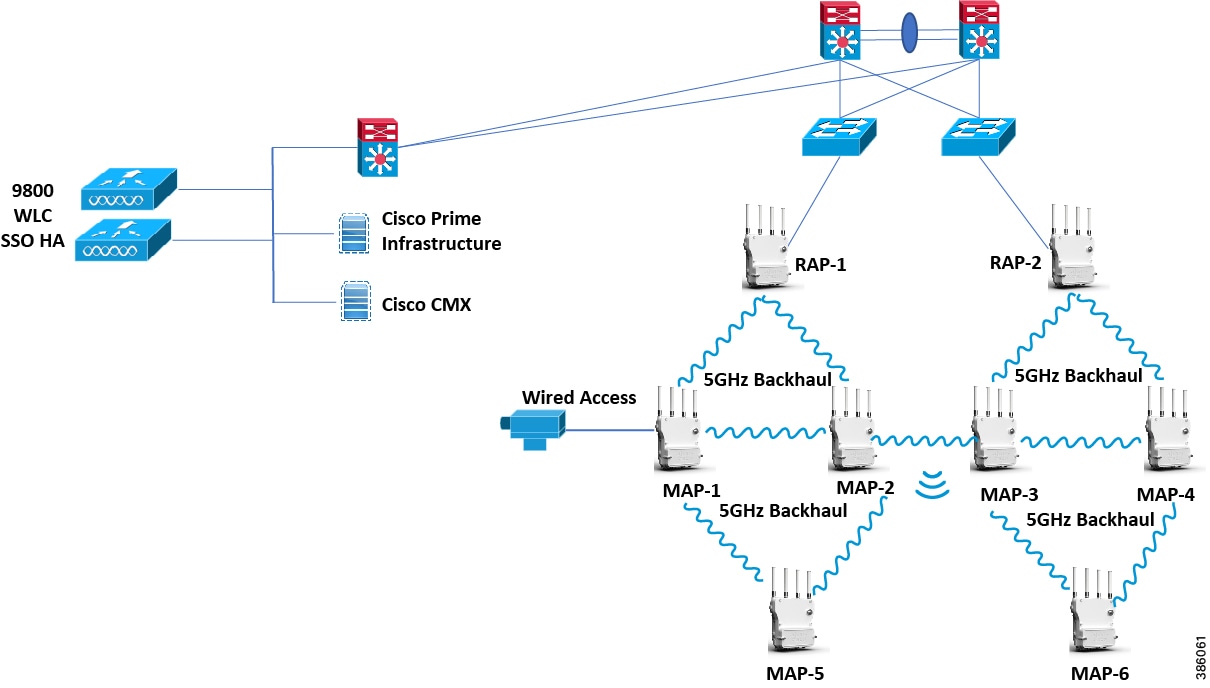

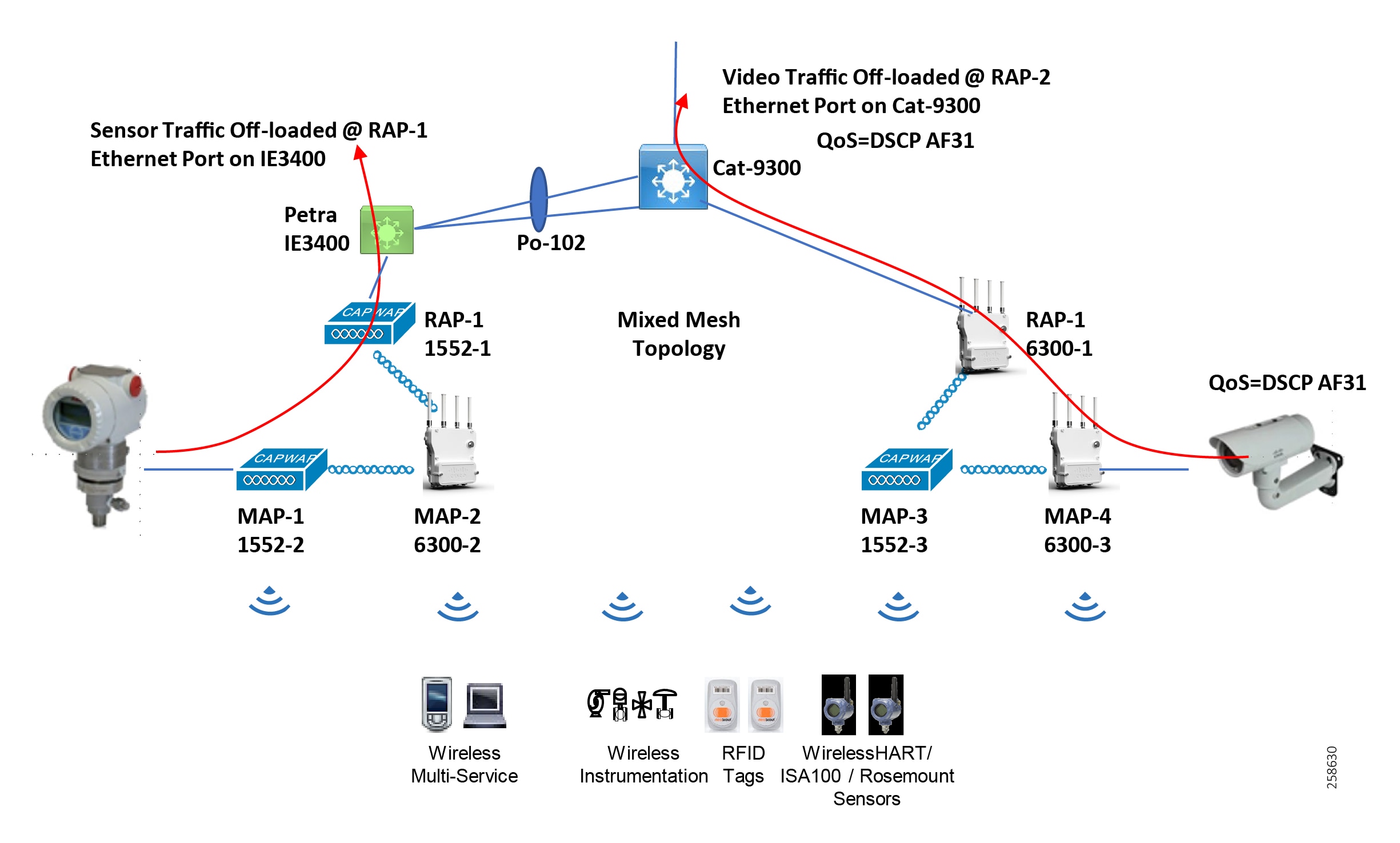

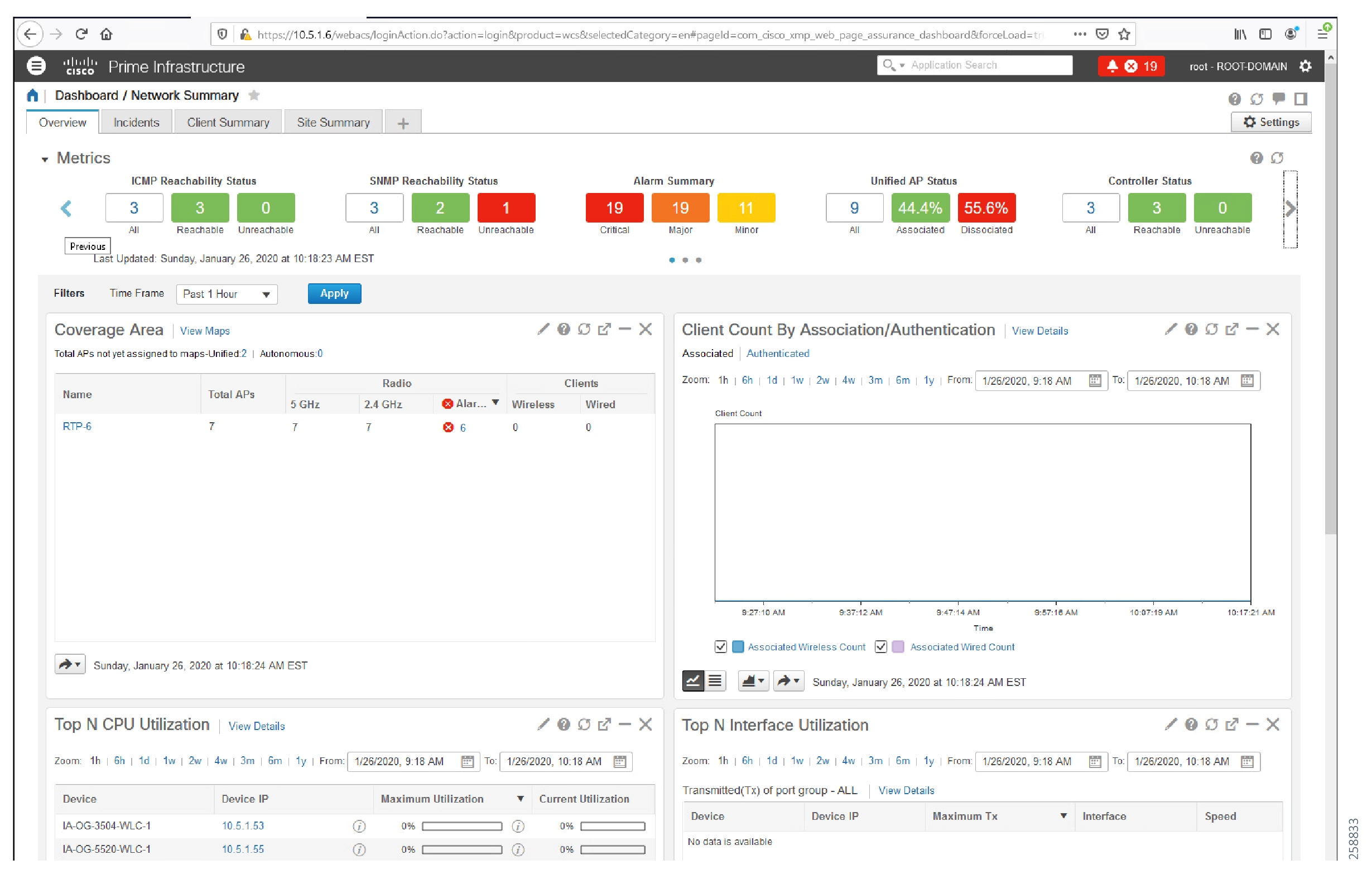

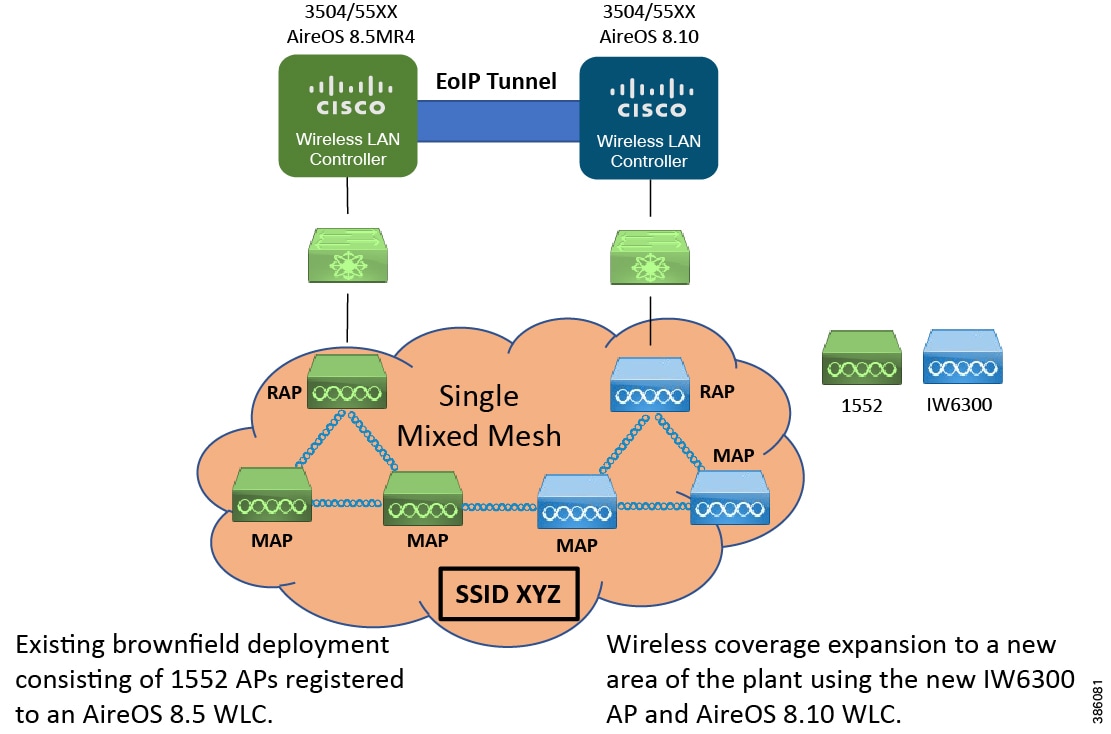

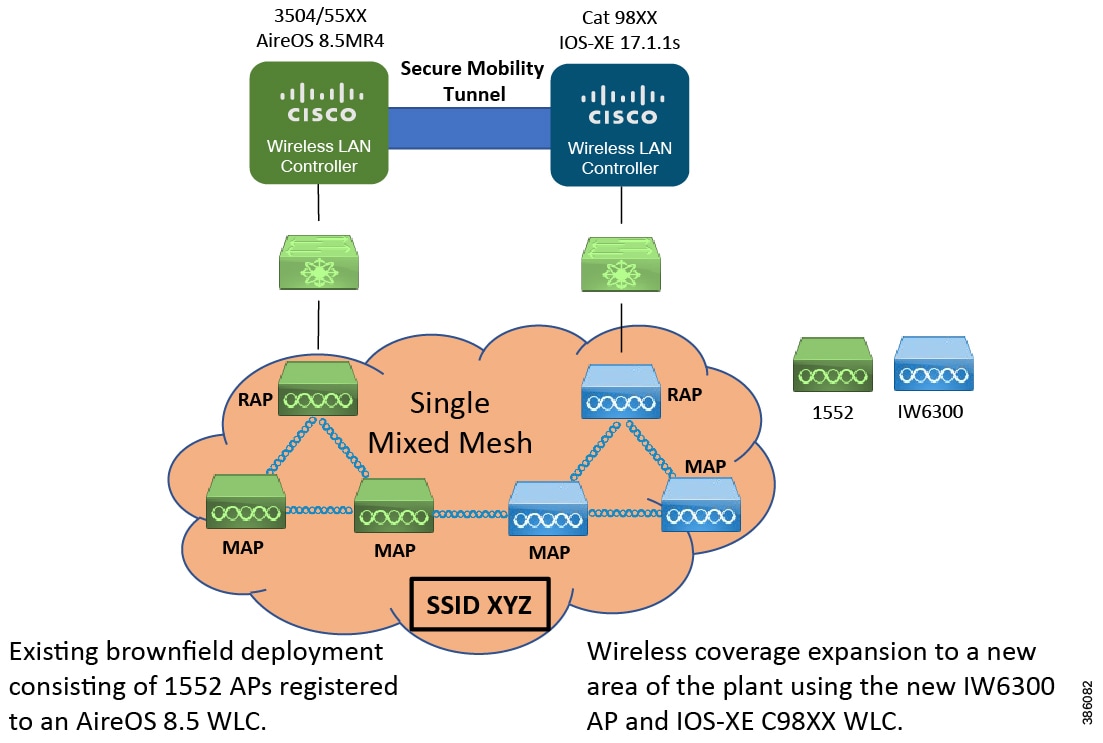

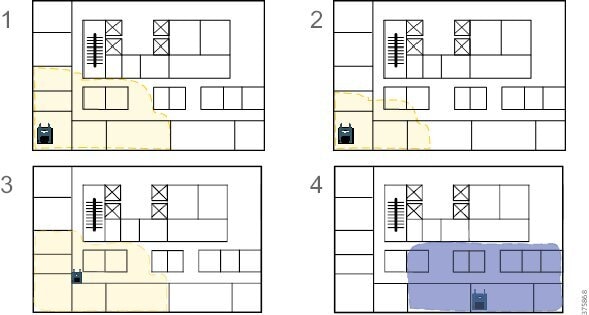

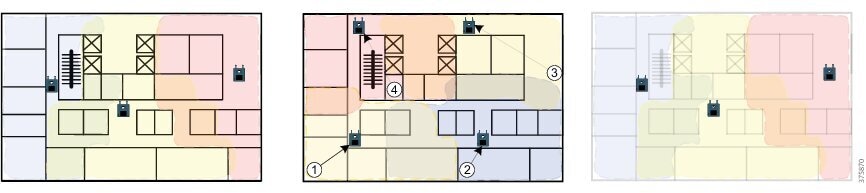

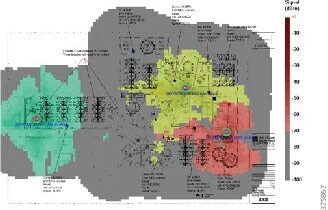

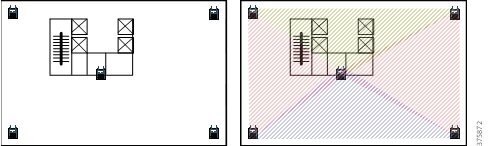

The access points are set up to form a Cisco Mesh. The Cisco Mesh allows Mesh Access Points (MAPs) to wirelessly connect to the Root Access Points (RAPs), which are wired to the Cisco infrastructure. One of the advantages of using Cisco Mesh is the reduced cabling expense of providing wireless connectivity to areas of the plant that are not wired. The RAPs feed into the enterprise Level 4/5 switch. This could be a Cisco Catalyst or a Cisco IE switch as part of the extended enterprise. Wireless LAN controllers provide system-wide operations and policies such as mobility, security, QoS, and RF management. Cisco Prime Infrastructure provides a graphical platform for wireless mesh planning, configuration, and management. Network managers can use Cisco Prime Infrastructure to design, control, and monitor wireless mesh networks from a central location. The Cisco wireless network promotes information and system security with next-generation encryption, interference and rogue access point detection tools, intrusion detection, network management, client security with 802.1X, and Cisco ISE.
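The MAP-to-RAP relationship forms a tree rooted at each wired RAP. As a rough planning aid, the number of wireless hops from an access point back to its RAP can be computed by walking parent links. The sketch below assumes the parent of each access point is already known; it does not model how the mesh selects parents, and the function and access point names are hypothetical.

```python
def hops_to_rap(ap, parent_of):
    """Count wireless hops from an access point up to its root access point.

    parent_of maps each AP name to its parent AP, or to None for a wired RAP.
    Raises KeyError for an unknown AP and ValueError if the links form a loop.
    """
    hops = 0
    visited = {ap}
    while parent_of[ap] is not None:
        ap = parent_of[ap]
        if ap in visited:
            raise ValueError("parent links form a loop")
        visited.add(ap)
        hops += 1
    return hops


if __name__ == "__main__":
    # Hypothetical mesh: RAP1 is wired; MAPs connect wirelessly.
    parent_of = {
        "RAP1": None,
        "MAP1": "RAP1",
        "MAP2": "MAP1",
        "MAP3": "RAP1",
    }
    for name in parent_of:
        print(name, hops_to_rap(name, parent_of))
    # RAP1 0, MAP1 1, MAP2 2, MAP3 1
```

Hop count is one simple input when deciding where additional wired RAPs may be worthwhile, since each wireless hop shares backhaul capacity with the hops above it.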

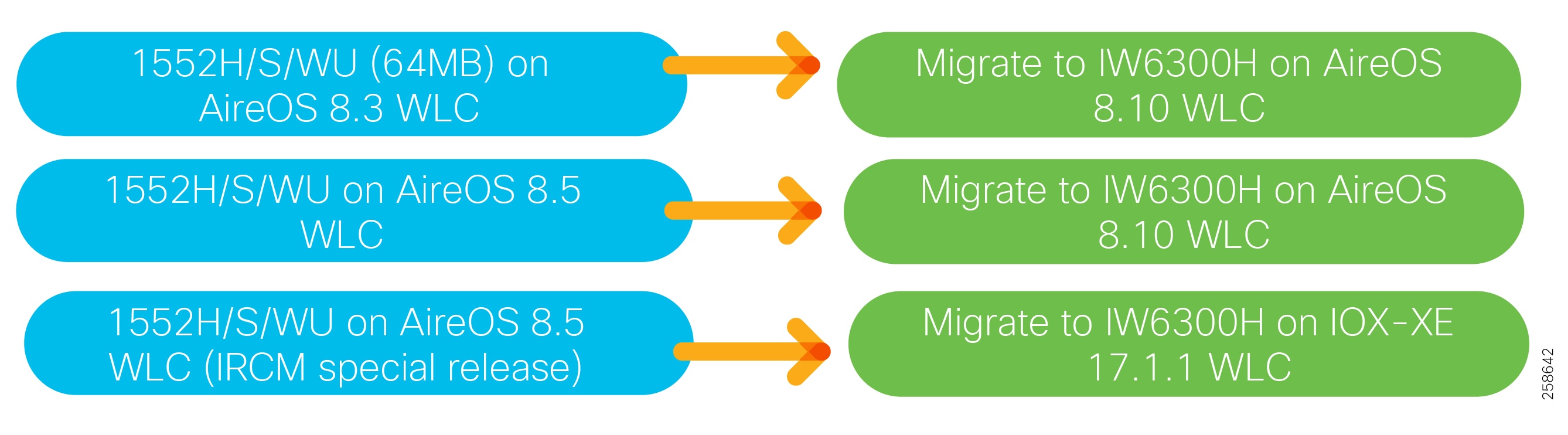

One of the major deliverables within the scope of this guide is to detail a path to migrate wireless networks from the existing 1552 hazardous location access point to the new IW6300 hazardous location access point. The design guide details mixed 1552 and IW6300 deployments for brownfield architectures and IW6300-only deployments with the new Cisco Catalyst 9800 WLC for greenfield deployments.

- IW6300 and 1552 access points

Wireless Instrumentation

Refineries and processing facilities contain sensors, instrumentation, actuators, and other equipment associated with process monitoring and control systems. Historically, it has not been technically or economically feasible to connect all of these systems via a wired communications infrastructure and many of these devices therefore remain standalone. Deploying wired sensors would incur high costs and would not enable transient or intermittent monitoring. This means manual readings, non-real-time information, and a lack of integrated information on which systems can act.

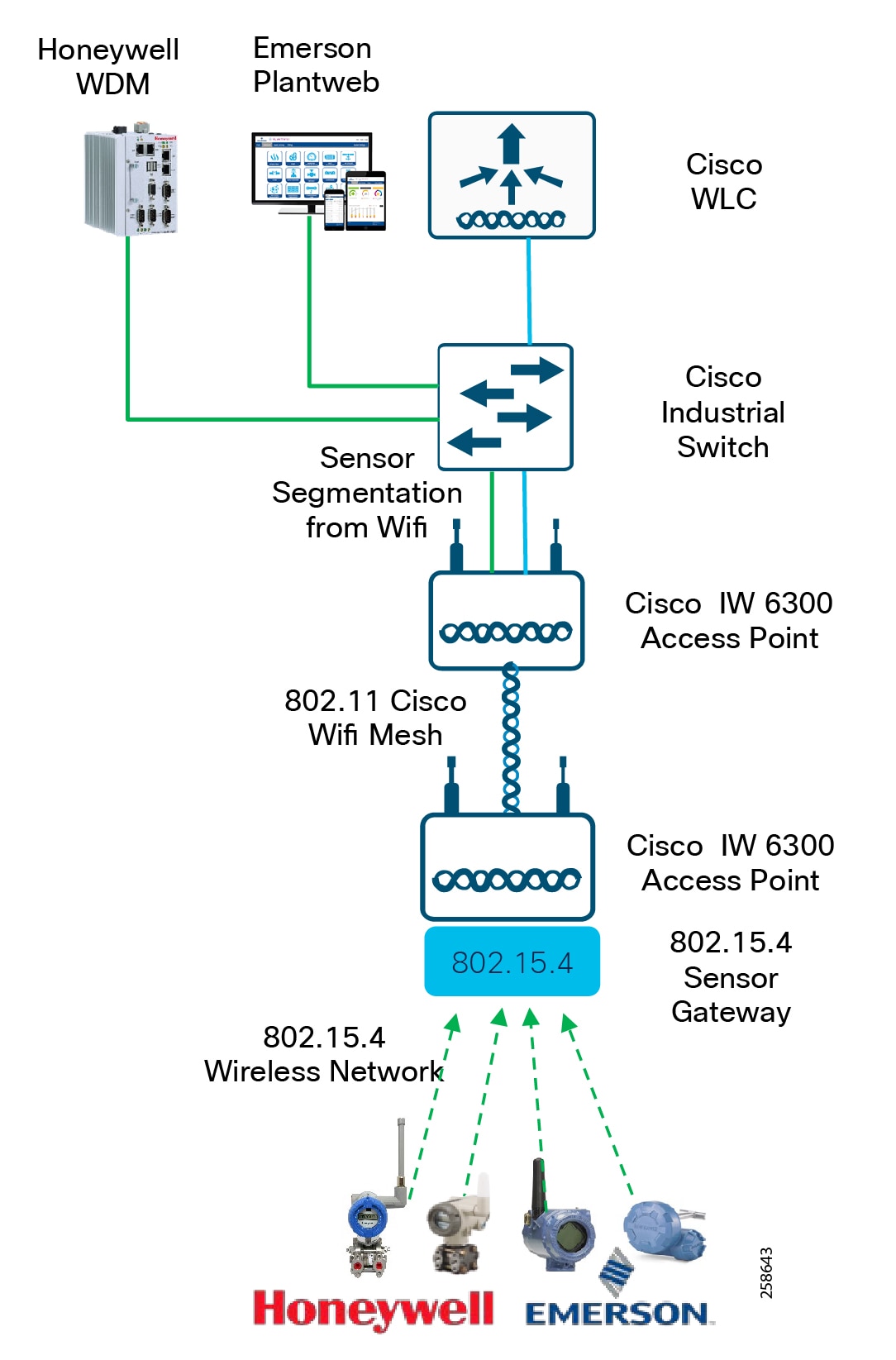

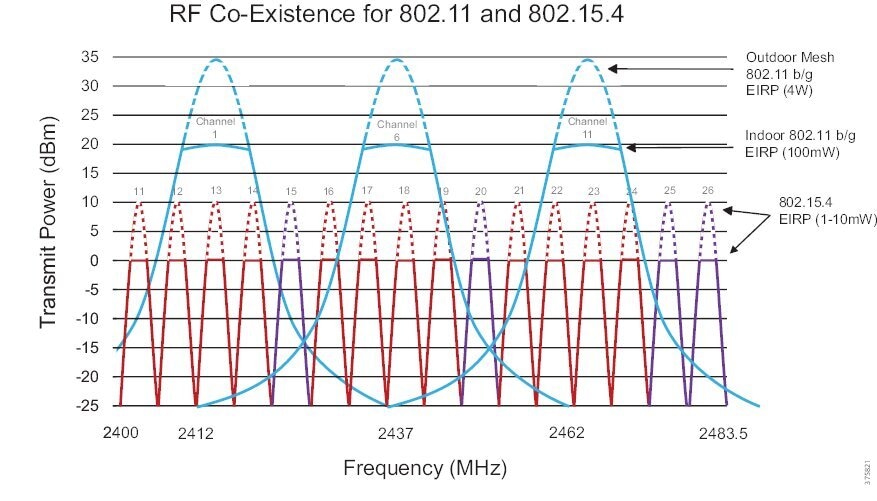

The IEEE 802.15.4 wireless infrastructure connects instrumentation and sensors using the industry-standard ISA100.11a or Wireless Highway Addressable Remote Transducer (WirelessHART) protocols. The sensors are placed at the equipment or along pipes and transmit real-time process data over the 802.15.4 network to the sensor gateway. From there, the data is backhauled over the pervasive Cisco 802.11 wireless mesh. By deploying wireless technology for instrumentation, the process monitoring and control systems gain real-time visibility of, and access to, sensor-level information. This allows consistent condition-based monitoring of equipment at all times, delivering high performance, utilization, and reliability while reducing unplanned downtime.

Two key partners support the wireless instrumentation infrastructure: Emerson and Honeywell Process Systems. The Cisco 1552 and IW6300 provide the backhaul and integrate with both vendors' wireless instrumentation solutions.

Emerson and Cisco work closely on oil and gas solutions across a number of industries, with an emphasis on integrated industrial wireless technologies and security for refining and process control environments. An integral component of the Emerson wireless plant solution is the wireless plant network. It leverages pervasive sensing for the instrumentation and sensor networks, and also supports multi-service use cases for operational activities.

In process control, Honeywell and Cisco work closely on converged Industrial Wireless solutions for processing facilities, refining, storage, and the oilfield. The joint work includes validated deployment architectures, proven use case solutions, product development, and an end-to-end communications strategy from instrument to application which securely brings together the Enterprise and the process control networks. Cisco wireless is a component of the Honeywell OneWireless Network architecture.

Historically, both partners have developed joint products with Cisco based on the 1552 wireless access points. These products combine the Cisco Class 1/Div 2 intrinsically safe access point with 802.15.4 sensor gateways supporting WirelessHART and ISA100.11a. The result provides connectivity for 802.15.4 networks and instruments, 802.11 wireless devices, and 802.11 mesh backhaul, all in a single device. The new IW6300 intrinsically safe Class 1/Div 2 access point follows the same principles. Both Emerson and Honeywell have developed IoT modules that integrate with the IW6300 access point to form a single unit.

As highlighted in the previous section, there are two modes for deploying wireless and wireless instrumentation in refineries, each with its own merits. If deployed at Level 4, the instrumentation gateways must carry only non-process control sensing application data. Additional guidelines are recommended. For example, the WiHART or instrumentation data cannot be accessible from the process control network (PCN) and cannot be used by an operator to make decisions in the DCS; that is, values cannot be written in the DCS based on that data. The data can be output to an enterprise PI (in the DMZ, for example) for aggregation and collection, and stored in a historian database. If the DCS requires instrumentation data, separate gateways must be deployed and hardwired directly to the process control network, as depicted in the reference architecture.

If sensing applications for process control must be backhauled over a Wi-Fi 802.11 mesh and are essentially a component of the DCS, then the WLC and infrastructure could be deployed and managed at the OT layer, for example at Level 3.5 or below.
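The placement rules for the two deployment modes described above can be expressed as a simple design-review check. The following is a minimal sketch, assuming a hypothetical `SensorFeed` record; the field names and the numeric encoding of Purdue levels are illustrative and not part of any Cisco, Emerson, or Honeywell tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SensorFeed:
    """Hypothetical description of one wireless instrumentation data feed."""
    name: str
    used_for_process_control: bool  # does the DCS act on this data?
    wireless_level: float           # Purdue level of the wireless backhaul (4 or 3.5)
    reachable_from_pcn: bool        # can the process control network read it?


def placement_issues(feed: SensorFeed) -> List[str]:
    """Return guideline violations for a feed; an empty list means none found."""
    issues = []
    if feed.wireless_level >= 4:
        if feed.used_for_process_control:
            issues.append(
                "process control data needs a hardwired gateway on the PCN "
                "or an OT-managed wireless deployment (Level 3.5 or below)"
            )
        if feed.reachable_from_pcn:
            issues.append("Level 4 instrumentation data must not be accessible from the PCN")
    return issues
```

For example, a vibration-monitoring feed at Level 4 that is not reachable from the PCN returns no issues, while the same feed flagged as used for process control returns one.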

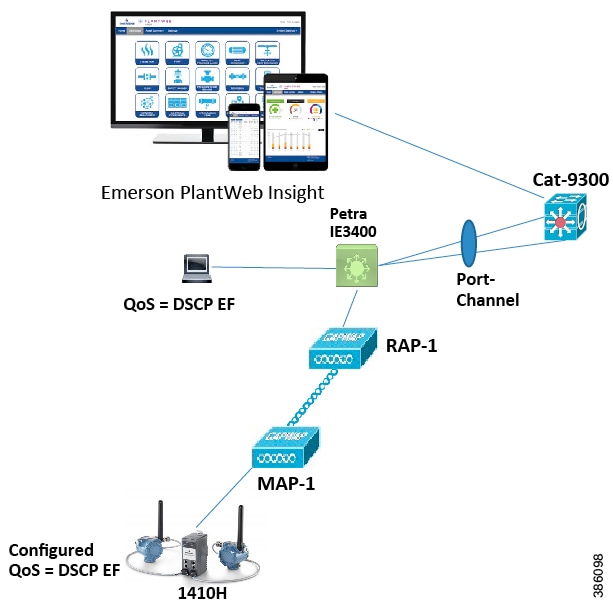

In the figure, the wireless instruments connect to the 802.15.4 sensor gateway integrated with the IW6300. The traffic is backhauled over the Wi-Fi mesh infrastructure and offloaded at the root access point over a switched infrastructure to either a Honeywell Wireless Device Manager or an Emerson Plantweb instance.

Figure 13 Wireless Instrumentation

The Industrial Wireless Design provides guidance for deploying wireless instrumentation and integrating 802.15.4 networks with the Cisco wireless infrastructure, with a focus on Emerson for this initial release. Future releases will incorporate design guidance for both Honeywell and Emerson architectures.

- IW6300 and 1552 access points

- AireOS WLC and Cisco Catalyst 9800 IOS-XE WLC platforms

- 802.15.4 sensor gateway: Honeywell or Emerson IoT module

- Honeywell or Emerson wireless instrumentation

Oil and Gas Process Control and Refineries Reference Design - Industrial Security

When discussing industrial network security, customers are concerned with how to keep the environment safe and operational. An architectural approach to securing the control system and process domain is recommended. The Purdue Model of Control Hierarchy, International Society of Automation 95 (ISA95), IEC 62443, and NIST 800-82 are examples of such guidelines. Key security requirements in refinery networks include device and IACS asset visibility, secure access to the network, segmentation, group-based security policy, and threat detection and mitigation to protect the infrastructure. As the Operational Technology (OT) and Information Technology (IT) domains converge, they must also align security strategies and work more closely together to ensure a truly end-to-end security architecture.

A basic strategy for securing any environment, not just industrial environments, starts with visibility and baselining, followed by segmentation, detection, and response. This section highlights the security reference architecture for process control and refineries, with an OT focus on securing the operational environment. This reference is built from the Industrial Automation for Network and Security CVD 2.0, which contains deeper detail on the design and implementation supporting security in the OT process domain.

Visibility

Companies cannot secure the infrastructure without a thorough understanding of the connected assets. Securing the OT infrastructure starts with a precise view of the asset inventory, communication patterns, and network topologies. Plant security implementations require full visibility of assets and application flows so that teams can implement security best practices, drive network segmentation, implement security policy with enforcement, and provide threat control, awareness, and mitigation. Visibility is the first step in security, and the lack of visibility into assets and communication patterns in industrial control systems makes these environments a challenge to secure. A security implementation generally starts with a visibility exercise, such as a security assessment. Cisco Cyber Vision, Cisco Industrial Network Director (IND), and StealthWatch can be used to provide visibility into the connected assets of oil and gas and process plants.

Cisco Cyber Vision

Cisco Cyber Vision is a cybersecurity solution specifically designed for industrial organizations including oil and gas, manufacturing, power utilities and water distribution to ensure continuity, resilience, and safety of their industrial operations. It provides asset owners with full visibility into their IACS networks so they can ensure operational and process integrity, drive regulatory compliance, and enforce security policies through seamless integration with the IT Security Operations Center (SOC) and easy deployment within the industrial network. Cisco Cyber Vision leverages Cisco industrial network equipment to monitor industrial operations and feeds Cisco IT security platforms with OT context to build a unified IT/OT cybersecurity architecture. Cisco Cyber Vision provides three key value propositions:

- Visibility embedded in the industrial network: know what to protect. Cisco Cyber Vision is embedded in the Cisco industrial network equipment so everything that connects to it can be seen, enabling customers to segment their network and deploy IoT security at scale.

- Security insights for IACS and OT: continuously monitor IACS cybersecurity integrity to help maintain system integrity and production continuity. Cisco Cyber Vision understands proprietary industrial protocols and keeps track of process data, asset modifications, and variable changes.

- 360° threat detection: detect threats before it is too late. Cisco Cyber Vision leverages Cisco threat intelligence and advanced behavioral analytics to identify known and emerging threats as well as process anomalies and unknown attacks. Fully integrated with the Cisco security portfolio, it extends the IT SOC to the OT domain.

Cisco Cyber Vision has two primary components: Center and Sensor. The Sensor uses deep packet inspection (DPI) to filter packets and extract metadata, which is sent to the Center for further analytics. Deep packet inspection examines packets up to the application layer to discover abnormal behavior occurring in the network. The sensors are deployed primarily on industrial networks and protocols, at Levels 0-2 of the Purdue model.
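To illustrate what extracting application-layer metadata from a packet means in practice, the sketch below parses the header of a Modbus/TCP request. Modbus/TCP is chosen here only as a familiar, openly documented industrial protocol; it is an assumption for illustration, and the function and field names are hypothetical. This is not how Cisco Cyber Vision is implemented.

```python
import struct

# Modbus function codes that modify coils or registers.
WRITE_FUNCTIONS = {5, 6, 15, 16}


def extract_metadata(payload: bytes) -> dict:
    """Extract a small metadata record from a Modbus/TCP application payload.

    The first 7 bytes are the MBAP header (transaction ID, protocol ID,
    length, unit ID); the next byte is the function code.
    """
    if len(payload) < 8:
        raise ValueError("payload too short for a Modbus/TCP request")
    transaction_id, protocol_id, _length, unit_id, function_code = struct.unpack(
        ">HHHBB", payload[:8]
    )
    if protocol_id != 0:
        raise ValueError("protocol ID is not Modbus")
    return {
        "transaction_id": transaction_id,
        "unit_id": unit_id,
        "function_code": function_code,
        "is_write": function_code in WRITE_FUNCTIONS,
    }


# Illustrative request: Write Single Register (function 6) to unit 17.
sample = bytes.fromhex("000100000006110600010003")
metadata = extract_metadata(sample)
```

Only the compact `metadata` record, rather than the full packet, would need to be forwarded for analysis, which is the general idea behind separating a sensing component from a central analytics component.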

Cisco Industrial Network Director (IND)

Cisco IND is a network management product for OT teams. It provides an easily integrated system that increases operator and technician productivity through streamlined network monitoring and rapid troubleshooting. Cisco IND is part of a comprehensive IoT solution from Cisco and provides:

- Easy-to-adopt network management system built specifically for industrial applications that leverages the full capabilities of the Cisco IE switches to make the network accessible to non-IT operations personnel

- Creates a dynamic, integrated topology of automation and networking assets using discovery via industrial protocols (CIP, PROFINET) to provide a common framework for OT and IT personnel to monitor and troubleshoot the network and quickly recover from unplanned downtime. The device discovery also provides context details of the connected industrial devices (such as PLCs, I/O, drives, HMI, and so on).

- Rich APIs allow for easy integration of network information into existing industrial asset management systems and allow customers and system integrators to build dashboards customized to meet specific monitoring and accounting needs.

Although IND is targeted as a network management tool for OT personnel, the asset visibility function is utilized in the Industrial Automation security architecture.

StealthWatch and NetFlow

Cisco StealthWatch provides enterprise-wide network visibility and applies advanced security analytics to detect and respond to threats in real time. Using a combination of behavioral modeling, machine learning, and global threat intelligence, StealthWatch can quickly, and with high confidence, detect threats such as command-and-control (C&C) attacks, ransomware, distributed-denial-of-service (DDoS) attacks, illicit crypto-mining, unknown malware, and insider threats. Cisco StealthWatch collects and analyzes massive amounts of data to help security operations teams gain real-time situational awareness of all users, devices, and traffic on the extended network, so they can quickly and effectively respond to threats.

StealthWatch leverages NetFlow, IPFIX, and other types of flow data from existing infrastructure such as routers, switches, firewalls, proxy servers, endpoints, and other network infrastructure devices. The data is collected and analyzed to provide a complete picture of network activity. StealthWatch is focused on enterprise IT networks, which corresponds to Levels 3 to 5 in the Purdue model. In the industrial domain, Level 3 tends to have more COTS-type applications and Windows servers serving the whole plant, so StealthWatch provides threat awareness and visibility and is impactful at this layer.
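The idea of analyzing flow data against normal behavior can be sketched with a very small baseline comparison. The example below assumes flow records have already been exported and decoded into a hypothetical `Flow` tuple; the field names and addresses are illustrative, and the logic is a deliberately simplified stand-in for the behavioral modeling described above, not StealthWatch's method.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Set, Tuple

Conversation = Tuple[str, str, int]  # (source IP, destination IP, destination port)


class Flow(NamedTuple):
    """Hypothetical decoded flow record (for example, from NetFlow or IPFIX)."""
    src: str
    dst: str
    dst_port: int
    octets: int


def build_baseline(flows: Iterable[Flow]) -> Set[Conversation]:
    """Record every conversation seen during a known-good learning period."""
    return {(f.src, f.dst, f.dst_port) for f in flows}


def new_conversations(baseline: Set[Conversation], flows: Iterable[Flow]) -> Dict[Conversation, int]:
    """Return conversations absent from the baseline, with total bytes observed."""
    totals: Dict[Conversation, int] = defaultdict(int)
    for f in flows:
        key = (f.src, f.dst, f.dst_port)
        if key not in baseline:
            totals[key] += f.octets
    return dict(totals)


learning = [Flow("10.3.0.10", "10.3.0.20", 1433, 5000)]
observed = [
    Flow("10.3.0.10", "10.3.0.20", 1433, 7000),   # already in the baseline
    Flow("10.3.0.10", "198.51.100.7", 443, 900),  # new external peer
    Flow("10.3.0.10", "198.51.100.7", 443, 100),
]
alerts = new_conversations(build_baseline(learning), observed)
```

A Level 3 server that normally talks only to a plant database and suddenly opens sessions to an external address is the kind of change such a comparison surfaces for review.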

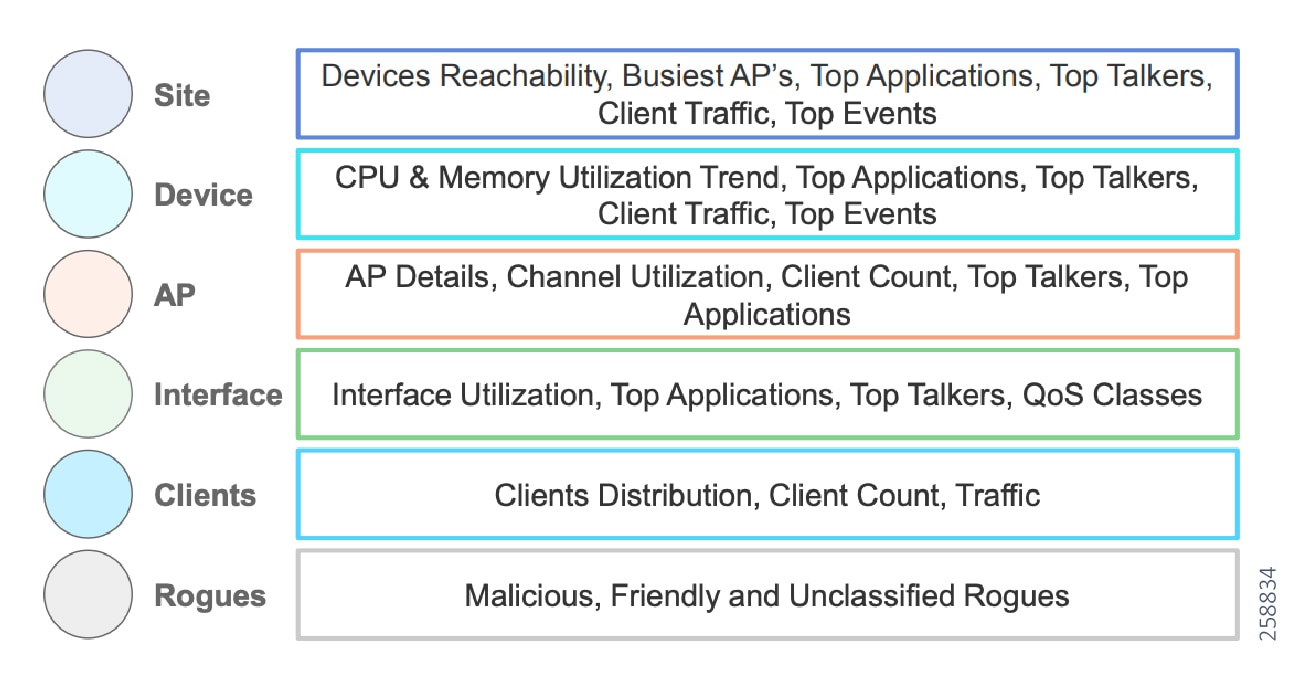

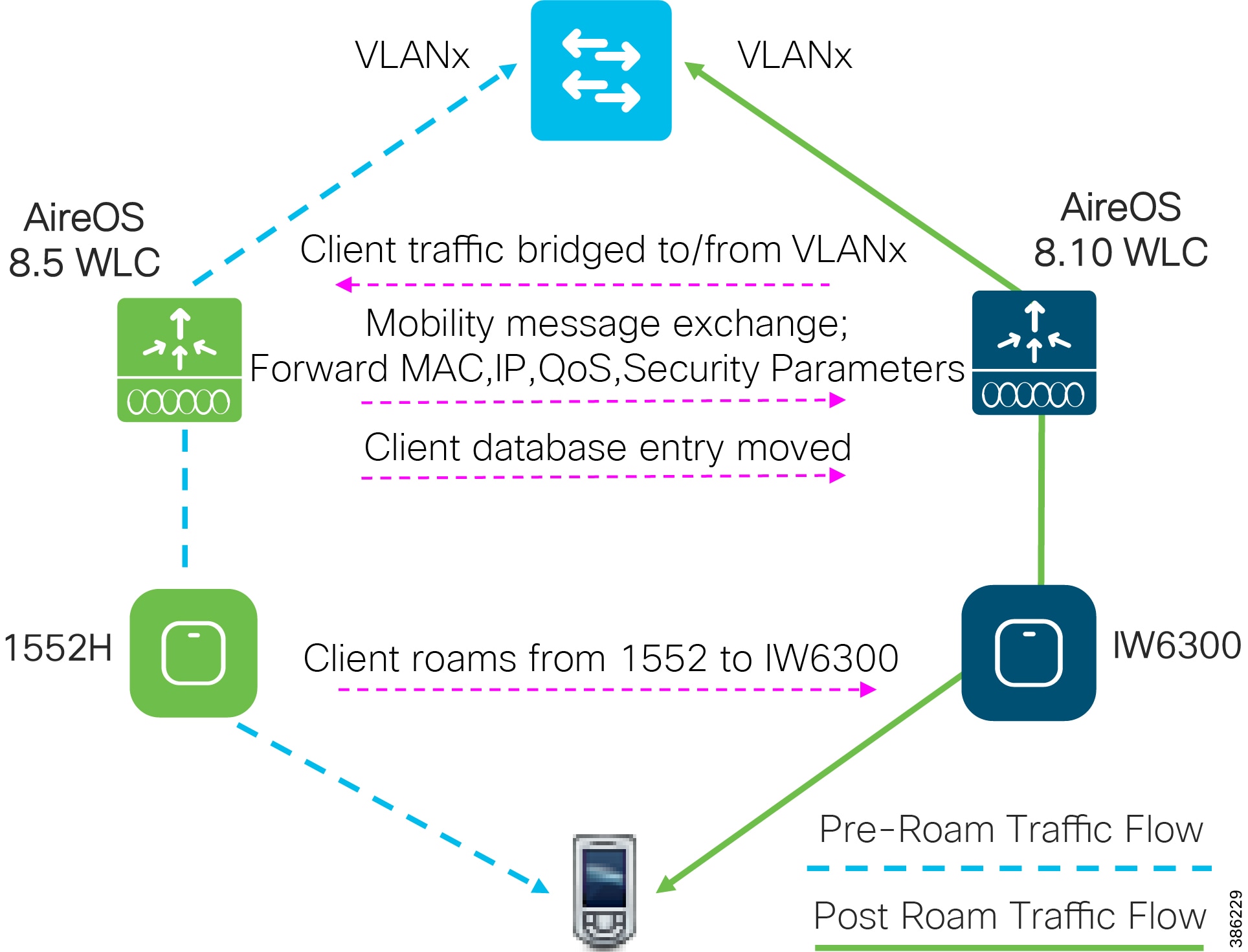

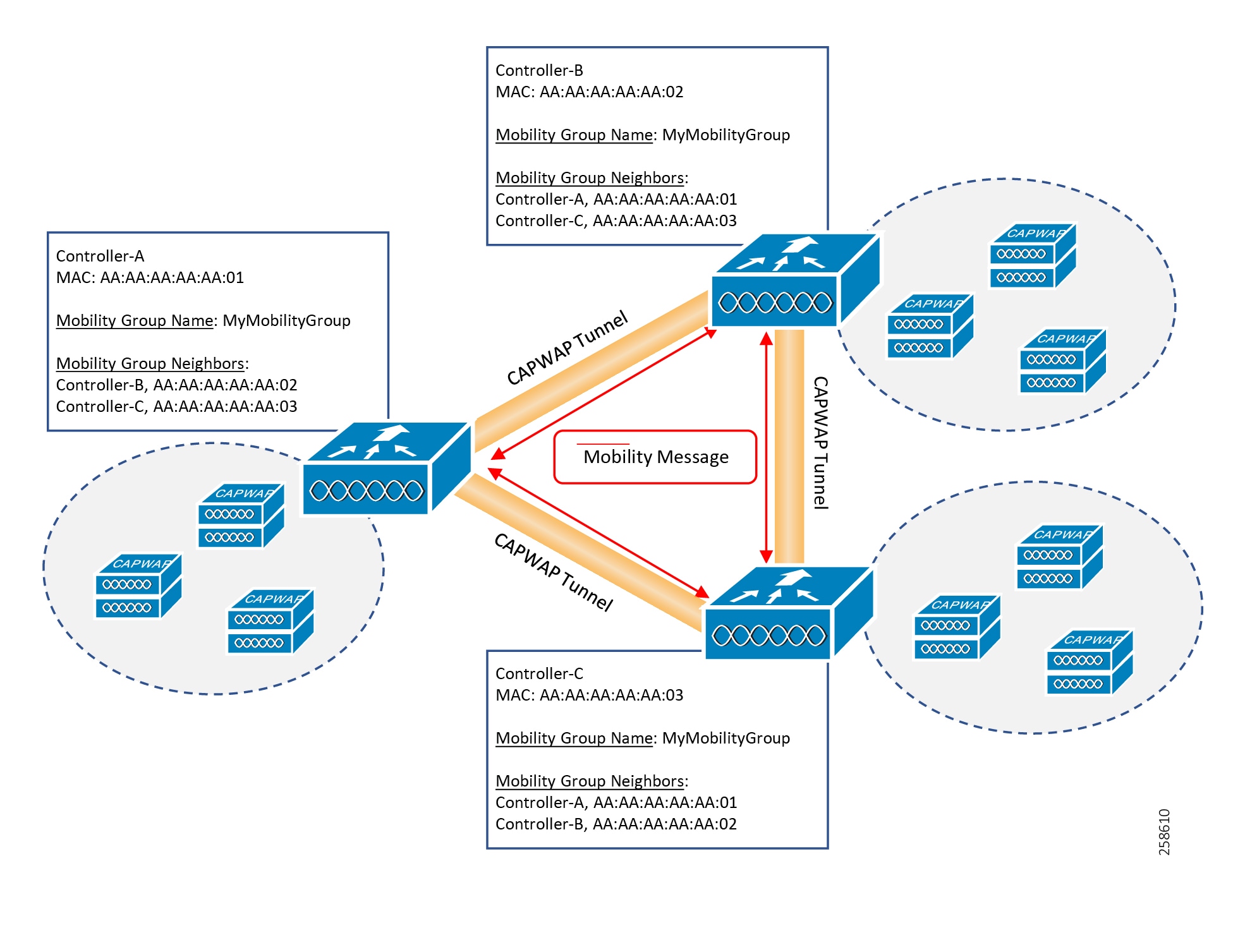

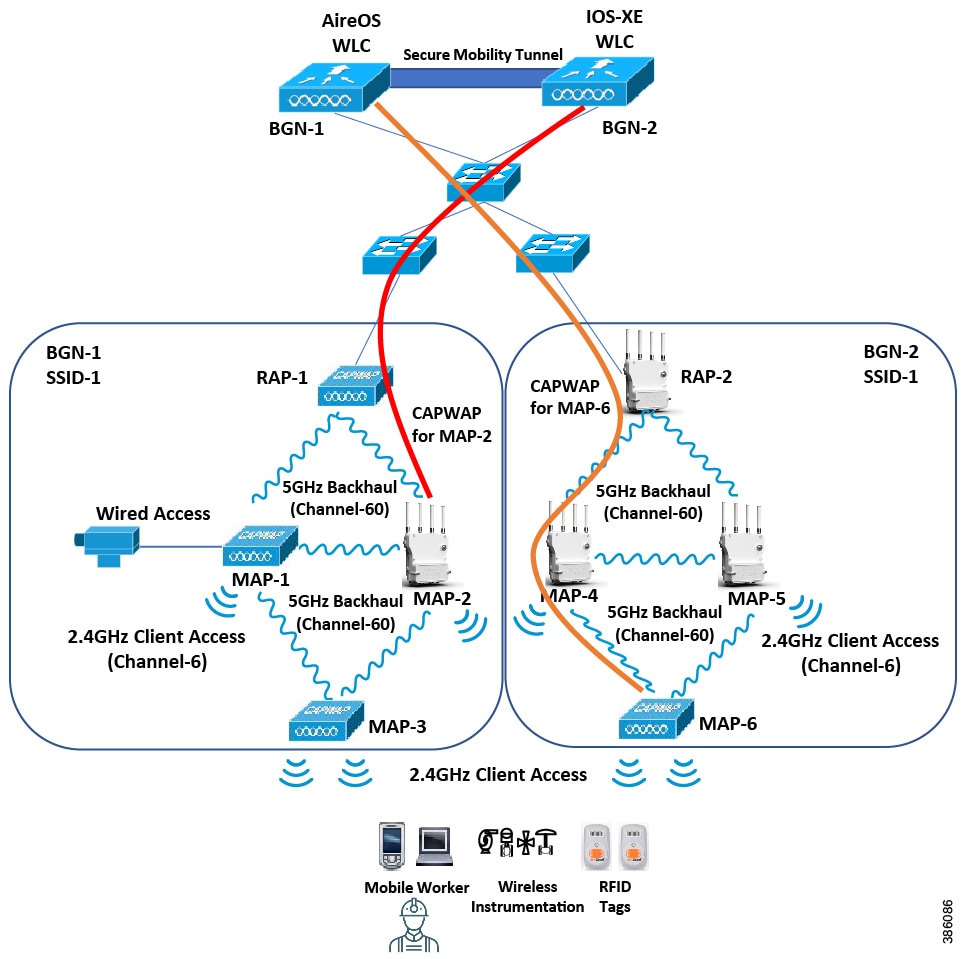

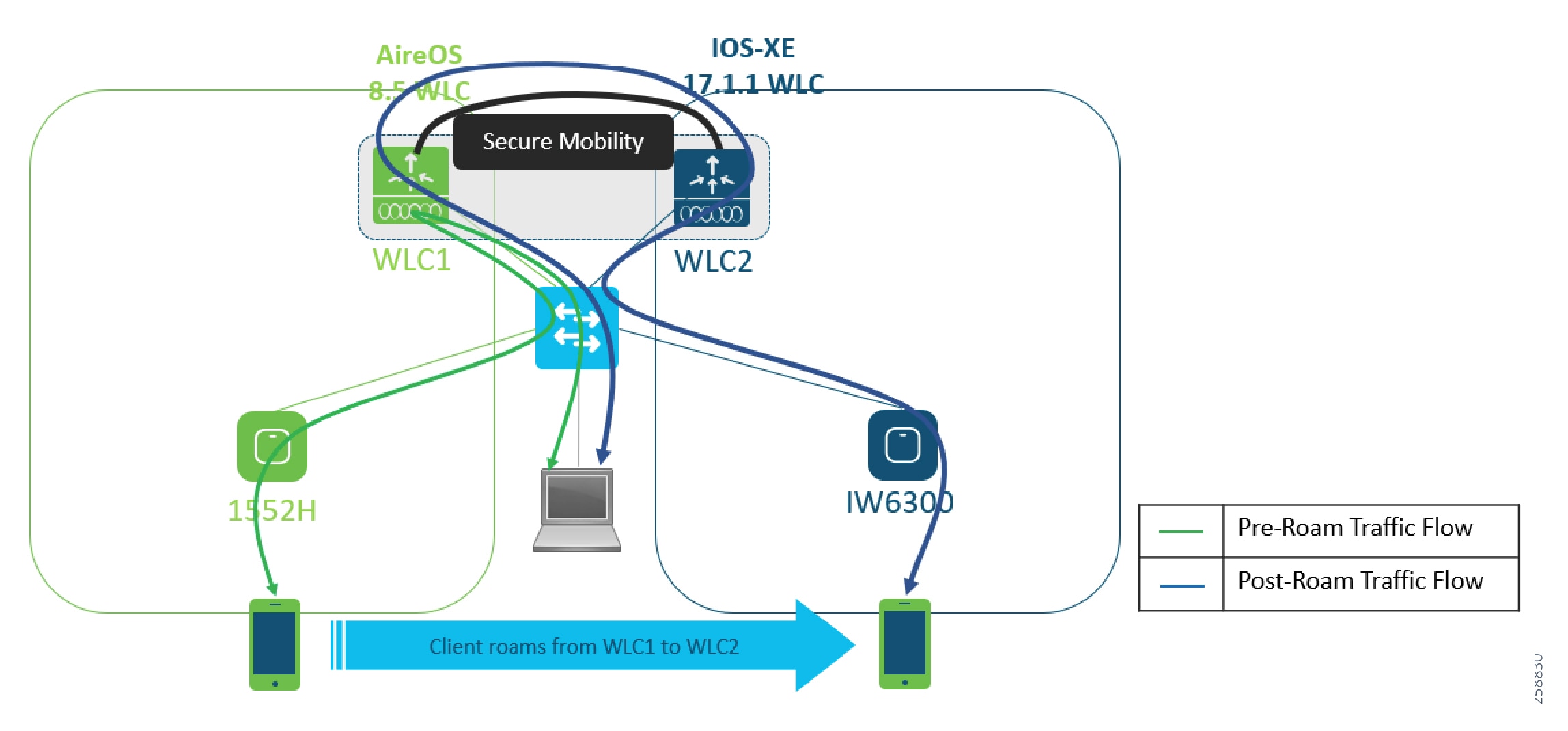

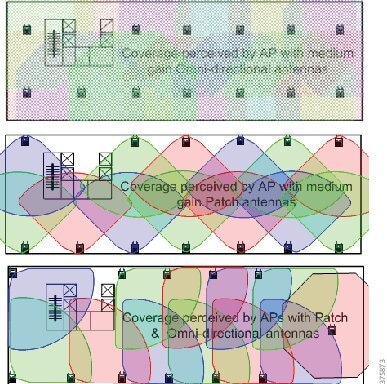

Segmentation, Policy Management, and Enforcement