- About the Document

- Introduction

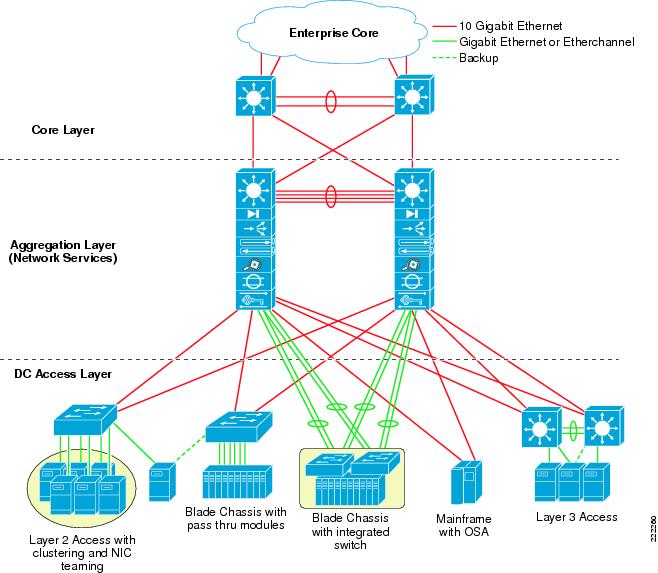

- ESX Server Network and Storage Connectivity

VMware Infrastructure 3 in a Cisco Network Environment

About the Document

This document is a collaboration between Cisco and VMware. It documents a set of suggested best practices for deploying VMware Infrastructure (VI) 3.x and VMware ESX Server 3.x in a Cisco network environment. The document provides details regarding the internal constructs of the ESX Server and their relation to external Cisco network devices are discussed.

This document is intended for network architects, network engineers, and server administrators interested in understanding and deploying VMware ESX Server 3.x hosts in a Cisco data center environment.

Introduction

Currently, there are efforts to consolidate and standardize the hardware and software platforms comprising the enterprise data center. IT groups are considering the data center facility, the servers it houses, and network components as a pool of resources rather than unrelated assets "siloed" to resolve specific business requirements. Server virtualization is a technique that allows the abstraction of server resources to provide flexibility and optimize usage on a standardized infrastructure. As a result, data center applications are no longer bound to specific hardware resources; thus making the application unaware of the underlying hardware, yet viewing the CPUs, memory, and network infrastructure as shared resource pools available via virtualization.

Virtualization of network, storage, and server platforms has been maturing over time. Technologies such as virtual local area networks (VLANs), virtual storage area networks (VSANs), and virtual network devices are widely deployed in today's enterprise data center. Mainframe legacy systems have been "virtualized" for many years, employing logical partitions (LPARs) to achieve greater resource utilization.

The ability to break the link between physical hardware (such as CPU, memory, and disk) from an operating system provides new opportunities to consolidate beyond the physical level and to optimize resource utilization and application performance. Expediting this revolution is the introduction of more powerful x86 platforms built to support a virtual environment, namely the availability of multi-core CPU and the use of AMD Virtualization (AMD-V) and the Intel Virtualization Technology (IVT).

Note ![]() For more information about AMD Processors that support this technology, refer to the following URL: http://www.amd.com/us-en/Processors/ProductInformation/0,,30_118_8796,00.html

For more information about AMD Processors that support this technology, refer to the following URL: http://www.amd.com/us-en/Processors/ProductInformation/0,,30_118_8796,00.html

For more information about Intel Processors that support this technology, refer to the following URL: http://www.intel.com/business/technologies/virtualization.htm?iid=servproc+rhc_virtualization

VMware infrastructure provides a rich set of networking capabilities that well integrate with sophisticated enterprise networks. These networking capabilities are provided by VMware ESX Server and managed by VMware VirtualCenter. With virtual networking, you can network both virtual machines and physical machines in a consistent manner. You can also build complex networks within a single ESX Server host or across multiple ESX Server hosts, where virtual switches allow virtual machines on the same ESX Server host to communicate with each other using the same network protocols that would be used over physical switches, without the need for additional networking hardware. ESX Server virtual switches also support VLANs that are compatible with standard VLAN implementations from other vendors.

A virtual machine can be configured with one or more virtual Ethernet adapters, each of which has its own IP address and MAC address. As a result, virtual machines have networking properties consistent with physical machines.

ESX Server Network and Storage Connectivity

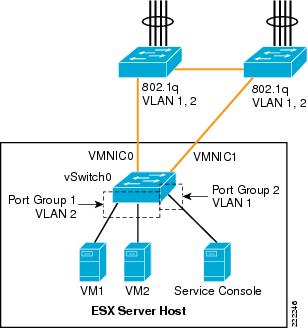

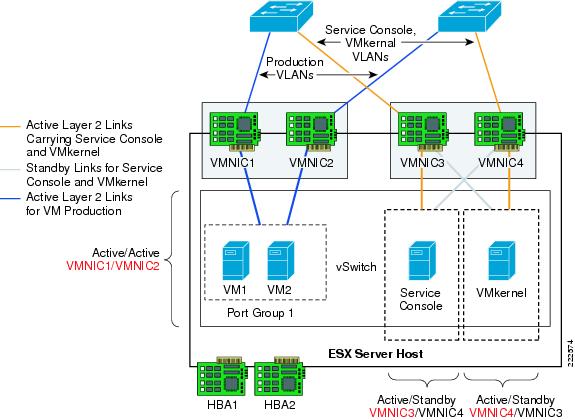

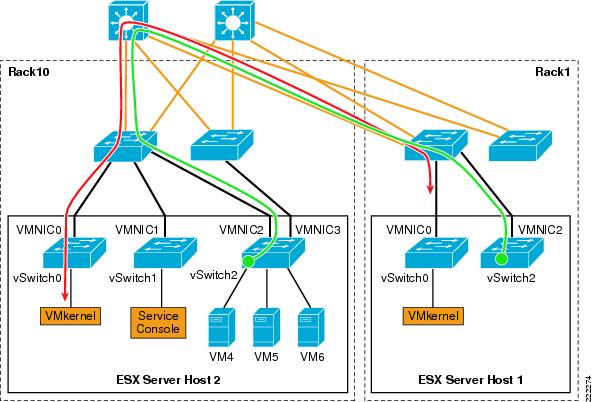

VMWare networking is defined per ESX host, and is configured via the VMware VirtualCenter Management Server, the tool used to manage an entire virtual infrastructure implementation. An ESX Server host can run multiple virtual machines (VMs) and perform some switching internal to the host's virtual network prior to sending traffic out to the physical LAN switching network.

ESX Server Networking Components

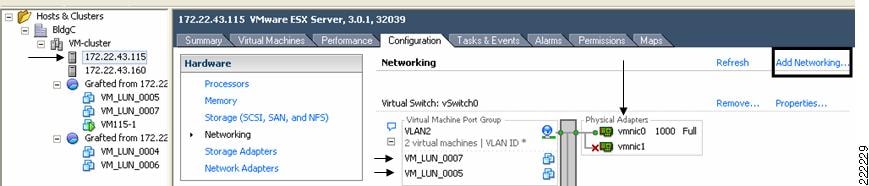

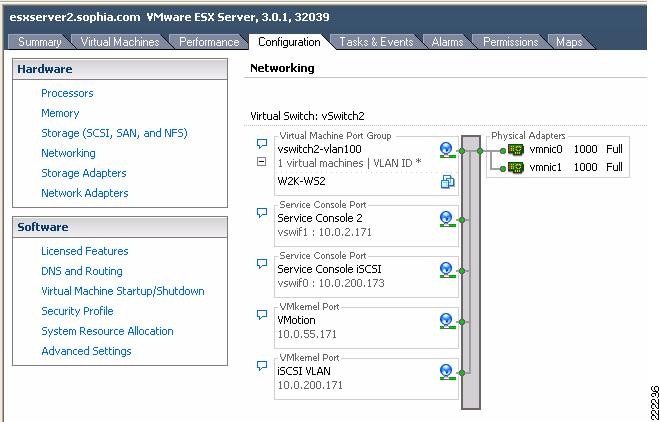

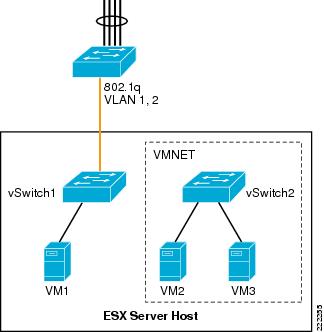

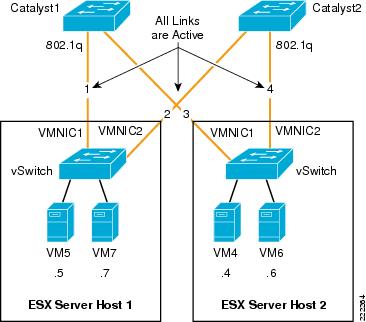

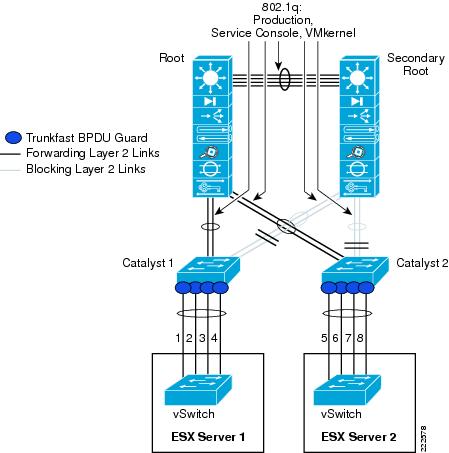

Figure 1 VMware Networking is Defined per ESX Host

vmnics, vNICs and Virtual Ports

The term "NIC" has two meanings in a VMware virtualized environment; it can refer to a physical network adapters (vmnic) of the host server hardware and it can also refer to a virtual NIC (vNIC), a virtual hardware device presented to the virtual machine by VMware's hardware abstraction layer. While a vNIC is solely a virtual device, it can leverage the hardware acceleration features offer by the physical NIC.

Through VirtualCenter, you can see the networking configuration by highlighting the ESX host of interest (on the left of the interface, see Figure 1). Within the Configuration tab (on the right side of the interface), you can find the association between the VM's vNICs (VM_LUN_0007 and VM_LUN_0005 in Figure 1) and the physical NICs (vmnic0 and vmnic1). The virtual and physical NICs are connected through a virtual switch (vSwitch). A vSwitch forwards the traffic between a vNIC and a vmnic, and the connection point between the vNIC and the vSwitch is called a virtual port.

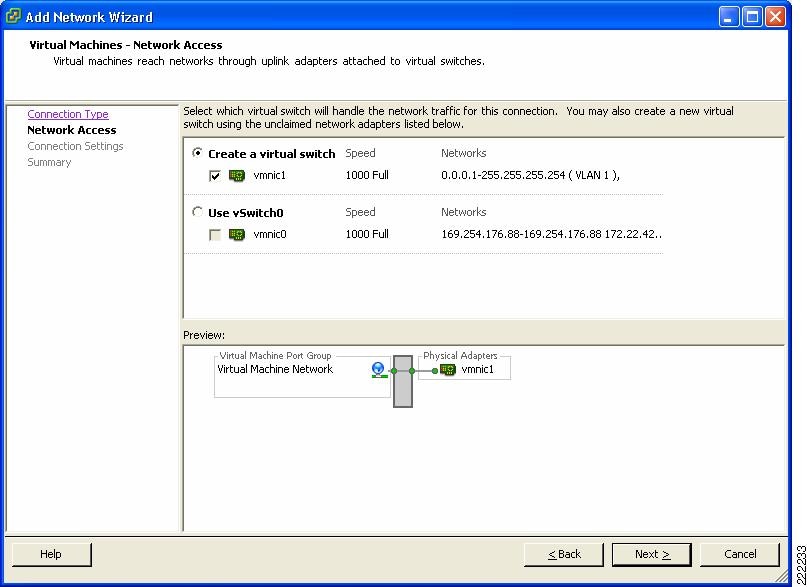

Clicking the Add Networking button opens the Add Network Wizard, which guides you through the creation of new vSwitches or new Port Groups, a feature used to partition an existing vSwitch.

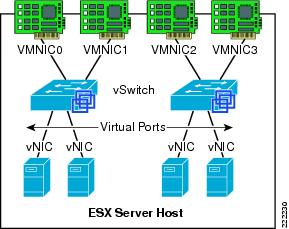

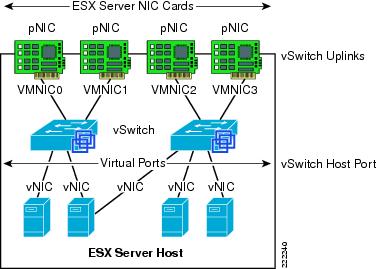

Figure 2 shows the provisioning of physical and VM adapters in an ESX host.

Figure 2 ESX Server Interfaces

In Figure 2, four vmnics are presented on the physical host. The server administrator can designate which vmnics carry VM traffic. This ESX Server has been configured with two vSwitches. Four VMs are present, each configured with a single vNIC and the vNICs are in turn connected to the virtual ports of one of the vSwitches.

vNIC MAC Addresses, Bootup, VMotion Migration

VMs can be configured with up to four vNICs. The vNICs MAC addresses are generated automatically by the ESX Server (a process described in the next section); however, they may also be specified by the administrator. This feature can be useful when deploying VMs in an environment using DHCP-based server addressing, as a designated MAC address can be used to ensure a VM always receives the same IP address.

Note ![]() Unlike with regular NICs, it is not generally necessary or useful to "team" vNICs. In a VMware environment, NIC teaming refers to connecting multiple vmnics to a vSwitch to provide network load-sharing or redundancy.

Unlike with regular NICs, it is not generally necessary or useful to "team" vNICs. In a VMware environment, NIC teaming refers to connecting multiple vmnics to a vSwitch to provide network load-sharing or redundancy.

The vNIC MAC addresses include the Organization Unique Identifiers (OUI) assigned by IEEE to VMware. The ESX host and the configuration filename information is used to create a vNIC MAC address. The OUIs used by VMware are 00-50-56 and 00-0c-29. The algorithm used to generate the MAC address reduces the chances of a MAC address collision, although the process cannot guarantee a MAC address is unique. The generated MAC addresses are created using three parts:

•![]() The VMware OUI.

The VMware OUI.

•![]() The SMBIOS UUID for the physical ESX Server machine.

The SMBIOS UUID for the physical ESX Server machine.

•![]() A hash based on the name of the entity for which the MAC address is generated.

A hash based on the name of the entity for which the MAC address is generated.

The ESX host can detect a MAC collision between VMs and resolve the collision, if necessary. VMware has reserved the range 00:50:56:00:00:00 ‡ 00:50:56:3F:FF:FF for statically assigned VM MAC addresses. If an administrator wishes to assign static MAC addresses to VMs, they should use addresses within this range.

Each VM has a unique ".vmx" file; a file containing a VMs configuration information. The dynamically generated MAC address is saved in this file. If this file is removed, a VM's MAC address may change, as the location information of that file is included in the address generation algorithm.

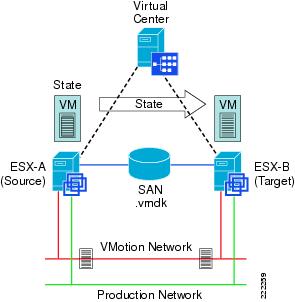

Note ![]() VMotion is the method used by ESX Server to migrate powered-on VMs within an ESX Server farm from one physical ESX host to another. A VMotion migration does not cause the VM MAC to change. If a VM moves with a VMotion migration from an ESX host to a different one, the MAC address of the VM will not change because the VMware Virtual Machine File System (VMFS) volume is on a SAN and is accessible to both the originating ESX host and target ESX host. Therefore, there is no need to copy the .vmx configuration file and VM disk to a different location, which may trigger a new MAC generation.

VMotion is the method used by ESX Server to migrate powered-on VMs within an ESX Server farm from one physical ESX host to another. A VMotion migration does not cause the VM MAC to change. If a VM moves with a VMotion migration from an ESX host to a different one, the MAC address of the VM will not change because the VMware Virtual Machine File System (VMFS) volume is on a SAN and is accessible to both the originating ESX host and target ESX host. Therefore, there is no need to copy the .vmx configuration file and VM disk to a different location, which may trigger a new MAC generation.

Note ![]() This is not necessarily the case when you migrate (non-VMotion) a powered-off VM. In this situation, you can also decide to relocate the VM, which in turn may change the MAC address on the VM.

This is not necessarily the case when you migrate (non-VMotion) a powered-off VM. In this situation, you can also decide to relocate the VM, which in turn may change the MAC address on the VM.

ESX Virtual Switch

The ESX host links local VMs to each other and to the external enterprise network via a software virtual switch (vSwitch), which runs in the context of the kernel.

Virtual Switch Overview

Virtual switches are the key networking components in VMware Infrastructure 3. You can create up to 248 simultaneous virtual switches on each ESX Server 3 host. A virtual switch is "built to order" at run time from a collection of small functional units.

Some of the key functional units are:

•![]() The core layer forwarding engine—This engine is a key part of the system (for both performance and correctness), and in virtual infrastructure it is simplified so it only processes Layer 2 Ethernet headers. It is completely independent of other implementation details, such as differences in physical Ethernet adapters and emulation differences in virtual Ethernet adapters.

The core layer forwarding engine—This engine is a key part of the system (for both performance and correctness), and in virtual infrastructure it is simplified so it only processes Layer 2 Ethernet headers. It is completely independent of other implementation details, such as differences in physical Ethernet adapters and emulation differences in virtual Ethernet adapters.

•![]() VLAN tagging, stripping, and filtering units.

VLAN tagging, stripping, and filtering units.

•![]() Layer 2 security, checksum, and segmentation offload units.

Layer 2 security, checksum, and segmentation offload units.

When the virtual switch is built at run-time, ESX Server loads only those components it needs. It installs and runs only what is actually needed to support the specific physical and virtual Ethernet adapter types used in the configuration. This means the system pays the lowest possible cost in complexity and demands on system performance.

The design of ESX Server supports temporarily loading certain components in the field—a capability that could be used, for example, for running appropriately designed diagnostic utilities. An additional benefit of the modular design is that VMware and third-party developers can easily incorporate modules to enhance the system in the future.

In many ways, the ESX Server virtual switches are similar to physical switches. In some notable ways, they are different. Understanding these similarities and differences will help you plan the configuration of your virtual network and its connections to your physical network.

A Virtual Switch is Similar to a Physical Switch

A virtual switch, as implemented in ESX Server 3, works in much the same way as a modern Ethernet switch. It maintains a MAC address, port forwarding table, and performs the following functions:

•![]() Looks up each frame's destination MAC when it arrives.

Looks up each frame's destination MAC when it arrives.

•![]() Forwards a frame to one or more ports for transmission.

Forwards a frame to one or more ports for transmission.

•![]() Avoids unnecessary deliveries (in other words, it is not a hub).

Avoids unnecessary deliveries (in other words, it is not a hub).

An ESX Server 3 virtual switch supports VLAN segmentation at the port level. This means that each port can be configured in either of the following ways:

•![]() With access to a single VLAN, making it what is called an access port in the world of physical switches, or in ESX Server terminology using virtual switch tagging.

With access to a single VLAN, making it what is called an access port in the world of physical switches, or in ESX Server terminology using virtual switch tagging.

•![]() With access to multiple VLANs, leaving tags intact, making it what is called a trunk port in the world of physical switches, or in ESX Server terminology using virtual guest tagging.

With access to multiple VLANs, leaving tags intact, making it what is called a trunk port in the world of physical switches, or in ESX Server terminology using virtual guest tagging.

In addition, an administrator can manage many configuration options for the switch as a whole and for individual ports using the Virtual Infrastructure Client.

A Virtual Switch Is Different from a Physical Switch

ESX Server provides a direct channel from virtual Ethernet adapters for such configuration information as authoritative MAC filter updates. Therefore, there is no need to learn unicast addresses or perform IGMP snooping to learn multicast group membership.

Spanning Tree Protocol not Used on the Virtual Switch

VMware infrastructure enforces a single-tier networking topology within the ESX Server. In other words, there is no way to interconnect multiple virtual switches; thus, the ESX network cannot be configured to introduce loops. Because of this, the vSwitch on the ESX host does not execute the Spanning Tree Protocol (STP).

Note ![]() It is actually possible, with some effort, to introduce a loop with virtual switches. However, to do so, you must run Layer 2 bridging software in a guest with two virtual Ethernet adapters connected to the same subnet. This would be difficult to do accidentally, and there is no reason to do so in typical configurations.

It is actually possible, with some effort, to introduce a loop with virtual switches. However, to do so, you must run Layer 2 bridging software in a guest with two virtual Ethernet adapters connected to the same subnet. This would be difficult to do accidentally, and there is no reason to do so in typical configurations.

Virtual Switch Isolation

Network traffic cannot flow directly from one virtual switch to another virtual switch within the same host. Virtual switches provide all the ports you need in one switch, leading to the following benefits:

•![]() Because there is no need to cascade virtual switches, virtual infrastructure provides no capability to connect virtual switches.

Because there is no need to cascade virtual switches, virtual infrastructure provides no capability to connect virtual switches.

•![]() Because there is no way to connect virtual switches, there is no need to prevent bad virtual switch connections.

Because there is no way to connect virtual switches, there is no need to prevent bad virtual switch connections.

•![]() Because virtual switches cannot share physical Ethernet adapters, there is no way to fool the Ethernet adapter into doing loopback or some similar configuration that would cause a leak between virtual switches.

Because virtual switches cannot share physical Ethernet adapters, there is no way to fool the Ethernet adapter into doing loopback or some similar configuration that would cause a leak between virtual switches.

In addition, each virtual switch has its own forwarding table, and there is no mechanism to allow an entry in one table to point to a port on another virtual switch. In other words, every destination the switch looks up can match only ports on the same virtual switch as the port where the frame originated, even if other virtual switches' lookup tables contain entries for that address.

There are natural limits to this isolation. If you connect the uplinks of two virtual switches together, or if you bridge two virtual switches with software running in a virtual machine.

Uplink Ports

Uplink ports are ports associated with physical adapters, providing a connection between a virtual network and a physical network. Physical adapters connect to uplink ports when they are initialized by a device driver or when the teaming policies for virtual switches are reconfigured. Some virtual switches should not connect to a physical network and thus have no uplink port. This is the case, for example, for a virtual switch that provides connections between a firewall virtual machine and the virtual machines protected by the firewall.

Virtual Ethernet adapters connect to virtual ports when you power on or resume the virtual machine on which the adapters are configured, when you take an explicit action to connect the device, or when you migrate a virtual machine using VMotion. A virtual Ethernet adapter updates the virtual switch port with MAC filtering information when it is initialized and whenever it changes. A virtual port may ignore any requests from the virtual Ethernet adapter that would violate the Layer 2 security policy in effect for the port. For example, if MAC spoofing is blocked, the port drops any packets that violate this rule.

Virtual Switch Correctness

Two correctness issues are particularly important. It is important to ensure that virtual machines or other nodes on the network cannot affect the behavior of the virtual switch. ESX Server guards against such influences in the following ways:

•![]() Virtual switches do not learn MAC addresses from the network in order to populate their forwarding tables. This eliminates a likely vector for denial- of-service (DoS) or leakage attacks, either as a direct denial of service attempt or, more likely, as a side effect of some other attack, such as a worm or virus as it scans for vulnerable hosts to infect.

Virtual switches do not learn MAC addresses from the network in order to populate their forwarding tables. This eliminates a likely vector for denial- of-service (DoS) or leakage attacks, either as a direct denial of service attempt or, more likely, as a side effect of some other attack, such as a worm or virus as it scans for vulnerable hosts to infect.

•![]() Virtual switches make private copies of any frame data used to make forwarding or filtering decisions. This is a critical feature of the virtual switch and is unique to virtual switches. The virtual switch does not copy the entire frame, because that would be inefficient, but ESX Server must make sure that the guest operating system does not have access to any sensitive data once the frame is passed on to the virtual switch.

Virtual switches make private copies of any frame data used to make forwarding or filtering decisions. This is a critical feature of the virtual switch and is unique to virtual switches. The virtual switch does not copy the entire frame, because that would be inefficient, but ESX Server must make sure that the guest operating system does not have access to any sensitive data once the frame is passed on to the virtual switch.

ESX Server ensures that frames are contained within the appropriate VLAN on a virtual switch. It does so in the following ways:

•![]() VLAN data is carried outside the frame as it passes through the virtual switch. Filtering is a simple integer comparison. This is really just a special case of the general principle that the system should not trust user accessible data.

VLAN data is carried outside the frame as it passes through the virtual switch. Filtering is a simple integer comparison. This is really just a special case of the general principle that the system should not trust user accessible data.

•![]() Virtual switches have no dynamic trunking support.

Virtual switches have no dynamic trunking support.

VLANs in VMware Infrastructure

VLANs provide for logical groupings of stations or switch ports, allowing communications as if all stations or ports were on the same physical LAN segment. Confining broadcast traffic to a subset of the switch ports or end users saves significant amounts of network bandwidth and processor time.

In order to support VLANs for VMware infrastructure users, one of the elements on the virtual or physical network has to tag the Ethernet frames with 802.1Q tag. There are three different configuration modes to tag (and untag) the packets for virtual machine frames.

•![]() Virtual switch tagging (VST mode)—This is the most common configuration. In this mode, you provision one Port Group on a virtual switch for each VLAN, then attach the virtual machine's virtual adapter to the Port Group instead of the virtual switch directly. The virtual switch Port Group tags all outbound frames and removes tags for all inbound frames. It also ensures that frames on one VLAN do not leak into a different VLAN. Use of this mode requires that the physical switch provides a trunk.

Virtual switch tagging (VST mode)—This is the most common configuration. In this mode, you provision one Port Group on a virtual switch for each VLAN, then attach the virtual machine's virtual adapter to the Port Group instead of the virtual switch directly. The virtual switch Port Group tags all outbound frames and removes tags for all inbound frames. It also ensures that frames on one VLAN do not leak into a different VLAN. Use of this mode requires that the physical switch provides a trunk.

•![]() Virtual machine guest tagging (VGT mode)—You may install an 802.1Q VLAN trunking driver inside the virtual machine, and tags will be preserved between the virtual machine networking stack and external switch when frames are passed from or to virtual switches. Use of this mode requires that the physical switch provides a trunk.

Virtual machine guest tagging (VGT mode)—You may install an 802.1Q VLAN trunking driver inside the virtual machine, and tags will be preserved between the virtual machine networking stack and external switch when frames are passed from or to virtual switches. Use of this mode requires that the physical switch provides a trunk.

•![]() External switch tagging (EST mode) —You may use external switches for VLAN tagging. This is similar to a physical network and VLAN configuration is normally transparent to each individual physical server. There is no need to provide a trunk in these environments.

External switch tagging (EST mode) —You may use external switches for VLAN tagging. This is similar to a physical network and VLAN configuration is normally transparent to each individual physical server. There is no need to provide a trunk in these environments.

Port Groups

Virtual machines connect to vSwitches through vNICs. The networking configuration on the ESX Server associates vNIC (also referred to VM Network Adapter) with a Network Label, which in turn identifies a Port Group. In other words, to associate a VM with a vSwitch, you need to assign a vNIC to a Port Group.

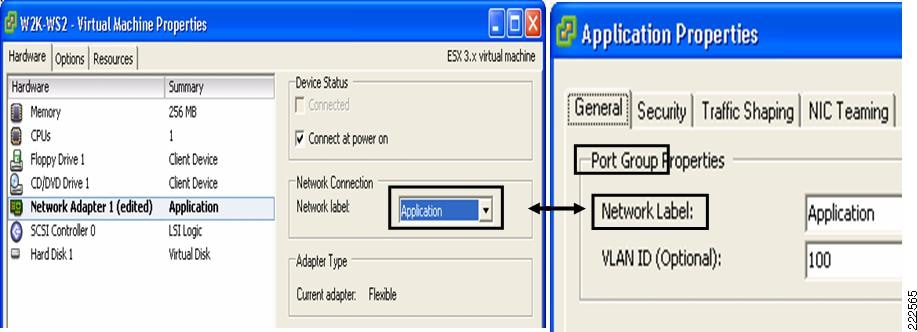

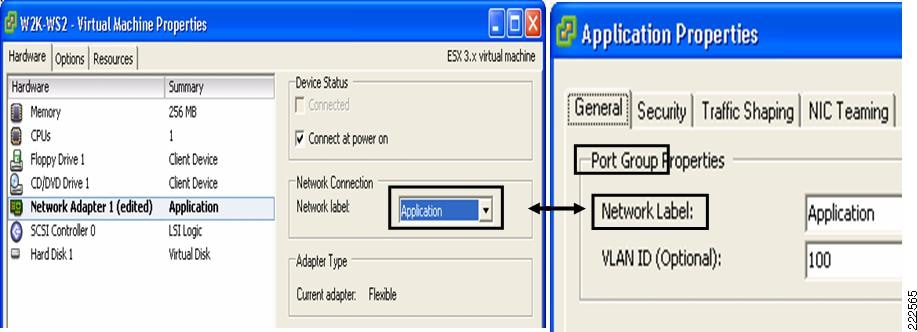

VM Assignment to a VLAN

Figure 3 shows the relationship between Port Group and VLANs. In Figure 3, to the left you see the VM Network Adapter configuration with the Network Connection settings referencing one of the available Port Groups. To the right you see the Virtual Switch Properties for a given Port Group, the Network Label and the VLAN associated with it.

Figure 3 Relation Between Port Groups and VLANs

The association of a VM Network Adapter (vNIC) to a Port Group (i.e., Network Label) does the following:

•![]() Assigns the vNIC to a vSwitch.

Assigns the vNIC to a vSwitch.

•![]() Assigns the vNIC to a specific VLAN.

Assigns the vNIC to a specific VLAN.

•![]() Assigns the vNIC to a specific "NIC Teaming" policy (this is explained in further detail in a later section as this is not vNIC teaming, nor NIC teaming in the traditional sense). It would be more appropriate to say that traffic from the VM is going to exit the vSwitch to the LAN switching network according to a traffic load-balancing policy defined by the Port Group "NIC Teaming" configuration.

Assigns the vNIC to a specific "NIC Teaming" policy (this is explained in further detail in a later section as this is not vNIC teaming, nor NIC teaming in the traditional sense). It would be more appropriate to say that traffic from the VM is going to exit the vSwitch to the LAN switching network according to a traffic load-balancing policy defined by the Port Group "NIC Teaming" configuration.

Port Groups are NOT VLANs

Port Groups are configuration templates for the vNIC ports on the vSwitch. Port Groups allow administrators to group vNICs from multiple VMs and configure them simultaneously. The administrator can set specific QoS, security policies, and VLANs by changing the Port Group configuration.

Even if Port Groups assign vNICs (thus VMs) to a VLAN, there is no 1-to-1 mapping between Port Groups and VLANs; in fact, you could have any number of different Port Groups using the same VLAN.

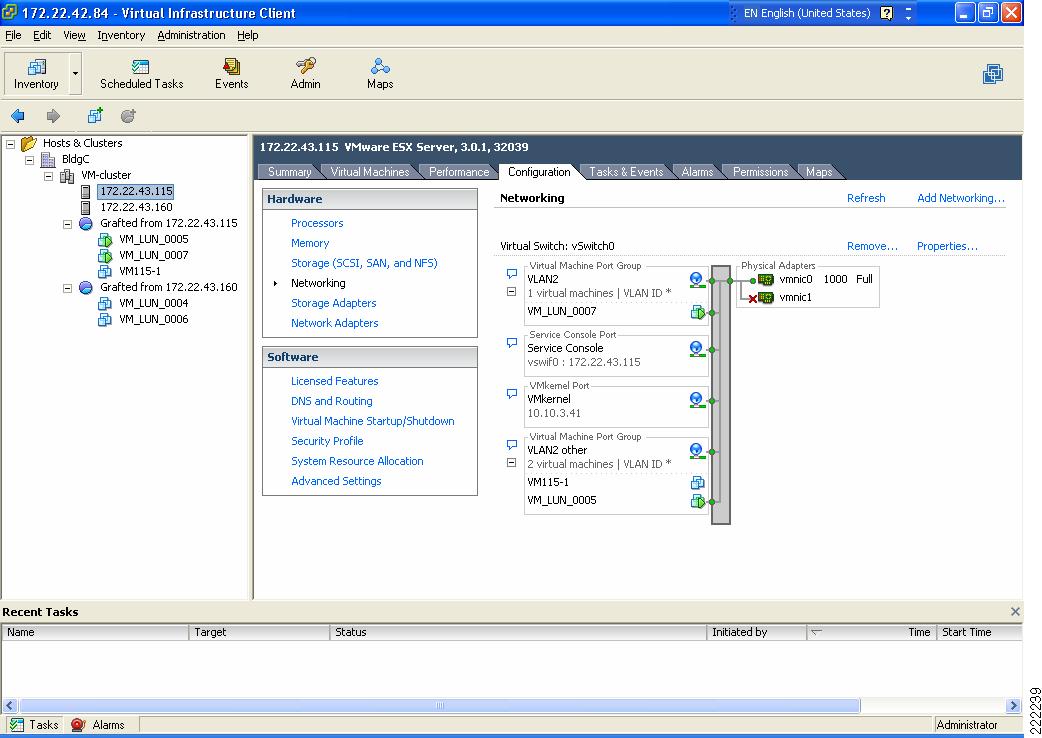

Consider Figure 4 as an example. VM_LUN_0007 and VM_LUN_0005 are on two different Port Groups, the first called VLAN2 and the second called VLAN2 other. Both Port Groups are using VLAN2, in fact VM_LUN_0007 can talk to VM_LUN_0005. Port Groups thus do not partition the switch ports by isolating them, but simply by grouping them from a configuration point of view.

Figure 4 vSwitch and Port Groups

Summary

The Port Group concept may be sometimes misunderstood by networking experts. As a summary these are key concepts to retain regarding Port Groups:

•![]() Port Groups are a configuration management mechanism.

Port Groups are a configuration management mechanism.

•![]() Port Groups are not VLANs.

Port Groups are not VLANs.

•![]() Port Groups are not Port-Channels.

Port Groups are not Port-Channels.

•![]() The association between a VM and a vSwitch is defined by selecting a Port Group (called Network Label) from the vNIC (VM Network Adapter) configuration screen.

The association between a VM and a vSwitch is defined by selecting a Port Group (called Network Label) from the vNIC (VM Network Adapter) configuration screen.

•![]() Port Groups define the following configuration parameters for the vNIC ports that belong to them: VLAN number, Layer 2 security policy, QoS policy, and traffic load-balancing policy referred to as NIC Teaming.

Port Groups define the following configuration parameters for the vNIC ports that belong to them: VLAN number, Layer 2 security policy, QoS policy, and traffic load-balancing policy referred to as NIC Teaming.

Layer 2 Security Features

The virtual switch has the ability to enforce security policies to prevent virtual machines from impersonating other nodes on the network. There are three components to this feature.

•![]() Promiscuous mode is disabled by default for all virtual machines. This prevents them from seeing unicast traffic to other nodes on the network.

Promiscuous mode is disabled by default for all virtual machines. This prevents them from seeing unicast traffic to other nodes on the network.

•![]() MAC address change lockdown prevents virtual machines from changing their own unicast addresses. This also prevents them from seeing unicast traffic to other nodes on the network, blocking a potential security vulnerability that is similar to but narrower than promiscuous mode.

MAC address change lockdown prevents virtual machines from changing their own unicast addresses. This also prevents them from seeing unicast traffic to other nodes on the network, blocking a potential security vulnerability that is similar to but narrower than promiscuous mode.

•![]() Forged transmit blocking, when you enable it, prevents virtual machines from sending traffic that appears to come from nodes on the network other than themselves.

Forged transmit blocking, when you enable it, prevents virtual machines from sending traffic that appears to come from nodes on the network other than themselves.

Management

The following are three approaches to managing an ESX Server:

•![]() Service Console

Service Console

•![]() Web-based User Interface

Web-based User Interface

•![]() Management application such as VMware VirtualCenter

Management application such as VMware VirtualCenter

The Service Console on ESX Server is accessible via SSH, Telnet, HTTP, and FTP. In addition, the Service Console supplies authentication and system monitoring services. Note that the embedded version of ESX Server, ESX 3i, has no user accessible Service Console, but still supports a web interface for management. The Service Console and web-based user interface are sufficient for managing a single ESX host.

VMware VirtualCenter is a central management solution that, depending on the VC platform, scales to support numerous clients, ESX hosts, and VMs. VirtualCenter provides tools for building and maintaining your virtual network infrastructure. You can use VirtualCenter to add, delete, and modify virtual switches and to configure Port Groups with VLANs and teaming.

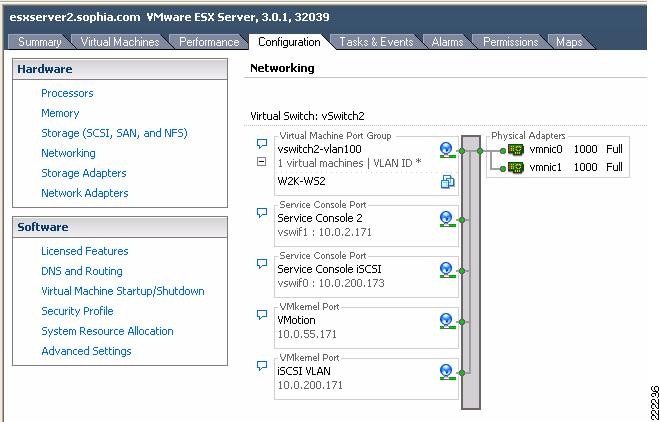

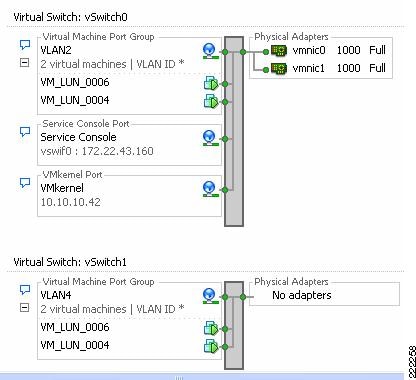

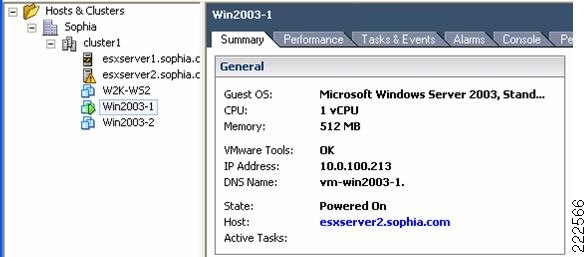

A sample configuration is visible from the VMware ESX Server/Configuration/Networking tab, as shown in Figure 5. In this example, the VM called W2K-WS2 connects to vSwitch2 on VLAN 100 (shown in Figure 5 as truncated to VLAN *).

Figure 5 vSwitch Final Configuration

The characteristics of a vSwitch can be further modified by selecting the Properties button to the right of the vSwitch. This allows adding more Port Groups, changing the NIC Teaming properties, configure traffic rate limiting, etc.

You can use the VirtualCenter roles feature to assign the permissions a network administrator needs to manage the virtual network. For detailed discussion, see Managing VMware VirtualCenter Roles and Permissions document available at http://www.vmware.com/vmtn/resources/826.

vSwitch Scalability

An ESX Server may contain multiple vSwitches, each of which can be configured with up to 1016 "internal" virtual ports for VM use. Because each vNIC assigned to the vSwitch uses one virtual port, this yields a theoretical maximum of 1016 VMs per vSwitch. The virtual switch connects to the enterprise network via outbound vmnic adapters. A maximum of 32 vmnics may be used by the virtual switch for external connectivity.

Incorrect Configurations with vSwitches

Certain configurations are not allowed with vSwitches:

•![]() vSwitches cannot be directly connected to each other. In other words, only vNICs and vmnics can connect to a vSwitch. It is possible to pass traffic from one vSwitch to another vSwitch by using a VM with two vNICs and leveraging the bridging functionality of Microsoft Windows, for example. This practice should be avoided due to the risk of introducing Layer 2 loops.

vSwitches cannot be directly connected to each other. In other words, only vNICs and vmnics can connect to a vSwitch. It is possible to pass traffic from one vSwitch to another vSwitch by using a VM with two vNICs and leveraging the bridging functionality of Microsoft Windows, for example. This practice should be avoided due to the risk of introducing Layer 2 loops.

•![]() A vmnic and its associated physical NIC cannot belong to more than one vSwitch.

A vmnic and its associated physical NIC cannot belong to more than one vSwitch.

•![]() A vSwitch should not and, in fact, cannot become a transit path for the LAN switching network (see vSwitch Forwarding Characteristics for more information).

A vSwitch should not and, in fact, cannot become a transit path for the LAN switching network (see vSwitch Forwarding Characteristics for more information).

ESX LAN Networking

A virtual switch uses at least one of the vmnics on the physical server to link VMs to the external network. The VMkernel allows the vSwitch software construct to use some of the hardware acceleration features available on the physical NICs, including the following:

•![]() TCP segmentation offload

TCP segmentation offload

•![]() VLAN tagging

VLAN tagging

•![]() Checksum calculations offload

Checksum calculations offload

vSwitch Forwarding Characteristics

The vSwitch operates like a regular Layer 2 Ethernet switch. The vSwitch forwards traffic among VMs and between VMs and the LAN switching infrastructure. The ESX Server vmnics are the vSwitch uplinks. See Figure 6.

Figure 6 vSwitch Components

The areas of similarity for vSwitches and regular Ethernet switches are:

•![]() Forwarding is based on the MAC address.

Forwarding is based on the MAC address.

•![]() Traffic from VM-to-VM within the same vSwitch and VLAN remains local.

Traffic from VM-to-VM within the same vSwitch and VLAN remains local.

•![]() vSwitches can tag traffic with a VLAN ID.

vSwitches can tag traffic with a VLAN ID.

•![]() vSwitches are capable of trunking (802.1Q trunks without negotiation protocols).

vSwitches are capable of trunking (802.1Q trunks without negotiation protocols).

•![]() vSwitches can perform some QoS functions (rate limiting).

vSwitches can perform some QoS functions (rate limiting).

•![]() vSwitches implement some Layer 2 security functions.

vSwitches implement some Layer 2 security functions.

•![]() vSwitches are capable of establishing port channels (without negotiation protocols).

vSwitches are capable of establishing port channels (without negotiation protocols).

Areas where vSwitches and regular Ethernet switches differ are:

•![]() vSwitches forwarding table is programmed by a notification mechanism between VMs and the vSwitch. The vSwitch does not learn MAC addresses from the network.

vSwitches forwarding table is programmed by a notification mechanism between VMs and the vSwitch. The vSwitch does not learn MAC addresses from the network.

•![]() vSwitches do not run or require Spanning Tree Protocol (STP), as traffic received on an uplink is never forwarded to another uplink.

vSwitches do not run or require Spanning Tree Protocol (STP), as traffic received on an uplink is never forwarded to another uplink.

•![]() vSwitches do not perform IGMP snooping; however, multicast traffic is not flooded as the vSwitch knows the multicast interest of all the vNICs.

vSwitches do not perform IGMP snooping; however, multicast traffic is not flooded as the vSwitch knows the multicast interest of all the vNICs.

•![]() vSwitches' port mirroring capabilities are a subset of SPAN capabilities.

vSwitches' port mirroring capabilities are a subset of SPAN capabilities.

Note ![]() For information from VMware: http://www.vmware.com/files/pdf/virtual_networking_concepts.pdf

For information from VMware: http://www.vmware.com/files/pdf/virtual_networking_concepts.pdf

vSwitch Forwarding Table

The vSwitch has a Layer 2 forwarding table that it uses to forward traffic based on the destination MAC address. The vSwitch forwarding table contains the MAC address for the VMs and their associated virtual ports. When a frame is destined for a VM, the vSwitch sends the frame directly to the VM. When the destination MAC address does not exist in the VM, or it is multicast or broadcast, it sends the traffic out to the vmnics (i.e., to the server NIC ports).

If multiple vmnics (physical NICs) are present, multicast and broadcast traffic is not flooded to all vmnics in the same VLAN. This happens regardless of the NIC teaming configuration—with an active/standby configuration this is self-explanatory, with an active/active configuration this is also the case because at any given time each VM only uses one vmnic to forward the traffic.

In summary, a regular Ethernet switch learns the forwarding table based on traffic seen on its ports; on the other hand, in a vSwitch, the forwarding table contains only the MAC addresses of the VMs and everything that does not match the VM entries goes out to the server NIC cards, including broadcasts and multicast traffic.

vSwitch Loop Prevention

The configuration of redundant vSwitch uplinks is addressed in Using NIC Teaming for Connectivity Redundancy. Realize for now that vSwitches do not run or require STP so the vSwitch implements other loop prevention mechanisms. These loop prevention mechanisms include dropping inbound traffic for possible returning frames, and distance vector like logic where, for example, a frame that enters from one NIC (uplink) is not going to go out of the ESX Server from a different NIC card (this would be otherwise the case for, say, broadcasts).

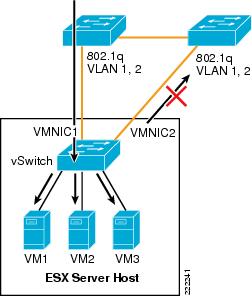

Examples help clarify these behaviors. Figure 7 represents a typical "looped" design, which means a topology that without an STP would not work because of a Layer 2 loop is present. Figure 7 shows the behavior of a vSwitch when a broadcast enters the ESX NIC1. If the vSwitch was running Spanning Tree, NIC2 would be in blocking state, thus preventing the broadcast from going out on NIC2. Unlike a regular switch, the vSwitch does not run Spanning Tree, but it does not forward the broadcast out of NIC2 either, which is desirable.

Figure 7 vSwitch and Broadcasts

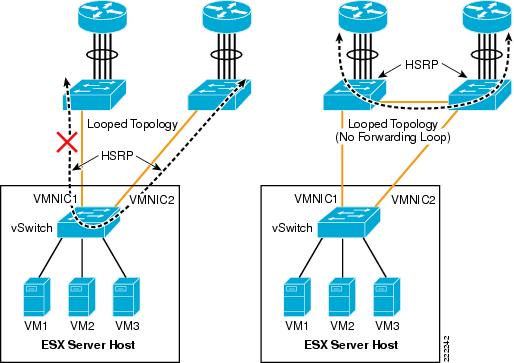

Now consider a loop-free topology (see the left side of Figure 8), which is a topology where no intrinsic loop exist. This is a topology where Spanning Tree is not really needed, except as a loop-prevention mechanism. In this topology the upstream routers need to exchange HSRP hellos to provide a redundant gateway. If the vSwitch was a normal Ethernet switch, the HSRP hellos would allow the two routers to agree on which one of the two is going to be active and which one is going to be standby for the gateway function. Because the HSRPs advertisements need to go through a vSwitch, in this case the two routers will not be able to converge, both of them believe they are active for the HSRP gateway function. The reason is that the vSwitch does not pass the HSRP datagrams. An example topology that would solve this specific problem (but not necessarily the best possible design) for this to work would be the topology to the right in Figure 8.

Figure 8 vSwitch and HSRP Traffic

Note ![]() The above examples do not constitute design recommendations, they are included in this document to clarify the forwarding and loop prevention characteristics of a vSwitch compared to a regular Ethernet switch. For design recommendations refer to ESX Server Network and Storage Connectivity.

The above examples do not constitute design recommendations, they are included in this document to clarify the forwarding and loop prevention characteristics of a vSwitch compared to a regular Ethernet switch. For design recommendations refer to ESX Server Network and Storage Connectivity.

VLAN Tagging

Physical access switches in the data center provide the VLAN tagging functionality, allowing a single network infrastructure to support multiple VLANs. With the introduction of ESX Server into the data center, the traditional method of VLAN tagging is no longer the only option.

vSwitches support VLAN tagging. You can configure a vSwitch to pass traffic from the VM as is, without any VLAN TAG, to the Cisco switch upstream connected to the ESX Server NICs. VMware calls this method External Switch Tagging (EST). You can also configure the vSwitch in such a way that it preserves the VLAN TAG assigned by the VM Guest OS when passing the traffic to the upstream Cisco switch connected to the ESX Server NICs. VMware calls this method Virtual Guest Tagging (VGT).

The most common and preferred option is to configure the vSwitch to color traffic from the VMs with a VLAN TAG and to establish a 802.1q trunk with the Cisco switch connected to the ESX Server NICs. VMware calls this method Virtual Switch Tagging (VST).

Note ![]() VMware information can be found at: http://www.vmware.com/pdf/esx3_vlan_wp.pdf.

VMware information can be found at: http://www.vmware.com/pdf/esx3_vlan_wp.pdf.

This section of the document discusses the benefits and drawbacks of each of these (EST, VTG, and VST) approaches.

External Switch Tagging

EST defines VLAN tagging at the access port of the ESX host. From a VMware configuration point of view, EST is achieved by specifying VLAN 0 in the VLAN ID field of the Port Group configuration, or simply by leaving the VLAN ID empty.

Contrary to what one may think, there is no 1-to-1 mapping between the vNIC and the vmnics. Local switching on the vSwitch still happens. When the traffic from a VM goes out to the Cisco Catalyst switch, the vSwitch does not prepend any 802.1q VLAN label.

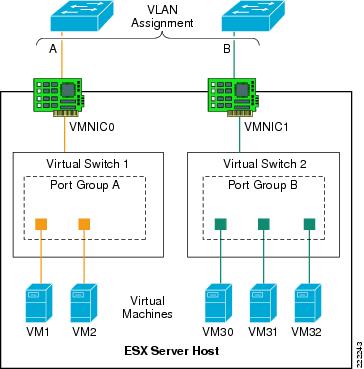

In Figure 9, each virtual switch is associated with a single VLAN: VLANs A and B. The external network defines the vmnic links to the virtual switches as access ports supporting a single VLAN per port. The vSwitch does not perform any VLAN tag functions. VM1-to-VM2 traffic is switched on Virtual Switch 1 without going out of vmnic0. If VM1 (or VM2) traffic is not directed to VM2 (or VM1), it goes out of vmnic0 and the Cisco Catalyst switch assigns it to VLAN A.

Similarly, traffic among VM30, VM31, VM32 is switched on vSwitch2 without going out of vmnic1. If either VM30, VM31, or VM32 send traffic to a different destination than the VMs on Virtual Switch 2, traffic goes out of vmnic1 and the Cisco Catalyst switch assigns it to VLAN B.

Figure 9 External Switch Tagging

Virtual Guest Tagging

VGT requires that each VM guest operating system supports and manages 802.1q tags. The VM manages the vNIC, removing all tagging responsibilities from the virtual switch. Disabling 802.1q tag support on the vSwitch can be done by setting the VLAN field to 4095 in the Port Group configuration. The vNIC driver may need to be set to e1000.

A VGT configuration requires more processing power from each VM, reducing the efficiency of the VM and overall ESX host. VGT deployments are uncommon but are necessary if a single VM must support more than four VLANs.

Note ![]() Each VM can have up to four independent vNIC which could reside on separate VLANs. As a result, there is no need to use the VGT mode if you need to place a VM on different VLANs. The vlance and vmxnet vNIC drivers do not support VGT. If you need to run VGT, you need to configure the VM vNIC driver to be e1000.

Each VM can have up to four independent vNIC which could reside on separate VLANs. As a result, there is no need to use the VGT mode if you need to place a VM on different VLANs. The vlance and vmxnet vNIC drivers do not support VGT. If you need to run VGT, you need to configure the VM vNIC driver to be e1000.

Virtual Switch Tagging

Virtual Switch Tagging (VST) allows the virtual switch to perform the 802.1q tag process. The VMkernel allows the physical adapters to carry out the VLAN tag operations, relieving the VMkernel of the work and improving overall system performance. VST requires that the vmnics connected to the vSwitch be 802.1q trunks. Note that this does not require any special configuration on the ESX host. The external network ports need to be configured to be 802.1q trunks as well.

Figure 10 shows a logical view of VST.

Figure 10 Virtual Switch Tagging

The vNICs of the VM are assigned to a Port Group that is associated with a specific VLAN, in this case VLANs "A" and "B". The vSwitch defines the vmnics as ports supporting all of the VLANs within the switch; that is, as trunks.

Note ![]() The Dynamic Trunking Protocol (DTP) allows negotiating the creation of a trunk between two switches. DTP is not supported by ESX virtual switches. This means that the Cisco Catalyst switch connecting to a vSwitch needs to be configured for static trunking.

The Dynamic Trunking Protocol (DTP) allows negotiating the creation of a trunk between two switches. DTP is not supported by ESX virtual switches. This means that the Cisco Catalyst switch connecting to a vSwitch needs to be configured for static trunking.

In VST mode, the vSwitch may support numerous VLANs over a limited number of ports, which allows the server administrator to define more VLANs than physical adapters.

Native VLAN

By default, the Cisco Catalyst switches can take traffic on the native VLAN that is not tagged and assign a VLAN tag to it. The vlan dot1q tag native configuration option on the Cisco Catalyst switches controls whether or not the switch needs to expect traffic to come into the port with or without the VLAN tag for the native VLAN. If a Port Group on the vSwitch uses the "native VLAN" according to the VST deployment scheme, it forwards traffic on the native VLAN with a 802.1q VLAN TAG. The vSwitch also expects the traffic coming from the Cisco switch on the native VLAN to be tagged. For the upstream Cisco switch port trunk to be compatible with this configuration, you need to configure the vlan dot1q tag native command on the Cisco switch.

Consider now a deployment of a mix of VST and EST mode. This means that some Port Groups define a VLAN ID, but other Port Group on the vSwitch use no VLAN ID, according to the EST deployment scheme. The traffic from these Port Groups belongs to the same VLAN (i.e., no VLAN on the vSwitch) and is colored by the Cisco Catalyst switch with the native VLAN configured on the switchport. In this case you need to disable the vlan dot1q tag native command on the Cisco Catalyst switch.

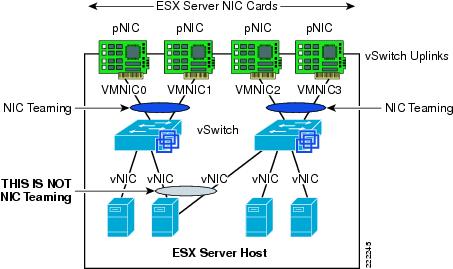

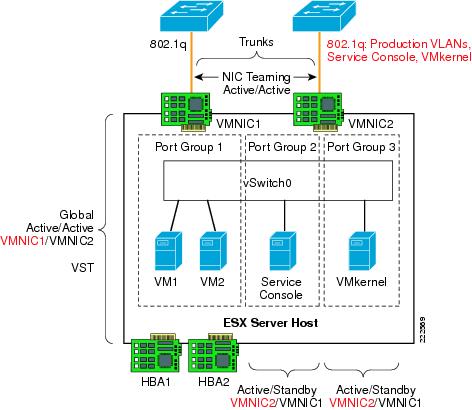

Using NIC Teaming for Connectivity Redundancy

In the context of VMWare networking, NIC Teaming refers to the configuration of redundant ESX Server NIC cards that are used as vSwitch uplinks. This is also referred to as bonding. As Figure 11 shows, NIC teaming refers to creating a redundant vmnic configuration and not a redundant vNIC configuration. ESX NIC teaming is configured at the Port Group level. A redundant configuration on the ESX Server involves configuring vSwitches and/or Port Groups with redundant connectivity to the access layer.

Note ![]() Do not look for the NIC vendor specific NIC teaming software to configure ESX Server NIC teaming. ESX NIC teaming refers to the vSwitch "uplinks" (vmnic) configuration.

Do not look for the NIC vendor specific NIC teaming software to configure ESX Server NIC teaming. ESX NIC teaming refers to the vSwitch "uplinks" (vmnic) configuration.

Although it is possible to configure a VM with multiple vNICs, this configuration does not add any redundancy or performance because vSwitches and VMs are all software constructs that run within the VMkernel. Configuring redundant vSwitch uplinks adds redundancy and possibly performance by leveraging different physical network paths and multiple physical NIC cards or ports.

NIC teaming allows to bundle heterogeneous NIC cards together in order to minimize the chance of losing network connectivity due to a PCI card failure. Just like normal NIC teaming on servers, the NICs need to be part of the same Layer 2 domain.

Figure 11 Meaning of NIC Teaming for an ESX Server

NIC teaming offers several configuration options which can be implemented per-vSwitch or per Port Group:

•![]() Active/Standby.

Active/Standby.

•![]() Active/Active with load balancing based on VM Port-ID.

Active/Active with load balancing based on VM Port-ID.

•![]() Active/Active with load balancing based on the VM MAC-address hash.

Active/Active with load balancing based on the VM MAC-address hash.

•![]() Active/Active with load balancing based on the hash of the source and destination IP address. VMWare calls this IP-based hashing. Cisco calls this configuration Port-channeling.

Active/Active with load balancing based on the hash of the source and destination IP address. VMWare calls this IP-based hashing. Cisco calls this configuration Port-channeling.

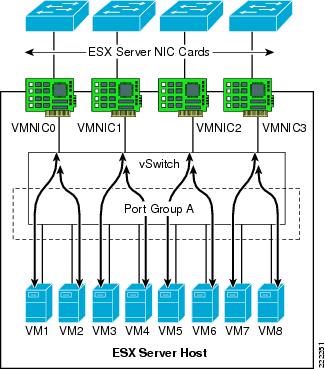

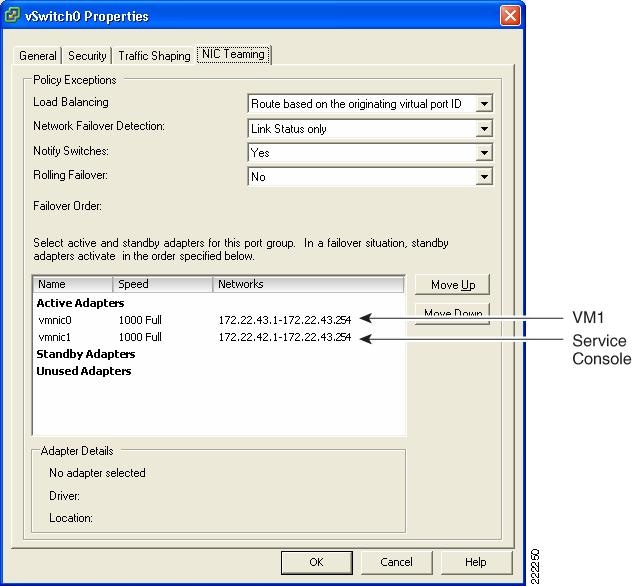

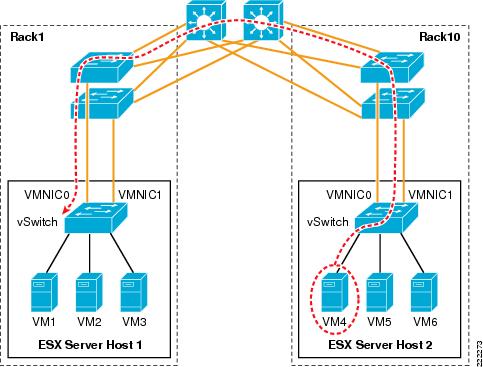

Active/Active Port-based and MAC-based

With active/active mode, all the NICs (vmnics) in the team forward and receive traffic. NICs can be attached to different Cisco Catalyst switches or to a single switch, although using separate switches is more common for reasons of redundancy. The VMware virtual switch load balances egress traffic across the teamed vmnics via the source vNIC MAC address (MAC-based mode) or based on the Virtual Port ID (Port-based). The virtual switch uses all vmnics in the team. If a link failure occurs, the vSwitch reassigns VM traffic to the remaining functional interfaces defined in the team.

With either mode, a network traffic from a VM gets assigned to one vmnics for as long as the vmnic is functioning. Traffic from VMs are on average equally spread on all the available vmnics. For example, if there were four NICs in the team, and eight VMs, there would be two VMs using each NIC.

See Figure 12 for clarification. In this example, VM1 and VM2 use vmnic0, VM3 and VM4 use vmnic1, and so on. Vmnic0 could be connected to one Layer 2 switch, vmnic1 could connect to the same or a different Layer 2 switch, vmnic3 could connect to the same or a different switch, and so on. There is no requirement for the vmnics or VMs to be on the same or different VLANs just like there is no special requirement for the vmnics to connect to one single switch or multiple switches.

This active/active teaming mechanism ensures consistent mapping between the MAC of VMs and the Cisco LAN switches ports for both inbound and outbound traffic. The MAC moves to a different vmnic (thus a different Cisco LAN switchport) only when one of the vmnics fails, or if the VM is administratively moved (for example to a different ESX Server).

Figure 12 vSwitch NIC Teaming Active/Active Traffic Distribution

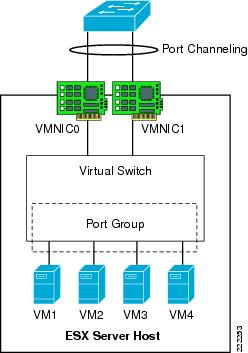

Active/Active IP-based (Port-Channeling)

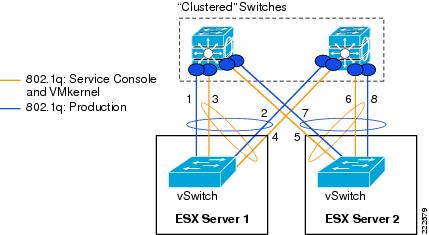

An EtherChannel (also known as 802.3ad link aggregation) bundles individual Ethernet links into a single logical link that provides the aggregate bandwidth of up to eight physical links. In VMware terminology this is referred to as IP-based load balancing and is found in the NIC teaming configuration of the ESX host. The IP-based load balancing configuration distributes outbound traffic from the VM based on the hash of the source and destination IP addresses. For this load-balancing method to work, you need to configure EtherChanneling (i.e., 802.3ad link aggregation) on the Cisco LAN switch that the vmnics connect to.

On a Cisco switch, you can configure EtherChannels manually or you can use the Port Aggregation Control Protocol (PAgP) or the 802.3ad Link Aggregation Control Protocol (LACP) to form EtherChannels. The EtherChannel protocols allow ports with similar characteristics to form an EtherChannel through dynamic negotiation with connected network devices. PAgP is a Cisco-proprietary protocol and LACP is defined in IEEE 802.3ad. Cisco switches support both protocols.

On the ESX host, the vSwitch IP-based load balancing does not run the 802.3ad LACP. For this reason, the EtherChannel configuration on the Cisco Catalyst switch can not use dynamic negotiation, which means that the channel-group is set to ON in the configuration. Note that such a configuration is static in that both the Cisco Catalyst switch and the vSwitch perform load distribution on the bundled links (with their respective load balancing algorithm) on either side, regardless of whether the other end of the bundle is configured for channeling or not.

Figure 13 shows this NIC teaming method. All NICs connect to a single switch (or to multiple switches "clustered" together with proper technology). All NIC ports that are member of the same IP-based NIC teaming configuration must be member of the same Port-channel on the Cisco LAN switch.

Note ![]() For more information on configuring EtherChanneling on the Cisco Catalyst switches, refer to the following URL:

For more information on configuring EtherChanneling on the Cisco Catalyst switches, refer to the following URL:

http://www.cisco.com/en/US/partner/docs/switches/lan/catalyst6500/ios/12.2SX/configuration/guide/channel.html

Note ![]() It is possible to connect an ESX Server to multiple Cisco Catalyst 6500 switches configured for Virtual Switching System (VSS), or multiple Cisco Blade Switches (CBS) configured for Virtual Blade Switching (VBS), and configure NIC teaming for IP-based hashing.

It is possible to connect an ESX Server to multiple Cisco Catalyst 6500 switches configured for Virtual Switching System (VSS), or multiple Cisco Blade Switches (CBS) configured for Virtual Blade Switching (VBS), and configure NIC teaming for IP-based hashing.

Figure 13 vSwitch EtherChanneling

Teaming Failback

The Teaming Failback feature controls the preemption behavior of NIC teaming. For example, assume that you have two vmnics: vmnic0 and vmnic1. After vmnic0 fails, traffic is sent to vmnic1. When vmnic0 becomes available, the default behavior (Teaming Failback set to ON) is for traffic to be reassigned to vmnic0.

This poses a risk of blackholing traffic when on the LAN switching side there is a linkup but the port does not go into forwarding mode right away. This problem can be easily addressed on the Cisco Catalyst switch side by using trunkfast and by setting the trunk mode to ON. The problem can also be addressed from the ESX host side, by disabling the Teaming Failback feature, which means that after vmnic0 gets a linkup again, the NIC is still kept inactive up until the currently active vmnic1 fails.

In releases prior to ESX 3.5, the Teaming Failback mode is enabled by disabling Rolling Failover. Failback = No (ESX 3.5) is equivalent to Rolling Failover = Yes (releases prior to ESX 3.5) and vice versa.

Beaconing

Beaconing is a probing function that allows the ESX host to monitor the availability of vmnics within a team. Beaconing requires that the vmnics reside in the same broadcast domain. Beacons are intended for use with teams connected to more than one external switch. The ESX Server monitors the loss of beacon probes to determine failures in the external network. If a failure condition exists, meaning that a vmnic has not reported receiving x number of beacons from the beacon initiator, the ESX Server toggles adapters and declares the primary adapter down.

The beacon frames are Layer 2 frames, with Ethertype 0x05ff with source MAC address equal to the burnt-in-address of the NIC card (not the VMware MAC address) and a broadcast destination address. Frames are sent on every VLAN that the vSwitch is on.

Note ![]() Beaconing is configured per Port Group.

Beaconing is configured per Port Group.

It is not recommended to use beaconing as a form of external network failure detection because of the possibility of false positives and its inability to detect upstream failures. To provide a highly available external network infrastructure, use redundant paths and/or protocols with network-based load-balancing to achieve high availability.

The Link State Tracking feature associates upstream links with downstream links to the ESX Server. Upstream link failures will then trigger downstream link failures that the ESX Server can detect using Link Status Only under Network Failover Detection. The Link State Tracking feature is available on the Cisco Catalyst blade switches, Catalyst 3750, Catalyst 2960, and Catalyst 3560. Check the Cisco website for support of this feature on your Cisco Catalyst switch. The Link State Tracking feature associates upstream links with downstream links to the ESX Server. Upstream link failures will then trigger downstream link failures that the ESX Server can detect using Link Status Only under Network Failover Detection.

vSwitch Configuration

The ESX host links local VMs to each other and to the external enterprise network via a software construct named vSwitch, which runs in the context of the VMkernel. The vSwitch emulates a traditional physical Ethernet network switch to the extent that it forwards frames at the data link layer (Layer 2).

Creation of vSwitch

Whenever a VM is created and you need to configure access to the network, you have two options to choose from: using an existing vSwitch or creating one. See Figure 14.

Figure 14 How to create a new vSwitch from the VirtualCenter GUI

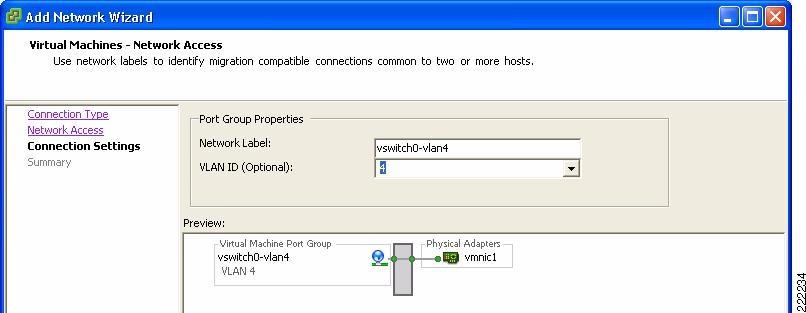

If you choose to create a new vSwitch you can then create the Port Group which is defined by a Network Label, the VLAN number and other characteristics that are analyzed in other sections of this document. In Figure 14 and Figure 15, you can see the creation of vSwitch0 and the definition of a Port Group using VLAN 4 on vSwitch 0. This Port Group uses the Network Label vSwitch0-vlan4.

Figure 15 VLAN Creation from the VirtualCenter GUI

Note ![]() Naming or labeling Port Groups within vSwitches is an important standard to develop and maintain in an ESX environment. You could name the Port Group Network Label after the VLAN, or indicate the vSwitch name and VLAN or simply use the name of the application that attaches to this Port Group.

Naming or labeling Port Groups within vSwitches is an important standard to develop and maintain in an ESX environment. You could name the Port Group Network Label after the VLAN, or indicate the vSwitch name and VLAN or simply use the name of the application that attaches to this Port Group.

Note ![]() In this part of the document, we chose a Network Label name that reflects the vSwitch and the VLAN being used. This may not be the best way of naming Port Groups because the vSwitch name has only local significance and is automatically generated. For VM mobility, the origin Network Label and the destination Network label need to be the same, as well as the VLAN. For this reason you want to use a Network Label name that can be used on a different ESX Server, regardless of which vSwitch the VM migrates to.

In this part of the document, we chose a Network Label name that reflects the vSwitch and the VLAN being used. This may not be the best way of naming Port Groups because the vSwitch name has only local significance and is automatically generated. For VM mobility, the origin Network Label and the destination Network label need to be the same, as well as the VLAN. For this reason you want to use a Network Label name that can be used on a different ESX Server, regardless of which vSwitch the VM migrates to.

Note ![]() Port Groups labels need to be unique across vSwitches on the same ESX host.

Port Groups labels need to be unique across vSwitches on the same ESX host.

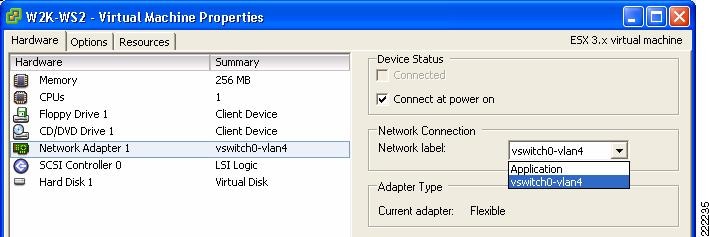

The vNIC of the VM is referred to as Network Adapter in the Edit Settings Window for the VM. The VM Network Adapter configuration (i.e., the vNIC configuration) selects the Port Group within a vSwitch that a VM connects to by referring to its Network Label. By selecting which Port Group a Network Adapter uses, you implicitly assign the VM to a vSwitch, and to a specific VLAN within the vSwitch.

In Figure 16, the Port Group has been labeled after the VLAN that it is used to color the traffic and the vSwitch that this Port Group is defined on.

Figure 16 Joining vNIC and VLAN

A sample configuration is visible from the VMware ESX Server -> Configuration -> Networking tab, as shown in Figure 17. In this example, the VM called W2K-WS2 connects to vSwitch2 on VLAN 100.

Figure 17 vSwitch Final Configuration

Vswitch characteristics can be further modified by selecting the Properties button to the right of the vSwitch. This allows adding more Port Groups, changing the NIC Teaming properties, configure traffic rate limiting, etc.

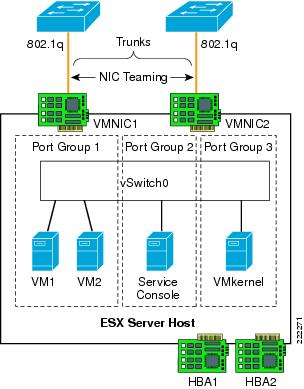

VMs, VMkernel and Service Console Network Configuration

As shown in Figure 17, vSwitch2 offers network connectivity to multiple VMs (W2K-WS2), to the Service Console, and to the VMkernel.

As a reminder, the Service Console is used by the VirtualCenter or by the Virtual Infrastructure Client to manage the ESX Server, so a change to the Service Console configuration needs to be carefully reviewed to avoid losing management access to the ESX Server. The VMkernel network configuration is used for NAS, iSCSI access and VMotion.

In the Service Console and the VMkernel configurations you can set the IP address, default gateway (which can be, both, different from the Service Console's IP and gateway), VLAN number, and more. These addresses are shown in Figure 17. You can verify the connectivity of the Service Console and the VMkernel by using CLI commands; the ping command for the Service Console and the vmkping command for the VMkernel.

Although it is recommended that Service Console and VMkernel get their own respective dedicated NIC, it is likely that in many deployments they do share vmnics. In this case, it is recommended that, while sharing the same vSwitch, the Service Console be on its own VLAN, the VM Kernel port be on its own VLAN, and the VMs be on VLANs different than the previous two.

With this configuration, the vmnic would be configured for 802.1q trunking as described further later in this document.

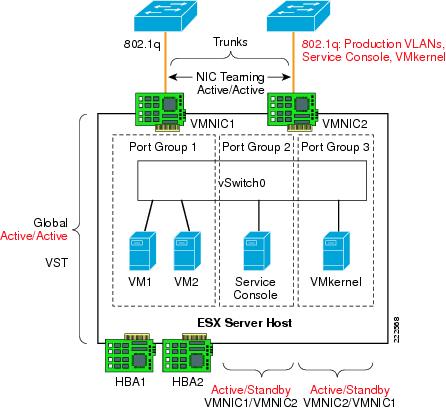

vSwitch NIC Teaming Configuration

NIC teaming is a vSwitch/vmnic configuration, not a vNIC configuration. In the context of VMware networking NIC teaming refers to configuring the vSwitch uplinks for redundancy. NICs can be attached to different Cisco Catalyst switches or to a single switch, although using separate switches is more common for reasons of redundancy. Figure 18 shows a basic configuration.

Figure 18 Active/Standby NIC Teaming

In this example, vSwitch0 is configured with vmnic0 as the active uplink and vmnic1 as the standby uplink. Both links carry VLAN1 and VLAN 2, VLAN1 carries the Service Console traffic, and VLAN 2 carries the VM1 and VM2 traffic.

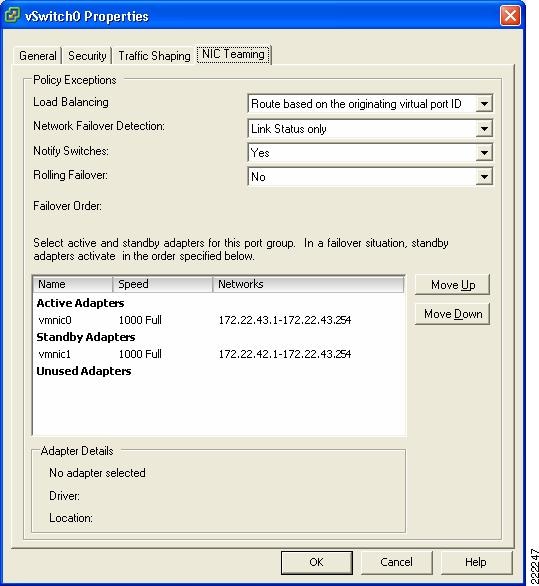

The configuration of vSwitch0 is accomplished by going to the ESX Host Network Configuration, selecting vSwitch0 Properties, and editing the vSwitch configuration as depicted in Figure 19.

Figure 19 vSwitch NIC Teaming Properties

This is a vSwitch-wide configuration:

•![]() This NIC teaming configuration applies to all Port Group/VLANs configured on the vSwitch.

This NIC teaming configuration applies to all Port Group/VLANs configured on the vSwitch.

•![]() The vmnics carry all the VLANs configured.

The vmnics carry all the VLANs configured.

•![]() If the active vmnic (vmnic0) fails, all traffic is assigned to the standby vmnic (vmnic1).

If the active vmnic (vmnic0) fails, all traffic is assigned to the standby vmnic (vmnic1).

•![]() It is possible to override the vSwitch-wide configuration from within each Port Group.

It is possible to override the vSwitch-wide configuration from within each Port Group.

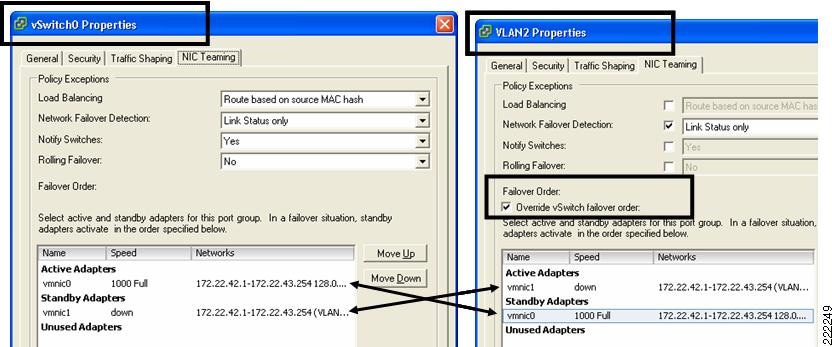

Port Group Configuration

One drawback of an active/standby NIC teaming configuration is that one of the NICs is unused, the standby vmnic1 in the previous example. Although NIC teaming is for the most part a vSwitch-wide configuration, it is also possible to override the global configuration at the Port Group level, thus achieving full NIC utilization with a per-Port Group active/standby configuration.

The following example clarifies. Figure 20 shows a logical view of an ESX Server with one vSwitch configured for teaming. Two ESX Server NICs are connected to the vSwitch as uplinks. VM5 and VM7 connect to vSwitch1 Port Group1 and are configured to use vmnic0 for traffic leaving the vSwitch. Vmnic1 is standby and takes over when vmnic0 fails. VM4 and VM6 connect to vSwitch1 Port Group 2 and use vmnic1 as the preferred uplink. Vmnic0 is standby and takes over when vmnic1 fails.

Note that VM4, VM5, VM6, and VM7 need not be in different VLANs. They can be part of the same VLAN, but simply on two different Port Groups for the purpose of spreading their traffic on two different uplinks.

Note ![]() If VM4, VM5, VM6, and VM7 are on the same VLAN and two different Port Groups, they are in the same broadcast domain.

If VM4, VM5, VM6, and VM7 are on the same VLAN and two different Port Groups, they are in the same broadcast domain.

Figure 20 vSwitch Port Groups NIC Teaming Configuration

Figure 21 shows how VirtualCenter makes this configuration possible. Under the ESX host Configuration, Networking you can select the vSwitch0 properties. Within the "Port tab" select the Port Group of interest (identified by the Network Label, which could be for example the VLAN number), then you can change the Port Group properties which include the NIC Teaming configuration. Figure 21 contrasts the vSwitch properties with the Port Group properties. Note the fact that the Port Group configuration can override the vSwitch-wide configuration and the reverse order of the vmnics in the two configurations.

Figure 21 vSwitch Port Groups NIC Teaming Overrides vSwitch NIC Teaming

This example shows the following:

•![]() Active/Standby NIC Teaming

Active/Standby NIC Teaming

•![]() The fact that different Port Groups on the same vSwitch can have different teaming/NIC Teaming configurations (also in the case when the VLAN is the same for both Port Groups)

The fact that different Port Groups on the same vSwitch can have different teaming/NIC Teaming configurations (also in the case when the VLAN is the same for both Port Groups)

Changing the NIC Teaming Configuration

All VMs that share the same NIC teaming policy are part of the same Port Group. From the vSwitch property, select the Port Group of interest (i.e., the Network Label) and the NIC Teaming properties. The load-balancing scrollbar allows choosing the port-based, MAC-based, or IP-based load balancing.

The bigger window lists the available vmnics, which you can move up or down, into the active list, the standby list, or the unused list. This gives you the flexibility of using the same available pool of vmnics from different Port Groups in different ways:

•![]() One Port Group (i.e., one set of VMs) may be using vmnic0 only and keep vmnic1 in standby.

One Port Group (i.e., one set of VMs) may be using vmnic0 only and keep vmnic1 in standby.

•![]() Another Port Group could be using both vmnic0 and vmnic1 with port-based load balancing.

Another Port Group could be using both vmnic0 and vmnic1 with port-based load balancing.

•![]() Yet another Port Group could be using both vmnic0 and vmnic1 in the reversed order to achieve better VM spread over the vmnics.

Yet another Port Group could be using both vmnic0 and vmnic1 in the reversed order to achieve better VM spread over the vmnics.

•![]() A Port Group could be using just vmnic0 and keep vmnic1 in the Unused list (which would isolate VMs when vmnic0 fails).

A Port Group could be using just vmnic0 and keep vmnic1 in the Unused list (which would isolate VMs when vmnic0 fails).

Note ![]() In order to make things simple, you can simply choose an active/active port-based load balancing configuration at the vSwitch level, and leave the other Port Groups NIC teaming configuration untouched.

In order to make things simple, you can simply choose an active/active port-based load balancing configuration at the vSwitch level, and leave the other Port Groups NIC teaming configuration untouched.

Figure 22 vSwitch NIC Teaming Options

ESX Internal Networking

Internal networks of VMs are sometimes referred to as vmnets or private networks. Internal networks are local to the ESX host without connectivity to the LAN switching environment. Vmnets use the virtual switch to link VMs internally to the ESX Server, and the configuration does not differ especially from the external networking configuration, except, that there is no vmnic assigned to an internal vSwitch. The system bus provides the transport and the CPU manages the traffic. VMnets are generally used in test and development environments.

Figure 23 shows a possible, although not very useful, use of internal networking design. In this example, VM2 and VM3 are members of vSwitch2. The Port Groups/VLANs on vSwitch2 are completely internal to the ESX Server. VM2 and VM3 do not communicate with the outside world.

Figure 23 ESX Server Private Virtual Switches

Routing and Bridging

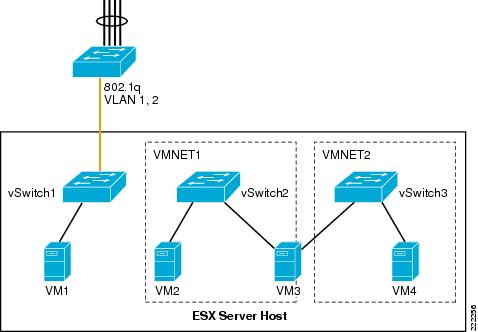

It is possible to create and interconnect multiple private networks by using a VM configured for routing or bridging as it is shown in Figure 24. In Figure 24, VMNET1 (vSwitch2) and VMNET2 (vSwitch3) are interconnected by VM3, which has two vNICs—one per VNET.

Figure 24 ESX Server Private Virtual Switches with Routing or Bridging

Use Case

Private vSwitches can be used for testing purposes but they may also be useful if you need to create a private communication channel among VMs. Figure 25 provides an example (which is not recommended).

In Figure 25, VM4 and VM6 have two vNICs. The public vNIC connects to the outside Cisco LAN switching network via vSwitch0. The private vNIC connects to a private vSwitch (vSwitch1). This allows the two VMs to exchange heartbeat or state information if necessary, locally on the server.

Figure 25 Private vSwitch used for Private Communication Among VMs

The configuration in Figure 25 appears in the VirtualCenter as in the Figure 26.

Figure 26 VirtualCenter View of Private Networks

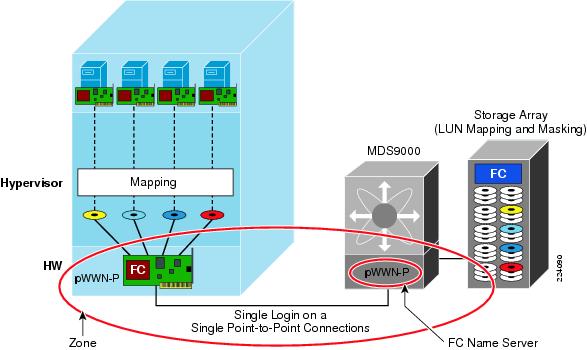

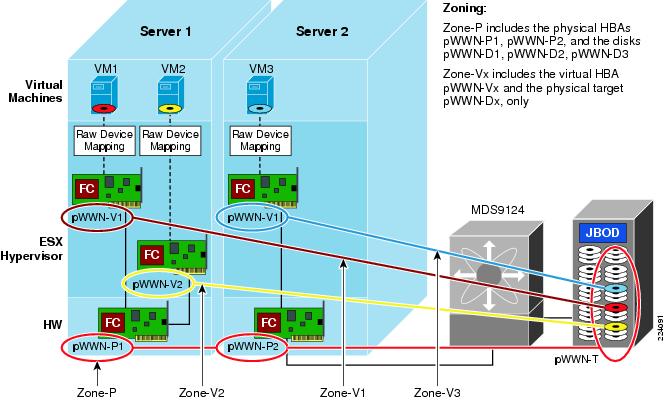

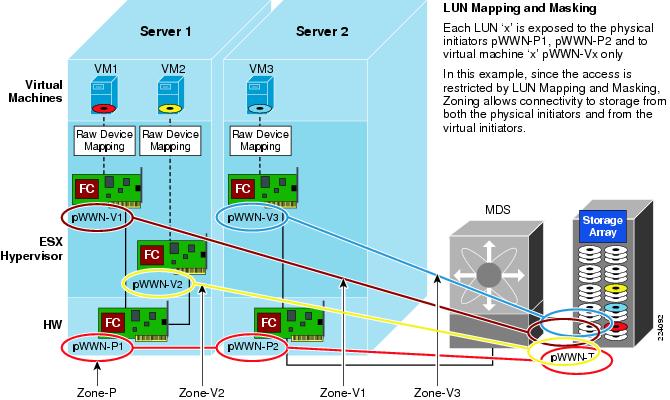

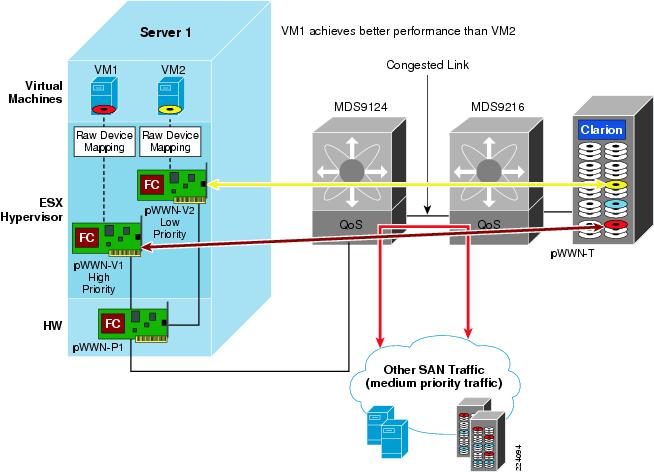

ESX Server Storage Networking

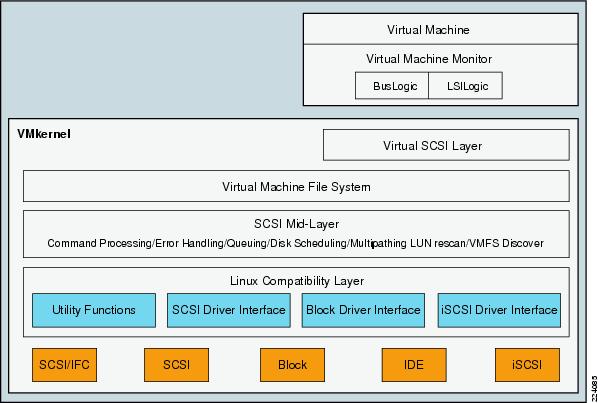

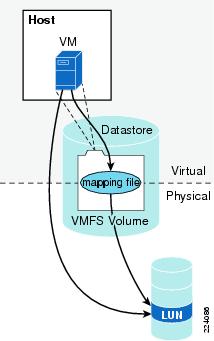

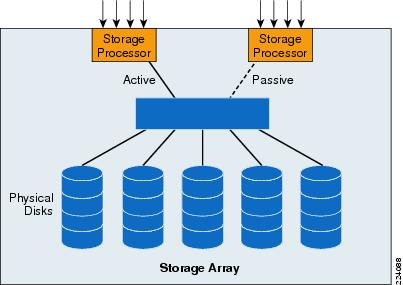

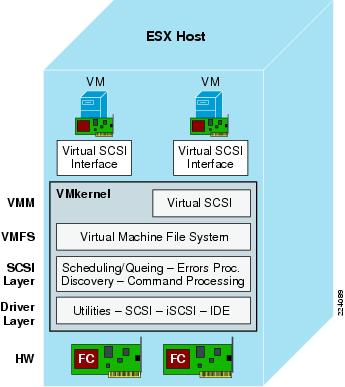

VMware Infrastructure Storage architecture (see Figure 27) provides a layer of abstraction that hides and manages the complexity of and differences between physical storage subsystems. To the applications and guest operating systems inside each virtual machine, storage is presented simply as SCSI disks connected to a virtual BusLogic or LSI SCSI HBA.

Figure 27 VMware Infrastructure Storage Architecture

The virtual SCSI disks inside the virtual machines are provisioned from datastore elements in the data center (see Figure 27). A datastore is like a storage appliance that serves up storage space for virtual disks inside the virtual machines, and stores the virtual machine definitions themselves. As shown in Figure 27, a virtual machine is stored as a set of files in its own directory in the datastore.

A virtual disk (vmdk) is a file that resides in a datastore that is managed by ESX. A datastore will reside on a VMFS volume for block-based storage or a mount-point for NFS storage. The VMFS volume is typically comprised of a single LUN, but can span several LUNs. A virtual disk can be easily manipulated (copied, moved, back-up, and so on) just like a file. For guest OS's that support hot-adds of new disks, a new virtual disk can be added without having to shutdown the VM.

The datastore provides a simple model to allocate storage space for the virtual machines without exposing them to the complexity of the variety of physical storage technologies available, such as:

•![]() FibreChannel SAN—The most common deployment option as it enables VMotion, ESX boot from SAN, support for raw device mapping, support high availability clusters (such as Microsoft MSCS). It supports the Virtual Machine File System (VMFS).

FibreChannel SAN—The most common deployment option as it enables VMotion, ESX boot from SAN, support for raw device mapping, support high availability clusters (such as Microsoft MSCS). It supports the Virtual Machine File System (VMFS).

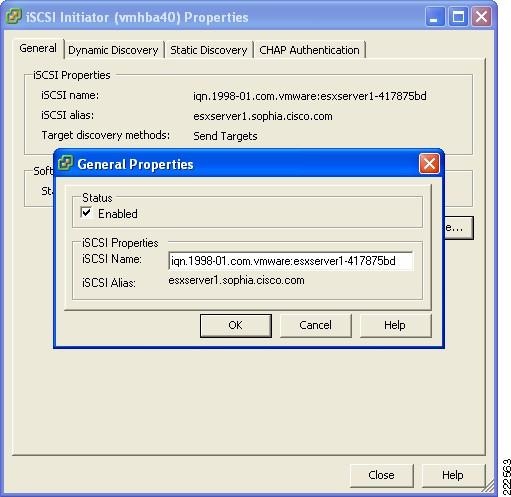

•![]() iSCSI SAN—When associated with hardware-based acceleration, iSCSI SAN enables functions similar to FibreChannel SAN: VMotion migration, ESX boot from SAN, support for raw device mapping. In the case of software-based iSCSI, booting from the SAN is not supported. It supports the VMFS.

iSCSI SAN—When associated with hardware-based acceleration, iSCSI SAN enables functions similar to FibreChannel SAN: VMotion migration, ESX boot from SAN, support for raw device mapping. In the case of software-based iSCSI, booting from the SAN is not supported. It supports the VMFS.

•![]() Direct Attached Storage—Not shared; therefore, not commonly used. Note that ESX Server 3.5 supports VMotion with swap files located on local (DAS) storage.

Direct Attached Storage—Not shared; therefore, not commonly used. Note that ESX Server 3.5 supports VMotion with swap files located on local (DAS) storage.

•![]() NAS—NFS-based storage does not support raw device mapping, nor high availability clusters, it does not use the VMFS, but it allows VMotion migration and booting from NFS.

NAS—NFS-based storage does not support raw device mapping, nor high availability clusters, it does not use the VMFS, but it allows VMotion migration and booting from NFS.

A datastore is physically just a VMFS volume or an NFS-mounted directory. Each datastore can span multiple physical storage subsystems.

As shown in Figure 27, a single VMFS volume can contain one or more smaller volumes from a direct-attached SCSI disk array on a physical server, a FibreChannel SAN disk farm, or iSCSI SAN disk farm. New volumes added to any of the physical storage subsystems or LUNs known to the ESX Server that are expanded within the storage subsystem will be discovered by the ESX Server upon issuing a rescan request through the Virtual Center management interface. They can be added to extend a previously created datastore without powering down physical servers or storage subsystems.

VMware ESX Server Storage Components

This section provides a more detailed technical description of internal ESX Server components and their operation. Figure 28 provides a more detailed view of the ESX Server architecture and specific components that perform VMware storage operations.

Figure 28 Storage Architecture Components

The key components shown in Figure 28 are the following:

•![]() Virtual Machine Monitor (VMM)

Virtual Machine Monitor (VMM)

•![]() Virtual SCSI Layer

Virtual SCSI Layer

•![]() VMFS

VMFS

•![]() SCSI Mid-Layer

SCSI Mid-Layer

•![]() Host Bus Adapter (HBA) Device Drivers

Host Bus Adapter (HBA) Device Drivers

Virtual Machine Monitor (VMM)

The VMM module's primary responsibility is to monitor a virtual machine's activities at all levels (CPU, memory, I/O, and other guest operating system functions and interactions with VMkernel). The VMM module contains a layer that emulates SCSI devices within a virtual machine. A virtual machine operating system does not have direct access to FibreChannel devices because VMware infrastructure virtualizes storage and presents only a SCSI interface to the operating system. Thus, from any type of virtual machine (regardless of operating system), applications only access storage subsystems only via a SCSI driver. Virtual machines can use either BusLogic or LSI Logic SCSI drivers. These SCSI drivers enable the use of virtual SCSI HBAs within a virtual machine.

Note ![]() Within a Windows virtual machine, under the Windows control panel display for Computer Management > Device Manager > SCSI and RAID Controllers, there are listings for BusLogic or LSI Logic drivers. BusLogic indicates that Mylex BusLogic BT-958 emulation is being used. BT-958 is a SCSI-3 protocol providing Ultra SCSI (Fast-40) transfer rates of 40MB per second. The driver emulation supports the capability of "SCSI Configured AutoMatically," also known as SCAM, which allows SCSI devices to be configured with an ID number automatically, so you do not have to assign IDs manually.

Within a Windows virtual machine, under the Windows control panel display for Computer Management > Device Manager > SCSI and RAID Controllers, there are listings for BusLogic or LSI Logic drivers. BusLogic indicates that Mylex BusLogic BT-958 emulation is being used. BT-958 is a SCSI-3 protocol providing Ultra SCSI (Fast-40) transfer rates of 40MB per second. The driver emulation supports the capability of "SCSI Configured AutoMatically," also known as SCAM, which allows SCSI devices to be configured with an ID number automatically, so you do not have to assign IDs manually.

Virtual SCSI HBAs

In an ESX Server environment, each virtual machine includes from one to four virtual SCSI HBAs. Virtual SCSI HBAs allow virtual machines access to logical SCSI devices, just as a physical HBAs allow access to physical storage devices. However, in contrast to a physical HBA, the virtual SCSI HBA does not allow storage administrators (such as SAN administrators) access to the physical machine.

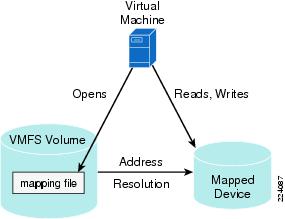

Virtual SCSI Layer

The virtual SCSI layer's primary responsibility is to manage SCSI commands and intercommunication between the VMM, the VMFS, and the SCSI mid-layer below. All SCSI commands from virtual machines must go through the virtual SCSI layer. Also, input/output (I/O) abort and reset operations are managed at this layer. From here, the virtual SCSI layer passes I/O or SCSI commands from virtual machines to lower layers, either via VMFS or device mapping (RDM), which supports two modes: passthrough and non-passthrough. In RDM passthrough mode, all SCSI commands are allowed to pass through without traps.

Virtual Machine File System (VMFS)

VMFS is a clustered file system that leverages shared storage to allow multiple physical servers to read and write to the same storage simultaneously. VMFS provides on-disk distributed locking to ensure that the same virtual machine is not powered on by multiple servers at the same time.

In a simple configuration, the virtual machines' disks are stored as files within a VMFS. When guest operating systems issue SCSI commands to their virtual disks, the virtualization layer translates these commands to VMFS file operations. For details about VMFS, refer to File System Formats.