
Catalyst 4500 Series Switch Software Configuration Guide, 15.0(2)SG Configuration Guide


Updated: April 28, 2011

Chapter: Configuring IP Multicast

- About IP Multicast

- Configuring IP Multicast Routing

- Monitoring and Maintaining IP Multicast Routing

- Configuration Examples

Configuring IP Multicast

This chapter describes IP multicast routing on the Catalyst 4500 series switch. It also provides procedures and examples to configure IP multicast routing.

Note: For more detailed information on IP Multicast, refer to this URL:


http://www.cisco.com/en/US/products/ps6552/products_ios_technology_home.html.

Note: For complete syntax and usage information for the switch commands used in this chapter, see the Cisco Catalyst 4500 Series Switch Command Reference and related publications at this location:


http://www.cisco.com/en/US/products/hw/switches/ps4324/index.html

If a command is not found in the Catalyst 4500 Series Switch Command Reference, it is documented in the larger Cisco IOS library. Refer to the Cisco IOS Command Reference and related publications at this location:

http://www.cisco.com/en/US/products/ps6350/index.html

About IP Multicast

Note: Controlling the Transmission Rate to a Multicast Group is not supported.


At one end of the IP communication spectrum is IP unicast, where a source IP host sends packets to a specific destination IP host. In IP unicast, the destination address in the IP packet is the address of a single, unique host in the IP network. These IP packets are forwarded across the network from the source to the destination host by routers. At each point on the path between source and destination, a router uses a unicast routing table to make unicast forwarding decisions, based on the IP destination address in the packet.

At the other end of the IP communication spectrum is IP broadcast, where a source host sends packets to all hosts on a network segment. The destination address of an IP broadcast packet has the host portion set to all ones and the network portion set to the address of the subnet. IP hosts, including routers, understand that packets with an IP broadcast destination address are addressed to all IP hosts on the subnet. Unless specifically configured otherwise, routers do not forward IP broadcast packets, so IP broadcast communication is normally limited to a local subnet.
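As a quick illustration of the rule above, the following minimal Python sketch (standard `ipaddress` module only) computes a subnet's broadcast address by keeping the network bits and setting every host bit to one. The function name `directed_broadcast` and the sample subnets are illustrative, not part of the switch software.

```python
import ipaddress


def directed_broadcast(subnet: str) -> ipaddress.IPv4Address:
    """Return the broadcast address of an IPv4 subnet.

    The network portion is kept and every bit of the host portion is set to one.
    """
    net = ipaddress.IPv4Network(subnet)
    # hostmask is the bitwise complement of the netmask, e.g. 0.0.0.255 for a /24.
    return ipaddress.IPv4Address(int(net.network_address) | int(net.hostmask))


if __name__ == "__main__":
    print(directed_broadcast("192.168.10.0/24"))  # 192.168.10.255
    print(directed_broadcast("10.0.0.0/8"))       # 10.255.255.255
```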

IP multicasting falls between IP unicast and IP broadcast communication. IP multicast communication enables a host to send IP packets to a group of hosts anywhere within the IP network. To send information to a specific group, IP multicast communication uses a special form of IP destination address called an IP multicast group address. The IP multicast group address is specified in the IP destination address field of the packet.
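Because the three delivery models differ in their destination addresses, a destination can be classified from the address alone. The sketch below is a simplification that assumes IPv4 and a single known local subnet (a hypothetical input used only to recognize that subnet's broadcast address). It relies on the fact that IPv4 multicast group addresses fall in 224.0.0.0/4, that is, 224.0.0.0 through 239.255.255.255.

```python
import ipaddress

# IPv4 multicast group addresses: 224.0.0.0 through 239.255.255.255.
MULTICAST_RANGE = ipaddress.IPv4Network("224.0.0.0/4")


def classify_destination(dst: str, local_subnet: str) -> str:
    """Return 'multicast', 'broadcast', or 'unicast' for an IPv4 destination address."""
    addr = ipaddress.IPv4Address(dst)
    if addr in MULTICAST_RANGE:
        return "multicast"  # delivered to the members of a group
    if addr == ipaddress.IPv4Network(local_subnet).broadcast_address:
        return "broadcast"  # delivered to all hosts on the subnet
    return "unicast"        # delivered to one specific host


if __name__ == "__main__":
    for dst in ("224.1.2.3", "10.1.1.255", "10.1.1.20"):
        print(dst, classify_destination(dst, "10.1.1.0/24"))
```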

To multicast IP information, Layer 3 switches and routers must forward an incoming IP packet to all output interfaces that lead to members of the IP multicast group. In the multicasting process on the Catalyst 4500 series switch, a packet is replicated in the Integrated Switching Engine, forwarded to the appropriate output interfaces, and sent to each member of the multicast group.

IP multicasting is often equated with video conferencing. Although video conferencing is often the first application in a network to use IP multicast, video is only one of many IP multicast applications that can add value to a company’s business model. Other IP multicast applications with potential for improving productivity include multimedia conferencing, data replication, real-time data multicasts, and simulation applications.

This section contains the following subsections:

- IP Multicast Protocols

- IP Multicast Implementation on the Catalyst 4500 Series Switch

- Restrictions on Using Bidirectional PIM

IP Multicast Protocols

The Catalyst 4500 series switch primarily uses these protocols to implement IP multicast routing:

- Internet Group Management Protocol (IGMP)

- Protocol Independent Multicast (PIM)

- IGMP snooping and Cisco Group Management Protocol

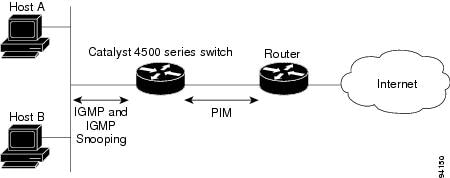

Figure 1-1 shows where these protocols operate within the IP multicast environment.

Figure 1-1 IP Multicast Routing Protocols

Internet Group Management Protocol

IGMP messages are used by IP multicast hosts to send their local Layer 3 switch or router a request to join a specific multicast group and begin receiving multicast traffic. With extensions introduced in IGMPv2, IP hosts can also send a request to a Layer 3 switch or router to leave an IP multicast group and stop receiving the multicast group traffic.

Using the information obtained through IGMP, a Layer 3 switch or router maintains a list of multicast group memberships on a per-interface basis. A multicast group membership is active on an interface if at least one host on the interface sends an IGMP request to receive multicast group traffic.

Protocol-Independent Multicast

PIM is protocol independent because it can leverage whichever unicast routing protocol is used to populate the unicast routing table, including EIGRP, OSPF, BGP, or static routes, to support IP multicast. PIM also uses the unicast routing table to perform the reverse path forwarding (RPF) check instead of building a completely independent multicast routing table. Unlike other routing protocols, PIM does not send and receive multicast routing updates between routers.
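To make the RPF check concrete, here is a minimal Python sketch under stated assumptions: the routing table is a hypothetical in-memory dictionary of prefixes and interface names, a route lookup is a plain longest-prefix match, and the check is made against the packet's source address (as for (S,G) traffic). A multicast packet passes only if it arrived on the interface that the unicast table would use to reach its source.

```python
import ipaddress
from typing import Dict

# Hypothetical unicast routing table: prefix -> interface used to reach that prefix.
UNICAST_ROUTES: Dict[ipaddress.IPv4Network, str] = {
    ipaddress.IPv4Network("0.0.0.0/0"): "Vlan99",
    ipaddress.IPv4Network("10.0.0.0/8"): "Vlan10",
    ipaddress.IPv4Network("10.20.0.0/16"): "Vlan20",
}


def rpf_interface(source: str) -> str:
    """Interface the unicast table would use to reach `source` (longest-prefix match)."""
    addr = ipaddress.IPv4Address(source)
    matches = [net for net in UNICAST_ROUTES if addr in net]  # the default route always matches
    best = max(matches, key=lambda net: net.prefixlen)
    return UNICAST_ROUTES[best]


def rpf_check(source: str, arrival_interface: str) -> bool:
    """Pass only if the packet arrived on the interface that leads back to its source."""
    return rpf_interface(source) == arrival_interface
```

No separate multicast routing table is consulted; the decision reuses whatever the unicast protocols installed, which is the point of PIM's protocol independence.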

Note: Catalyst 4900M, Catalyst 4948E, Supervisor Engine 6-E, and Supervisor Engine 6L-E do not increment counters for (*, G) in PIM Dense Mode. (*, G) counters are incremented only when running Bidirectional PIM Mode.


PIM Dense Mode

PIM Dense Mode (PIM-DM) uses a push model to flood multicast traffic to every corner of the network. PIM-DM is intended for networks in which most LANs need to receive the multicast, such as LAN TV and corporate or financial information broadcasts. It can be an efficient delivery mechanism if active receivers exist on every subnet in the network.

For more detailed information on PIM Dense Mode, refer to this URL:

http://www.cisco.com/en/US/docs/ios-xml/ios/ipmulti_optim/configuration/12-2sx/imc_pim_dense_rfrsh.html

PIM Sparse Mode

PIM Sparse Mode (PIM-SM) uses a pull model to deliver multicast traffic. Traffic is forwarded only to networks with active receivers that have explicitly requested the data. PIM-SM is intended for networks with several different multicasts, such as desktop video conferencing and collaborative computing, that go to a small number of receivers and are typically in progress simultaneously.

Bidirectional PIM Mode

In bidirectional PIM (Bidir-PIM) mode, traffic is routed only along a bidirectional shared tree that is rooted at the rendezvous point (RP) for the group. The IP address of the RP functions as a key enabling all routers to establish a loop-free spanning tree topology rooted in that IP address.

Bidir-PIM is intended for many-to-many applications within individual PIM domains. Multicast groups in bidirectional mode can scale to an arbitrary number of sources without incurring overhead due to the number of sources.

For more detailed information on Bidirectional Mode, refer to this URL:

http://www.cisco.com/en/US/prod/collateral/iosswrel/ps6537/ps6552/ps6592/prod_white_paper0900aecd80310db2.pdf.

Rendezvous Point (RP)

If you configure PIM to operate in sparse mode, you must also choose one or more routers to be rendezvous points (RPs). Senders to a multicast group use RPs to announce their presence. Receivers of multicast packets use RPs to learn about new senders. You can configure Cisco IOS software so that packets for a single multicast group can use one or more RPs.

The RP address is used by first-hop routers to send PIM register messages on behalf of a host sending a packet to the group. The RP address is also used by last-hop routers to send PIM join and prune messages to the RP to inform it about group membership. You must configure the RP address on all routers (including the RP router).

A PIM router can be the RP for more than one group. Within a PIM domain, only one RP address can be used at a time for a given group. The conditions specified in an access list determine the groups for which the router is the RP, so different groups can have different RPs.
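The group-to-RP relationship can be pictured as a lookup from group address to RP address. In this minimal Python sketch the group ranges stand in for access lists; the ranges, RP addresses, and function name are hypothetical, and the ranges are deliberately non-overlapping so that each group has at most one RP, as described above.

```python
import ipaddress
from typing import Optional

# Hypothetical group ranges (standing in for access lists) mapped to RP addresses.
# The ranges do not overlap, so each group has at most one RP.
RP_MAPPINGS = [
    (ipaddress.IPv4Network("239.1.0.0/16"), "192.0.2.1"),
    (ipaddress.IPv4Network("239.2.0.0/16"), "192.0.2.2"),
]


def rp_for_group(group: str) -> Optional[str]:
    """Return the RP address for a multicast group, or None if no range matches."""
    addr = ipaddress.IPv4Address(group)
    for group_range, rp in RP_MAPPINGS:
        if addr in group_range:
            return rp
    return None
```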

IGMP Snooping

IGMP snooping is used for multicasting in a Layer 2 switching environment. With IGMP snooping, the switch examines Layer 3 information in the IGMP packets in transit between hosts and a router. When the switch receives an IGMP Host Report from a host for a particular multicast group, it adds the host's port number to the associated multicast table entry. When the switch receives an IGMP Leave Group message from a host, it removes the host's port from the table entry.
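The table updates described above can be modeled with a small Python class. This is a simplified sketch, not the switch's data structure: the class and method names are hypothetical, and it omits timers, querier behavior, and multicast router ports. It removes a port on a Leave exactly as the paragraph describes.

```python
from collections import defaultdict


class SnoopingTable:
    """Layer 2 multicast table maintained from snooped IGMP messages (simplified)."""

    def __init__(self) -> None:
        # (vlan, group) -> switch ports that currently have a member of the group
        self._ports = defaultdict(set)

    def host_report(self, vlan: int, group: str, port: str) -> None:
        """IGMP Host Report seen on `port`: add the port to the group entry."""
        self._ports[(vlan, group)].add(port)

    def leave_group(self, vlan: int, group: str, port: str) -> None:
        """IGMP Leave Group seen on `port`: remove the port from the group entry."""
        ports = self._ports.get((vlan, group))
        if ports is not None:
            ports.discard(port)
            if not ports:
                del self._ports[(vlan, group)]

    def ports_for(self, vlan: int, group: str) -> set:
        """Ports that should receive traffic for `group` in `vlan`."""
        return set(self._ports.get((vlan, group), set()))
```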

Because IGMP control messages are transmitted as multicast packets, they are indistinguishable from multicast data if only the Layer 2 header is examined. A switch running IGMP snooping examines every multicast data packet to determine whether it contains any pertinent IGMP control information. If IGMP snooping is implemented on a low-end switch with a slow CPU, performance could be severely degraded when data is transmitted at high rates. On the Catalyst 4500 series switches, IGMP snooping is implemented in the forwarding ASIC, so it does not impact the forwarding rate.

IP Multicast Implementation on the Catalyst 4500 Series Switch

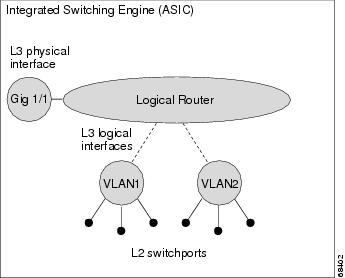

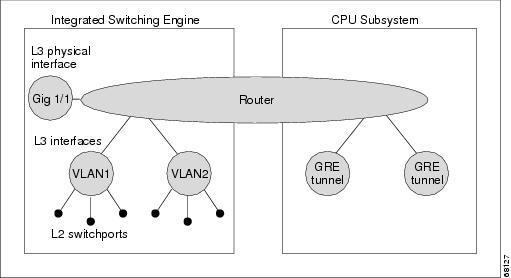

The Catalyst 4500 series switch supports an ASIC-based integrated switching engine that provides Ethernet bridging at Layer 2 and IP routing at Layer 3. Because the ASIC is specifically designed to forward packets, the integrated switching engine hardware provides very high performance with ACLs and QoS enabled. Hardware forwarding at wire speed is significantly faster than forwarding by the CPU subsystem software, which is designed to handle exception packets.

The integrated switching engine hardware supports interfaces for inter-VLAN routing and switch ports for Layer 2 bridging. It also provides a physical Layer 3 interface that can be configured to connect with a host, a switch, or a router.

Figure 1-2 shows a logical view of Layer 2 and Layer 3 forwarding in the integrated switching engine hardware.

Figure 1-2 Logical View of Layer 2 and Layer 3 Forwarding in Hardware

CEF, MFIB, and Layer 2 Forwarding

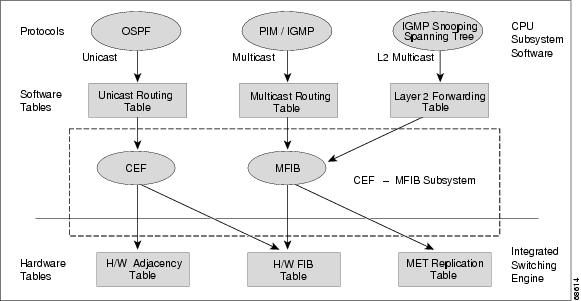

The implementation of IP multicast on the Catalyst 4500 series switch is an extension of centralized Cisco Express Forwarding (CEF). CEF extracts information from the unicast routing table, which is created by unicast routing protocols such as BGP, OSPF, and EIGRP, and loads it into the hardware Forwarding Information Base (FIB). With the unicast routes in the FIB, when a route is changed in the upper-layer routing table, only one route needs to be changed in the hardware routing state. To forward unicast packets in hardware, the integrated switching engine looks up source and destination routes in ternary content addressable memory (TCAM), takes the adjacency index from the hardware FIB, and gets the Layer 2 rewrite information and next-hop address from the hardware adjacency table.
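The two-step lookup (FIB entry, then adjacency) can be sketched in Python. This is an illustrative model only: the hardware performs the lookup in TCAM, whereas this sketch substitutes a linear longest-prefix scan, and the table contents, field names, and the `lookup` function are hypothetical. The MAC addresses come from the documentation range.

```python
import ipaddress
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Adjacency:
    next_hop: str
    rewrite_mac: str  # Layer 2 destination written into the outgoing frame
    interface: str


# Hypothetical adjacency table, indexed by adjacency index.
ADJACENCIES: Dict[int, Adjacency] = {
    1: Adjacency("10.1.1.2", "00:00:5e:00:53:01", "Vlan10"),
    2: Adjacency("10.2.2.2", "00:00:5e:00:53:02", "Vlan20"),
}

# Hypothetical FIB: each prefix stores only an adjacency index, so a route change
# updates a single entry.
FIB: Dict[ipaddress.IPv4Network, int] = {
    ipaddress.IPv4Network("172.16.0.0/16"): 1,
    ipaddress.IPv4Network("172.16.5.0/24"): 2,
}


def lookup(dst: str) -> Optional[Adjacency]:
    """Longest-prefix match in the FIB, then fetch the Layer 2 rewrite information."""
    addr = ipaddress.IPv4Address(dst)
    matches = [net for net in FIB if addr in net]
    if not matches:
        return None  # no route
    best = max(matches, key=lambda net: net.prefixlen)
    return ADJACENCIES[FIB[best]]
```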

The new Multicast Forwarding Information Base (MFIB) subsystem is the multicast analog of the unicast CEF. The MFIB subsystem extracts the multicast routes that PIM and IGMP create and refines them into a protocol-independent format for forwarding in hardware. The MFIB subsystem removes the protocol-specific information and leaves only the essential forwarding information. Each entry in the MFIB table consists of an (S,G) or (*,G) route, an input RPF VLAN, and a list of Layer 3 output interfaces. The MFIB subsystem, together with platform-dependent management software, loads this multicast routing information into the hardware FIB and hardware multicast expansion table (MET).
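The shape of an MFIB entry described above can be captured in a small Python data class. This is a sketch, not the platform's internal format: the field names, the `to_mfib` helper, and the keys of the input record are hypothetical. The helper keeps only the forwarding fields and ignores anything protocol specific, mirroring how the MFIB discards protocol state.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MfibEntry:
    """One protocol-independent MFIB entry (illustrative field names)."""
    group: str
    source: Optional[str]  # None represents a (*,G) route
    rpf_vlan: int          # input RPF VLAN
    output_vlans: List[int] = field(default_factory=list)  # Layer 3 output interfaces

    def route(self) -> str:
        return f"({self.source or '*'},{self.group})"


def to_mfib(mroute: dict) -> MfibEntry:
    """Keep only forwarding information from a hypothetical protocol route record.

    Keys such as 'protocol', 'rp', or 'expires' are ignored.
    """
    return MfibEntry(
        group=mroute["group"],
        source=mroute.get("source"),
        rpf_vlan=mroute["rpf_vlan"],
        output_vlans=sorted(mroute["oifs"]),
    )
```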

Note: On the Supervisor Engine 6-E and 6L-E, MET has been replaced by the RET (Replica Expansion Table).


The Catalyst 4500 series switch performs Layer 3 routing and Layer 2 bridging at the same time. There can be multiple Layer 2 switch ports on any VLAN interface. To determine the set of output switch ports on which to forward a multicast packet, the Supervisor Engine III combines Layer 3 MFIB information with Layer 2 forwarding information and stores it in the hardware MET for packet replication.

Figure 1-3 shows a functional overview of how the Catalyst 4500 series switch combines unicast routing, multicast routing, and Layer 2 bridging information to forward in hardware.

Figure 1-3 Combining CEF, MFIB, and Layer 2 Forwarding Information in Hardware

Like the CEF unicast routes, the MFIB routes are Layer 3 and must be merged with the appropriate Layer 2 information. For example, consider the MFIB route (*,224.1.2.3), whose RPF interface is VLAN 3 and whose output interfaces are VLAN 1 and VLAN 2.

The route (*,224.1.2.3) is loaded in the hardware FIB table and the list of output interfaces is loaded into the MET. A pointer to the list of output interfaces, the MET index, and the RPF interface are also loaded in the hardware FIB with the (*,224.1.2.3) route. With this information loaded in hardware, merging of the Layer 2 information can begin. For the output interfaces on VLAN 1, the integrated switching engine must send the packet to all switch ports in VLAN 1 that are in the spanning tree forwarding state. The same process applies to VLAN 2; the Layer 2 forwarding table is used to determine the set of switch ports in VLAN 2.

When the hardware routes a packet, in addition to sending it to all of the switch ports on all output interfaces, the hardware also sends the packet to all switch ports (other than the one it arrived on) in the input VLAN. For example, assume that VLAN 3 has two switch ports in it, Gig 3/1 and Gig 3/2. If a host on Gig 3/1 sends a multicast packet, the host on Gig 3/2 might also need to receive the packet. To send a multicast packet to the host on Gig 3/2, all of the switch ports in the ingress VLAN must be added to the port set that is loaded in the MET.

If VLAN 1 contains 1/1 and 1/2, VLAN 2 contains 2/1 and 2/2, and VLAN 3 contains 3/1 and 3/2, the MET chain for this route would contain these switch ports: (1/1, 1/2, 2/1, 2/2, 3/1, and 3/2).

If IGMP snooping is on, the packet should not be forwarded to all switch ports on an output VLAN. It should be forwarded only to the switch ports where IGMP snooping has determined that there is either a group member or a router. For example, if VLAN 1 had IGMP snooping enabled, and IGMP snooping determined that only port 1/2 had a group member on it, then the MET chain would contain these switch ports: (1/2, 2/1, 2/2, 3/1, and 3/2).
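The merge of Layer 3 and Layer 2 information in the VLAN 1, 2, and 3 example can be sketched in Python. This is an illustrative model, not the switch's implementation: the function names, the ordering of ports in the chain, and the choice to apply IGMP snooping only to output VLANs (as in the example above) are assumptions. The arrival port is excluded only when replicas are produced, which is why both 3/1 and 3/2 appear in the chain itself.

```python
from typing import Dict, Iterable, List, Optional, Set


def met_chain(
    rpf_vlan: int,
    output_vlans: Iterable[int],
    vlan_ports: Dict[int, List[str]],
    snooped_ports: Optional[Dict[int, Set[str]]] = None,
) -> List[str]:
    """Merge an MFIB route with Layer 2 state into the list of MET switch ports.

    vlan_ports:    VLAN -> switch ports in the spanning tree forwarding state.
    snooped_ports: VLAN -> ports where IGMP snooping found a group member or router,
                   present only for output VLANs that have IGMP snooping enabled.
    """
    snooped_ports = snooped_ports or {}
    chain: List[str] = []
    for vlan in sorted(set(output_vlans) | {rpf_vlan}):
        ports = vlan_ports[vlan]
        if vlan != rpf_vlan and vlan in snooped_ports:
            ports = [p for p in ports if p in snooped_ports[vlan]]
        chain.extend(ports)
    return chain


def replicas(chain: List[str], ingress_port: str) -> List[str]:
    """Ports that actually receive a copy: never send back out the arrival port."""
    return [p for p in chain if p != ingress_port]


if __name__ == "__main__":
    ports = {1: ["1/1", "1/2"], 2: ["2/1", "2/2"], 3: ["3/1", "3/2"]}
    print(met_chain(3, [1, 2], ports))                   # ['1/1', '1/2', '2/1', '2/2', '3/1', '3/2']
    print(met_chain(3, [1, 2], ports, {1: {"1/2"}}))     # ['1/2', '2/1', '2/2', '3/1', '3/2']
    print(replicas(met_chain(3, [1, 2], ports), "3/1"))  # ['1/1', '1/2', '2/1', '2/2', '3/2']
```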

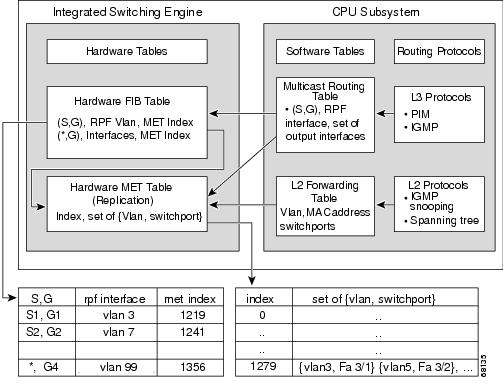

IP Multicast Tables

Figure 1-4 shows some key data structures that the Catalyst 4500 series switch uses to forward IP multicast packets in hardware.

Figure 1-4 IP Multicast Tables and Protocols

The integrated switching engine maintains the hardware FIB table to identify individual IP multicast routes. Each entry consists of a destination group IP address and an optional source IP address. Multicast traffic flows primarily on two types of routes: (S,G) and (*,G). The (S,G) routes flow from a source to a group based on the IP address of the multicast source and the IP address of the multicast group destination. Traffic on a (*,G) route flows from the PIM RP to all receivers of group G. Only sparse-mode groups use (*,G) routes. The integrated switching engine hardware contains space for a total of 128,000 routes, which are shared by unicast routes, multicast routes, and multicast fast-drop entries.

Output interface lists are stored in the multicast expansion table (MET). The MET has room for up to 32,000 output interface lists. The MET resources are shared by both Layer 3 multicast routes and Layer 2 multicast entries. The actual number of output interface lists available in hardware depends on the specific configuration. If the total number of multicast routes exceeds 32,000, multicast packets might not be switched by the integrated switching engine; instead, they are forwarded by the CPU subsystem at much slower speeds.

Note: For RET, a maximum of 102 K entries is supported (32 K used for floodsets, 70 K used for multicast entries).


Note: Partial routing is not supported on Catalyst 4900M, Catalyst 4948E, Supervisor Engine 6-E, and Supervisor Engine 6L-E; only hardware and software routing are supported.


Hardware and Software Forwarding

The integrated switching engine forwards the majority of packets in hardware at very high rates. The CPU subsystem forwards exception packets in software. Statistical reports should show that the integrated switching engine is forwarding the vast majority of packets in hardware.

Figure 1-5 shows a logical view of the hardware and software forwarding components.

Figure 1-5 Hardware and Software Forwarding Components

In the normal mode of operation, the integrated switching engine performs inter-VLAN routing in hardware. The CPU subsystem supports generic routing encapsulation (GRE) tunnels for forwarding in software.

Replication is a particular type of forwarding where, instead of sending out one copy of the packet, the packet is replicated and multiple copies of the packet are sent out. At Layer 3, replication occurs only for multicast packets; unicast packets are never replicated to multiple Layer 3 interfaces. In IP multicasting, for each incoming IP multicast packet that is received, many replicas of the packet are sent out.

IP multicast packets can be transmitted on the following types of routes:

- Hardware routes occur when the integrated switching engine hardware forwards all replicas of a packet.

- Software routes occur when the CPU subsystem software forwards all replicas of a packet.

- Partial routes occur when the integrated switching engine forwards some of the replicas in hardware and the CPU subsystem forwards some of the replicas in software.
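The three route types depend only on where each replica of a packet is forwarded, which this small Python sketch makes explicit. The enum, the function name, and the 'hw'/'sw' markers are hypothetical labels for the two forwarding paths. On the platforms listed in the earlier note that do not support partial routing, the PARTIAL case does not arise.

```python
from enum import Enum
from typing import Iterable


class RouteType(Enum):
    HARDWARE = "hardware"
    SOFTWARE = "software"
    PARTIAL = "partial"


def classify_route(replica_paths: Iterable[str]) -> RouteType:
    """Classify a route from where each replica of a packet is forwarded.

    Each item is 'hw' (integrated switching engine) or 'sw' (CPU subsystem).
    """
    paths = set(replica_paths)
    if not paths or not paths <= {"hw", "sw"}:
        raise ValueError("expected one or more 'hw'/'sw' entries")
    if paths == {"hw"}:
        return RouteType.HARDWARE
    if paths == {"sw"}:
        return RouteType.SOFTWARE
    return RouteType.PARTIAL  # some replicas in hardware, some in software
```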

Partial Routes

Note: The conditions listed below cause the replicas to be forwarded by the CPU subsystem software, but the performance of the replicas that are forwarded in hardware is not affected.


The following conditions cause some replicas of a packet for a route to be forwarded by the CPU subsystem:

Software Routes

Note: If any one of the following conditions is configured on the RPF interface or the output interface, all replication of the output is performed in software.


The following conditions cause all replicas of a packet for a route to be forwarded by the CPU subsystem software:

Non-Reverse Path Forwarding Traffic

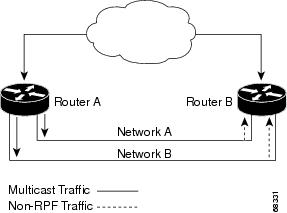

Traffic that fails a Reverse Path Forwarding (RPF) check is called non-RPF traffic. The integrated switching engine handles non-RPF traffic either by filtering (persistently dropping) it or by rate limiting it.

In a redundant configuration where multiple Layer 3 switches or routers connect to the same LAN segment, only one device forwards the multicast traffic from the source to the receivers on the outgoing interfaces. Figure 1-6 shows how non-RPF traffic can occur in a common network configuration.

Figure 1-6 Redundant Multicast Router Configuration in a Stub Network

In this kind of topology, only Router A, the PIM designated router (PIM DR), forwards data to the common VLAN. Router B receives the forwarded multicast traffic, but must drop this traffic because it has arrived on the wrong interface and fails the RPF check. Traffic that fails the RPF check is called non-RPF traffic.

Multicast Fast Drop

In IP multicast protocols, such as PIM-SM and PIM-DM, every (S,G) or (*,G) route has an incoming interface associated with it. This interface is referred to as the reverse path forwarding interface. In some cases, when a packet arrives on an interface other than the expected RPF interface, the packet must be forwarded to the CPU subsystem software to allow PIM to perform special protocol processing on the packet. One example of this special protocol processing that PIM performs is the PIM Assert protocol.

By default, the integrated switching engine hardware sends all packets that arrive on a non-RPF interface to the CPU subsystem software. However, processing in software is not necessary in many cases, because these non-RPF packets are often not needed by the multicast routing protocols. The problem is that if no action is taken, the non-RPF packets that are sent to the software can overwhelm the CPU.

To prevent this situation from happening, the CPU subsystem software loads fast-drop entries in the hardware when it receives an RPF failed packet that is not needed by the PIM protocols running on the switch. A fast-drop entry is keyed by (S,G, incoming interface). Any packet matching a fast-drop entry is bridged in the ingress VLAN, but is not sent to the software, so the CPU subsystem software is not overloaded by processing these RPF failures unnecessarily.

Protocol events, such as a link going down or a change in the unicast routing table, can impact the set of packets that can safely be fast dropped. A packet that was correctly fast dropped before might, after a topology change, need to be forwarded to the CPU subsystem software so that PIM can process it. The CPU subsystem software handles flushing fast-drop entries in response to protocol events so that the PIM code in IOS can process all the necessary RPF failures.

The use of fast-drop entries in the hardware is critical in some common topologies because these topologies can produce persistent RPF failures. Without the fast-drop entries, the CPU can be overwhelmed by RPF-failed packets that it does not need to process.
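The fast-drop behavior described in this section can be modeled as a set of (S, G, incoming interface) keys. In this Python sketch the class and method names are hypothetical, and flushing every entry on a protocol event is a simplifying assumption; the real software may flush more selectively.

```python
from typing import Set, Tuple

Key = Tuple[str, str, str]  # (source, group, incoming interface)


class FastDropTable:
    """Model of fast-drop entries keyed by (S, G, incoming interface)."""

    def __init__(self) -> None:
        self._entries: Set[Key] = set()

    def sends_to_cpu(self, source: str, group: str, iif: str) -> bool:
        """Hardware decision for a packet that failed the RPF check.

        False means the packet matches a fast-drop entry: it is still bridged in the
        ingress VLAN but is not sent to the CPU.
        """
        return (source, group, iif) not in self._entries

    def cpu_handles_rpf_failure(self, source: str, group: str, iif: str, needed_by_pim: bool) -> None:
        """Software reaction to an RPF-failed packet that reached the CPU."""
        if not needed_by_pim:
            self._entries.add((source, group, iif))

    def flush(self) -> None:
        """Protocol event (link down, unicast route change): let PIM see RPF failures again."""
        self._entries.clear()
```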

Multicast Forwarding Information Base

The Multicast Forwarding Information Base (MFIB) subsystem supports IP multicast routing in the integrated switching engine hardware on the Catalyst 4500 series switch. The MFIB logically resides between the IP multicast routing protocols in the CPU subsystem software (PIM, IGMP, MSDP, MBGP, and DVMRP) and the platform-specific code that manages IP multicast routing in hardware. The MFIB translates the routing table information created by the multicast routing protocols into a simplified format that can be efficiently processed and used for forwarding by the Integrated Switching Engine hardware.

To display the information in the multicast routing table, use the show ip mroute command. To display the MFIB table information, use the show ip mfib command.

The MFIB table contains a set of IP multicast routes. IP multicast routes include (S,G) and (*,G). Each route in the MFIB table can have one or more optional flags associated with it. The route flags indicate how a packet that matches a route should be forwarded. For example, the Internal Copy (IC) flag on an MFIB route indicates that a process on the switch needs to receive a copy of the packet. The following flags can be associated with MFIB routes:

- Internal Copy (IC) flag: Set on a route when a process on the router needs to receive a copy of all packets matching the specified route.

- Signaling (S) flag: Set on a route when a process needs to be notified when a packet matching the route is received; the expected behavior is that the protocol code updates the MFIB state in response to receiving a packet on a signaling interface.

- Connected (C) flag: When set on an MFIB route, has the same meaning as the Signaling (S) flag, except that the C flag indicates that only packets sent by directly connected hosts to the route should be signaled to a protocol process.

A route can also have a set of optional flags associated with one or more interfaces. For example, an (S,G) route with the flags on VLAN 1 indicates how packets arriving on VLAN 1 should be handled and whether packets matching the route should be forwarded onto VLAN 1. The per-interface flags supported in the MFIB include the following:

- Accepting (A): Set on the interface that is known in multicast routing as the RPF interface. A packet that arrives on an interface marked as Accepting (A) is forwarded to all Forwarding (F) interfaces.

- Forwarding (F): Used in conjunction with the Accepting (A) flag as described above. The set of Forwarding interfaces forms what is often referred to as the multicast “olist” or output interface list.

- Signaling (S): Set on an interface when a multicast routing protocol process in Cisco IOS needs to be notified of packets arriving on that interface.
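How the per-interface A, F, and S flags combine can be sketched in Python. This covers only the interface flags listed above; the route-level IC and C flags and Layer 2 bridging in the ingress VLAN are left out. The enum, the `handle_packet` function, and the interface names are hypothetical.

```python
from enum import Flag
from typing import Dict, List, Tuple


class IfFlag(Flag):
    NONE = 0
    A = 1  # Accepting: the RPF interface
    F = 2  # Forwarding: member of the output interface list ("olist")
    S = 4  # Signaling: notify a protocol process when a packet arrives here


def handle_packet(if_flags: Dict[str, IfFlag], arrival: str) -> Tuple[List[str], bool]:
    """Return (interfaces to forward on, whether to signal a protocol process)."""
    flags = if_flags.get(arrival, IfFlag.NONE)
    signal = bool(flags & IfFlag.S)
    if flags & IfFlag.A:
        outputs = sorted(i for i, f in if_flags.items() if f & IfFlag.F and i != arrival)
    else:
        outputs = []  # not the accepting (RPF) interface: do not forward
    return outputs, signal


if __name__ == "__main__":
    route = {"Vlan3": IfFlag.A, "Vlan1": IfFlag.F, "Vlan2": IfFlag.F, "Vlan5": IfFlag.S}
    print(handle_packet(route, "Vlan3"))  # (['Vlan1', 'Vlan2'], False)
    print(handle_packet(route, "Vlan5"))  # ([], True)
```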

Note: When PIM-SM routing is in use, the MFIB route might include an interface as in this example:


PimTunnel [1.2.3.4].

It is a virtual interface that the MFIB subsystem creates to indicate that packets are being tunneled to the specified destination address. A PimTunnel interface cannot be displayed with the normal show interface command.

S/M, 224/4

An (S/M, 224/4) entry is created in the MFIB for every multicast-enabled interface. This entry ensures that all packets sent by directly connected neighbors can be register-encapsulated to the PIM-SM RP. Typically, only a small number of packets are forwarded using the (S/M,224/4) route, until the (S,G) route is established by PIM-SM.

For example, on an interface with IP address 10.0.0.1 and netmask 255.0.0.0, a route is created matching all IP multicast packets in which the source address is anything in the class A network 10. This route can be written in conventional subnet/masklength notation as (10/8,224/4). If an interface has multiple assigned IP addresses, then one route is created for each such IP address.
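The rule in the example above, one (S/M,224/4) route per interface address, can be reproduced with Python's `ipaddress` module. The helper names are hypothetical, and `short()` only imitates the abbreviated notation this guide uses (10/8, 224/4); it is a display convenience, not an IOS output format.

```python
import ipaddress
from typing import List

ALL_MULTICAST = ipaddress.IPv4Network("224.0.0.0/4")


def short(net: ipaddress.IPv4Network) -> str:
    """Abbreviate a prefix the way this guide does, e.g. 10.0.0.0/8 -> 10/8."""
    octets = str(net.network_address).split(".")
    keep = max(1, (net.prefixlen + 7) // 8)  # octets touched by the mask
    return ".".join(octets[:keep]) + f"/{net.prefixlen}"


def sm_routes(interface_addresses: List[str]) -> List[str]:
    """One (S/M,224/4) route per IP address assigned to a multicast-enabled interface."""
    routes = []
    for address in interface_addresses:
        source_net = ipaddress.IPv4Interface(address).network
        routes.append(f"({short(source_net)},{short(ALL_MULTICAST)})")
    return routes


if __name__ == "__main__":
    print(sm_routes(["10.0.0.1/255.0.0.0"]))  # ['(10/8,224/4)']
```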

Restrictions on Using Bidirectional PIM

Restrictions include the following:

- IPv4 Bidirectional (Bidir) PIM is supported on Catalyst 4900M, Catalyst 4948E, Supervisor Engine 6-E, and Supervisor Engine 6L-E. IPv6 Bidir PIM is not supported.

- The Catalyst 4500 series switch allows you to forward Bidir PIM traffic in hardware up to a maximum of seven RPs. If you configure more than seven Bidir RPs, only the first seven RPs can forward traffic in hardware. The traffic directed to the remaining RPs is forwarded in software.

Configuring IP Multicast Routing

The following sections describe IP multicast routing configuration tasks:

- Default Configuration in IP Multicast Routing

- Enabling IP Multicast Routing

- Enabling PIM on an Interface

- Enabling Bidirectional Mode

- Enabling PIM-SSM Mapping

- Configuring a Rendezvous Point

- Configuring a Single Static RP

- Load Splitting of IP Multicast Traffic

For more detailed information on IP multicast routing, such as Auto-RP, PIM Version 2, and IP multicast static routes, refer to the Cisco IOS IP and IP Routing Configuration Guide, Cisco IOS Release 12.3.

Default Configuration in IP Multicast Routing

Table 1-1 shows the IP multicast default configuration.

Note: Source-specific multicast and IGMPv3 are supported.


For more information about source-specific multicast with IGMPv3 and IGMP, see the following URL:

http://www.cisco.com/en/US/docs/ios/ipmulti/configuration/guide/imc_cfg_ssm.html

Enabling IP Multicast Routing

Enabling IP multicast routing allows the Catalyst 4500 series switch to forward multicast packets. To enable IP multicast routing on the switch, enter this command:

| Command | Purpose |
|---|---|
| Switch(config)# ip multicast-routing | Enables IP multicast routing. |

Enabling PIM on an Interface

Enabling PIM on an interface also enables IGMP operation on that interface. An interface can be configured to be in dense mode, sparse mode, or sparse-dense mode. The mode determines how the Layer 3 switch or router populates its multicast routing table and how the Layer 3 switch or router forwards multicast packets it receives from its directly connected LANs. You must enable PIM in one of these modes for an interface to perform IP multicast routing.

When the switch populates the multicast routing table, dense-mode interfaces are always added to the table. Sparse-mode interfaces are added to the table only when periodic join messages are received from downstream routers, or when there is a directly connected member on the interface. When forwarding from a LAN, sparse-mode operation occurs if there is an RP known for the group. If so, the packets are encapsulated and sent toward the RP. When no RP is known, the packet is flooded in a dense-mode fashion. If the multicast traffic from a specific source is sufficient, the receiver’s first-hop router can send join messages toward the source to build a source-based distribution tree.

There is no default mode setting. By default, multicast routing is disabled on an interface.

Enabling Dense Mode

To configure PIM on an interface to be in dense mode, enter this command:

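| Command | Purpose |
|---|---|
| Switch(config-if)# ip pim dense-mode | Enables dense-mode PIM on the interface. |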

For an example of how to configure a PIM interface in dense mode, see the “PIM Dense Mode Example” section.

Enabling Sparse Mode

To configure PIM on an interface to be in sparse mode, enter this command:

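| Command | Purpose |
|---|---|
| Switch(config-if)# ip pim sparse-mode | Enables sparse-mode PIM on the interface. |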

For an example of how to configure a PIM interface in sparse mode, see the “PIM Sparse Mode Example” section.

Enabling Sparse-Dense Mode

When you enter either the ip pim sparse-mode or ip pim dense-mode command, sparseness or denseness is applied to the interface as a whole. However, some environments might require PIM to run in a single region in sparse mode for some groups and in dense mode for other groups.

An alternative to enabling only dense mode or only sparse mode is to enable sparse-dense mode. The interface is then treated as dense mode for groups in dense mode, and as sparse mode for groups in sparse mode. For a group to be treated as sparse on an interface in sparse-dense mode, an RP must be known for that group.

If you configure sparse-dense mode, sparseness or denseness is applied per group on the switch. The network administrator should apply the same group-to-mode assignment consistently throughout the network.

Another benefit of sparse-dense mode is that Auto-RP information can be distributed in dense mode, while multicast groups carrying user traffic are forwarded in sparse mode. You do not need to configure a default RP at the leaf routers.

When an interface is treated in dense mode, it is added to the outgoing interface list of a multicast routing table entry when either of the following is true:

- Members or DVMRP neighbors exist on the interface.

- PIM neighbors exist and the group has not been pruned.

When an interface is treated in sparse mode, it is added to the outgoing interface list of a multicast routing table entry when either of the following is true:

- Members or DVMRP neighbors exist on the interface.

- An explicit join has been received from a PIM neighbor on the interface.

To enable PIM to operate in the same mode as the group, enter this command:

| Command | Purpose |
|---|---|
| Switch(config-if)# ip pim sparse-dense-mode | Enables PIM to operate in sparse or dense mode, depending on the group. |
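The following sketch illustrates the per-group behavior described above. An RP is configured for one group range only, so on a sparse-dense interface the groups covered by access list 10 are treated as sparse and all other groups are treated as dense. The RP address, access list number, and VLAN are hypothetical placeholders.

```
! Hypothetical values: RP 10.0.0.1, access list 10, VLAN 20
Switch(config)# ip multicast-routing
! Groups 239.1.0.0/16 have an RP and are therefore sparse
Switch(config)# access-list 10 permit 239.1.0.0 0.0.255.255
Switch(config)# ip pim rp-address 10.0.0.1 10
Switch(config)# interface vlan 20
Switch(config-if)# ip pim sparse-dense-mode
```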

Enabling Bidirectional Mode

Most of the configuration requirements for Bidir-PIM are the same as those for configuring PIM-SM. You need not enable or disable an interface for carrying traffic for multicast groups in bidirectional mode. Instead, you configure which multicast groups you want to operate in bidirectional mode. Similar to PIM-SM, you can perform this configuration with Auto-RP, static RP configurations, or the PIM Version 2 bootstrap router (PIMv2 BSR) mechanism.

To enable Bidir-PIM, perform this task in global configuration mode:

| Command | Purpose |
|---|---|
| Switch(config)# ip pim bidir-enable | Enables bidir-PIM on the switch. |

To configure Bidir-PIM, enter one of these commands, depending on which method you use to distribute group-to-RP mappings:
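The command table for this step is not reproduced here. The sketch below assumes an RP address of 10.0.1.1 on Loopback0 and an access list 45 that lists the bidirectional groups; all of these values are hypothetical. Each distribution method takes a bidir keyword. Enter only the line that matches your method. The BSR method also requires a candidate BSR, which is not shown.

```
! Required in every case
Switch(config)# ip pim bidir-enable
! Static group-to-RP mapping for the groups in access list 45
Switch(config)# ip pim rp-address 10.0.1.1 45 bidir
! Auto-RP: announce this router as a bidirectional candidate RP
Switch(config)# ip pim send-rp-announce loopback 0 scope 16 group-list 45 bidir
! PIMv2 BSR: advertise this router as a bidirectional candidate RP (all groups)
Switch(config)# ip pim rp-candidate loopback 0 bidir
```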

For an example of how to configure bidir-PIM, see the “Bidirectional PIM Mode Example” section.

Enabling PIM-SSM Mapping

The Catalyst 4500 series switch supports SSM mapping. SSM mapping enables an SSM transition when neither URD nor IGMPv3 lite is available, or when supporting SSM on the end system is impossible or undesirable for administrative or technical reasons. With SSM mapping, you can leverage SSM for video delivery to legacy set-top boxes (STBs) that do not support IGMPv3, or for applications that do not use the IGMPv3 host stack.

For more details, refer to this URL:

http://www.cisco.com/en/US/docs/ios-xml/ios/ipmulti_igmp/configuration/15-s/imc_ssm_map.html
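The following is a minimal sketch of static SSM mapping. It is not taken from this guide, and the group, source, access list number, and VLAN are hypothetical. The configuration maps IGMPv1/v2 joins for group 232.1.2.10 to source 172.16.8.11 and disables DNS-based mapping lookups. See the URL above for the mapping options supported on your release.

```
Switch(config)# ip multicast-routing
! Use the default SSM range (232.0.0.0/8)
Switch(config)# ip pim ssm default
Switch(config)# ip igmp ssm-map enable
! Use only static mappings, not DNS lookups
Switch(config)# no ip igmp ssm-map query dns
Switch(config)# access-list 11 permit 232.1.2.10
Switch(config)# ip igmp ssm-map static 11 172.16.8.11
Switch(config)# interface vlan 10
Switch(config-if)# ip pim sparse-mode
```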

Configuring a Rendezvous Point

A rendezvous point (RP) is required in networks running Protocol Independent Multicast sparse mode (PIM-SM). In PIM-SM, traffic is forwarded only to network segments with active receivers that have explicitly requested multicast data.

The most commonly used methods for configuring an RP, both described here, are static RP and the Auto-RP protocol. A third method, the Bootstrap Router (BSR) protocol, is not described here.

Configuring Auto-RP

Auto-rendezvous point (Auto-RP) automates the distribution of group-to-rendezvous point (RP) mappings in a PIM network. To make Auto-RP work, a router must be designated as an RP mapping agent, which receives the RP announcement messages from the RPs and arbitrates conflicts. The RP mapping agent then sends the consistent group-to-RP mappings to all other routers by way of dense mode flooding.

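All routers automatically discover which RP to use for the groups they support. The Internet Assigned Numbers Authority (IANA) has assigned two group addresses for Auto-RP:

- 224.0.1.39, to which candidate RPs send their announcements.

- 224.0.1.40, on which mapping agents send the group-to-RP mappings.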

The mapping agent receives announcements of intention to become the RP from Candidate-RPs. The mapping agent then announces the winner of the RP election. This announcement is made independently of the decisions by the other mapping agents.

To configure a rendezvous point, perform this task:

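This example illustrates Auto-RP filtering. The ip pim rp-announce-filter command, used on the mapping agent, limits which candidate RPs are accepted for which groups. The filter-autorp keyword also applies the multicast boundary access list to Auto-RP messages. Access lists 1, 2, and 10 are assumed to be defined elsewhere:

Switch(config)# ip multicast-routing

Switch(config)# interface ethernet 1

Switch(config-if)# ip pim sparse-mode

Switch(config-if)# exit

Switch(config)# ip pim rp-announce-filter rp-list 1 group-list 2

Switch(config)# interface ethernet 1

Switch(config-if)# ip multicast boundary 10 filter-autorp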
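After Auto-RP is running, you can check the group-to-RP mappings on any router in the domain. The following is a sketch using standard Cisco IOS show commands. The exact output fields vary by release.

```
! Group-to-RP mappings and how they were learned
Switch# show ip pim rp mapping
! RPs currently in use for active groups
Switch# show ip pim rp
```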

Configuring a Single Static RP

If you are configuring PIM sparse mode, you must configure a PIM RP for a multicast group. An RP can either be configured statically in each device, or learned through a dynamic mechanism. This task explains how to statically configure an RP, as opposed to the router learning the RP through a dynamic mechanism such as Auto-RP.

PIM designated routers (DRs) forward data from directly connected multicast sources to the RP for distribution down the shared tree. Data reaches the RP in one of two ways:

- It is encapsulated in register packets and unicast directly to the RP.

- If the RP has itself joined the source tree, it is multicast-forwarded according to the RPF forwarding algorithm.

Last-hop routers directly connected to receivers may, at their discretion, join the source tree and prune themselves from the shared tree.

A single RP can be configured for multiple groups that are defined by an access list. If no RP is configured for a group, the router treats the group as dense using the PIM dense mode techniques. (You can prevent this occurrence by configuring the no ip pim dm-fallback command.)

If a conflict exists between the RP configured with the ip pim rp-address command and one learned by Auto-RP, the Auto-RP information is used, unless the override keyword is configured.

To configure a single static RP, perform this task:

This example shows how to configure a single static RP:

Switch(config)# ip multicast-routing

Switch(config)# interface ethernet 1

Switch(config-if)# ip pim sparse-mode

Switch(config-if)# exit

Switch(config)# ip pim rp-address 192.168.0.0
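The override keyword and the no ip pim dm-fallback command described above can be combined with a static RP. The following sketch uses a hypothetical RP address of 10.1.1.1. The first line makes the static mapping take precedence over an Auto-RP-learned one. The second line stops groups that have no RP from falling back to dense mode.

```
! Static RP wins over a conflicting Auto-RP mapping
Switch(config)# ip pim rp-address 10.1.1.1 override
! Do not treat groups without an RP as dense
Switch(config)# no ip pim dm-fallback
```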

Load Splitting of IP Multicast Traffic

If two or more equal-cost paths from a source are available, unicast traffic is load split across those paths. However, by default, multicast traffic is not load split across multiple equal-cost paths. In general, multicast traffic flows down from the reverse path forwarding (RPF) neighbor. According to the Protocol Independent Multicast (PIM) specifications, this neighbor must have the highest IP address if more than one neighbor has the same metric.

Use the ip multicast multipath command to enable load splitting of IP multicast traffic across multiple equal-cost paths.

Note: The ip multicast multipath command does not work with bidirectional Protocol Independent Multicast (PIM).

To enable IP multicast multipath, perform this task:

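| | Command | Purpose |
|---|---|---|
| Step 1 | Switch# configure terminal | Enters global configuration mode. |
| Step 2 | Switch(config)# ip multicast multipath [s-g-hash {basic \| next-hop-based}] | Enables load splitting of IP multicast traffic across multiple equal-cost paths. With no keyword, the S-hash algorithm (source address) is used. |
| Step 3 | Switch(config)# end | Returns to privileged EXEC mode. |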

Note: The ip multicast multipath command load splits the traffic but does not load balance the traffic. Traffic from a source uses only one path, even if the traffic far outweighs traffic from other sources.

Configuring load splitting with the ip multicast multipath command causes the system to load split multicast traffic across multiple equal-cost paths based on source address, using the S-hash algorithm. When the ip multicast multipath command is configured and multiple equal-cost paths exist, the path that multicast traffic travels is selected based on the source IP address. Multicast traffic from different sources is load split across the different equal-cost paths. With this algorithm, load splitting does not occur across equal-cost paths for multicast traffic from the same source sent to different multicast groups.

The following example shows how to enable ECMP multicast load splitting on a router based on a source address using the S-hash algorithm:

Switch(config)# ip multicast multipath

The following example shows how to enable ECMP multicast load splitting on a router based on a source and group address using the basic S-G-hash algorithm:

Switch(config)# ip multicast multipath s-g-hash basic

The following example shows how to enable ECMP multicast load splitting on a router based on a source, group, and next-hop address using the next-hop-based S-G-hash algorithm:

Switch(config)# ip multicast multipath s-g-hash next-hop-based

Monitoring and Maintaining IP Multicast Routing

You can remove all contents of a particular cache, table, or database. You also can display specific statistics. The following sections describe how to monitor and maintain IP multicast:

- Displaying System and Network Statistics

- Displaying the Multicast Routing Table

- Displaying IP MFIB

- Displaying Bidirectional PIM Information

- Displaying PIM Statistics

- Clearing Tables and Databases

Displaying System and Network Statistics

You can display specific statistics, such as the contents of IP routing tables and databases. You can use this information to determine resource utilization and solve network problems. You can also display information about node reachability and discover the path your device's packets take through the network.

To display various routing statistics, enter any of these commands:

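The command table for this section is not reproduced here. The following standard Cisco IOS EXEC commands are commonly used for the purposes described above. This is a sketch, not necessarily the exact command list of the original table, and the group and source addresses are hypothetical.

```
! Check reachability of the members of a group (members reply)
Switch# ping 239.1.1.1
! Show the RPF interface and neighbor toward a source
Switch# show ip rpf 10.1.1.10
! List PIM neighbors
Switch# show ip pim neighbor
! List groups with directly connected members
Switch# show ip igmp groups
```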

Displaying the Multicast Routing Table

The following is sample output from the show ip mroute command for a router operating in dense mode. This command displays the contents of the IP multicast routing table for the multicast group named cbone-audio.

The following is sample output from the show ip mroute command for a router operating in sparse mode:

Note: Interface timers are not updated for hardware-forwarded packets. Entry timers are updated approximately every five seconds.

The following is sample output from the show ip mroute command with the summary keyword:

The following is sample output from the show ip mroute command with the active keyword:

The following is sample output from the show ip mroute command with the count keyword:

Note: Multicast route byte and packet statistics are supported only for the first 1024 multicast routes. Output interface statistics are not maintained.
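The sample output referenced above is not reproduced here. For reference, the corresponding command invocations are shown below. The name cbone-audio must resolve to a group address, for example through DNS or an ip host entry, and the output format varies by release.

```
Switch# show ip mroute cbone-audio
Switch# show ip mroute summary
Switch# show ip mroute active
Switch# show ip mroute count
```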

Displaying IP MFIB

You can display all routes in the MFIB, including routes that might not exist directly in the upper-layer routing protocol database but that are used to accelerate fast switching. These routes appear in the MFIB, even if dense-mode forwarding is in use.

To display MFIB routes, enter one of these commands:

The following is sample output from the show ip mfib command:

The fast-switched packet count represents the number of packets that were switched in hardware on the corresponding route.

The partially switched packet counter represents the number of times that a fast-switched packet was also copied to the CPU for software processing or for forwarding to one or more non-platform switched interfaces (such as a PimTunnel interface).

The slow-switched packet count represents the number of packets that were switched completely in software on the corresponding route.
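The MFIB command list is not reproduced here. The following minimal sketch displays the whole MFIB and then the entries for a single group. The group address is hypothetical, and the available keywords depend on the software release.

```
! All MFIB entries, with fast-, partially, and slow-switched counters
Switch# show ip mfib
! Entries for one group only
Switch# show ip mfib 239.1.1.1
```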

Displaying Bidirectional PIM Information

To display bidir-PIM information, enter one of these commands:
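The command table is not reproduced here. The following sketch uses standard IOS commands, and the interface is hypothetical. The first command shows the designated forwarder (DF) election state on an interface. The second shows the group-to-RP mappings, including which group ranges are bidirectional.

```
Switch# show ip pim interface vlan 10 df
Switch# show ip pim rp mapping
```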

Displaying PIM Statistics

The following is sample output from the show ip pim interface command:

The following is sample output from the show ip pim interface command with a count:

The following is sample output from the show ip pim interface command with a count when IP multicast is enabled. The example lists the PIM interfaces that are fast-switched and process-switched, and the packet counts for these. The H is added to interfaces where IP multicast is enabled.
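The sample output referenced above is not reproduced here. The invocations that produce it are shown below.

```
! PIM mode, neighbor count, and DR for each PIM interface
Switch# show ip pim interface
! Per-interface multicast packet counts
Switch# show ip pim interface count
```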

Clearing Tables and Databases

You can remove all contents of a particular cache, table, or database. Clearing a cache, table, or database might be necessary when the contents of the particular structure have become, or are suspected to be, invalid.

To clear IP multicast caches, tables, and databases, enter one of these commands:


Note: IP multicast routes can be regenerated in response to protocol events and as data packets arrive.
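The command table for this section is not reproduced here. The following sketch shows standard IOS commands that clear multicast state, and the group and source addresses are hypothetical. Clearing the entire mroute table may briefly disrupt forwarding while state is rebuilt, so the narrower forms are often preferable.

```
! Delete all entries from the IP multicast routing table
Switch# clear ip mroute *
! Delete the entries for one group, or for one source and group
Switch# clear ip mroute 239.1.1.1
Switch# clear ip mroute 239.1.1.1 10.1.1.10
! Delete IGMP group cache entries
Switch# clear ip igmp group
```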

Configuration Examples

The following sections provide IP multicast routing configuration examples:

- PIM Dense Mode Example

- PIM Sparse Mode Example

- Bidirectional PIM Mode Example

- Sparse Mode with a Single Static RP Example

- Sparse Mode with Auto-RP: Example

PIM Dense Mode Example

This example is a configuration of dense-mode PIM on an Ethernet interface:
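The example configuration is not reproduced here. The following minimal sketch assumes that interface ethernet 1, the name used elsewhere in this chapter, is a Layer 3 interface with a hypothetical address of 10.1.1.1/24.

```
Switch(config)# ip multicast-routing
Switch(config)# interface ethernet 1
Switch(config-if)# ip address 10.1.1.1 255.255.255.0
Switch(config-if)# ip pim dense-mode
```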

PIM Sparse Mode Example

This example is a configuration of sparse-mode PIM. The RP router is the router with the address 10.8.0.20.
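The example configuration is not reproduced here. The following minimal sketch uses the RP address 10.8.0.20 from the description, which serves all groups. The interface name and address are hypothetical.

```
Switch(config)# ip multicast-routing
! Static RP for all multicast groups
Switch(config)# ip pim rp-address 10.8.0.20
Switch(config)# interface ethernet 1
Switch(config-if)# ip address 10.1.1.1 255.255.255.0
Switch(config-if)# ip pim sparse-mode
```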

Bidirectional PIM Mode Example

By default, a bidirectional RP advertises all groups as bidirectional. Use an access list on the RP to specify a list of groups to be advertised as bidirectional. Groups with the deny keyword operate in dense mode. A different, nonbidirectional RP address is required for groups that operate in sparse mode, because a single access list only allows either a permit or deny keyword.

The following example shows how to configure an RP for both sparse and bidirectional mode groups. 224/8 and 227/8 are bidirectional groups, 226/8 is sparse mode, and 225/8 is dense mode. The RP must be configured to use different IP addresses for sparse and bidirectional mode operations. Two loopback interfaces are used to allow this configuration and the addresses of these interfaces must be routed throughout the PIM domain so that the other routers in the PIM domain can receive Auto-RP announcements and communicate with the RP:
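The example configuration is not reproduced here. The following sketch implements the scenario described. It assumes loopback addresses 10.0.1.1 (bidirectional RP) and 10.0.2.1 (sparse-mode RP), an Auto-RP scope of 10, and access lists 45 and 46; all of these values are hypothetical.

- Loopback 0 announces 224/8 and 227/8 as bidirectional.
- Access list 45 denies 225/8, so those groups have no RP and operate in dense mode.
- Loopback 1 announces 226/8 for sparse mode.
- The same router also acts as the Auto-RP mapping agent.

```
Switch(config)# ip multicast-routing
Switch(config)# ip pim bidir-enable
Switch(config)# interface loopback 0
Switch(config-if)# description RP address for bidirectional groups
Switch(config-if)# ip address 10.0.1.1 255.255.255.0
Switch(config-if)# ip pim sparse-dense-mode
Switch(config-if)# exit
Switch(config)# interface loopback 1
Switch(config-if)# description RP address for sparse-mode groups
Switch(config-if)# ip address 10.0.2.1 255.255.255.0
Switch(config-if)# ip pim sparse-dense-mode
Switch(config-if)# exit
! Candidate RP announcements: bidirectional on loopback 0, sparse on loopback 1
Switch(config)# ip pim send-rp-announce loopback 0 scope 10 group-list 45 bidir
Switch(config)# ip pim send-rp-announce loopback 1 scope 10 group-list 46
! Mapping agent
Switch(config)# ip pim send-rp-discovery scope 10
Switch(config)# access-list 45 permit 224.0.0.0 0.255.255.255
Switch(config)# access-list 45 permit 227.0.0.0 0.255.255.255
Switch(config)# access-list 45 deny 225.0.0.0 0.255.255.255
Switch(config)# access-list 46 permit 226.0.0.0 0.255.255.255
```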

Sparse Mode with a Single Static RP Example

The following example sets the PIM RP address to 192.168.1.1 for all multicast groups and defines all groups to operate in sparse mode:

Switch(config)# ip pim rp-address 192.168.1.1

Note: The same RP cannot be used for both bidirectional and sparse mode groups.

The following example sets the PIM RP address to 172.16.1.1 for the multicast group 225.2.2.2 only:
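The following is a minimal sketch that assumes access list 1 is free for this purpose; the access list number is hypothetical. The access list matches only the host group 225.2.2.2, and the RP applies only to the groups that the list permits.

```
Switch(config)# access-list 1 permit 225.2.2.2 0.0.0.0
Switch(config)# ip pim rp-address 172.16.1.1 1
```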

Sparse Mode with Auto-RP: Example

The following example configures sparse mode with Auto-RP:
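The example configuration is not reproduced here. The following minimal sketch shows one router acting as both candidate RP and mapping agent. It assumes Loopback0 carries the announced RP address, a TTL scope of 16, access list 5 limiting the announcement to 239.0.0.0/8, and VLAN 10 as a PIM interface; all of these values are hypothetical.

The Auto-RP groups 224.0.1.39 and 224.0.1.40 are distributed by dense-mode flooding. For that reason, interfaces that run only sparse mode typically need the ip pim autorp listener command, where the software release supports it. Otherwise, use sparse-dense mode.

```
Switch(config)# ip multicast-routing
Switch(config)# interface loopback 0
Switch(config-if)# ip address 10.0.1.1 255.255.255.255
Switch(config-if)# ip pim sparse-mode
Switch(config-if)# exit
Switch(config)# interface vlan 10
Switch(config-if)# ip pim sparse-mode
Switch(config-if)# exit
Switch(config)# access-list 5 permit 239.0.0.0 0.255.255.255
! Announce this router as a candidate RP for the groups in access list 5
Switch(config)# ip pim send-rp-announce loopback 0 scope 16 group-list 5
! Act as the mapping agent for the domain
Switch(config)# ip pim send-rp-discovery loopback 0 scope 16
```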
