Substituição de componentes com defeito no servidor UCS C240 M4 - vEPC

Opções de download

Linguagem imparcial

O conjunto de documentação deste produto faz o possível para usar uma linguagem imparcial. Para os fins deste conjunto de documentação, a imparcialidade é definida como uma linguagem que não implica em discriminação baseada em idade, deficiência, gênero, identidade racial, identidade étnica, orientação sexual, status socioeconômico e interseccionalidade. Pode haver exceções na documentação devido à linguagem codificada nas interfaces de usuário do software do produto, linguagem usada com base na documentação de RFP ou linguagem usada por um produto de terceiros referenciado. Saiba mais sobre como a Cisco está usando a linguagem inclusiva.

Sobre esta tradução

A Cisco traduziu este documento com a ajuda de tecnologias de tradução automática e humana para oferecer conteúdo de suporte aos seus usuários no seu próprio idioma, independentemente da localização. Observe que mesmo a melhor tradução automática não será tão precisa quanto as realizadas por um tradutor profissional. A Cisco Systems, Inc. não se responsabiliza pela precisão destas traduções e recomenda que o documento original em inglês (link fornecido) seja sempre consultado.

Contents

Introdução

Este documento descreve as etapas necessárias para substituir componentes defeituosos mencionados aqui em um servidor Unified Computing System (UCS) em uma configuração Ultra-M que hospeda StarOS Virtual Network Functions (VNFs).

- MOP de substituição de módulo de memória dupla em linha (DIMM)

- Falha do controlador FlexFlash

- Falha na unidade de estado sólido (SSD)

- Falha do Trusted Platform Module (TPM)

- Falha de Cache Raid

- Falha de Controladora Raid/Adaptador de Barramento Quente (HBA)

- Falha no riser PCI

- Falha de adaptador PCIe Intel X520 10G

- Falha de LAN-on Motherboard (MLOM) modular

- RMA da bandeja do ventilador

- Falha de CPU

Informações de Apoio

O Ultra-M é uma solução de núcleo de pacotes móveis virtualizados validada e predefinida, projetada para simplificar a implantação de VNFs. O OpenStack é o Virtualized Infrastructure Manager (VIM) para Ultra-M e consiste nos seguintes tipos de nó:

- Computação

- Disco de armazenamento de objetos - Computação (OSD - Computação)

- Controlador

- Plataforma OpenStack - Diretor (OSPD)

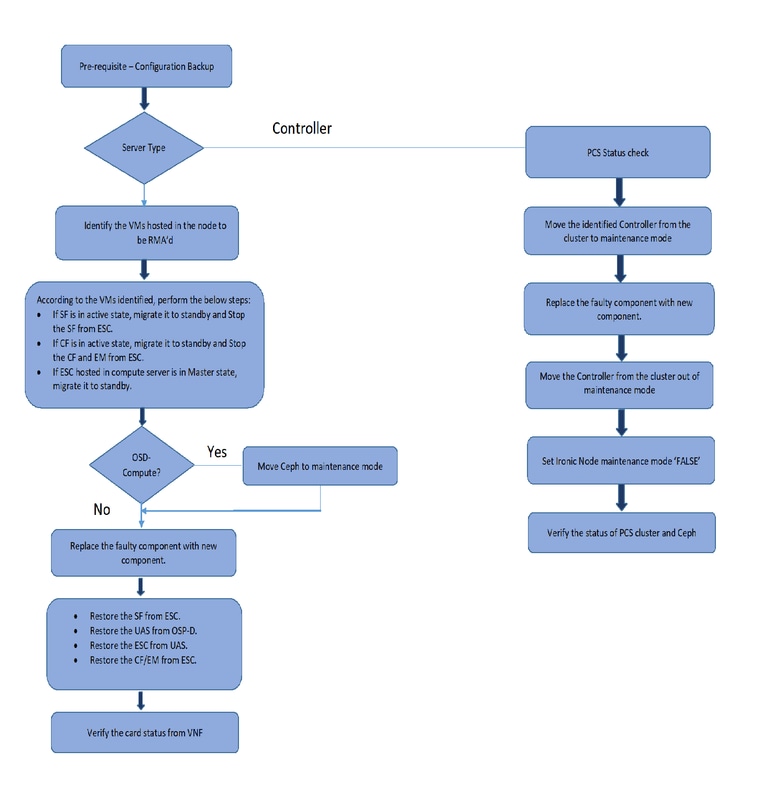

A arquitetura avançada do Ultra-M e os componentes envolvidos são descritos nesta imagem:

Este documento destina-se ao pessoal da Cisco que está familiarizado com a plataforma Cisco Ultra-M e detalha as etapas necessárias a serem executadas no nível OpenStack e StarOS VNF no momento da Substituição de Componentes no servidor.

Note: A versão Ultra M 5.1.x é considerada para definir os procedimentos neste documento.

Abreviaturas

| VNF | Função de rede virtual |

| CF | Função de controle |

| SF | Função de serviço |

| ESC | Controlador de serviço elástico |

| MOP | Método de Procedimento |

| OSD | Discos de Armazenamento de Objetos |

| HDD | Unidade de disco rígido |

| SSD | Unidade de estado sólido |

| VIM | Gerente de infraestrutura virtual |

| VM | Máquina virtual |

| EM | Gerenciador de Elementos |

| UAS | Ultra Automation Services |

| UUID | Identificador Exclusivo Universal |

Fluxo de trabalho do MoP

Pré-requisitos

Fazer backup

Antes de substituir um componente defeituoso, é importante verificar o estado atual do ambiente da plataforma Red Hat OpenStack. É recomendável verificar o estado atual para evitar complicações quando o processo de substituição estiver ligado. Isso pode ser obtido por meio desse fluxo de substituição.

Em caso de recuperação, a Cisco recomenda fazer um backup do banco de dados OSPD com o uso destas etapas:

[root@director ~]# mysqldump --opt --all-databases > /root/undercloud-all-databases.sql

[root@director ~]# tar --xattrs -czf undercloud-backup-`date +%F`.tar.gz /root/undercloud-all-databases.sql

/etc/my.cnf.d/server.cnf /var/lib/glance/images /srv/node /home/stack

tar: Removing leading `/' from member names

Este processo garante que um nó possa ser substituído sem afetar a disponibilidade de nenhuma instância. Além disso, é recomendável fazer backup da configuração do StarOS, especialmente se o nó de computação/computação OSD a ser substituído hospedar a VM (Máquina Virtual) da CF (Função de Controle).

Note: Se o servidor for o nó Controlador, vá para a seção "", caso contrário, continue com a próxima seção.

Componente RMA - nó de computação/OSD-Compute

Identificar as VMs hospedadas no nó de computação/OSD-Compute

Identifique as VMs que estão hospedadas no servidor. Pode haver duas possibilidades:

- O servidor contém somente a VM da Função de Serviço (SF):

[stack@director ~]$ nova list --field name,host | grep compute-10

| 49ac5f22-469e-4b84-badc-031083db0533 | VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d |

pod1-compute-10.localdomain |

- O servidor contém combinação de VMs Control Function (CF)/Elastic Services Controller (ESC)/ Element Manager (EM)/ Ultra Automation Services (UAS):

[stack@director ~]$ nova list --field name,host | grep compute-8

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-compute-8.localdomain |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | pod1-compute-8.localdomain |

| 75528898-ef4b-4d68-b05d-882014708694 | VNF2-ESC-ESC-0 | pod1-compute-8.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-compute-8.localdomain |

Observação: na saída mostrada aqui, a primeira coluna corresponde ao Universally Unique IDentifier (UUID), a segunda coluna é o nome da VM e a terceira coluna é o nome do host onde a VM está presente. Os parâmetros desta saída serão usados nas seções subsequentes.

Desligamento da energia - normal

Caso 1. O nó de computação hospeda somente SF VM

Migrar placa SF para estado de espera

- Inicie sessão na VNF do StarOS e identifique a placa que corresponde à VM do SF. Use o UUID da VM SF identificada na seção "Identify the VMs hosted in the Compute/OSD-Compute Node" (Identificar as VMs hospedadas no nó de computação/OSD-Compute) e identifique a placa que corresponde ao UUID:

[local]VNF2# show card hardware

Tuesday might 08 16:49:42 UTC 2018

<snip>

Card 8:

Card Type : 4-Port Service Function Virtual Card

CPU Packages : 26 [#0, #1, #2, #3, #4, #5, #6, #7, #8, #9, #10, #11, #12, #13, #14, #15, #16, #17, #18, #19, #20, #21, #22, #23, #24, #25]

CPU Nodes : 2

CPU Cores/Threads : 26

Memory : 98304M (qvpc-di-large)

UUID/Serial Number : 49AC5F22-469E-4B84-BADC-031083DB0533

- Verifique o status da placa:

[local]VNF2# show card table

Tuesday might 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Active No

2: CFC Control Function Virtual Card Standby -

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

- Se a placa estiver no estado ativo, mova-a para o estado de standby:

[local]VNF2# card migrate from 8 to 10

Desligar VM SF do ESC

- Faça login no nó ESC que corresponde ao VNF e verifique o status do VM SF:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

VM_ALIVE_STATE

VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

VM_ALIVE_STATE</state>

<snip>

- Pare a VM SF com o uso de seu Nome de VM. (Nome da VM anotado na seção "Identificar as VMs hospedadas no nó de computação/OSD-Compute"):

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

- Depois de interrompida, a VM deve entrar no estado SHUTOFF:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

VM_ALIVE_STATE

VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

VM_ALIVE_STATE

VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

VM_SHUTOFF_STATE</state>

Caso 2. Computação/OSD-Compute Node Hosts CF/ESC/EM/UAS

Migrar Placa CF para Estado de Espera

- Faça login na VNF do StarOS e identifique a placa que corresponde à VM do CF. Use o UUID da VM CF identificada na seção "Identifique as VMs hospedadas no Nó" e localize a placa que corresponde ao UUID:

[local]VNF2# show card hardware

Tuesday might 08 16:49:42 UTC 2018

<snip>

Card 2:

Card Type : Control Function Virtual Card

CPU Packages : 8 [#0, #1, #2, #3, #4, #5, #6, #7]

CPU Nodes : 1

CPU Cores/Threads : 8

Memory : 16384M (qvpc-di-large)

UUID/Serial Number : F9C0763A-4A4F-4BBD-AF51-BC7545774BE2

<snip>

- Verifique o status da placa:

[local]VNF2# show card table

Tuesday might 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Standby -

2: CFC Control Function Virtual Card Active No

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

- Se a placa estiver no estado ativo, mova-a para o estado de standby:

[local]VNF2# card migrate from 2 to 1

Desligar VM do CF e do EM do ESC

- Faça login no nó ESC que corresponde ao VNF e verifique o status das VMs:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

VM_ALIVE_STATE</state>

VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

VM_ALIVE_STATE

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

507d67c2-1d00-4321-b9d1-da879af524f8

dc168a6a-4aeb-4e81-abd9-91d7568b5f7c

9ffec58b-4b9d-4072-b944-5413bf7fcf07

SERVICE_ACTIVE_STATE

VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea

VM_ALIVE_STATE</state>

<snip>

- Interrompa a VM do CF e do EM individualmente com o uso de seu Nome da VM. (Nome da VM anotado na seção "Identificar as VMs hospedadas no nó de computação/OSD-Compute"):

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea

- Depois de parar, as VMs devem entrar no estado SHUTOFF:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

VM_SHUTOFF_STATE</state>

VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

VM_ALIVE_STATE

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

507d67c2-1d00-4321-b9d1-da879af524f8

dc168a6a-4aeb-4e81-abd9-91d7568b5f7c

9ffec58b-4b9d-4072-b944-5413bf7fcf07

SERVICE_ACTIVE_STATE

VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

VM_SHUTOFF_STATE

<snip>

Migrar ESC para o Modo de Espera

- Inicie sessão no ESC hospedado no nó e verifique se ele está no estado mestre. Em caso afirmativo, mude o ESC para o modo de espera:

[admin@VNF2-esc-esc-0 esc-cli]$ escadm status

0 ESC status=0 ESC Master Healthy

[admin@VNF2-esc-esc-0 ~]$ sudo service keepalived stop

Stopping keepalived: [ OK ]

[admin@VNF2-esc-esc-0 ~]$ escadm status

1 ESC status=0 In SWITCHING_TO_STOP state. Please check status after a while.

[admin@VNF2-esc-esc-0 ~]$ sudo reboot

Broadcast message from admin@vnf1-esc-esc-0.novalocal

(/dev/pts/0) at 13:32 ...

The system is going down for reboot NOW!

Note: Se o componente defeituoso tiver que ser substituído no nó OSD-Compute, coloque o Ceph em Maintenance no servidor antes de continuar com a substituição do componente.

[admin@osd-compute-0 ~]$ sudo ceph osd set norebalance

set norebalance

[admin@osd-compute-0 ~]$ sudo ceph osd set noout

set noout

[admin@osd-compute-0 ~]$ sudo ceph status

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_WARN

noout,norebalance,sortbitwise,require_jewel_osds flag(s) set

monmap e1: 3 mons at {tb3-ultram-pod1-controller-0=11.118.0.40:6789/0,tb3-ultram-pod1-controller-1=11.118.0.41:6789/0,tb3-ultram-pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 tb3-ultram-pod1-controller-0,tb3-ultram-pod1-controller-1,tb3-ultram-pod1-controller-2

osdmap e194: 12 osds: 12 up, 12 in

flags noout,norebalance,sortbitwise,require_jewel_osds

pgmap v584865: 704 pgs, 6 pools, 531 GB data, 344 kobjects

1585 GB used, 11808 GB / 13393 GB avail

704 active+clean

client io 463 kB/s rd, 14903 kB/s wr, 263 op/s rd, 542 op/s wr

Substitua o componente com defeito do nó Compute/OSD-Compute

Desligue o servidor especificado. As etapas para substituir um componente defeituoso no servidor UCS C240 M4 podem ser consultadas em:

Substituindo os componentes do servidor

Restaure as VMs

Caso 1. O nó de computação hospeda somente SF VM

Recuperação de VM SF a partir do ESC

- O SF VM estaria em estado de erro na lista de novas:

[stack@director ~]$ nova list |grep VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

| 49ac5f22-469e-4b84-badc-031083db0533 | VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d | ERROR | - | NOSTATE |

- Recupere a VM SF do ESC:

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

- Monitore o yangesc.log:

admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d].

- Certifique-se de que a placa SF esteja ativa como SF de espera no VNF

Caso 2. Computação/OSD-Compute Node Hosts CF, ESC, EM e UAS

Recuperação de UAS VM

- Verifique o status da VM do UAS na lista de novas e exclua-a:

[stack@director ~]$ nova list | grep VNF2-UAS-uas-0

| 307a704c-a17c-4cdc-8e7a-3d6e7e4332fa | VNF2-UAS-uas-0 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.10; VNF2-UAS-uas-management=172.168.10.3

[stack@tb5-ospd ~]$ nova delete VNF2-UAS-uas-0

Request to delete server VNF2-UAS-uas-0 has been accepted.

- Para recuperar a VM do autovnf-uas, execute o script uas-check para verificar o estado. Ele deve relatar um erro. Em seguida, execute novamente com a opção —fix para recriar a VM do UAS ausente:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts/

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS

2017-12-08 12:38:05,446 - INFO: Check of AutoVNF cluster started

2017-12-08 12:38:07,925 - INFO: Instance 'vnf1-UAS-uas-0' status is 'ERROR'

2017-12-08 12:38:07,925 - INFO: Check completed, AutoVNF cluster has recoverable errors

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS --fix

2017-11-22 14:01:07,215 - INFO: Check of AutoVNF cluster started

2017-11-22 14:01:09,575 - INFO: Instance VNF2-UAS-uas-0' status is 'ERROR'

2017-11-22 14:01:09,575 - INFO: Check completed, AutoVNF cluster has recoverable errors

2017-11-22 14:01:09,778 - INFO: Removing instance VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Removed instance VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Creating instance VNF2-UAS-uas-0' and attaching volume ‘VNF2-UAS-uas-vol-0'

2017-11-22 14:01:49,525 - INFO: Created instance ‘VNF2-UAS-uas-0'

- Faça login no autovnf-uas. Aguarde alguns minutos e o UAS deve retornar ao estado bom:

VNF2-autovnf-uas-0#show uas

uas version 1.0.1-1

uas state ha-active

uas ha-vip 172.17.181.101

INSTANCE IP STATE ROLE

-----------------------------------

172.17.180.6 alive CONFD-SLAVE

172.17.180.7 alive CONFD-MASTER

172.17.180.9 alive NA

Note: Se uas-check.py —fix falhar, talvez seja necessário copiar este arquivo e executar novamente.

[stack@director ~]$ mkdir –p /opt/cisco/usp/apps/auto-it/common/uas-deploy/

[stack@director ~]$ cp /opt/cisco/usp/uas-installer/common/uas-deploy/userdata-uas.txt /opt/cisco/usp/apps/auto-it/common/uas-deploy/

Recuperação da VM do ESC

- Verifique o estado da VM ESC na lista de novas e apague-a:

stack@director scripts]$ nova list |grep ESC-1

| c566efbf-1274-4588-a2d8-0682e17b0d41 | VNF2-ESC-ESC-1 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.14; VNF2-UAS-uas-management=172.168.10.4 |

[stack@director scripts]$ nova delete VNF2-ESC-ESC-1

Request to delete server VNF2-ESC-ESC-1 has been accepted.

- Em AutoVNF-UAS, localize a transação de implantação ESC e, no registro da transação, localize a linha de comando boot_vm.py para criar a instância ESC:

ubuntu@VNF2-uas-uas-0:~$ sudo -i

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show transaction

TX ID TX TYPE DEPLOYMENT ID TIMESTAMP STATUS

-----------------------------------------------------------------------------------------------------------------------------

35eefc4a-d4a9-11e7-bb72-fa163ef8df2b vnf-deployment VNF2-DEPLOYMENT 2017-11-29T02:01:27.750692-00:00 deployment-success

73d9c540-d4a8-11e7-bb72-fa163ef8df2b vnfm-deployment VNF2-ESC 2017-11-29T01:56:02.133663-00:00 deployment-success

VNF2-uas-uas-0#show logs 73d9c540-d4a8-11e7-bb72-fa163ef8df2b | display xml

<config xmlns="http://tail-f.com/ns/config/1.0">

<logs xmlns="http://www.cisco.com/usp/nfv/usp-autovnf-oper">

<tx-id>73d9c540-d4a8-11e7-bb72-fa163ef8df2b</tx-id>

<log>2017-11-29 01:56:02,142 - VNFM Deployment RPC triggered for deployment: VNF2-ESC, deactivate: 0

2017-11-29 01:56:02,179 - Notify deployment

..

2017-11-29 01:57:30,385 - Creating VNFM 'VNF2-ESC-ESC-1' with [python //opt/cisco/vnf-staging/bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689 --net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username ****** --os_password ****** --bs_os_auth_url http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username ****** --bs_os_password ****** --esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --encrypt_key ****** --user_pass ****** --user_confd_pass ****** --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3 172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh --file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py --file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

***** Salve a linha boot_vm.py em um arquivo de script de shell (esc.sh) e atualize todas as linhas de ***** de nome de usuário e senha com as informações corretas (normalmente core/<PASSWORD>). Você também precisa remover a opção -encrypt_key. Para user_pass e user_confd_pass, você precisa usar o formato - nome de usuário: senha (exemplo - admin:<PASSWORD>).

- Localize o URL para bootvm.py de running-config e obtenha o arquivo bootvm.py para a VM do autovnf-uas. Nesse caso, 10.1.2.3 é o IP da VM de TI Automática:

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show running-config autovnf-vnfm:vnfm

…

configs bootvm

value http:// 10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

!

root@VNF2-uas-uas-0:~# wget http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

--2017-12-01 20:25:52-- http://10.1.2.3 /bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

Connecting to 10.1.2.3:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 127771 (125K) [text/x-python]

Saving to: ‘bootvm-2_3_2_155.py’

100%[=====================================================================================>] 127,771 --.-K/s in 0.001s

2017-12-01 20:25:52 (173 MB/s) - ‘bootvm-2_3_2_155.py’ saved [127771/127771]

- Crie um arquivo/tmp/esc_params.cfg:

root@VNF2-uas-uas-0:~# echo "openstack.endpoint=publicURL" > /tmp/esc_params.cfg

- Execute o script de shell para implantar ESC a partir do nó de UAS:

root@VNF2-uas-uas-0:~# /bin/sh esc.sh

+ python ./bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689

--net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url

http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username core --os_password <PASSWORD> --bs_os_auth_url

http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username core --bs_os_password <PASSWORD>

--esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --user_pass admin:<PASSWORD> --user_confd_pass

admin:<PASSWORD> --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3

172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh

--file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py

--file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

- Faça login no novo ESC e verifique o estado de Backup:

ubuntu@VNF2-uas-uas-0:~$ ssh admin@172.168.11.14

…

####################################################################

# ESC on VNF2-esc-esc-1.novalocal is in BACKUP state.

####################################################################

[admin@VNF2-esc-esc-1 ~]$ escadm status

0 ESC status=0 ESC Backup Healthy

[admin@VNF2-esc-esc-1 ~]$ health.sh

============== ESC HA (BACKUP) ===================================================

ESC HEALTH PASSED

Recuperar VMs CF e EM da ESC

- Verifique o status das VMs CF e EM na lista de novas. Eles devem estar no estado ERROR:

[stack@director ~]$ source corerc

[stack@director ~]$ nova list --field name,host,status |grep -i err

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | None | ERROR|

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 |None | ERROR

- Faça login no ESC Master, execute recovery-vm-action para cada VM de EM e CF afetada. Seja paciente. O ESC agendaria a ação de recuperação e isso pode não acontecer por alguns minutos. Monitore o yangesc.log:

sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

[admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8]

- Efetue login no novo EM e verifique se o estado EM está ativo:

ubuntu@VNF2vnfddeploymentem-1:~$ /opt/cisco/ncs/current/bin/ncs_cli -u admin -C

admin connected from 172.17.180.6 using ssh on VNF2vnfddeploymentem-1

admin@scm# show ems

EM VNFM

ID SLA SCM PROXY

---------------------

2 up up up

3 up up up

- Faça login no VNF do StarOS e verifique se a placa CF está no estado de espera

Manipular falha de recuperação ESC

Nos casos em que o ESC não inicia a VM devido a um estado inesperado, a Cisco recomenda como executar uma alternância do ESC reinicializando o ESC Mestre. A transição para o sistema ESC demoraria cerca de um minuto. Execute o script "health.sh" no novo Master ESC para verificar se o status está ativo. ESC mestre para iniciar a VM e corrigir o estado da VM. Essa tarefa de recuperação levaria até 5 minutos para ser concluída.

Você pode monitorar /var/log/esc/yangesc.log e /var/log/esc/escmanager.log. Se você não perceber que a VM é recuperada após 5 a 7 minutos, o usuário precisará fazer a recuperação manual da(s) VM(s) afetada(s).

Atualização de configuração de implantação automática

- Em AutoDeploy VM, edite o autodeploy.cfg e substitua o servidor de computação antigo pelo novo. Em seguida, carregue replace no confd_cli. Esta etapa é necessária para uma desativação de implantação bem-sucedida mais tarde:

root@auto-deploy-iso-2007-uas-0:/home/ubuntu# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#config

Entering configuration mode terminal

auto-deploy-iso-2007-uas-0(config)#load replace autodeploy.cfg

Loading. 14.63 KiB parsed in 0.42 sec (34.16 KiB/sec)

auto-deploy-iso-2007-uas-0(config)#commit

Commit complete.

auto-deploy-iso-2007-uas-0(config)#end

- Reinicie os serviços uas-confd e autodeploy após a alteração da configuração:

root@auto-deploy-iso-2007-uas-0:~# service uas-confd restart

uas-confd stop/waiting

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service uas-confd status

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service autodeploy restart

autodeploy stop/waiting

autodeploy start/running, process 14017

root@auto-deploy-iso-2007-uas-0:~# service autodeploy status

autodeploy start/running, process 14017

Componente RMA - Nó Controlador

Pré-verificação

- No OSPD, faça login no controlador e verifique se os pcs estão em bom estado - todos os três controladores Online e Galera mostram todos os três controladores como Master.

Note: Um cluster íntegro requer dois controladores ativos, portanto, verifique se os dois controladores restantes estão Online e ativos.

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 00:46:10 2017 Last change: Wed Nov 29 01:20:52 2017 by hacluster via crmd on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-0 pod1-controller-1 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-pod1-controller-0 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-pod1-controller-1 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-pod1-controller-2 (stonith:fence_ipmilan): Started pod1-controller-0

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

Mover Cluster de Controladores para Modo de Manutenção

- Use o cluster pcs no controlador que é atualizado em espera:

[heat-admin@pod1-controller-0 ~]$ sudo pcs cluster standby

- Verifique o status dos pcs novamente e certifique-se de que o cluster de pcs parou neste nó:

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 00:48:24 2017 Last change: Mon Dec 4 00:48:18 2017 by root via crm_attribute on pod1-controller-0

3 nodes and 22 resources configured

Node pod1-controller-0: standby

Online: [ pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-1 pod1-controller-2 ]

Stopped: [ pod1-controller-0 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-1 pod1-controller-2 ]

Slaves: [ pod1-controller-0 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-1 ]

Stopped: [ pod1-controller-0 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-pod1-controller-0 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-pod1-controller-1 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-pod1-controller-2 (stonith:fence_ipmilan): Started pod1-controller-2

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

Além disso, o status dos computadores nos outros 2 controladores deve mostrar o nó como standby.

Substitua o componente com defeito do nó da controladora

Desligue o servidor especificado. As etapas para substituir um componente defeituoso no servidor UCS C240 M4 podem ser consultadas em:

Substituindo os componentes do servidor

Ligue o servidor

- Ligue o servidor e verifique se o servidor é ativado:

[stack@tb5-ospd ~]$ source stackrc

[stack@tb5-ospd ~]$ nova list |grep pod1-controller-0

| 1ca946b8-52e5-4add-b94c-4d4b8a15a975 | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.112 |

- Faça login no controlador afetado, remova o modo de espera com o uso de unstandby. Verifique se o controlador está on-line com cluster e se Galera mostra todos os três controladores como Master. Isso pode levar alguns minutos:

[heat-admin@pod1-controller-0 ~]$ sudo pcs cluster unstandby

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 01:08:10 2017 Last change: Mon Dec 4 01:04:21 2017 by root via crm_attribute on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-0 pod1-controller-1 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-pod1-controller-0 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-pod1-controller-1 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-pod1-controller-2 (stonith:fence_ipmilan): Started pod1-controller-2

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

- Você pode verificar alguns dos serviços de monitoramento, como ceph, que estão em um estado íntegro:

[heat-admin@pod1-controller-0 ~]$ sudo ceph -s

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_OK

monmap e1: 3 mons at {pod1-controller-0=11.118.0.10:6789/0,pod1-controller-1=11.118.0.11:6789/0,pod1-controller-2=11.118.0.12:6789/0}

election epoch 70, quorum 0,1,2 pod1-controller-0,pod1-controller-1,pod1-controller-2

osdmap e218: 12 osds: 12 up, 12 in

flags sortbitwise,require_jewel_osds

pgmap v2080888: 704 pgs, 6 pools, 714 GB data, 237 kobjects

2142 GB used, 11251 GB / 13393 GB avail

704 active+clean

client io 11797 kB/s wr, 0 op/s rd, 57 op/s wr

Histórico de revisões

| Revisão | Data de publicação | Comentários |

|---|---|---|

1.0 |

02-Jul-2018

|

Versão inicial |

Colaborado por engenheiros da Cisco

- Prashanth ShettyServiços avançados da Cisco

- Padmaraj RamanoudjamServiços avançados da Cisco

Contate a Cisco

- Abrir um caso de suporte

- (É necessário um Contrato de Serviço da Cisco)

Feedback

Feedback