FabricPath to ACI Migration Cisco Validated Design Guide

First Published: September 1, 2014

Last Updated: May 5, 2017

Contents

¯ Preface

¯ Audience

¯ Scope

■ Initial Design Considerations

¯ Management Out-of-Band and In-Band

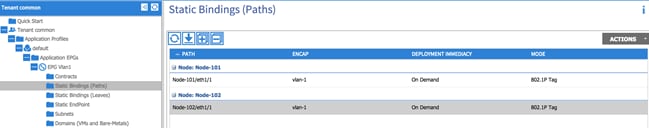

¯ Connectivity With VLAN to EPG Static Mappings

¯ Scenario 1: Mapping a VLAN to Multiple EPGs

¯ Scenario 2: Mapping VLANs to EPGs (1:1)

■ Infrastructure Deployment Considerations

¯ FabricPath Enabled Datacenter

¯ ACI Infrastructure Deployment

¯ Fabric Access Policy Configuration

¯ Integration Phase – Scenario 1

¯ Migration Phase – Scenario 1

¯ Fabric Access Policy Configuration

¯ Integration Phase – Scenario 2

¯ Migration Phase – Scenario 2

¯ UCS B-series and ACI integration considerations

¯ ACI Object Naming Conventions

■ Obtaining Documentation and Submitting a Service Request

Introduction

Preface

This document describes the migration procedures that can be adopted to move workloads and applications between a Brownfield environment and a new Greenfield ACI fabric. Different use cases are discussed, including the migration of network services and of the connectivity to the external Layer 3 network.

Audience

This document is intended for use by network architects and engineers to aid in developing operational-based solutions for Cisco ACI.

Scope

The scope of this document is to specifically cover Cisco ACI concepts for implementing an operational model for the ACI fabric. Limited background information is included on other related components whose understanding is required for the solution implementation. For more background information on ACI please refer to the following link: http://www.cisco.com/go/aci.

The following documents discuss Cisco ACI design and deployment considerations, which are useful prerequisites:

· Cisco Application Centric Infrastructure Design Guide:

http://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c11-731960.html

· Cisco Application Centric Infrastructure (ACI) - Endpoint Groups (EPG) Usage and Design:

http://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c11-731630.html

Advantages to Adopting Cisco ACI

Cisco Application Centric Infrastructure (ACI) is an innovative secure architecture that delivers centralized application-driven policy automation, management, and visibility of physical and virtual networks. ACI is built upon a fabric foundation that delivers the best-in-class infrastructure by combining hardware, software, and ASIC innovations into an integrated system.

ACI provides significant advantages over a FabricPath-based network, and some of those are listed as follows:

| Areas | FabricPath | ACI Fabric | ACI Technical Advantages | ACI Business Advantages |
| --- | --- | --- | --- | --- |
| Fabric Technology | routing | VXLAN, bridging, gateway, vPC | · Enables a reliable, yet flexible placement of multitenant segments throughout the data center. · Enables better utilization of available network paths in the underlying infrastructure. | · Reduces time to market for new services in a reliable manner · Enables an easy, modular, and scalable approach to deploy and place application workloads anywhere in the data center |
| Fabric Technology Enhancements | N/A | Enhanced VXLAN | · Enhanced VXLAN provides advanced capabilities such as atomic counters and fabric-wide security. · ACI-vCenter integration means VNIDs are where you need them and when, instead of needing to configure them on all switches, which helps scale effectively. · Complete overlay visibility and troubleshooting insight. | · Increase in application performance reflects directly on improved customer experience and ease of use of business and consumer services · Increased reliability due to proactive problem resolution |
| Endpoint (MAC/IP) Discovery | Multicast, broadcast flooding | Routing control plane | · With routing control plane, ACI inherently increases stability, reliability, and security due to the use of routing and eliminating broadcast, multicast-based endpoint (MAC) discovery · Combines the efficiency of Layer 3 routing and VXLAN to provide a highly flexible, secure, and scalable solution | · A more stable, reliable, and secure fabric directly contributes to customer success · Increases operational efficiency of customer staff |
| Management | Per device | A single system | · Industry leading Cisco Nexus operating system · Even with hundreds of switches, ACI provides a single point of managing the fabric via Application Policy Infrastructure Controller (APIC). · Freedom from VNID/VLANID management on a per-switch basis · Unified firmware/software management control with rolling upgrade schedules | · Substantial operational savings from eliminating hours of time and effort spent in managing hundreds of switches (including configuration, status checks, or upgrades) |
| Operations | Per device | Single system | · As a single system, ACI provides complete application, network, and virtual compute visibility · Visibility: application health scores, fabric health scores, device health scores · Ability to perform impact analysis with reflection on what applications are impacted by network configuration changes (such as if a switch goes down, which EPGs/VLANs/VXLANs are impacted) · Subnet <> BD <> EPG independence (much simpler to implement than VLANs/VXLANs on a large scale as would be needed in any legacy networking solution, including standalone) | · End-to-end visibility reduces troubleshooting time of not only network infrastructure, but also for the virtual, compute, and application infrastructure · Increase in application performance reflects directly on improved customer experience and ease of use of business services |
| Security | Per device | Integrated | · Automates security policy while allowing security teams to retain control over policies for compliance · Automated insertion of security services simplifies application deployments | · Effectively addresses the ever-increasing concern around security · Improved security with faster provisioning · Simplified operations |
| Programmability | XML, Python per device | Fabric-wide APIs available on APIC | · There is a single-point API for entire fabric · Support for OpFlex and device packages to extend fabric policy outside of the fabric | · Consistency and agility across infrastructure · Flexible deployment, easier scaling, and lower TCO |
| Virtual Integration | Not built in | Readily available | · Central deployment model accelerates network and security infrastructure configuration · Helps enable an any-workload, anywhere deployment model | · Operational efficiency · Rapid deployment · Higher availability and increased customer satisfaction |
| L4 – L7 Integration | No Automation | Automated and tightly coupled | Tightly coupled L4-L7 service automation enables automation of application lifecycle | · Increase in application performance reflects directly on improved customer experience and ease of use of business services · End-to-end visibility reduces troubleshooting time of not only network infrastructure, but also for the virtual, compute, and application infrastructure |
| Application Intelligence | Traditional VLAN-based | Application Profiles, EPG-based grouping, Application Policy | · Ability to define network policy by application definition · Contracts allow granular, simple control of interaction endpoint groups | · Improves time to market, as application provision can be automated end-to-end and with ease · Operational improvement enabled by self-documenting data center through the APIC policy model · Provides real-time visibility into detailed information about application, compute, VMs, and associated policies · Improved security and management |

In short, customers adopt ACI for two main reasons:

■ Having an out-of-box automated fabric

■ Deploying a solution versus independent devices

Some of the operational advantages offered by ACI are:

■ End-to-end visibility reduces troubleshooting time of not only network infrastructure, but also for the virtual, compute, and application infrastructure

■ Increase in application performance reflects directly on improved customer experience and ease of use of business services

■ Flexibility, control, and customization

■ Highly secure and scalable multitenancy

■ Proactive problem resolution and faster troubleshooting

■ Simplified operations

■ Applications delivered in business time

Initial Design Considerations

Extension Considerations

Extension Options

There are several options for extending the ACI fabric to traditional environments (that is, Spanning Tree Protocol (STP), vPC, and FabricPath). This section does not explain each option in depth; instead, it lists the pros and cons of each and indicates which option most customers deploy in their networks today.

To review the extension options in detail, see the CCO white paper “Connecting Application Centric Infrastructure (ACI) to Outside Layer 2 and 3 Networks” at the following link: http://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c07-732033.html.

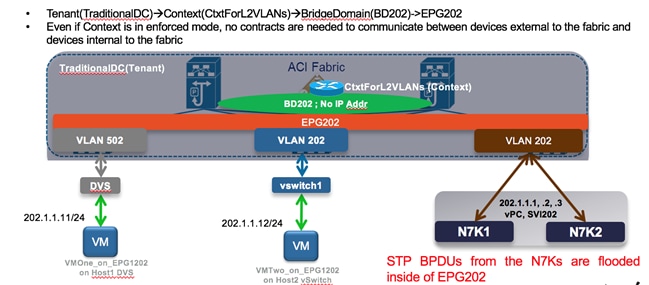

Connectivity via EPG – No Policy (L2Out via EPG)

This is the most popular option for extending an ACI environment because it is simple and straightforward. Users can extend an EPG beyond an ACI leaf by statically assigning a leaf port (along with a VLAN ID) to an EPG. After doing so, all traffic received with the configured VLAN ID on this leaf port is mapped to the EPG, and the policy configured for this EPG is enforced. The endpoints need not be directly connected to the ACI leaf port; they can sit behind a network, as long as the VLAN associated with the EPG is enabled within the network that connects the remote endpoint to the ACI fabric.

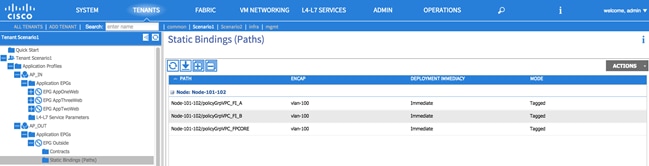

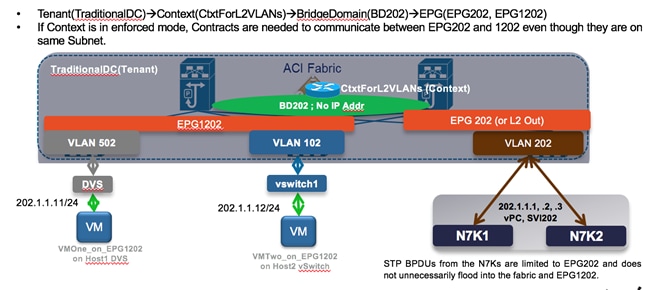

Consider the example in Figure 1 in which the following are shown:

1. Virtual workloads connected to a port group defined on an ACI-managed DVS and using VLAN tag 502 to send the traffic toward the ACI fabric.

2. Virtual workloads connected to a port group defined on a standard DVS that is not ACI-managed. From an ACI perspective, such endpoints are considered to belong to a physical domain, so static path bindings to the corresponding EPG are performed using VLAN tag 202.

3. Extension connectivity to an external network performed by creating a static path binding to a pair of Cisco Nexus 7000 switches using VLAN tag 202.

Note: The VLAN tags used for the static path bindings are only locally significant (on a per-interface basis), therefore there is no technical requirement to use the same 202 value shown in the following example.

Figure 1: L2Out via Static VLAN-EPG Mapping
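The static VLAN-to-EPG mapping described above can also be expressed as a REST payload for the APIC. The following is a minimal sketch, not a validated configuration: it assumes the APIC object model class `fvRsPathAtt` with the attributes `tDn`, `encap`, and `mode`, and the tenant, application profile, EPG, APIC hostname, leaf ID, and port shown are hypothetical. It only builds the URL and body; verify both against your APIC (for example with the API Inspector) before posting anything.

```python
import json


def static_path_binding(pod, leaf, port, vlan_id, mode="regular"):
    """Build the body for one static path binding under an EPG.

    Assumption: the binding is modeled as an fvRsPathAtt object whose
    'encap' carries the locally significant VLAN tag.
    """
    return {
        "fvRsPathAtt": {
            "attributes": {
                "tDn": f"topology/pod-{pod}/paths-{leaf}/pathep-[{port}]",
                "encap": f"vlan-{vlan_id}",
                "mode": mode,
            }
        }
    }


def epg_url(apic, tenant, app_profile, epg):
    """URL of the EPG that the binding would be posted to (hypothetical names)."""
    return f"https://{apic}/api/mo/uni/tn-{tenant}/ap-{app_profile}/epg-{epg}.json"


# Hypothetical example: bind leaf 101, port eth1/10, VLAN 202 to EPG202.
binding = static_path_binding(pod=1, leaf=101, port="eth1/10", vlan_id=202)
url = epg_url("apic.example.com", "Tenant1", "App1", "EPG202")
print(url)
print(json.dumps(binding, indent=2))
```

Because the VLAN tag is only locally significant, a second binding for the same EPG could carry a different `vlan_id`; the STP considerations later in this section explain why keeping it consistent is still advisable when the port faces an external Layer 2 network.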

Pros:

· Easiest of the solutions

· Straightforward

Cons:

· No contract enforcement between devices outside of the fabric (on the same broadcast domain) and devices inside the fabric, since they are all part of the same EPG202.

· STP TCNs, which are flooded from the Cisco Nexus 7000s, can result in traffic disruption as they cause the ACI fabric to flush the MAC address tables on the leaf nodes. There are mitigation steps to limit impact from this.

o Use vPC or double-sided vPC to connect ACI with STP environments.

o Enable the peer-switch feature with vPC (in the STP environment) that will eliminate root-bridge changes.

o Under the ACI BD configuration, enable flood mode for Layer 2 Unknown unicast packets. This will reduce the traffic disruption during an STP topology change.

Via BD – With Policy (L2Out via BD)

This section explains connectivity via a bridge domain (BD). Instead of extending from an EPG on the fabric, the extension is taken up a level and performed from the BD. This enables the insertion of policy (that is, whitelist/contract functionality) between devices outside of the fabric (on the same broadcast domain) and devices inside of the fabric. Additionally, the STP TCN issue is marginally improved, as the STP TCNs are not flooded in EPGs attached to the BDs (only to the L2Out EPG).

Figure 2: L2Out via BD

Pros: Contract enforcement is enabled between devices outside of the fabric (on the same broadcast domain) and devices inside the fabric.

Cons: More complex to deploy.

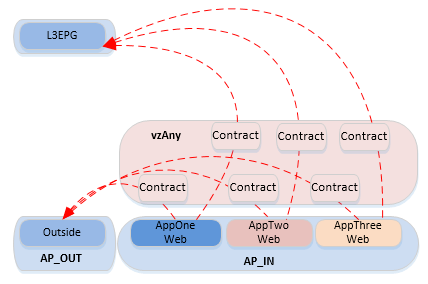

Connectivity via EPG – With Policy

If you need policy enforcement (contract functionality) enabled between devices outside of the fabric (on the same broadcast domain) and devices inside the fabric, but you don’t want the added complexity of the L2Out via BD, then Connectivity via EPG – With Policy is the recommended solution.

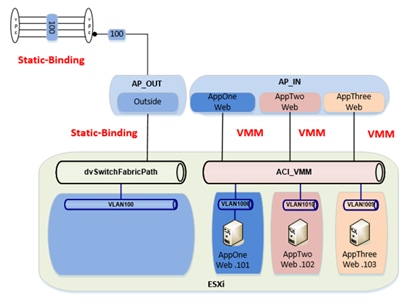

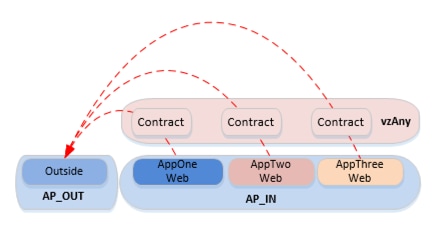

In the following figure, you can see the blending of the L2Out via EPG and L2Out via BD. There is an “Outside” EPG, which has connectivity via static path bindings to Nexus 7000s on the outside. Additionally, the “Outside” EPG also has static path bindings to a standard VMware DVS. The definition of an Outside EPG allows you to provide a contract to control the policy (communication) between “internal” EPGs (AppOneWeb, AppTwoWeb, and AppThreeWeb) and endpoints connected to the Outside EPG.

Note: This is the approach adopted for the migration scenarios discussed in this document.

Figure 3: L2Out via EPG (with Policy Enforcement)

Pros: Allows the same Contract enforcement between devices outside of the fabric (on the same broadcast domain) and devices inside the fabric as the L2Out via BD, without the associated complexity.

Cons: Same STP TCN concerns as for the Layer 2 via EPG scenario.

ACI Interaction With STP Considerations

While ACI does not run STP, nor participate in STP environments, it does pass STP bridge protocol data units (BPDUs) it receives to other devices in the same EPG. For this reason, it is very important that the design takes this principle into account.

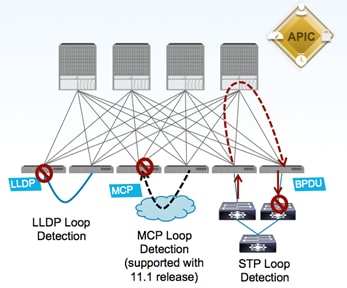

The following figure shows the three mechanisms currently used in ACI for loop detection.

Figure 4: ACI Loop Detection Mechanisms

· LLDP Loop Detection: if an ACI leaf node receives on an edge port an LLDP frame generated by another leaf node that is part of the same fabric, the edge port is disabled.

· Mis-Cabling Protocol (MCP) Loop Detection: a new link-level protocol that sends MCP frames on all VLANs on all edge ports. If any ACI leaf receives on an edge port an MCP frame generated by another leaf that is part of the same ACI fabric, the edge port is error-disabled.

· STP Loop Detection: when connecting to an outside network, the ACI fabric floods the received STP BPDU frames within the boundary of the EPG (by using the VXLAN network identifier (VNID) assigned for the EPG when it encapsulates the BPDU in VXLAN format). External switches are expected to break any potential loop upon receiving the flooded BPDU from the ACI fabric.

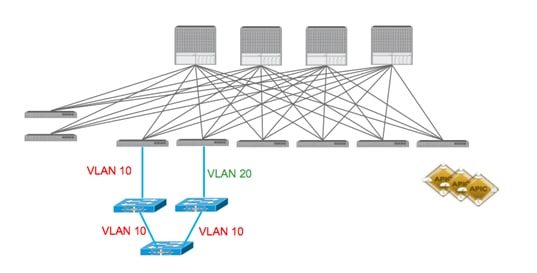

Regarding the STP loop detection mechanism, in order for the external network to be able to detect a Layer 2 loop, it is important that the VLAN ID mapped to an EPG is kept consistent across different interfaces. This requires attention when extending connectivity outside the fabric by leveraging static EPG to VLAN mapping, as discussed in the “Layer-2 Extension Options” section.

Figure 5: ACI and STP Interaction

Figure 5 highlights what happens when that is not the case: the Layer 2 loop cannot be detected by the external Layer 2 switches, since a different VLAN tag is used on the two interfaces connecting them to the ACI fabric. Notice that this would not create an end-to-end Layer 2 loop in the data plane, but it may cause the Layer 2 switches to error-disable the interface that receives the BPDU for VLAN 10 on an interface configured as part of VLAN 20.
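The consistency requirement above lends itself to a simple pre-deployment check. The sketch below is illustrative only: the binding tuples, EPG names, and interface labels are hypothetical, and the function merely flags EPGs whose static bindings use more than one VLAN ID.

```python
from collections import defaultdict


def inconsistent_epg_vlans(bindings):
    """Return {epg: sorted VLAN IDs} for EPGs mapped to more than one VLAN ID.

    bindings: iterable of (epg, interface, vlan_id) tuples (hypothetical format).
    """
    vlans_by_epg = defaultdict(set)
    for epg, _interface, vlan_id in bindings:
        vlans_by_epg[epg].add(vlan_id)
    return {epg: sorted(v) for epg, v in vlans_by_epg.items() if len(v) > 1}


# Hypothetical bindings: EPG-A mirrors the VLAN 10 / VLAN 20 case in Figure 5.
bindings = [
    ("EPG-A", "leaf101:eth1/1", 10),
    ("EPG-A", "leaf102:eth1/1", 20),
    ("EPG-B", "leaf101:eth1/2", 30),
    ("EPG-B", "leaf102:eth1/2", 30),
]
problems = inconsistent_epg_vlans(bindings)
print(problems)
```

An empty result means every EPG uses a single VLAN ID on all of its external-facing bindings, so flooded BPDUs arrive on the VLAN the external switches expect.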

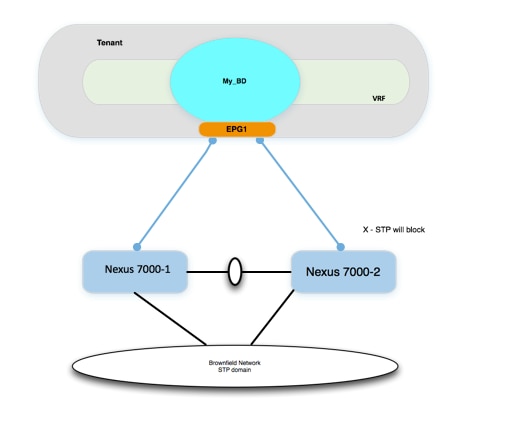

A couple of additional best practices when connecting an external Layer 2 network to the ACI fabric are captured as follows (and in the following diagrams):

· Never connect the same STP domain (Layer 2 network) to ACI fabric edge interfaces that are part of two different EPGs. Since the STP BPDUs are flooded only inside the EPG, a Layer 2 loop created via the external Layer 2 domain cannot be detected in this case.

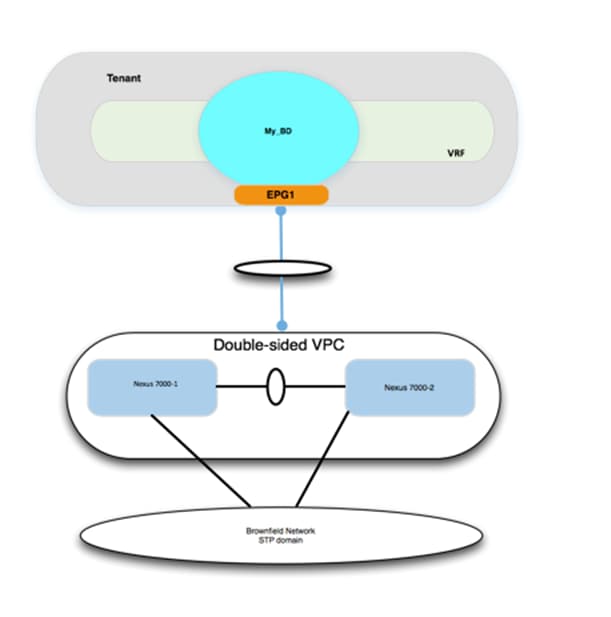

· Always ensure there is a single logical vPC connection between the ACI fabric and the external Layer 2 network domain.

Figure 6: STP and ACI Designs – STP Loop Free

In the example below, STP BPDUs are flooded inside of the EPG, not at the BD level. The Cisco Nexus 7000s will see BPDUs through the ACI fabric. As long as the devices in the STP environment see the appropriate BPDUs, they will forward and block appropriately.

Figure 7: STP and ACI Designs – STP Loop Free with vPC

In the example below, STP BPDUs are flooded inside of the EPG, not at the BD level. However, because there are no physical loops connecting up to the ACI fabric, there is no chance of an STP loop.

ACI Interaction With STP TCNs

Although the ACI fabric control plane doesn’t run STP, it does intercept STP TCN frames. Why would ACI care about STP TCN frames if it doesn’t run STP? ACI uses the TCNs to flush MAC address entries, which helps avoid the “black-holing” of traffic after an STP topology change on the outside network. Upon receiving an STP BPDU TCN frame, the APIC flushes the MAC addresses for the corresponding EPG that experienced the STP topology change. This affects the choice of how the ACI fabric forwards unknown unicast traffic. By default, the ACI leaf forwards unknown unicast traffic to a spine proxy for further lookup.

The spine node will drop the packet if the MAC address doesn’t exist in the proxy database. This option is called “Hardware Proxy,” and it is the default option. The other unknown unicast configuration option is “flood mode”, which causes the bridge domain (BD) to operate like a standard switch. When the “Hardware Proxy” option is selected and the fabric detects an STP topology change, the MAC addresses for the EPG are flushed in the fabric. Communication is impacted until the endpoint MAC addresses are learned again.
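To make the difference between the two unknown unicast options concrete, the following toy model walks through a TCN-triggered flush. It is a deliberately simplified sketch, not a description of the actual hardware pipeline: the MAC tables are plain dictionaries, and the function names and the EPG name are hypothetical.

```python
def flush_epg(mac_table, epg):
    """Return a copy of the table without the entries learned in the given EPG."""
    return {mac: e for mac, e in mac_table.items() if e != epg}


def handle_frame(dst_mac, leaf_table, spine_proxy_db, mode):
    """Decide what happens to a frame in a BD set to 'proxy' or 'flood'."""
    if dst_mac in leaf_table:
        return "forward"                      # destination already known on the leaf
    if mode == "flood":
        return "flood"                        # BD behaves like a standard switch
    # Hardware proxy: the spine looks the MAC up and drops it if unknown.
    return "forward-via-proxy" if dst_mac in spine_proxy_db else "drop"


mac = "00:50:56:aa:bb:cc"
leaf_table = {mac: "EPG202"}
spine_proxy_db = {mac: "EPG202"}

before = handle_frame(mac, leaf_table, spine_proxy_db, "proxy")

# An STP TCN arrives for EPG202: its entries are flushed everywhere.
leaf_table = flush_epg(leaf_table, "EPG202")
spine_proxy_db = flush_epg(spine_proxy_db, "EPG202")

after_proxy = handle_frame(mac, leaf_table, spine_proxy_db, "proxy")
after_flood = handle_frame(mac, leaf_table, spine_proxy_db, "flood")
print(before, after_proxy, after_flood)
```

In the model, the frame is dropped after the flush when the BD uses hardware proxy but is still delivered by flooding in flood mode, which is the behavior that motivates the flood-mode recommendation for BDs attached to external STP domains.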

There are several ways to limit the impact of STP TCNs with ACI. When connecting ACI to external STP domains, use the following best practices:

1. Use vPC or double-sided vPC to connect ACI with STP environments to ensure no Layer 2-looped topology can be created between those networks.

2. Enable the peer-switch feature with vPC (in the Brownfield STP environment), which eliminates root-bridge changes.

3. Under the ACI bridge domain (BD) configuration, enable flood mode for Layer 2 unknown unicast packets. This will reduce the traffic disruption during an STP topology change.
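The third practice above, switching a BD to flood mode, can be sketched as an APIC REST payload. This is an illustration under stated assumptions: it relies on the `fvBD` class exposing an `unkMacUcastAct` attribute with the values `proxy` and `flood`, and the APIC hostname, tenant, and BD name are hypothetical. The code only constructs the request; confirm the attribute names on your APIC release before using them.

```python
import json


def bd_flood_payload(bd_name):
    """Body that sets Layer 2 unknown unicast handling to flood for one BD.

    Assumption: fvBD.unkMacUcastAct accepts 'flood' (default is 'proxy').
    """
    return {"fvBD": {"attributes": {"name": bd_name, "unkMacUcastAct": "flood"}}}


def bd_url(apic, tenant, bd_name):
    """URL of the bridge domain object (hypothetical APIC and tenant names)."""
    return f"https://{apic}/api/mo/uni/tn-{tenant}/BD-{bd_name}.json"


payload = bd_flood_payload("BD_VLAN100")
target = bd_url("apic.example.com", "Tenant1", "BD_VLAN100")
print(target)
print(json.dumps(payload, indent=2))
```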

ACI With MST

BPDU frames for Per-VLAN Spanning Tree (PVST) and Rapid Per-VLAN Spanning Tree (RPVST) carry a VLAN tag. The ACI leaf can identify which EPG the BPDU should be flooded in based on the VLAN tag in the frame. In MST (802.1s) deployments, however, BPDU frames are sent untagged over the native VLAN. Because of these factors, additional configuration is required in the ACI fabric in order for Multiple Spanning Tree (MST) BPDUs to be properly flooded.

By default, there is no native VLAN configured for ACI. Additionally, the native VLAN is not generally used to carry data traffic. To accept traffic for any VLAN, the VLAN must be provisioned by statically assigning a port and a VLAN to an EPG. As a result, to ensure MST BPDUs are flooded to the desired ports, the user must create a specific EPG to carry those BPDUs. As shown in the following diagram, the mode must be “native” given that the BPDU frame is untagged.

Figure 8: Assign Port to an EPG Using Native Mode

In addition to the configuration tasks previously described, the user must also create a physical domain and associated VLAN pool (which includes VLAN 1 in this example), and the attachable access entity profile to allow VLAN 1 to be used for these ports.
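The reason a dedicated native-mode EPG is needed for MST can be illustrated with a small classification model. This is a simplified sketch rather than the leaf's real lookup logic: it assumes a tagged BPDU is matched by VLAN ID and an untagged BPDU can only match a binding in native mode. The EPG names and the dictionary layout are hypothetical.

```python
def epg_for_bpdu(port_bindings, vlan_tag):
    """Return the EPG a received BPDU would be flooded in, or None.

    port_bindings: list of {"epg", "vlan", "mode"} dicts for one port.
    vlan_tag: VLAN ID carried by the BPDU, or None if the frame is untagged.
    """
    for binding in port_bindings:
        if vlan_tag is None:
            if binding["mode"] == "native":   # untagged MST BPDU
                return binding["epg"]
        elif binding["vlan"] == vlan_tag:     # tagged PVST/RPVST BPDU
            return binding["epg"]
    return None


# Port with only a regular (tagged) binding: an MST BPDU finds no EPG.
tagged_only = [{"epg": "EPG100", "vlan": 100, "mode": "regular"}]
pvst_epg = epg_for_bpdu(tagged_only, 100)
mst_epg_missing = epg_for_bpdu(tagged_only, None)

# Adding a native-mode binding on VLAN 1 gives the MST BPDU an EPG to flood in.
with_native = tagged_only + [{"epg": "MST-BPDU", "vlan": 1, "mode": "native"}]
mst_epg = epg_for_bpdu(with_native, None)
print(pvst_epg, mst_epg_missing, mst_epg)
```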

Layer 3 Considerations

MTU

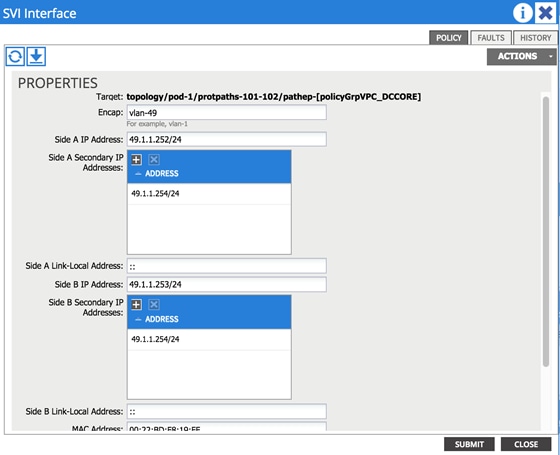

When using routed interfaces, routed subinterfaces, or SVI interfaces for ACI, be aware that the ACI fabric defaults to a system MTU of 9000 for all interfaces (Layer 2 and Layer 3). This means that it is critical to ensure that the MTU configuration on the corresponding external router interfaces matches this value. Failure to do so can lead to suboptimal fragmentation, routing protocol adjacency failures, or packet loss. For example, if devices inside the fabric (such as VMs) are configured to use jumbo MTUs and the L3Out is configured for an MTU of 1500, the fabric will drop the packets on egress.

As an example, on external Cisco Nexus 7000 devices, this means you have to configure the following:

· Set the system jumbo MTU to 9216 globally:

DCCORE01(config)# system jumbomtu 9216

· Configure the Layer 2 interfaces (Layer 2 port-channels, Layer 2 trunks, and Layer 2 access ports) to the system jumboMTU value:

DCCORE01(config-if)# mtu 9216

· Configure the Layer 3 interfaces (SVIs, routed sub-interfaces, and Layer 3 routed interfaces) to match the ACI MTU of 9000:

DCCORE01(config-if)# mtu 9000

Note: Enable Jumbo MTUs throughout the datacenter, and use a routing platform (that is, ASR 1000, ASR 9000, and so on) to step down your MTU from jumbo to a standard MTU of 1500. Do not use a switching platform to perform MTU translation.
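The MTU guidance in this section can be turned into a quick audit of the external device's interfaces. The sketch below is an assumption-laden illustration: the interface inventory format is hypothetical, and the rules encoded are only the two stated above (Layer 3 interfaces must match the fabric MTU of 9000, and Layer 2 interfaces must not be smaller than it).

```python
ACI_FABRIC_MTU = 9000  # default fabric MTU stated in this section


def mtu_findings(interfaces, fabric_mtu=ACI_FABRIC_MTU):
    """Return a list of human-readable MTU problems.

    interfaces: iterable of (name, kind, mtu), where kind is "l2" or "l3".
    """
    findings = []
    for name, kind, mtu in interfaces:
        if kind == "l3" and mtu != fabric_mtu:
            findings.append(f"{name}: L3 MTU {mtu} does not match fabric MTU {fabric_mtu}")
        elif kind == "l2" and mtu < fabric_mtu:
            findings.append(f"{name}: L2 MTU {mtu} is below fabric MTU {fabric_mtu}")
    return findings


# Hypothetical inventory for DCCORE01.
inventory = [
    ("port-channel10", "l2", 9216),
    ("Vlan300", "l3", 1500),
    ("Ethernet1/1.10", "l3", 9000),
]
findings = mtu_findings(inventory)
for line in findings:
    print(line)
```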

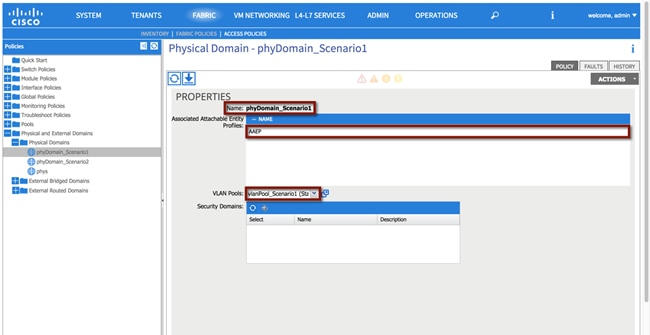

SVI-Based Interfaces for ACI

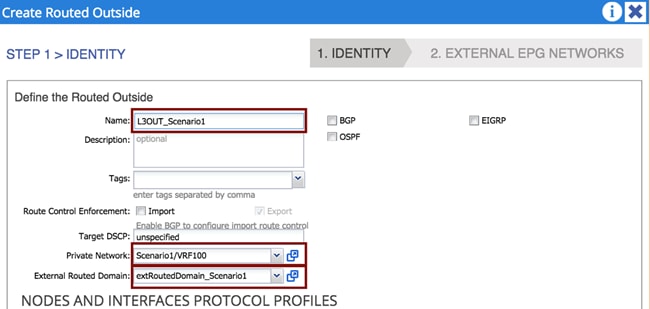

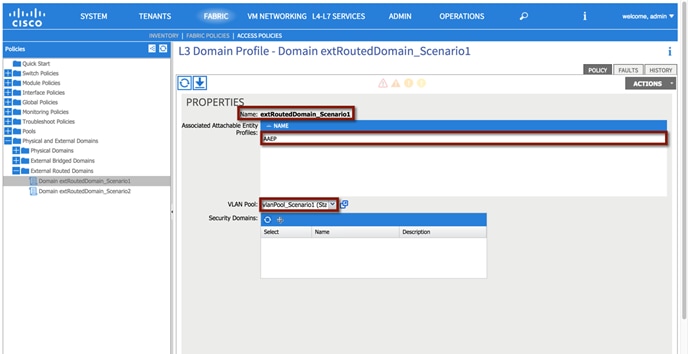

External Routed Domain

When using SVI-based interfaces for external connectivity, you must define an external routed domain and associate it with the L3Out connection. This is because incoming and outgoing traffic must use the specified VLAN tag so that the corresponding SVI interfaces can be enabled. The available VLAN tags are configured in the VLAN pool associated with the External Routed Domain, which is discussed in more detail in the “External Routed Domain” section.

Figure 9: Associate an External Layer 3 Domain to an L3Out Connection

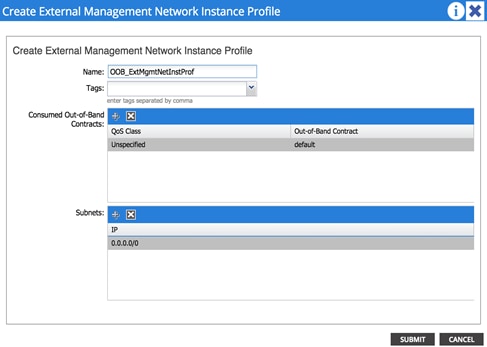

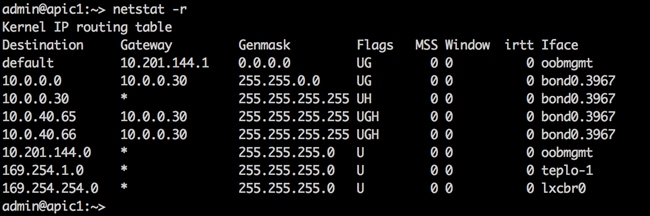

Management Out-of-Band and In-Band

Out-of-Band and In-Band Key Concepts

· Contracts are always needed to permit traffic to the out-of-band (OOB) interfaces of the leaf and spine switches. Don’t forget to configure your contract in the “mgmt” tenant.

· Tenant > Tenant mgmt > Node Management EPGs, click Out-Of-Band EPG, and select “default”.

· The default contract acts as a permit ip any.

Figure 10: Create a Contract to Access the OOB Mgmt Network

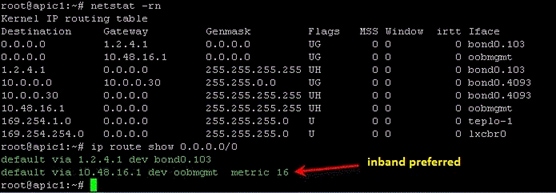

Note: If you install both a default route via OOB and via inband, the inband path is preferred over OOB.

Figure 11: Preferred Inband Default Route

Migration Strategy

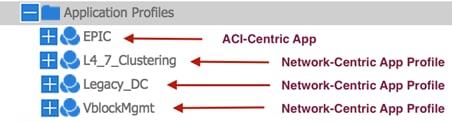

The process described in this guide is referred to as “network-centric migration”, and consists of interconnecting the existing Brownfield datacenter network (usually built on STP, vPC or in this case, FabricPath) to a new deployment of ACI, with the goal of migrating workloads from the old environment to the new environment.

In order to accomplish the migration, you must map traditional network concepts (VLANs, IP subnets, VRFs, etc.) to new ACI constructs, like endpoint groups (EPGs), bridge domains (BDs), and private networks (ACI constructs will be discussed later in this document).

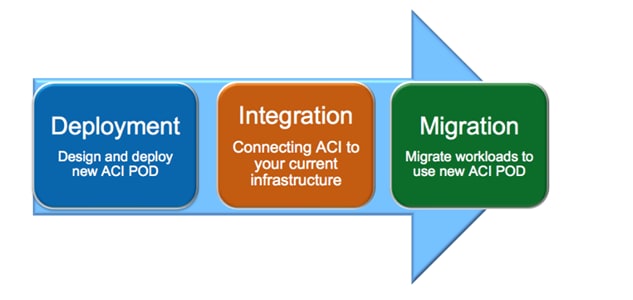

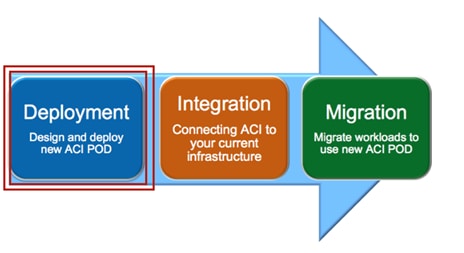

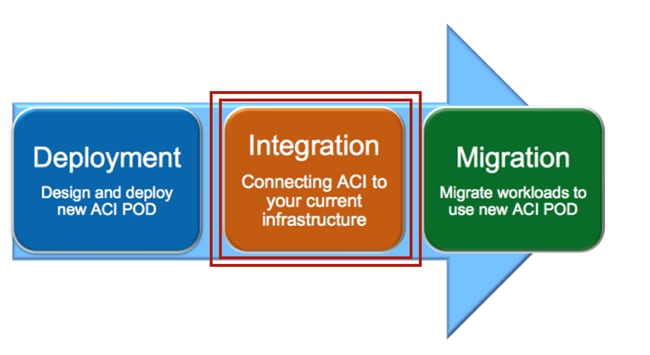

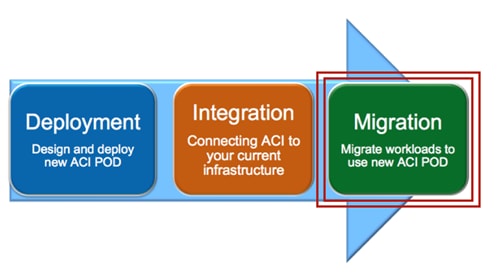

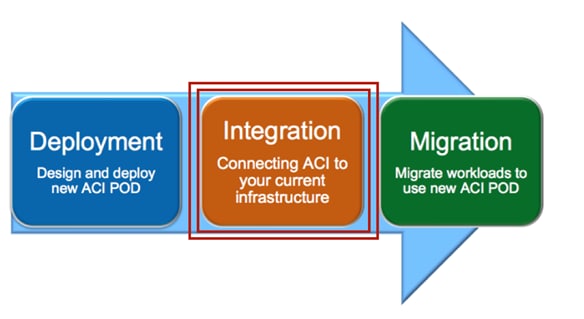

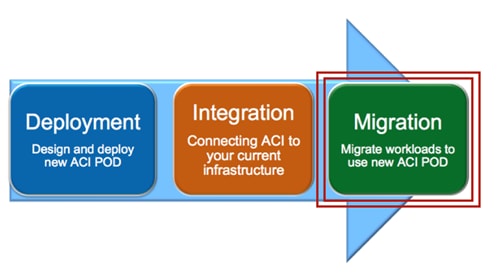

The following diagram shows the ACI network-centric migration methodology, which highlights the major steps required for performing the migration of applications from a Brownfield datacenter network to an ACI fabric.

Figure 12: ACI Network Centric Migration Methodology

The steps of the ACI network-centric migration are described as follows:

1. Deployment – The first step is the design and deployment of the new ACI POD; it is likely that the size of such a deployment is initially small, with plans to grow it over time. A typical ACI POD consists of at least two spines and two leaf switches, which are managed by a cluster of at least three APIC controllers.

2. Integration – The second step is the integration between the existing DC network infrastructure (usually called the Brownfield network) and the new ACI POD. Layer 2 and Layer 3 connectivity between the two networks is required to allow successful workload migration from the Brownfield network to the new ACI infrastructure.

3. Migration – The final step consists of migrating workloads between the Brownfield network and the ACI POD. It is likely that this application migration process may take several months to complete. During this time, a Layer 2 and Layer 3 interconnect between the Brownfield environment and ACI infrastructure remains in place to allow communication to occur until the migration is complete.

The following sections discuss those required steps in detail, focusing on specific migration use cases.

Note: This design guide is the companion document for the “Migrating Existing Networks to Cisco ACI” document.

Connectivity With VLAN to EPG Static Mappings

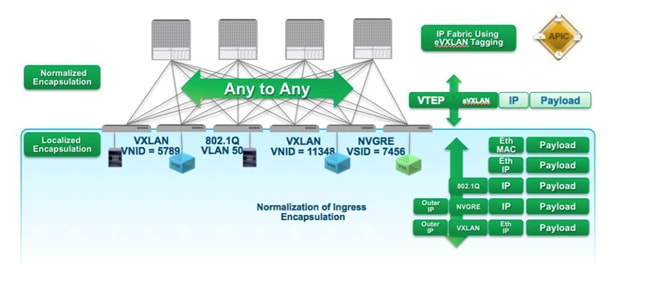

In ACI, VLANs do not exist inside the fabric; they are only defined on the edge ports connecting the virtual or physical endpoints. This means that the VLAN tags are localized on a per-interface basis. This method allows the possibility of establishing intra-IP subnet communication between devices, which are a part of segments identified by different 802.1q encapsulation tags (different VLAN numbers), or even another type of tag altogether, such as VXLAN or NVGRE encapsulation.

The following diagram shows the ACI normalization of ingress encapsulation, which demonstrates the fabric normalization of the port encapsulation.

Figure 13: Fabric Normalization of Port Encapsulation

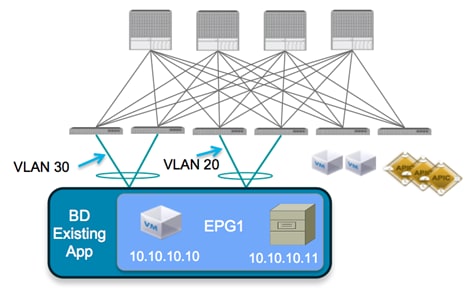

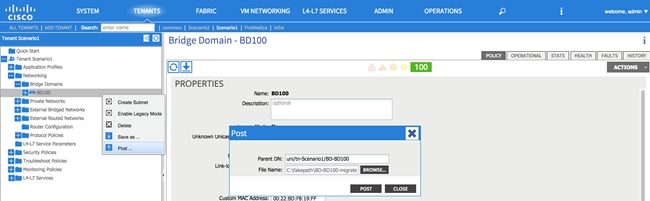

The traditional concept of a VLAN as a Layer 2 broadcast domain is replaced in the ACI fabric with a BD. The BD represents the Layer 2 broadcast domain where endpoints (either virtual or physical) are connected.

To better demonstrate this concept, consider the following diagram, which illustrates that it is possible to associate different VLAN tags (VLAN 20 and 30, in this example), which are configured on different edge ports to the same IP broadcast domain. The result is that endpoint 10.10.10.10 will still be able to communicate with endpoint 10.10.10.11, even though they are attached to different local VLANs.

Figure 14: ACI Local VLAN Significance
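The local significance of VLAN tags can be modeled in a few lines. This is a conceptual sketch only: the dictionaries stand in for the fabric's normalization of (leaf, port, VLAN) to an EPG and then to a BD, and the leaf, port, EPG, and BD names are hypothetical. The two endpoint addresses and VLAN tags are the ones used in the example above.

```python
# (leaf, port, vlan) -> EPG: the VLAN tag only has meaning on that edge port.
encap_to_epg = {
    ("leaf101", "eth1/1", 20): "EPG1",
    ("leaf102", "eth1/1", 30): "EPG1",
}
# EPG -> bridge domain, the Layer 2 broadcast domain inside the fabric.
epg_to_bd = {"EPG1": "BD1"}

# Where each endpoint attaches.
endpoints = {
    "10.10.10.10": ("leaf101", "eth1/1", 20),
    "10.10.10.11": ("leaf102", "eth1/1", 30),
}


def bridge_domain(ip):
    """Resolve an endpoint to its bridge domain via its edge-port encapsulation."""
    return epg_to_bd[encap_to_epg[endpoints[ip]]]


def same_broadcast_domain(ip_a, ip_b):
    """True if both endpoints land in the same BD, whatever their VLAN tags."""
    return bridge_domain(ip_a) == bridge_domain(ip_b)


print(same_broadcast_domain("10.10.10.10", "10.10.10.11"))
```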

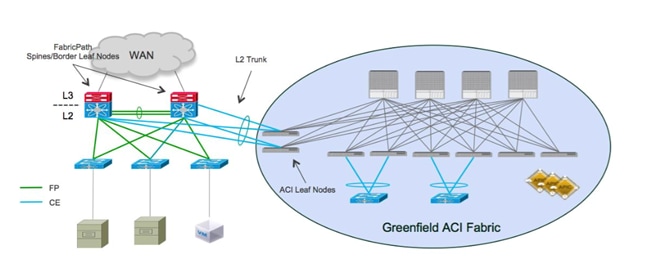

To connect the Brownfield network and the ACI fabric at Layer 2 and perform the workload migration, complete the following steps:

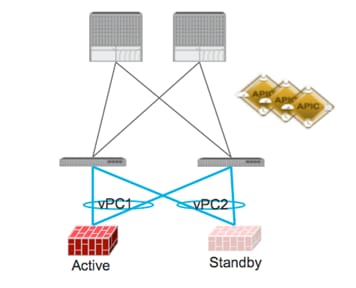

1. Establish a double-sided vPC connection between a pair of ACI border leaf nodes and the two devices representing the boundary between Layer 2/Layer 3 in the Brownfield data center network. Depending on the Layer 2 technology used in the Brownfield network (i.e., STP, vPC or FabricPath), this Layer 2/Layer 3 boundary may be found at the aggregation layer or on a dedicated pair of devices called border leaf nodes. The following diagram shows the Brownfield network connected to the ACI fabric.

The use of dedicated border leaf switches for Layer 2/Layer 3 connectivity is recommended, but not required. It is worth noting that at the time of writing this document, up to 12K endpoints can be supported on the Brownfield network if they need to communicate at Layer 2 with the ACI fabric. This is because of the size of the hardware table available on a given pair of border leaf nodes to learn the MAC and IP addresses of those endpoints (on a Layer 2 interface). In scenarios where it is required to support a higher number of external endpoints, it is possible to deploy different pairs of border leaf nodes and spread among them the Layer 2 VLANs connecting to the Brownfield network domain. Always ensure that you are within the verified scalability numbers for endpoints, especially when attaching Brownfield environments to ACI. For more information, refer to the following document: http://www.cisco.com/c/en/us/td/docs/switches/datacenter/aci/apic/sw/1-x/verified-scalability/b_Verified_Scalability_Release_1_1_2h.html

Figure 15: Layer 2 Interconnection between the FP and ACI Networks

In this example, the Brownfield network is represented by a FabricPath implementation. The FabricPath spine layer not only serves as the Layer 2/Layer 3 boundary for the environment, but will also serve as the connection point to the ACI environment. A double-sided vPC+ connection to a pair of ACI border leaf nodes allows the extension of Layer 2 connectivity between the two network infrastructures without introducing any Layer 2 loop in the topology. This allows all vPC links to actively forward traffic on all paths.

Note: This design would look identical if the Brownfield network was built with STP or vPC as opposed to FabricPath.

2. Associate endpoints connected to VLANs in the Brownfield network to Endpoint Groups (EPGs), which are defined inside of the ACI fabric. As previously mentioned, the recommended approach discussed in this document consists of statically mapping VLAN tags to EPGs on the ACI leaf nodes. When doing so, there are a couple of scenarios to explore, which are discussed in detail in the following two sections.
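Step 1 notes that a pair of border leaf nodes could learn up to 12K external endpoints at the time of writing, and that VLANs can be spread across several pairs when more are needed. The sketch below shows one way to plan that spread. The first-fit-decreasing heuristic is an illustrative choice, not a method prescribed by this guide, and the VLAN IDs and endpoint counts are hypothetical; always check the verified scalability figures for your release.

```python
def spread_vlans(endpoints_per_vlan, limit=12000):
    """Assign VLANs to border leaf pairs so no pair exceeds the endpoint limit.

    Uses first-fit decreasing: largest VLANs are placed first, each into the
    first pair that still has room. Returns a list of
    {"vlans": [...], "endpoints": total} dicts, one per border leaf pair.
    """
    pairs = []
    ordered = sorted(endpoints_per_vlan.items(), key=lambda kv: kv[1], reverse=True)
    for vlan, count in ordered:
        if count > limit:
            raise ValueError(f"VLAN {vlan} alone exceeds the limit of {limit}")
        for pair in pairs:
            if pair["endpoints"] + count <= limit:
                pair["vlans"].append(vlan)
                pair["endpoints"] += count
                break
        else:
            # No existing pair has room: plan an additional border leaf pair.
            pairs.append({"vlans": [vlan], "endpoints": count})
    return pairs


# Hypothetical Brownfield VLANs and their endpoint counts.
plan = spread_vlans({100: 7000, 200: 6000, 300: 4000, 400: 3000})
for index, pair in enumerate(plan, start=1):
    print(f"border leaf pair {index}: VLANs {pair['vlans']} ({pair['endpoints']} endpoints)")
```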

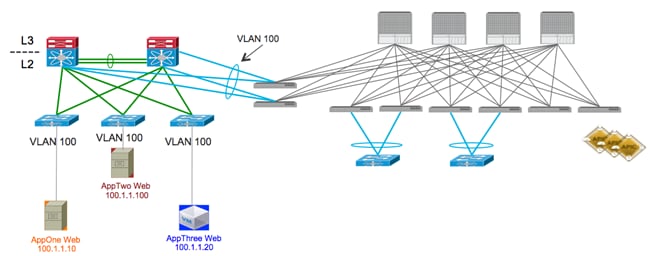

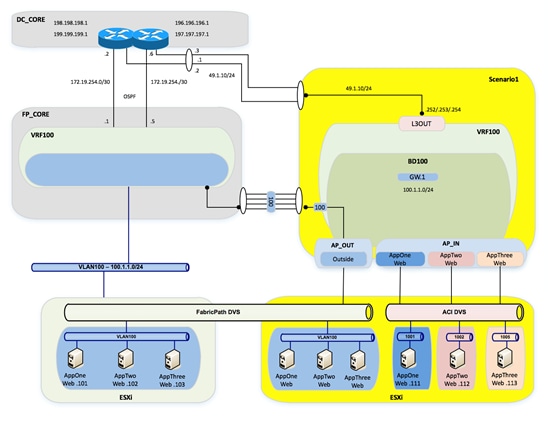

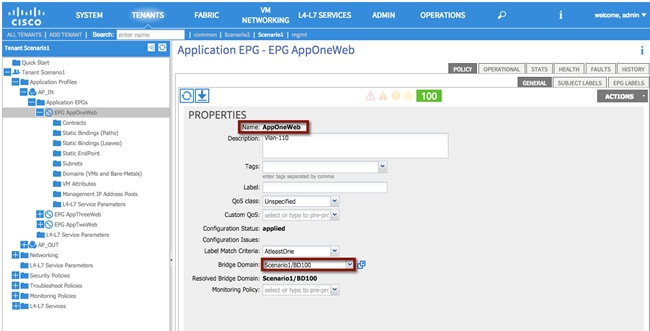

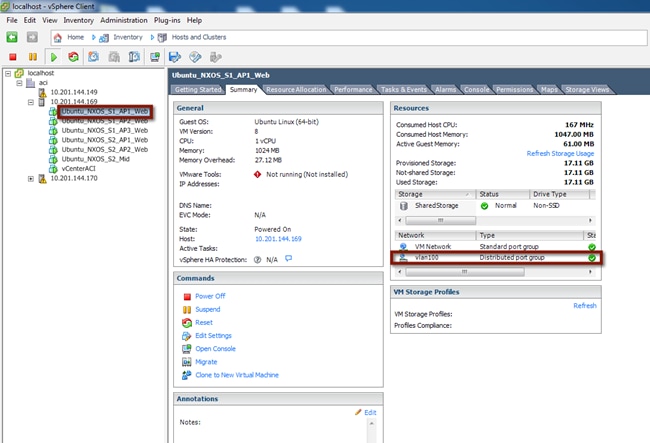

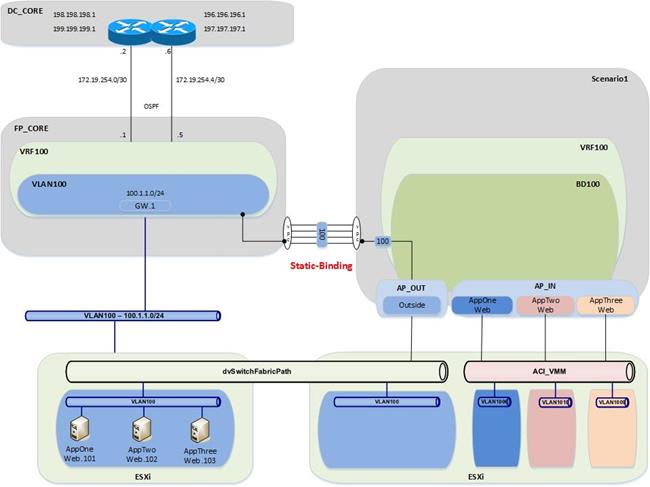

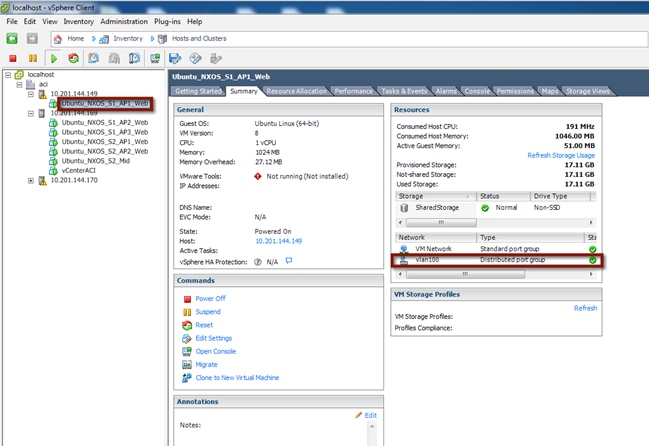

Scenario 1: Mapping a VLAN to Multiple EPGs

In this scenario, the customer has a single VLAN deployed in the FabricPath network, which supports multiple applications. Due to compliance regulations, the customer intends to segregate the application workloads on VLAN 100 based on application type, but cannot change IP addresses on any of their application servers.

The goal is to migrate the application workloads from the FabricPath environment to the new ACI fabric, where you can take advantage of the security functionalities offered by ACI to logically isolate the application workloads into groups of endpoints, based on application type.

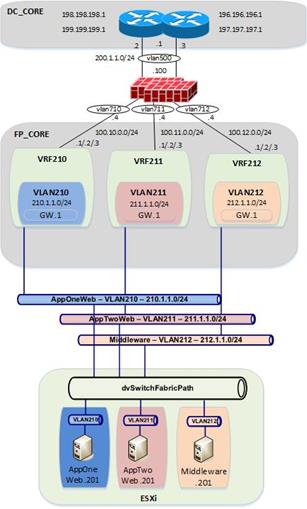

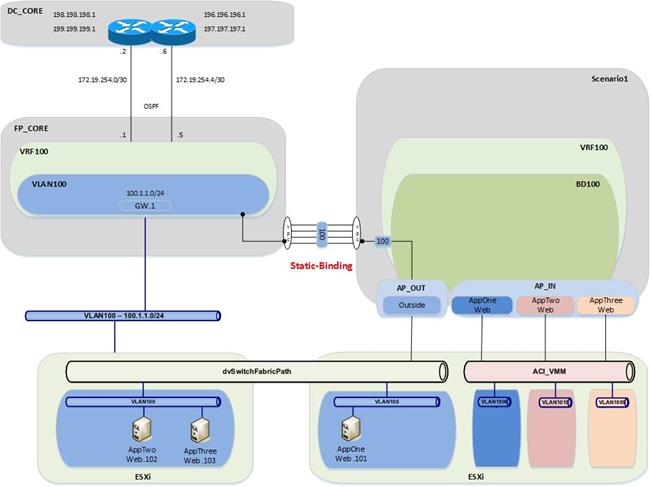

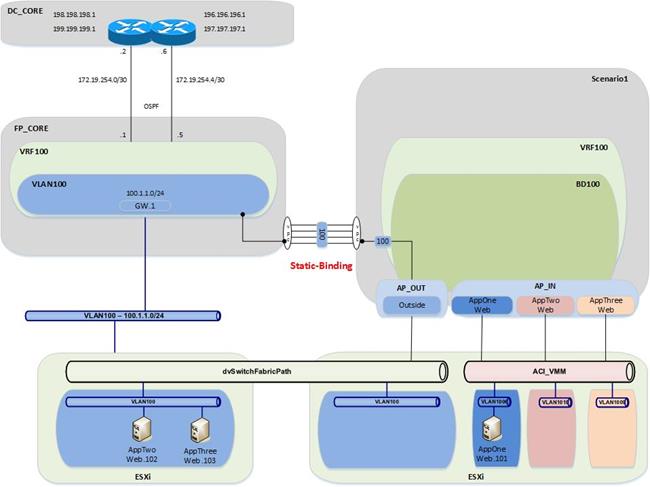

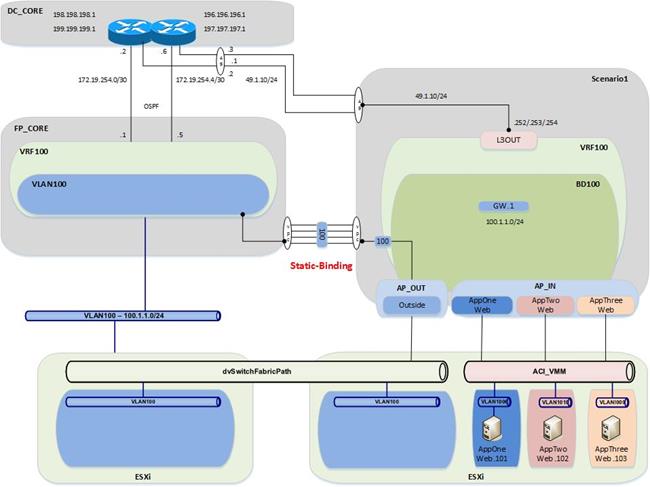

Figure 16: FabricPath to ACI Migration (Scenario 1)

The figure above shows the current application deployment for VLAN 100. All application servers reside in a single FabricPath VLAN.

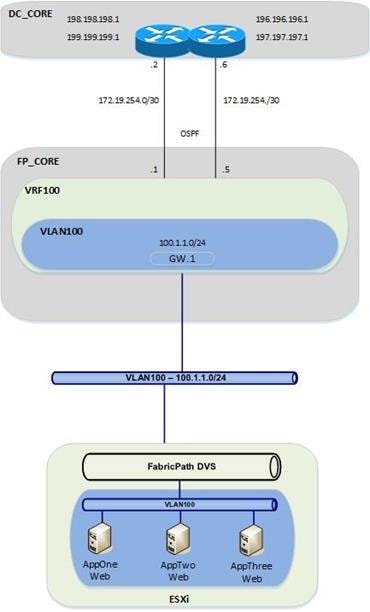

As shown in the following diagram, the current environment is a FabricPath datacenter, with Cisco Nexus 7000 devices serving as the aggregation/spine devices and providing Layer 2/Layer 3 services. The access layer devices are Cisco Nexus 5648 switches (Layer 2 only). For Scenario 1, there is one routing table configured in the FabricPath environment (which is the default routing table) and one VLAN configured (VLAN 100).

Figure 17: Current DC Based on FabricPath

From the FabricPath Core switches, OSPF is running to the Data center core routers (DCCORE01/02).

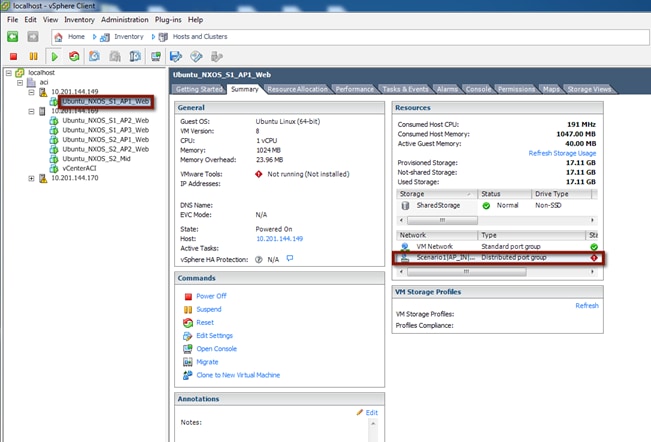

For the Compute layer, there are two ESXi hosts being managed by vSphere 5.5, with a traditional DVS. For Scenario 1, all three of the VM hosts reside in portgroup VLAN100 on the VMware-managed DVS (Distributed Virtual Switch). Because all three VM hosts reside in the same VLAN, they have full communication to each other, even though they are supporting different applications.

Requirements for New Application Architecture

1. Servers in VLAN 100 are divided into three logical groupings, based upon the application they support. The application grouping is as follows:

a. AppOneWeb

b. AppTwoWeb

c. AppThreeWeb

2. Workloads belonging to the same application can communicate with other servers that support the same application.

3. Servers in one application group cannot communicate with other servers supporting a different application.

4. Communication must be maintained between servers in the legacy FabricPath DC infrastructure and the new ACI DC infrastructure during migration.

5. IP addressing must be maintained unaltered for all servers.

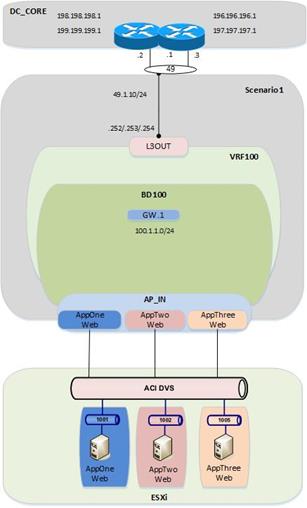

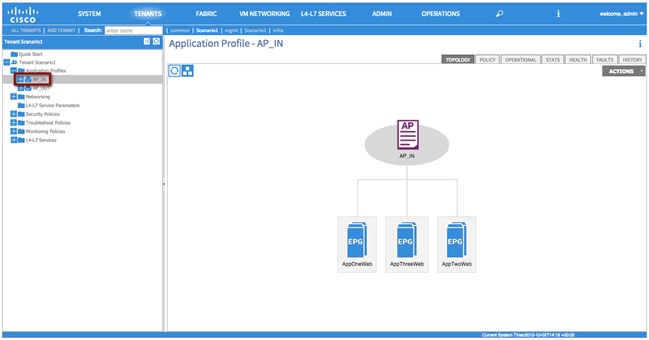

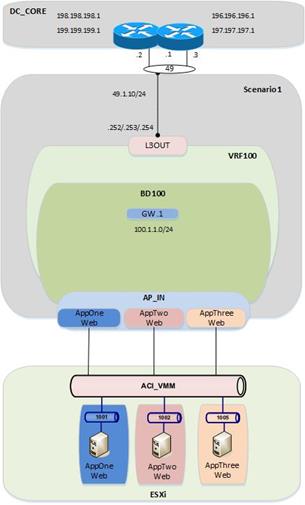

The following diagram shows the desired end state of the ACI fabric. VLAN 100 is subdivided into three endpoint groups: AppOneWeb, AppTwoWeb, and AppThreeWeb, respectively. While these three “groups” will share a common IP subnet, inter-EPG communication is restricted because of the default “white listing” type of policy implemented inside the ACI fabric.

Additionally, you will create a fourth EPG (not shown in the following diagram), called “Outside”, which will be used during the migration between the FabricPath environment and the ACI environment. From an ACI fabric perspective, all the endpoints still connected to the Brownfield network are grouped as part of this outside EPG. Finally, an L3Out connection is utilized to interconnect the ACI fabric to the external Layer 3 network domain.

Figure 18: ACI Fabric at the End of the Implementation

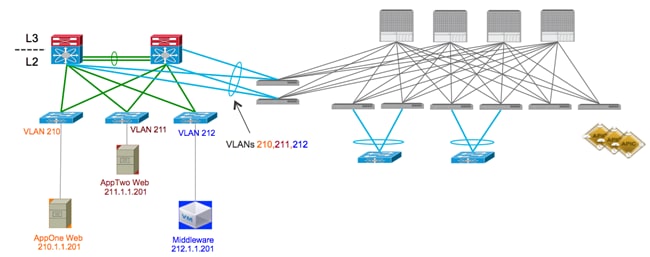

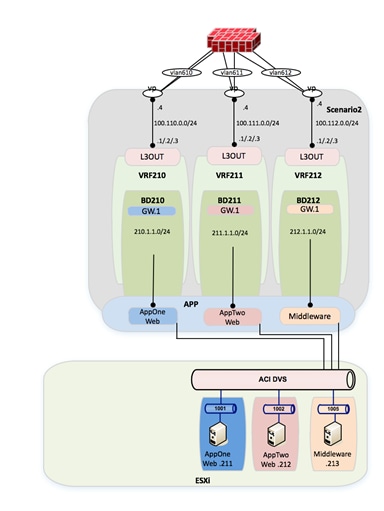

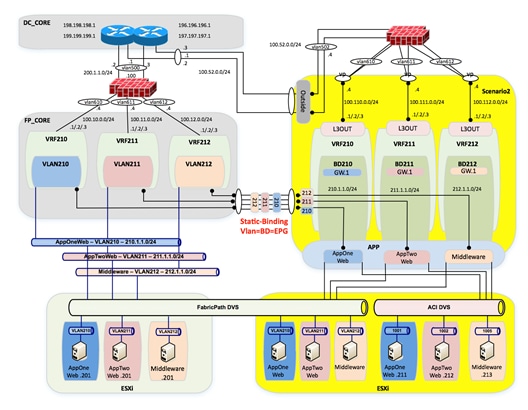

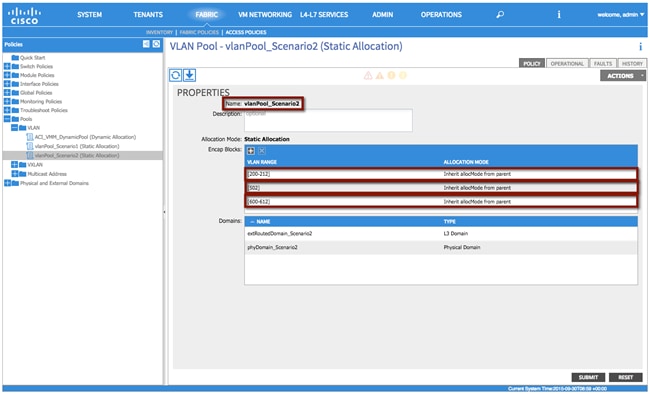

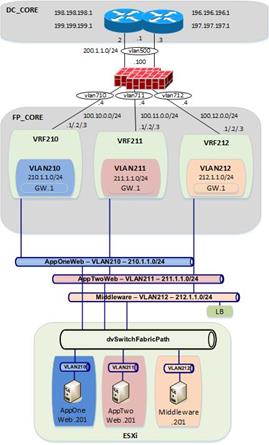

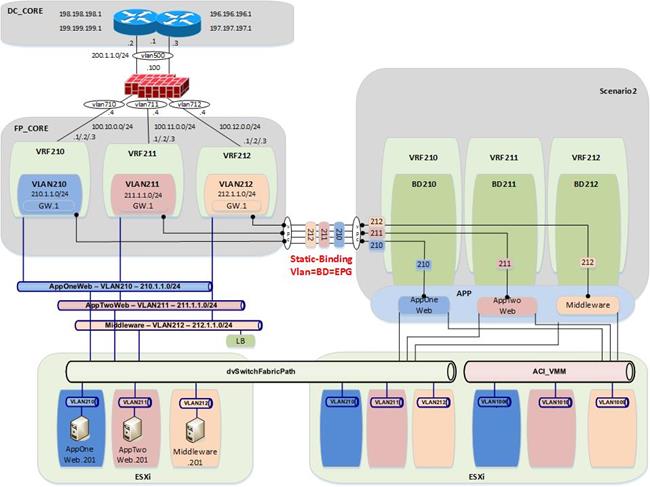

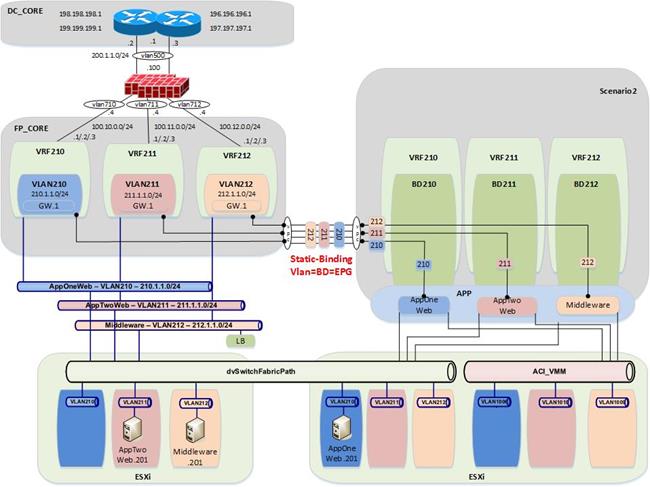

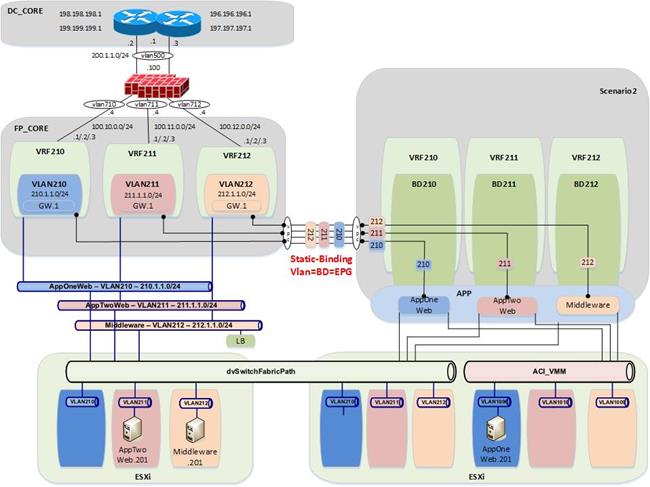

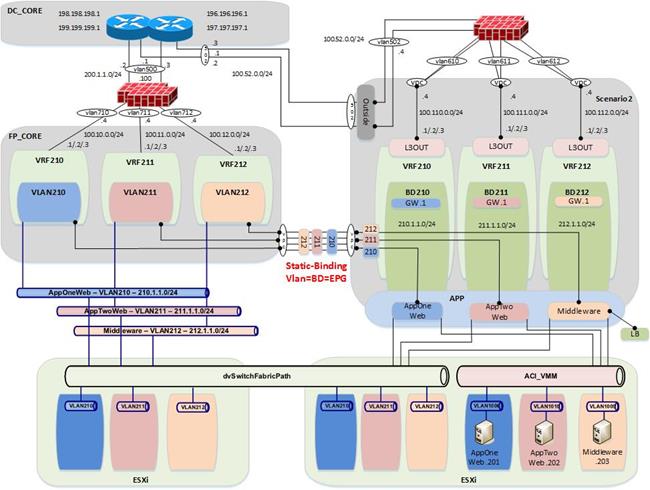

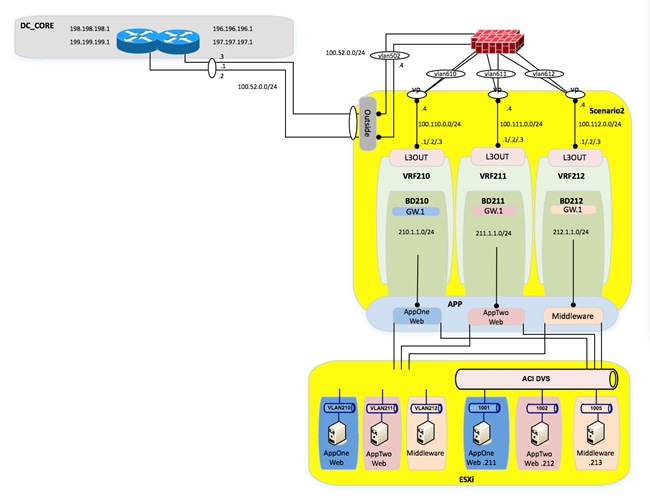

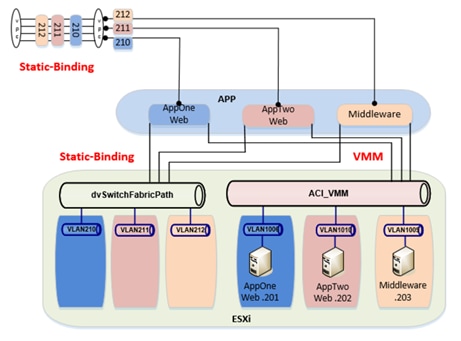
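The whitelist behavior described for Scenario 1 can be illustrated with a small model. The following Python sketch is not ACI code: the endpoint names and IP addresses are hypothetical, a contract is reduced to an unordered pair of EPG names, and the contract between AppOneWeb and the Outside EPG is an assumption made only for illustration. It simply shows that endpoints sharing one subnet can still be isolated by EPG membership.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str  # hypothetical VM name
    ip: str    # hypothetical address; all endpoints share one subnet
    epg: str   # EPG the endpoint is classified into


def is_allowed(src: Endpoint, dst: Endpoint, contracts: set) -> bool:
    """Whitelist model: intra-EPG traffic is permitted, inter-EPG traffic
    is permitted only if a contract exists between the two EPGs."""
    if src.epg == dst.epg:
        return True
    return frozenset((src.epg, dst.epg)) in contracts


# Hypothetical endpoints, all in the same (documentation) subnet.
web1 = Endpoint("vm-app1-a", "192.0.2.11", "AppOneWeb")
web1b = Endpoint("vm-app1-b", "192.0.2.12", "AppOneWeb")
web2 = Endpoint("vm-app2-a", "192.0.2.21", "AppTwoWeb")
legacy = Endpoint("vm-app1-fp", "192.0.2.13", "Outside")  # still on FabricPath

no_contracts = set()
# Assumed contract that keeps migrated and not-yet-migrated AppOne servers talking.
migration_contracts = {frozenset(("AppOneWeb", "Outside"))}

print(is_allowed(web1, web1b, no_contracts))         # same EPG
print(is_allowed(web1, web2, no_contracts))          # different EPGs, no contract
print(is_allowed(web1, legacy, migration_contracts)) # contract in place
```

In this model, requirement 3 (no communication between application groups) is the default outcome, while requirement 4 (connectivity during migration) has to be granted explicitly.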

Scenario 2: Mapping VLANs to EPGs (1:1)

In this scenario, the customer has a classic, multitiered application (Web/App/DB), in which each tier of the application is located in its own dedicated VLAN. The customer is performing a network-centric migration of their VLAN into ACI; this implies that each Brownfield VLAN is related to an EPG and a BD in the ACI fabric (VLAN = EPG = BD). By performing static mappings of the VLANs to EPGs, the customer ensures that their workloads, which are connected to the Brownfield FabricPath network, remain a part of the same Layer 2 broadcast domain with the workloads inside of the ACI fabric.

Figure 19: FabricPath to ACI Migration (Scenario 2)

As shown in Figure 19, servers are already deployed into three logical groupings in the FabricPath network, based upon the application (or application tier) they support. The application grouping is as follows:

a. AppOneWeb workloads are part of FP VLAN 210.

b. AppTwoWeb workloads are part of FP VLAN 211.

c. AppThreeWeb workloads are part of FP VLAN 212.

Figure 20: Current DC Based on FabricPath (with FWs)


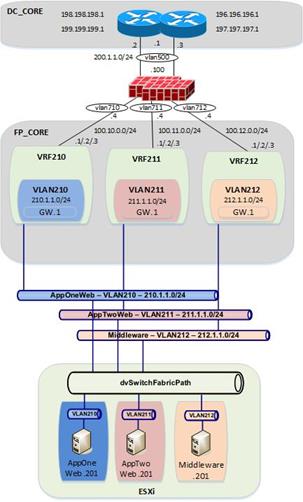

Those three VLANs are then mapped to different VRF instances (VRF210, VRF211, and VRF212, respectively) so that logical isolation between tenants is also maintained across the Layer 3 domain.

In this specific scenario, a pair of active/standby firewalls has also been added at the perimeter of the FabricPath network. Each tenant (VRF) connects to a separate FW interface, so that security policies can be enforced to control inter-tenant communication and north-to-south traffic flows between each tenant and the DC Layer 3 core.

For each VRF, a static default route is configured on the FabricPath core switches, pointing to the ASA firewall. The ASA firewall has specific static routes configured to route between FabricPath VRFs, as well as routes to get to devices outside of the data center by routing to the data center core routers (DCCORE01/02). This means that all communication between different VMs has to flow up through the FabricPath environment and out to the firewall before it can talk with another VM of a different VRF.

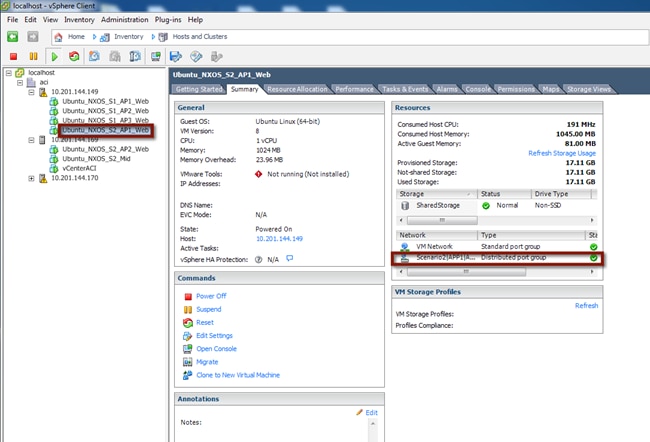

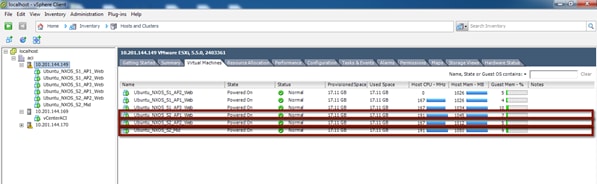

For the compute layer, there are two ESXi hosts being managed by vSphere 5.5, with a traditional DVS. For Scenario 2, all three of the VM hosts reside in different port groups on the VMware-managed DVS (Distributed Virtual Switch).

Requirements for New Application Architecture

1. Use network-centric deployment mode for ACI (VLAN = EPG = BD).

2. Servers of the same security zone (i.e., VRF) can communicate freely.

3. Server communication between different security zones (i.e., different VRFs) must pass through a stateful firewall for inspection.

4. Communication must be maintained for servers in the legacy FabricPath DC infrastructure and the new ACI DC infrastructure during migration.

5. IP addressing must be maintained for all servers.
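Requirements 2 and 3 amount to a simple decision per flow. The following Python sketch, which uses only the VLAN-to-VRF mapping given for Scenario 2, shows that decision; the function name is hypothetical and the sketch says nothing about how the firewall itself is configured.

```python
# VLAN-to-VRF mapping taken from the Scenario 2 description.
VLAN_TO_VRF = {210: "VRF210", 211: "VRF211", 212: "VRF212"}


def needs_firewall(src_vlan: int, dst_vlan: int) -> bool:
    """Return True when the two VLANs belong to different security zones (VRFs),
    meaning the flow must be inspected by the stateful firewall."""
    return VLAN_TO_VRF[src_vlan] != VLAN_TO_VRF[dst_vlan]


for src, dst in ((210, 210), (210, 211), (212, 211)):
    verdict = "via firewall" if needs_firewall(src, dst) else "direct"
    print(f"VLAN {src} -> VLAN {dst}: {verdict}")
```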

Figure 21: Current DC Based on FabricPath

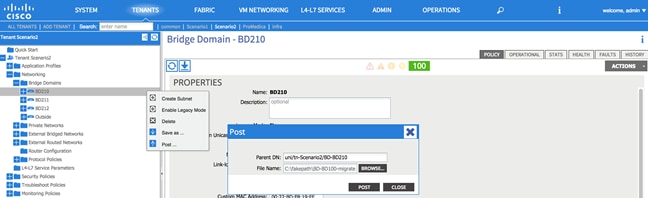

As shown in the following diagram, the end state for the ACI deployment will closely mimic the FabricPath environment. The ACI fabric will have one tenant, three VRFs, and three BD/EPGs that map in a 1:1 fashion to VLANs defined in the FabricPath environment.

Figure 22: ACI Fabric at the End of the Implementation

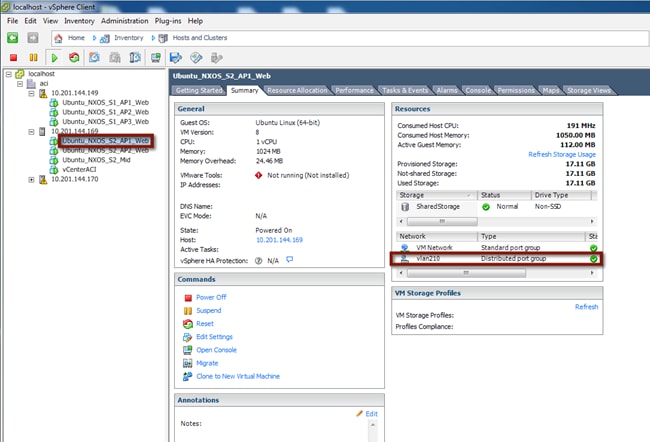

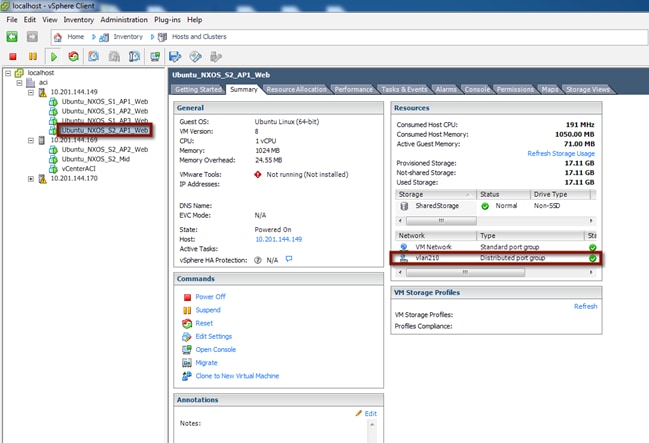
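The network-centric end state (one tenant, three VRFs, and one BD/EPG per Brownfield VLAN) can be written down as a small plan. In the following Python sketch the VRF names follow the FabricPath VRF names, while the tenant, BD, and EPG names are hypothetical placeholders rather than the names used in the validated design.

```python
def network_centric_plan(vlans, tenant="MigrationTenant"):
    """Build one VRF, one BD and one EPG per Brownfield VLAN (VLAN = EPG = BD).

    The tenant, BD and EPG naming patterns are illustrative assumptions.
    """
    return [
        {
            "tenant": tenant,
            "vlan": vlan,
            "vrf": f"VRF{vlan}",
            "bd": f"BD_VLAN{vlan}",
            "epg": f"EPG_VLAN{vlan}",
        }
        for vlan in vlans
    ]


plan = network_centric_plan([210, 211, 212])
for row in plan:
    print(row)
```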

From a compute perspective, the end goal is to move all of the VMs from the vCenter-managed DVS to an ACI-managed DVS. The VMM integration with the ACI-managed DVS allows for the automatic configuration of EPG-based port groups. VMware administrators can then manage the VMNIC settings to control endpoint placement within the ACI fabric.

Infrastructure Deployment Considerations

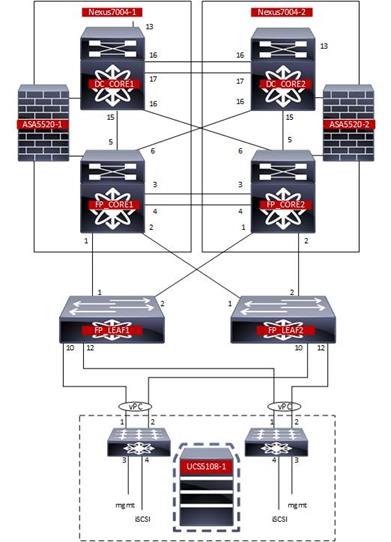

FabricPath-Enabled Data Center

The FabricPath-enabled data center represents the Brownfield network infrastructure and consists of a traditional FabricPath deployment with a pair of Cisco Nexus 7000 and a pair of Cisco Nexus 5000. The pair of Cisco Nexus 7000 are deployed with a VDC infrastructure to allow them to provide a dual FabricPath core as well as a dual Layer 3 DC core.

Note: For more information about FabricPath and related deployment best practices, refer to the following documents:

VDC (1) and VDC (2) provide DC core functionality and connect to VDC (3) and VDC (4) representing the FabricPath core.

Figure 23: FabricPath Data Center

Data Center Core

The Data Center core consists of a VDC within each of the Cisco Nexus 7000.

Figure 24: Cisco Nexus 7000 VDCs

Nexus7K-01

N7K1# show vdc

Switchwide mode is m1 f1 m1xl f2 m2xl f2e f3

vdc_id  vdc_name   state   mac                type      lc
------  --------   -----   ----------         --------- ------
1       N7K1       active  38:ed:18:a2:f1:41  Admin     None
2       FP_Core01  active  38:ed:18:a2:f1:42  Ethernet  f2e
3       DC_CORE1   active  38:ed:18:a2:f1:43  Ethernet  f2e

N7K1#

Nexus7K-02

N7K2# show vdc

Switchwide mode is m1 f1 m1xl f2 m2xl f2e f3

vdc_id  vdc_name   state   mac                type      lc
------  --------   -----   ----------         --------- ------
1       N7K2       active  38:ed:18:a2:f3:c1  Admin     None
2       FP_Core02  active  38:ed:18:a2:f3:c2  Ethernet  f2e
3       DC_CORE2   active  38:ed:18:a2:f3:c3  Ethernet  f2e

N7K2#
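When auditing the Brownfield devices before a migration, it can be convenient to turn the `show vdc` output into structured records. The following Python sketch parses the N7K1 output shown above; the regular expression is an assumption based on this one sample and may need adjusting for other NX-OS releases or for VDCs with several line-card types.

```python
import re

SAMPLE = """\
N7K1# show vdc

Switchwide mode is m1 f1 m1xl f2 m2xl f2e f3

vdc_id  vdc_name   state   mac                type      lc
------  --------   -----   ----------         --------- ------
1       N7K1       active  38:ed:18:a2:f1:41  Admin     None
2       FP_Core01  active  38:ed:18:a2:f1:42  Ethernet  f2e
3       DC_CORE1   active  38:ed:18:a2:f1:43  Ethernet  f2e

N7K1#
"""

# One table row: id, name, state, MAC address, type, line-card list.
ROW = re.compile(
    r"^(?P<vdc_id>\d+)\s+(?P<name>\S+)\s+(?P<state>\S+)\s+"
    r"(?P<mac>(?:[0-9a-f]{2}:){5}[0-9a-f]{2})\s+(?P<type>\S+)\s+(?P<lc>.+?)\s*$",
    re.IGNORECASE,
)


def parse_show_vdc(text):
    """Return one dict per VDC row; non-matching lines are ignored."""
    vdcs = []
    for line in text.splitlines():
        match = ROW.match(line.strip())
        if match:
            row = match.groupdict()
            row["vdc_id"] = int(row["vdc_id"])
            vdcs.append(row)
    return vdcs


for vdc in parse_show_vdc(SAMPLE):
    print(vdc["vdc_id"], vdc["name"], vdc["type"], vdc["lc"])
```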

The DC core is attached to the FabricPath core via a full mesh of 10-G point-to-point connections. Routes are exchanged between the DC core and the FabricPath core using OSPF as the routing protocol.

The DC core devices are then interconnected via two 10-G connections and provide the vPC peer-link functionality. This is required since a vPC connection is leveraged to connect to the ACI fabric and establish Layer 3 connectivity.

FabricPath Core

The FabricPath core consists of a VDC within each of the Cisco Nexus 7000.

The FP core devices are connected to the DC core routers via a full mesh of 10-G point-to-point connections. Routes are exchanged between the FP core and the DC core using OSPF.

The FP spines are then interconnected via two 10-G connections that provide the vPC peer-link functionality. The vPCs are then created to connect to the ACI fabric for Layer 2 connectivity.

The FabricPath core VDCs provide the spine functionality and are connected to a pair of Cisco Nexus 5000 leaf switches. The Cisco Nexus 5000 leaf switches are then connected via vPC to the UCS Fabric Interconnects (FIs).

Figure 25: Cisco Nexus 7000 FabricPath Configuration

FP_Core01# sh run fabricpath

!Command: show running-config fabricpath
!Time: Mon Oct 5 07:01:44 2015

version 6.2(12)
feature-set fabricpath

vlan 5,10-212,600,610-612
  mode fabricpath
fabricpath switch-id 201
vpc domain 10
  fabricpath switch-id 200

interface port-channel1
  switchport mode fabricpath

interface Ethernet3/1
  switchport mode fabricpath

interface Ethernet3/2
  switchport mode fabricpath

interface Ethernet3/3
  switchport mode fabricpath

interface Ethernet3/4
  switchport mode fabricpath

interface Ethernet4/1
  switchport mode fabricpath

interface Ethernet4/2
  switchport mode fabricpath

interface Ethernet4/3
  switchport mode fabricpath

interface Ethernet4/4
  switchport mode fabricpath
fabricpath domain default
  spf-interval 50 50 50
  lsp-gen-interval 50 50 50
  root-priority 101

FP_Core01#

FP_Core02# show running-config fabricpath

!Command: show running-config fabricpath
!Time: Mon Oct 5 07:03:27 2015

version 6.2(12)
feature-set fabricpath

vlan 5,10-12,100,210-212,600,610-612
  mode fabricpath
fabricpath switch-id 202
vpc domain 10
  fabricpath switch-id 200

interface port-channel1
  switchport mode fabricpath

interface Ethernet3/1
  switchport mode fabricpath

interface Ethernet3/2
  switchport mode fabricpath

interface Ethernet3/3
  switchport mode fabricpath

interface Ethernet3/4
  switchport mode fabricpath

interface Ethernet4/1
  switchport mode fabricpath

interface Ethernet4/2
  switchport mode fabricpath

interface Ethernet4/3
  switchport mode fabricpath

interface Ethernet4/4
  switchport mode fabricpath
fabricpath domain default
  spf-interval 50 50 50
  lsp-gen-interval 50 50 50
  root-priority 100

FP_Core02#
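The two spines list their FabricPath VLANs with different range notations, so a quick check that both carry the migration VLANs (100 and 210-212) can be useful. The following Python sketch expands the two VLAN strings from the configurations above; the helper name is hypothetical and the check is only an illustration, not part of the validated procedure.

```python
def expand_vlans(spec):
    """Expand an NX-OS style VLAN list such as '5,10-12,100' into a set of ints."""
    vlans = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    return vlans


# VLAN lists copied from the FabricPath configurations of the two spines.
FP_CORE01 = "5,10-212,600,610-612"
FP_CORE02 = "5,10-12,100,210-212,600,610-612"

# VLANs used by Scenario 1 (100) and Scenario 2 (210-212).
MIGRATION_VLANS = {100, 210, 211, 212}

for name, spec in (("FP_Core01", FP_CORE01), ("FP_Core02", FP_CORE02)):
    missing = MIGRATION_VLANS - expand_vlans(spec)
    print(name, "missing migration VLANs:", sorted(missing))
```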

Network Services

The FabricPath core includes a pair of ASA firewalls for inter-VRF routing within Scenario 2. The firewalls are connected to the FP core and to the DC core and rely on static routing for reachability.

Figure 26: FabricPath Data Center Services

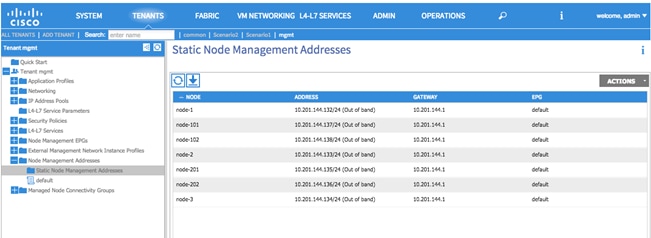

Management

Infrastructure Management

Out-of-band (OOB) management access is used for all devices within the validated topology. This includes the ACI Spine/Leaf switches, the APIC controller cluster, the Cisco Nexus 7000 switches and Cisco Nexus 5600 switches used for FabricPath, the ASA firewalls and the UCS chassis.

Note: Although OOB management was used for the purpose of this migration, in-band management is also a valid design option.

To configure the node management address, log in to the APIC GUI with administrator privileges and follow the path below:

Tenant → [mgmt] → Node Management Addresses

Figure 27: Management - ACI Fabric OOB

Virtual Environment

The virtual environment represents the topology and configuration required to support the distribution of workload in the FabricPath and ACI environments. For the migration, the virtual environment within the FabricPath domain remains constant while a second virtual environment is deployed to eventually support the workload within the ACI fabric.

Note: Depending on the use case and the requirements, a second virtual environment in the ACI fabric may not be needed. The second virtual environment used in the migration presented in this document is based on the assumption that the new data center fabric will also deploy new network and compute devices that need to be connected to an ACI-managed virtual environment.

UCS

The configuration described below pertains to both the existing FabricPath and the new ACI virtual environments. For each network domain, the connected virtual environment consists of a UCSB-Mini with integrated fabric interconnects. Each of the fabric interconnects has a 10-G connection to a pair of FabricPath or ACI leaf nodes. A port channel on each of the fabric interconnects is connected to a vPC on the FabricPath/ACI domains.

Note: Depending on the use case and requirements, there is no ACI requirement for using a Cisco UCSB compute node. Based on the current environment, the migration discussed throughout the document is capable of supporting other compute nodes.

Compute Resources FabricPath Attached

Data vNICs

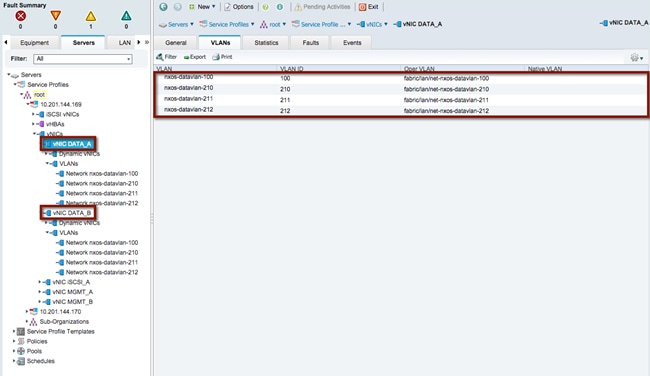

vNIC DATA_A and vNIC DATA_B have been defined and extended to the ESXi host deployed on the FabricPath-attached compute node. The allowed VLANs include 100, 210, 211 and 212. VLAN 100 as defined in the FabricPath domain supports the VMs associated with Scenario 1 and VLANs 210-212 support the VMs associated with Scenario 2. The VLAN range will be used to create the DVS portgroup mapping for the VM NIC connectivity.

Refer to the following diagram for the vNIC and VLAN usage within the FabricPath-attached UCS chassis for Scenario 1 and Scenario 2.

Figure 28: FabricPath Compute Node Data vNIC

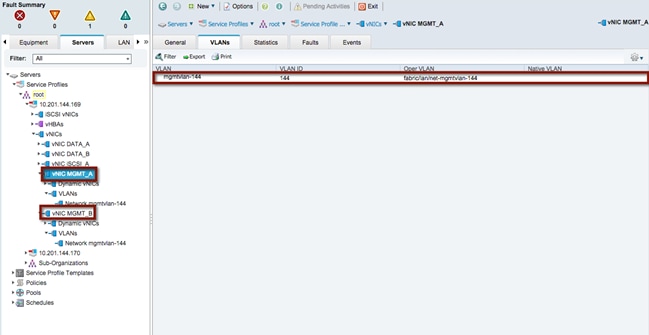

Management vNICs

vNIC MGMT_A and vNIC MGMT_B have been defined and extended to the ESXi hosts deployed on the FabricPath-attached compute node. The only allowed VLAN is VLAN 144, which is defined in the out-of-band (OOB) management infrastructure located within the test environment.

Refer to the following diagram for the vNIC and VLAN usage within the FabricPath-attached UCS chassis associated with the OOB management access.

Figure 29: FabricPath Compute Node Management vNIC

Network Control Policy

In support of the connectivity between the FabricPath domain and the FI, the UCS LAN network control policy is set for CDP Enabled. This allows the FI and the FabricPath network to exchange CDP neighborship messages.

Compute Resources ACI Attached

The compute resources that are connected to the ACI fabric leverage the same vNICs (data and management) previously presented for the compute nodes connected to the FabricPath network. Another pair of Data vNICs must be defined, since the goal is to connect those ESXi hosts to both a vCenter-managed DVS and an ACI-managed DVS. This is discussed in more detail in the “VMware” section.

Data vNICs

As with the FabricPath-attached compute nodes, vNIC DATA_A and vNIC DATA_B have been defined and extended to the ESXi hosts deployed on the ACI-fabric-attached compute nodes. The allowed VLANs include 100, 210, 211 and 212. VLAN 100 supports the VMs associated with Scenario 1 and VLANs 210-212 support the VMs associated with Scenario 2.

Management vNICs

As with the FabricPath-attached compute nodes, vNIC MGMT_A and vNIC MGMT_B have been defined and extended to the ESXi hosts deployed on the ACI-attached compute nodes. The only allowed VLAN is VLAN 144, which is defined in the out-of-band management infrastructure located within the test bed.

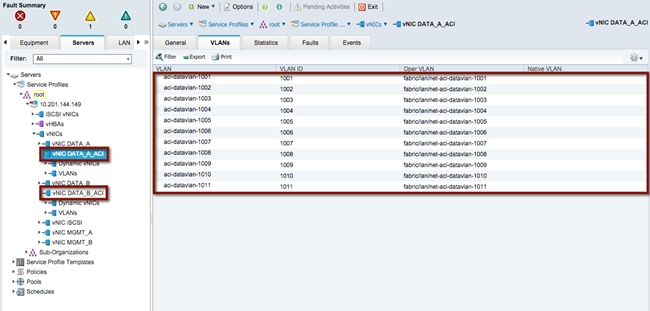

ACI Data vNICs

vNIC DATA_A_ACI and vNIC DATA_B_ACI have been defined and extended to the ESXi host deployed on the ACI-fabric-attached compute node. The allowed VLANs include 1001-1011. VLANs 1001-1010 as defined in the ACI fabric support the VMs associated with Scenario 1 and Scenario 2. The APIC will use a VLAN from the range and associate it to each DVS port group dynamically created as a result of the association of an EPG to the VMM domain. The ACI fabric will hence receive traffic sourced by VMs connected to those port groups tagged with those specific VLAN values.

Figure 30: ACI Fabric Compute Node Data vNIC
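The dynamic association between port groups and VLANs from the 1001-1010 range can be pictured as a simple pool allocator. The following Python sketch is a toy model only: the port-group names are hypothetical, and handing out the lowest free VLAN first is an assumption, not a statement about how the APIC actually selects a VLAN from the pool.

```python
class VlanPool:
    """Toy model of a dynamic VLAN pool shared by DVS port groups."""

    def __init__(self, first, last):
        self._free = list(range(first, last + 1))
        self._by_port_group = {}

    def allocate(self, port_group):
        """Return the VLAN bound to a port group, allocating one if needed."""
        if port_group in self._by_port_group:
            return self._by_port_group[port_group]
        if not self._free:
            raise RuntimeError("VLAN pool exhausted")
        vlan = self._free.pop(0)  # assumption: lowest free VLAN is used first
        self._by_port_group[port_group] = vlan
        return vlan


pool = VlanPool(1001, 1010)
for pg in ("pg-AppOneWeb", "pg-AppTwoWeb", "pg-AppThreeWeb"):  # hypothetical names
    print(pg, "-> VLAN", pool.allocate(pg))
```

The model also makes the sizing constraint visible: a pool of ten VLANs supports at most ten EPG-backed port groups on the DVS.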

Network Control Policy

In support of the connectivity between the ACI domain and the FI, the UCS LAN network control policy is set for CDP enabled. This allows both the FI and the ACI leaf nodes to exchange CDP neighborship messages.

VMware

VMware ESXi is the validated hypervisor deployed on the compute nodes to facilitate the virtual environments.

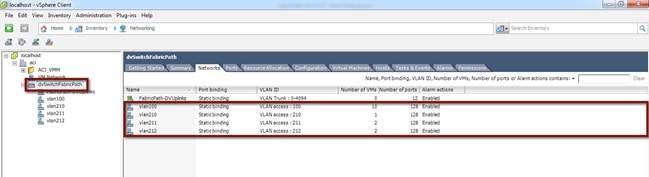

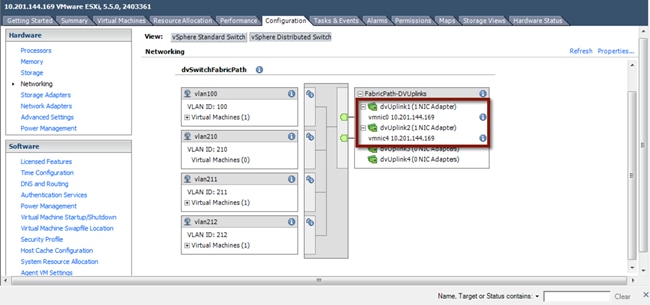

vCenter Managed Distributed Virtual Switch

To support the initial step of the migration strategy, each ESXi host (on both the FP and ACI sides) is connected to a vCenter-managed DVS switch. The DVS switch leverages port groups associated to the FabricPath data VLANs used for Scenario 1 and Scenario 2 respectively: 100, 210, 211 and 212 (see the following diagram).

Figure 31: vCenter Managed DVS

ACI-Managed Distributed Virtual Switch

The final step of the application migration consists of connecting the virtual machines to the ACI-managed DVS, which is dynamically created in vCenter after the creation of the VMM domain (this is discussed in the “ACI VM Networking” section). The DVS uses dynamically created port groups that are associated with ACI internal EPGs and use VLAN tags in the range 1001-1010 (see the following diagram).

Figure 32: ACI-Managed DVS

VMware Uplinks for vCenter-Managed DVS

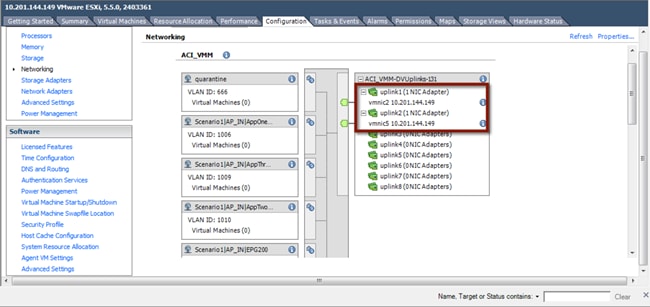

The data vNICs exposed via the UCS configuration are configured as part of the vCenter-managed DVS uplinks, as shown in the following figure.

Figure 33: VMware Uplinks for vCenter-Managed DVS


ESXi hosts connected to both the FabricPath and ACI networks are attached to the vSphere-managed DVS, since the first step of the application migration consists of performing a live vMotion of virtual machines across these two ESXi hosts.

VMware Uplinks for ACI-Managed DVS

The ACI Data vNICs exposed via the UCS configuration are configured as part of the ACI-managed DVS uplinks, as shown in the following diagram.

Figure 34: VMware Uplinks for ACI-Managed DVS

Only the ESXi hosts connected to the ACI fabric have uplinks connected to the ACI-managed DVS.

CDP

Cisco Discovery Protocol (CDP) is enabled within the vCenter to accommodate the neighborship message exchange from the ESXi host to the ACI fabric. The CDP messages from the VM to the FI and from the FI to the ACI leaf switches are required to properly exchange host details for dynamically created port groups.

Note: ACI has the capability to support user-defined port groups and avoid the requirement for CDP support. VM integration with UCS-B Series and ACI requires specific configurations. Refer to the following for more details: http://www.cisco.com/c/en/us/support/docs/cloud-systems-management/application-policy-infrastructure-controller-apic/118965-config-vmm-aci-ucs-00.html

ACI Infrastructure Deployment

Within this section, you will accomplish the following:

1. Bring the APIC controllers online.

2. Initialize the fabric.

3. Configure the fabric resources for network connectivity.

Each step listed above is executed once, and the resulting configuration (including the Fabric Configuration section) is used in both Scenario 1 and Scenario 2.

The Infrastructure Deployment section is the foundation of the ACI fabric configuration. The APIC Controller section provides the details required to bring the APIC cluster online, while the Fabric Initialization section covers the process of bringing the Spine/Leaf topology online and configuring the infrastructure. Once the APIC and the Spine/Leaf topology are accessible, the Fabric Configuration section provides the necessary details for connecting the ACI fabric to the virtual environment, the FabricPath domain, the data center core, and the firewall services layer.

At the Deployment Phase, you are going to configure the ACI fabric and stage it in preparation for integration with the existing FabricPath environment.

Figure 35: Deployment Phase

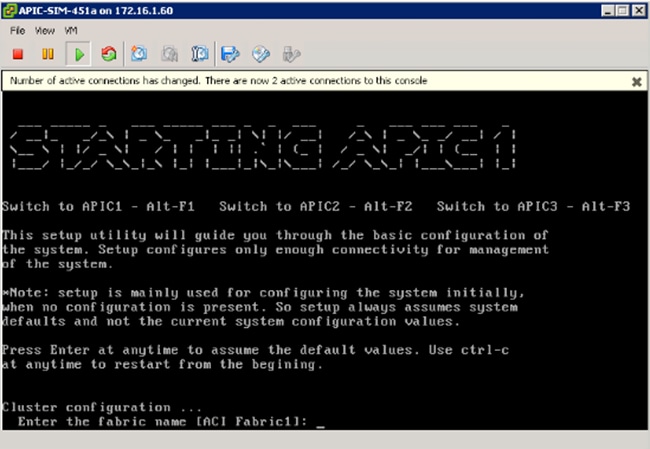

APIC Controllers

APIC controllers are a set of three servers connected at different points in the ACI fabric. Each APIC server connects to a pair of leaf nodes, with 10-G ports, in active/standby configuration. The 1-G management connections are wired to an out-of-band (OOB) switched network for device access.

In the design discussed herein, the three APICs are each physically connected to the same pair of leaf nodes. With KVM access to each controller, the following table provides the configuration parameters required to initialize the controller nodes. APIC 1 through 3 should be configured sequentially with the appropriate controller details.

Note: In production environments, the recommendation is to connect each APIC node to a different pair of ACI leaf nodes.

Table 1: APIC Controller Initialization

| Parameter | ACI Fabric | Notes |
| --- | --- | --- |
| Fabric Name | ACI_Fabric | The fabric name specified must be the same for each controller. |
| Number of Controllers | 3 | Specifies the total number of controllers within the cluster. |
| Controller ID | 1 | Specifies the instance of the controller. |
| Controller Name | apic1/apic2/apic3 | Specifies the individual controller name. |
| TEP Address Pool | 10.0.0.0/16 | Specifies the address range for the VXLAN TEP address pool. NOTE: The minimum TEP address pool is /23. This address range should not overlap with any allocated address space. |
| Infra VLAN ID | 3967 | Specifies the infrastructure VLAN ID that will be extended to the controllers for infrastructure communications. NOTE: 3967 is the recommended Infra VLAN ID because this VLAN is not reserved on any Cisco platform, should it need to be extended outside of the ACI fabric (that is, for extending connectivity to the Cisco AVS virtual switch). |
| Multicast Address Pool | 225.0.0.0/15 | Specifies the multicast address pool for use within the fabric. |
| APIC IP Address/Mask | 10.10.10.10/.11/.12 | Specifies the management IP address and mask for the specified controller. |
| Default Gateway | 10.10.10.1 | Specifies the default gateway for the controller. |
| APIC Password | ******** | Specifies the administrator password for the fabric. |
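Because some of these values (notably the Infra VLAN) cannot be changed after the startup script completes, it can help to sanity-check them beforehand. The following Python sketch applies the constraints stated in the table to the values used in this design. The /24 management mask is an assumption (the table does not state the mask), and the checks are illustrative rather than an exhaustive list of APIC requirements.

```python
import ipaddress

PARAMS = {
    "tep_pool": "10.0.0.0/16",
    "infra_vlan": 3967,
    "multicast_pool": "225.0.0.0/15",
    "apic_ips": ["10.10.10.10", "10.10.10.11", "10.10.10.12"],
    "gateway": "10.10.10.1",
    "mgmt_prefix_len": 24,  # assumption: the mask is not given in the table
}


def validate(params):
    """Return a list of problems found in the initialization parameters."""
    problems = []

    tep = ipaddress.ip_network(params["tep_pool"])
    if tep.prefixlen > 23:
        problems.append("TEP pool is smaller than the /23 minimum")

    mcast = ipaddress.ip_network(params["multicast_pool"])
    if not mcast.is_multicast:
        problems.append("multicast pool is not in the multicast range")

    if not 1 <= params["infra_vlan"] <= 4094:
        problems.append("infra VLAN is outside 1-4094")

    gateway = params["gateway"]
    prefix_len = params["mgmt_prefix_len"]
    mgmt = ipaddress.ip_network(f"{gateway}/{prefix_len}", strict=False)
    if tep.overlaps(mgmt):
        problems.append("TEP pool overlaps the management subnet")

    apic_ips = [ipaddress.ip_address(ip) for ip in params["apic_ips"]]
    if len(set(apic_ips)) != len(apic_ips):
        problems.append("duplicate APIC management addresses")
    for ip in apic_ips:
        if ip not in mgmt:
            problems.append(f"{ip} is not in the gateway subnet {mgmt}")

    return problems


print(validate(PARAMS) or "no problems found")
```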

The initial KVM screen output for the controller is shown in the following diagram. After each input, pressing the Enter key takes you to the next parameter. Following the last configuration parameter, you are given the opportunity to accept the configuration or to start the configuration script over.

Note: The Infra VLAN cannot be changed once the startup script has been completed. Changing the Infra VLAN requires running the startup script again.

Using the parameters in the table above, complete the APIC startup screen for the first APIC, then move to the second and third APIC nodes, respectively.

Figure 36: APIC Controller Initialization

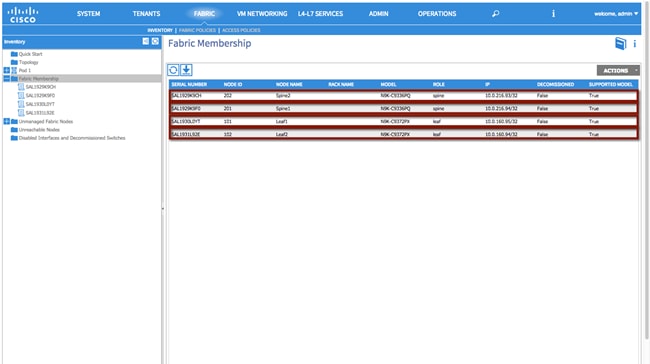

Fabric Initialization

Fabric initialization is the process of registering the nodes of the spine/leaf topology. Each node that is part of the desired infrastructure must be accepted into the topology before it can connect to the rest of the ACI fabric. Once the administrator accepts the node into the fabric and assigns a node ID and name, the APIC provisions the logical connectivity. Each node is identified within the controller by its platform-specific serial number and appears in the APIC-generated topology once the configuration is complete.

To initiate fabric initialization, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Inventory → Fabric Membership

Figure 37: Fabric Initialization

Fabric Configuration

This section contains the fabric configuration parameters for resources used throughout the fabric.

Fabric Policies

Route Reflector Policy

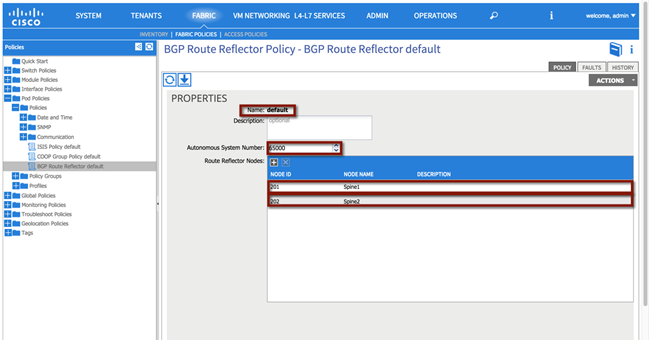

The MP-BGP route reflector policy is used to specify two parameters:

· The AS number assigned to the ACI fabric (65000 in the following example).

· The devices in the ACI fabric deployed as MP-BGP route reflectors (Spine1 and Spine2 in the example).

MP-BGP is the control plane used inside the ACI fabric to distribute to the ACI leaf nodes the external IP prefixes learned on the border leaf nodes via the L3Out connection.

Fabric → Fabric Policies → Pod Policies → Policies → [BGP Route Reflector default]

Figure 38: Route Reflector Policy

Aside from the GUI representation of the required parameters, the ACI API can also be used to provide access to the configuration and management of the infrastructure. The XML format of the above configuration can be applied utilizing multiple techniques. See the following Cisco APIC REST API User Guide for additional details:

Note: The XML formats required to perform the same configuration steps shown on the APIC GUI will be shown throughout the document, starting with the following BGP RR Policy.

XML 1: Route Reflector Policy

<!-- Route Reflector -->

<bgpInstPol descr="" name="default" >

<!-- Route Reflector Nodes -->

<bgpRRP descr="" name="">

<bgpRRNodePEp descr="" id="202"/>

<bgpRRNodePEp descr="" id="201"/>

</bgpRRP>

<!-- Route Reflector ASN -->

<bgpAsP asn="65000" descr="" name=""/>

</bgpInstPol>
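When the same policy has to be produced for several fabrics, the XML can be generated rather than typed by hand. The following Python sketch builds a payload with the same object classes and values as XML 1, using only the standard library. The function name is hypothetical, the empty `descr` and `name` attributes are omitted for brevity, and how the payload is sent to the APIC is deliberately left out.

```python
import xml.etree.ElementTree as ET


def build_route_reflector_policy(asn, spine_node_ids):
    """Return the BGP route reflector policy as an XML string.

    Object class names (bgpInstPol, bgpRRP, bgpRRNodePEp, bgpAsP) are the
    ones shown in XML 1; the inputs are the fabric AS number and the node
    IDs of the spines acting as route reflectors.
    """
    policy = ET.Element("bgpInstPol", {"name": "default"})
    reflectors = ET.SubElement(policy, "bgpRRP")
    for node_id in spine_node_ids:
        ET.SubElement(reflectors, "bgpRRNodePEp", {"id": str(node_id)})
    ET.SubElement(policy, "bgpAsP", {"asn": str(asn)})
    return ET.tostring(policy, encoding="unicode")


payload = build_route_reflector_policy(65000, [201, 202])
print(payload)
```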

Access Policies

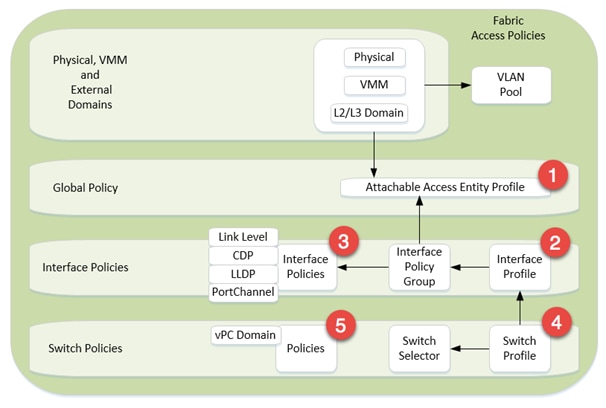

The Access Policy section pertains to the resources required to provide the physical connectivity within the desired topology. The parameters within this section are configured once and referenced in the vPC Scenario (1) and Scenario (2) sections to provide network connectivity.

The following policies are used to create each vPC within the topology:

1. Configure an Attachable Access Entity Profile.

2. Configure an Interface Profile.

3. Configure Interface Policies

a. Configure a Link Level Policy

b. Configure a CDP Policy

c. Configure an LLDP Policy

d. Configure a Port Channel Policy

4. Configure a Switch Profile

5. vPC Policy

The figure below is a representation of the overall fabric resources and how they relate to each other.

Figure 39: Access Policy Model

The vPC policy creates a user-specified vPC domain that identifies the leaf switches participating in a given vPC, while the interface and switch profiles specify the switches and interface policies within which the vPC is created.

Each vPC configuration (Interface Policy Group) references physical attributes such as link/speed and port channel behavior policies. The individual interface policies are defined and referenced for each subsequent vPC configuration.
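The way these objects reference each other can be sketched as a small data model. In the following Python sketch the profile and policy names (swProfile_Leaf1_2, AAEP, 10GigAuto, CDP_ON, LLDP_OFF, LACP_ACTIVE) come from this document, but the policy group name, the port number, the particular combination of policies, and the VLAN set attached to the AEP are hypothetical. The classes are a simplification and do not mirror the APIC object model one to one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Aep:
    name: str
    vlans: frozenset  # VLANs made available through the domains bound to the AEP


@dataclass(frozen=True)
class PolicyGroup:
    name: str
    aep: Aep
    link_level: str
    cdp: str
    lldp: str
    port_channel: str


@dataclass(frozen=True)
class InterfaceSelector:
    ports: frozenset  # port identifiers on each selected leaf
    policy_group: PolicyGroup


@dataclass(frozen=True)
class SwitchProfile:
    name: str
    node_ids: frozenset
    selectors: tuple  # selectors of the associated interface profile


def allowed_vlans(profiles, node_id, port):
    """Follow switch profile -> interface selector -> policy group -> AEP."""
    result = set()
    for profile in profiles:
        if node_id not in profile.node_ids:
            continue
        for selector in profile.selectors:
            if port in selector.ports:
                result |= selector.policy_group.aep.vlans
    return result


aep = Aep("AAEP", frozenset({100, 210, 211, 212}))  # VLAN set is illustrative
group = PolicyGroup("vPC_to_FabricPath", aep,       # hypothetical group name
                    "10GigAuto", "CDP_ON", "LLDP_OFF", "LACP_ACTIVE")
profile = SwitchProfile(
    "swProfile_Leaf1_2",
    frozenset({101, 102}),
    (InterfaceSelector(frozenset({"1/10"}), group),),  # hypothetical port
)

print(sorted(allowed_vlans([profile], 101, "1/10")))
print(sorted(allowed_vlans([profile], 103, "1/10")))
```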

Note: The Quick Start guide is intentionally not used here, in order to demonstrate the object relationships among the configuration parameters. Additionally, while Quick Start menus can change from version to version, the method of configuration shown in this whitepaper will remain valid.

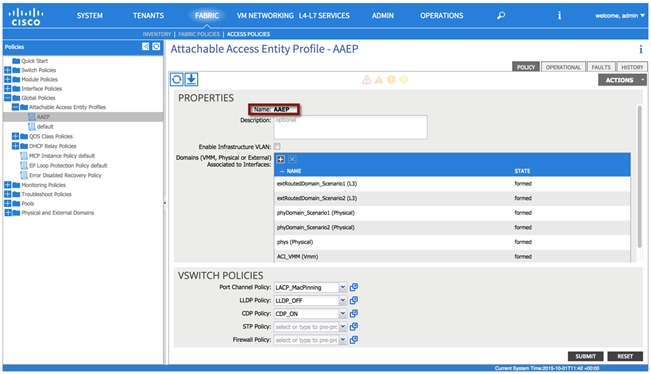

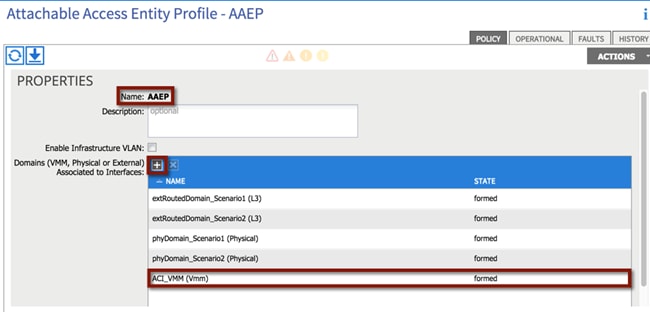

Attachable Access Entity Profile

The Attachable Access Entity Profile (AEP) provides a template for the attachment point between the switch and interface profiles and fabric resources such as the VLAN pool. The AEP can be considered the ‘glue’ between the defined physical, virtual, or Layer 2 / Layer 3 domains and the fabric interfaces (logical or physical); in essence, it specifies which VLAN tags can be used on those interfaces.

Note: Although a single AEP is used to support both scenarios, multiple AEPs can be deployed to provide further granularity in defining connectivity.

To configure an attachable access entity profile, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Global Policies → Attachable Access Entity Profiles → [AAEP]

Figure 40: Attachable Access Entity Profile

XML 2: Attachable Access Entity Profile

<!-- Attachable Access Entity Profile -->

<infraAttEntityP descr="" name="AAEP" >

</infraAttEntityP>

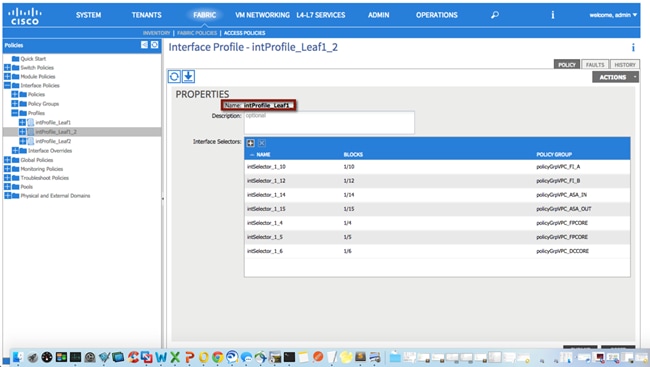

Interface Profile

The Interface Profile enables you to define a set of “interface selectors”, each specifying a block of physical interfaces. Each selector uses the properties identified under the corresponding Interface Policy Group, as shown in the following diagram. The policy names used in the following example reflect the policy type name and the node(s). A single interface profile is used for each switch or switch combination in the topology.

Note: Depending on the use case, multiple combinations of interface profiles can be deployed.

To configure an interface profile, log in to the APIC GUI with administrator privileges and follow the path below:

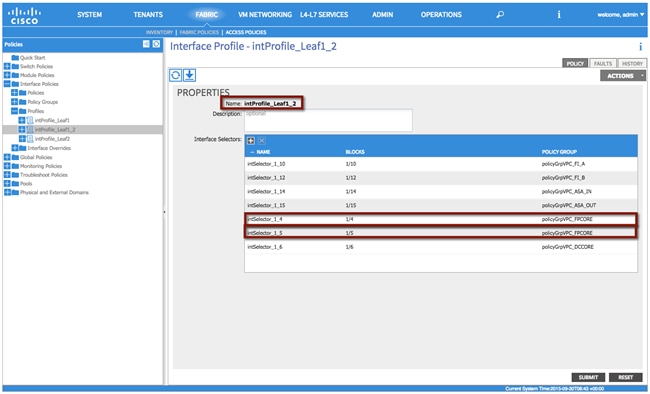

Fabric → Access Policies → Interface Policies → Profiles → [intProfile_Leaf1_2]

Figure 41: Interface Profile

XML 3: Interface Profile

<!-- Interface Profile -->

<infraAccPortP descr="" name="intProfile_Leaf1_2">

</infraAccPortP>

Repeat the process for the remaining interface profiles:

Fabric → Access Policies → Interface Policies → Profiles → [intProfile_Leaf1]

Fabric → Access Policies → Interface Policies → Profiles → [intProfile_Leaf2]

The ACI Interface Policies specify interface properties for fabric connectivity. The interface policies are used for all physical connections and are reflective of the physical device connected.

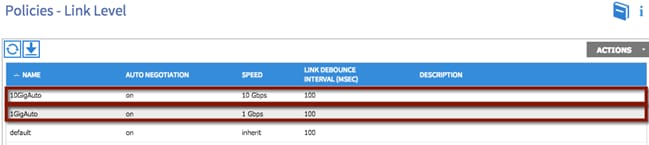

Link Level Policy

The link level policy specifies the Layer 1 parameters of host-facing ports. The policy contains interface-specific details such as auto-negotiation, speed, and debounce interval. The policy names used below reflect the speed and negotiation abilities.

To configure a link level policy, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Policies → Link Level

Figure 42: Interface Policy – Link Level Policy

XML 4: Interface Policy - Link Level Policy

<!-- 1 GIG Auto Policy -->

<fabricHIfPol autoNeg="on" descr="" linkDebounce="100" name="1GigAuto" speed="1G"/>

<!-- 10 GIG Auto Policy -->

<fabricHIfPol autoNeg="on" descr="" linkDebounce="100" name="10GigAuto" speed="10G"/>

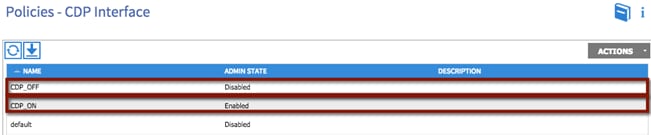

CDP Policy

The CDP interface policy is primarily used to obtain protocol addresses of neighboring devices and discover the platform of those devices. The policy names used below reflect the policy type and admin state.

To configure a CDP policy, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Policies → CDP Interface

Figure 43: Interface Policy – CDP Policy

XML 5: Interface Policy - CDP Policy

<!-- CDP Off Policy -->

<cdpIfPol adminSt="disabled" descr="" name="CDP_OFF" />

<!-- CDP On Policy -->

<cdpIfPol adminSt="enabled" descr="" name="CDP_ON" />

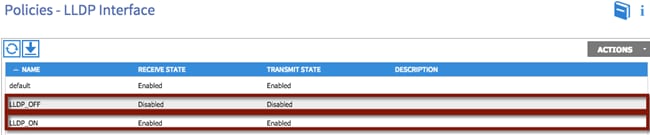

LLDP Interface

The LLDP interface policy defines a common configuration that applies to one or more LLDP interfaces. LLDP uses the logical link control (LLC) services to transmit and receive information to and from other LLDP agents. The policy names used below reflect the policy type and admin state.

To configure an LLDP policy, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Policies → LLDP Interface

Figure 44: Interface Policy – LLDP Policy

XML 6: Interface Policy - LLDP Policy

<!-- LLDP Off Policy -->

<lldpIfPol adminRxSt="disabled" adminTxSt="disabled" descr="" name="LLDP_OFF" />

<!-- LLDP On Policy -->

<lldpIfPol adminRxSt="enabled" adminTxSt="enabled" descr="" name="LLDP_ON" />

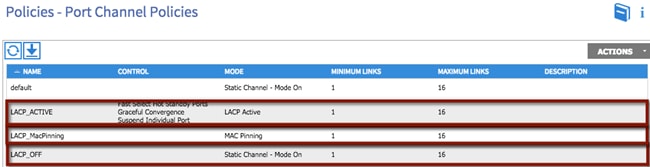

Port Channel Policies

The port channel policy enables you to bundle several physical ports together to form a single port channel. LACP enables a node to negotiate an automatic bundling of links by sending LACP packets to the peer node. The policy names used below reflect the policy type name and mode.

To configure a port channel policy, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Policies → Port Channel Policies

Figure 45: Interface Policy – Port Channel Policy

XML 7: Interface Policy - Channel Policy

<!—Port Channel LACP Active Policy -->

<lacpLagPol ctrl="fast-sel-hot-stdby,graceful-conv,susp-individual" descr="" dn="uni/infra/lacplagp-LACP_ACTIVE" maxLinks="16" minLinks="1" mode="active" name="LACP_ACTIVE" />

<!—Port Channel LACP MacPinning Policy -->

<lacpLagPol ctrl="fast-sel-hot-stdby,graceful-conv,susp-individual" descr="" dn="uni/infra/lacplagp-LACP_MacPinning" maxLinks="16" minLinks="1" mode="mac-pin" name="LACP_MacPinning" />

<!-- Port Channel LACP Off Policy -->

<lacpLagPol ctrl="fast-sel-hot-stdby,graceful-conv,susp-individual" descr="" dn="uni/infra/lacplagp-LACP_OFF" maxLinks="16" minLinks="1" mode="off" name="LACP_OFF" />
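The three port channel policies differ only in name and mode. The sketch below builds them from a single table; it assumes the attribute values shown in XML 7, and the helper name `port_channel_policy` is hypothetical. Modes other than the three used in this guide are deliberately not covered.

```python
import xml.etree.ElementTree as ET

# Policy name -> mode, exactly as used in XML 7.
MODES = {
    "LACP_ACTIVE": "active",
    "LACP_MacPinning": "mac-pin",
    "LACP_OFF": "off",
}


def port_channel_policy(name: str, mode: str) -> ET.Element:
    """Build a lacpLagPol element using the control flags shown in XML 7."""
    return ET.Element(
        "lacpLagPol",
        {
            "ctrl": "fast-sel-hot-stdby,graceful-conv,susp-individual",
            "descr": "",
            "maxLinks": "16",
            "minLinks": "1",
            "mode": mode,
            "name": name,
        },
    )


lag_policies = [port_channel_policy(name, mode) for name, mode in MODES.items()]

for policy in lag_policies:
    print(policy.get("name"), "->", policy.get("mode"))
```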

Switch Profile

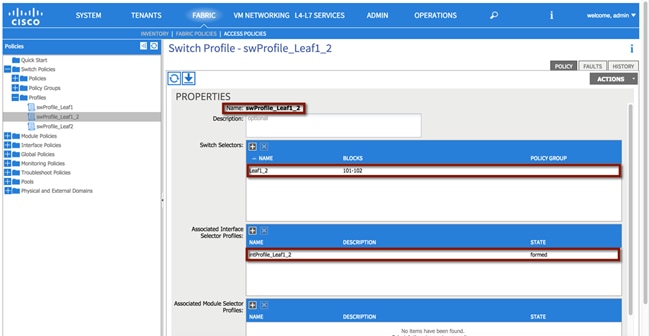

The switch profile identifies the leaf nodes that are provisioned to support the environment. The switch profile named swProfile_Leaf1_2 specifies a switch selector, Leaf1_2, that identifies nodes 101 and 102. The previously defined interface profiles are also associated with the switch profiles, as shown in the following diagram.

Note: The switch profile configuration must be updated following the completion of each interface profile.

To configure a switch profile, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Switch Policies → Switch Profile → [swProfile_Leaf1_2]

Figure 46: Switch Profile

XML 8: Switch Profile

<infraNodeP descr="" name="swProfile_Leaf1_2" >

<!-- Switch Profile Leaf Selector -->

<infraLeafS descr="" name="Leaf1_2" type="range">

<infraNodeBlk descr="" from_="101" name="29a1f174834b2ea7" to_="102"/>

</infraLeafS>

<!-- Associated Interface Profile -->

<infraRsAccPortP tDn="uni/infra/accportprof-intProfile_Leaf1_2"/>

</infraNodeP>
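Because the same structure is repeated for every switch profile, it can be expressed as a small builder. The following is a sketch under stated assumptions: class and attribute names come from XML 8, while the function name and the node block label (`blk_101_102`) are hypothetical; the exported XML above shows an APIC-generated block name instead.

```python
import xml.etree.ElementTree as ET


def switch_profile(name, selector, first_node, last_node, interface_profiles):
    """Build an infraNodeP with one leaf selector and its interface profiles."""
    profile = ET.Element("infraNodeP", {"descr": "", "name": name})
    leaf_sel = ET.SubElement(
        profile, "infraLeafS", {"descr": "", "name": selector, "type": "range"}
    )
    # Hypothetical block label; any unique name within the selector would do.
    ET.SubElement(
        leaf_sel,
        "infraNodeBlk",
        {
            "descr": "",
            "from_": str(first_node),
            "name": f"blk_{first_node}_{last_node}",
            "to_": str(last_node),
        },
    )
    for int_profile in interface_profiles:
        ET.SubElement(
            profile,
            "infraRsAccPortP",
            {"tDn": f"uni/infra/accportprof-{int_profile}"},
        )
    return profile


sw_profile = switch_profile(
    "swProfile_Leaf1_2", "Leaf1_2", 101, 102, ["intProfile_Leaf1_2"]
)
print(ET.tostring(sw_profile, encoding="unicode"))
```

A single-node profile would use the same call with `first_node` equal to `last_node`.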

Repeat the process for the remaining switch profiles:

Fabric → Access Policies → Switch Policies → Switch Profile → [swProfile_Leaf1]

Fabric → Access Policies → Switch Policies → Switch Profile → [swProfile_Leaf2]

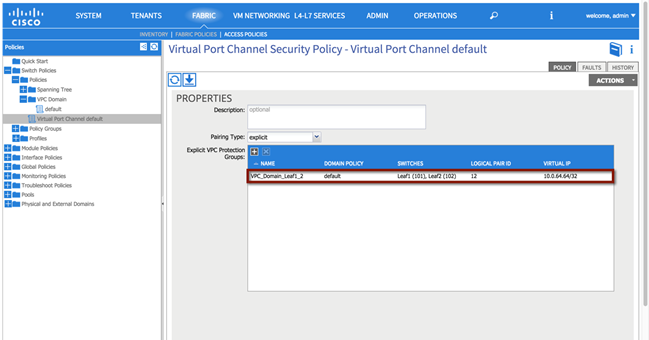

Virtual Port Channel (vPC) Domain

The vPC domain identifies the leaf nodes that define a virtual port channel. Within the vPC configuration, a vPC explicit protection group represents a vPC domain. You can explicitly configure the member nodes of the group using a fabric policy node endpoint. The explicit vPC protection group name used in Scenario 1 is ‘VPC_Domain_Leaf1_2’, with the logical pair ID ‘12’.

Note: Depending on the use case, multiple vPC domains may need to be created to support the topology.

To configure a vPC domain, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Switch Policies → Switch Profile → [swProfile_Leaf1_2]

Figure 47: vPC Domain

XML 9: vPC Domain

<!-- vPC Domain -->

<fabricProtPol descr="" name="default" pairT="explicit">

<!-- vPC ID and Name -->

<fabricExplicitGEp id="12" name="VPC_Domain_Leaf1_2">

<fabricRsVpcInstPol tnVpcInstPolName="default"/>

<!-- Node Selection -->

<fabricNodePEp descr="" id="101" name=""/>

<fabricNodePEp descr="" id="102" name=""/>

</fabricExplicitGEp>

</fabricProtPol>
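A vPC domain pairs two leaf nodes, which is an easy property to check before a payload is submitted. The sketch below assumes the class and attribute names from XML 9; the function name and the validation step are illustrative additions, not part of the APIC object model.

```python
import xml.etree.ElementTree as ET


def vpc_domain(name, pair_id, node_ids):
    """Build an explicit vPC protection group for exactly two leaf nodes."""
    nodes = sorted(set(node_ids))
    if len(nodes) != 2:
        raise ValueError("a vPC protection group needs exactly two distinct leaf nodes")
    policy = ET.Element(
        "fabricProtPol", {"descr": "", "name": "default", "pairT": "explicit"}
    )
    group = ET.SubElement(
        policy, "fabricExplicitGEp", {"id": str(pair_id), "name": name}
    )
    ET.SubElement(group, "fabricRsVpcInstPol", {"tnVpcInstPolName": "default"})
    for node in nodes:
        ET.SubElement(
            group, "fabricNodePEp", {"descr": "", "id": str(node), "name": ""}
        )
    return policy


# Values used in Scenario 1 of this guide.
domain = vpc_domain("VPC_Domain_Leaf1_2", 12, [101, 102])
print(ET.tostring(domain, encoding="unicode"))
```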

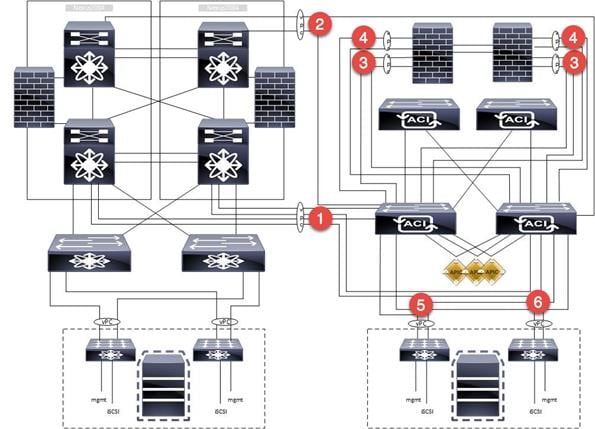

Creation of Virtual Port Channels (vPCs)

This section provides the steps required to support the vPC configuration on the pair of ACI leaf switches used in the validated topology. Multiple vPCs support the physical connectivity of external devices to the ACI fabric: the Cisco Nexus 7000 switches (FabricPath and DC core devices) for Layer 2 and Layer 3 connectivity, the ASAs for firewall services, and the UCS for virtual hosts.

The following network diagram highlights all the vPC connections used for the different migration scenarios:

1. The vPC identified with the number 1 is used for the Layer 2 connectivity between the ACI fabric and the FabricPath core and will be named VPC_FPCORE.

2. vPC 2 is used for Layer 3 connectivity between the ACI fabric and the DC core (VPC_DCCORE).

3. vPC 3 is used for the connectivity between the ACI fabric and the ASA inside interface. Notice how two separate vPC connections are used from the ACI fabric, one to connect to the Active FW node and the other to connect to the Standby node (VPC_ASA_IN_1 and VPC_ASA_IN_2). This has some important implications for the configuration of the L3Out connections between the ACI VRFs and the ASA FWs, as discussed in more detail in the “External Routed Networks” section of migration Scenario 2.

4. vPC 4 is used for the connectivity between the ACI fabric and the ASA outside interface. As discussed above, two vPC logical connections are used also in this case, one to each ASA FW node (VPC_ASA_OUT_1 and VPC_ASA_OUT_2).

5. vPC 5 is used for the UCS connectivity between the ACI fabric and Fabric Interconnect A (VPC_FI_A).

6. vPC 6 is used for the UCS connectivity between the ACI fabric and Fabric Interconnect B (VPC_FI_B).

Figure 48: vPC Connections

Some important considerations that relate to the creation of those vPC logical connections:

· Depending on the topology and requirements, other valid configurations may be used to establish Layer 3 connectivity between the Brownfield and ACI environments (routed interfaces or routed sub-interfaces in lieu of SVI connectivity over vPC). The design suggested in this document consists of creating a logical vPC connection and using static routing. The advantage is that a link failure is recovered at Layer 2 (that is, traffic flows are re-hashed across the remaining physical links of the vPC).

· The Layer 2 vPC1 was not reused for Layer 3 connectivity between the FP and the ACI networks (a separate vPC2 was used instead) because migrations traditionally aim to move workloads off the Layer 2 attached equipment and then retire it, shut it down, or repurpose it. It was important to demonstrate that the Layer 3 DC routers (DCCORE01/02) would remain in use even after migration from the FabricPath FP_CORE01/02 devices was completed.

· Although multiple vPCs on the ASA were used (a vPC for the inside interface and a separate vPC for the outside interface), a single vPC could have also been deployed to support the same topology. The use case and connectivity requirements should define the overall connectivity strategy.

The following sections highlight the different configuration steps required for the creation of a vPC.

Note: The vPC configuration discussed in the following sections applies to the creation of VPC_FPCORE (for Layer 2 connectivity between the FP and the ACI networks). A nearly identical procedure can be followed for the creation of the other vPC connections (not covered in this document).
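The vPC connections listed above can be kept as a small inventory, which helps when the same procedure is repeated for each one. This is a sketch only: the vPC names and numbers come from the list above, while the `Vpc` class and the assumption that every policy group follows the `policyGrp<vPC name>` pattern (seen in this guide only for VPC_FPCORE) are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vpc:
    number: int   # number used in the vPC connections diagram
    name: str     # vPC name used in this guide
    purpose: str


VPCS = [
    Vpc(1, "VPC_FPCORE", "Layer 2 to the FabricPath core"),
    Vpc(2, "VPC_DCCORE", "Layer 3 to the DC core"),
    Vpc(3, "VPC_ASA_IN_1", "ASA inside interface, one firewall node"),
    Vpc(3, "VPC_ASA_IN_2", "ASA inside interface, other firewall node"),
    Vpc(4, "VPC_ASA_OUT_1", "ASA outside interface, one firewall node"),
    Vpc(4, "VPC_ASA_OUT_2", "ASA outside interface, other firewall node"),
    Vpc(5, "VPC_FI_A", "UCS Fabric Interconnect A"),
    Vpc(6, "VPC_FI_B", "UCS Fabric Interconnect B"),
]


def policy_group_name(vpc: Vpc) -> str:
    """Assumed naming pattern, extrapolated from policyGrpVPC_FPCORE."""
    return f"policyGrp{vpc.name}"


for vpc in VPCS:
    print(vpc.number, vpc.name, policy_group_name(vpc), "-", vpc.purpose)
```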

vPC1: FabricPath Core to ACI Leaf Nodes

The following section describes the procedure for creating, in APIC, the vPC logical connection used for Layer 2 communication between the FabricPath and the ACI networks.

The Cisco Nexus 7000 FP spines connect to the ACI leaf switches over fully meshed 10G interfaces, both for redundancy and to carry data traffic for the VLANs used in the migration scenarios. In addition, two 10G interfaces directly connect the two Cisco Nexus 7000 switches for high availability.

The following table provides the physical interface designation for the Cisco Nexus 7000 and the ACI leaf connectivity.

Table 2: Layer 2 Physical Connectivity

| FabricPath Core | ACI Fabric | Speed |
|---|---|---|
| FP_CORE1_3/10 | Leaf1_1/4 | 10GIG |
| FP_CORE1_3/11 | Leaf2_1/4 | 10GIG |
| FP_CORE2_3/11 | Leaf1_1/5 | 10GIG |
| FP_CORE2_3/11 | Leaf2_1/5 | 10GIG |
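The full-mesh property described above (every FabricPath core switch attached to every leaf) can be verified from the cabling table. The sketch below copies the rows of Table 2 as printed and assumes the labels follow a `<device>_<slot>/<port>` convention; the function names are hypothetical.

```python
from itertools import product

# (FabricPath core port, ACI leaf port), rows copied from Table 2 as printed.
LINKS = [
    ("FP_CORE1_3/10", "Leaf1_1/4"),
    ("FP_CORE1_3/11", "Leaf2_1/4"),
    ("FP_CORE2_3/11", "Leaf1_1/5"),
    ("FP_CORE2_3/11", "Leaf2_1/5"),
]


def device(endpoint: str) -> str:
    """Return the device part of a '<device>_<slot>/<port>' label."""
    return endpoint.rsplit("_", 1)[0]


def is_full_mesh(links) -> bool:
    """True when every core device has a link to every leaf device."""
    pairs = {(device(core), device(leaf)) for core, leaf in links}
    cores = {core for core, _ in pairs}
    leaves = {leaf for _, leaf in pairs}
    return pairs == set(product(cores, leaves))


print(is_full_mesh(LINKS))
```

The check looks only at device names, so it does not validate individual port numbers.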

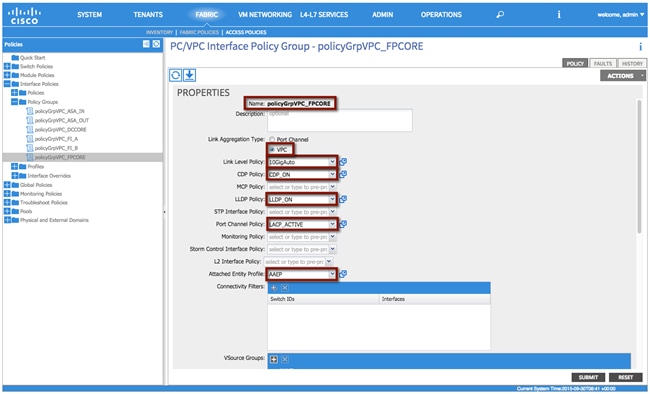

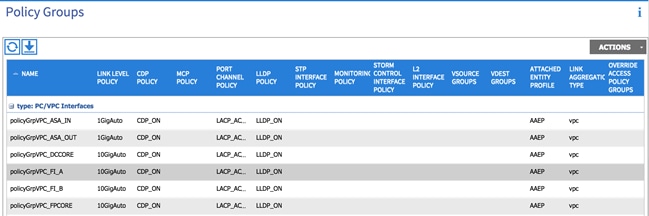

vPC1 Interface Policy Group

The Interface Policy Group enables you to specify the interface characteristics and define the behavior for selected ports. Within the Interface Policy Group, interface parameters such as the link properties (Link Level Policy) and Port Channel capabilities are defined. The policy names used below reflect the policy type name along with the interface type and its location within the topology.

To configure an interface policy group, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Policy Groups → [policyGrpVPC_FPCORE]

Table 3: Layer 2 vPC Interface Policy Group

| Interface Policy Group | Configuration |
|---|---|
| Name | policyGrpVPC_FPCORE |
| Link Aggregation Type | VPC |
| Link Level Policy | 10GigAuto |
| CDP Policy | CDP_ON |
| LLDP Policy | LLDP_ON |
| Port Channel Policy | LACP_ACTIVE |
| Attachable Entity Profile | AAEP |

Figure 49: Layer 2 vPC Interface Policy Group

XML 10: vPC Interface Policy Group

<infraAccBndlGrp descr="" dn="uni/infra/funcprof/accbundle-policyGrpVPC_FPCORE" lagT="node" name="policyGrpVPC_FPCORE" >

<infraRsMonIfInfraPol tnMonInfraPolName=""/>

<!-- LLDP Policy Selection -->

<infraRsLldpIfPol tnLldpIfPolName="LLDP_ON"/>

<infraRsStpIfPol tnStpIfPolName=""/>

<!-- CDP Policy Selection -->

<infraRsCdpIfPol tnCdpIfPolName="CDP_ON"/>

<infraRsL2IfPol tnL2IfPolName=""/>

<!-- Attachable Entity Profile Selection -->

<infraRsAttEntP tDn="uni/infra/attentp-AAEP"/>

<infraRsMcpIfPol tnMcpIfPolName=""/>

<!-- Port Channel Policy Selection -->

<infraRsLacpPol tnLacpLagPolName="LACP_ACTIVE"/>

<infraRsStormctrlIfPol tnStormctrlIfPolName=""/>

<!-- Link Level Policy Selection -->

<infraRsHIfPol tnFabricHIfPolName="10GigAuto"/>

</infraAccBndlGrp>

Note: The interface policies selected are the ones previously created in the “Interface Policies” section.
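The policy group is essentially a set of named references to the interface policies created earlier. The sketch below builds only the relations that carry a policy name in XML 10, using the names as they appear there; the `RELATIONS` table and the function name are illustrative, and the relations left empty in XML 10 are omitted on the assumption that APIC applies its defaults for them.

```python
import xml.etree.ElementTree as ET

# Relation class -> (attribute, policy name), as they appear in XML 10.
RELATIONS = {
    "infraRsHIfPol": ("tnFabricHIfPolName", "10GigAuto"),
    "infraRsCdpIfPol": ("tnCdpIfPolName", "CDP_ON"),
    "infraRsLldpIfPol": ("tnLldpIfPolName", "LLDP_ON"),
    "infraRsLacpPol": ("tnLacpLagPolName", "LACP_ACTIVE"),
}


def vpc_policy_group(name, relations, aaep):
    """Build an infraAccBndlGrp of type vPC (lagT="node", as in XML 10)."""
    group = ET.Element(
        "infraAccBndlGrp", {"descr": "", "lagT": "node", "name": name}
    )
    for cls, (attr, target) in relations.items():
        ET.SubElement(group, cls, {attr: target})
    ET.SubElement(group, "infraRsAttEntP", {"tDn": f"uni/infra/attentp-{aaep}"})
    return group


policy_group = vpc_policy_group("policyGrpVPC_FPCORE", RELATIONS, "AAEP")
print(ET.tostring(policy_group, encoding="unicode"))
```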

vPC1 Interface Profile

The Interface Profile enables you to define the specific interfaces that use the properties identified under the Interface Policy Group. Within the Interface Profile, each physical interface is identified and added. The policy names used below reflect the policy type name and the node(s).

To configure an Interface Profile, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Interface Policies → Profiles → [intProfile_Leaf1_2]

Table 4: Layer 2 vPC Interface Profile

| Interface Profile | Configuration |
|---|---|
| Interface Selector | intSelector_1_4 |
| Interface Selector | intSelector_1_5 |

Note: Object names, such as the Interface Selector name, cannot be modified once implemented. This may present an operational challenge if specific interfaces need to be reused later. For this reason, a separate interface selector was created for each interface in use, so that each selector can be reused in the future, if needed, with no impact. Careful consideration should be exercised when planning object naming.

Figure 50: Layer 2 vPC Interface Profile

XML 11: Layer 2 vPC Interface Profile

<infraAccPortP descr="" name="intProfile_Leaf1_2" >

<!-- Interface Selector -->

<infraHPortS descr="" name="intSelector_1_5" type="range">

<infraRsAccBaseGrp fexId="101" tDn="uni/infra/funcprof/accbundle-policyGrpVPC_FPCORE"/>

<!-- Port Selector -->

<infraPortBlk descr="" fromCard="1" fromPort="5" name="block2" toCard="1" toPort="5"/>

</infraHPortS>

<!-- Interface Selector -->

<infraHPortS descr="" name="intSelector_1_4" type="range">

<infraRsAccBaseGrp fexId="101" tDn="uni/infra/funcprof/accbundle-policyGrpVPC_FPCORE"/>

<!-- Port Selector -->

<infraPortBlk descr="" fromCard="1" fromPort="4" name="block2" toCard="1" toPort="4"/>

</infraHPortS>

</infraAccPortP>
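The one-selector-per-interface convention described in the note above lends itself to generation from a list of ports. The following sketch assumes the class and attribute names from XML 11 and the `intSelector_<card>_<port>` naming used in Table 4; the function name is hypothetical, and the `fexId` attribute shown in the export is omitted on the assumption that APIC fills in its default.

```python
import xml.etree.ElementTree as ET


def interface_profile(name, policy_group, ports):
    """Build an infraAccPortP with one selector per (card, port) tuple."""
    profile = ET.Element("infraAccPortP", {"descr": "", "name": name})
    for card, port in ports:
        selector = ET.SubElement(
            profile,
            "infraHPortS",
            {"descr": "", "name": f"intSelector_{card}_{port}", "type": "range"},
        )
        ET.SubElement(
            selector,
            "infraRsAccBaseGrp",
            {"tDn": f"uni/infra/funcprof/accbundle-{policy_group}"},
        )
        # A single-port block: the range starts and ends on the same port.
        ET.SubElement(
            selector,
            "infraPortBlk",
            {
                "descr": "",
                "fromCard": str(card),
                "fromPort": str(port),
                "name": "block2",
                "toCard": str(card),
                "toPort": str(port),
            },
        )
    return profile


int_profile = interface_profile(
    "intProfile_Leaf1_2", "policyGrpVPC_FPCORE", [(1, 4), (1, 5)]
)
print(ET.tostring(int_profile, encoding="unicode"))
```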

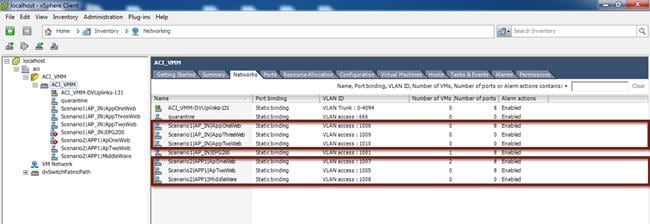

ACI VM Networking

VMM integration allows a virtual machine manager such as VMware vCenter to be linked to ACI so that policies can be made available for virtual machines hosted within the VMM domain. Once the APIC and a vCenter server are linked together (via communication over an OOB network) through the creation of a VMM domain, the following actions take place:

· A Distributed Virtual Switch (DVS) managed by APIC is created and made available to all the ESXi hosts managed by the vCenter server.

· Every time an EPG is created in APIC and bound to the VMM domain, a corresponding port group is dynamically created in vCenter for the previously described DVS. Virtual machines (VMs) can then be connected to that port group and this allows the ACI fabric to classify them as part of the defined EPG.

The following sections describe the steps required for the creation of the VMM domain.

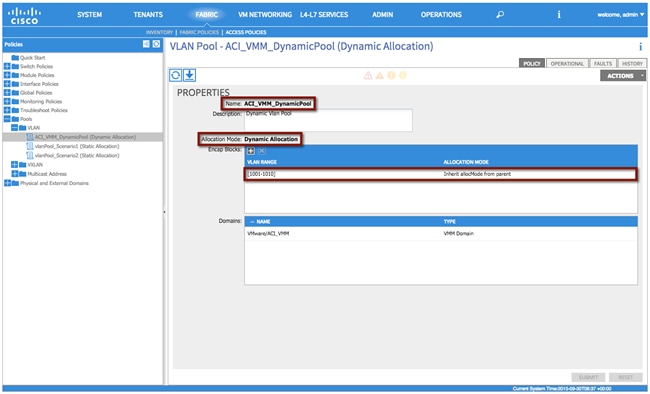

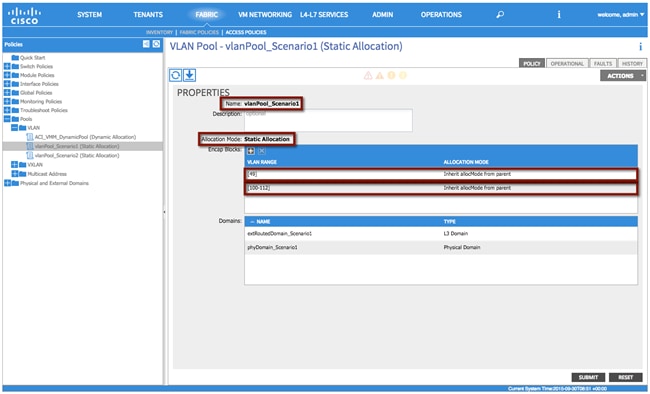

Dynamic VLAN Pool

A dynamic VLAN pool is managed internally by the APIC to allocate VLANs to the dynamically created port groups (associated with the EPGs) that are made available on the APIC-managed DVS. In the following example, a dynamic pool covering VLANs 1000-1010 is created; it is used for the migration scenarios discussed in later sections.

Note: A VMM domain can be associated with only one dynamic VLAN pool.

Table 5: VM Networking – Dynamic VLAN Pool

| VM Networking | VLAN Pool | Description |
|---|---|---|
| Name | ACI_VMM_DynamicPool | - |
| Allocation Mode | Dynamic Allocation | - |
| Encap Block | 1000-1010 | VM Portgroup VLAN Pool |

To configure a dynamic VLAN pool, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → Access Policies → Pools → VLAN → [ACI_VMM_DynamicPool]

Figure 51: VM Networking – Dynamic VLAN Pool

XML 12: VM Networking – Dynamic VLAN Pool

<fvnsVlanInstP allocMode="dynamic" descr="Dynamic Vlan Pool" name="ACI_VMM_DynamicPool">

<!-- VLAN Pool -->

<fvnsEncapBlk allocMode="inherit" from="vlan-1000" name="" to="vlan-1010"/>

</fvnsVlanInstP>
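The size of the dynamic pool bounds how many port groups can receive a VLAN, so it is worth computing when the range is chosen. The sketch below uses the 1000-1010 range listed in Table 5 and the class and attribute names from XML 12; the function names are hypothetical, and the sizing remark assumes that one VLAN is drawn from the pool per port group.

```python
import xml.etree.ElementTree as ET


def dynamic_vlan_pool(name, first_vlan, last_vlan):
    """Build a dynamic fvnsVlanInstP with a single encap block."""
    if not 1 <= first_vlan <= last_vlan <= 4094:
        raise ValueError("VLAN range must satisfy 1 <= first <= last <= 4094")
    pool = ET.Element(
        "fvnsVlanInstP",
        {"allocMode": "dynamic", "descr": "Dynamic Vlan Pool", "name": name},
    )
    ET.SubElement(
        pool,
        "fvnsEncapBlk",
        {
            "allocMode": "inherit",
            "from": f"vlan-{first_vlan}",
            "name": "",
            "to": f"vlan-{last_vlan}",
        },
    )
    return pool


def pool_size(pool) -> int:
    """Count the VLAN IDs covered by the pool's encap block (inclusive)."""
    block = pool.find("fvnsEncapBlk")
    first = int(block.get("from").split("-")[1])
    last = int(block.get("to").split("-")[1])
    return last - first + 1


vlan_pool = dynamic_vlan_pool("ACI_VMM_DynamicPool", 1000, 1010)
print(pool_size(vlan_pool), "VLANs available for dynamic allocation")
```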

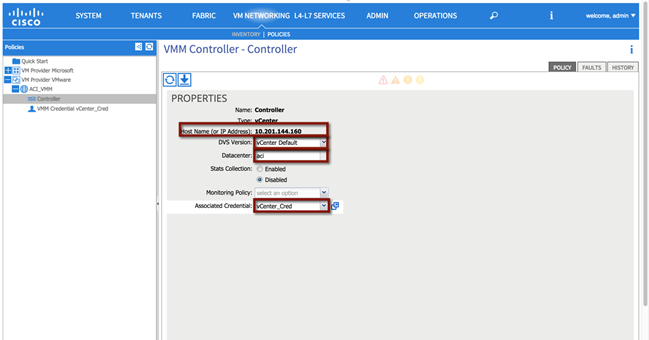

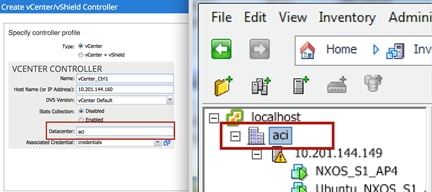

Creation of the VMM Domain

VMM domains contain VM controllers such as VMware vCenter or Microsoft System Center Virtual Machine Manager (SCVMM) and the credential(s) required for the ACI API to interact with the VM controller. A VMM domain enables VM mobility within the domain but not across domains.

To configure a VMM domain, the first step is to define the VM Provider (Microsoft and VMware options are available at the time of writing). To do so, log in to the APIC GUI with administrator privileges and follow the path below:

Fabric → VM Networking → Policies → VM Provider VMware → [ACI_VMM] → Controller

Figure 52: VM Networking – Provider VMware

XML 13: VM Networking – Provider VMware

<vmmDomP enfPref="hw" mcastAddr="0.0.0.0" mode="default" name="ACI_VMM">

<vmmRsDefaultStpIfPol tnStpIfPolName="default"/>

<vmmRsDefaultFwPol tnNwsFwPolName="default"/>

<vmmRsDefaultLldpIfPol tnLldpIfPolName="default"/>

<!-- vCenter IP -->

<vmmCtrlrP dvsVersion="unmanaged" hostOrIp="10.201.144.160" inventoryTrigSt="untriggered" name="controller" port="0" rootContName="aci" scope="vm" statsMode="disabled">

<!-- vCenter Credentials -->

<vmmRsAcc tDn="uni/vmmp-VMware/dom-ACI_VMM/usracc-Creds"/>

</vmmCtrlrP>

<!-- Dynamic VLAN Pool -->

<infraRsVlanNs tDn="uni/infra/vlanns-[ACI_VMM_DynamicPool]-dynamic"/>

<vmmRsDefaultCdpIfPol tnCdpIfPolName="default"/>

<vmmRsDefaultLacpLagPol tnLacpLagPolName="default"/>

<vmmRsDefaultL2InstPol tnL2InstPolName="default"/>

<vmmUsrAccP descr="" name="Creds" usr="root"/>

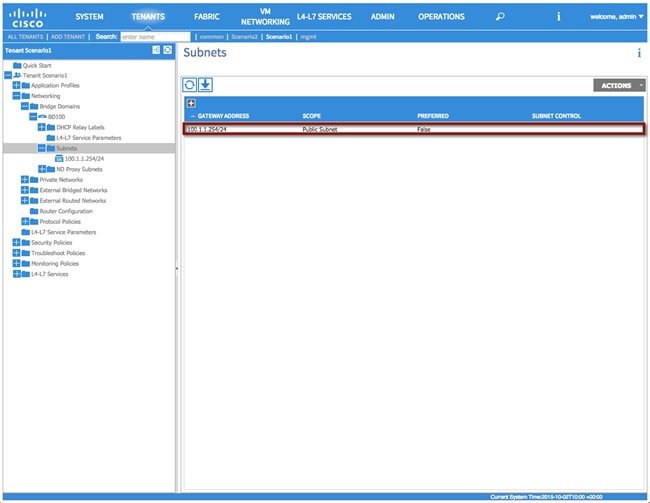

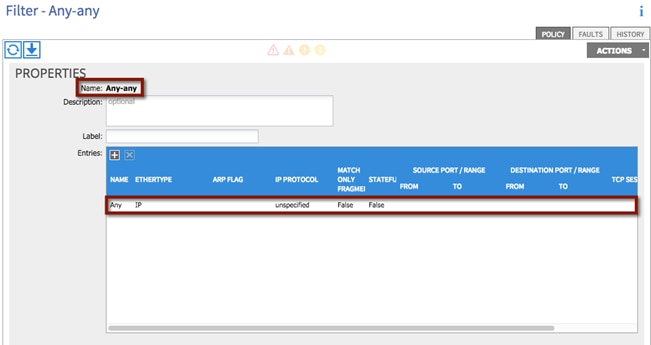

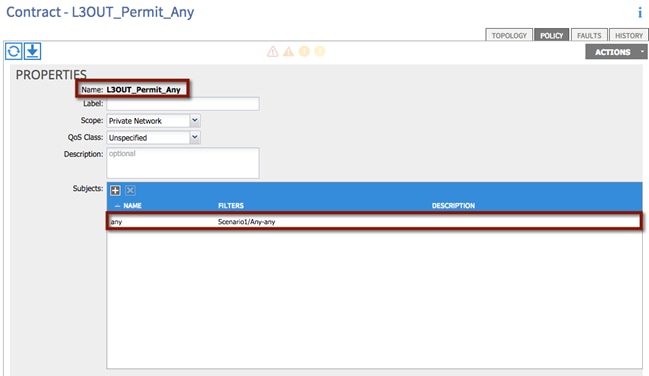

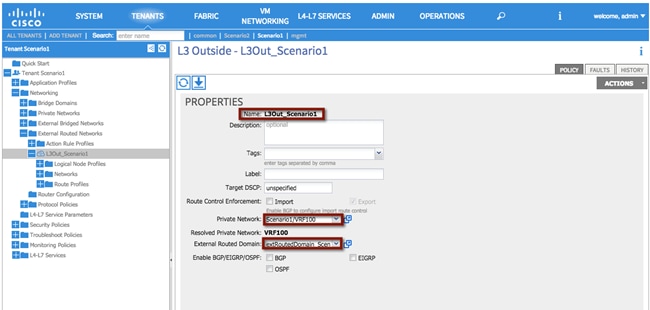

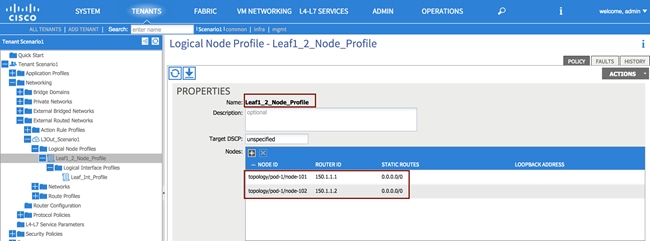

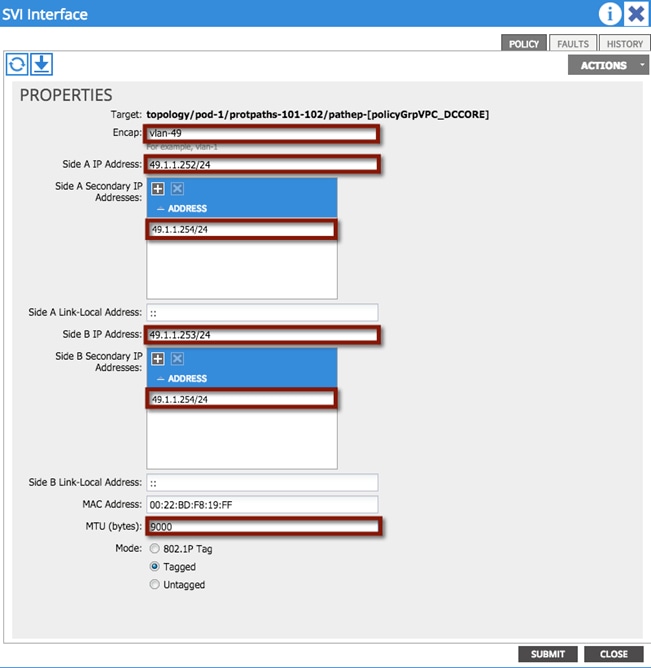

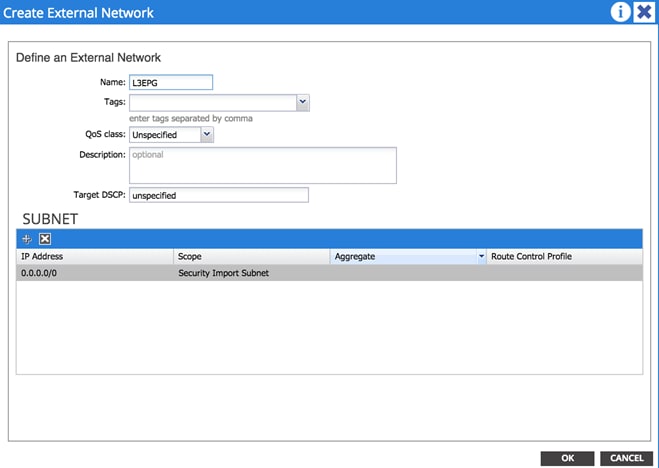

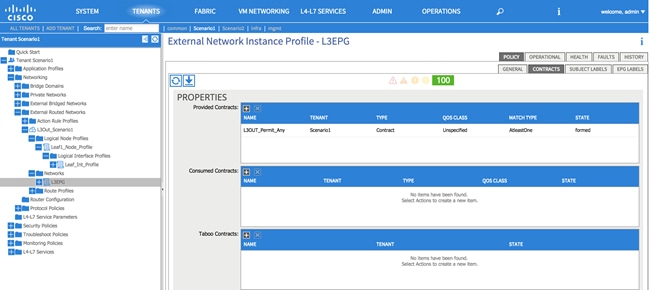

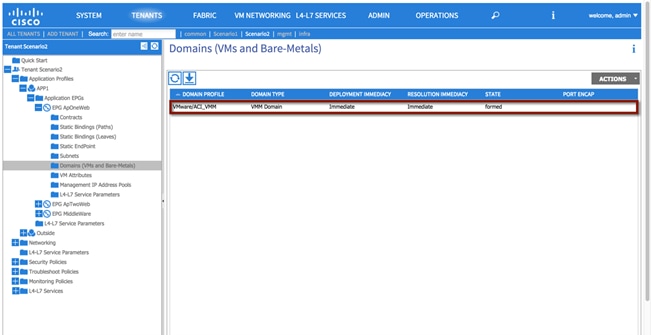

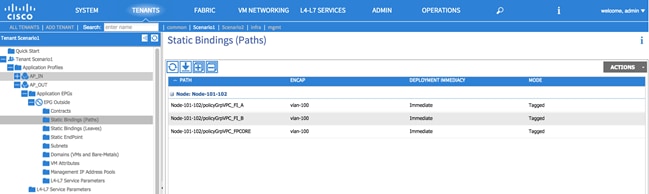

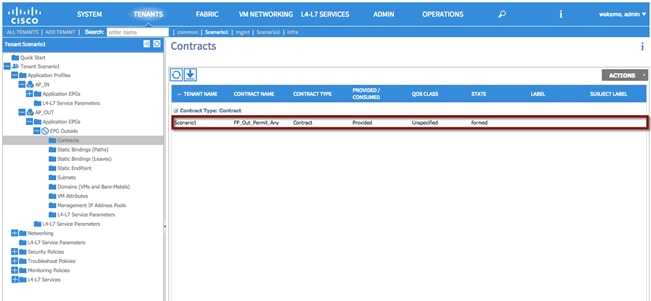

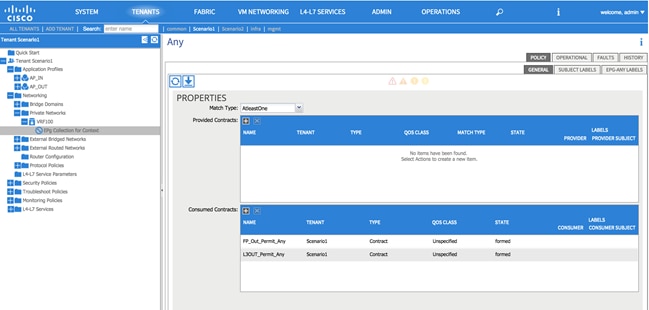

</vmmDomP>
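XML payloads such as the VMM domain above can also be submitted through the APIC REST API instead of the GUI. The sketch below only prepares the request and sends nothing: the APIC address is a hypothetical placeholder, the DN is the one implied by the credentials reference in XML 13, and the shortened payload stands in for the full XML. Actually sending the request additionally requires an authenticated session, which is outside this sketch.

```python
import urllib.request

APIC = "https://apic.example.com"  # hypothetical APIC address


def post_request(dn: str, xml_body: str) -> urllib.request.Request:
    """Prepare (but do not send) a POST of an object to its own DN URL."""
    url = f"{APIC}/api/mo/{dn}.xml"
    return urllib.request.Request(
        url,
        data=xml_body.encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/xml"},
    )


# Shortened stand-in for the full vmmDomP payload shown in XML 13.
request = post_request("uni/vmmp-VMware/dom-ACI_VMM", '<vmmDomP name="ACI_VMM"/>')
print(request.get_method(), request.full_url)
```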