CURWB Deployment for Autonomous Operations in Open-Pit Mining


Updated: February 22, 2022

Chapter: CURWB Deployment for Autonomous Operations in Open-Pit Mining

- Chapter 1: Autonomous and Tele-Remote Operations in Open-Pit Mining

- Executive Summary

- Objectives and Challenges in the Mining Industry

- Mining Use-Cases and Requirements

- Digitalization in Mining

- Safety Systems

- Tailing Ponds

- Mobile Fleet Management Systems

- Autonomous and Tele-Remote Operations

- Semi-Autonomous Operations

- Software Applications

- Autonomous Dozing

- Autonomous Haulage

- Autonomous Drilling

- Advantages of Autonomous / Semi-Autonomous Mining Operations

- Caterpillar MineStar® Command for Hauling

- AutoTap

- Network Time Protocol (NTP)

- CURWB Radio Behavior

- VLAN Design

- CURWB Fixed Infrastructure – Wireless Backhaul

- CURWB PtP in Mesh Mode

- CURWB Mesh End

- CURWB PtMP in Mesh Mode

- Design Considerations for CURWB PtP and PtMP Wireless Backhaul

- CURWB Fixed Infrastructure - Wireless Backhaul for Mine Deployments

- Tower-ID Feature

- CURWB Mobility Architecture – Layer 2 Fluidity

- L2 Fluidity Packet Flow

- Fluidity Rate Adaptation

- Layer 2 Connectivity

- Ports Connected between Switches

- Ports Connected to CURWB Devices

- Configuring Switch MTU

- Configuring QoS on Switches

- Configuring NTP

- Data Center Distribution Switch

- Data Center Access Switch

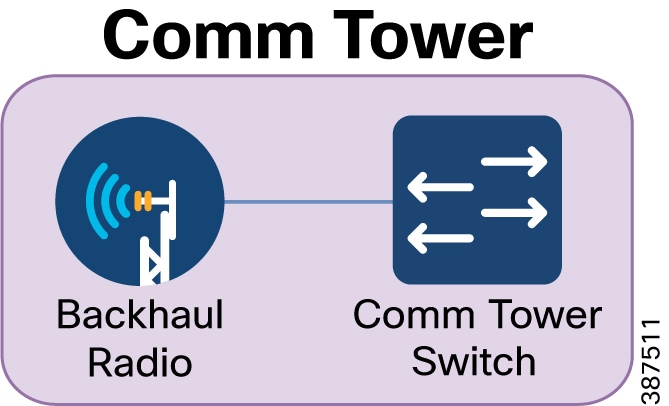

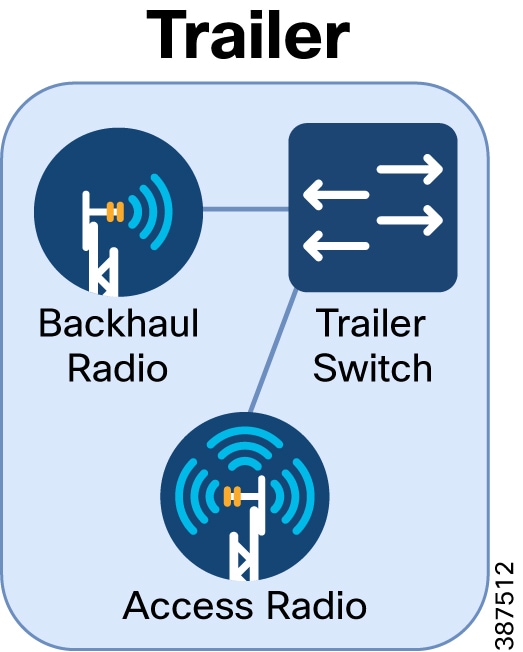

- Trailer

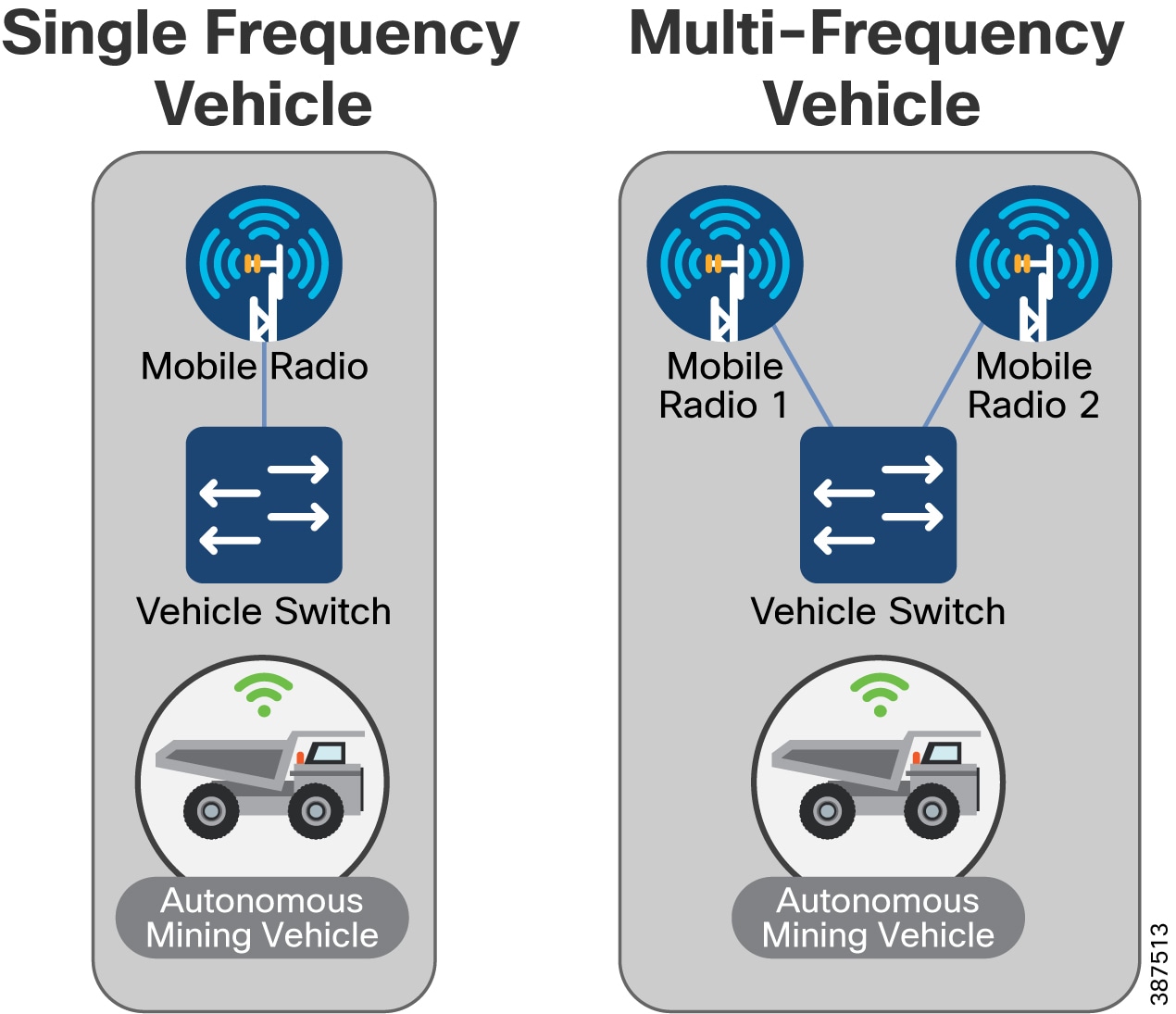

- Vehicles

- CURWB Configuration

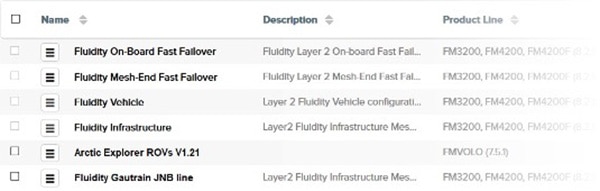

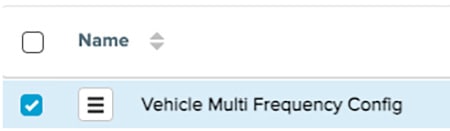

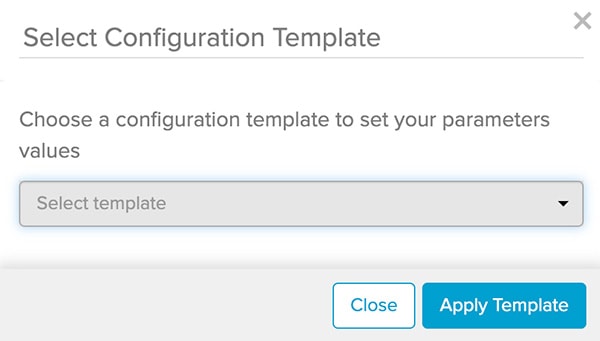

- Creating a New Configuration Template

- CURWB Device Initial Setup and Configuration

- Appendix A: RACER Configuration Template Table

Chapter 1: Autonomous and Tele-Remote Operations in Open-Pit Mining

Executive Summary

Operations in today's mining industry must be flexible and reactive to commodity price fluctuations and shifting customer demand, while maintaining operational efficiency, product quality, sustainability, and, most importantly, the safety of the mine and its personnel. Mining companies are seeking to drive operational and safety improvements into their production systems and assets through convergence and digitization by leveraging new paradigms introduced by the Industrial Internet of Things (IIoT). However, such initiatives require the secure connection of process environments via standard networking technologies to give mining companies and their key partners access to a rich stream of new data, real-time visibility, optimized production systems, and, when needed, secure remote access to the systems and assets in the operational environments.

The Cisco® Industrial Automation (IA) Mining solution and relevant product technologies are an essential foundation to securely connect and digitize mining production environments to achieve these significantly improved business operational outcomes. The Cisco solution overcomes top customer barriers to digitization, including security concerns, inflexible legacy networks, and complexity. The solution provides a proven and validated blueprint for connecting Industrial Automation and Control Systems (IACS) and production assets, improving industrial security, and improving plant data access and reliable operations. Following this best practice blueprint with Cisco market-leading technologies will help decrease deployment time, risk, and complexity, and improve overall security and operating uptime.

This version of the Cisco Validated Design and Implementation guide covers the design, deployment, configuration, and validation of a subset of the Cisco Industrial Automation Mining Reference Architecture. It focuses on wireless network connectivity for mobile Fleet Management Systems (FMS) and autonomous vehicle operations, specifically integration with the Caterpillar MineStar® suite of products.

Cisco Industrial Automation Mining Reference Architecture: https://www.cisco.com/c/en/us/td/docs/solutions/Verticals/Industrial_Automation/IA_Verticals/Mining/IA-Mining-DG/IA-Mining-DG.html

Objectives and Challenges in the Mining Industry

Figure 1 highlights key objectives and challenges of digitizing mining production environments, from extraction to transportation and several of the steps in between.

Figure 1 Mining Customer Objectives and Challenges

Mining Use-Cases and Requirements

Digitalization in Mining

With growing pressures on the global mining industry, achieving breakthrough performance in all areas of the mining life cycle is fundamental to staying profitable. Mining companies will have to rethink how they have been operating in the past and adopt a digital future to improve productivity, safety, and efficiency.

Digital technologies have the potential to unlock new ways of managing variability and enhancing productivity. As the skilled labor pool shrinks, companies are seeking opportunities to better utilize their more experienced workers and to gain new flexibility to meet future supply chain demands.

Connected devices and smart machines help capture real-time process information, enabling better decision-making. Gaining deeper insights into equipment health and operations can dramatically improve asset productivity. Remote operations centers are the next step in the digitization effort, enabling visibility, management, and remote command and control that allow for economies of scale.

The mine digitalization revolution and the development of technology have enabled, and will continue to enable, huge improvements for mining operations. This requires a modern and standardized infrastructure that supports the digitalization process as well as openness and interoperability between different systems.

Benefits of Digitalization in Mining:

Safety Systems

Safe, healthy, and sustainable operations, for both workers and the environment, are the top priority. The ultimate goal is to achieve zero worker injuries and minimize human error. Autonomous, semi-autonomous, and remote operations are helping achieve this goal today by removing people from high-risk environments. Machine autonomy demands a highly available, deterministic, and secure network infrastructure upon which network-intensive mining systems and applications rely. Slope and seismic activity monitoring allows for production optimization while minimizing safety risk.

Tailing Ponds

Currently, many mine operators monitor tailing ponds manually. Operations management sends personnel to tailing ponds; however, prior approval is typically required for access. Acquiring approval for access can take time, as does the drive to and from the tailing pond, which can take an hour in some facilities. Additionally, supervisors require that personnel check valves and place discharge hoses. Ultimately, a large amount of time is expended prior to the movement of any water or waste product.

Figure 2 Tailing Pond at an Open-Pit Mine

Enabling connectivity and visibility into water and waste flow from the processing plant to the tailing ponds will improve production efficiency, resource utilization, monitoring for safety, and environmental compliance. Being able to monitor valve positions remotely allows operators to proactively identify where waste would be delivered without having to dispatch personnel to visually inspect valve conditions along the lengthy pipes that run between the processing plant and the tailing ponds. This capability will speed up the waste management process and improve safety with the knowledge that waste is being sent to the correct location. Otherwise, waste could cause instability if sent to an incorrect tailing pond and may potentially lead to environmental impact.

Tailing dams require seismic and dam wall monitoring. Mobile mine workers want full coverage via remote access to production and corporate systems when working in and around tailing dams.

Dust control is another major concern around mines in general and at tailing areas specifically, because tailing ponds are made of very small particles of earth. Environmental impact is a major concern, as not only could dust have a negative impact on the environment but it also could result in large fines from the local environmental supervisory agencies. By automating dust control sprays and using video to demonstrate dust control, a mining operation can limit the financial impact from penalties imposed should dust-related issues occur. Other places where automated dust control is needed include ore heaps and bulk shipping ports.

Key networking capabilities required to support the mobility domain include:

- Resilient, reliable, and mobile wireless networks to connect key assets and personnel

- Wireless backhaul and WAN technologies to interconnect the extraction zones to local sitewide operational services and Remote Operations Centers

Mobile Fleet Management Systems

Most mines today have some version of a mobile Fleet Management System (FMS) to provide equipment monitoring and dispatch. For these systems to work effectively, they require connectivity between mine equipment and mine operations software. These requirements often drive the business case behind the initial mine network deployment. Even in these initial wireless network deployments, it is very helpful to consider the planned operation of the mine since an increase in the scope of network usage implies more network requirements.

As use cases get more complex, the impact of network performance becomes increasingly critical to the operation of an industrial site. Increased demand is put on the network because field personnel come to rely on access to online systems and the new features of mobile fleet management. Eventually, the infrastructure becomes a must-have, nonnegotiable requirement to operate the industrial site.

Autonomous and Tele-Remote Operations

Traditionally, most heavy equipment operations in a mine are performed with an operator located within the mining equipment. Not only is this costly, but it also puts personnel into potentially hazardous situations such as equipment rolls or collisions. The capability of new industrial vehicles to drive autonomously or semi-autonomously has been a game changer for the mining industry.

Within mining operations, transportation from personnel housing to mine operator staging areas can take over an hour (one way). Workers might be required to wear special personal protective equipment (PPE), which requires a significant amount of time to maintain and change into. In some underground areas that are extremely dangerous and unstable, such as wet muck underground tunnels, or even in extremely hot or cold mine locations, mine personnel can be directly exposed to dangerous environments for only limited amounts of time.

News reports of self-driving cars on public roads have been increasing in recent years, but the technology is definitely struggling to keep up with the hype. Challenges include the large number of unpredictable conditions from undocumented aspects of the driving environment, other human drivers, and wildlife, just to name a few. In the mining environment, however, it’s feasible to control or document the conditions to an extent that driving autonomy is very much achievable and profitable.

Mining operations are driving toward fully autonomous operational models throughout the production chain. Removing humans that manually operate equipment in high-risk production areas will improve productivity, improve product quality, increase worker safety, and help reduce the overall cost of operations. Common use cases today involving autonomous vehicles and equipment are either fully automated, without any direct human interaction, or semi-automated, with equipment that is remotely operated and monitored. Remote operations centers can be located close to the mine site or located completely offsite and away from the mine.

Digital Dispatch

The first step in the evolution from manual to semi-automated or fully automated mining operations is digital dispatch. Digital dispatch processes connect mobile fleets to the mine network, thus allowing for proper route calculations and ensuring that operators unload the correct materials in the right spots, efficiently transporting high-grade ore to the crusher and appropriately delivering overburden to the correct dump. Digital dispatch requires connecting the mine fleet over a wireless network.

Semi-Autonomous Operations

Semi-autonomous machine operations include loaders in a one-to-one or one-to-many ratio of remote operators to machines. One use case is a haul truck operator who controls a loader from inside the cab of the truck to load ore into that truck, thus eliminating the need for an additional operator who would sit idle the entire time that the truck is in transit. A ratio of one-to-one or one-to-many allows remote personnel to operate mining equipment from a safe location.

Likewise, autonomous trucks can haul resources from shovels or front-end loaders in a mine to a crusher area. When fully automated, trucks may continuously operate at optimum performance, thus reducing engine wear and improving tire performance and fuel efficiency. This reduces maintenance costs, reduces downtime, and increases productivity.

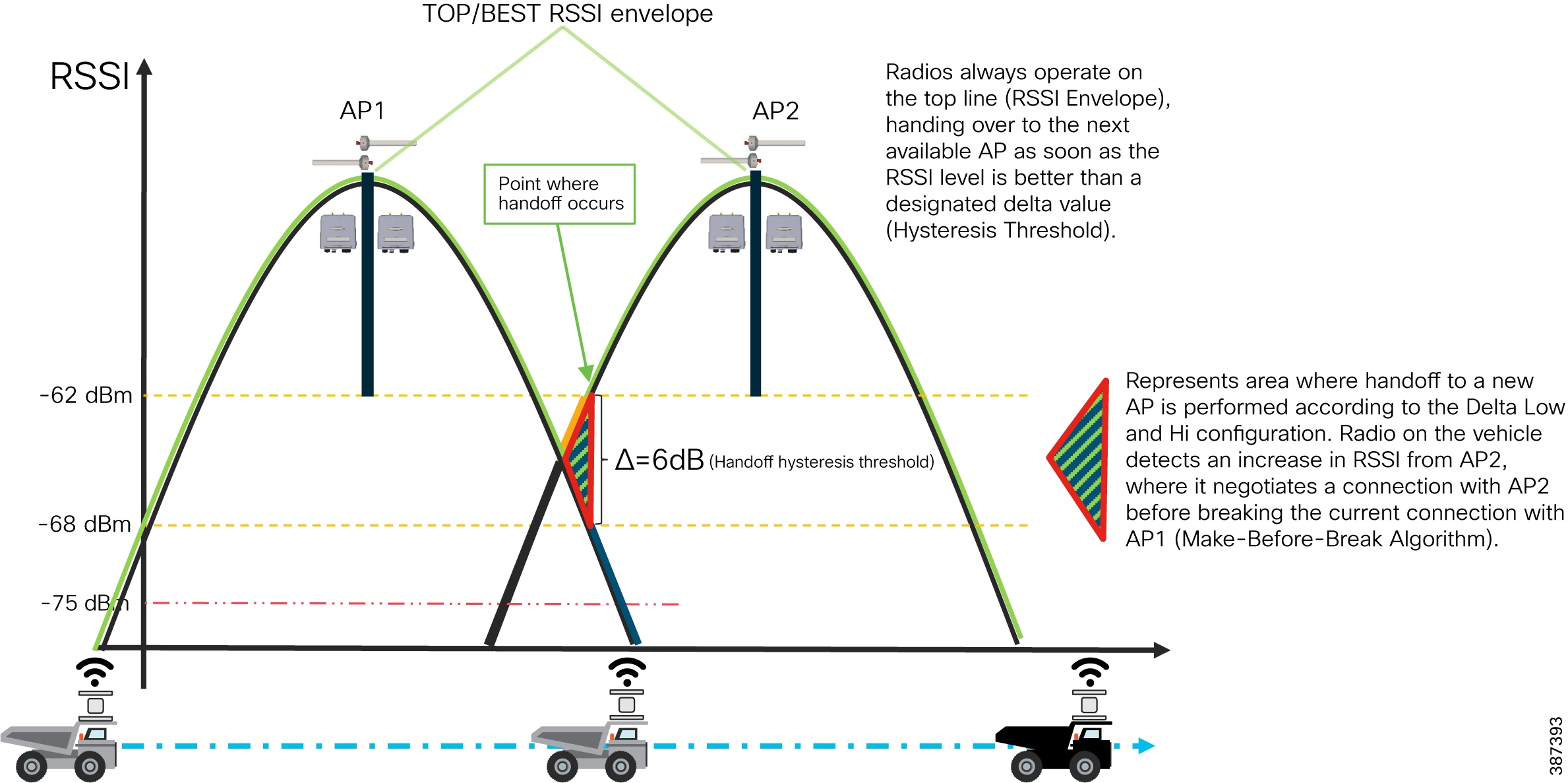

Reliable wireless network performance is critical to ensuring continuous equipment operations. Network personnel strive to minimize packet loss and wireless roam times to achieve optimal application performance. Any wired network issue or prolonged wireless roam time can trigger the safety system, which in turn stops the vehicle or equipment, ultimately reducing production and incurring financial losses. The Cisco Ultra-Reliable Wireless Backhaul (CURWB) portfolio of fixed and mobility wireless solutions plays an integral part in providing a high-performing, highly available, and secure wireless networking infrastructure for supporting autonomous operations within a mine.

Connecting the mine vehicle fleet to the production network allows Vehicle Intelligent Monitoring Systems (VIMS) to feed a large data analytics engine. Analysis of VIMS data by mine operators enables better equipment monitoring and proactive maintenance. Cisco’s solution helps mining companies improve predictive maintenance and provides visibility into issues such as problems with engine oil pressure or faulty cooling systems before they escalate. Discovering and addressing these issues before a failure occurs can save up to 72 hours of downtime or a costly engine replacement.

As an example, consider the conversion of haul trucks to autonomous operation in an open-pit mine. Removing drivers from haul trucks brings an immediate reduction in personnel cost, since only a few people are required to supervise an operation that previously required dozens of drivers. Safety in the mine also increases when the number of people in hazardous environments is dramatically reduced. Without a human driver in charge, the truck no longer needs to stop for lunch breaks, shift changes, or other human-initiated stops. Once in production, it has also become evident that autonomous systems are less harsh in the operation of the vehicles, resulting in fewer maintenance issues.

Other, less documented considerations include predictive maintenance savings from access to more vehicle data, and more efficient driving paths that are possible for autonomous trucks but not friendly to human drivers.

The benefits of vehicle autonomy are so compelling that most mining companies have an active autonomous vehicle project or are considering one. All of these projects have implications for the network infrastructure, and each of them is centered around a specific autonomous driving software platform.

The key networking capabilities required to support the mobility domain include:

- Resilient, reliable, and mobile wireless networks to connect key assets and personnel

- Wireless backhaul and WAN technologies to interconnect the extraction zones to local sitewide operational services and Remote Operations Centers

Software Applications

The software systems used to oversee and control the autonomous mine vehicle environment are largely owned by the mine vehicle manufacturers, with third-party solutions starting to emerge. For example, in open-pit mining, Caterpillar has been deploying its Cat® MineStar® application suite for a number of years.

The application suite is made up of two parts: first, the user interface for the customer’s autonomous or tele-remote operations center, and second, a software component in every vehicle that is allowed to enter the autonomous zone. Truck software components provide location and other vehicle-specific data to the central system to help with decision-making about positioning, route, and speed instructions.

The equipment must be in continuous communication with the central system for position, route, speed, and other instructions.

At an autonomous operations center, a large wall screen shows a map of the autonomous zone’s geographic features and all the real-time vehicle locations in the zone. The vehicle status is evident by the color of the vehicle icon and any other relevant information that may help in managing the system.

Routing and speed decisions are primarily based on what is known in the digital version of the autonomous zone. The geographic features are entered into the zone by the builder role. One or more builders are present in the zone during production to ensure that any new geographic features are added to the digital representation as soon as possible. All vehicle data is dynamically updated in the digital world by the software onboard each vehicle. If the digital world doesn’t match the physical world, safety mechanisms such as lidar, radar, and other technologies stop autonomous vehicles when a safety issue is evident. One of the safety mechanisms is triggered when network connectivity is disrupted between any vehicle and the central system. A reliable network infrastructure is essential to the success of this use case.
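
The connectivity-triggered safety mechanism described above can be pictured as a watchdog timer on the vehicle side. The following Python sketch is illustrative only: the class name, the 2-second timeout used in the example, and the injectable clock are assumptions made for this illustration and do not describe the actual MineStar implementation.

```python
import time
from typing import Callable


class ConnectivityWatchdog:
    """Reports a stop condition when the central system has been silent too long.

    Hypothetical helper for illustration; the timeout is a placeholder value.
    """

    def __init__(self, timeout_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self._clock = clock              # injectable so the logic can be tested
        self._last_seen = clock()

    def message_received(self) -> None:
        """Call whenever any valid message arrives from the central system."""
        self._last_seen = self._clock()

    def silence_s(self) -> float:
        """Seconds elapsed since the last message was received."""
        return self._clock() - self._last_seen

    def must_stop(self) -> bool:
        """True when the silence has exceeded the configured timeout."""
        return self.silence_s() > self.timeout_s


if __name__ == "__main__":
    now = [0.0]                          # simulated clock for the example
    watchdog = ConnectivityWatchdog(timeout_s=2.0, clock=lambda: now[0])

    now[0] = 1.5
    watchdog.message_received()          # link is healthy at t = 1.5 s
    now[0] = 3.0
    print("stop at t=3.0:", watchdog.must_stop())
    now[0] = 4.0
    print("stop at t=4.0:", watchdog.must_stop())
```

Injecting the clock keeps the timing logic deterministic in tests; a real system would rely on the safety controller's own timers rather than application code like this.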

Autonomous Dozing

Dozing can be done in several ways. The traditional way is to have an operator inside the cab driving the dozer. A dozer is often used to compact an area or to move material in a repetitive manner. In these cases, autonomy can play a big role in executing the repetitive motions. An operator can remotely move an automated dozer from one job to another, so a single operator can efficiently control several dozers. In addition to working more efficiently, operators also experience less physical fatigue thanks to their location in a comfortable office environment.

Autonomous Haulage

Autonomous trucks can haul resources from shovels or front-end loaders in a mine to a crusher area. When fully automated, trucks may continuously operate at optimum performance, thus reducing engine wear and improving tire performance and fuel efficiency. This reduces maintenance costs and downtime and increases productivity.

Autonomous Drilling

Accurate drilling and blasting help make every other aspect of a mining operation smoother, safer and more productive. Even minor deviations from the pattern can have a big impact, with unevenly blasted material that is harder and more costly to handle, resulting in higher cost per ton for the entire operation.

With high precision and more accurate depth tracking, autonomous drills can work far more accurately to plan. That means more accurate blasting and better-shot material, less time spent removing overburden, and higher productivity.

Most importantly, an autonomous drill removes operators from the dust, vibration and other hazardous conditions that surround a working drill — and keeps them safely away from blasting areas. No operator is safer than one who is overseeing the machine from a comfortable location far from the hazards the machine itself faces.

Advantages of Autonomous / Semi-Autonomous Mining Operations

- Higher Operational Efficiency: Programmed loader and truck cycles enable fast, precise operation that is controlled within equipment limitations. Bottlenecks are reduced for material handling, cycle times, and productivity.

- 24 x 7 Operations: Ore extraction can proceed in round-the-clock shifts with minimal transition time, thereby dramatically improving productivity and daily output.

- Improved Worker Safety: Improvements in worker safety and health are realized by connecting autonomous vehicles and other equipment, which can be operated remotely from a safe location with no risk to workers.

- Improved Mine Safety: Fewer workers are required in the mining pit. Depending on the level of automation, these workers could be limited to maintenance and other general functions.

- Improved Equipment Utilization: Vehicle automation provides more detailed telemetry on vehicle health and status, thereby facilitating predictive and better preventive maintenance scheduling and lowering the risk of incidents and abuse. Vehicle automation increases productivity and decreases vehicle maintenance costs.

- Ability to Attract a Tech-Savvy Young Talent Pool: Embracing technology has the added benefit of helping mining operations attract a new generation of tech-savvy talent by moving labor-intensive jobs out of the mine and into remote control centers. Fewer risks and safer conditions expand the pool of candidates to operate mine vehicles.

Fleet Management Network Requirements

- Must support IP connectivity from mine vehicles or other assets to a local server

- Minimum throughput capability: 500 kbps

- Minimum client radio connection rate: 2 Mbps

- Must support wireless roaming with a roaming time of less than 250 ms

- Maximum loss of connectivity: 3 seconds

- Must support the following protocols:

Autonomous Haulage System (AHS) Network Requirements

- Must support IP connectivity from mine vehicles or other assets to a local server

- Consecutive lost or out-of-order packets: 2 or fewer

- Minimum throughput capability: 6 Mbps

- Minimum client radio connection rate: 6 Mbps

- Must support wireless roaming with a roaming time of less than 250 ms

- Maximum loss of connectivity: 2 seconds

- Must support the following protocols:

Remote Command Network Requirements

- Must support IP connectivity from mine vehicles or other assets to a local server

- Minimum throughput capability: 10 Mbps

- Minimum client radio connection rate: 24 Mbps

- Consecutive lost or out-of-order packets: 2 or fewer

- Must support wireless roaming with a roaming time of less than 250 ms
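
The three requirement sets above (Fleet Management, AHS, and Remote Command) can be captured as data and compared against field measurements. The Python sketch below is a minimal illustration: the profile keys, field names, and the `violations` helper are hypothetical, and only the numeric thresholds come from the lists above. Protocol support and IP reachability are not modeled.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class NetworkProfile:
    """Thresholds for one application, taken from the requirement lists."""
    name: str
    min_throughput_mbps: float
    min_client_rate_mbps: float
    max_roam_ms: float                          # roaming must be strictly faster
    max_outage_s: Optional[float] = None        # None: not stated for this profile
    max_consecutive_loss: Optional[int] = None  # lost or out-of-order packets


PROFILES = {
    "fleet_management": NetworkProfile(
        "Fleet Management", 0.5, 2, 250, max_outage_s=3),
    "ahs": NetworkProfile(
        "Autonomous Haulage System", 6, 6, 250,
        max_outage_s=2, max_consecutive_loss=2),
    "remote_command": NetworkProfile(
        "Remote Command", 10, 24, 250, max_consecutive_loss=2),
}


@dataclass(frozen=True)
class Measurement:
    """One set of observed values, for example from a drive test."""
    throughput_mbps: float
    client_rate_mbps: float
    roam_ms: float
    outage_s: float
    consecutive_loss: int


def violations(profile: NetworkProfile, m: Measurement) -> List[str]:
    """Return the names of the requirements that the measurement fails."""
    found = []
    if m.throughput_mbps < profile.min_throughput_mbps:
        found.append("throughput")
    if m.client_rate_mbps < profile.min_client_rate_mbps:
        found.append("client rate")
    if m.roam_ms >= profile.max_roam_ms:
        found.append("roaming time")
    if profile.max_outage_s is not None and m.outage_s > profile.max_outage_s:
        found.append("loss of connectivity")
    if (profile.max_consecutive_loss is not None
            and m.consecutive_loss > profile.max_consecutive_loss):
        found.append("consecutive packet loss")
    return found


if __name__ == "__main__":
    sample = Measurement(throughput_mbps=8.0, client_rate_mbps=12.0,
                         roam_ms=120.0, outage_s=1.0, consecutive_loss=1)
    for key, profile in PROFILES.items():
        failed = violations(profile, sample)
        print(f"{profile.name}: {'OK' if not failed else ', '.join(failed)}")
```

Keeping the thresholds in one table makes it easier to see that a network sized only for fleet management may not satisfy the stricter Remote Command profile.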

Caterpillar MineStar® Command for Hauling

Cat® Command for Hauling is an autonomous haulage solution that can increase your safety, efficiency, productivity and more. Trucks can travel to the loading and dump points, fueling stations, haul roads and more — all without the need for an operator to step on board.

Command for Hauling takes advantage of the most sophisticated technologies available to enable Cat® autonomous haul trucks to work safely and productively on busy mine sites. More than just an operator-free equipment system, Command for Hauling is a complete, next-generation haulage solution that delivers solid, bottom-line benefits for miners who need to work in difficult or inaccessible locations. Highly advanced safety systems enable Cat® autonomous haul trucks to operate reliably around other mining equipment, light vehicles, and site employees.

Command for Hauling integrates with all other Cat® MineStar® System capability sets for assignment, control, and machine health monitoring.

Command for Hauling – Network Requirements

Command for Hauling requires continual, uninterrupted communication with the moving machines as they traverse the zone of operations. The underpinning network technology requirement of Command for Hauling is the reliable transmission and reception of multicast, unicast, and broadcast packets at all points in the area of operations. To operate most effectively, the autonomous trucks need to continually update their location and receive a stream of position updates from the other site awareness machines throughout the mine. Additionally, all machines within the autonomous operating zone (AOZ) must reliably receive GPS correction broadcasts for positioning accuracy.

The handoff from one area of coverage to another is a network design challenge, because the handoff algorithms must be robust and efficient. Support for multicast traffic during handoffs is also a key requirement.
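
One way to evaluate handoff behavior during testing is to inspect the sequence numbers of packets received by a vehicle while it moves between coverage areas. The Python sketch below assumes a test stream that carries monotonically increasing sequence numbers; the function names and the default limit of 2 (borrowed from the consecutive-packet criterion in the requirement lists) are choices made for this illustration, not part of any Caterpillar or Cisco tool.

```python
from typing import Iterable, List


def longest_loss_burst(received: Iterable[int]) -> int:
    """Largest run of sequence numbers skipped between two forward arrivals.

    A packet that shows up late is still counted as missing at the moment
    it was skipped, so the result is conservative.
    """
    longest = 0
    highest = None
    for seq in received:
        if highest is None or seq > highest:
            if highest is not None:
                longest = max(longest, seq - highest - 1)
            highest = seq
    return longest


def longest_out_of_order_run(received: Iterable[int]) -> int:
    """Longest run of back-to-back arrivals older than the newest packet seen."""
    longest = 0
    run = 0
    highest = None
    for seq in received:
        if highest is not None and seq < highest:
            run += 1
            longest = max(longest, run)
        else:
            run = 0
            highest = seq
    return longest


def meets_loss_criterion(received: List[int], limit: int = 2) -> bool:
    """True when neither the loss burst nor the reorder run exceeds the limit."""
    return (longest_loss_burst(received) <= limit
            and longest_out_of_order_run(received) <= limit)


if __name__ == "__main__":
    clean_roam = [1, 2, 5, 3, 4, 6]   # two packets delayed across a handoff
    lossy_roam = [1, 2, 3, 7, 8]      # three packets lost across a handoff
    print("clean roam passes:", meets_loss_criterion(clean_roam))
    print("lossy roam passes:", meets_loss_criterion(lossy_roam))
```

Running the same check separately on unicast and multicast test streams would show whether multicast delivery degrades during handoffs.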

Cat® MineStar® Command for Hauling takes advantage of the most sophisticated technologies available to deliver a next-gen haulage solution - one that boosts safety, productivity, and availability on busy mine sites, especially those in difficult or remote locations.

Command for Hauling has forever changed how mining companies move material — allowing them to haul more efficiently with near-continuous operation and move more with fewer people on site. Proven on diverse operations around the globe, Command for Hauling is opening new possibilities for mines of all sizes, complexities and locations.

- Enhances safety by removing operators from hazardous or remote sites.

- Reduces costs for employee infrastructure and travel to remote sites.

- Improves efficiency, enables consistency in operations and provides near-continuous operation through the reduction of operational delays.

- Improves fuel efficiency and component life.

- Prevents machine damage and downtime due to misuse and overloading.

- Allows the instant alteration or redesign of mine maps to meet changing operational needs.

- Enables advanced assignment and tracking from a central location.

- Alerts maintenance personnel to machine faults, enabling repairs before failure and reducing downtime.

System Requirements

It is important to note that the Command for Hauling requirements must form the basis of the network design criteria and must not be compromised to accommodate the requirements of other applications. The network traffic from Command for Hauling should be protected through appropriate quality of service or segregation.

Quality of Service (QoS) Requirements

A quality of service (QoS) function to provide priority to Command for Hauling over background traffic will be required if other applications requiring significant bandwidth are running on the same network. In these cases, Command for Hauling traffic must be prioritized in the top queues.
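
The effect of placing Command for Hauling traffic in the top queue can be illustrated with a small strict-priority scheduler. The Python sketch below is a conceptual model only: the traffic class names and their ordering are assumptions made for this example, and real switches and radios implement queuing in hardware with their own classification rules.

```python
import heapq
from itertools import count
from typing import Any, List, Tuple

# Hypothetical traffic classes; a lower number means a higher priority.
PRIORITY = {
    "command_for_hauling": 0,
    "fleet_management": 1,
    "background": 2,
}


class StrictPriorityScheduler:
    """Always sends the highest-priority packet waiting in the queue."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, Any]] = []
        self._arrival = count()   # tie-breaker keeps FIFO order within a class

    def enqueue(self, traffic_class: str, packet: Any) -> None:
        # Unknown classes are treated as background traffic.
        priority = PRIORITY.get(traffic_class, PRIORITY["background"])
        heapq.heappush(self._heap, (priority, next(self._arrival), packet))

    def dequeue(self) -> Any:
        """Remove and return the next packet to transmit."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    scheduler = StrictPriorityScheduler()
    scheduler.enqueue("background", "video-1")
    scheduler.enqueue("command_for_hauling", "position-1")
    scheduler.enqueue("background", "video-2")
    scheduler.enqueue("command_for_hauling", "position-2")
    scheduler.enqueue("fleet_management", "dispatch-1")

    while len(scheduler):
        print(scheduler.dequeue())
```

Strict priority is used here because it is the simplest policy to reason about; it can starve lower classes under sustained load, which is one reason the text also mentions segregation as an alternative.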

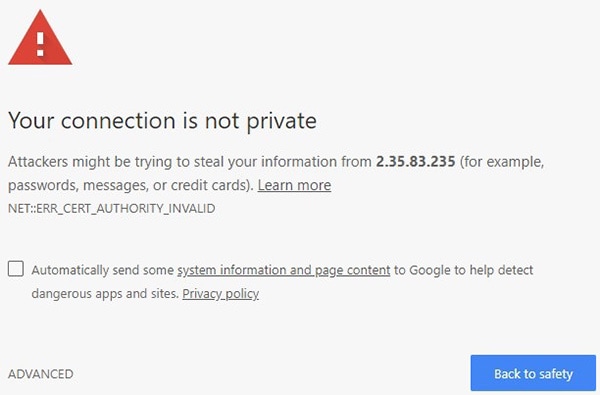

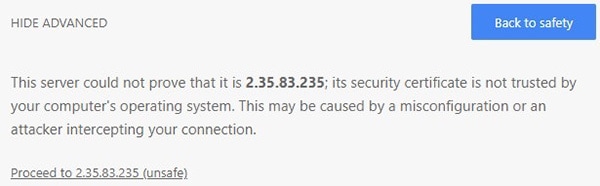

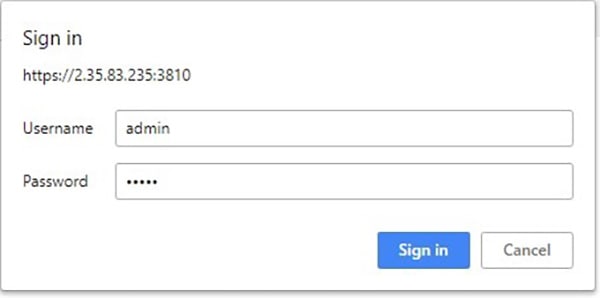

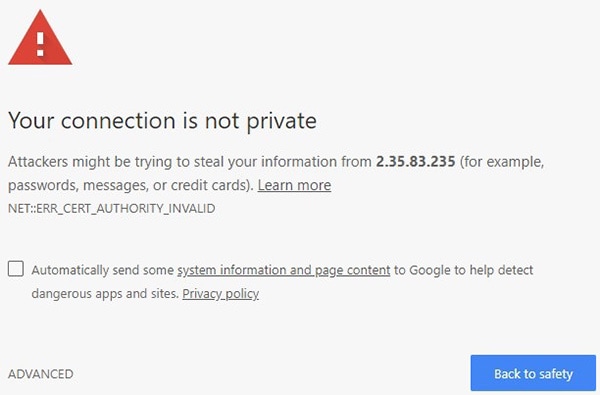

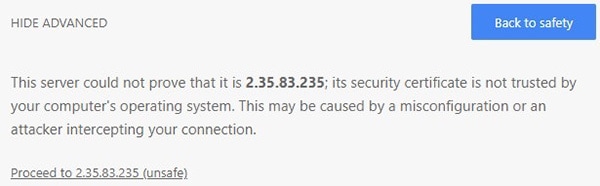

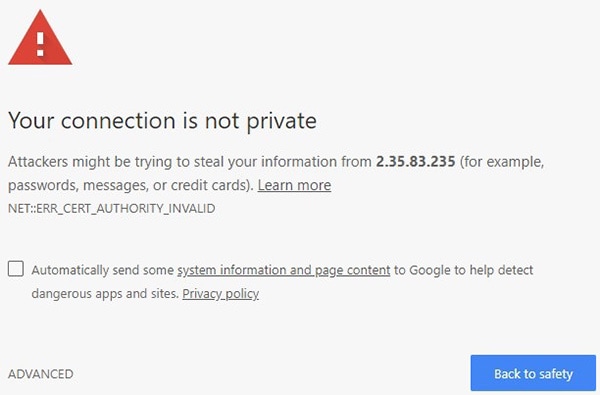

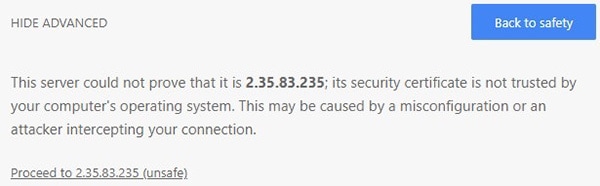

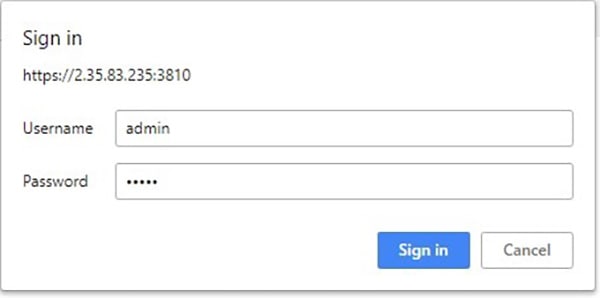

Security Requirements

The site communications infrastructure must be protected from access by unauthorized or unqualified individuals and applications through industry best practices for securing mission critical data networks including but not limited to the following:

- Access to configuration of all network components must be limited to authenticated, authorized network management and support personnel.

- Adequate security firewalls must be in place to protect against unauthorized access via public networks.

- Qualified Caterpillar and Caterpillar dealer technical support personnel must be allowed appropriate access to the network to administer and support the Command for Hauling system.

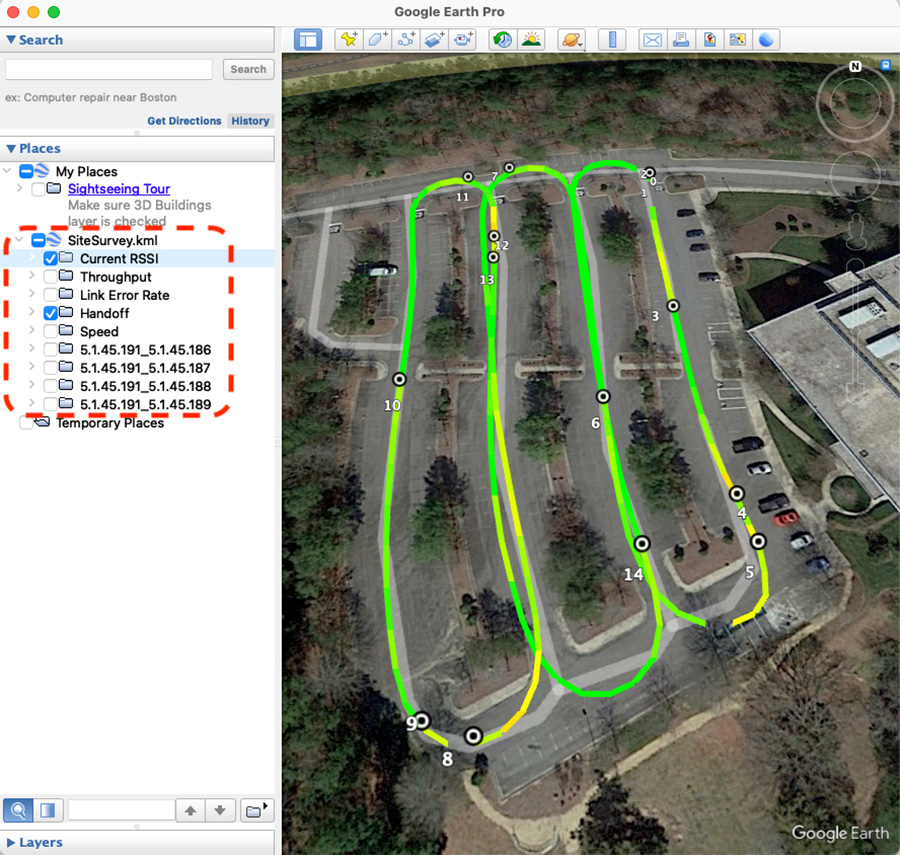

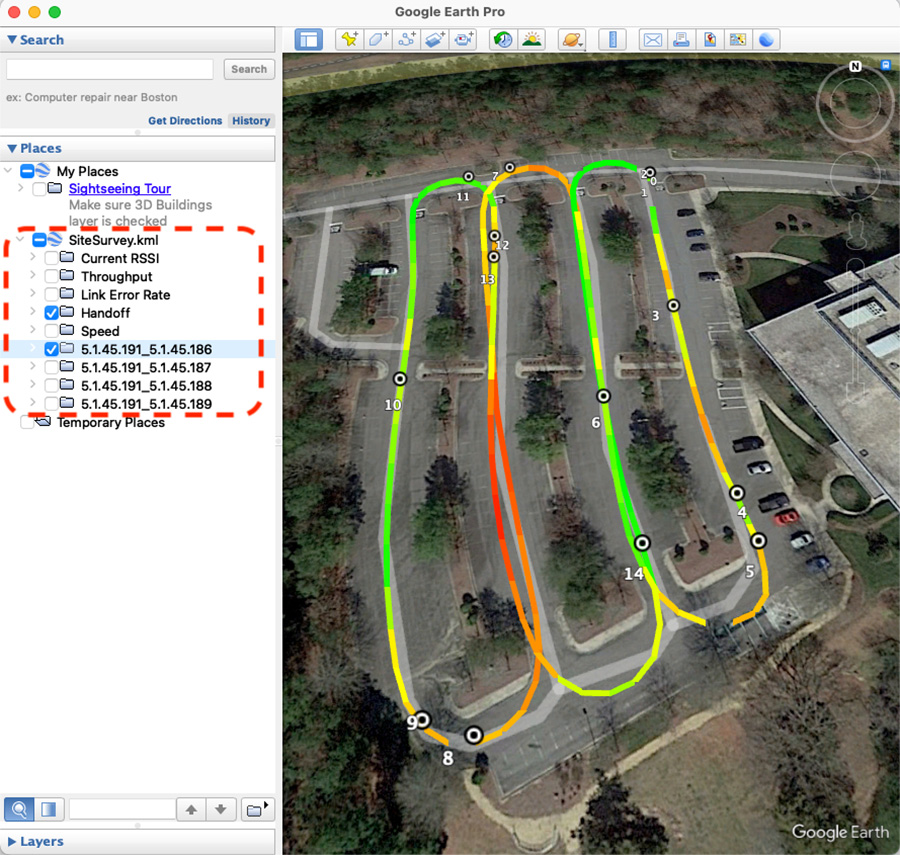

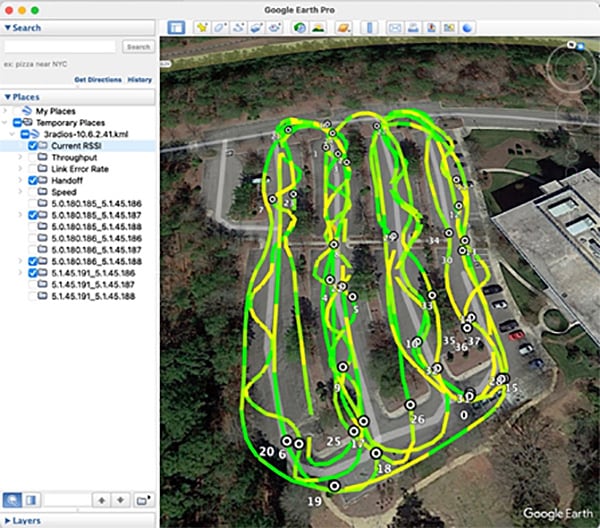

Network Management and Maintenance Requirements

The wireless RF environment must be measured frequently and its quality maintained to assure coverage. In an active mining environment, the RF characteristics change as the mine moves or terrain features are removed. It is strongly suggested that responsibility for wireless network coverage and availability be well integrated with mine operations so that changes can be anticipated and responded to quickly by surveying and altering the coverage as necessary.

Understanding the health of the network and equipping the network support team with the right tools is important to getting the most productivity from Command products. A Wi-Fi spectrum analyzer for locating and mitigating interference is a key tool.

Chapter 2: CURWB Architecture to support Autonomous and Tele-Remote Operations within Open-Pit Mines

This chapter provides an overview of the Cisco Ultra-Reliable Wireless Backhaul (CURWB) technology, the wired and wireless network components needed to build out the solution, and the high-level and low-level architecture to support autonomous and tele-remote operations within an open-pit mine. It closes with design best practices for high availability, QoS, and security.

Cisco Ultra-Reliable Wireless Backhaul (CURWB) Overview

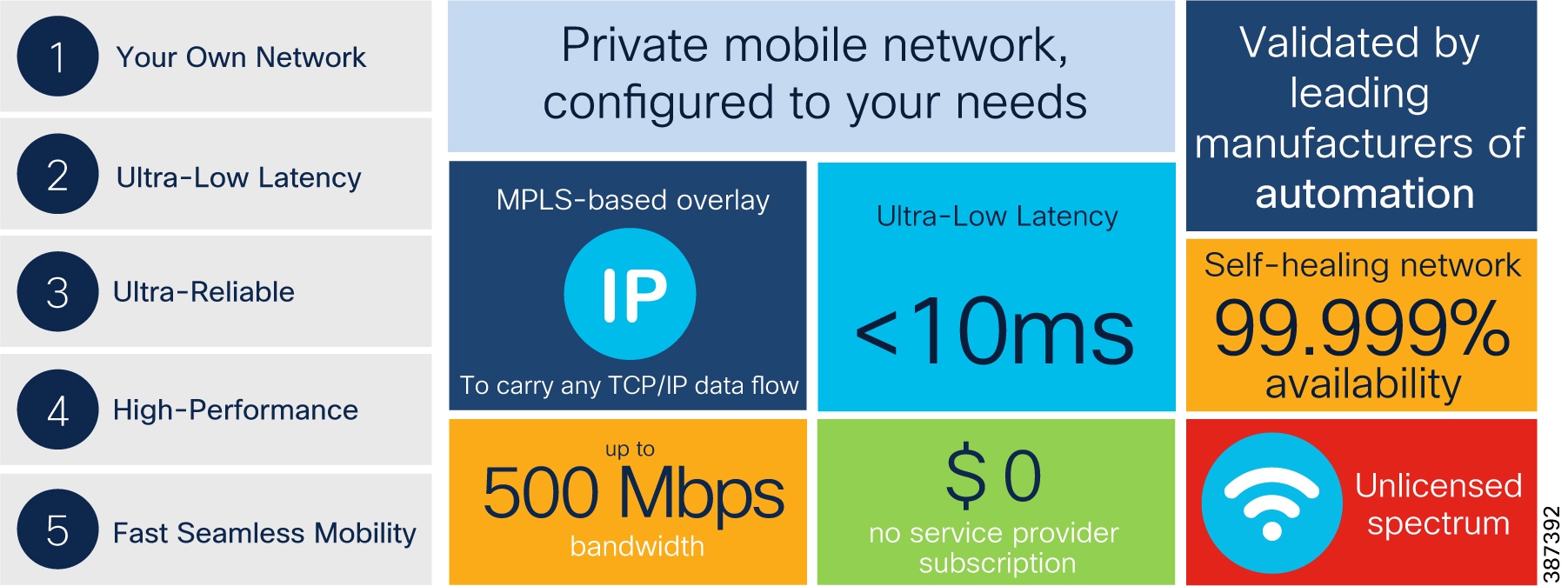

Figure 3 Key CURWB capabilities

Key technical requirements met by CURWB for the mining vertical:

■ Supports PROFINET and CIP Safety

■ Ultra-low latency of < 10 ms

■ Seamless roaming (handoff): multi-frequency capability with 0 ms handoff

CURWB - Key Technology Pillars

Three key technologies form the foundation of the Cisco Ultra-Reliable Wireless Backhaul (CURWB) solution.

■ Prodigy 2.0: MPLS-based transmission protocol built to overcome the limits of standard wireless protocols.

■ Fluidity: Proprietary fast-roaming algorithm for vehicle-to-wayside communication with a 0 ms roam delay and no roam loss for speeds up to 200 mph or 360 km/hour.

■ TITAN: Proprietary fast-failover high-availability mechanism that provides hardware redundancy and carrier-grade availability.

Prodigy 2.0 – MPLS Overlay

CURWB uses Prodigy, a proprietary MPLS-based wireless transmission protocol, to discover and create label-switched paths (LSPs) between mesh-point radios and mesh end(s). Prodigy makes wireless mesh networks more resilient in both fixed and mobility deployments. MPLS provides an end-to-end packet delivery service operating between Layer 2 and Layer 3 of the OSI network stack. It relies on label identifiers, rather than the network destination address as in traditional IP routing, to determine the sequence of nodes to be traversed to reach the end of the path.

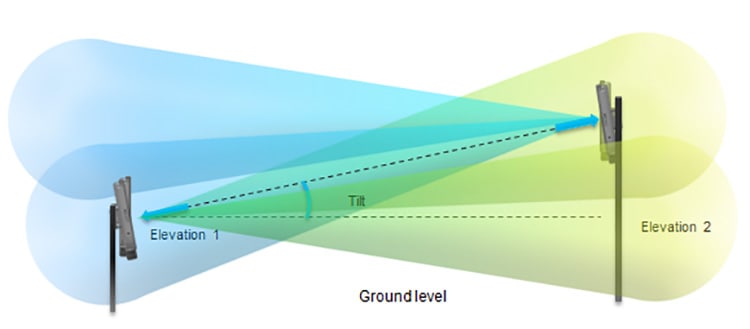

Fluidity

Fluidity enables a vehicle that is moving between multiple infrastructure APs to maintain end-to-end connectivity with seamless handoff between APs. A vehicle radio negotiates with the infrastructure APs and forms a new wireless connection to an infrastructure AP with better signal quality before breaking or losing its currently active wireless connection.

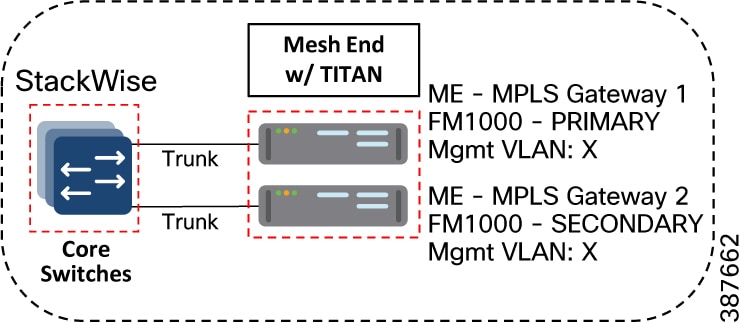

TITAN – Hardware Redundancy and High-Availability

TITAN is a proprietary fast-failover function providing high availability and protection against hardware failures. The feature virtually guarantees uninterrupted service for mission-critical applications where safety and/or operations would otherwise be compromised by failure of a single radio or gateway device. Leveraging an MPLS-based protocol, TITAN achieves device failover within 500 ms in both L2 and L3 networks.

Wired Network Components

Cisco Catalyst 9300 Access Layer Switch

Figure 4 Cisco Catalyst 9300 Access Layer Switch

The Cisco Catalyst 9300 Series Switches are the next generation of enterprise-class, stackable, aggregation layer switches. They provide full convergence between wired and wireless networks on a single platform.

■ Delivers 480 Gbps stacking bandwidth capacity.

■ Flexible uplinks: Cisco Multigigabit, 1 Gbps, 10 Gbps, 25 Gbps, and 40 Gbps. Fixed (C9300L) and modular (C9300) options.

■ Flexible downlinks: Cisco Multigigabit, 5 Gbps, 2.5 Gbps, or 1 Gbps copper, or 1 Gbps fiber. Perpetual Cisco UPOE+, Cisco UPOE and PoE+ options.

■ Supports ETA, AVB, Cisco Umbrella cloud security, MACsec-256 encryption, hot patching, NSF/SSO, redundant power and fans.

Cat-9300 Datasheet and switch model selector: https://www.cisco.com/c/en/us/products/collateral/switches/catalyst-9300-series-switches/nb-06-cat9300-ser-data-sheet-cte-en.html

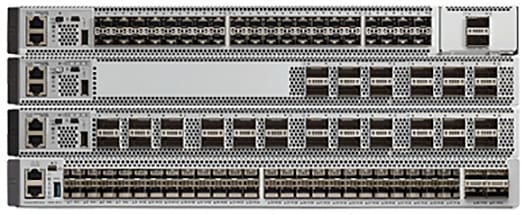

Catalyst 9500 Distribution/Core Layer Switch

Figure 5 Catalyst 9500 Distribution/Core Layer Switch

The Cisco Catalyst 9500 Series Switches are the next generation of enterprise-class, stackable, core layer switches.

■ 4-core x86, 2.4-GHz CPU, 16-GB DDR4 memory, and 16-GB internal storage

■ Up to 6.4-Tbps switching capacity with up to 2 Bpps of forwarding performance

■ Up to 32 nonblocking 100 Gigabit Ethernet QSFP28 ports

■ Up to 32 nonblocking 40 Gigabit Ethernet QSFP+ ports

■ Up to 48 nonblocking 25 Gigabit Ethernet SFP28 ports

■ Up to 48 nonblocking 10 Gigabit Ethernet SFP+ ports

Cat-9500 Datasheet and switch model selector: https://www.cisco.com/c/en/us/products/collateral/switches/catalyst-9500-series-switches/nb-06-cat9500-ser-data-sheet-cte-en.html

Cisco IE3x00 Rugged Industrial Switches

Figure 6 Cisco IE3x00 Rugged Industrial Switches

Cisco Catalyst IE3200 Rugged Series switches feature advanced, full Gigabit Ethernet with a modular, future-proof design. Expandable up to 26 ports in a compact form factor, these rugged switches are optimized for size, power, and performance.

Cisco Catalyst IE3300 Rugged Series switches deliver high-speed connectivity of up to 10 Gigabit Ethernet in a compact form factor, and are designed for a wide range of industrial applications where hardened products are required. The modular design of the Cisco Catalyst IE3300 Rugged Series offers the flexibility to expand to up to 26 ports of Gigabit Ethernet, or up to 24 ports of Gigabit Ethernet and 2 ports of 10 Gigabit (10G) Ethernet, with a range of expansion module options.

The Cisco Catalyst IE3400 Rugged Series switches deliver advanced, high-speed Gigabit Ethernet connectivity in a compact form factor, and are designed for a wide range of industrial applications where hardened products are required. The modular design of the Cisco Catalyst IE3400 Rugged Series offers the flexibility to expand up to 26 ports of Gigabit Ethernet with a range of expansion module options.

All of the above platforms are built to withstand harsh environments in manufacturing, energy, ports and terminals, transportation, mining, smart cities, and oil and gas.

These switches run Cisco IOS® XE, a next-generation operating system with built-in security and trust, featuring secure boot, image signing, and the Cisco® Trust anchor module.

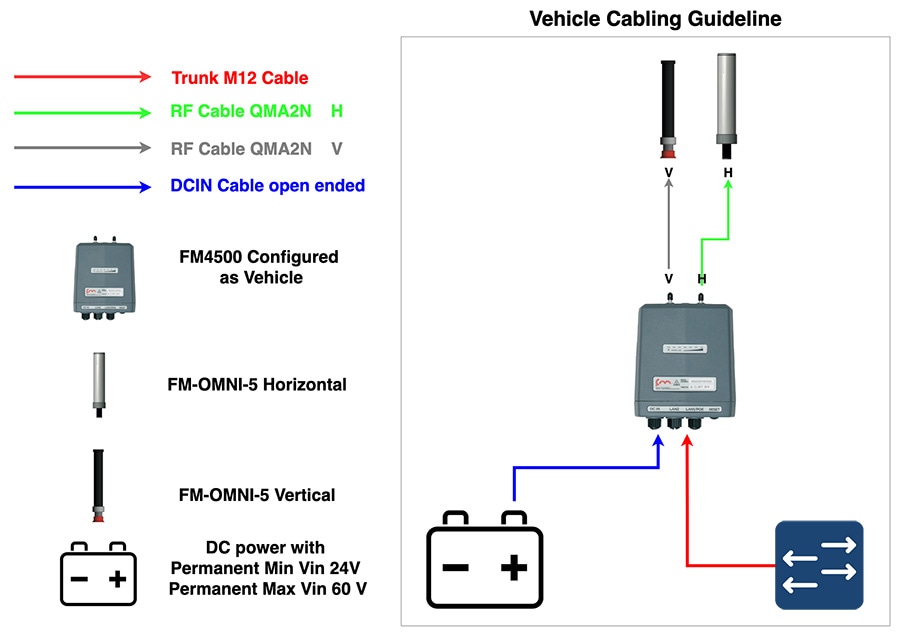

Cisco IE3400H Heavy Duty Industrial Switch

Figure 7 Cisco IE3400H Heavy Duty Industrial Switch

The Cisco Catalyst IE3400 Heavy Duty Series switches deliver advanced capabilities similar to those of the Cisco Catalyst IE3400 Rugged Series in environments that have heavy exposure to dust and water. These switches are available with 8, 16, or 24 Fast Ethernet (D-coded) or Gigabit Ethernet (X-coded) M12 interfaces. The switches can be wall mounted and deployed without a housing cabinet.

Cisco IE3400H Datasheet: https://www.cisco.com/c/en/us/products/collateral/switches/catalyst-ie3400-heavy-duty-series/datasheet-c78-742313.html

Note: Because of the high level of vibration on autonomous mining vehicles, the IE3400H series of switches is highly recommended, since these switches are more rugged and handle vibration better.

Note: The IE3400H does not support PoE, so the CURWB radios onboard the vehicle must be powered using DC power.

Wireless Network Components

CURWB Mesh End Gateway

All Fluidity and fixed infrastructure deployments need a mesh end, which functions as a gateway between the wireless and wired networks. It is highly recommended that all systems using Fluidity use a redundant pair of mesh end gateways to terminate the MPLS tunnels, aggregate traffic, and act as the interface between the wired and wireless networks. Mesh End gateways can also be thought of as MPLS label edge routers (LERs) on the infrastructure network. The Mesh End gateway is responsible for encapsulating the traffic coming in from the wired network into the Fluidity overlay network using MPLS, and for de-encapsulating MPLS traffic and delivering standard datagrams onto the wired network.

CURWB gateways are rugged, industrial grade network appliances that make setup and management of medium and large-scale CURWB Fluidity and Fixed Infrastructure deployments fast and easy.

CURWB Gateway models comparison

Figure 8 FM1000 and FM10000 Mesh End Gateway

Note: A CURWB radio such as an FM3500 can also be configured as a Mesh End gateway. It can support throughput up to 250 Mbps.

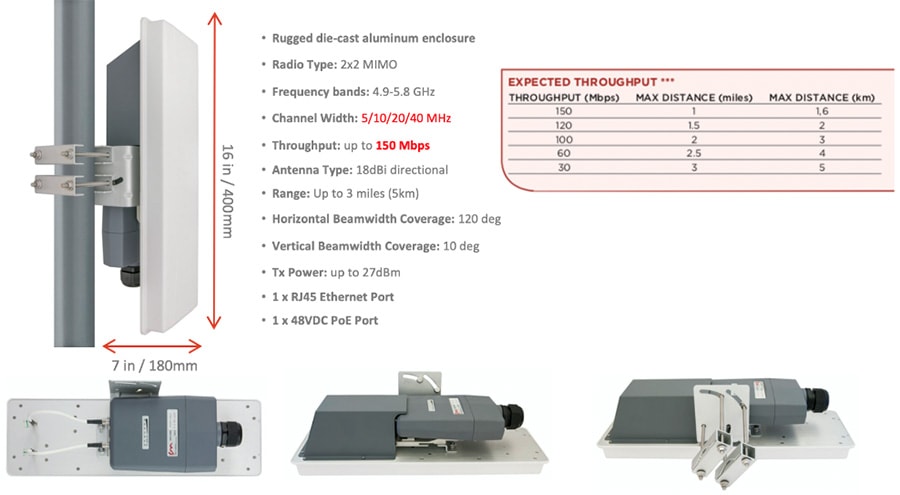

CURWB FM3200 Base Radio Unit

The FM3200 Base is a rugged, long-lasting radio with an integrated 120-degree sector antenna. It supports point-to-point, point-to-multipoint, mesh, and mobility networks with a real throughput of up to 150 Mbps. Within a mining deployment, this radio is best deployed on communications towers that act as a hub for PtMP deployments, aggregating incoming traffic from multiple trailers.

Figure 9 FM3200 Base Radio Unit

FM3200 Base datasheet: https://www.cisco.com/c/en/us/products/collateral/wireless/ultra-reliable-wireless-backhaul/datasheet-c78-744548.html

CURWB FM4200 Fiber Radio Unit

Figure 10 FM4200 Fiber Radio Unit

The Cisco FM4200 Fiber is a high-performance mobility-communications radio transceiver, designed to deliver fast and stable connectivity particularly for mission-critical use cases and in extreme environments. Within a mining deployment, an FM4200 Fiber radio unit can be used as the backhaul radio (spoke radio for a PtP or PtMP fixed wireless deployment) on mining Trailers or Poles.

FM4200 Fiber datasheet: https://www.cisco.com/c/en/us/products/collateral/wireless/ultra-reliable-wireless-backhaul/datasheet-c78-744550.html

CURWB FM4500 Radio Unit

Figure 11 FM4500 MOBI and FM4500 Fiber Radio Unit

The FM4500 MOBI comes in a rugged die-cast aluminum housing that has been purpose-built for harsh environments such as those found within the mining vertical. It has industrial-grade anti-vibration M12 ports and a QMA connector. Optionally, the fiber-enabled FM4500 FIBER, which supports a fiber port with an XCO connector, can be ordered instead.

The Ethernet model has 2 x 10/100/1000 M12 ports. The Fiber model has 1 x Dual LC ruggedized SFP XCO connector (transceiver not included) and 1 x 10/100/1000 M12 port. The radio can either be powered using PoE+ output from a switch or 48V DC input from an onboard power source.

Note: The radio can also be powered using both DC power and PoE at the same time, so that each power source acts as a backup for the other.

The FM4500 MOBI is the recommended radio model to be deployed on board the vehicles, since it is vibration resistant. Within a mine deployment this is also the recommended radio model to be deployed as an access radio providing RF coverage to the autonomous vehicles within the access layer.

Cisco FM4500 MOBI data sheet: https://www.cisco.com/c/en/us/products/collateral/wireless/ultra-reliable-wireless-backhaul/datasheet-c78-744552.html

Cisco FM4500 FIBER data sheet: https://www.cisco.com/c/en/us/products/collateral/wireless/ultra-reliable-wireless-backhaul/datasheet-c78-744551.html

CURWB radios SFP compatibility matrix: https://tmgmatrix.cisco.com/?npid=4601&npid=4602&npid=5001

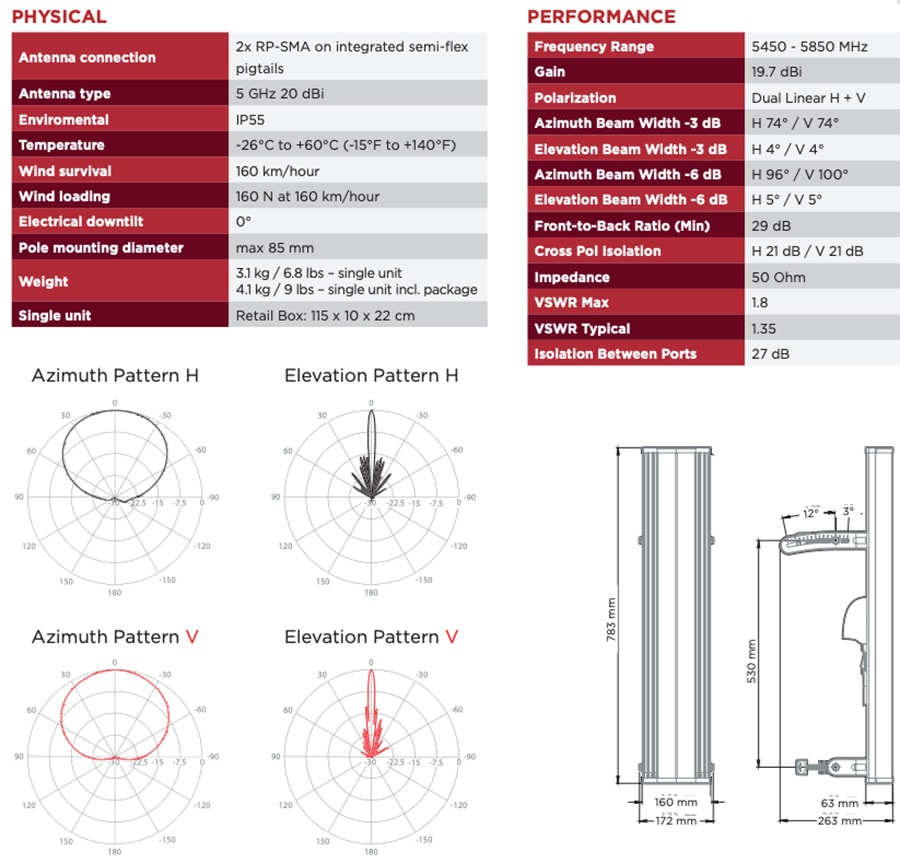

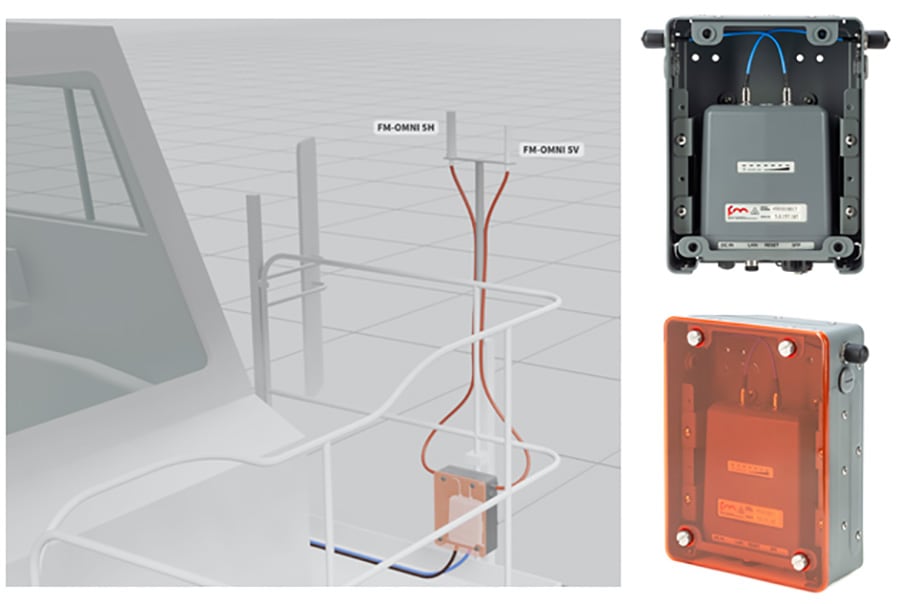

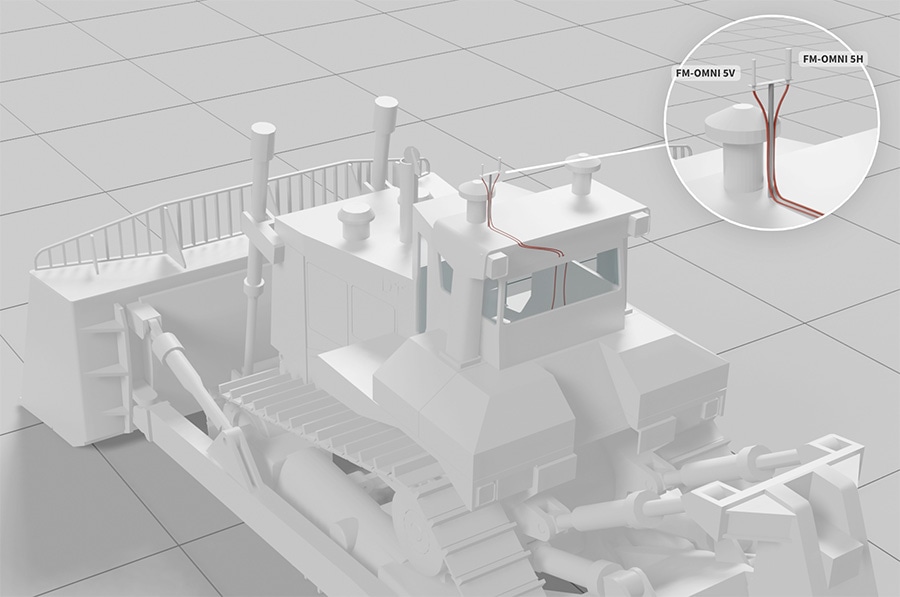

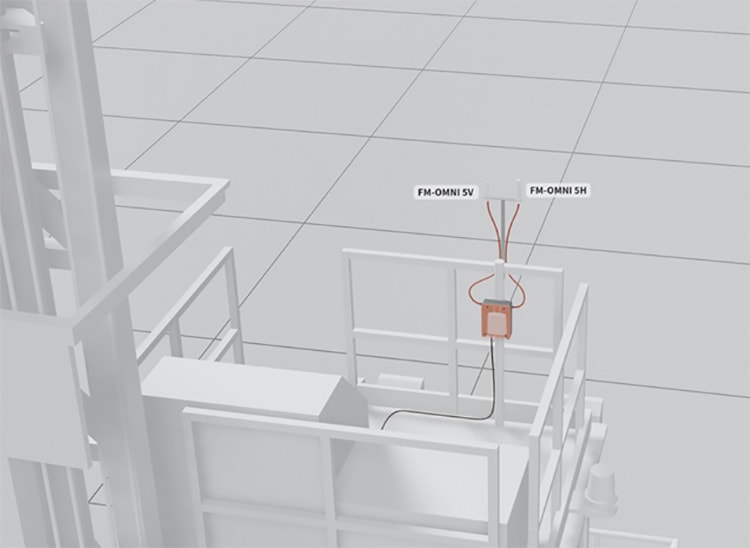

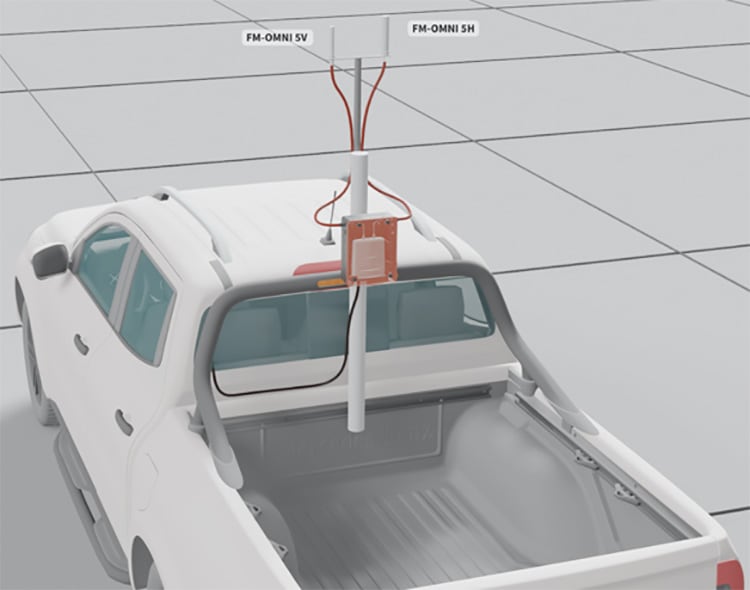

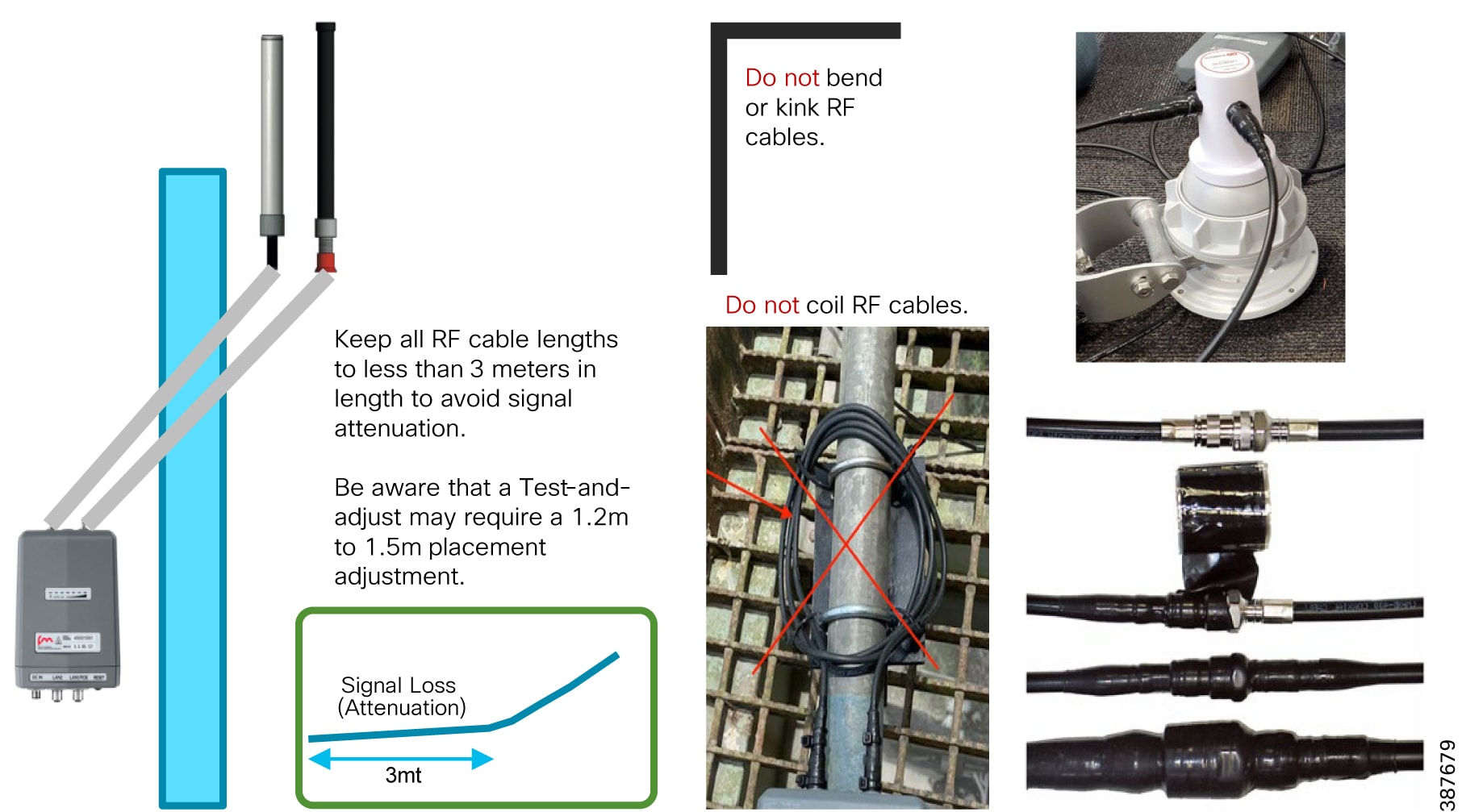

FM-OMNI-5-KIT Antenna

The FM-OMNI-5-KIT antenna consists of two antennas, a FM-OMNI-5-H which is a horizontally polarized antenna and an FM-OMNI-5-V which is a vertically polarized antenna. FM-OMNI-5-H horizontally polarized omnidirectional antennas are designed for long-lasting operation with outdoor access points. The FM-OMNI-5-V vertically polarized omnidirectional design utilizes a linear array, encapsulated in a heavy-duty fiberglass radome with a thick-walled mounting base for reliable, long-term use.

The rugged design of these antennas withstands harsh environments, making them ideal for industrial wireless applications. The antennas are DC grounded for ESD protection of radio components.

Figure 12 FM-OMNI-5-KIT Antenna Specifications

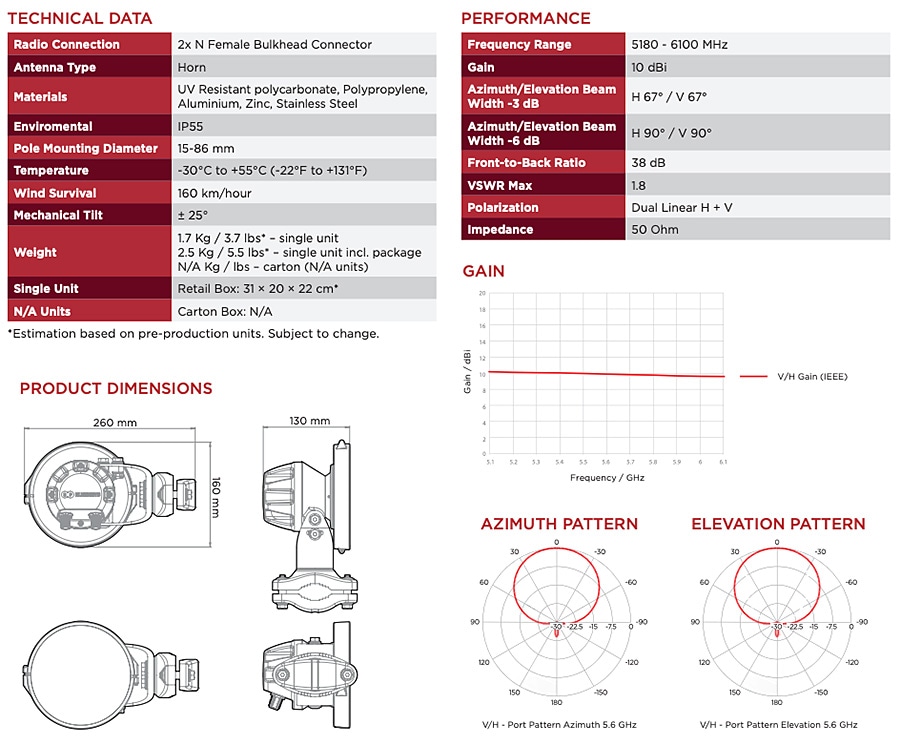

FM-Horn-90 Antenna

The FM-Horn-90 is a connectorized symmetrical horn antenna with carrier-class performance. It offers unique RF performance in a very compact package. Scalar horn antennas have symmetrical beams with identical patterns in the vertical and horizontal planes. Extremely small side lobes result in greatly decreased interference. FM-Horn-90 antennas are ideal for covering areas with close-in clients where null zone issues occur. Their radiation pattern and compact size make high-density AP clusters and radio co-location practical. The FM-Horn-90 antenna is equipped with N-female connectors.

Figure 13 FM-Horn-90 Specifications

FM-SECTOR-90-HV

The FM-SECTOR-90-HV addresses customer requirements for exceptional RF performance with an innovative, patent-pending Back-Shield™ system of frequency-selective reflectors that are incorporated into the antenna structure to attenuate side lobes and backside near-field radiation. All FM-SECTOR-90-HV sectors are designed with independent horizontal and vertical polarization antenna elements for unmatched RF performance.

Figure 14 FM-Sector-90-HV Antenna Specifications

The FM-Sector-90-HV is a sector antenna that provides 90-degrees of coverage and can be used with either the FM3200 ENDO or the FM3500 on the communications towers for PtP or PtMP deployments.

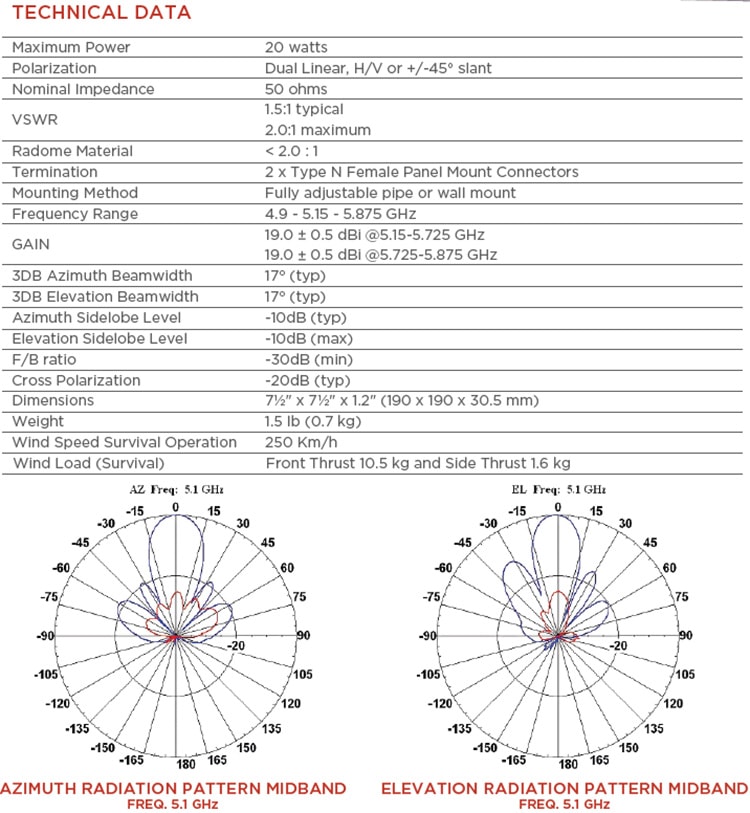

FM-PANEL-19 Antenna

The FM-PANEL-19 is a dual-polarized Directional Panel Antenna with a gain of 19 dBi and is suitable for use in CURWB PtP and PtMP deployments. Within a mining deployment these can be deployed along with CURWB backhaul radios on trailers.

Figure 15 FM-PANEL-19 Antenna Specifications

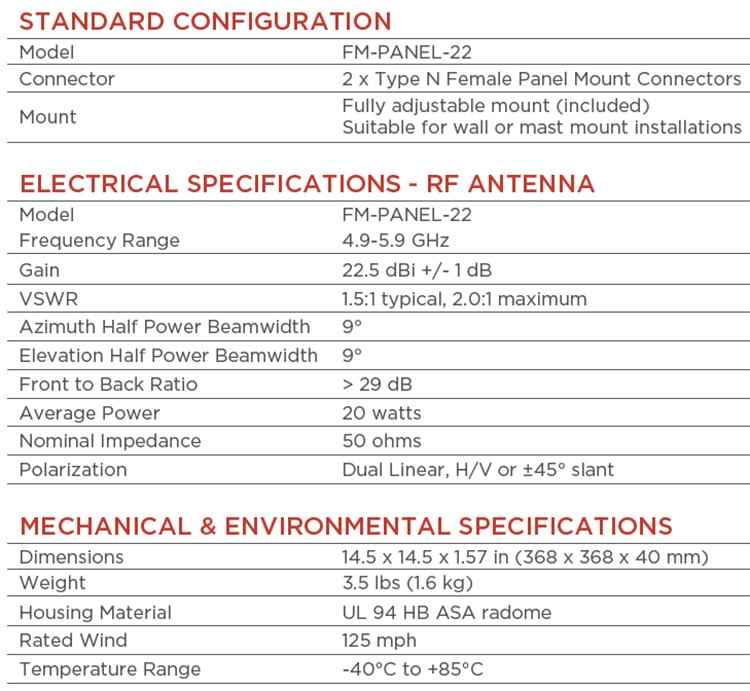

FM-PANEL-22 Antenna

The FM-PANEL-22 is a dual-polarized Directional Panel Antenna with a gain of 22 dBi and is suitable for use in CURWB PtP and PtMP deployments. Within a mining deployment these can be deployed along with CURWB backhaul radios on trailers.

Figure 16 FM-PANEL-22 Antenna Specifications

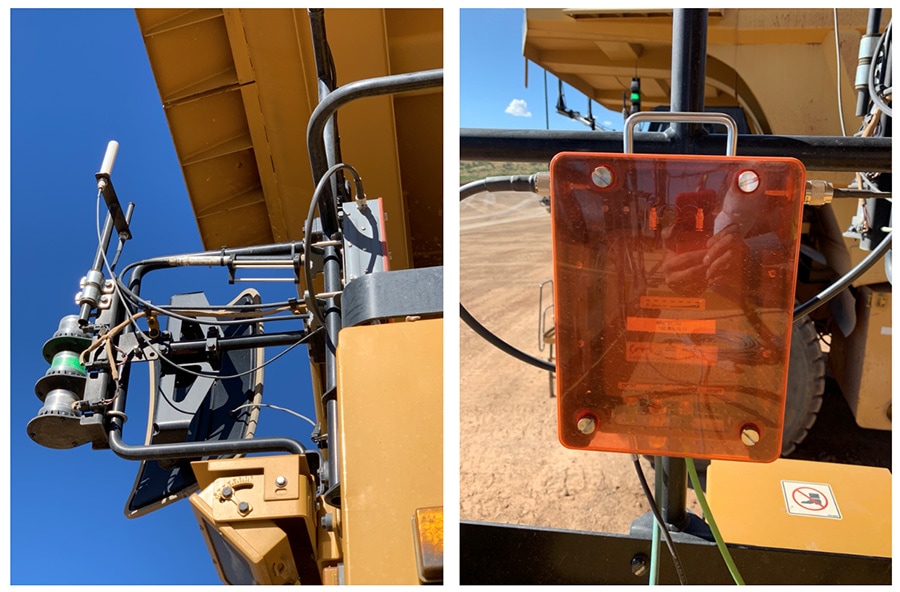

FM-Shield Ruggedized Enclosure

The FM-SHIELD is a proprietary ruggedized enclosure designed to ensure long-term durability and reliability of radios that are installed in outdoor environments.

If a 3200-series, 3500 ENDO, 4200-series or 4500-series radio is installed in outdoor conditions, it is compulsory to install the radio inside an FM-SHIELD. It provides additional protection from impact, salt air and water.

■ Steel protective enclosure with polycarbonate cover, designed to protect against high-pressure water spray and impacts from heavy, fast-moving objects.

■ Proven in high-vibration environments, including all vehicles operated in a terminal environment.

■ N-Female antenna connectors for easy integration and minimal RF signal loss.

■ Designed for installation within automation cabinets and on vehicle hand railings and antenna poles; can be installed in either a horizontal or vertical position.

■ Semi-transparent front panel with self-retaining screws for easy inspection.

Figure 17 FM-Shield Ruggedized Enclosure

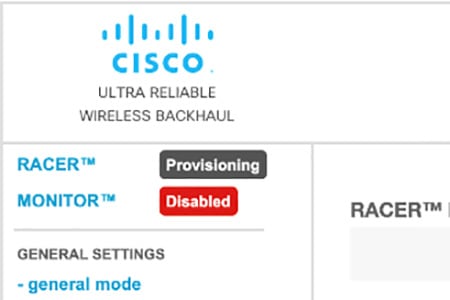

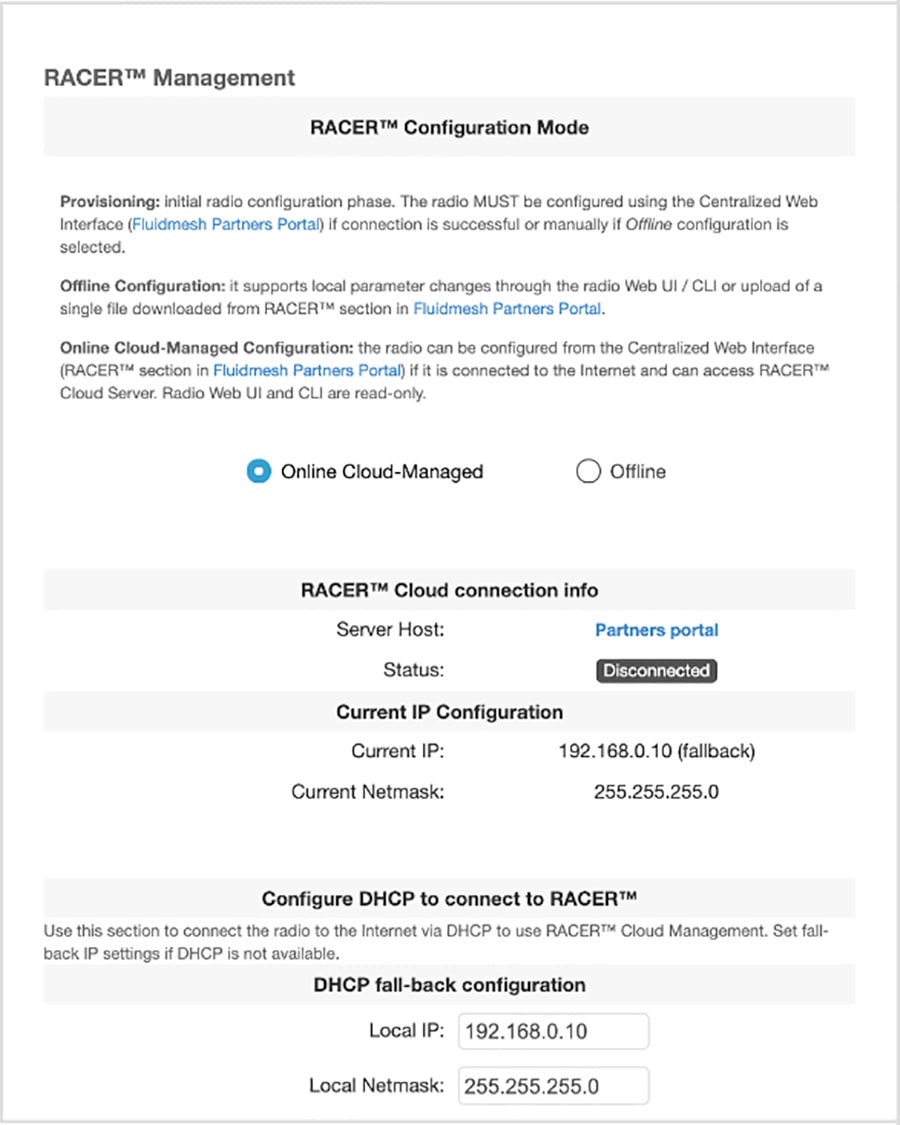

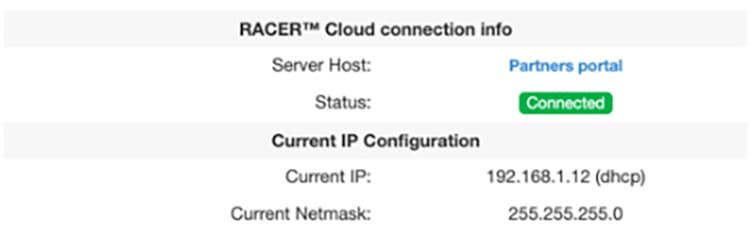

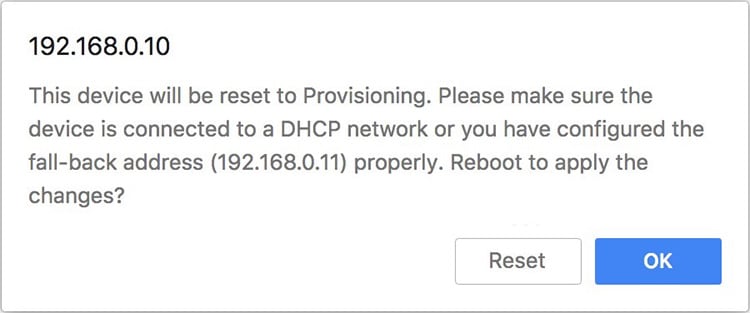

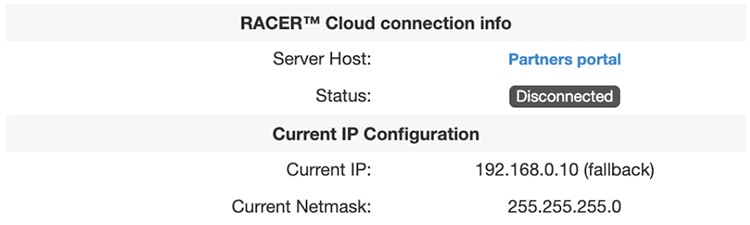

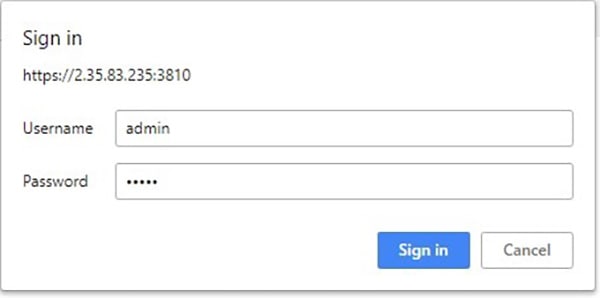

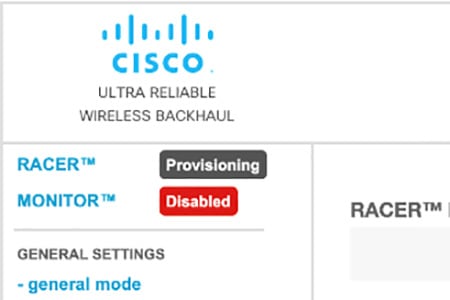

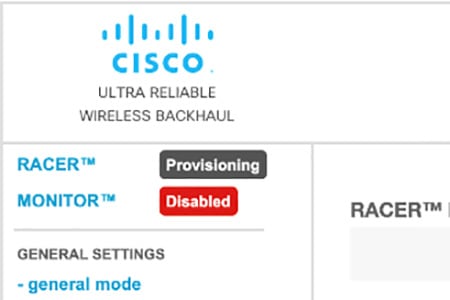

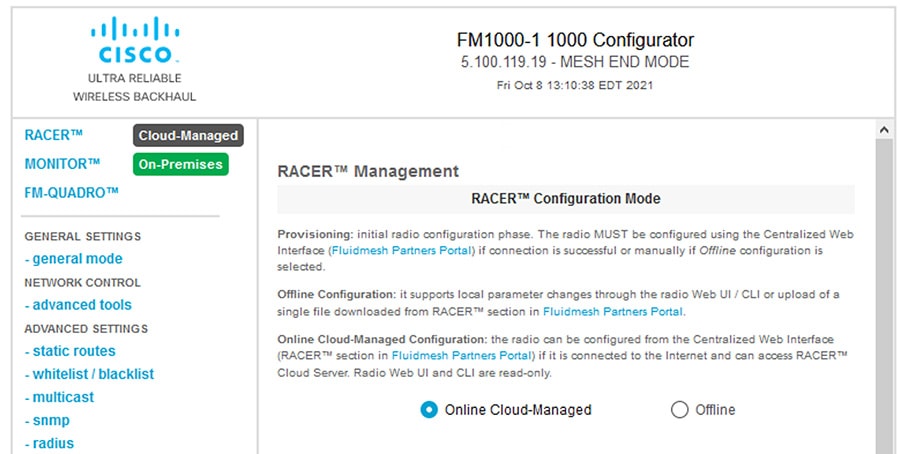

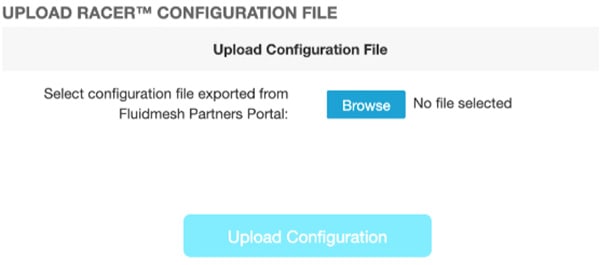

RACER

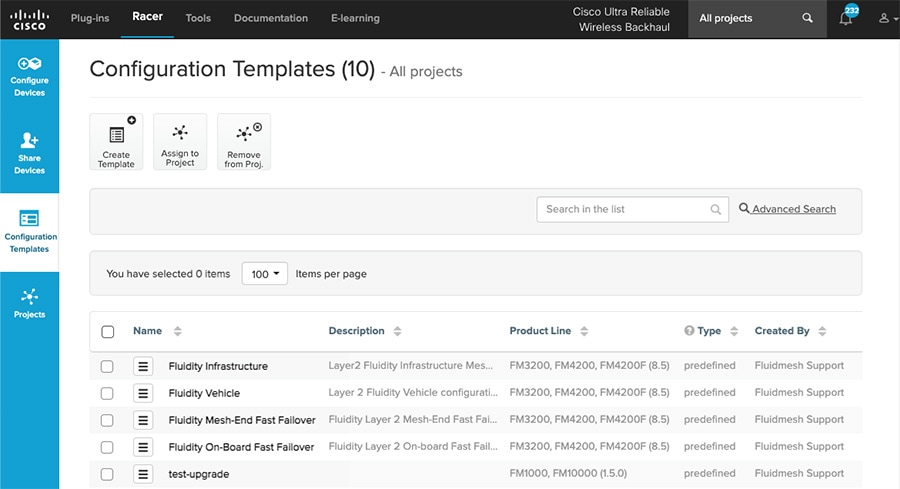

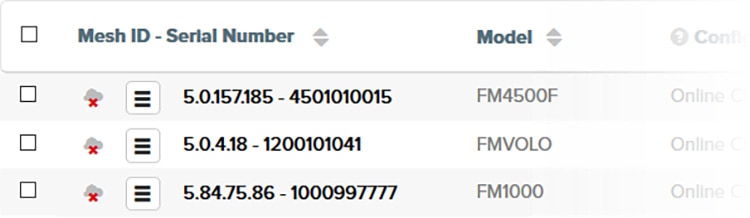

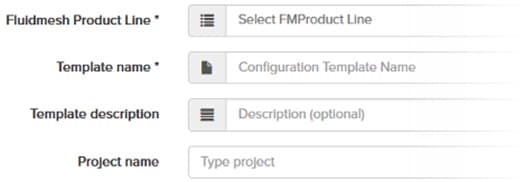

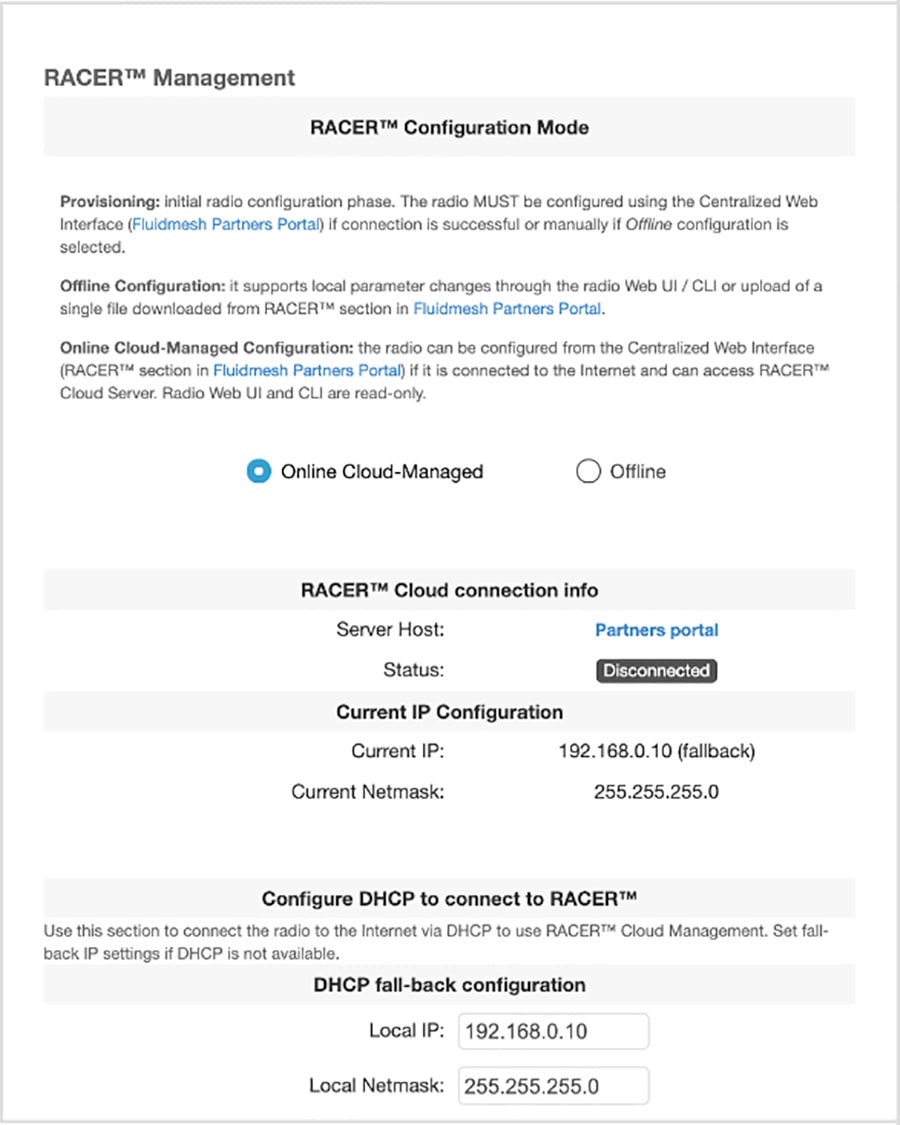

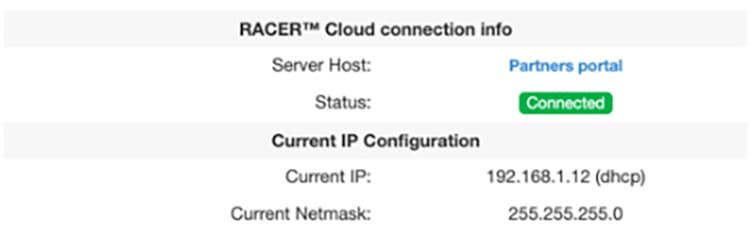

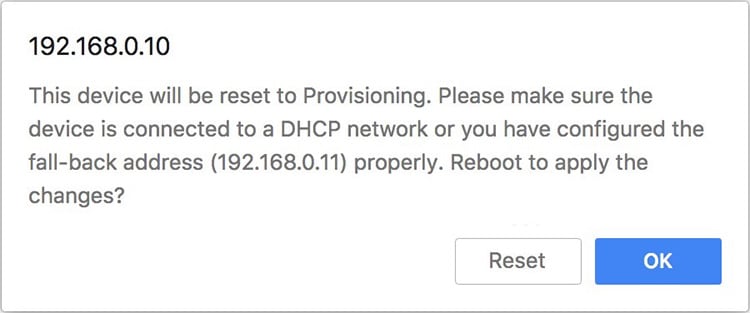

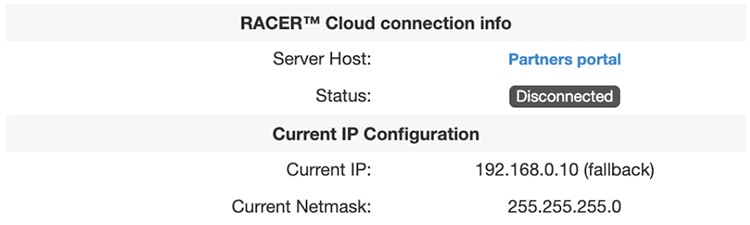

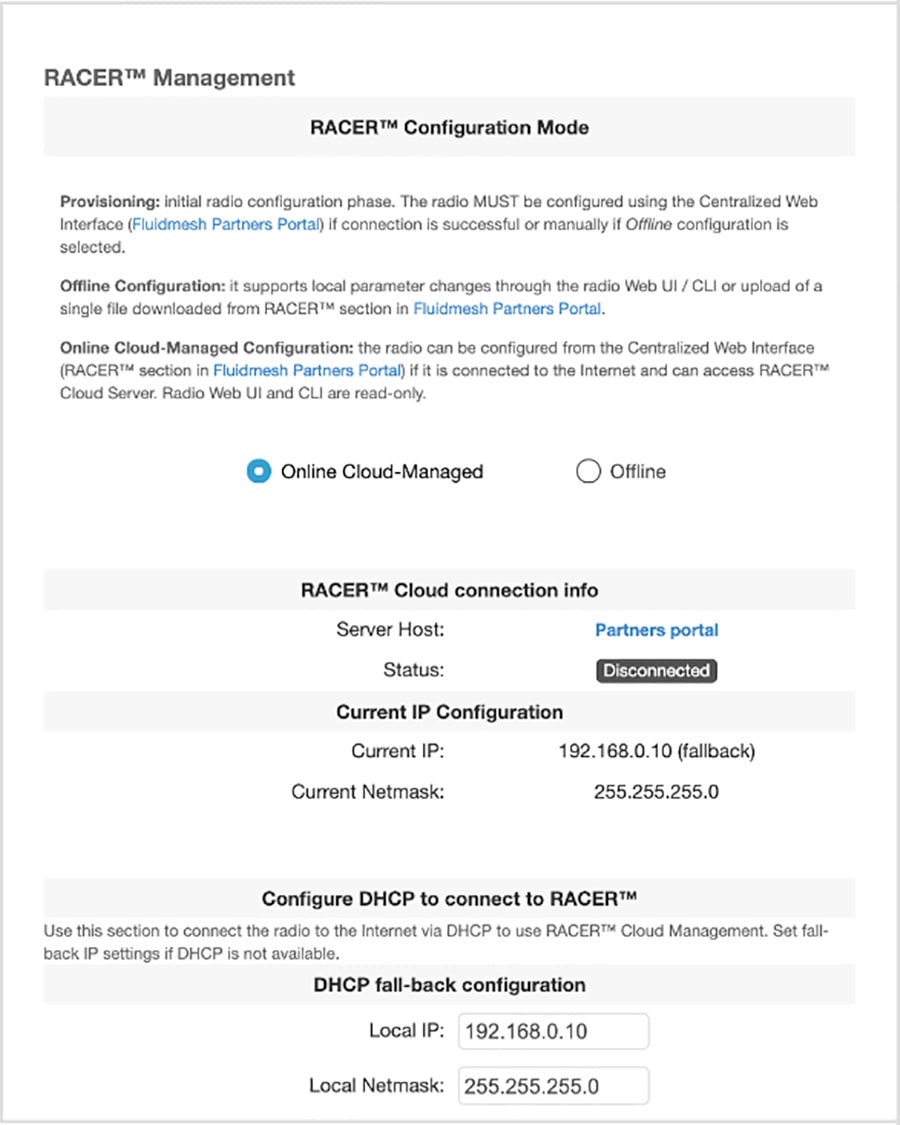

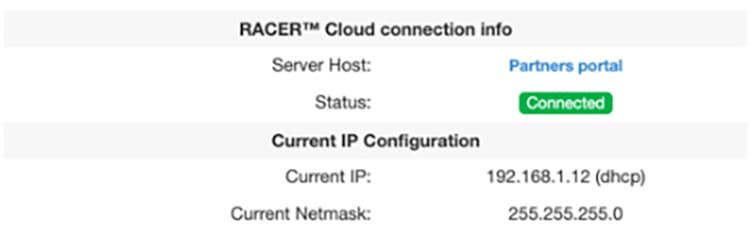

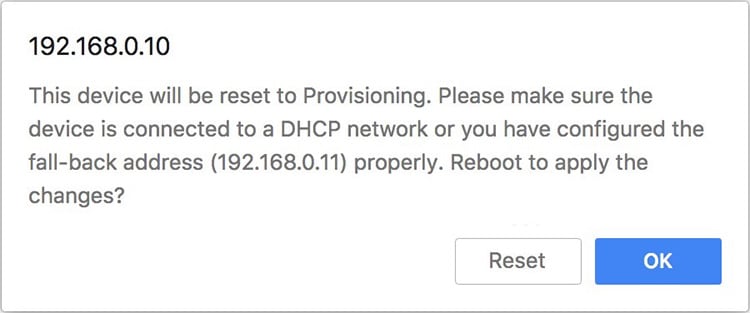

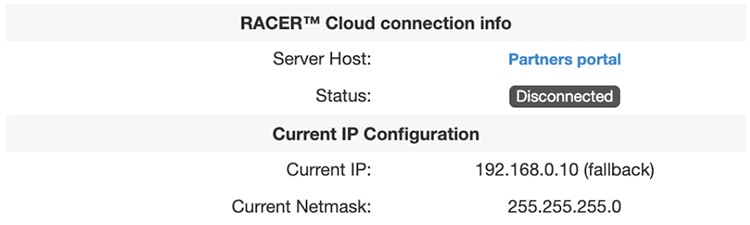

CURWB RACER is a centralized cloud-hosted server that can be used for provisioning of the entire CURWB system including configuration, firmware upgrade, and plug-in activation. It allows all the radio configuration to be done in a single pane and uploaded to the radios in real time or offline. RACER supports almost all the configuration options (basic and advanced).

RACER can be used to create configuration templates, fill in the template with the required parameter values to create radio configurations, and apply them to multiple CURWB devices of the same type. Configurations created in RACER can be applied to the radio in either online mode (if the CURWB devices have Internet access) or offline mode (if the CURWB devices have no Internet access). The advantage of using RACER is that along with the device configuration it also upgrades the firmware to the latest version available and also applies the configured plug-ins. This is the preferred method for configuring CURWB devices for any size deployment.

Figure 18 RACER Cloud-Hosted CURWB Configuration Tool

Note: Refer to the RACER section within the Implementation Guide below for step-by-step instructions on how to use RACER to create the appropriate CURWB radio configuration templates and configure the CURWB radio devices.

FM-Monitor – Centralized Management of CURWB Infrastructure

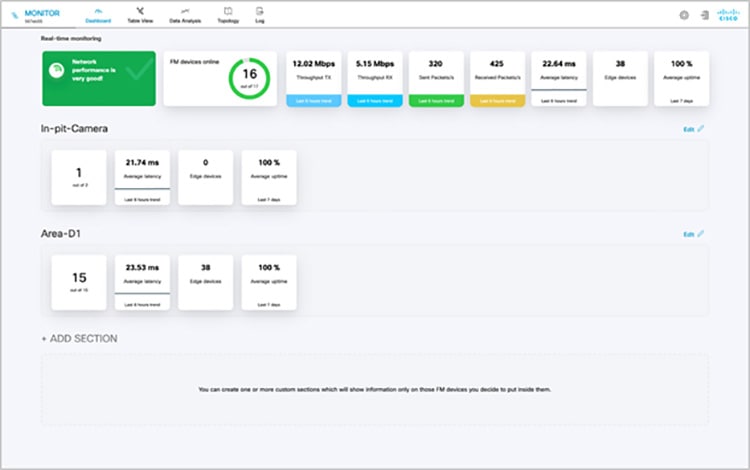

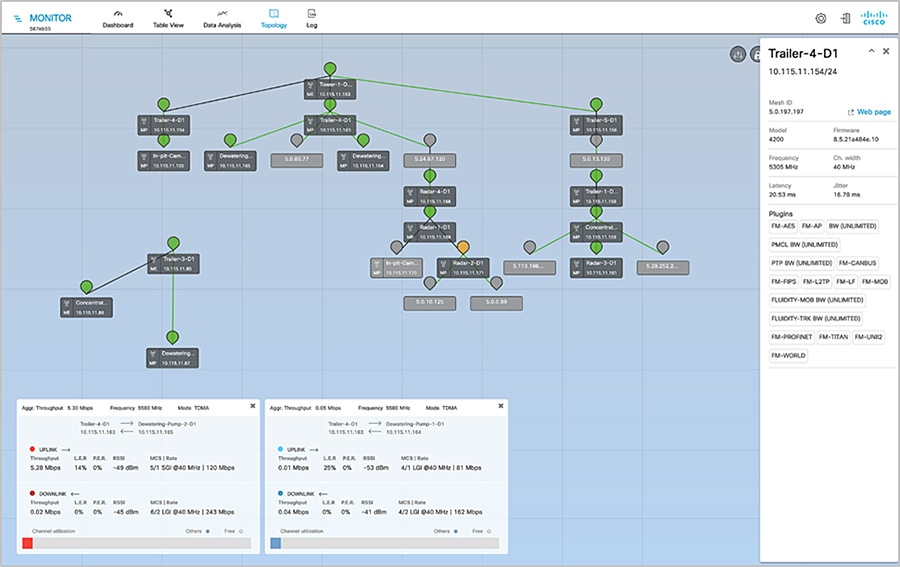

FM-Monitor is a network-wide, on-premises monitoring dashboard, allowing any CURWB customer to proactively maintain and monitor one or multiple wireless OT networks. FM-Monitor displays data and situational alerts from every CURWB device in a network, in real time.

FM-Monitor supports fixed and roaming network architectures and allows easier end-to-end troubleshooting. It can be operated as a standalone system or in parallel with a sitewide Simple Network Management Protocol (SNMP) monitoring tool. It is designed to support network installations used in smart cities, rail, mining, ports and terminals, entertainment, smart factories, and military applications.

Note: This document does not provide the setup or configuration instructions for FM Monitor. For more information, refer to the FM Monitor documentation page at the following URL:

https://www.cisco.com/c/en/us/support/wireless/ultra-reliable-wireless-backhaul/series.html#~tab-documents

■ On-premises monitoring tool for CURWB networks

■ Wizard setup for quick and easy installation and deployment

■ Real-time dashboard displaying uptime, throughput, latency, jitter, and other network KPIs

■ Customizable section view to easily check groups of radios

■ Customizable monitoring alerts for prompt response

■ Radio-by-radio data logging with a minimum sampling interval of 300 ms

■ Real-time radio configuration display for quick and accurate troubleshooting

■ Side-by-side comparison of radio KPIs over time and vehicle position

■ Ability to export logs to a Syslog server

One of the biggest advantages of FM-Monitor is the ability to configure alerts for a group of radios based on certain KPIs. Imagine needing to support an application mix of Automation and CCTV. The set of radios supporting the Automation application can be grouped and alarms configured for KPIs such as latency, jitter, RSSI, etc. while the group of radios supporting the CCTV network can have alarms configured using different KPIs such as Link Error Rate (LER), MCS rate, etc.

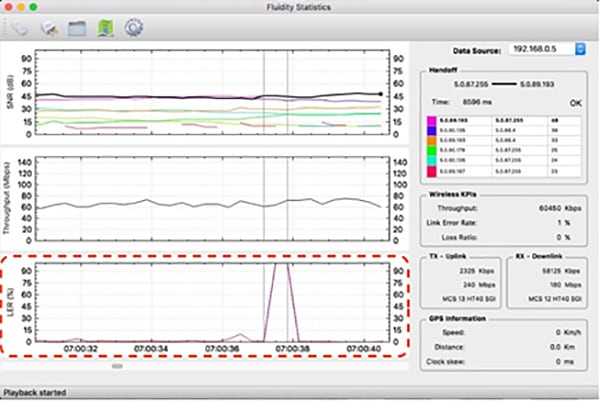

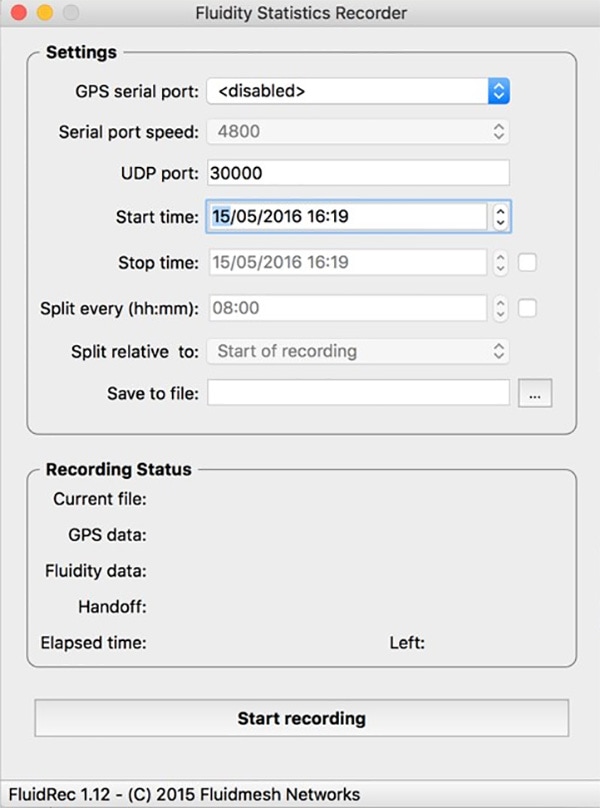

FM-Monitor Dashboard

The dashboard shows overall network performance and offers customizable segmentation of the network into clusters. This allows for easy monitoring of network sections or parts of a fleet of vehicles, maximizing network usage and performance. Clusters can include backhaul point-to-point links, point-to-multipoint distribution networks, vehicle access networks, wayside networks, and vehicle-mounted radios. FM Monitor displays and tracks real-time Key Performance Indicators (KPIs) within each cluster, including the number of active radios, number of connected IP edge devices, end-to-end latency, jitter, upload/download throughput in real time, and system uptime.

Figure 19 FM-MONITOR Dashboard

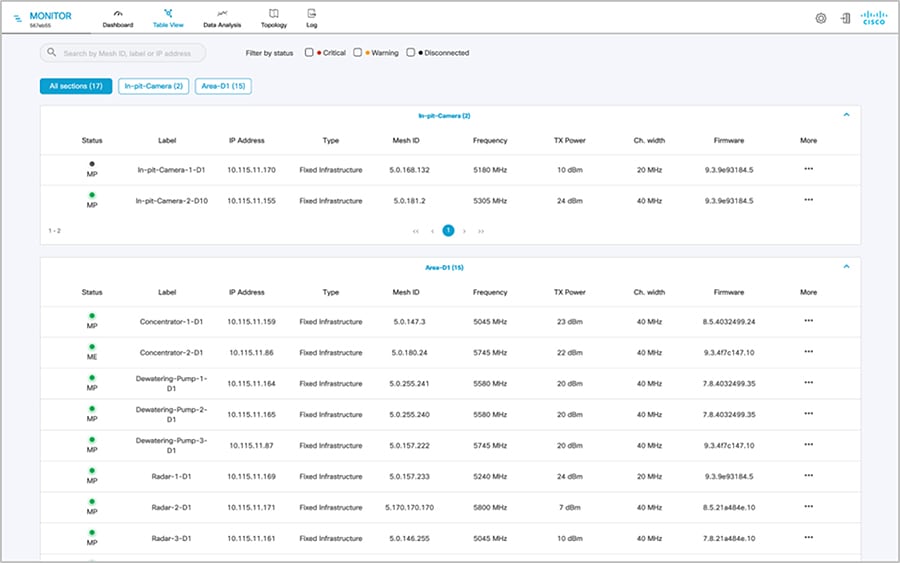

FM-Monitor Table View

The table view allows customers to condense sections of the network into a tabular view, isolating specific radio configurations and performance statistics. During troubleshooting, this drastically reduces the time needed to understand system performance on a radio-by-radio basis.

Figure 20 FM-Monitor Table View

Figure 21 FM-Monitor Topology View

CURWB - Terminology and miscellaneous configurations

The following section covers some prerequisites to understand the CURWB architecture and deployment.

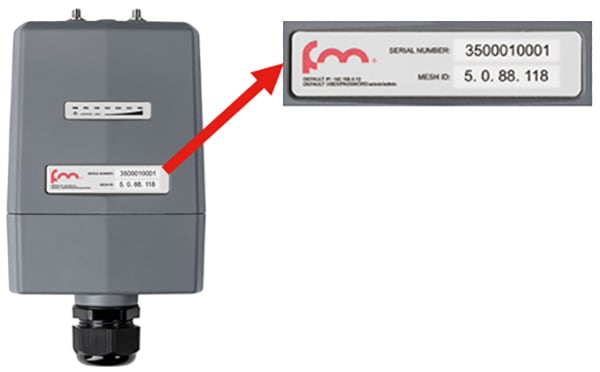

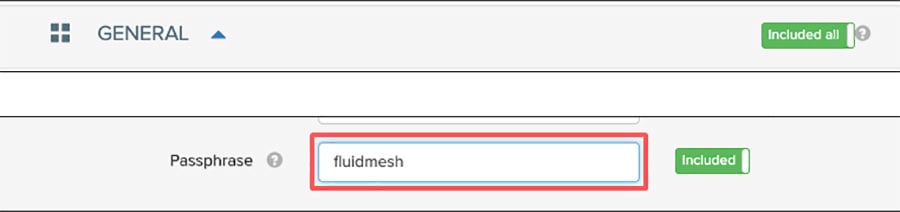

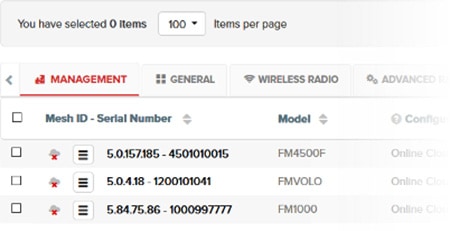

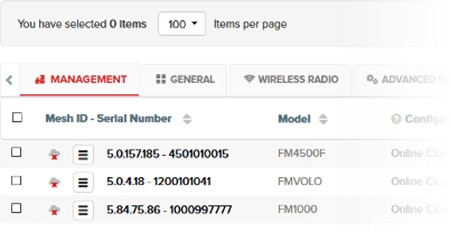

Mesh-ID

The Mesh-ID is a hardware identifier for the CURWB gateways and radios. It is programmed at the factory with a hard-coded value that cannot be modified. It follows the format 5.x.x.x. Note that this is NOT an IP address. The Mesh-ID is relevant to network design: when gateways or radios select a primary, the unit with the lower Mesh-ID becomes the “primary”. Likewise, the gateway/radio with the lowest Mesh-ID becomes the Mesh End if one is not explicitly configured.
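The lowest-Mesh-ID rule can be illustrated with a short Python sketch. It is a model for planning purposes only, not CURWB firmware logic. It assumes that Mesh-IDs are compared numerically, field by field, and the function names `parse_mesh_id` and `elect_primary` are hypothetical.

```python
def parse_mesh_id(mesh_id: str) -> tuple[int, ...]:
    """Parse a Mesh-ID of the form 5.x.x.x into a tuple of integers."""
    parts = mesh_id.split(".")
    if len(parts) != 4 or not all(p.isdigit() for p in parts):
        raise ValueError(f"not a valid Mesh-ID: {mesh_id!r}")
    fields = tuple(int(p) for p in parts)
    if fields[0] != 5:
        raise ValueError(f"Mesh-ID must start with 5: {mesh_id!r}")
    return fields


def elect_primary(mesh_ids: list[str]) -> str:
    """Return the lowest Mesh-ID (assumption: numeric, field-by-field order)."""
    return min(mesh_ids, key=parse_mesh_id)


# Hypothetical Mesh-IDs of three units that can see each other.
print(elect_primary(["5.21.200.7", "5.3.10.42", "5.3.9.250"]))
```

Under the numeric assumption, the sketch selects 5.3.9.250. A plain string comparison would pick 5.21.200.7 instead, which is why the fields are converted to integers before comparing.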

Passphrases

CURWB gateways/radios are configured with shared passphrases. CURWB control plane traffic is encrypted using this passphrase. The passphrase can also be used as a means to segment a particular network in that radios with the same shared passphrase form a cluster and are kept separate from other mesh networks which use a different passphrase.

Note: Data-plane / user traffic is not encrypted using the passphrase. In order to encrypt data-plane / user traffic, AES encryption must be enabled on the gateways/radios.

Note: If a shared passphrase is defined, the same passphrase must be used for all Fluidmesh units in the same network. As a deployment best practice, configure the passphrase to be something other than the default value of “Fluidmesh”. The shared passphrase can be composed of any ASCII characters except the following special characters: ' (single quote), ` (backtick), " (double quote), \ (backslash), $ (dollar sign), and = (equal sign).
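A candidate passphrase can be checked against these rules before it is entered into a configuration template. The Python sketch below is a hypothetical pre-check, not part of RACER or the radio firmware. The function name `passphrase_problems` is illustrative, and any length limit enforced by the product is not modeled because it is not stated here.

```python
# Characters the note above lists as not allowed in a shared passphrase.
FORBIDDEN_CHARS = set("'`\"\\$=")
DEFAULT_PASSPHRASE = "Fluidmesh"


def passphrase_problems(passphrase: str) -> list[str]:
    """Return a list of rule violations; an empty list means no issue was found."""
    problems = []
    if not passphrase:
        problems.append("passphrase is empty")
    if not passphrase.isascii():
        problems.append("passphrase contains non-ASCII characters")
    bad = sorted(set(passphrase) & FORBIDDEN_CHARS)
    if bad:
        problems.append("forbidden characters: " + " ".join(bad))
    if passphrase == DEFAULT_PASSPHRASE:
        problems.append("passphrase is the factory default")
    return problems


print(passphrase_problems("Pit7-North!"))
print(passphrase_problems("a$b=c"))
```

Returning a list of problems rather than a single boolean lets a provisioning script report every violation at once.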

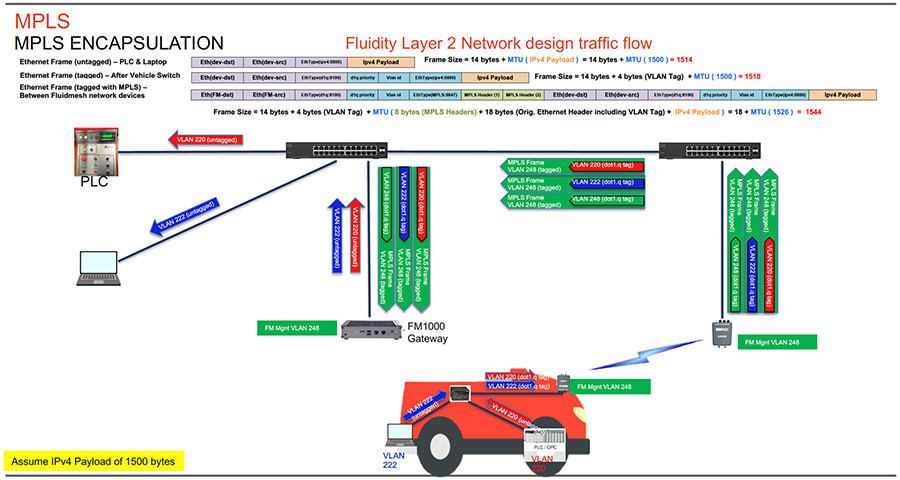

MTU Considerations

■ Similar considerations as for normal MPLS

■ Minimum required MTU on switches = 1544

■ Radios don’t have to be configured with MTU – this is done automatically
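The 1544-byte minimum leaves 44 bytes of headroom over the standard 1500-byte Ethernet MTU. As a minimal sketch of an audit step, the Python function below flags switch ports whose configured MTU is below that minimum. The port names and MTU values are made-up inputs, and `undersized_ports` is a hypothetical helper, not a Cisco tool.

```python
MIN_SWITCH_MTU = 1544  # minimum switch MTU stated in this section


def undersized_ports(port_mtus: dict[str, int],
                     minimum: int = MIN_SWITCH_MTU) -> list[str]:
    """Return the names of ports whose MTU is below the required minimum."""
    return sorted(name for name, mtu in port_mtus.items() if mtu < minimum)


# Hypothetical inventory of ports in the path between radios and mesh end.
inventory = {"Gi1/0/1": 1500, "Gi1/0/2": 9198, "Te1/1/1": 1544}
print(undersized_ports(inventory))
```

Every switch port in the path that carries the MPLS-encapsulated traffic would need to pass this check, not only the ports that connect directly to radios.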

Spanning Tree Protocol (STP)

STP is a Layer 2 protocol that runs on switches to prevent loops when there are redundant paths in the network. Switches run the STP algorithm when they are first connected to a network or whenever there is a topology change. CURWB radios do not participate in STP alongside the switches. The radios simply forward or block the BPDU messages depending on how they are configured. CURWB radios have an equivalent process to STP, called AutoTap, which helps avoid loops within the wireless network.

BPDU snooping can be enabled or disabled on the radio. Depending on this setting, the radio either acts on the contents of the BPDUs or ignores them.

BPDU forwarding can be set to one of three values. When configured as ‘Pass’, the radio forwards all BPDUs. When configured as ‘Auto’, the radio forwards BPDUs within the wayside space but does not forward them to the vehicle space, and vice versa. When configured as ‘Stop’, no BPDUs are forwarded.
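The three BPDU forwarding settings can be summarized as a small decision function. The Python sketch below is one reading of the description above, not the radio's implementation: it assumes that ‘Auto’ keeps a BPDU inside the space (wayside or vehicle) where it originated. The names `BpduForwarding` and `forwards_bpdu` are hypothetical.

```python
from enum import Enum


class BpduForwarding(Enum):
    PASS = "Pass"
    AUTO = "Auto"
    STOP = "Stop"


def forwards_bpdu(mode: BpduForwarding, src_space: str, dst_space: str) -> bool:
    """Decide whether a BPDU from src_space is forwarded toward dst_space.

    Spaces are "wayside" or "vehicle". Assumption: in Auto mode a BPDU
    never crosses from one space into the other.
    """
    if mode is BpduForwarding.PASS:
        return True
    if mode is BpduForwarding.STOP:
        return False
    return src_space == dst_space


print(forwards_bpdu(BpduForwarding.AUTO, "wayside", "vehicle"))
```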

AutoTap

AutoTap is a network loop prevention mechanism that allows CURWB radios to detect connections and allow only a dedicated ingress/egress route to and from the Mesh End or network core.

With AutoTap, only one radio will publish MAC address information, and traffic is seen coming from only one radio that gets elected as the Primary radio of the physically connected redundant group. The radio with the lowest Mesh ID is selected as the Primary radio which advertises its MAC address. With this configuration, the radios are able to detect each other over the wired connection, and forward traffic to the connected radio utilizing this connection. Routes to the core and end devices are built automatically. The result is like having a single radio with multiple wireless interfaces.

Network Time Protocol (NTP)

As a best practice, NTP should be configured on the CURWB radios. A primary and a secondary NTP server IP address can be configured during RACER template configuration. Once NTP is enabled, the radio usually synchronizes its time from the NTP server within an hour. Synchronization can be forced to happen sooner, but the connection will be down for some milliseconds while the radio is forced to connect to the NTP server.

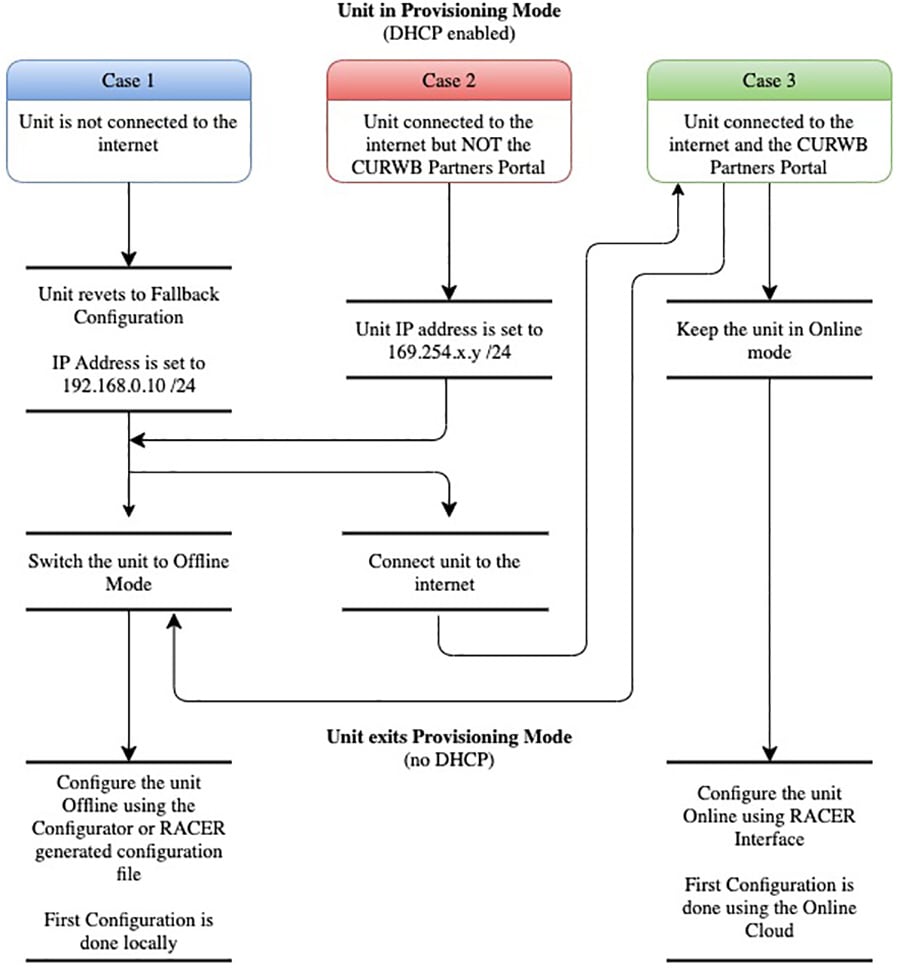

CURWB Radio Behavior

The following describes the typical behavior of a CURWB radio:

■ Populate local VBR (Virtual Bridge Routing) table with local end points

■ Over each antenna (with configured channel/frequency): Find peer radios

– Condition: Same passphrase, same cluster-id

– Find peer radios and gateways

– Pseudowire-set: “Mesh-End only”: To Mesh End only

– Pseudowire-set: “All”: To all other radios

– Radio metrics determine path: Higher signal strength wins over alternative path (over full path)

■ AutoTap is used for loop avoidance when two radios using the same passphrase are directly connected using the wired network

■ Directly connected radios select a primary; radio with lower mesh-id becomes primary; only primary announces local endpoints either connected via switch or directly to a radio

■ The AutoTap feature kicks in automatically and no configuration is needed

■ For each LSP: announce local VBR table, learn remote VBR table entries

Note: AutoTAP is independent of LSPs. It does not influence LSPs or block ports (like STP does).

Note: The maximum throughput of a radio includes both directions, upstream and downstream. For example, a 150 Mbps radio cannot support 100 Mbps of upstream traffic and 100 Mbps of downstream traffic at the same time.
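The arithmetic behind this note is simple enough to capture in a planning check. The Python sketch below treats the rated throughput as a single budget shared by both directions. The function name `fits_radio_capacity` is hypothetical, and protocol overhead or RF conditions that reduce usable throughput are not modeled.

```python
def fits_radio_capacity(capacity_mbps: float,
                        upstream_mbps: float,
                        downstream_mbps: float) -> bool:
    """True if the combined upstream and downstream load fits the radio's rating."""
    return upstream_mbps + downstream_mbps <= capacity_mbps


# The case from the note: 100 + 100 = 200 Mbps exceeds a 150 Mbps radio.
print(fits_radio_capacity(150, 100, 100))
# A load that fits: 100 + 50 = 150 Mbps.
print(fits_radio_capacity(150, 100, 50))
```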

VLAN Design

On CURWB radios, VLAN support is not enabled by default and can be enabled by installing an optional plug-in. Installing and enabling the VLAN plug-in is recommended to control how tagged and untagged traffic is propagated through the network. When enabled, two VLANs are configurable, one for management of the radio unit and the other one is the CURWB Native VLAN. The CURWB management VLAN is used for control plane communication between the radios and also to connect, configure and manage the CURWB devices. The native VLAN determines how untagged traffic will be handled as it passes through the radio. Setting the native VLAN to 0 is a special case where all untagged traffic is dropped and only tagged traffic can pass through the radio unit.

The switch interface connected to a CURWB radio should be configured as a trunk port on the CURWB management VLAN and the client traffic VLAN(s). Note that the CURWB radio has some limitations on VLAN tagging. It is highly recommended that VLAN tagging be done on the directly-connected switch.

CURWB Fixed Infrastructure – Wireless Backhaul

The CURWB Fixed Infrastructure architecture consists of two different deployment models:

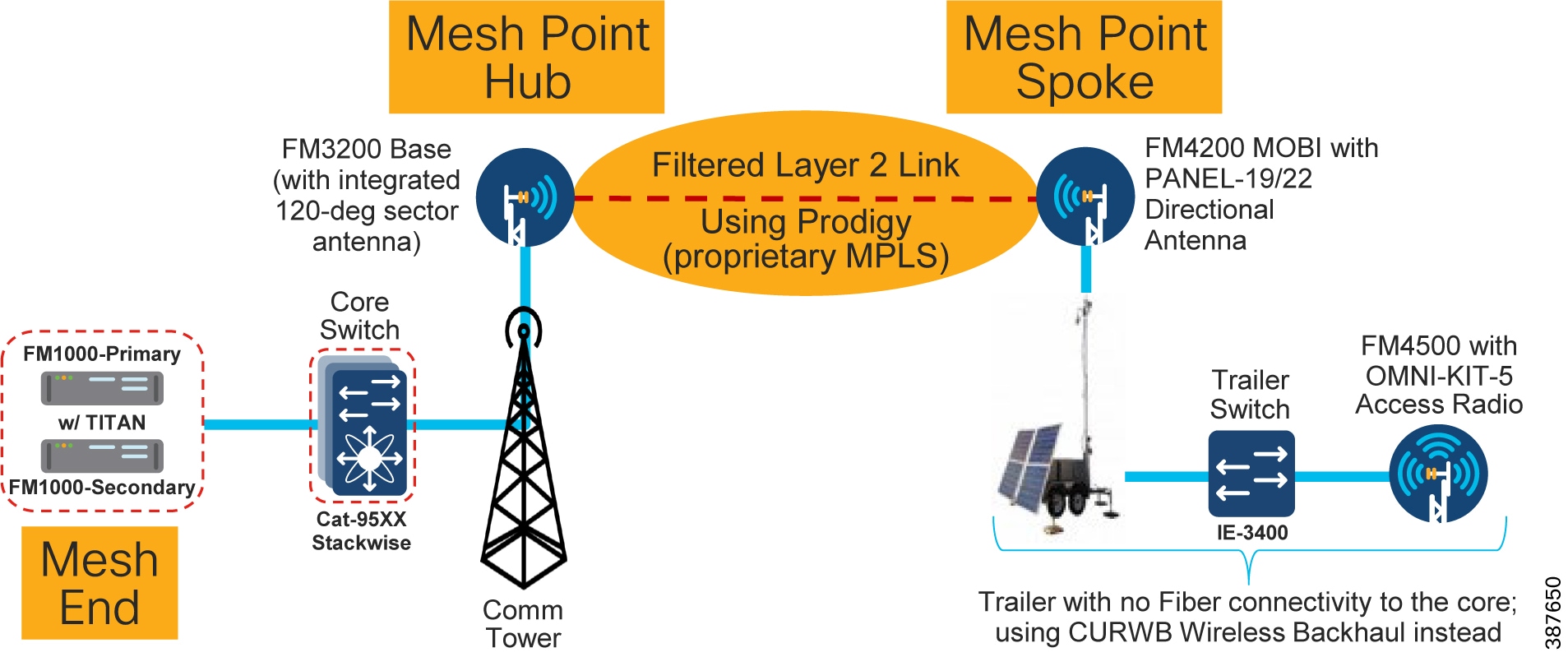

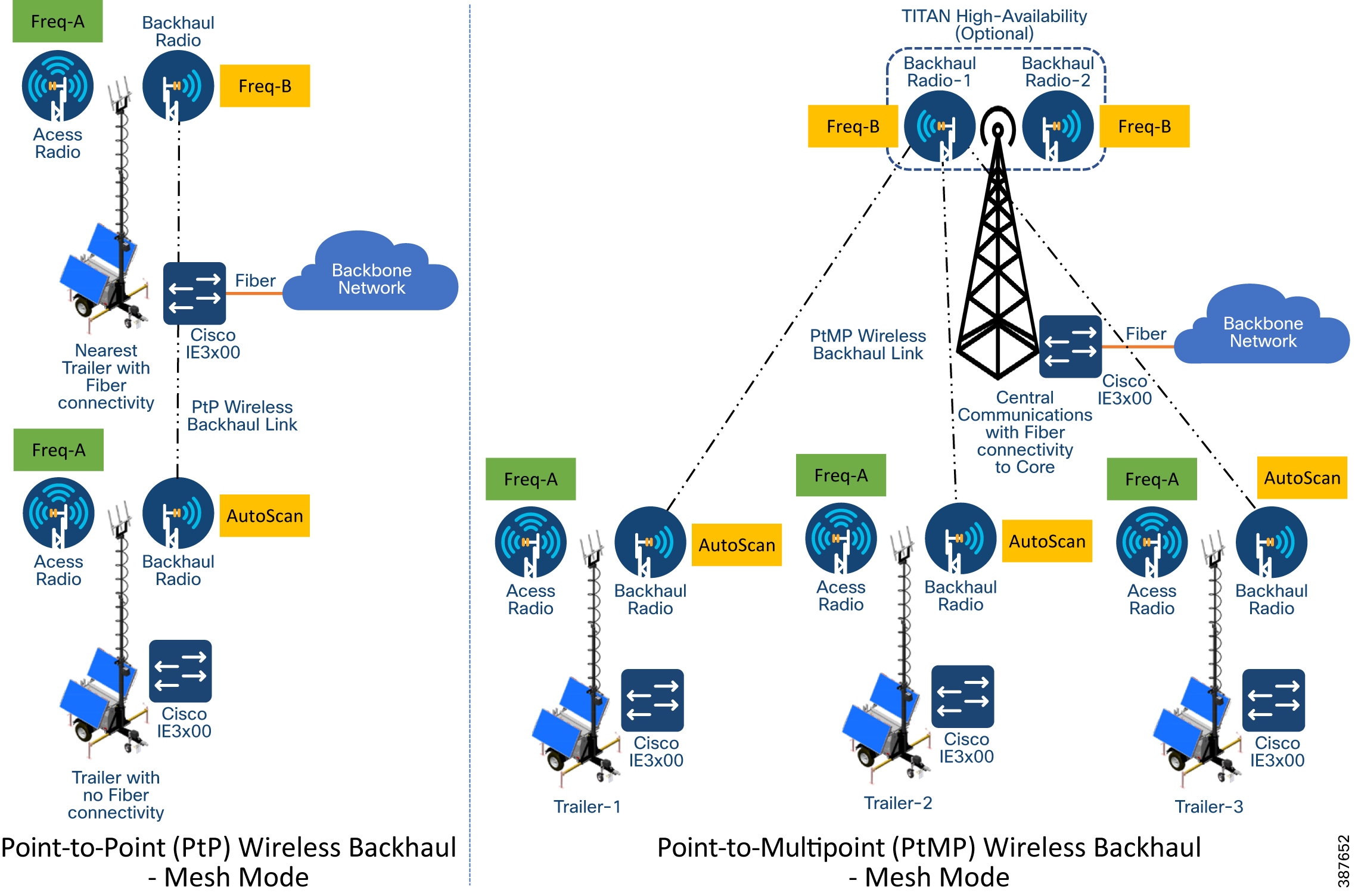

CURWB PtP in Mesh Mode

Figure 25 CURWB Wireless Backhaul – PtP in Mesh Mode

The CURWB PtP wireless backhaul can be used to backhaul traffic to the core from individual trailers that have no fiber connectivity. PtP in Mesh Mode acts as a filtered L2 link in which not all protocols are enabled by default (for example, IP multicast traffic). It leverages the proprietary Prodigy MPLS technology. The radio closer to the core is configured as a Mesh Point Hub, and the radio on the trailer is configured as a Mesh Point Spoke for the PtP link. All the IP addresses need to be on the same subnet. The radio IP addresses are used for configuration and management of the radios.

Note: A PtP Bridge mode deployment, which does not use the MPLS overlay, is also available for CURWB backhaul; however, it is not recommended within a mining deployment. PtP in Mesh Mode is the preferred deployment model because it can later be converted to a PtMP link, which provides flexibility when trailers need to be moved around the mine pit.

Note: For PtP in Mesh Mode, not all protocols are enabled by default. Protocols such as IP multicast and PROFINET need to be enabled explicitly. Multicast is a built-in feature, whereas PROFINET requires a separate plug-in to be installed on the CURWB radio units.

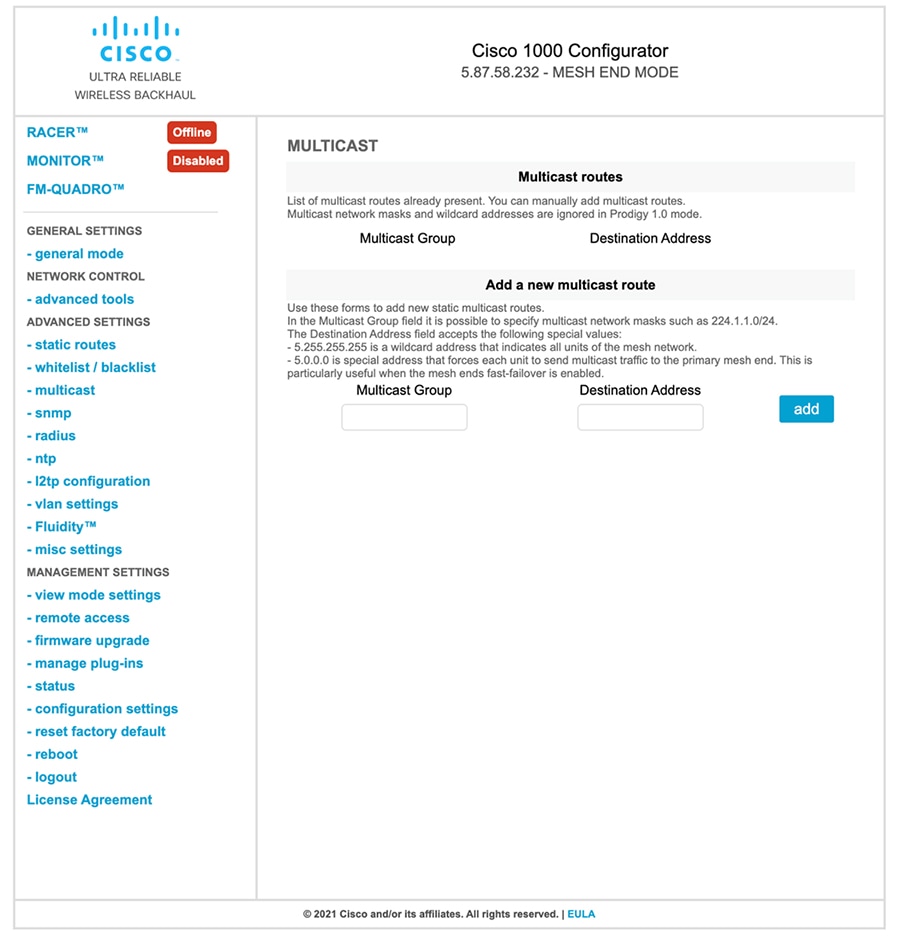

CURWB Mesh End

A logical Mesh End (ME) can be made redundant by pairing two physical Mesh End gateways/radios with the TITAN high-availability plug-in. The ME is typically configured within the core network. The purpose of the Mesh End radio is to terminate all the MPLS label-switched paths and act as a gateway between the CURWB network and the wired network. The ME holds the Label Switched Paths (LSPs) to all the other radios in its database.

Although the CURWB solution can automatically select the gateway/radio with the lowest Mesh ID to become the ME, it is highly recommended as a best practice to configure the Mesh End and Mesh Point roles manually so that convergence is more deterministic if a failure occurs within the network.

Note: In mining deployments where a CURWB Fixed Infrastructure is deployed alongside the CURWB Fluidity mobility, the CURWB L2 Fluidity gateway(s) within the core network are configured as the Mesh End. The PtP or PtMP hub radios are configured as MPs.

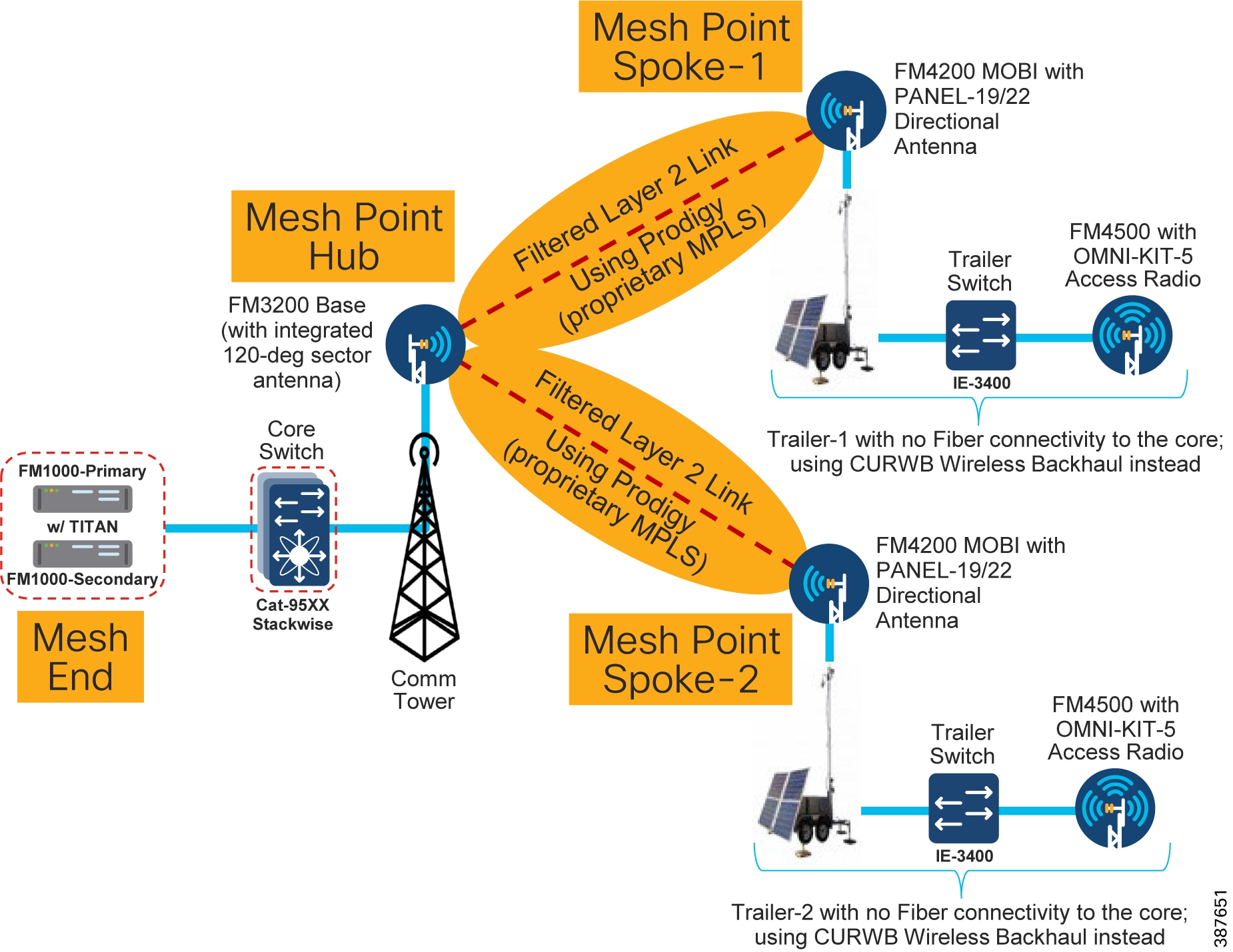

CURWB PtMP in Mesh Mode

A CURWB Point-to-Multi-Point (PtMP) deployment is used to connect multiple trailers without fiber connectivity to a central hub. All the IP addresses need to be within the same subnet. When using Static configuration, all the radios are configured with the same radio settings (Frequency, Channel Width and Passphrase).

Figure 26 CURWB Wireless Backhaul – PtMP in Mesh Mode

Note: When deploying a PtMP configuration, ensure that the right bandwidth plug-in is applied at the central location that aggregates the fixed wireless traffic coming in from its wirelessly connected trailers. For example, if each trailer has a bandwidth plug-in for 10 Mbps throughput and traffic from three trailers is aggregated at the hub, ensure that the hub radio(s) have a bandwidth plug-in for at least 30 Mbps throughput.
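The sizing rule in the note above is a sum over the trailer plug-ins. A minimal sketch, assuming each trailer can use its full plug-in rate at the same time; the function names are hypothetical and the figures are the ones from the note.

```python
from typing import Iterable


def min_hub_plugin_mbps(trailer_plugins_mbps: Iterable[float]) -> float:
    """Smallest hub bandwidth plug-in (in Mbps) that can carry the
    aggregate of all trailer plug-ins."""
    return sum(trailer_plugins_mbps)


def hub_is_sufficient(hub_plugin_mbps: float,
                      trailer_plugins_mbps: Iterable[float]) -> bool:
    """True if the hub plug-in covers the aggregated trailer throughput."""
    return hub_plugin_mbps >= min_hub_plugin_mbps(trailer_plugins_mbps)


# Three trailers, each with a 10 Mbps plug-in.
print(min_hub_plugin_mbps([10, 10, 10]))    # 30
print(hub_is_sufficient(20, [10, 10, 10]))  # False
```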

Design Considerations for CURWB PtP and PtMP Wireless Backhaul

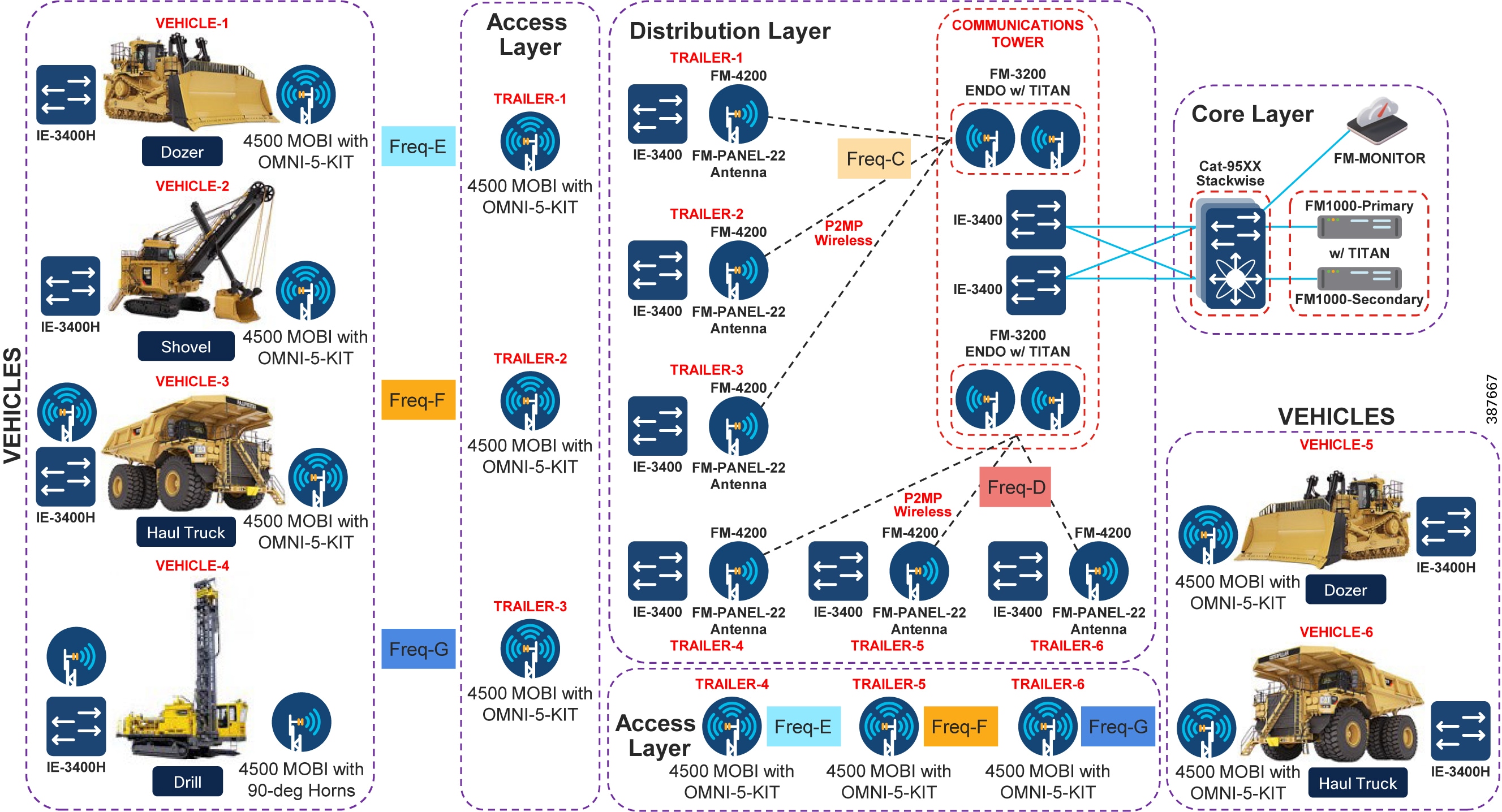

It is recommended to deploy the FM3200 BASE radio unit for the PtP or PtMP hub since it comes with an integrated 120-degree sector antenna. This is convenient since it can provide 120-degree coverage around a Communications Tower to multiple trailers.

If the communications tower is located in the middle of a site that needs RF coverage all around, three FM3200 BASE radios can be deployed on the communications tower to provide 360 degrees of coverage.

The FM4200 MOBI radios are recommended to be installed on the trailers as the backhaul radio. These radios can be paired with either the FM-PANEL-19 or FM-PANEL-22 directional antennas pointing toward the FM3200 BASE sector antenna.

The FM3200/FM4200 radios are recommended for both PtP and PtMP because these models support the TDMA mode of transmission. TDMA is not the default mode; it is configured by enabling the FLUIDMAX feature on the radios.

The FM3200/FM4200F radios can provide a total maximum throughput of up to 150 Mbps for each PtP or PtMP deployment.

For PtP or PtMP backhauls that need more than 150 Mbps of throughput, the FM3500 (hub) and FM4500 (trailer backhaul) radios can be used; they provide throughput of up to 500 Mbps. However, these radio models do not support the TDMA mode of transmission, which is especially important in a PtMP setup. It is therefore recommended to enable RTS/CTS when using this combination of radios. The drawback is that RTS/CTS can reduce overall throughput, and the efficiency of the wireless medium decreases as the number of trailers associated with a single hub increases, so this option does not scale well. For this reason, the FM3200/FM4200F pair is recommended for PtMP deployments.
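The model guidance above can be summarized as a lookup on the required throughput. The sketch below is illustrative only: the function `recommend_backhaul` and its return format are hypothetical, and the 150 Mbps and 500 Mbps limits are the maximum figures quoted in this section, not guaranteed field throughput.

```python
def recommend_backhaul(required_mbps: float) -> dict:
    """Suggest a hub/trailer radio pair for a PtP or PtMP backhaul link."""
    if required_mbps <= 0:
        raise ValueError("required throughput must be positive")
    if required_mbps <= 150:
        # TDMA is not the default; it is enabled through the FLUIDMAX feature.
        return {"hub": "FM3200 BASE", "trailer": "FM4200 MOBI",
                "medium_access": "TDMA (FLUIDMAX)"}
    if required_mbps <= 500:
        # These models do not support TDMA, so RTS/CTS is recommended.
        return {"hub": "FM3500", "trailer": "FM4500",
                "medium_access": "RTS/CTS"}
    raise ValueError("requirement exceeds the 500 Mbps quoted for these radios")


print(recommend_backhaul(100)["hub"])            # FM3200 BASE
print(recommend_backhaul(300)["medium_access"])  # RTS/CTS
```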

The PtP deployment can also be configured to use bridge mode instead of mesh mode. Bridge mode has a simpler configuration and can transfer any kind of traffic across the wireless link. PtP in bridge mode is useful where traffic needs to be backhauled from the distribution layer to the core network and a fiber link cannot be deployed. It is not recommended within the distribution layer, even if initially only a single trailer needs connectivity. As mentioned previously, a mine environment is dynamic, and mobile trailers need to move. A PtP deployment configured for bridge mode cannot accept another trailer if the need arises in the future, and converting a PtP deployment from bridge mode to mesh mode takes time because the two modes use different sets of plug-in licenses. Hence, for the distribution layer, it is highly recommended to deploy PtP links in mesh mode so that trailers can be added later and the PtP deployment converted into a PtMP deployment.

Note: To avoid RF interference and high channel utilization, it is mandatory to select a different, non-overlapping RF channel for each wireless backhaul link (unless the links are far enough apart that they cannot interfere with each other). Also, the RF channel(s) used for the fixed infrastructure should be different from the RF channel used within the access layer.
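A channel plan can be checked against the two rules in the note above before deployment. The sketch below is a simplification under stated assumptions: channels are treated as opaque identifiers (it does not compute overlap from center frequency and channel width), it does not model the exception for links that are far apart, and the function name, link names, and channel numbers are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Set


def channel_plan_conflicts(backhaul_channels: Dict[str, int],
                           access_channels: Set[int]) -> List[str]:
    """Return a list of rule violations for a proposed channel plan.

    backhaul_channels maps a backhaul link name to its RF channel.
    access_channels is the set of channels used in the access layer.
    """
    problems = []
    usage = Counter(backhaul_channels.values())
    for link, channel in sorted(backhaul_channels.items()):
        if usage[channel] > 1:
            problems.append(f"{link}: channel {channel} reused by another backhaul link")
        if channel in access_channels:
            problems.append(f"{link}: channel {channel} also used in the access layer")
    return problems


plan = {"tower1-trailerA": 149, "tower1-trailerB": 157, "tower2-trailerC": 149}
for problem in channel_plan_conflicts(plan, access_channels={157}):
    print(problem)
```

An empty list means the plan satisfies both rules as modeled here; any link that is intentionally reusing a channel because of distance would still need to be reviewed by hand.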

Note: When wireless backhaul is used for a trailer that does not have a switch, the access radio and the backhaul radio can be connected back to back using the LAN2 ports. If PoE injectors are used to power the radios, the LAN1 port on the PoE injectors can be used to connect the two radios to each other. On the CURWB radios, the LAN1 and LAN2 ports are bridged together, so the radios can be connected back to back using either port.

CURWB Fixed Infrastructure - Wireless Backhaul for Mine Deployments

When fiber is not available at a Trailer for the access radio, CURWB radios can be deployed in the fixed infrastructure mode to provide wireless backhaul connectivity to the core wired network. In this configuration, it is recommended that a backhaul radio be installed at a Trailer with fiber connectivity and the wirelessly connected Trailer be no more than 1 radio hop away.

When deployed alongside L2 Fluidity, the radios should be configured as mesh points with the same passphrase as the mesh end deployed for Fluidity. Bridge mode can also be used if a small number of Trailers need a wireless backhaul connection. Bridge mode is a variation of point to point in that the radios do not use MPLS encapsulation and cannot be expanded into a multipoint configuration. Because of this limited flexibility, mesh point is the preferred method over bridge mode. It is important to remember to use different frequencies for the fixed infrastructure radios which do not overlap with the access radios. Examples of both a point-to-point and a point-to-multipoint deployment are depicted below.

Note: Radios configured in Bridge mode do not need to use the same passphrase as the Fluidity network. A different passphrase needs to be used for each PtP bridge link.

Figure 27 Example of PtP and PtMP Wireless Backhaul within a Mining Deployment

As a best-practice, the Trailer backhaul radios should be configured for AutoScan to enable mobility of trailers between different communications towers configured for different frequencies. All the communications tower radios need to be configured with the same passphrase to enable trailer mobility across different communications towers.

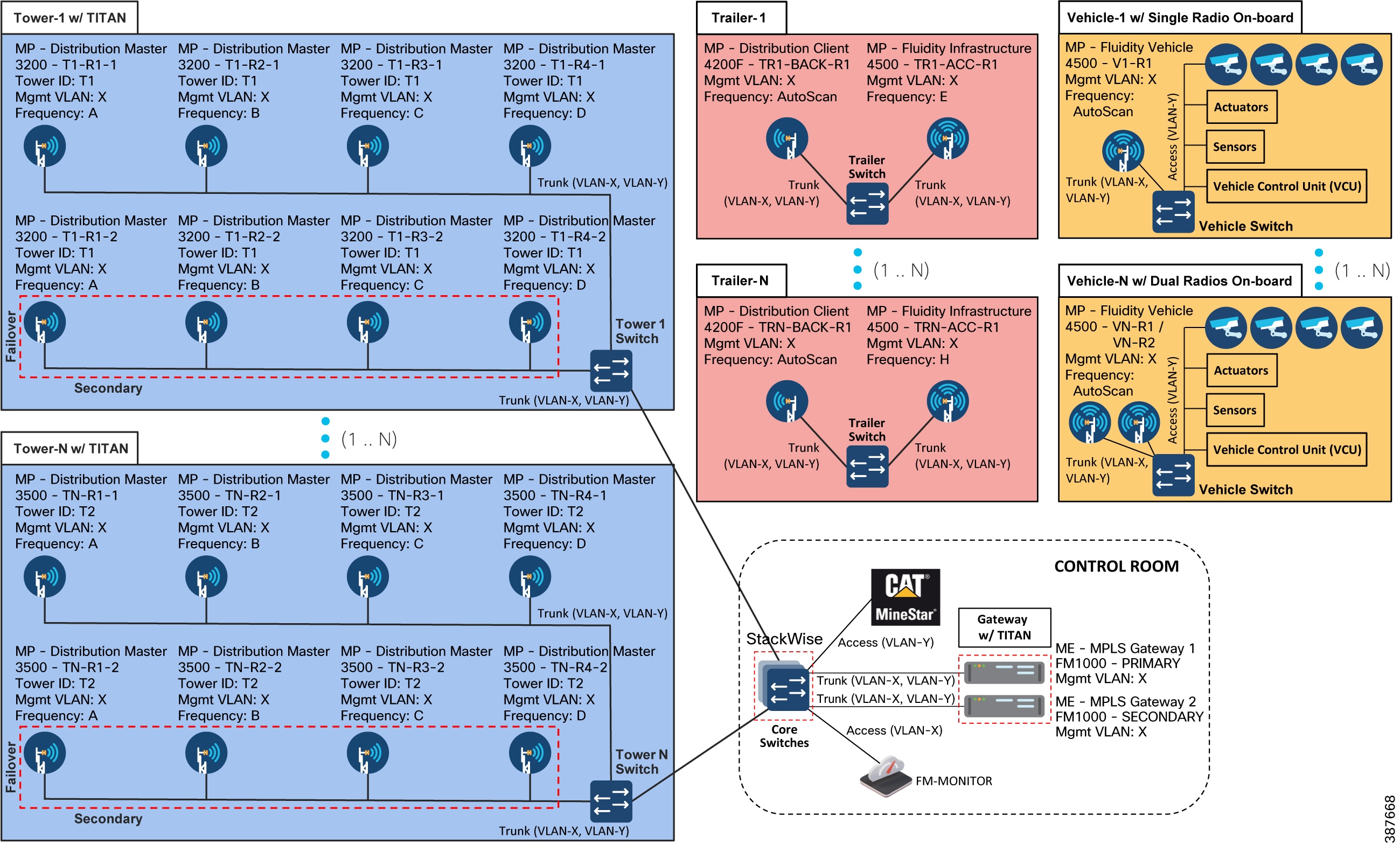

Because most autonomous mining operations need to run 24x7, it is highly recommended to install a pair of CURWB FM3200 radios on the communications towers, especially for PtMP hub deployments. The pair provides high availability and protects against hardware failure, which matters because traffic from multiple trailers and autonomous vehicles is aggregated at the hub and forwarded to the core.

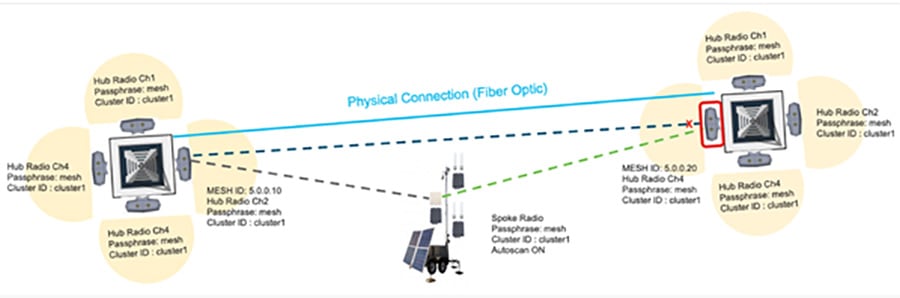

Tower-ID Feature

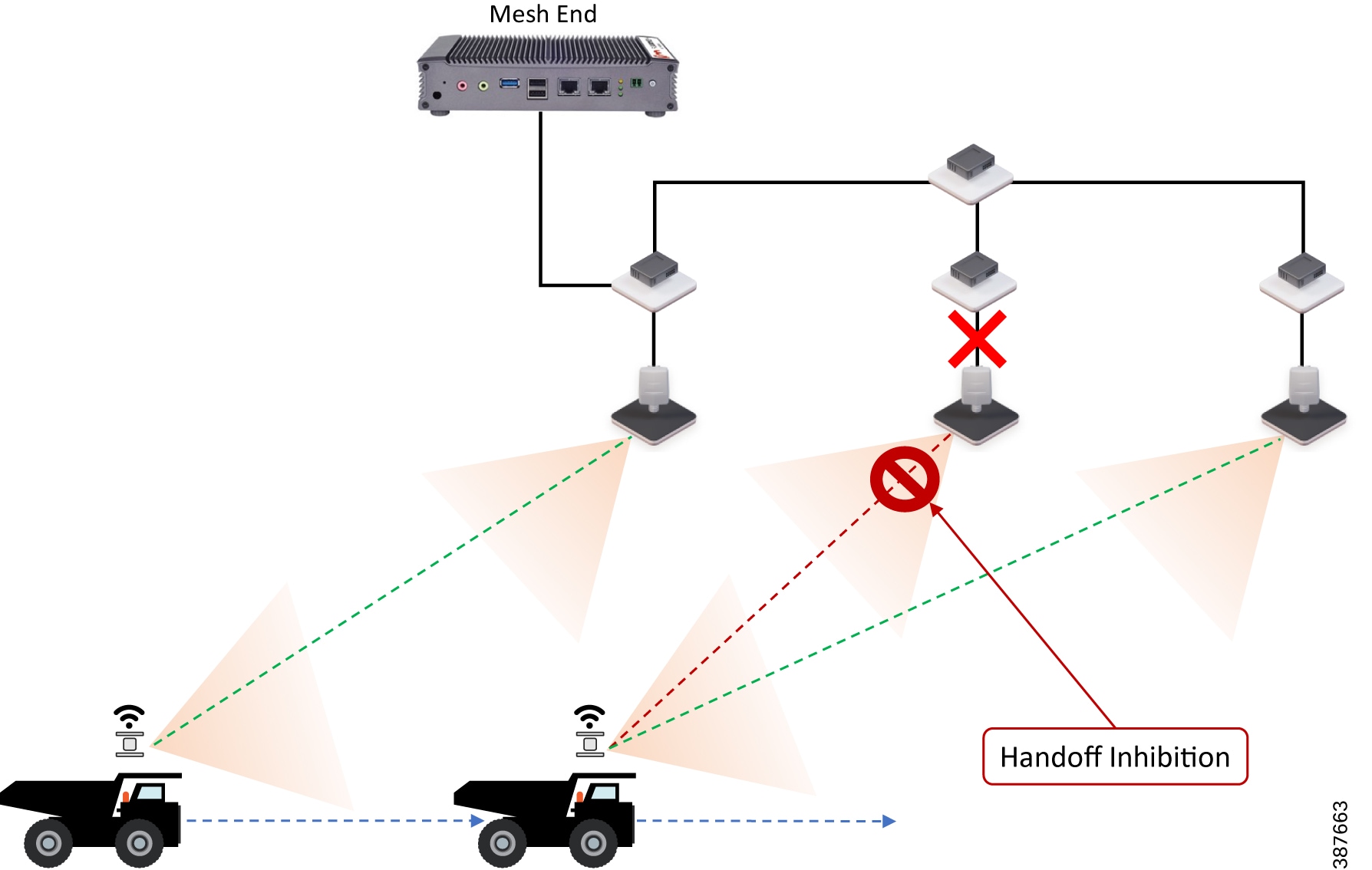

Figure 28 Behavior with Tower-ID feature disabled

The ‘Advanced Radio Settings’ section also contains the Tower-ID parameter.

This feature is used only on Hub Radios. As seen in the figure above, the network uses two radio towers with four Hub Radios each. Each hub radio operates on a different channel but has the same cluster ID and passphrase. The two towers are also linked by a fiber-optic backbone network.

Now, consider radio 5.0.0.10 on Tower 1 and radio 5.0.0.20 on Tower 2. Both radios use the same channel, passphrase, and cluster ID, but Tower ID values have not been configured for either radio. Because the two radios are linked by both radio and fiber optics, the radio with the numerically lower Mesh ID, in this case 5.0.0.10, automatically becomes the primary radio. Radio 5.0.0.20 also stops advertising itself to any other radios, because it is considered to be a backup or secondary radio.

The trailer-mounted Spoke radio is connected to Hub radio 5.0.0.10. If this radio is moved to a location where it receives a stronger signal from radio 5.0.0.20, it will not be able to switch Hubs, because 5.0.0.20 is identified as a backup radio. This will cause unwanted radio behavior, and gaps in coverage.

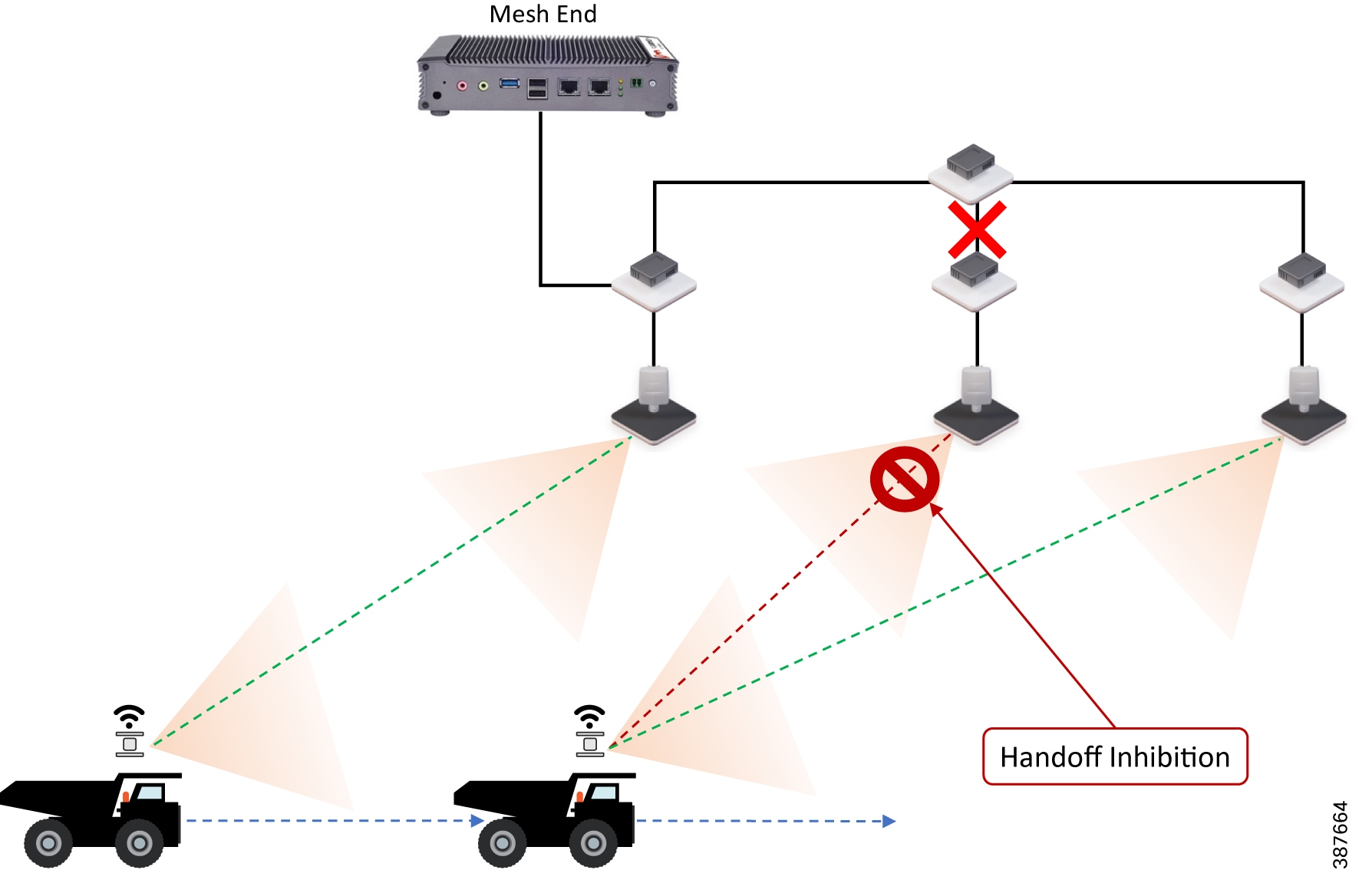

Figure 29 Behavior with Tower-ID feature enabled

Leveraging the Tower-ID feature helps solve this coverage gap issue. Hub radios 5.0.0.10 and 5.0.0.20 are both assigned the same passphrase, cluster ID and operating frequency, but each radio is assigned a different tower ID. This allows both radios to identify themselves as Primary radios within the same cluster.

The trailer-mounted Spoke radio is connected to Hub radio 5.0.0.10. Now, if the trailer is moved to a location where it receives a stronger signal from radio 5.0.0.20, it will automatically switch Hubs, because radios 5.0.0.10 and 5.0.0.20 are both identified as Primary Hub radios. Leveraging the Tower-ID feature, a larger area can receive radio coverage.
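The difference between the two figures can be expressed as a grouping rule. The sketch below is a simplified model of the behavior described in this section, not the radio firmware's actual election algorithm: hubs that share a channel, cluster ID, and Tower ID form one group (the passphrase is assumed identical throughout), and the numerically lowest Mesh ID in each group is primary. The `Hub` type, the function name, and the channel number are hypothetical.

```python
import ipaddress
from collections import defaultdict
from typing import List, NamedTuple, Optional


class Hub(NamedTuple):
    mesh_id: str             # dotted form, for example "5.0.0.10"
    channel: int
    cluster_id: str
    tower_id: Optional[int]  # None when Tower ID is not configured


def primary_hubs(hubs: List[Hub]) -> List[str]:
    """Return the Mesh IDs of hubs that act as primary radios."""
    groups = defaultdict(list)
    for hub in hubs:
        groups[(hub.channel, hub.cluster_id, hub.tower_id)].append(hub)
    # Mesh IDs are compared numerically, octet by octet.
    winners = [min(group, key=lambda h: ipaddress.IPv4Address(h.mesh_id)).mesh_id
               for group in groups.values()]
    return sorted(winners, key=ipaddress.IPv4Address)


# Tower ID not configured: only the lower Mesh ID is primary.
print(primary_hubs([Hub("5.0.0.10", 149, "mine", None),
                    Hub("5.0.0.20", 149, "mine", None)]))  # ['5.0.0.10']

# Distinct Tower IDs: both hubs are primary, so the spoke can switch hubs.
print(primary_hubs([Hub("5.0.0.10", 149, "mine", 1),
                    Hub("5.0.0.20", 149, "mine", 2)]))     # ['5.0.0.10', '5.0.0.20']
```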

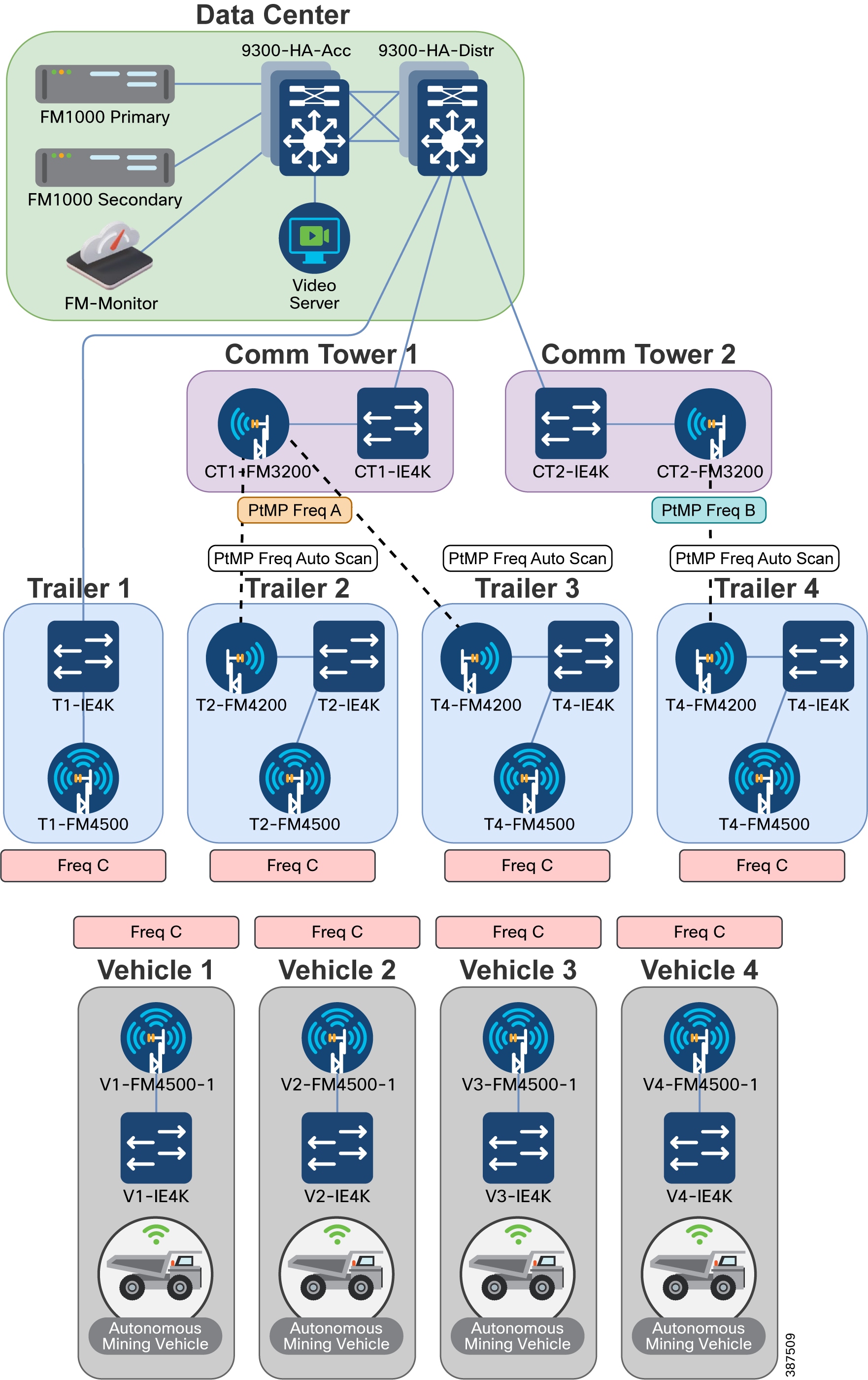

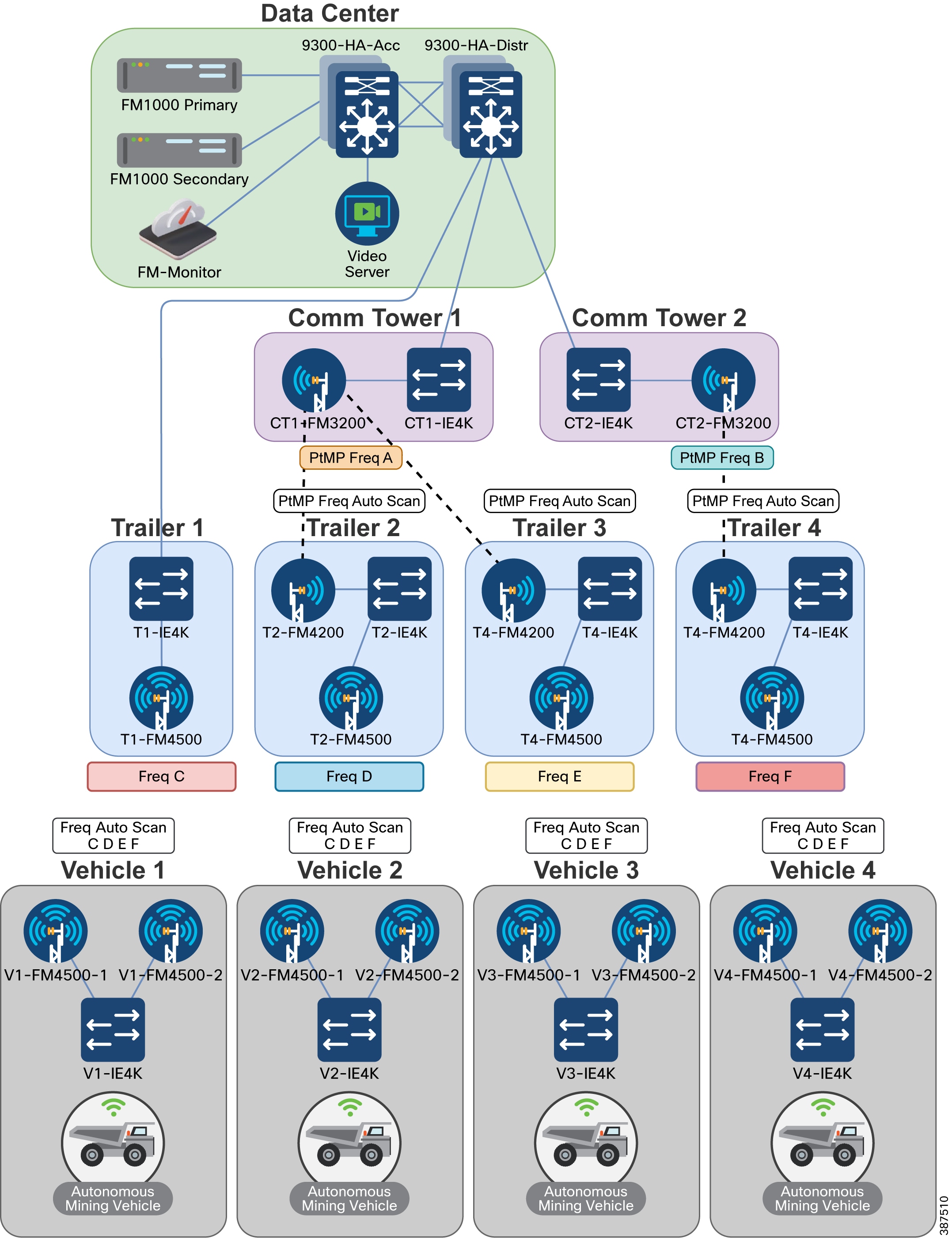

CURWB Mobility Architecture – Layer 2 Fluidity

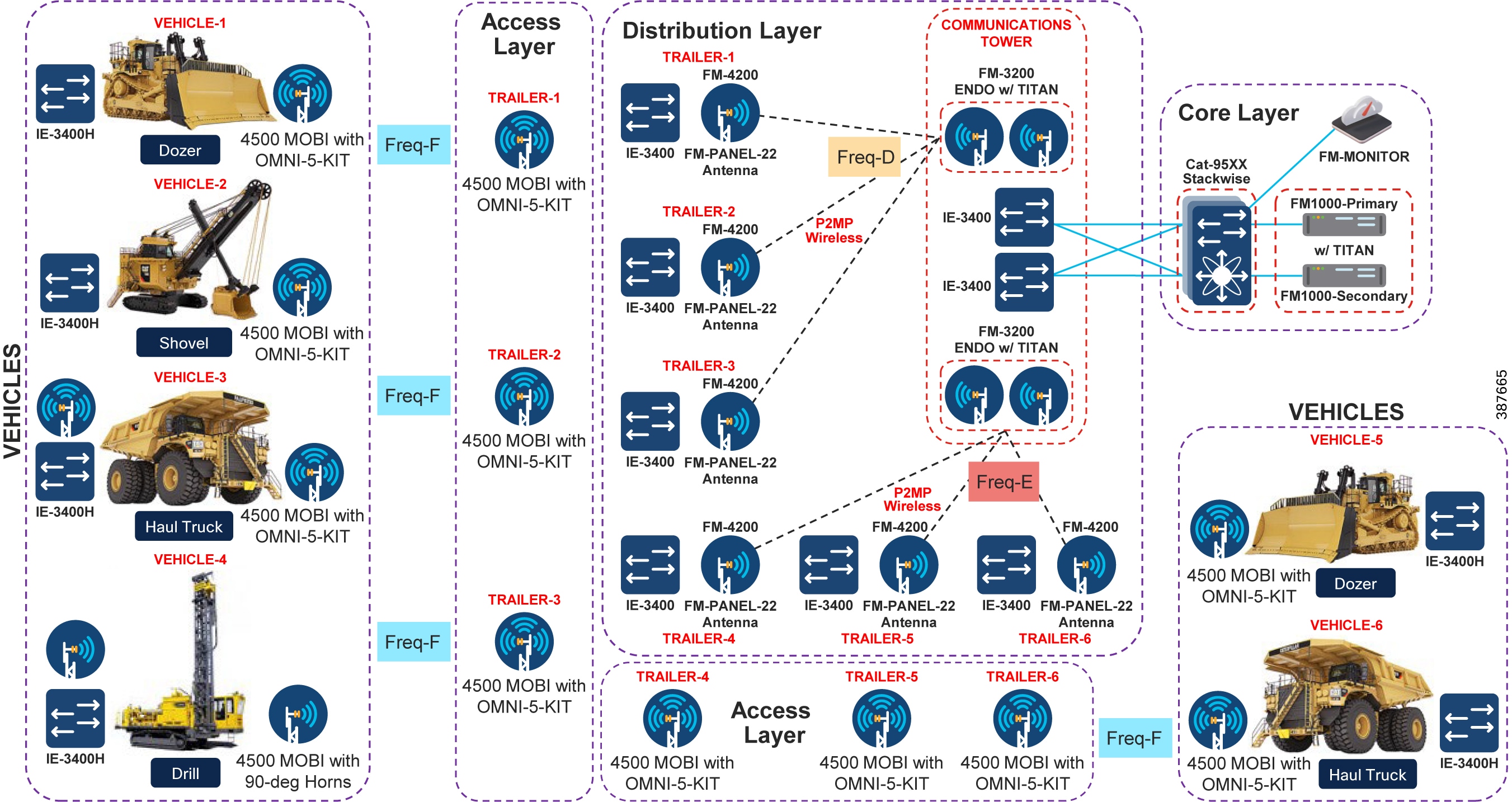

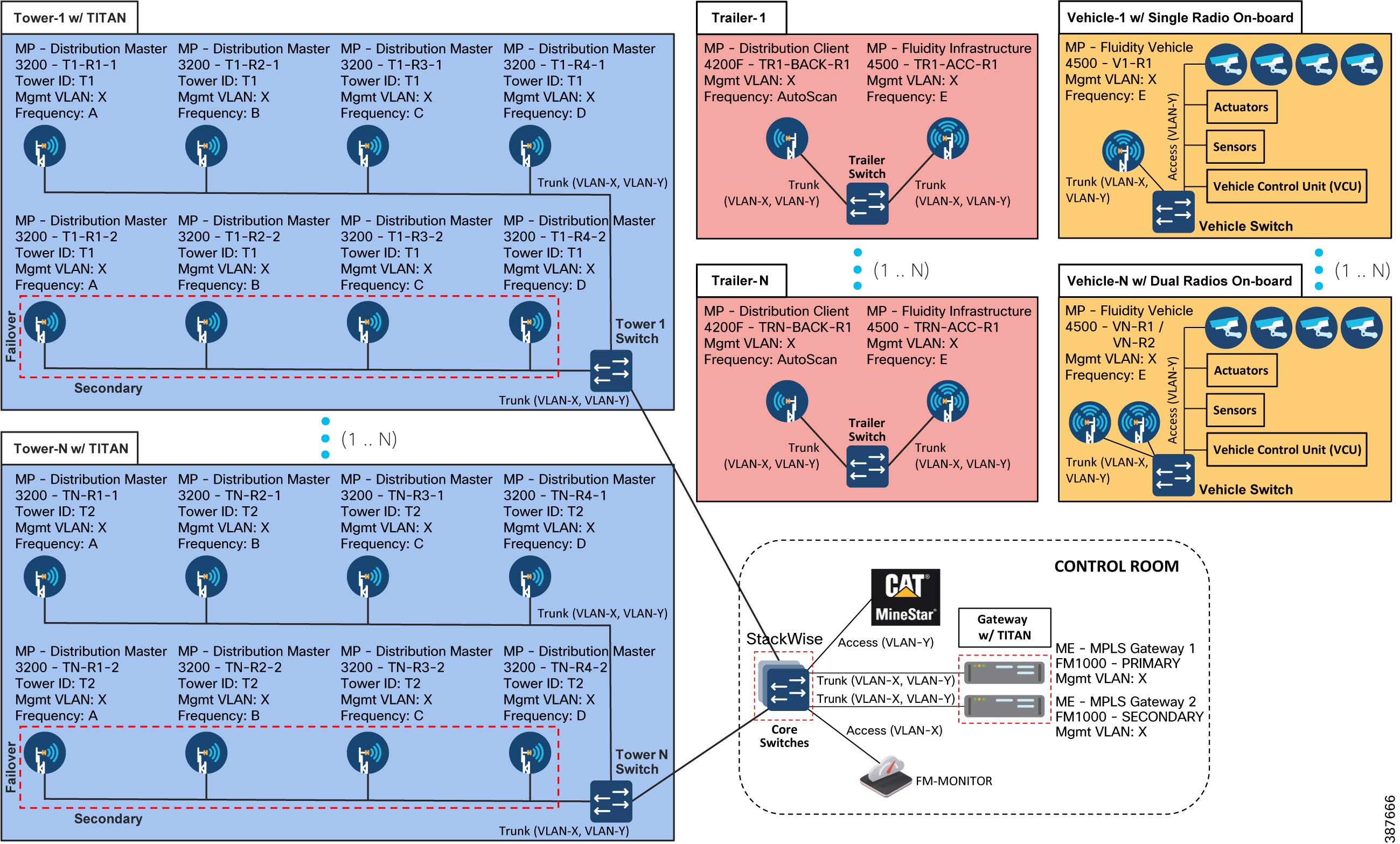

The figure below depicts a typical L2 Fluidity mobility architecture for mining. A prerequisite for L2 Fluidity is that all the CURWB devices (mesh-end gateways, access radios, and mobile radios) are within the same VLAN/IP subnet/L2 broadcast domain and are configured with the same passphrase.
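The subnet and passphrase prerequisite can be verified against a device inventory before rollout. A minimal sketch, assuming the inventory is available as a list of dictionaries; the function name, the dictionary keys, and the addresses are hypothetical, and the check cannot confirm that the devices actually share an L2 broadcast domain.

```python
import ipaddress
from typing import Dict, List


def check_fluidity_prereqs(devices: List[Dict[str, str]], subnet: str) -> List[str]:
    """Return a list of prerequisite violations for an L2 Fluidity design."""
    network = ipaddress.ip_network(subnet)
    issues = []
    for device in devices:
        if ipaddress.ip_address(device["ip"]) not in network:
            issues.append(f"{device['name']}: {device['ip']} is outside {subnet}")
    if len({device["passphrase"] for device in devices}) > 1:
        issues.append("devices do not share a single passphrase")
    return issues


inventory = [
    {"name": "mesh-end-1", "ip": "10.10.0.1", "passphrase": "example-pass"},
    {"name": "access-1", "ip": "10.10.0.21", "passphrase": "example-pass"},
    {"name": "vehicle-7", "ip": "10.10.1.7", "passphrase": "example-pass"},
]
print(check_fluidity_prereqs(inventory, "10.10.0.0/24"))
# ['vehicle-7: 10.10.1.7 is outside 10.10.0.0/24']
```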

The core layer consists of a redundant pair of mesh-end gateways. The role of the mesh-ends is to terminate the MPLS tunnels from each of the vehicle and access radios and act as a demarcation point between the wired and the wireless domains. The mesh-ends are responsible for de-encapsulating the MPLS header and then forwarding the traffic to the distribution/core switch. For the traffic originating from the wired network and going towards the mobility domain, the mesh-ends act as the default gateway and are also responsible for the MPLS encapsulation and forwarding the traffic to the appropriate vehicle radio.

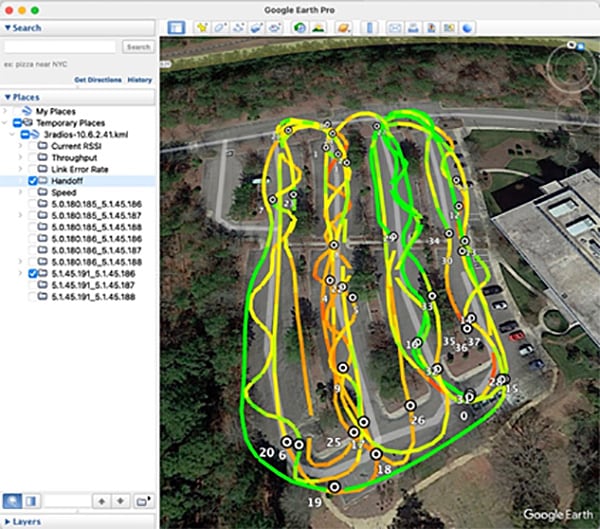

Figure 30 CURWB L2 Fluidity + CURWB Wireless Backhaul Architecture for Mining Autonomous Vehicles (Single Frequency)

The access radios are configured as mesh points in the L2 Fluidity mode with the same passphrase that is configured on the mesh-ends. The role of the access radios is to provide RF coverage for the mobility domain. The access radios are distributed across the area where wireless coverage is required while the vehicles roam. In the above architecture, all the access radios are configured to operate on the same frequency.

The radios on the vehicles are configured in “Vehicle” mode and are statically configured to use the same frequency used on the infrastructure radios.

The network architecture is based on Prodigy 2.0, an MPLS-based technology that is used to deliver IP-encapsulated data. MPLS provides an end-to-end packet delivery service operating between layers 2 and 3 of the OSI network stack. It relies on label identifiers, rather than the network destination address as in traditional IP routing, to determine the sequence of nodes to be traversed to reach the end of the path.

An MPLS-enabled device is also called a Label Switched Router (LSR). A sequence of LSR nodes configured to deliver packets from the ingress to the egress using label switching is called a Label Switched Path (LSP), or “tunnel”. LSRs situated on the border between an MPLS-enabled network and traditional IP-based devices are also called Label Edge Routers (LERs). The ingress node of the path classifies each incoming packet according to a set of Forwarding Equivalence Classes (FECs); when a packet matches a class, it is marked with the label associated with that class and then forwarded to the next node in the sequence, according to the information configured in the Forwarding Information Base (FIB) table of the node. Subsequently, each intermediate node manipulates the MPLS label(s) stored in the packet and then forwards the data to the next node. The egress node finally removes the label and handles the packet using normal IP routing functions.
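The push, swap, and pop sequence described above can be traced with a toy model. This is a generic illustration of MPLS label switching, not the Prodigy implementation: the prefix, the label values, the two-hop path, and the function name are all hypothetical, and each intermediate node is reduced to a single label-swap table.

```python
import ipaddress
from typing import List, Tuple

# Ingress LER: Forwarding Equivalence Class (destination prefix) -> label to push.
INGRESS_FEC = {ipaddress.ip_network("10.20.0.0/16"): 100}

# Intermediate LSRs in path order: incoming label -> outgoing label.
SWAP_TABLES = [{100: 200}, {200: 300}]


def send(destination: str) -> List[Tuple[str, int]]:
    """Trace the label operations applied to a packet along the LSP."""
    address = ipaddress.ip_address(destination)
    label = next((lbl for net, lbl in INGRESS_FEC.items() if address in net), None)
    if label is None:
        raise LookupError(f"no FEC matches {destination}")
    trace = [("ingress push", label)]
    for table in SWAP_TABLES:
        label = table[label]           # each LSR swaps the label
        trace.append(("swap", label))
    trace.append(("egress pop", label))  # egress removes the label, then routes by IP
    return trace


print(send("10.20.1.5"))
# [('ingress push', 100), ('swap', 200), ('swap', 300), ('egress pop', 300)]
```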

The FIBs on the different nodes of the network are managed by means of a Label Distribution Protocol (LDP) that is the primary component of the so-called network control plane. Fluidity relies on a custom label distribution protocol that provides automated installation of LSPs among the different nodes of the network; this ensures that each node can be reached from any other node.

In traditional MPLS networks, whenever the network topology changes for any reason, the FIBs of the nodes involved must be reconfigured to adapt to the new paths. This is usually performed using the standard label distribution protocol signaling available.

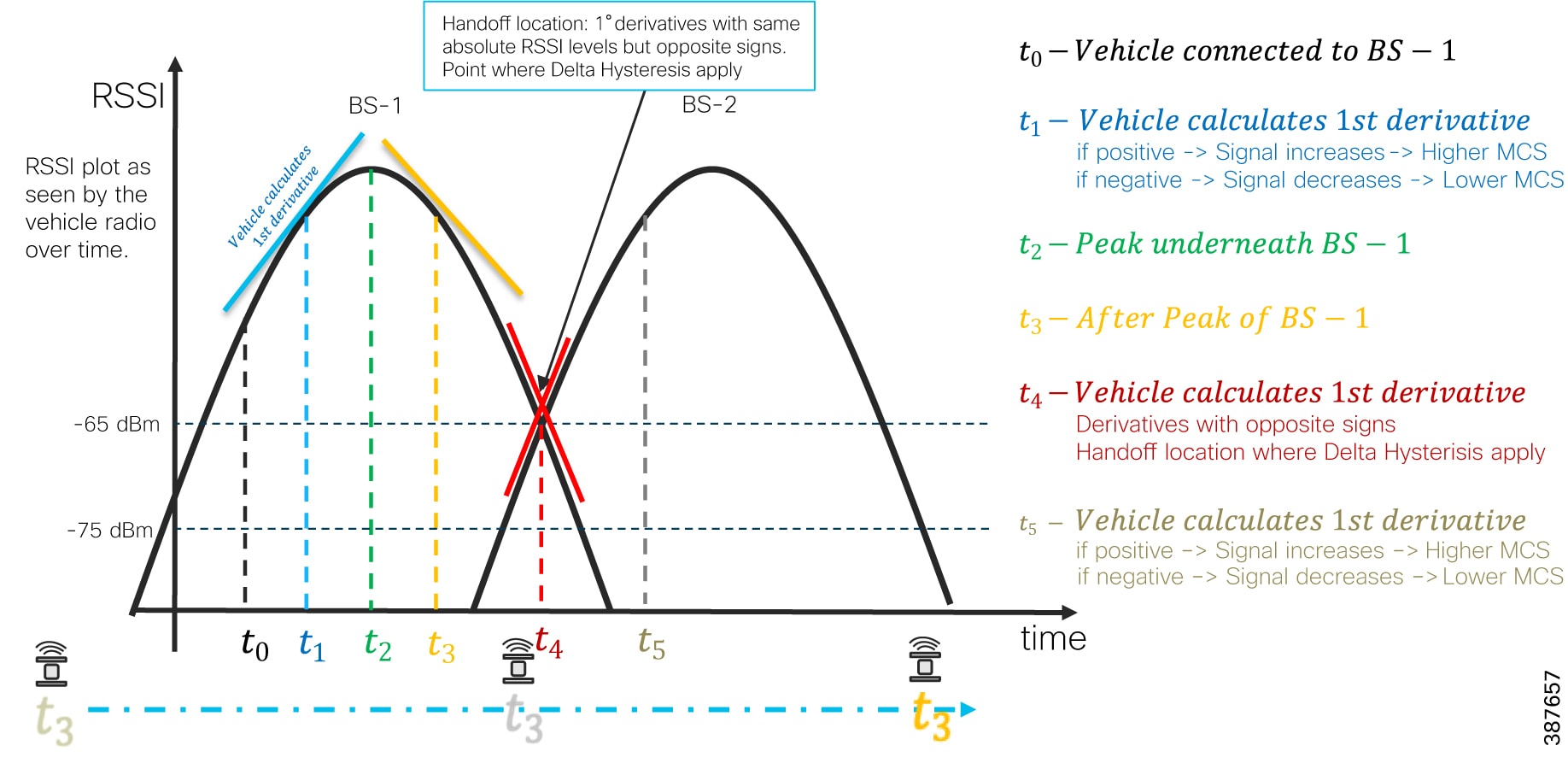

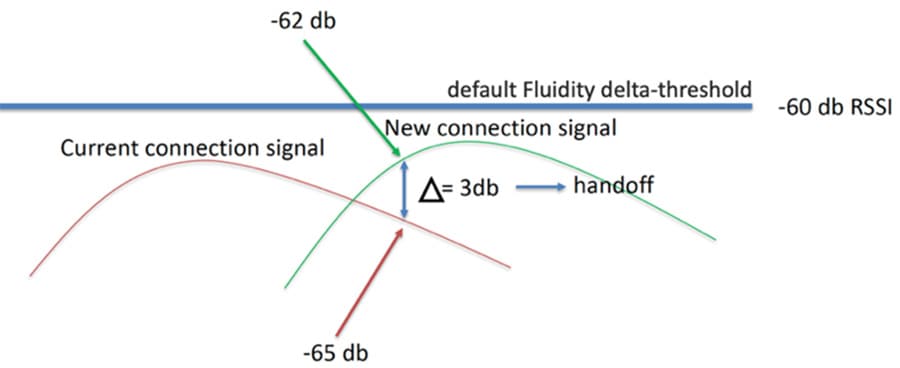

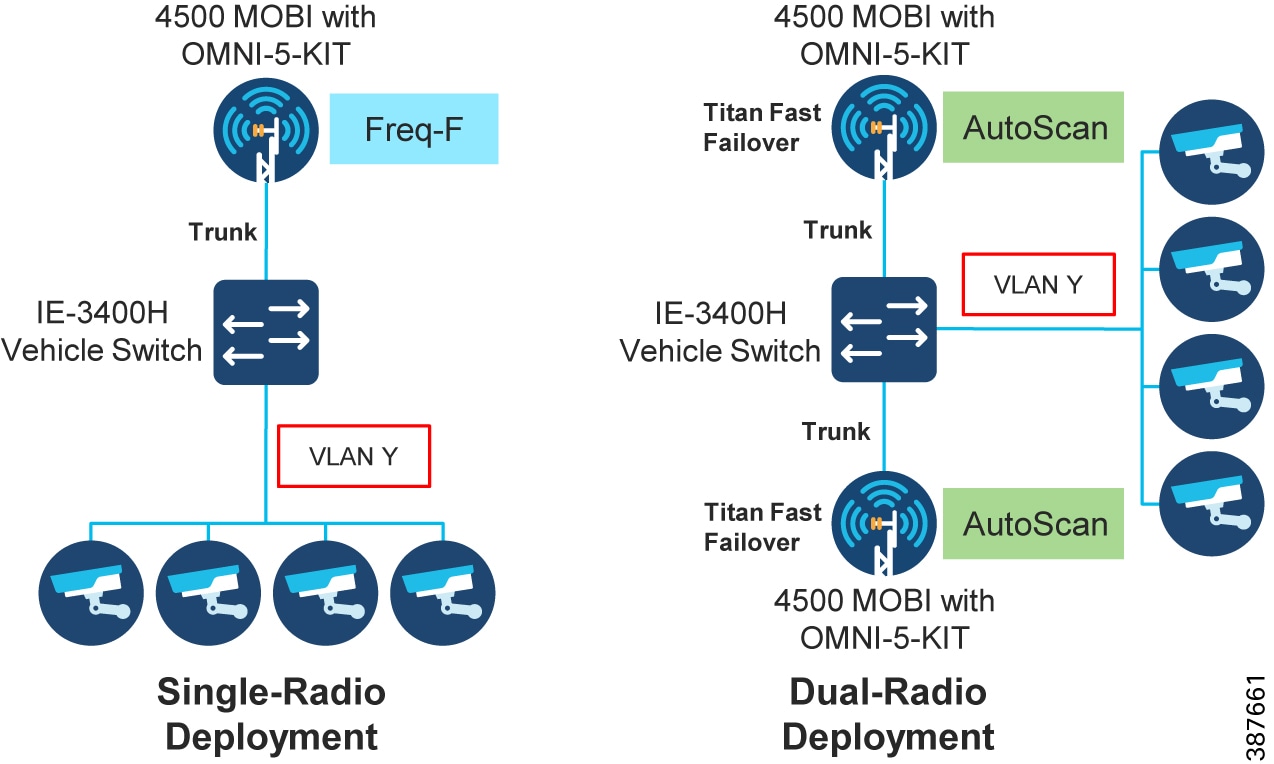

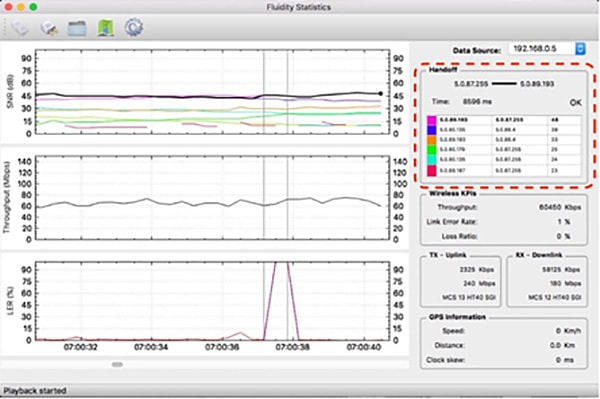

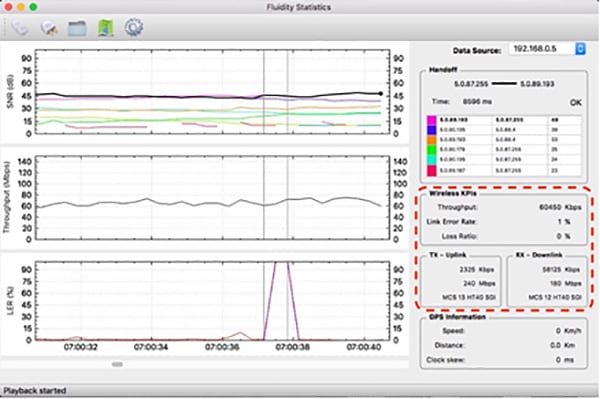

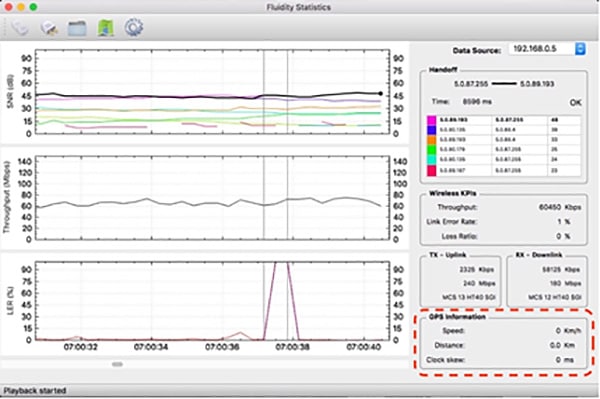

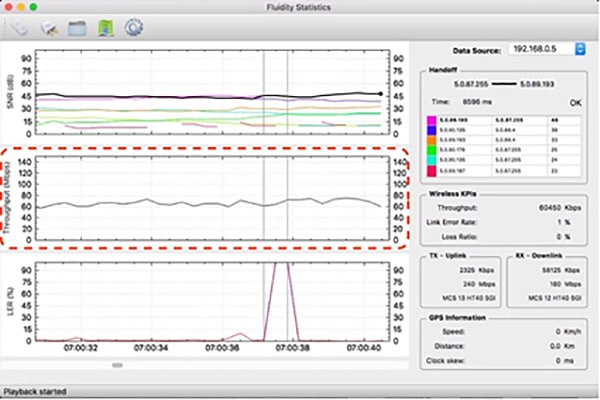

In a mobility network scenario, the handoff process can be treated as a network topology change in which one link is broken and a new one is created, as in Wi-Fi. The standard mechanisms for detecting the change and reconfiguring the nodes are, however, too slow and data-intensive to provide adequate performance in a real-time constrained scenario such as high-speed mobility. In particular, the overall reconfiguration latency and the number of messages exchanged should be minimized to reduce the chance that data packets are lost in the process.