Hadoop as a Service (HaaS) with Cisco UCS Common Platform Architecture (CPA v2) for Big Data and OpenStack

Table of Contents

About Cisco Validated Design (CVD) Program

Hortonworks Data Platform (HDP 2.0)

Hortonworks: Key Features and Benefits

Canonical Ubuntu 12.04 LTS Release

Canonical Ubuntu OpenStack Architecture benefits

Hadoop as a Service (HaaS) Architecture Overview

Server Configuration and Cabling

Software Distributions and Versions

Hortonworks Data Platform (HDP 2.0)

Canonical Ubuntu 12.04 LTS Release

Performing Initial Setup of Cisco UCS 6296 Fabric Interconnects

Upgrading UCS Manager Software to Version 2.2(1b)

Adding Block of IP Addresses for KVM Access

Editing Chassis/FEX Discovery Policy

Enabling Server Ports and Uplink Ports

Creating Pools for Service Profile Templates

Creating Policies for Service Profile Templates

Creating Host Firmware Package Policy

Creating Local Disk Configuration Policy

Creating Service Profile Template

Configuring Network Settings for the Template

Configuring Storage Policy for the Template

Configuring vNIC/vHBA Placement for the Template

Configuring Server Boot Order for the Template

Configuring Server Assignment for the Template

Configuring Operational Policies for the Template

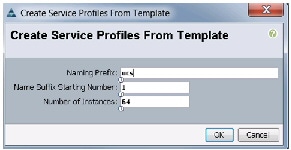

Creating Service Profiles from Templates

Configuring Nytro Flash on all node for installing OS

Configuring Disk Drives on all nodes

Installing Ubuntu Server 12.04.4 LTS with KVM

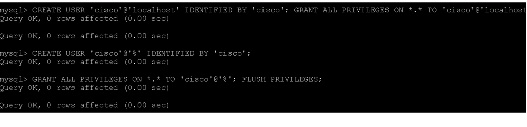

Install MySQL Server on the Controller Node

Install MySql Client on all the Other Nodes

OpenStack Repository - Ubuntu Cloud Archive for Havana

Install Apache on the Controller Node

Canonical Ubuntu OpenStack Havana Software Components

Installing Havana OpenStack Services

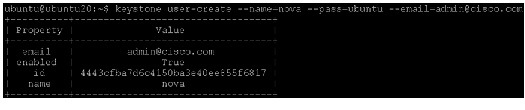

Define Users, Tenants, and Roles

Define services and API endpoints

Verify the Identity Service installation

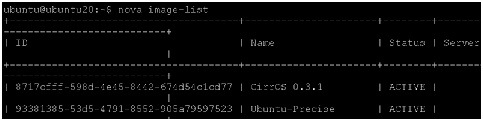

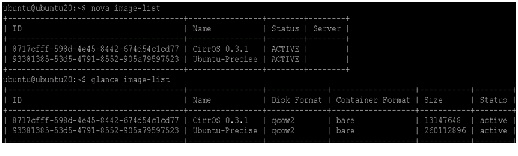

Verify the Image Service installation

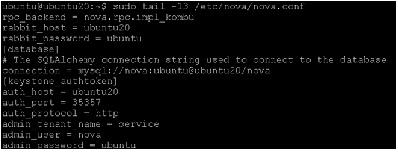

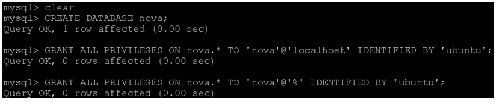

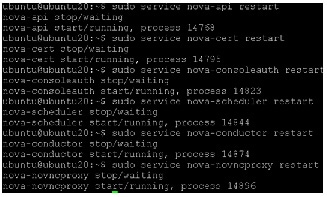

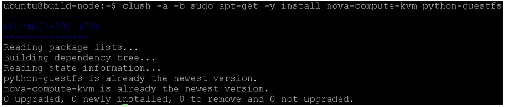

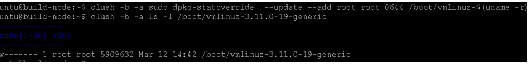

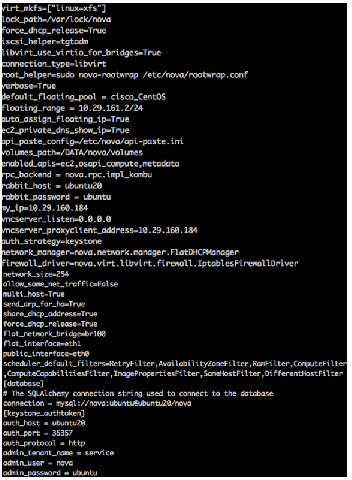

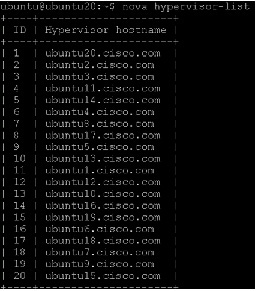

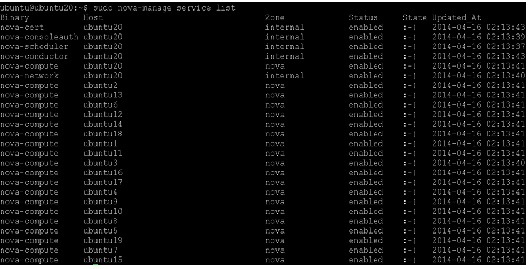

Install Compute controller services

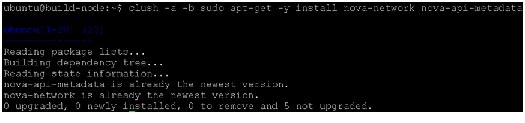

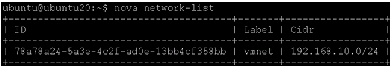

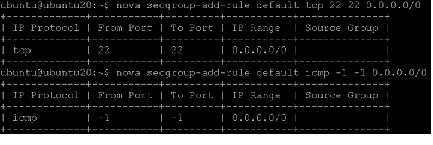

Enable Networking (nova-networking)

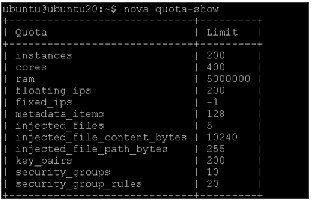

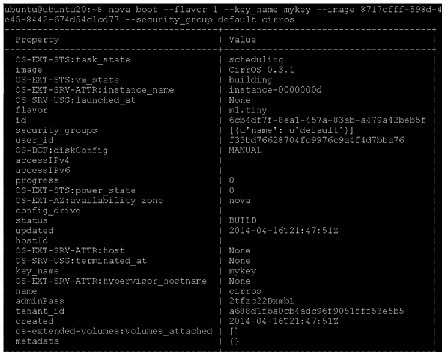

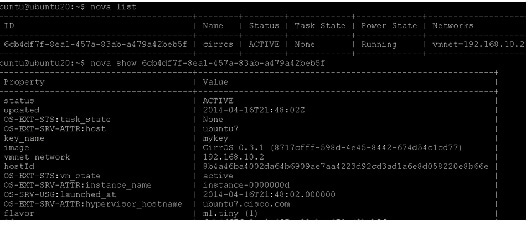

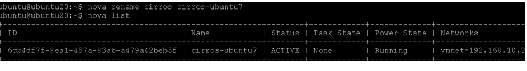

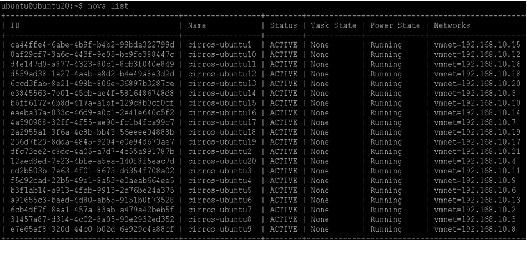

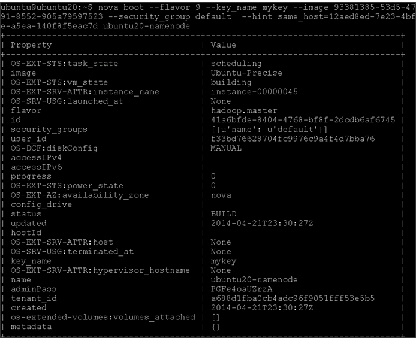

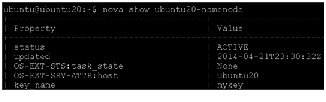

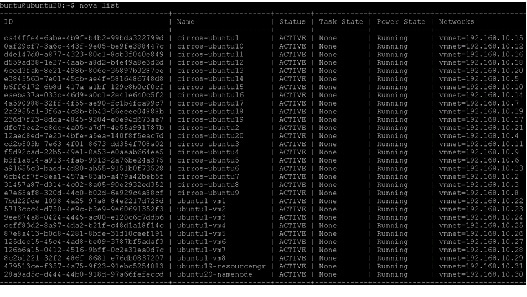

Allocated floating-ips to the VMs

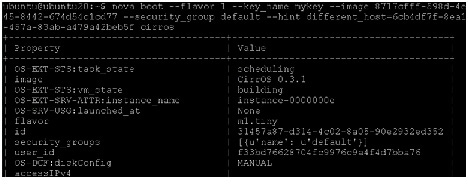

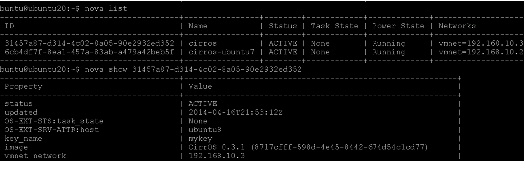

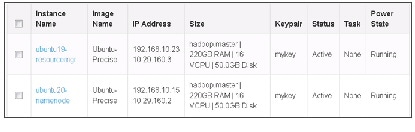

Pre-config of VM cluster for HDP Installation

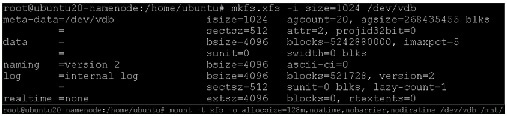

Setting Up XFS Filesystem Master Nodes

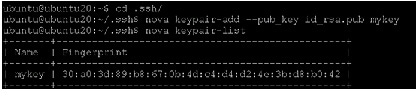

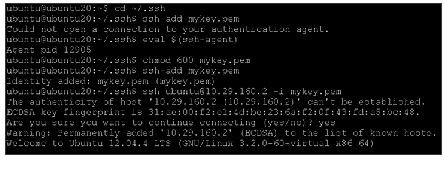

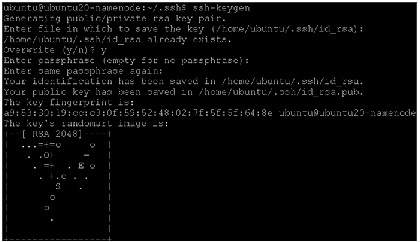

Setting Up Password-less Login within VMs

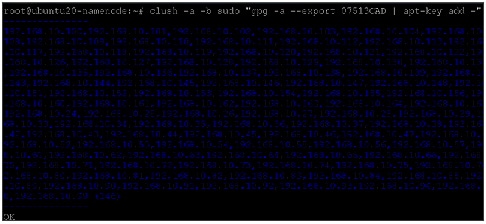

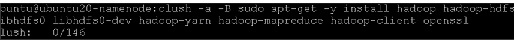

HDP 2.0 Repo for Ubuntu on all VMs

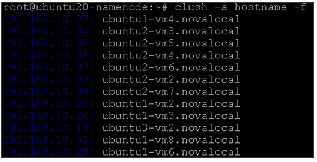

Fully Qualified domain name (FQDN)

Install MySQL (resourcemanager VM)

Role Assignment – Masters/Slaves

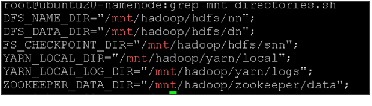

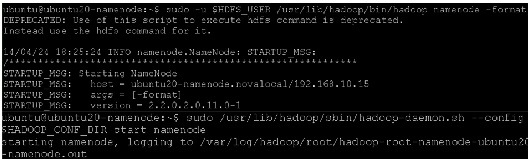

Create the NameNode Directories

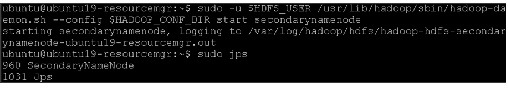

Create the SecondaryNameNode Directories

Create DataNode and YARN NodeManager Local Directories

Create the Log and PID Directories

Determine YARN and MapReduce Memory Configuration Settings

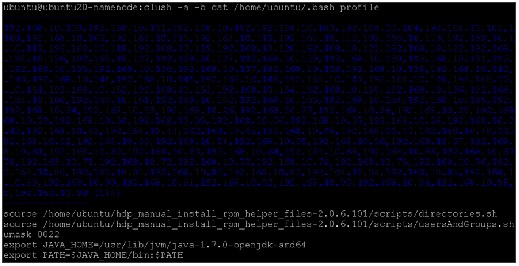

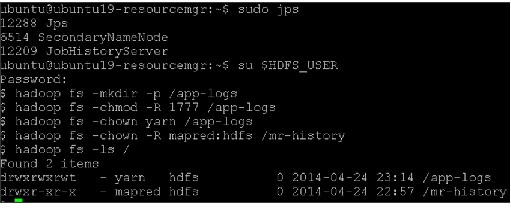

Set Default File and Directory Permissions

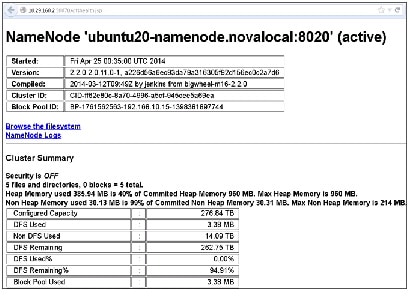

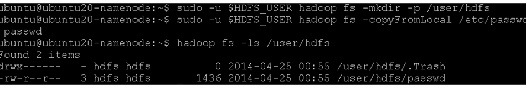

Validating the Core Hadoop Installation

Start MapReduce JobHistory Server

Installing HBase and Zookeeper

Create the Hbase Log and PID Directories

Create the Zookeeper Data and Pid Directories

Set Up the Configuration Files for Zookeeper and Hbase

Installing Apache Hive/HCatalog

About Cisco Validated Design (CVD) Program

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit: http://www.cisco.com/go/designzone .

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

The Cisco implementation of TCP header compression is an adaptation of a program developed by the University of California, Berkeley (UCB) as part of UCB’s public domain version of the UNIX operating system. All rights reserved. Copyright © 1981, Regents of the University of California.

Cisco and the Cisco logo are trademarks or registered trademarks of Cisco and/or its affiliates in the U.S. and other countries. To view a list of Cisco trademarks, go to this URL: http://www.cisco.com/go/trademarks . Third-party trademarks mentioned are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (1110R).

Any Internet Protocol (IP) addresses and phone numbers used in this document are not intended to be actual addresses and phone numbers. Any examples, command display output, network topology diagrams, and other figures included in the document are shown for illustrative purposes only. Any use of actual IP addresses or phone numbers in illustrative content is unintentional and coincidental.

© 2014 Cisco Systems, Inc. All rights reserved.

Acknowledgment

The authors acknowledge Ashwin Manjunatha, Ajay Singh, Mehul Bhatt, Samantha Jian-Pielak, Marc Solanas Tarre, Debo Dutta and Sindhu Sudhir for their contributions in developing this document.

Introduction

Hadoop has become a strategic data platform embraced by mainstream enterprises as it offers a path for businesses to unlock value in big data while maximizing existing investments. The Hortonworks Data Platform 2.0 (HDP 2.0) is a 100% open source distribution of Apache Hadoop that is built, tested and hardened with enterprise rigor. The combination of HDP and Cisco UCS provides an industry-leading platform for Hadoop based application deployments. Hadoop as a Service is new to the industry but is gaining traction in many Service Providers and IT Organizations. This CVD focuses on setting up OpenStack on Ubuntu to deploy and manage Hadoop as a Service on Cisco UCS Common Platform Architecture version 2 (CPA v2).

Audience

This document describes the architecture and deployment procedures of Hadoop as a Service (HaaS) with OpenStack and Hortonworks Data Platform 2.0 (HDP 2.0) for Hadoop on a 64-node cluster based on CPA v2 for Big Data. The intended audience of this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers who want to deploy Hadoop as a Service with HDP 2.0 on Cisco UCS CPA v2 for Big Data.

Big Data

The Cisco UCS solution for HDP 2.0 is based on CPA v2 for Big Data, a highly scalable architecture designed to meet a variety of scale-out application demands with seamless data integration and management integration capabilities. It is built using the following components:

- Cisco UCS 6200 Series Fabric Interconnects: provide high-bandwidth, low-latency connectivity for servers, with integrated, unified management provided for all connected devices by Cisco UCS Manager. Deployed in redundant pairs, Cisco fabric interconnects offer the full active-active redundancy, performance, and exceptional scalability needed to support the large number of nodes that are typical in clusters serving big data applications. Cisco UCS Manager enables rapid and consistent server configuration using service profiles, automating ongoing system maintenance activities such as firmware updates across the entire cluster as a single operation. Cisco UCS Manager also offers advanced monitoring with options to raise alarms and send notifications about the health of the entire cluster.

- Cisco UCS 2200 Series Fabric Extenders: extend the network into each rack, acting as remote line cards for fabric interconnects and providing highly scalable and extremely cost-effective connectivity for a large number of nodes.

- Cisco UCS C-Series Rack Mount Servers: 2-socket servers based on Intel Xeon E5-2600 v2 series processors, supporting up to 768GB of main memory. The performance-optimized option supports 24 Small Form Factor (SFF) disk drives and the capacity-optimized option supports 12 Large Form Factor (LFF) disk drives, along with 4 Gigabit Ethernet LAN-on-motherboard (LOM) ports.

- Cisco UCS Virtual Interface Cards (VICs): unique to Cisco, these cards incorporate next-generation converged network adapter (CNA) technology and offer dual 10Gbps ports designed for use with Cisco UCS C-Series Rack-Mount Servers. Optimized for virtualized networking, these cards deliver high performance and bandwidth utilization and support up to 256 virtual devices.

- Cisco UCS Manager: resides within the Cisco UCS 6200 Series Fabric Interconnects. It makes the system self-aware and self-integrating, managing all of the system components as a single logical entity. Cisco UCS Manager can be accessed through an intuitive graphical user interface (GUI), a command-line interface (CLI), or an XML application-programming interface (API). Cisco UCS Manager uses service profiles to define the personality, configuration, and connectivity of all resources within Cisco UCS, radically simplifying provisioning of resources so that the process takes minutes instead of days. This simplification allows IT departments to shift their focus from constant maintenance to strategic business initiatives.

Hortonworks Data Platform (HDP 2.0)

Apache Hadoop is an open-source software framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. The Hortonworks Data Platform 2.0 (HDP 2.0) is an enterprise-grade Apache Hadoop distribution that enables storing, processing, and managing large data sets. HDP 2.0 combines Apache Hadoop and its related projects into a single tested and certified package.

Hortonworks: Key Features and Benefits

With HDP 2.0, enterprises can retain and process large amounts of data, join new and existing data sets, and lower the cost of data analysis compared to traditional solutions. Hortonworks enables enterprises to implement the following data management principles:

- Retain as much data as possible. Traditional data warehouses age, and over time will eventually store only summary data. Analyzing detailed records is often critical to uncovering useful business insights.

- Join new and existing data sets. Enterprises can build large-scale environments for transactional data with analytic databases, but these solutions are not always well suited to processing nontraditional data sets such as text, images, machine data, and online data. Hortonworks enables enterprises to incorporate both structured and unstructured data in one comprehensive data management system.

- Archive data at low cost. It is not always clear what portion of stored data will be of value for future analysis. Therefore, it can be difficult to justify expensive processes to capture, cleanse, and store that data. Hadoop scales easily, so you can store years of data without much incremental cost, and find deeper patterns that your competitors may miss.

- Access all data efficiently. Data needs to be readily accessible. Apache Hadoop clusters can provide a low-cost solution for storing massive data sets while still making the information readily available. Hadoop is designed to efficiently scan all of the data, which is complementary to databases that are efficient at finding subsets of data.

- Apply data cleansing and data cataloging. Categorize and label all data in Hadoop with enough descriptive information (metadata) to make sense of it later, and to enable integration with transactional databases and analytic tools. This greatly reduces the time and effort of integrating with other data sets, and avoids a scenario in which valuable data is eventually rendered useless.

- Integrate with existing platforms and applications. There are many business intelligence (BI) and analytic tools available, but they may not be compatible with your particular data warehouse or DBMS. Hortonworks connects seamlessly with many leading analytic, data integration, and database management tools.

The HDP 2.0 is the foundation for the next-generation enterprise data architecture – one that addresses both the volume and complexity of today’s data.

Canonical Ubuntu 12.04 LTS Release

Ubuntu 12.04, also known by its code name “Precise Pangolin”, is a Long Term Support (LTS) release from Canonical. Support for Ubuntu 12.04 is expected to continue until April 2017, providing a robust long-term support framework for customers. For open source projects, support is a crucial component, and Canonical provides enterprise-level scale, stability, and support for the underlying operating system as well as the OpenStack components for cloud deployment on Ubuntu.

Canonical Ubuntu OpenStack Architecture benefits

Canonical OpenStack Platform on Canonical Ubuntu 12.04 provides the foundation to build a private or public Infrastructure as a Service (IaaS) for cloud-enabled workloads. It allows organizations to leverage OpenStack, the largest and fastest growing open source cloud infrastructure project, while maintaining the security, stability, and enterprise readiness of a platform built on Canonical Ubuntu 12.04.

Canonical Ubuntu OpenStack Platform gives organizations an open framework for hosting cloud workloads. In conjunction with other Ubuntu technologies, Canonical Ubuntu OpenStack Platform allows organizations to move from traditional workloads to cloud-enabled workloads on their own terms and timelines, as their applications require.

Canonical Ubuntu OpenStack Platform provides a certified ecosystem of hardware, software, and services, an enterprise lifecycle that extends the community OpenStack release cycle, and Canonical support on both the OpenStack modules and their underlying Linux dependencies.

Hadoop as a Service (HaaS) Architecture Overview

The Architecture for Hadoop as a Service is outlined in this section.

Canonical Ubuntu 12.04 LTS is both the host OS and the guest OS on top of OpenStack. The tested configuration is based on Cisco UCS CPA v2 for Big Data, optimized for capacity with flash memory; see http://www.cisco.com/c/dam/en/us/solutions/collateral/borderless-networks/advanced-services/common_platform_architecture.pdf . The OpenStack release used for this solution is Havana. The OpenStack components used are Keystone for the Identity service, Glance for the VM Image service, Nova for compute (nova-compute uses KVM as the hypervisor), nova-network for networking (configured as a flat network), and Horizon for the OpenStack dashboard. Storage is ephemeral, that is, local to the VM and deleted when the VM is terminated. Hortonworks Data Platform 2.0 is installed manually on the guest VMs.

Note

This CVD uses ephemeral storage for data. One reason for this choice is to keep compute local to storage, which is fundamental to Hadoop; otherwise, the additional network traffic would slow performance. Data redundancy is managed inherently by HDFS (Hadoop Distributed File System). Other solutions on top of OpenStack can keep storage local to compute, or provide an alternative to HDFS with hooks into Hadoop MapReduce; these are beyond the scope of this CVD.

OpenStack and Hadoop Layout

Of the 64 nodes (physical servers), one node is the controller node for OpenStack. A controller node runs all the services necessary to manage the compute nodes, which host VMs. Compute nodes run only minimal services and refer to the controller node for all queries and for managing the VM lifecycle within the individual servers. Typically a controller node does not host VMs; in other words, it does not run nova-compute. In this architecture, however, the Hadoop NameNode runs as a single VM on the controller node, so nova-compute services must run on the controller node as well.

To host the Hadoop master services (namely NameNode and ResourceManager), two nodes each run a single VM: one hosts the Hadoop NameNode and the other hosts the Hadoop ResourceManager/Secondary NameNode (and other master services discussed later). All other compute nodes host multiple VMs, which are used for data and tasks.

Note

If running a smaller cluster, say fewer than 16 nodes, all Hadoop master services can be placed in a single VM on one node, which is the OpenStack controller node. However, Hadoop data and task (job) services are not run on this VM.
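The node layout described in this section can be sketched in a few lines of Python. This is only an illustration of the role split (one controller node hosting the NameNode VM, one node hosting the ResourceManager/Secondary NameNode VM, and the remaining nodes hosting worker VMs); the hostnames and the `plan_roles` function are hypothetical and are not part of the CVD.

```python
from __future__ import annotations


def plan_roles(total_nodes: int = 64) -> dict[str, list[str]]:
    """Split physical nodes into the three roles described in the layout above."""
    if total_nodes < 3:
        raise ValueError("need a controller, a second master host, and at least one worker")
    # Hypothetical hostnames, used only for illustration.
    nodes = [f"node{i:02d}" for i in range(1, total_nodes + 1)]
    return {
        # The controller also runs nova-compute so it can host the single NameNode VM.
        "controller_namenode": nodes[:1],
        # One further node runs a single VM for ResourceManager/Secondary NameNode.
        "resourcemanager": nodes[1:2],
        # All remaining compute nodes host multiple VMs for data and tasks.
        "workers": nodes[2:],
    }


layout = plan_roles(64)
for role, hosts in layout.items():
    print(f"{role}: {len(hosts)} node(s)")
```

For the 64-node cluster this leaves 62 nodes for data and task VMs.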

Solution Overview

The current version of the Cisco UCS CPA v2 for Big Data offers the following configuration depending on the compute and storage requirements:

Note

This CVD describes the install process for a 64 node Capacity Optimized with Flash Memory Cluster Configuration with 256GB Memory.

The Capacity Optimized with Flash memory cluster configuration consists of the following:

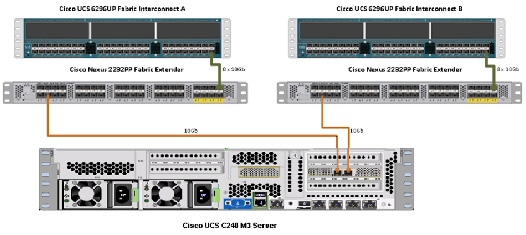

Rack and PDU Configuration

Each rack has two vertical PDUs. The master rack contains two Cisco UCS 6296UP Fabric Interconnects, two Cisco Nexus 2232PP Fabric Extenders, and sixteen Cisco UCS C240 M3 Servers, connected to each of the vertical PDUs for redundancy, thereby ensuring availability during a power source failure. Each expansion rack contains two Cisco Nexus 2232PP Fabric Extenders and sixteen Cisco UCS C240 M3 Servers, connected to each of the vertical PDUs for redundancy in the same way as the master rack.

Note

Please contact your Cisco representative for country specific information.

Table 2 and Table 3 describe the rack configurations of rack 1 (master rack) and racks 2-4 (expansion racks).

Server Configuration and Cabling

This CVD focuses on the architecture for Canonical Ubuntu OpenStack on UCS platform using Cisco UCS C-Series Servers for both compute and storage. Cisco UCS C240 M3 servers are used as compute, storage and controller nodes (from OpenStack perspective). UCS C-Series Servers are managed by UCS Manager, which provides ease of infrastructure management, and built-in network high availability.

The C240 M3 rack server is equipped with Intel Xeon E5-2660 v2 processors, 256GB of memory, Cisco UCS Virtual Interface Card 1225, Cisco LSI Nytro MegaRAID 8110-4i with 200GB Flash storage controller and 12 x 4TB 7.2K SATA disk drives.
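As a rough sizing aid, the per-server figures above can be multiplied out for the 64-node cluster. The sketch below is an upper bound only: it assumes every server contributes all 12 data disks, ignores filesystem overhead, and assumes the HDFS default replication factor of 3, which this section does not state.

```python
SERVERS = 64            # cluster size used in this CVD
DISKS_PER_SERVER = 12   # 12 x 4TB SATA drives per C240 M3
DISK_TB = 4
MEMORY_GB = 256         # memory per server
HDFS_REPLICATION = 3    # assumption: HDFS default replication factor


def raw_capacity_tb(servers=SERVERS, disks=DISKS_PER_SERVER, disk_tb=DISK_TB):
    """Raw disk capacity across the cluster, in TB."""
    return servers * disks * disk_tb


def replicated_capacity_tb(raw_tb, replication=HDFS_REPLICATION):
    """Capacity left for unique data once every block is stored `replication` times."""
    return raw_tb / replication


raw = raw_capacity_tb()
print(f"raw disk capacity:       {raw} TB")
print(f"after {HDFS_REPLICATION}x replication:    {replicated_capacity_tb(raw):.0f} TB")
print(f"total cluster memory:    {SERVERS * MEMORY_GB} GB")
```

In practice the usable figure is lower, because the nodes hosting the master VMs do not store HDFS data.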

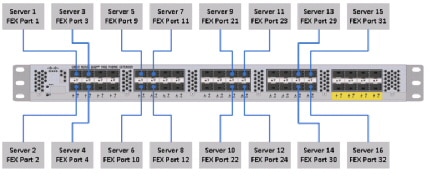

Figure 1 illustrates the ports on the Cisco Nexus 2232PP Fabric Extender connecting to the Cisco UCS C240 M3 Servers. Sixteen Cisco UCS C240 M3 servers are used in Master rack configurations.

Figure 1 Topology Diagram of Cisco UCS C240 M3 Server

Figure 2 illustrates the port connectivity between the Cisco Nexus 2232PP fabric extender and Cisco UCS C240M3 server.

Figure 2 Connectivity Diagram of Cisco Nexus 2232PP FEX and Cisco UCS C240 M3 Servers

For more information on physical connectivity and single-wire management see:

http://www.cisco.com/en/US/docs/unified_computing/ucs/c-series_integration/ucsm2.1/b_UCSM2-1_C-Integration_chapter_010.html

For more information on physical connectivity illustrations and cluster setup, see:

http://www.cisco.com/en/US/docs/unified_computing/ucs/c-series_integration/ucsm2.1/b_UCSM2-1_C-Integration_chapter_010.html#reference_FE5B914256CB4C47B30287D2F9CE3597

Figure 3 depicts a 64 node cluster. Each link in the figure represents 8 x 10 Gigabit links.

Figure 3 64 Node Cluster Configuration
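The 8 x 10 Gigabit links between each fabric extender and its fabric interconnect give a simple oversubscription estimate. The sketch assumes each of the sixteen servers in a rack has one 10Gbps link to each fabric extender (one VIC 1225 port per fabric); that cabling detail is an assumption for illustration and should be checked against Figure 2.

```python
def fex_oversubscription(servers_per_fex=16, server_link_gbps=10,
                         uplinks=8, uplink_gbps=10):
    """Ratio of server-facing bandwidth to uplink bandwidth on one fabric extender."""
    downstream = servers_per_fex * server_link_gbps   # toward the servers
    upstream = uplinks * uplink_gbps                  # toward the fabric interconnect
    return downstream / upstream


ratio = fex_oversubscription()
print(f"FEX oversubscription: {ratio:.1f}:1")
```

Under these assumptions the ratio is 2:1 per fabric extender; changing the number of uplinks in the chassis/FEX discovery policy changes the ratio accordingly.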

Software Distributions and Versions

The required software distributions and versions are listed below.

Hortonworks Data Platform (HDP 2.0)

The Hortonworks Data Platform supported is HDP 2.0. For more information visit http://www.hortonworks.com

Canonical Ubuntu 12.04 LTS Release

The operating system supported is Ubuntu 12.04.4 Server. For more information visit http://www.ubuntu.com

Software Versions

The software versions tested and validated in this document are listed in Table 4 .

Note

The latest drivers can be downloaded from the link below: http://software.cisco.com/download/release.html?mdfid=284296254&flowid=31743&softwareid=283853158&release=1.5.1&relind=AVAILABLE&rellifecycle=&reltype=latest

Fabric Configuration

This section provides details for configuring a fully redundant, highly available Cisco UCS 6296 fabric configuration.

1. Perform the initial setup of Fabric Interconnects A and B.

2. Connect to the IP address of Fabric Interconnect A using a web browser.

4. Edit the chassis discovery policy.

5. Enable server and uplink ports.

6. Create pools and policies for the service profile template.

7. Create the service profile template and 64 service profiles.

Note

Make sure that L1 of Fabric A is connected to L1 of Fabric B, and L2 of Fabric A is connected to L2 of Fabric B.

Performing Initial Setup of Cisco UCS 6296 Fabric Interconnects

This section describes the steps to perform initial setup of the Cisco UCS 6296 Fabric Interconnects A and B.

Configure Fabric Interconnect A

Follow these steps to configure Fabric Interconnect A:

1. Connect to the console port on the first Cisco UCS 6296 Fabric Interconnect.

2. At the prompt to enter the configuration method, enter console to continue.

3. If asked to either perform a new setup or restore from backup, enter setup to continue.

4. Enter y to continue to set up a new Fabric Interconnect.

5. Enter y to enforce strong passwords.

6. Enter the password for the admin user.

7. Enter the same password again to confirm the password for the admin user.

8. When asked if this fabric interconnect is part of a cluster, answer y to continue.

9. Enter A for the switch fabric.

10. Enter the cluster name for the system name.

11. Enter the Mgmt0 IPv4 address.

12. Enter the Mgmt0 IPv4 netmask.

13. Enter the IPv4 address of the default gateway.

14. Enter the cluster IPv4 address.

15. To configure DNS, answer y.

16. Enter the DNS IPv4 address.

17. Answer y to set up the default domain name.

18. Enter the default domain name.

19. Review the settings that were printed to the console, and if they are correct, answer yes to save the configuration.

20. Wait for the login prompt to make sure the configuration has been saved.

Configure Fabric Interconnect B

Follow these steps to configure Fabric Interconnect B:

1. Connect to the console port on the second Cisco UCS 6296 Fabric Interconnect.

2. When prompted to enter the configuration method, enter console to continue.

3. The installer detects the presence of the partner Fabric Interconnect and adds this fabric interconnect to the cluster. Enter y to continue the installation.

4. Enter the admin password that was configured for the first Fabric Interconnect.

5. Enter the Mgmt0 IPv4 address.

6. Answer yes to save the configuration.

7. Wait for the login prompt to confirm that the configuration has been saved.

For more information on configuring Cisco UCS 6200 Series Fabric Interconnect, see:

http://www.cisco.com/en/US/docs/unified_computing/ucs/sw/gui/config/guide/2.0/b_UCSM_GUI_Configuration_Guide_2_0_chapter_0100.html
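The console setup above asks for three addresses on the management network (Mgmt0 for Fabric Interconnect A, Mgmt0 for Fabric Interconnect B, and the cluster address) plus a netmask and gateway. A small pre-check like the following can catch typos before the values are entered. It is a sketch using Python's standard `ipaddress` module; the function name and the sample addresses (taken from the 192.0.2.0/24 documentation range) are hypothetical.

```python
import ipaddress


def validate_fi_plan(netmask, gateway, mgmt_a, mgmt_b, cluster_ip):
    """Return a list of problems found in a fabric interconnect addressing plan."""
    # Derive the management subnet from the gateway and netmask.
    network = ipaddress.ip_network(f"{gateway}/{netmask}", strict=False)
    plan = {
        "gateway": gateway,
        "FI-A Mgmt0": mgmt_a,
        "FI-B Mgmt0": mgmt_b,
        "cluster": cluster_ip,
    }
    problems = []
    seen = set()
    for name, text in plan.items():
        addr = ipaddress.ip_address(text)
        if addr not in network:
            problems.append(f"{name} {addr} is outside {network}")
        elif addr in (network.network_address, network.broadcast_address):
            problems.append(f"{name} {addr} is not a usable host address")
        if addr in seen:
            problems.append(f"{name} {addr} duplicates another address")
        seen.add(addr)
    return problems


issues = validate_fi_plan("255.255.255.0", "192.0.2.1",
                          "192.0.2.10", "192.0.2.11", "192.0.2.12")
print(issues or "addressing plan looks consistent")
```

An empty list means all four addresses are distinct, usable hosts in the same subnet.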

Logging Into Cisco UCS Manager

Follow these steps to log in to Cisco UCS Manager:

1. Open a Web browser and navigate to the Cisco UCS 6296 Fabric Interconnect cluster address.

2. Click the Launch link to download the Cisco UCS Manager software.

3. If prompted to accept security certificates, accept as necessary.

4. When prompted, enter admin for the username and enter the administrative password.

Upgrading UCS Manager Software to Version 2.2(1b)

This document assumes the use of UCS 2.2(1b). Make sure that the Cisco UCS Manager software and the Cisco UCS 6296UP Fabric Interconnects have been upgraded to version 2.2(1b).

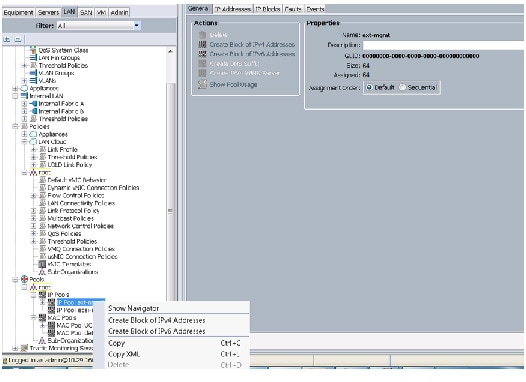

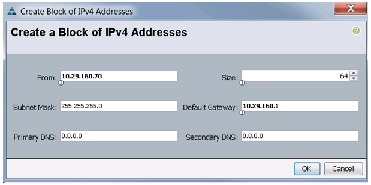

Adding Block of IP Addresses for KVM Access

The procedure discussed here provides you the details for creating a block of KVM IP addresses for server access in the Cisco UCS environment.

Follow these steps to create a block of IP addresses:

1.

Select the LAN tab at the top of the left window.

2.

Select Pools > IpPools > Ip Pool ext-mgmt.

3.

Right-click on IP Pool ext-mgmt.

4.

Select Create Block of IPv4 Addresses.

Figure 4 Adding a Block of IPv4 Addresses for KVM Access - Part 1

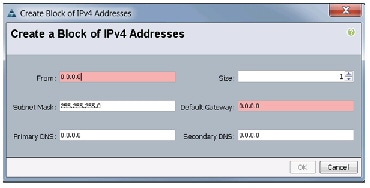

5.

Enter the starting IP address of the block and number of IPs needed, as well as the subnet and gateway information.

Figure 5 Adding Block of IPv4 Addresses for KVM Access - Part 2

6.

Click OK to create the IP block.

7.

Click OK in the message box.

Figure 6 Adding Block of IPv4 Addresses for KVM Access - Part 3

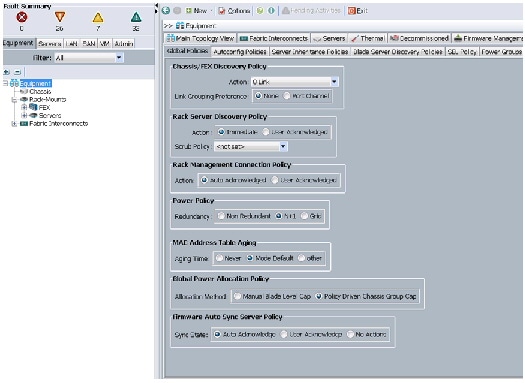
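The block is defined by a starting address and a size, so it is worth confirming where the block ends and that it stays inside the management subnet. The following sketch does that arithmetic with the standard `ipaddress` module. The starting address, the netmask, and the size of 64 (one KVM address per server) are illustrative assumptions, not values from this CVD.

```python
import ipaddress


def kvm_block(start, size, netmask):
    """Return the first and last address of a KVM IP block, or raise if it does not fit."""
    if size < 1:
        raise ValueError("block size must be at least 1")
    first = ipaddress.ip_address(start)
    last = first + (size - 1)
    network = ipaddress.ip_network(f"{start}/{netmask}", strict=False)
    # The whole block must stay inside the subnet and must not reach the broadcast address.
    if last not in network or last == network.broadcast_address:
        raise ValueError(f"block {first}-{last} does not fit in {network}")
    return first, last


first, last = kvm_block("192.0.2.101", 64, "255.255.255.0")
print(f"KVM block: {first} - {last}")
```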

Editing Chassis/FEX Discovery Policy

This procedure describes how to modify the chassis discovery policy. Setting the discovery policy now simplifies the future addition of B-Series UCS chassis and of additional Cisco UCS Fabric Extenders for further C-Series connectivity.

Follow these steps to edit the Chassis Discovery Policy:

1. Navigate to the Equipment tab in the left pane.

2. In the right pane, click the Policies tab.

3. Under Global Policies, change the Chassis/FEX Discovery Policy to 8-link.

4. Click Save Changes in the bottom right hand corner.

Figure 7 Chassis/FEX Discovery Policy

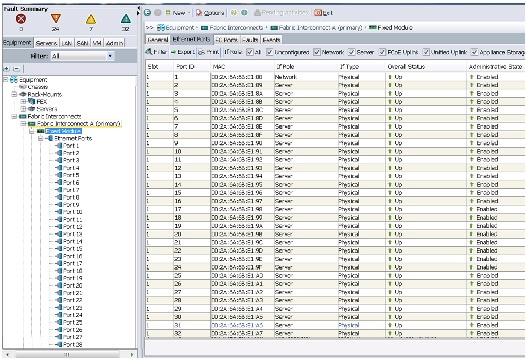

Enabling Server Ports and Uplink Ports

This procedure describes how to enable server ports and uplink ports.

Follow these steps to enable server ports and uplink ports:

1. Select the Equipment tab on the top left of the window.

2. Select Equipment > Fabric Interconnects > Fabric Interconnect A (primary) > Fixed Module.

3. Expand the Unconfigured Ethernet Ports section.

4. Select all the ports that are connected to the Cisco 2232 Fabric Extenders (8 per FEX), right-click on them, and select Reconfigure > Configure as Server Port.

5. Select port 1, which is connected to the uplink switch, right-click, then select Reconfigure > Configure as Uplink Port.

6. Select Show Interface and select 10GB for Uplink Connection.

7. A pop-up window appears to confirm your selection. Click Yes then OK to continue.

8. Select Equipment > Fabric Interconnects > Fabric Interconnect B (subordinate) > Fixed Module.

9. Expand the Unconfigured Ethernet Ports section.

10. Select all the ports that are connected to the Cisco 2232 Fabric Extenders (8 per FEX), right-click on them, and select Reconfigure > Configure as Server Port.

11. A prompt displays asking if this is what you want to do. Click Yes then OK to continue.

12. Select port 1, which is connected to the uplink switch, right-click, then select Reconfigure > Configure as Uplink Port.

13. Select Show Interface and select 10GB for Uplink Connection.

14. A pop-up window appears to confirm your selection. Click Yes then OK to continue.

Figure 8 Enabling Server Ports

Figure 9 Showing Servers and Uplink Ports
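To keep track of which ports to select on each fabric interconnect, the port roles can be written down as a simple plan before working in the GUI. The sketch below assumes one fabric extender per rack is cabled to each fabric interconnect (four racks, 8 links per FEX) and that server ports start at port 2; the actual port numbers depend on how the cluster is cabled, so the numbering here is hypothetical. Only port 1 as the uplink port comes from the steps above.

```python
def port_plan(racks=4, links_per_fex=8, uplink_ports=(1,), first_server_port=2):
    """Map fabric interconnect port numbers to the role they should be configured with."""
    plan = {port: "uplink" for port in uplink_ports}
    port = first_server_port  # hypothetical: server ports follow the uplink port
    for rack in range(1, racks + 1):
        for _ in range(links_per_fex):
            plan[port] = f"server (FEX in rack {rack})"
            port += 1
    return plan


plan = port_plan()
server_ports = [p for p, role in plan.items() if role.startswith("server")]
print(f"uplink ports: {[p for p, role in plan.items() if role == 'uplink']}")
print(f"server ports: {server_ports[0]}-{server_ports[-1]} ({len(server_ports)} total)")
```

The same plan applies to Fabric Interconnect A and Fabric Interconnect B under these assumptions.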

Creating Pools for Service Profile Templates

This section describes how to create organizations, pools, and the VLAN configuration for service profile templates.

Creating an Organization

Organizations are used as a means to arrange and restrict access to various groups within the IT organization, thereby enabling multi-tenancy of the compute resources. This document does not assume the use of Organizations; however, the necessary steps are provided for future reference.

Follow these steps to configure an organization within the Cisco UCS Manager:

1. Click New on the top left corner in the right pane in the UCS Manager GUI.

2. Select Create Organization from the options.

3. Enter a name for the organization.

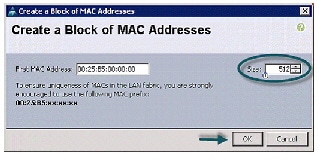

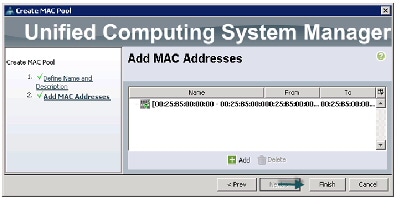

Creating MAC Address Pools

Follow these steps to create MAC address pools:

1. Select the LAN tab on the left of the window.

3. Right-click on MAC Pools under the root organization.

4. Select Create MAC Pool to create the MAC address pool. Enter ucs for the name of the MAC pool.

5. (Optional) Enter a description of the MAC pool.

8. Specify a starting MAC address.

9. Specify a size for the MAC address pool that is sufficient to support the available server resources.

Figure 10 Specifying first MAC Address and Size

Figure 11 Adding MAC Addresses

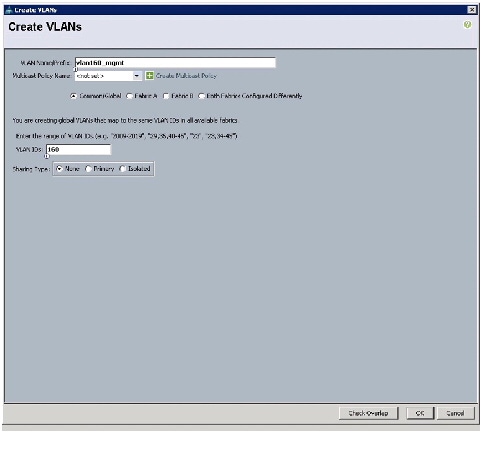
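A MAC pool is defined by its first address and its size, so the last address follows by simple arithmetic. The sketch below computes it; the starting address and the pool size of 256 are illustrative assumptions and should be replaced with values that cover all vNICs on the servers in the cluster.

```python
def mac_pool_range(start, size):
    """Return the first and last MAC address of a pool with the given start and size."""
    if size < 1:
        raise ValueError("pool size must be at least 1")
    first = int(start.replace(":", ""), 16)
    last = first + size - 1
    if last > 0xFFFFFFFFFFFF:
        raise ValueError("pool runs past the end of the 48-bit MAC address space")

    def fmt(value):
        # Render a 48-bit integer as six colon-separated hexadecimal octets.
        raw = f"{value:012X}"
        return ":".join(raw[i:i + 2] for i in range(0, 12, 2))

    return fmt(first), fmt(last)


first, last = mac_pool_range("00:25:B5:00:00:00", 256)  # hypothetical start and size
print(f"MAC pool: {first} - {last}")
```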

Configuring VLANs

VLANs are configured as shown in Table 5 .

All of the VLANs created need to be trunked to the upstream distribution switch connecting the fabric interconnects. For this deployment, vlan160_mgmt is configured for management access and user connectivity, and vlan12_HDFS is configured for Hadoop interconnect traffic.

Follow these steps to configure VLANs in the Cisco UCS Manager:

1. Select the LAN tab in the left pane in the UCS Manager GUI.

3. Right-click on the VLANs under the root organization.

4. Select Create VLANs to create the VLAN.

5. Enter vlan160_mgmt for the VLAN Name.

6. Select Common/Global for vlan160_mgmt.

7. Enter 160 in the VLAN IDs field.

8. Click OK and then click Finish.

9. Click OK in the success message box.

Figure 13 Creating Management VLAN

10. Select the LAN tab in the left pane again.

12. Right-click on the VLANs under the root organization.

13. Select Create VLANs to create the VLAN.

14. Enter vlan12_HDFS for the VLAN Name.

15. Select Common/Global for vlan12_HDFS.

16. Enter 12 in the VLAN IDs field.

17. Click OK and then click Finish.

Figure 14 Creating VLAN for Hadoop Data

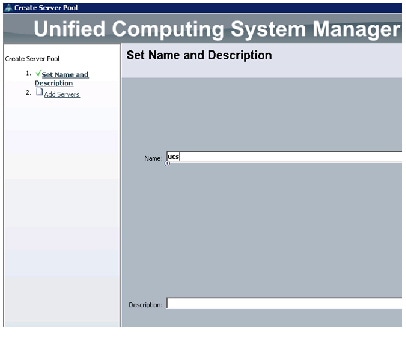

Creating Server Pool

A server pool contains a set of servers. These servers typically share the same characteristics in terms of location in the chassis, server type, amount of memory, local storage, type of CPU, or local drive configuration. You can manually assign a server to a server pool, or use server pool policies and server pool policy qualifications to automate the assignment.

Follow these steps to configure the server pool within the Cisco UCS Manager:

1.

Select the Servers tab in the left pane in the UCS Manager GUI.

3.

Right-click on the Server Pools.

5.

Enter your required name (ucs) for the Server Pool in the name text box.

6.

(Optional) Enter a description for the server pool.

7.

Click Next to add the servers.

Figure 15 Setting Name and Description of the Server Pool

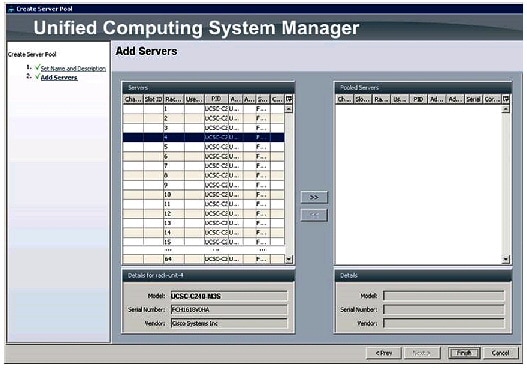

8.

Select all the Cisco UCS C240 M3 servers to be added to the server pool that you have previously created (ucs), then click >> to add them to the pool.

10.

Click OK and then click Finish.

Figure 16 Adding Servers to the Server Pool

Creating Policies for Service Profile Templates

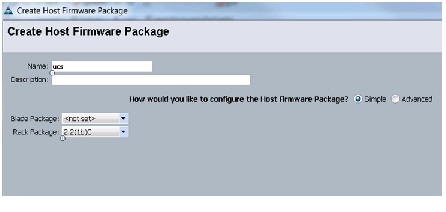

Creating Host Firmware Package Policy

Firmware management policies allow the administrator to select the corresponding packages for a given server configuration. These include adapters, BIOS, board controllers, FC adapters, HBA options, ROM and storage controller properties as applicable.

Follow these steps to create a firmware management policy for a given server configuration using the Cisco UCS Manager:

1.

Select the Servers tab in the left pane in the UCS Manager GUI.

3.

Right-click on the Host Firmware Packages.

4.

Select Create Host Firmware Package.

5.

Enter your required Host Firmware package name (ucs).

6.

Select Simple radio button to configure the Host Firmware package.

7.

Select the appropriate Rack package that you have.

8.

Click OK to complete creating the management firmware package.

Figure 17 Creating Host Firmware Package

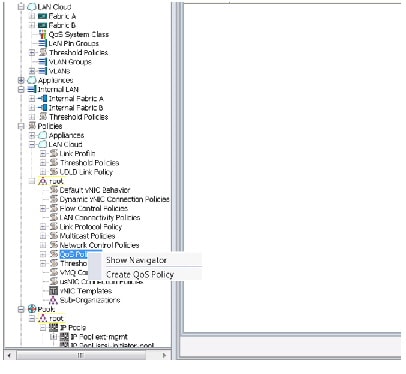

Creating QoS Policies

Follow these steps to create the QoS policy for a given server configuration using the Cisco UCS Manager:

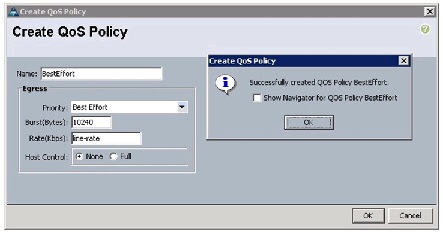

Best Effort Policy

Follow these steps for setting the best effort policy:

1.

Select the LAN tab in the left pane in the UCS Manager GUI.

3.

Right-click on the QoS Policies.

5.

Enter BestEffort as the name of the policy.

6.

Select BestEffort from the drop down menu.

7.

Keep the Burst (Bytes) field as default (10240).

8.

Keep the Rate (Kbps) field as default (line-rate).

9.

Keep Host Control radio button as default (none).

10.

Once the pop-up window appears, click OK to complete the creation of the policy.

Figure 19 Creating BestEffort QoS Policy

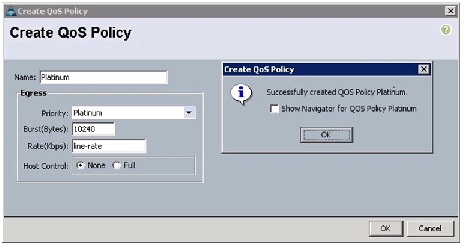

Platinum Policy

Follow these steps for setting platinum policy:

1.

Select the LAN tab in the left pane in the UCS Manager GUI.

3.

Right-click on the QoS Policies.

5.

Enter Platinum as the name of the policy.

6.

Select Platinum from the drop down menu.

7.

Keep the Burst (Bytes) field as default (10240).

8.

Keep the Rate (Kbps) field as default (line-rate).

9.

Keep Host Control radio button as default (none).

10.

Once the pop-up window appears, click OK to complete the creation of the policy.

Figure 20 Creating Platinum QoS Policy

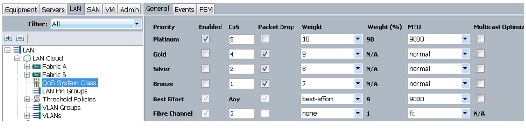

Setting Jumbo Frames

Follow these steps for setting Jumbo frames and enabling QoS:

1.

Select the LAN tab in the left pane in the UCS Manager GUI.

2.

Select LAN Cloud > QoS System Class.

3.

In the right pane, select the General tab.

4.

In the Platinum row, enter 9000 for MTU.

5.

Check the Enabled Check box next to Platinum.

6.

In the Best Effort row, select best-effort for weight and 9000 for MTU.

7.

In the Fibre Channel row, select none for weight.

Figure 21 Setting Jumbo Frames

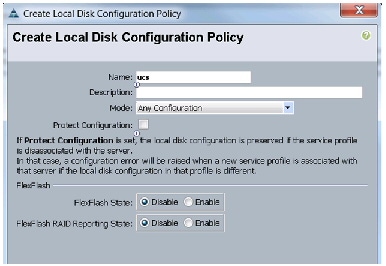
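Once the operating system is installed, the 9000-byte MTU can be checked end to end. The following is a minimal sketch and not part of the validated procedure. It assumes the interface MTU has also been raised to 9000 in the operating system, and the peer address 192.168.10.11 is hypothetical. The function computes the largest ICMP payload that fits in one frame (MTU minus the 20-byte IPv4 header and the 8-byte ICMP header) and prints a Linux ping command that prohibits fragmentation.

```shell
# Largest ICMP echo payload that fits in one IPv4 packet of the given MTU:
# MTU - 20 bytes (IPv4 header without options) - 8 bytes (ICMP header).
icmp_payload_for_mtu() {
    echo $(( $1 - 20 - 8 ))
}

# Print (do not run) a ping command that fails if the path cannot carry the MTU.
# -M do sets the Don't Fragment bit (Linux iputils ping); -c 3 sends three probes.
# $1 = peer address, $2 = MTU (defaults to 9000)
jumbo_ping_command() {
    echo "ping -M do -c 3 -s $(icmp_payload_for_mtu "${2:-9000}") $1"
}

# Hypothetical peer on the Hadoop data VLAN.
jumbo_ping_command 192.168.10.11 9000
```

For an MTU of 9000 the payload size is 8972 bytes. If the printed command reports that the message is too long, some hop on the path is not passing jumbo frames.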

Creating Local Disk Configuration Policy

Follow these steps to create local disk configuration in the Cisco UCS Manager:

1.

Select the Servers tab on the left pane in the UCS Manager GUI.

3.

Right-click on the Local Disk Configuration Policies.

4.

Select Create Local Disk Configuration Policy.

5.

Enter ucs as the local disk configuration policy name.

6.

Change the Mode to Any Configuration. Uncheck the Protect Configuration check box.

7.

Keep the FlexFlash State field as default (Disable).

8.

Keep the FlexFlash RAID Reporting State field as default (Disable).

9.

Click OK to complete the creation of the Local Disk Configuration Policy.

Figure 22 Configuring Local Disk Policy

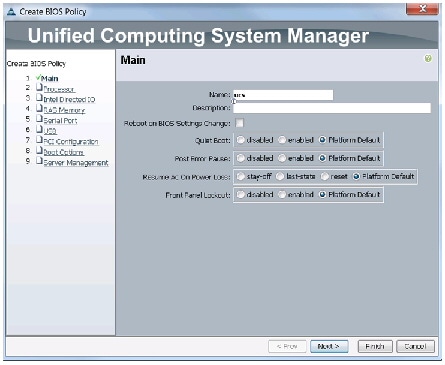

Creating Server BIOS Policy

The BIOS policy feature in Cisco UCS automates the BIOS configuration process. The traditional method of setting the BIOS is done manually and is often error-prone. By creating a BIOS policy and assigning the policy to a server or group of servers, you can enable transparency within the BIOS settings configuration.

BIOS settings can have a significant performance impact, depending on the workload and the applications. The BIOS settings listed in this section are optimized for best performance and can be adjusted based on the application, performance, and energy efficiency requirements.

Follow these steps to create a server BIOS policy using the Cisco UCS Manager:

1.

Select the Servers tab in the left pane in the UCS Manager GUI.

3.

Right-click on the BIOS Policies.

5.

Enter your preferred BIOS policy name (ucs).

6.

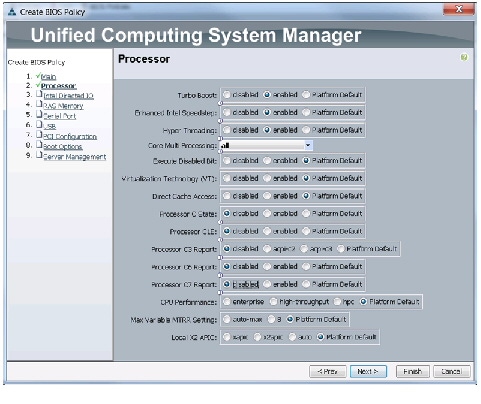

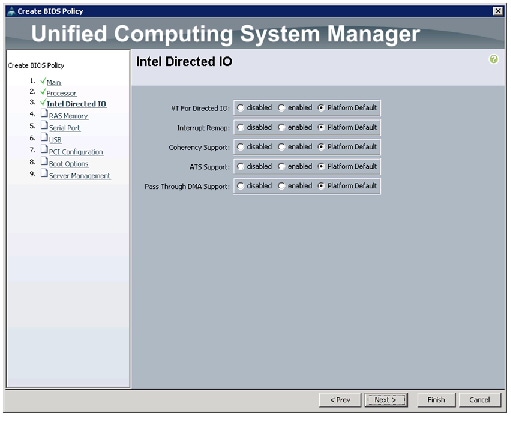

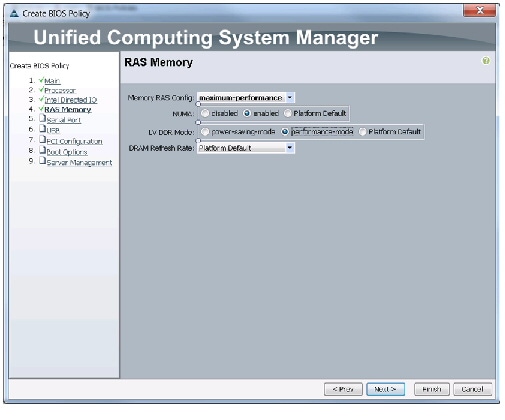

Change the BIOS settings as shown in Figure 23, Figure 24, and Figure 25.

Figure 23 Creating Server BIOS Policy

Figure 24 Creating Server BIOS Policy for Processor

Figure 25 Creating Server BIOS Policy for Intel Directed IO

Click Finish to complete creating the BIOS policy.

Figure 26 Creating Server BIOS Policy for Memory

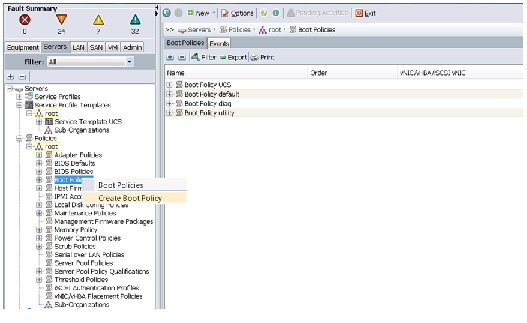

Creating Boot Policy

Follow these steps to create boot policies within the Cisco UCS Manager:

1.

Select the Servers tab in the left pane in the UCS Manager GUI.

3.

Right-click on the Boot Policies.

Figure 27 Creating Boot Policy - Part 1

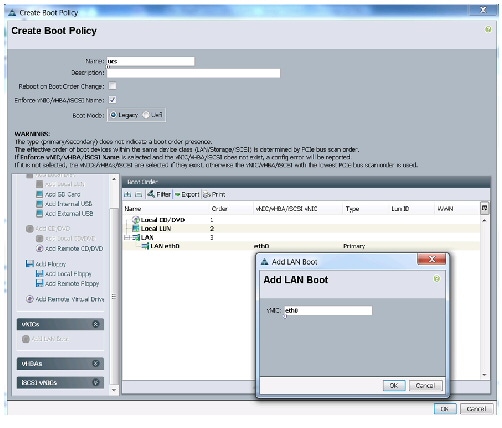

5.

Enter ucs as the boot policy name.

6.

(Optional) enter a description for the boot policy.

7.

Keep the Reboot on Boot Order Change check box unchecked.

8.

Keep Enforce vNIC/vHBA/iSCSI Name check box checked.

9.

Keep Boot Mode Default (Legacy).

10.

Expand Local Devices > Add CD/DVD and select Add Local CD/DVD.

11.

Expand Local Devices > Add Local Disk and select Add Local LUN.

12.

Expand vNICs and select Add LAN Boot and enter eth0.

13.

Click OK to add the Boot Policy.

Figure 28 Creating Boot Policy - Part 2

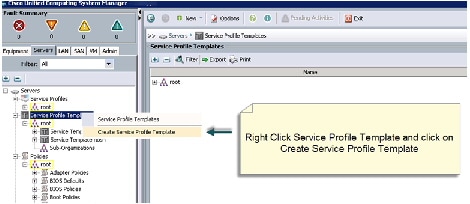

Creating Service Profile Template

To create a service profile template, follow these steps:

1.

Select the Servers tab in the left pane in the UCSM GUI.

2.

Right-click on the Service Profile Templates.

3.

Select Create Service Profile Template.

Figure 29 Creating Service Profile Template

4.

The Create Service Profile Template window appears.

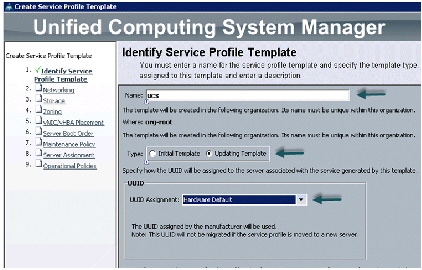

The following steps provide a detailed configuration procedure to identify the service profile template:

a.

Name the service profile template as ucs. Select the Updating Template radio button.

b.

In the UUID section, select Hardware Default as the UUID pool.

c.

Click Next to continue to the next section.

Figure 30 Creating Service Profile Template - Identify

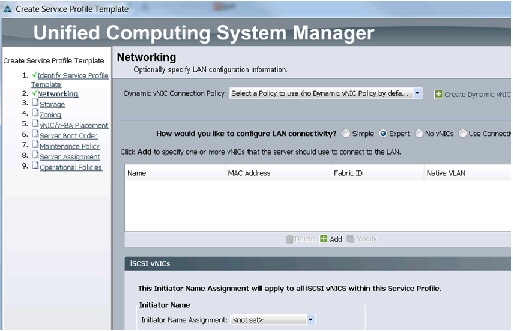

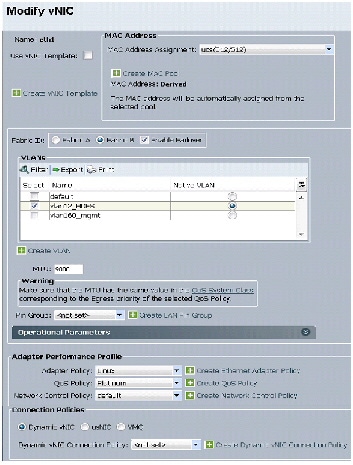

Configuring Network Settings for the Template

For network setting, follow these steps:

1.

Keep the Dynamic vNIC Connection Policy field at the default.

2.

Select the Expert radio button for the option How would you like to configure LAN connectivity?

3.

Click Add to add a vNIC to the template.

Figure 31 Creating Service Profile Template - Networking

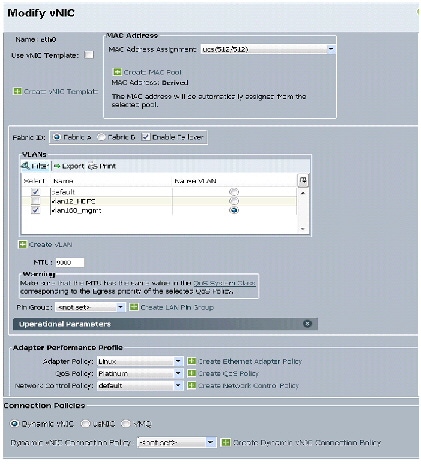

4.

The Create vNIC window displays. Name the vNIC as eth0.

5.

Select ucs in the MAC Address Assignment pool.

6.

Select the Fabric A radio button and check the Enable Failover check box for the Fabric ID.

7.

Check the vlan160_mgmt check box for VLANs and select the Native VLAN radio button.

9.

Select adapter policy as Linux .

10.

Select QoS Policy as BestEffort .

11.

Keep the Network Control Policy as Default .

12.

Keep the Connection Policies as Dynamic vNIC .

13.

Keep the Dynamic vNIC Connection Policy as <not set> .

Figure 32 Configuring vNIC eth0

15.

The Create vNIC window appears. Name the vNIC as eth1.

16.

Select ucs in the MAC Address Assignment pool.

17.

Select Fabric B radio button and check the Enable Failover check box for the Fabric ID.

18.

Check the vlan12_HDFS check box for VLANs and select the Native VLAN radio button.

20.

Select adapter policy as Linux.

21.

Select QoS Policy as Platinum.

22.

Keep the Network Control Policy as Default.

23.

Keep the Connection Policies as Dynamic vNIC.

24.

Keep the Dynamic vNIC Connection Policy as <not set>.

Figure 33 Configuring vNIC eth1

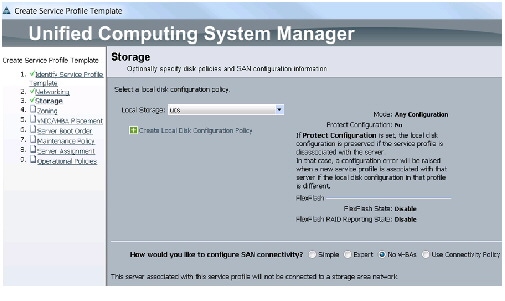

Configuring Storage Policy for the Template

For configuring storage policy, follow these steps:

1.

Select ucs for the local disk configuration policy.

2.

Select the No vHBAs radio button for the option for How would you like to configure SAN connectivity?

3.

Click Next to continue to the next section.

Figure 34 Creating Service Profile Template - Storage

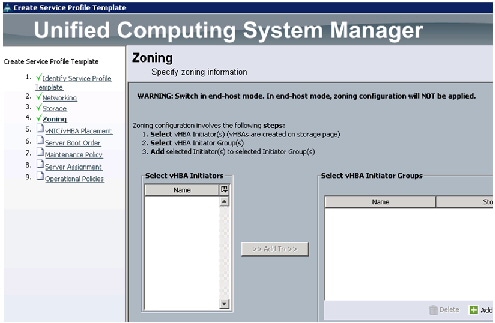

4.

Click Next to continue. Click Next, in the zoning window to go to the next section.

Figure 35 Creating Service Profile Template - Zoning

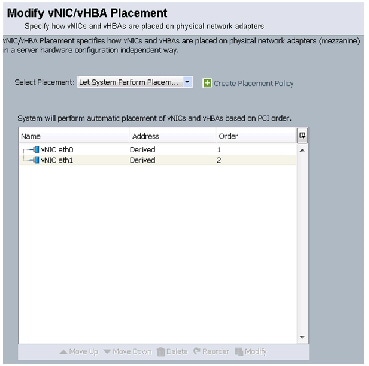

Configuring vNIC/vHBA Placement for the Template

For configuring vNIC/vHBA placement policy, follow these steps:

1.

Select the Default Placement Policy option for the Select Placement field.

2.

Select eth0 and eth1, and assign the vNICs in the following order:

3.

Review to make sure that all of the vNICs were assigned in the appropriate order.

4.

Click Next to continue to the next section.

Figure 36 Creating Service Profile Template - vNIC/vHBA Placement

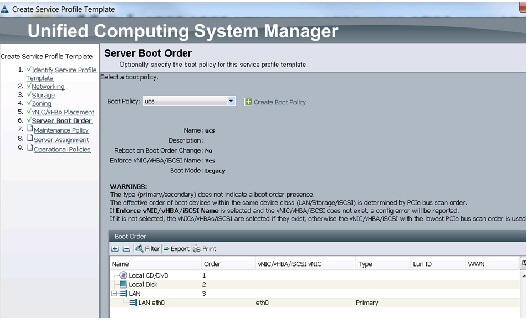

Configuring Server Boot Order for the Template

For setting the server boot order, follow these steps:

1.

Select ucs in the Boot Policy name field.

2.

Check the Enforce vNIC/vHBA/iSCSI Name check box.

3.

Review to make sure that all of the boot devices were created and identified.

4.

Verify that the boot devices are in the correct boot sequence.

6.

Click Next to continue to the next section.

Figure 37 Creating Service Profile Template - Server Boot Order

For applying the maintenance policy, follow these steps:

1.

Keep the Maintenance Policy at the default (no policy used).

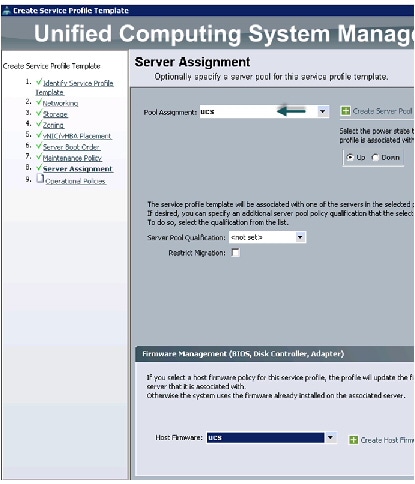

Configuring Server Assignment for the Template

For assigning servers to the pool, follow these steps:

1.

Select ucs for the Pool Assignment field.

2.

Keep the Server Pool Qualification field at default.

3.

Select ucs in Host Firmware Package.

Figure 38 Creating Service Profile Template - Server Assignment

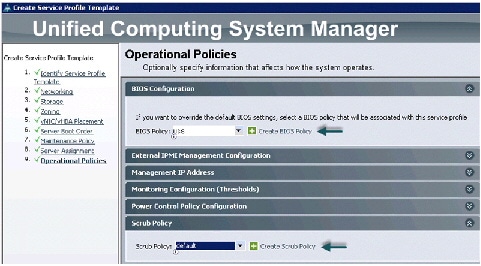

Configuring Operational Policies for the Template

For configuring operational policies, follow these steps:

1.

Select ucs in the BIOS Policy field.

2.

Keep the Scrub Policy as default.

3.

Click Finish to create the Service Profile template.

4.

Click OK in the pop-up window to proceed.

Figure 39 Creating Service Profile Template - BIOS Configuration for Operational Policies

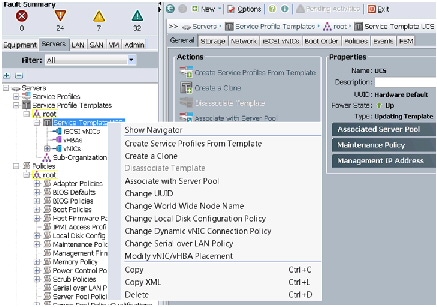

Creating Service Profiles from Templates

1.

Select the Servers tab in the left pane of the UCS Manager GUI.

2.

Go to Service Profile Templates > root .

3.

Right-click on the Service Profile Templates .

4.

Select Create Service Profiles From Template .

Figure 40 Creating Service Profiles from Template

5.

The Create Service Profile from Template window appears.

Figure 41 Creating Service Profiles

6.

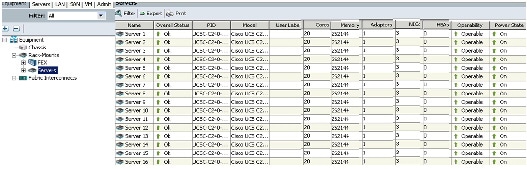

UCS Manager then discovers the servers. The service profiles are associated with the servers automatically.

Figure 42 UCS Manager Showing all the Discovered Servers

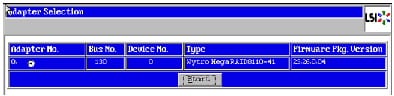

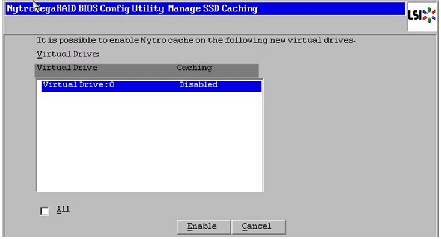

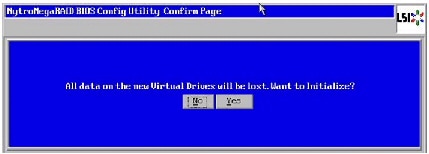

Configuring Nytro Flash on all node for installing OS

This section details the RAID configuration of Nytro Flash on all the nodes for installing the operating system. The Nytro Flash on the RAID controller is configured as a 40 GB boot volume in a RAID1 configuration for redundancy.

Note

Installing OS on the Cisco Nytro MegaRaid card has the advantage of having all the 12 4TB Disk drives fully available for data.

There are several ways to configure RAID: using LSI WebBIOS Configuration Utility embedded in the MegaRAID BIOS, booting DOS and running MegaCLI commands, using Linux based MegaCLI commands, or using third party tools that have MegaCLI integrated. For this deployment, the Nytro Flash and the disk drives are configured using LSI WebBIOS Configuration Utility.

Follow these steps to create RAID1 on the Nytro Flash to install the operating system:

1.

When the server is booting, the following text appears on the screen:

a.

Press <Ctrl><H> to launch the WebBIOS.

2.

The Adapter Selection window appears. Click Start to continue.

Figure 43 Adapter Selection for RAID Configuration

3.

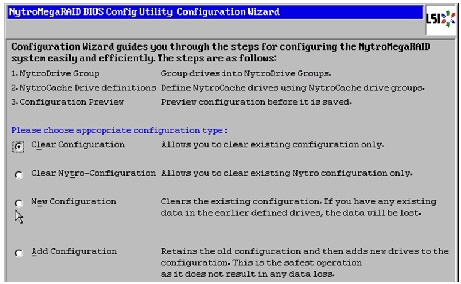

Click Configuration Wizard.

4.

In the configuration wizard window, choose Clear Configuration and click Next to clear the existing configuration.

Figure 44 Clearing Current Configuration on the Controller

5.

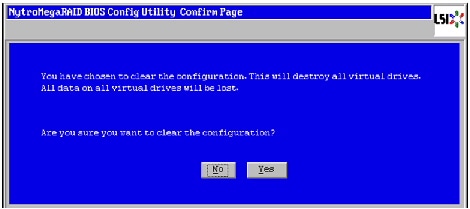

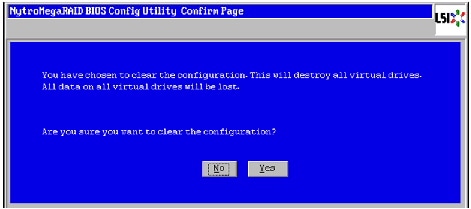

Choose Yes when asked to confirm the wiping of the current configuration.

Figure 45 Confirming Clearance of the Previous Configuration on the Controller

6.

In the Physical View, ensure that all the drives are Unconfigured Good .

7.

Click Configuration Wizard .

8.

In the Configuration Wizard window choose the configuration type as New Configuration and click Next .

Figure 46 Creating a New Configuration

9.

Choose Yes when asked to confirm clearing the current configuration.

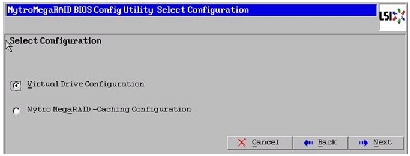

10.

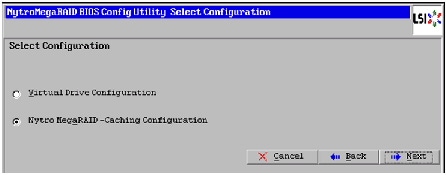

Select the Nytro MegaRAID Caching Configuration radio button.

Figure 47 Selecting Nytro MegaRAID-Caching Configuration

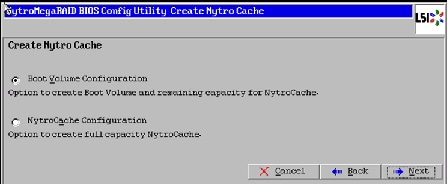

11.

Select Boot Volume Configuration to create a boot volume for installing the operating system. The remaining capacity on the Nytro Flash is used for NytroCache.

Figure 48 Selecting Boot Volume Configuration

12.

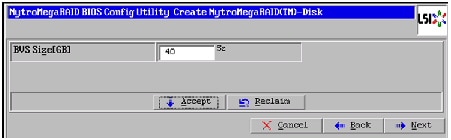

Type 40 for BVS Size [GB]. Click Accept and Next .

Figure 49 Creating Boot Volume of 40GB

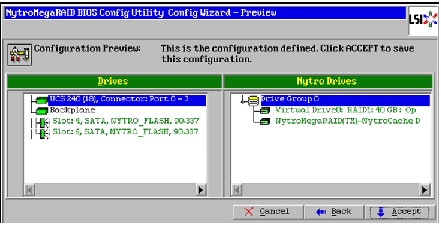

13.

Verify the configuration and click Accept to save the Configuration.

Figure 50 Accept the Configuration

14.

Click Yes to save the configuration.

15.

Click Yes when asked to confirm the initialization.

Figure 51 Confirm Saving Configuration

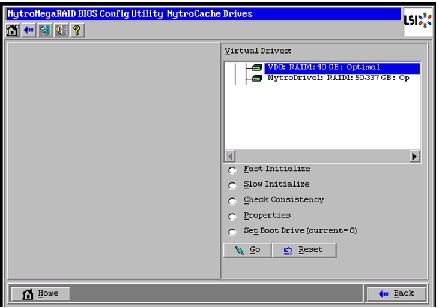

16.

Set VD0 as the Boot Drive and click Go .

Figure 52 Setting Virtual Drive as Boot Drive

Configuring Disk Drives on all nodes

This section details the configuration of disk drives on all nodes. The disk drives are configured as a single RAID5 volume with a 1 MB strip size, with read-ahead cache enabled and write-back cache enabled while the battery backup unit (BBU) is available.
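As a quick worked example of what a single RAID5 group yields, the sketch below computes usable capacity as the capacity of all drives minus one drive's worth of parity. The function name is illustrative only, and the result is raw capacity before file system overhead.

```shell
# Usable capacity of one RAID5 group: the equivalent of one drive holds parity.
# $1 = number of drives, $2 = size of each drive in TB
raid5_usable_tb() {
    echo $(( ($1 - 1) * $2 ))
}

# Twelve 4 TB drives, as used for the data volume in this deployment.
raid5_usable_tb 12 4
```

For twelve 4 TB drives this prints 44, that is, 44 TB of the 48 TB raw capacity.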

There are several ways in which you can configure RAID:

- Using LSI WebBIOS Configuration Utility embedded in the MegaRAID BIOS

- Booting DOS and running MegaCLI commands

- Using Linux based MegaCLI commands

- Using third party tools that have MegaCLI integrated

For this deployment, the disk drives are configured using LSI WebBIOS Configuration Utility.

Follow these steps to create RAID5 on all 12 disk drives:

1.

When the server is booting, the following text appears on the screen:

a.

Press <Ctrl><H> to launch the WebBIOS.

2.

The Adapter Selection window appears. Click Start to continue.

3.

Click Configuration Wizard .

Figure 53 Adapter Selection for RAID Configuration

4.

In the Configuration Wizard window, choose Add Configuration as the configuration type and click Next.

Figure 54 Setting Virtual Drive as Boot Drive

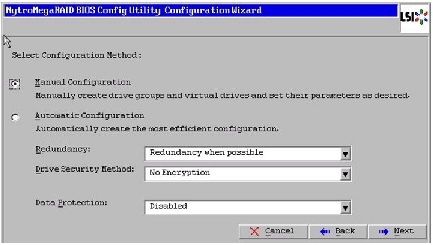

5.

Select the configuration method as Manual Configuration ; this enables you to have complete control over all attributes of the new storage configuration, such as, the drive groups, virtual drives and the ability to set their parameters.

Figure 55 Choosing Manual Configuration Method

7.

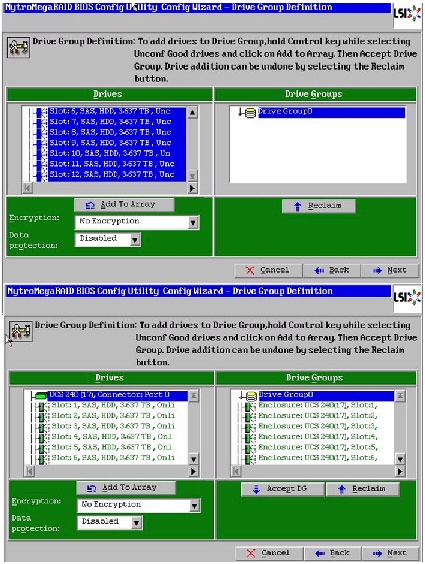

The Drive Group Definition window appears. Use this window to choose all the 12 drives to create drive groups.

8.

Click Add to Array to move the drives to a proposed drive group configuration in the Drive Groups pane. Click Accept DG and then, click Next .

Figure 56 Selecting all Drives and Adding to Drive Group

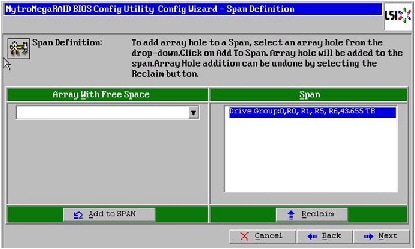

9.

In the Span Definition window, click Add to SPAN and then click Next.

Figure 57 Span Definition Window - Adding to Span

10.

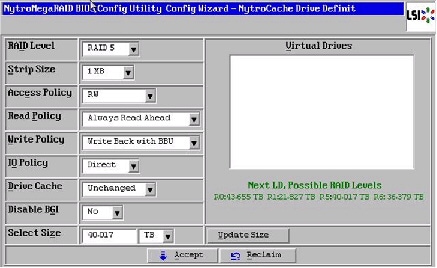

In the Virtual Drive definitions window, follow these steps to change the virtual drive definition:

b.

Change Strip Size to 1MB. A larger strip size produces higher read performance.

c.

From the Read Policy drop-down list, choose Always Read Ahead .

d.

From the Write Policy drop-down list, choose Write Back with BBU .

e.

Make sure the RAID Level is set to RAID5 .

f.

Click Accept to accept all the changes to the virtual drive definitions.

Note Clicking the Update Size might change some of the settings in the window. Make sure all the settings are correct before accepting.

Figure 58 Virtual Drive Definition Window

11.

After the virtual drive definitions are done, click Next . The Configuration Preview window appears showing VD1.

12.

Check the virtual drive configuration in the Configuration Preview window and click Accept to accept the configuration.

13.

Click Yes to save the configuration.

14.

In the Managing SSD Caching window, click Cancel.

15.

Click Yes when asked to confirm the initialization.

Figure 60 Initializing Virtual Drive Window

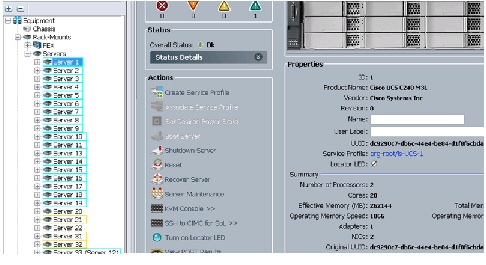

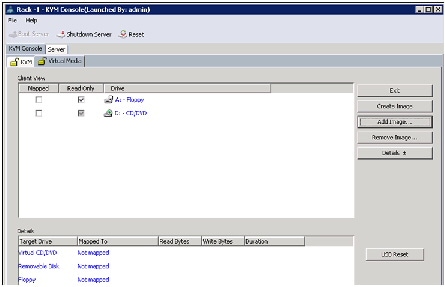

Installing Ubuntu Server 12.04.4 LTS with KVM

The following section provides detailed procedures for installing Ubuntu Server 12.04.4 LTS.

There are multiple methods to install Ubuntu Server OS. The installation procedure described in this deployment guide uses KVM console and virtual media from Cisco UCS Manager.

1.

Log in to the Cisco UCS 6296UP Fabric Interconnect and launch the Cisco UCS Manager application.

3.

In the navigation pane expand Rack-Mounts and Servers .

4.

Right-click on the server requiring OS Installation and select KVM Console .

Figure 61 Opening KVM Console of the Server

5.

In the KVM window, select the Virtual Media tab.

6.

Click

in the Virtual Media selection window.

7.

Browse to the Ubuntu Server 12.04.4 LTS installer ISO image file.

Note The Ubuntu Server 12.04.4 LTS ISO can be downloaded from: http://www.ubuntu.com/download/server/thank-you?country=US&version=12.04.4&architecture=amd64.

8.

Select Ubuntu Server 12.04.4 LTS installer ISO image file and click Open to add the image to the list of virtual media.

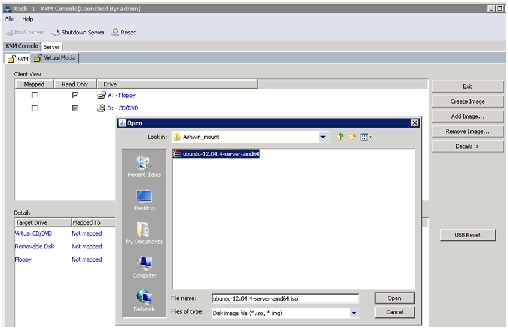

Figure 63 Browse to Ubuntu Server 12.04.4 LTS ISO Image

9.

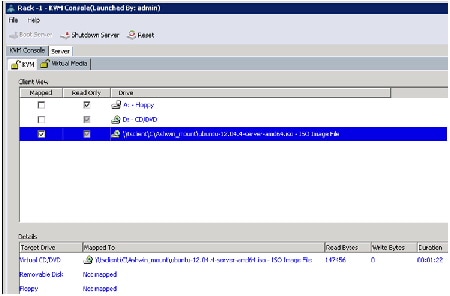

Check the Mapped check box, next to the entry corresponding to the image you just added.

10.

In the KVM window, select the KVM tab to monitor during boot.

11.

In the KVM window, select

.

13.

Click OK to reboot the system.

14.

On reboot, the machine detects the presence of the Ubuntu Server 12.04.4 LTS ISO install media. Choose English from the list.

15.

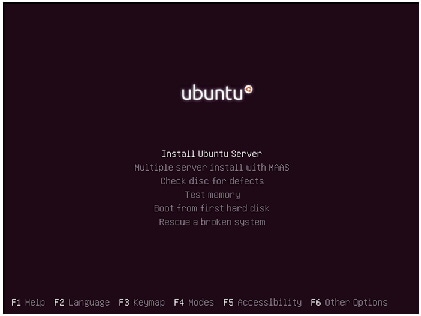

Select the Install Ubuntu Server option.

Figure 66 Selecting Install Ubuntu Server Option

16.

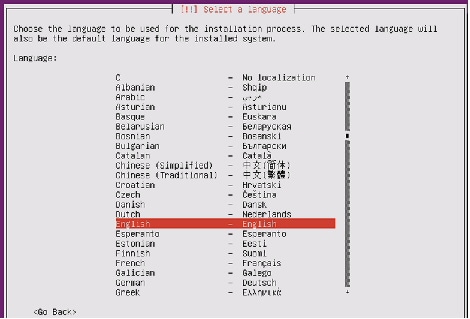

Choose the language for Installation process and the default language for the installed server.

Figure 67 Selecting Language for Installation and Default Language for the Server

17.

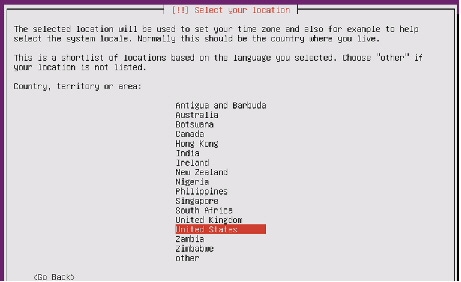

Select your location from the list.

18.

Click No when asked to detect keyboard layout.

19.

Choose your keyboard layout from the list.

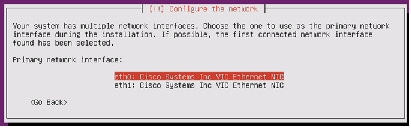

20.

Select Network Interfaces eth0 for assigning IP address.

Figure 69 Configuring Network for eth0

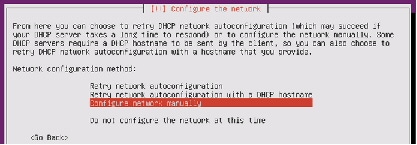

21.

Select Configure network manually.

Figure 70 Selecting Network Configuration Method

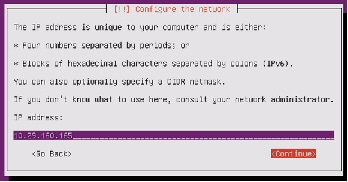

22.

Assign the IP address for eth0. This setup uses the following IP address:

23.

Table 6 shows the eth0 and eth1 IP addresses used for all the servers in the cluster.

24.

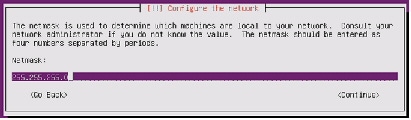

Enter netmask as 255.255.255.0 and click Continue .

25.

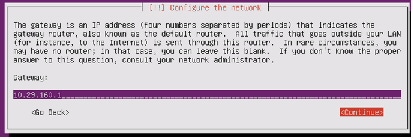

Enter your gateway address and click Continue .

26.

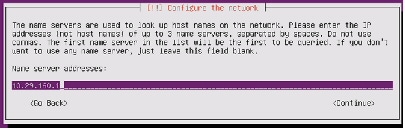

(Optional) Enter your name server addresses and click Continue.

Figure 74 Entering Name Server Address

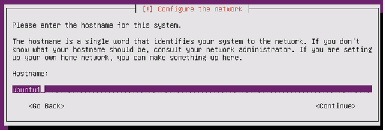

27.

Enter hostname of the server and click Continue .

Figure 75 Entering Hostname for the Server

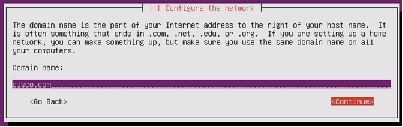

28.

Enter domain name of the server and click Continue .

Figure 76 Entering Domain Name for the Server

29.

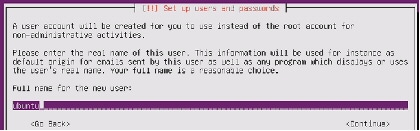

Add a user and assign a password for the user.

Figure 77 Setting up User for the Server

Figure 78 Setting up Username and Password

30.

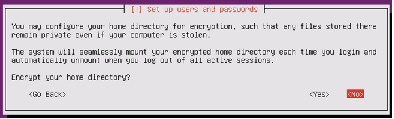

Choose No to disable encryption on home directory.

Figure 79 Configuring Encryption for Home Directory

32.

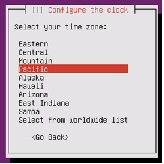

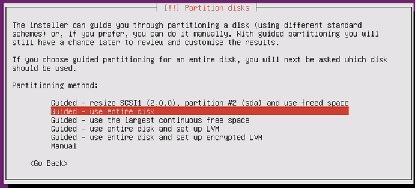

Choose Guided-use entire disk. All the data on the disk will be erased.

Figure 81 Choosing Partitioning Method

33.

Select 42.9 GB LSI NMR8110-4i from the list.

Figure 82 Partitioning Nytro Flash VD for Installing Operating System

34.

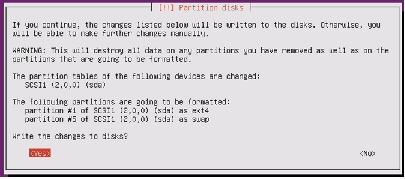

Choose Yes to confirm partitioning.

Figure 83 Confirm Write Changes

35.

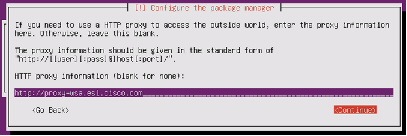

Enter http proxy information if needed.

Figure 84 Configuring http Proxy

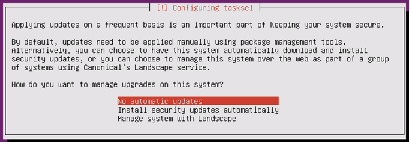

Figure 85 Selecting Updating Options

37.

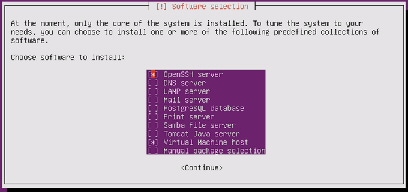

Choose OpenSSH server and Virtual Machine host from the list of Software for Installation.

Figure 86 Selecting Software for Installation

38.

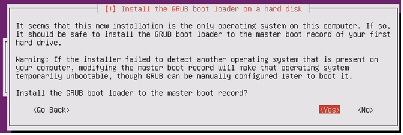

Install the GRUB boot loader to the Master Boot Record.

Figure 87 Installing GRUB Boot Loader

39.

Once the installation is complete, choose Continue and uncheck the Ubuntu Server 12.04.4 LTS installer ISO image file from KVM Virtual Media tab.

Figure 88 Finishing the Installation

Repeat the above steps (Steps 1 to 39) on all the servers to install Ubuntu Server 12.04.4 LTS. The OS installation and node configuration described above can also be automated through PXE boot or third-party tools.

Note

The hostnames and their corresponding IP addresses are shown in Table 6.

Post OS Install Configuration

Choose one of the nodes of the cluster, or a separate node, as the admin node for managing all the other nodes. This CVD uses node ubuntu20 as the admin node.

Note

- All nodes in the system are assumed to have access to the Internet.

- Most commands in this CVD are run with sudo. To avoid typing sudo each time, switch to a root shell with “sudo su” and run the commands without the sudo prefix, since that shell has full root privileges.

Most of the following configurations are done from the admin node.

Update Proxy for apt

On the admin node add the proxy to apt

Run the following command after updating the file as above

Note

This apt.conf should be updated on all nodes. This is done after clush is installed as per the instructions given in the “Setup Parallel Shell - clush” section.
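As a minimal sketch of the proxy entry, the function below prints apt proxy settings for a given proxy URL. Acquire::http::Proxy and Acquire::https::Proxy are standard apt.conf options. The proxy address proxy.example.com:8080 is hypothetical, and the function name is illustrative only.

```shell
# Print apt proxy settings for the proxy URL given as $1.
apt_proxy_conf() {
    printf 'Acquire::http::Proxy "%s";\n' "$1"
    printf 'Acquire::https::Proxy "%s";\n' "$1"
}

# Hypothetical proxy. As root, the output would be written to /etc/apt/apt.conf
# and followed by "apt-get update" so that apt picks up the new settings.
apt_proxy_conf "http://proxy.example.com:8080/"
```

If the nodes reach the Internet directly, no proxy entry is needed.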

Password-less login

On the admin node run command ssh-keygen:

Run the following command from the admin node to copy the public key id_rsa.pub to all the nodes of the cluster. ssh-copy-id appends the keys to the remote host's .ssh/authorized_keys file.
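The key distribution can be sketched as a loop over the cluster hosts. The function below only prints one ssh-copy-id command per host so the list can be reviewed before it is executed. The host names ubuntu1 to ubuntu3 are hypothetical placeholders for the names in Table 6, and the key path assumes ssh-keygen was run with its default file name.

```shell
# Print one ssh-copy-id command per host passed as an argument (dry run).
# The tilde is printed literally and is expanded when the command is executed.
print_key_copy_commands() {
    for host in "$@"; do
        echo "ssh-copy-id -i ~/.ssh/id_rsa.pub $host"
    done
}

# Hypothetical host names.
print_key_copy_commands ubuntu1 ubuntu2 ubuntu3
```

Each command prompts once for the remote user's password. After that, SSH logins from the admin node to that host use the key.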

Setup Parallel Shell - clush

Install clustershell as follows

Update clustershell config to identify all nodes in the cluster

Note

The “all” group in the above file lists all the nodes that are included when the “-a” option of clush is used.
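A ClusterShell groups file contains one "group: nodeset" entry per line, and a folded nodeset such as prefix[first-last] expands to the individual host names. The sketch below prints such an entry. The range 1 to 20 and the ubuntu prefix are assumptions based on the admin node name ubuntu20, so adjust them to the names in Table 6.

```shell
# Print a ClusterShell groups entry in the form "<group>: <prefix>[<first>-<last>]".
# $1 = group name, $2 = host name prefix, $3 = first index, $4 = last index
clush_group_line() {
    echo "${1}: ${2}[${3}-${4}]"
}

# Assumed host range. The output belongs in the ClusterShell groups file,
# commonly /etc/clustershell/groups.
clush_group_line all ubuntu 1 20
```

With such an entry in place, a command like clush -a hostname runs on every node in the group.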

For all cluster nodes add the following lines in the end of /etc/sudoers to prevent Ubuntu asking for

Nameserver

On the admin node, move the /etc/resolv.conf link to a static file as a backup:

Ping www.ubuntu.com to confirm

Configuring /etc/hosts

Follow these steps to create the host file across all the nodes in the cluster:

1.

Populate the host file with IP addresses and corresponding hostnames on the Admin node (ubuntu20).

2.

Deploy /etc/hosts from the admin node (ubuntu20) to all the nodes via the following pscp command:
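The host file entries follow a regular pattern, so they can be generated rather than typed. The sketch below assumes, purely for illustration, that the last octet of each eth0 address matches the number in the host name. The authoritative mapping is the one in Table 6.

```shell
# Print /etc/hosts lines that map <subnet>.<i> to <prefix><i> for i = first..last.
# $1 = first three octets, $2 = host name prefix, $3 = first index, $4 = last index
hosts_lines() {
    i=$3
    while [ "$i" -le "$4" ]; do
        echo "$1.$i $2$i"
        i=$(( i + 1 ))
    done
}

# Hypothetical mapping on the 10.29.160.x management network.
hosts_lines 10.29.160 ubuntu 18 20
```

The generated lines can be appended to /etc/hosts on the admin node after the localhost entries and then distributed to the other nodes.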

NTP Configuration

The Network Time Protocol (NTP) is used to keep all the cluster nodes time-synchronized. The Network Time Protocol daemon (ntpd) sets and maintains the system time in sync with the timeserver located in the admin node (ubuntu20). Configuring NTP is critical for any Hadoop cluster because if the server clocks in the cluster go out of sync, serious problems will occur with HBase and other services.

Note

Installing an internal NTP server keeps your cluster synchronized even when an outside NTP server is inaccessible.

Create /home/ubuntu/ntp.conf on the admin node and copy it to all nodes except admin node (Don’t overwrite ntp.conf on admin node).

Install ntp on all the nodes by running the following commands:

Copy ntp.conf file from the admin node to /etc of all the nodes by executing the following command in the admin node (ubuntu20).

Restart NTP on all the nodes including the admin node:

Configuring the Filesystem

To format the data partition that OpenStack uses for instances and data, first confirm that the OS is on /dev/sda and the data partition is on /dev/sdb on all nodes by running the following commands:

On the admin node, create a file named driveconf.sh containing the following script:

1.

To create partition tables and file systems on the local disks supplied to each of the nodes, run the following script as the root user on each node.

echo /sbin/mkfs.xfs -f -q -l size=65536b,lazy-count=1,su=256k -d sunit=1024,swidth=6144 -r extsize=256k -L ${Y} ${X}1

/sbin/mkfs.xfs -f -q -l size=65536b,lazy-count=1,su=256k -d sunit=1024,swidth=6144 -r extsize=256k -L ${Y} ${X}1

Note This script formats /dev/sdb which is considered as the data partition (single volume of all 12 4TB drives which is RAID5). If OS is on /dev/sdb, that will be wiped out.

2.

Run the following command to copy driveconf.sh to all the datanodes.

3.

Run the following command from the admin node to run the script across all data nodes.
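The -d sunit and swidth values passed to mkfs.xfs are expressed in 512-byte blocks, which makes them easy to misread. The sketch below converts the values used in the script to KiB. The function name is illustrative only.

```shell
# Convert a count of 512-byte blocks (the unit of mkfs.xfs sunit/swidth) to KiB.
xfs_units_to_kib() {
    echo $(( $1 * 512 / 1024 ))
}

sunit=1024    # stripe unit from the mkfs.xfs command line
swidth=6144   # stripe width from the mkfs.xfs command line

echo "stripe unit: $(xfs_units_to_kib $sunit) KiB"
echo "stripe width: $(xfs_units_to_kib $swidth) KiB ($(( swidth / sunit )) stripe units)"
```

The values correspond to a 512 KiB stripe unit and a stripe width of six stripe units. When tuning, these can be compared against the strip size and the number of data drives configured on the RAID controller.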

OpenStack Pre-requisites

The following section provides information on setting up OpenStack.

Controller Node

In this CVD, the node ubuntu20 is used as the controller node. The controller node in OpenStack runs all OpenStack API services and OpenStack schedulers.

Networking

For an OpenStack production deployment, most of the nodes must have these network interface cards:

- One network interface card for external network traffic

- Another card to communicate with other OpenStack nodes.

In this CVD, eth0 (10.29.160.x) is the public interface with access to the Internet, and eth1 (192.168.10.x) is the private/internal interface for communication between VMs. An additional eth1 IP address can be added as follows:

Update /etc/network/interfaces on each host.

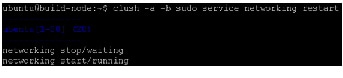

Run command “service networking restart” on all nodes as follows
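A static interface definition in the Ubuntu network configuration file consists of an auto line followed by an iface stanza. The sketch below prints such a stanza. The address 192.168.10.20 is a hypothetical value on the 192.168.10.x internal network, and the function name is illustrative only.

```shell
# Print a static interface stanza in /etc/network/interfaces syntax.
# $1 = interface name, $2 = IPv4 address, $3 = netmask
static_iface_stanza() {
    printf 'auto %s\niface %s inet static\n    address %s\n    netmask %s\n' \
        "$1" "$1" "$2" "$3"
}

# Hypothetical internal address for one node.
static_iface_stanza eth1 192.168.10.20 255.255.255.0
```

Each host needs its own address in the stanza, so the file cannot simply be copied unchanged from one node to the next.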

Passwords

The various OpenStack services and the required software like the database and the Messaging server have to be password protected. These passwords are needed when configuring a service and then again to access the service. For more information, see:

http://docs.openstack.org/havana/install-guide/install/apt/content/basics-passwords.html

For simplicity, we use “ubuntu” as the password for all services in this CVD.

This guide uses the convention that SERVICE_PASS is the password to access the service, with SERVICE as the database name, and SERVICE_DBPASS is the database password.

Table provides the list of passwords used in this CVD.

Installing MySQL

OpenStack services require a database to store information. This CVD uses MySQL as the database, installed on the controller node. All the other nodes need the MySQL client software installed to access MySQL.

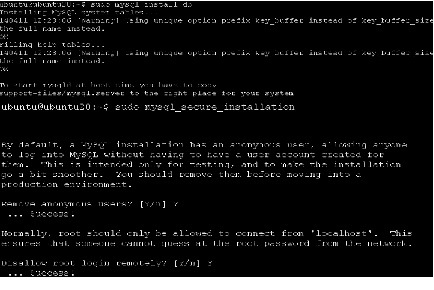

Install MySQL Server on the Controller Node

During the install, you will be prompted for the mysql root password. Enter a password of your choice and verify it.

Edit /etc/mysql/my.cnf and set the bind-address to the internal IP address of the controller, to enable access from outside the controller node.

Restart the MySQL service to apply the changes:
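The bind-address change can be sketched as a one-line sed edit. The example below works on a scratch copy rather than the live /etc/mysql/my.cnf, and the controller address 192.168.10.20 is hypothetical. It assumes GNU sed, as shipped with Ubuntu.

```shell
# Rewrite the bind-address line of a my.cnf-style file in place.
# $1 = configuration file, $2 = IP address MySQL should listen on
set_bind_address() {
    sed -i "s/^bind-address.*/bind-address = $2/" "$1"
}

# Demonstrate on a scratch file instead of the live configuration.
demo=$(mktemp)
printf '[mysqld]\nbind-address = 127.0.0.1\n' > "$demo"
set_bind_address "$demo" 192.168.10.20   # hypothetical controller address
cat "$demo"
rm -f "$demo"
```

On the controller, the same function would be pointed at /etc/mysql/my.cnf as root, followed by service mysql restart.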

Securing MySQL Server

Delete all anonymous users that are created when the database is first started. Otherwise, database connection problems occur while following this CVD. To do this, use the mysql_secure_installation command.

This command presents a number of options to secure the database installation. Respond yes to all prompts unless there is a good reason to do otherwise.

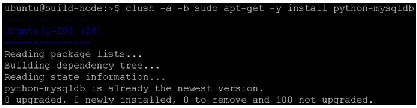

Install MySql Client on all the Other Nodes

On every node that does not host a MySQL database, install the MySQL client and the MySQL Python library:

OpenStack Repository - Ubuntu Cloud Archive for Havana

1.

Install the Ubuntu Cloud Archive for Havana:

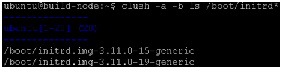

2.

Update the package database, upgrade your system, and reboot for all changes to take effect:
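The two steps above can be sketched as a short command sequence. The function below only prints the commands so they can be reviewed before being run as root. It follows the upstream Havana installation guide for Ubuntu 12.04, and the function name is illustrative only.

```shell
# Print the commands that enable the Ubuntu Cloud Archive for the OpenStack
# release named in $1 and then upgrade the system (dry run).
cloud_archive_commands() {
    echo "apt-get install -y python-software-properties"
    echo "add-apt-repository -y cloud-archive:$1"
    echo "apt-get update"
    echo "apt-get -y dist-upgrade"
    echo "reboot"
}

cloud_archive_commands havana
```

The sequence needs to be applied on every node that runs OpenStack packages, so that all nodes install the same release.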

Messaging server (RabbitMQ)

Install the messaging queue server RabbitMQ on the controller node.

Note

The rabbitmq-server package configures the RabbitMQ service to start automatically and creates a guest user with a default guest password. This guide uses the guest account, and it is strongly advised to change its default password, especially if you have IPv6 available: by default the RabbitMQ server enables anyone to connect to it by using guest as login and password, and with IPv6, it is reachable from the outside.
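Changing the guest password is a single rabbitmqctl call. The sketch below prints the command for a given password. RABBIT_PASS is a placeholder, not a value from this CVD, and the function name is illustrative only.

```shell
# Print the command that changes the password of the RabbitMQ guest user (dry run).
# $1 = new password
rabbit_guest_password_command() {
    echo "rabbitmqctl change_password guest $1"
}

# Placeholder password; substitute the value chosen for the deployment.
rabbit_guest_password_command RABBIT_PASS
```

Any OpenStack service that connects to RabbitMQ with the guest account must then be configured with the same password.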

OpenStack Services

OpenStack provides an Infrastructure as a Service (IaaS) solution through a set of interrelated services. Each service offers an Application Programming Interface (API) that facilitates this integration. Depending on the needs, some or all services can be installed.

Note

For more information, see: http://docs.openstack.org/havana/install-guide/install/apt/content/ch_overview.html

The following table describes the OpenStack services that make up the OpenStack architecture:

Canonical Ubuntu OpenStack Havana Software Components

This CVD focuses on Canonical OpenStack software components based on the upstream “Havana” OpenStack release. Ubuntu is a popular Linux distribution for deploying OpenStack among service providers and enterprise customers. The following subsections cover the key software components involved in OpenStack. For more information, see:

https://wiki.openstack.org/wiki/UnderstandingFlatNetworking#Multiple_nodes.2C_multiple_adapters

Identity Service (“Keystone”)

This is a central authentication and authorization mechanism for all OpenStack users and services. It supports multiple forms of authentication including standard username and password credentials and it can also integrate with existing directory services such as LDAP.

Endpoints, Tenants, Tokens and Roles

The Identity service catalog lists all of the services deployed in an OpenStack cloud and manages authentication for them through endpoints. An endpoint is a network address where a service listens for requests. The Identity service provides each OpenStack service (such as Image, Compute, or Block Storage) with one or more endpoints.

The Identity service uses tenants to group or isolate resources. By default users in one tenant can’t access resources in another even if they reside within the same OpenStack cloud deployment or physical host. The Identity service issues tokens to authenticated users. The endpoints validate the token before allowing user access. User accounts are associated with roles that define their access credentials. Multiple users can share the same role within a tenant.

Image Service (“Glance”)

This service discovers, registers, and delivers virtual machine images. Images can be copied via snapshot and immediately stored as the basis for new instance deployments. Stored images allow OpenStack users and administrators to provision multiple servers quickly and consistently.

By default the Image Service stores images in the /var/lib/glance/images directory of the local server’s filesystem where Glance is installed.

Compute Service (“Nova”)

OpenStack Compute provisions and manages large networks of virtual machines. It is the backbone of OpenStack’s IaaS functionality. OpenStack Compute scales horizontally on standard hardware enabling the favorable economics of cloud computing. Users and administrators interact with the compute fabric via a web interface and command line tools.

OpenStack Compute provides a distributed, asynchronous architecture, allowing scale-out fault tolerance for virtual machine instance management.

OpenStack Compute is composed of many services that work together to provide the full functionality. The openstack-nova-cert and openstack-nova-consoleauth services handle authorization. The openstack-nova-api service responds to service requests, and the openstack-nova-scheduler service dispatches the requests to the message queue. The openstack-nova-conductor service updates the state database, which limits direct access to the state database by compute nodes for increased security. The openstack-nova-compute service creates and terminates virtual machine instances on the compute nodes. Finally, openstack-nova-novncproxy provides a VNC proxy for console access to virtual machines via a standard web browser.

Ephemeral Storage

When only the OpenStack Compute Service (nova) is deployed, users do not have access to any form of persistent storage by default. The disks associated with VMs are "ephemeral", meaning that (from the user's point of view) they effectively disappear when a virtual machine is terminated (that is, when the VM is deleted).

Networking (nova-network)

Networking for the VMs in this CVD employs nova-network (Legacy OpenStack Network), which is a FlatNetwork. FlatNetworking primarily involves compute nodes. It uses Ethernet adapters configured as bridges to allow network traffic to transit between all the various nodes. This setup can be done with a single adapter on the physical host or with multiple adapters.

For more information, see: https://wiki.openstack.org/wiki/UnderstandingFlatNetworking#Multiple_nodes.2C_multiple_adapters

Dashboard (“Horizon”)

The OpenStack Dashboard is an extensible web-based application that allows cloud administrators and users to control and provision compute, storage, and networking resources. Administrators can use the Dashboard to view the state of the cloud, create users, assign them to tenants, and set resource limits.

The OpenStack Dashboard is served by the Apache HTTP server (the apache2 service on Ubuntu).

Note

Both the Dashboard and command line tools can be used to manage an OpenStack environment. This CVD focuses on the command line tools because they offer more granular control and insight into the OpenStack functionality.

Installation of OpenStack

OpenStack defines three roles for the underlying nodes.

- Controller node – The main management node for OpenStack; it controls the compute and storage nodes and runs all OpenStack API services and OpenStack schedulers.

- Compute node – These nodes host the spawned VMs.

- Storage node – These nodes host the storage for the VMs.

Note

In the architecture discussed in this CVD, the storage is ephemeral, which is local to the VMs. Hence the compute nodes also do the job of storage nodes, and there are no separate storage nodes.

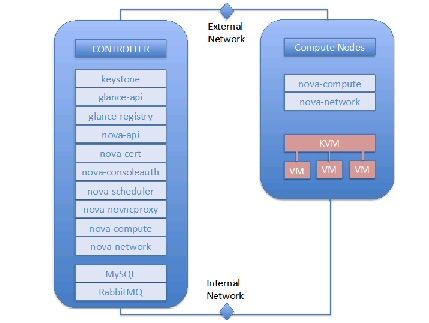

The following OpenStack services run on each node type.

- Controller node - Identity Service (Keystone), Image Service (Glance), Dashboard (Horizon), and the management portion of Compute, with the associated API services, MySQL databases, and messaging system. In this CVD, Nova compute and Nova network also run on the controller node.

- Compute node - Tenant virtual machines on a hypervisor. By default, Nova compute uses KVM as the hypervisor. Compute also provisions and operates tenant networks and implements security groups.

Figure 89 Controller and Compute Nodes

Installing Havana OpenStack Services

This section details the installation of Havana OpenStack components on the cluster.

Identity Service (Keystone)

The Identity Service is used for the following functions:

- User management - Manages user credentials.

- Service catalog - Provides the available services along with their API endpoints.

The Identity Service is built on the following concepts:

- User - Digital representation of a person, system, or service who uses OpenStack cloud services. Users have a login and may be assigned tokens to access resources. Users can be directly assigned to a particular tenant and behave as if they are contained in that tenant.

- Token - An arbitrary bit of text that is used to access resources. Each token has a scope which describes which resources are accessible with it. A token may be revoked at any time and is valid for a finite duration.

- Tenant - A container used to group or isolate resources and/or identity objects. Depending on the service operator, a tenant may map to a customer, account, organization, or project.

- Service - An OpenStack service such as Compute (Nova), or Image Service (Glance). Provides one or more endpoints through which users can access resources and perform operations.

- Endpoint - A network-accessible address, usually described by a URL, from where you access a service.

- Role - A role includes a set of rights and privileges. A user assuming that role inherits those rights and privileges. In the Identity Service, a token that is issued to a user includes the list of roles that user has. Services that are being called by that user determine how they interpret the set of roles a user has and to which operations or resources each role grants access.

Note

For more information on the Identity Service, see Install the Identity Service (keystone).

1.

Install the OpenStack Identity Service on the controller node. The following command will also install python-keystoneclient (which is a dependency):

2.

The Identity Service uses MySQL to store information. Specify the location of the database in the configuration file. In this CVD we use the MySQL database on the controller node (ubuntu20 in this CVD) with the username keystone. Replace KEYSTONE_DBPASS with a suitable password for the database user. As mentioned earlier, for simplicity we use “ubuntu” as the password for all services in this CVD. Replace controller with the hostname of the controller.
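To make the substitution concrete, the following sketch assembles the connection line from the values named in this step. It assumes the Havana-era keystone.conf layout, where the connection setting lives in the [sql] section, and it writes to a scratch file rather than to /etc/keystone/keystone.conf so the result can be reviewed first.

```shell
# Sketch: build the database connection line for keystone.conf.
# Values follow this CVD: controller ubuntu20, database user keystone,
# password "ubuntu". Adjust them for your deployment.
CONTROLLER=ubuntu20
KEYSTONE_DBPASS=ubuntu

# Scratch file for review; the real file is /etc/keystone/keystone.conf.
SNIPPET=$(mktemp)
cat > "$SNIPPET" <<EOF
[sql]
connection = mysql://keystone:${KEYSTONE_DBPASS}@${CONTROLLER}/keystone
EOF

cat "$SNIPPET"
```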

3.

Delete the default SQLite database file keystone.db created in the /var/lib/keystone/ directory.

4.

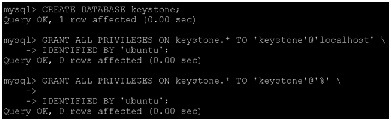

Log in to MySQL as root. Create a keystone database user:

mysql> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'KEYSTONE_DBPASS';

5.

Create the database tables for the Identity Service:

6.

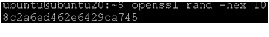

Define an authorization token to use as a shared secret between the Identity Service and other OpenStack services. Use openssl to generate a random token and store it in the configuration file:

Edit /etc/keystone/keystone.conf and change the [DEFAULT] section, replacing ADMIN_TOKEN with the results of the command.

Note

Make sure there are no leading spaces before admin_token.
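The token step can be sketched as follows. The example generates a token with openssl and sets it in a stand-in copy of the configuration file; the stand-in contents (a commented-out admin_token line under [DEFAULT]) are an assumption about the shipped file, and the real target is /etc/keystone/keystone.conf.

```shell
# Sketch: generate a random admin token and set it in a copy of keystone.conf.
ADMIN_TOKEN=$(openssl rand -hex 10)

# Stand-in for /etc/keystone/keystone.conf (assumed to ship with a
# commented-out admin_token line in the [DEFAULT] section).
CONF=$(mktemp)
printf '[DEFAULT]\n# admin_token = ADMIN\n' > "$CONF"

# Rewrite the line so it starts in column one, with no leading '#' or spaces.
sed "s/^[# ]*admin_token *=.*/admin_token = ${ADMIN_TOKEN}/" "$CONF" > "$CONF.new" &&
    mv "$CONF.new" "$CONF"

cat "$CONF"
```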

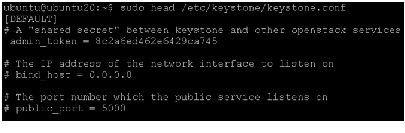

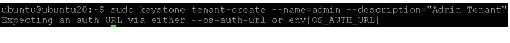

Define Users, Tenants, and Roles

After you install the Identity Service, set up users, tenants, and roles to authenticate against. These are used to allow access to services and endpoints.

At this point no users exist, so use the authorization token created in an earlier step. Set OS_SERVICE_TOKEN to that token, and set OS_SERVICE_ENDPOINT to specify where the Identity Service is running. Replace ADMIN_TOKEN with your authorization token.
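A minimal sketch of these two settings follows. ADMIN_TOKEN and the hostname controller are placeholders (the controller is ubuntu20 in this CVD), and port 35357 is the Identity Service admin API port.

```shell
# Sketch: bootstrap credentials for the keystone command line client.
# ADMIN_TOKEN is a placeholder for the token stored in keystone.conf;
# "controller" is a placeholder for the controller hostname.
export OS_SERVICE_TOKEN=ADMIN_TOKEN
export OS_SERVICE_ENDPOINT=http://controller:35357/v2.0
```

Because these are exported in the current user's shell, commands run through sudo do not see them by default.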

First, create a tenant for an administrative user and a tenant for other OpenStack services to use.

Note

Run the above commands without sudo. Otherwise they fail with the error shown, because the exported variables are set for the ubuntu user and not for root.

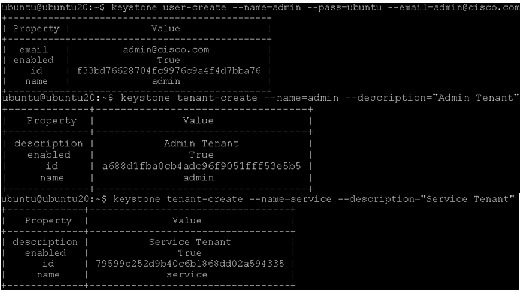

Next, create an administrative user called admin. Choose a password for the admin user and specify an email address for the account.

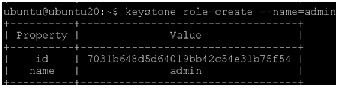

Create a role for administrative tasks called admin. Any roles you create should map to roles specified in the policy.json files of the various OpenStack services. The default policy files use the admin role to allow access to most services.

Finally, add roles to users. Users always log in with a tenant, and roles are assigned to users within tenants. Add the admin role to the admin user when logging in with the admin tenant.
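The tenant, user, and role steps described in this section can be sketched with the keystone client as follows. ADMIN_PASS and ADMIN_EMAIL are placeholders, the tenant descriptions are illustrative, and bootstrap_identity is a hypothetical helper name. The function assumes OS_SERVICE_TOKEN and OS_SERVICE_ENDPOINT are already exported and runs only when called.

```shell
# Sketch: create the tenants, the admin user, the admin role, and the role assignment.
# ADMIN_PASS and ADMIN_EMAIL are placeholders; set your own values before running.
ADMIN_PASS=${ADMIN_PASS:-ADMIN_PASS}
ADMIN_EMAIL=${ADMIN_EMAIL:-admin@example.com}

bootstrap_identity() {
    # One tenant for the administrative user, one for the other OpenStack services
    keystone tenant-create --name=admin --description="Admin Tenant" || return 1
    keystone tenant-create --name=service --description="Service Tenant" || return 1
    # Administrative user and role
    keystone user-create --name=admin --pass="$ADMIN_PASS" --email="$ADMIN_EMAIL" || return 1
    keystone role-create --name=admin || return 1
    # Grant the admin role to the admin user within the admin tenant
    keystone user-role-add --user=admin --tenant=admin --role=admin
}

# Run as the regular user (not through sudo) on the controller:
# bootstrap_identity
```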

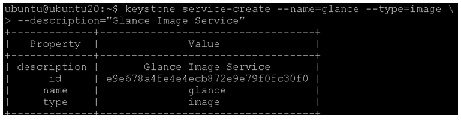

Define services and API endpoints

For the Identity Service to track which OpenStack services are installed and where they are located on the network, each service must be registered in the OpenStack installation. To register a service, run these commands:

- keystone service-create - Describes the service.

- keystone endpoint-create - Associates API endpoints with the service.

The Identity Service itself must also be registered. Use the OS_SERVICE_TOKEN environment variable, as set previously, for authentication.

1.

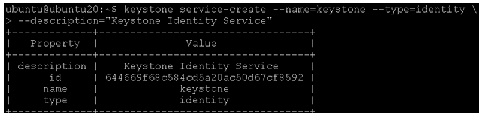

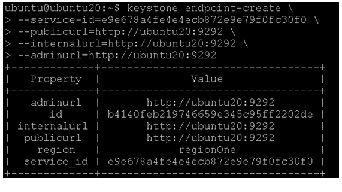

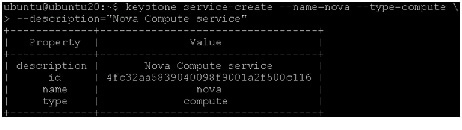

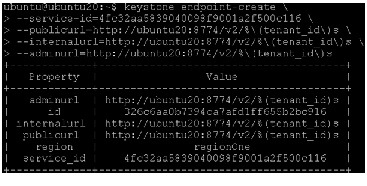

Create a service entry for the Identity Service:

The service ID is randomly generated and is different from the one shown below.

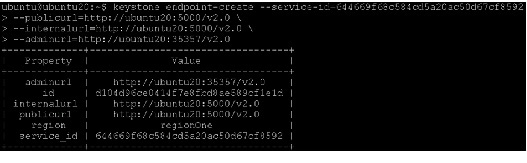

2.

Specify an API endpoint for the Identity Service by using the returned service ID. When you specify an endpoint, you provide URLs for the public API, internal API, and admin API. In this guide, the controller host name is used. Note that the Identity Service uses a different port for the admin API.

3.

As services are added to the OpenStack installation, call these commands to register the services with the Identity Service.
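The registration steps above can be sketched as follows. The hostname controller is a placeholder, the description string is illustrative, and both function names are hypothetical. The ports follow the text: 5000 for the public and internal APIs and 35357 for the admin API.

```shell
# Sketch: register the Identity Service and its API endpoint.
# "controller" is a placeholder for the controller hostname.
CONTROLLER=${CONTROLLER:-controller}

create_identity_service() {
    keystone service-create --name=keystone --type=identity \
        --description="Keystone Identity Service"
}

create_identity_endpoint() {
    # $1: the service ID printed by create_identity_service
    keystone endpoint-create \
        --service-id="$1" \
        --publicurl="http://${CONTROLLER}:5000/v2.0" \
        --internalurl="http://${CONTROLLER}:5000/v2.0" \
        --adminurl="http://${CONTROLLER}:35357/v2.0"
}

# Usage on the controller:
# create_identity_service
# create_identity_endpoint <service ID from the output above>
```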

Verify the Identity Service installation

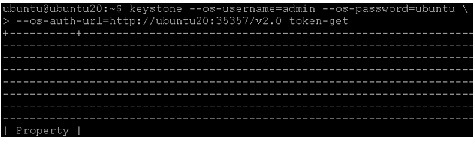

To verify the Identity Service is installed and configured correctly, first unset the OS_SERVICE_TOKEN and OS_SERVICE_ENDPOINT environment variables. These were only used to bootstrap the administrative user and register the Identity Service.

Now use the regular username-based authentication. Request an authentication token using the admin user and the password you chose during the earlier administrative user-creation step.

You should receive a token in response, paired with your user ID. This verifies that keystone is running on the expected endpoint, and that the user account is established with the expected credentials.

Next, verify that authorization is behaving as expected by requesting authorization on a tenant.

You should receive a new token in response, this time including the ID of the tenant you specified. This verifies that your user account has an explicitly defined role on the specified tenant, and that the tenant exists as expected.
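The two verification requests can be sketched as follows, assuming the admin user and admin tenant created earlier. ADMIN_PASS and the hostname controller are placeholders, and verify_identity is a hypothetical helper name; the function runs only when called.

```shell
# Sketch: verify authentication and tenant-scoped authorization.
ADMIN_PASS=${ADMIN_PASS:-ADMIN_PASS}
AUTH_URL=${AUTH_URL:-http://controller:35357/v2.0}

verify_identity() {
    # Drop the bootstrap variables so username and password authentication is used
    unset OS_SERVICE_TOKEN OS_SERVICE_ENDPOINT
    # Request a token without a tenant
    keystone --os-username=admin --os-password="$ADMIN_PASS" \
        --os-auth-url="$AUTH_URL" token-get || return 1
    # Request a token scoped to the admin tenant
    keystone --os-username=admin --os-password="$ADMIN_PASS" \
        --os-tenant-name=admin --os-auth-url="$AUTH_URL" token-get
}

# Run on the controller:
# verify_identity
```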

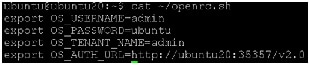

You can also set your --os-* variables in your environment to simplify command-line usage. Set up an openrc.sh file with the admin credentials and admin endpoint.

Source this file to read in the environment variables.

Verify that the openrc.sh file is configured correctly by running the same command as above, but without the --os-* arguments.

The command returns a token and the ID of the specified tenant. This verifies that you have configured your environment variables correctly.
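A minimal sketch of the openrc.sh approach follows. ADMIN_PASS and the hostname controller are placeholders, and the file is created in a scratch directory here rather than in the user's home directory.

```shell
# Sketch: write openrc.sh with the admin credentials and load it.
# ADMIN_PASS and "controller" are placeholders for your own values.
WORKDIR=$(mktemp -d)
cat > "$WORKDIR/openrc.sh" <<'EOF'
export OS_USERNAME=admin
export OS_PASSWORD=ADMIN_PASS
export OS_TENANT_NAME=admin
export OS_AUTH_URL=http://controller:35357/v2.0
EOF

# Source the file (do not execute it) so the variables land in the current shell.
. "$WORKDIR/openrc.sh"

# With the variables set, the client needs no --os-* arguments:
# keystone token-get
# keystone user-list
```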

Finally, verify that your admin account has authorization to perform administrative commands.

+----------------------------------+---------+--------------------+--------+
|                id                | enabled |       email        |  name  |
+----------------------------------+---------+--------------------+--------+

This verifies that the user account has the admin role, which matches the role used in the Identity Service policy.json file.

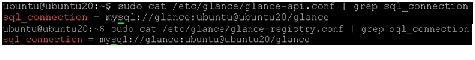

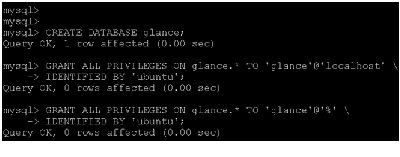

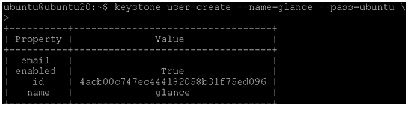

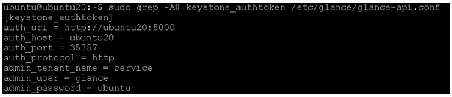

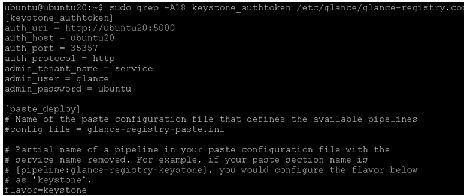

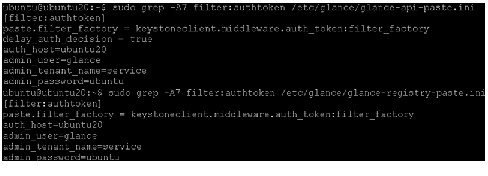

Image Service (Glance)