Data Center Service Integration: Service Chassis Design Guide

Table Of Contents

Data Center Service Integration: Service Chassis Design Guide

Document Format and Naming Conventions

Service Integration Approaches

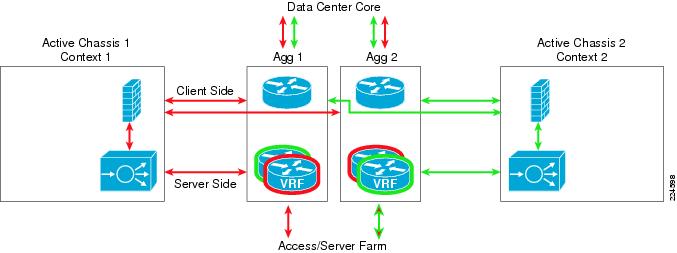

Integrated Services Physical Model

Services Chassis Physical Model

Service Chassis Logical Topologies

Active-Standby Service Chassis

Active/Standby Service Chassis Design

Active/Active Service Chassis Design

Data Center Service Integration: Service Chassis Design Guide

September 5, 2008

Introduction

This document provides reference architectures and configuration guidance for integrating intelligent networking services, such as server load balancing and firewalling, into an enterprise data center. Dedicated Catalyst 6500 Services Chassis housing Firewall Services Modules (FWSM) and Application Control Engine (ACE) service modules are used in the example architecture.

Audience

This document is intended for network engineers and architects who need to understand the design options and configurations necessary for advanced networking services placed in a dedicated region of the data center network.

Document Objectives

The objective of this document is to provide customers guidance on how to deploy network services in a Cisco data center leveraging a dedicated network services layer. This document is not intended to introduce the reader to basic Cisco data center design best practices, but to build upon these well-documented concepts. The prerequisite Cisco data center design knowledge can be found at the following locations:

• Cisco.com, Data Center:

• Cisco Validated Design (CVD) Program: http://www.cisco.com/en/US/netsol/ns741/networking_solutions_program_home.html

Document Format and Naming Conventions

User-defined properties such as access control list names and policy definitions are shown in ALL CAPS to assist the reader in understanding what is user-definable versus command specific. All commands are shown in Courier font. All commands that are applicable to the section covered will be in BOLD.

Overview

The data center is a critical portion of the Enterprise network. The data center network design must remain highly available through any device or link failure. It is also an area where more intelligence is required from the network to perform services such as firewalling and load balancing of servers and the applications they host. This document examines two architecture models for integrating these services into a dedicated pair of Catalyst 6500 Services Chassis within the data center topology.

Service Integration Approaches

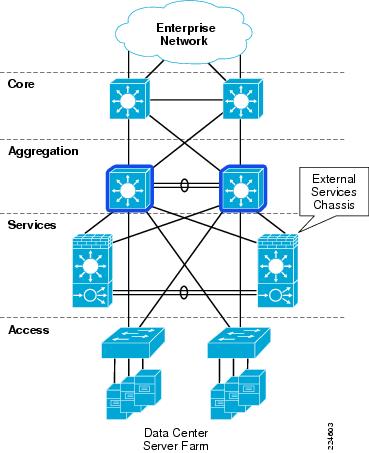

Integrated Services Physical Model

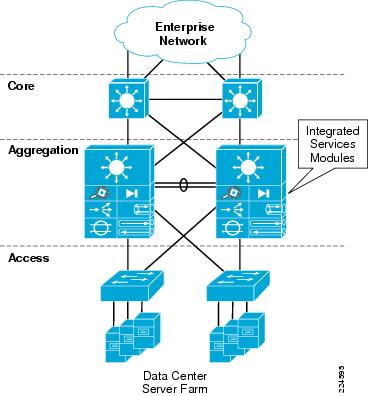

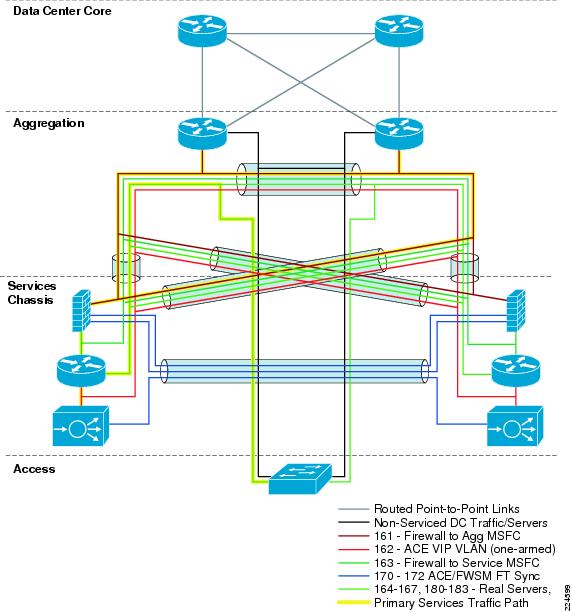

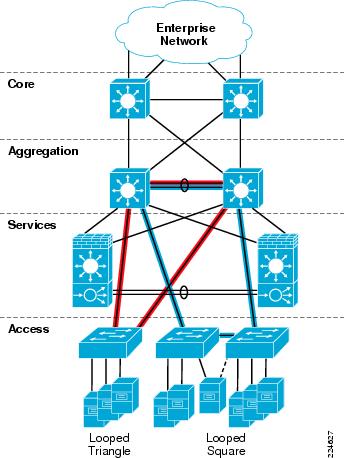

The Cisco Catalyst 6500 platform offers the option of integrating service modules directly into card slots within the chassis, conserving valuable rack space, power, and cabling in the data center network. One common design model is to integrate these modules directly into the Aggregation layer switches within the hierarchical network design, as shown in Figure 1. This approach is commonly taken when there are available slots within existing Aggregation layer switches, or chassis slot capacity is planned and allocated to the service modules in the initial design.

Figure 1 Integrated Services Physical Model

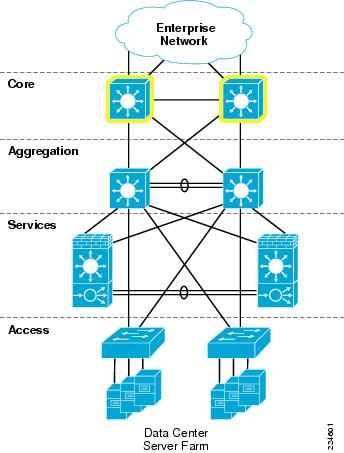

Services Chassis Physical Model

As the data center network grows over time, there can be a demand to recover the slots consumed by the service modules to accommodate greater port density in the Aggregation layer. This would allow aggregation of a greater number of Access layer switches without needing to move to a second aggregation block. Other factors may drive the migration away from an integrated services approach, such as the desire to deploy new hardware in the Aggregation layer that may not support the Cisco Catalyst 6500 service modules. For example, the Cisco Nexus 7000 Series switches have a different linecard form factor and do not support Cisco Catalyst 6500 service modules. The initial release of the Cisco Catalyst 6500 Virtual Switching System 1440 does not support installation of service modules in the chassis; this support requires new software that is planned for Cisco IOS Release 12.2(33)SXI.

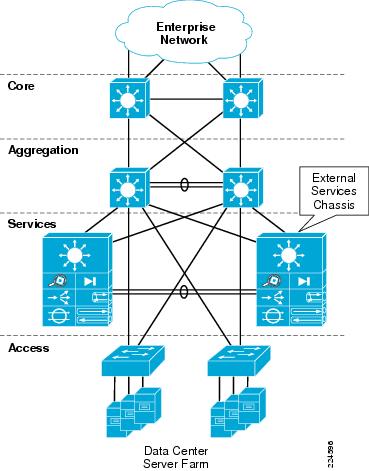

Since these modules require a Cisco Catalyst 6500 chassis for power and network connectivity, another approach for integrating these devices into the data center network may be considered. One approach is the implementation of an additional pair of Cisco Catalyst 6500 chassis, adjacent to the Aggregation layer of the data center network. These switches are commonly referred to as Services Chassis.

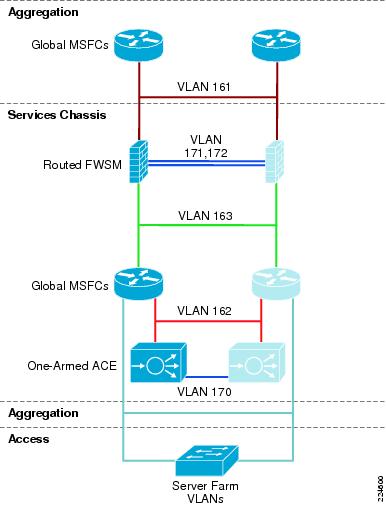

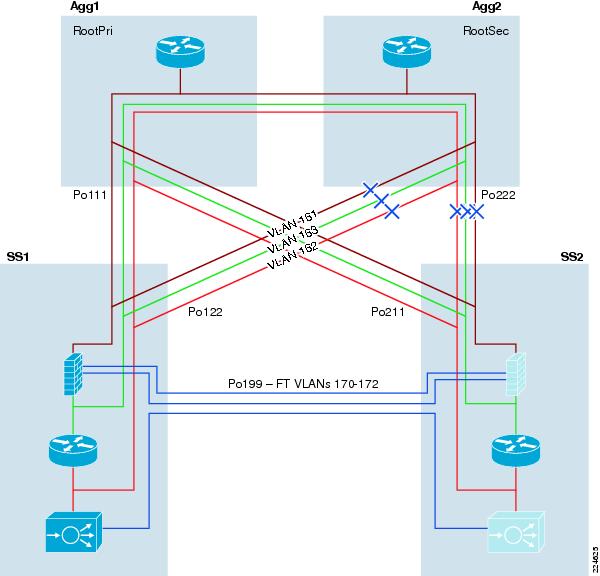

Figure 2 Services Chassis Physical Model

The Services Chassis Physical model, as shown in Figure 2, uses a dual-homed approach for data path connectivity of the Services Chassis into both of the Aggregation layer switches. This approach decouples the service modules from dependence on a specific aggregation switch. This provides operational flexibility for system maintenance that may be required to the aggregation switches or the services switches. From a high availability perspective, if one of the aggregation switches fails, traffic can continue to flow through the other aggregation switch to the active service modules without any failover event needing to occur with the service modules themselves.

802.1Q trunking is used on the dual-homed links to allow common physical links to carry the ingress and egress traffic VLANs. The same trunks carry the VLANs that reside between the layers of service modules, which must be extended to provide high availability in the event of device or link failure. A separate physical link is recommended directly between the two Services Chassis to carry fault-tolerance traffic and replicate state information between the active and standby modules. Provisioning this separate link ensures that the fault-tolerance control traffic will not be overrun by user data traffic, and removes the need for quality-of-service (QoS) class definitions to protect the fault-tolerance traffic across the Aggregation layer.

Logical Design Options

Once the physical layer connectivity of the Services Chassis is decided, there are still many options to choose from to design the logical topology. Some of these options include:

• Service modules inline or one-armed with traffic redirection?

• Service modules deployed in a routed (Layer 3) or transparent (Layer 2) mode? If routed, use a dynamic routing protocol or static routes?

• Employing non-virtualized or single-context service modules or multiple virtual contexts for services?

• Server farm subnets default gateway placement on a service module or on a router?

• Use global MSFC routing only or include Virtual Routing and Forwarding-lite (VRF-lite)?

Add to these questions the application-specific requirements and addressing constraints of a particular customer's existing network design. Designing services into the data center network may become a complex project to undertake for the network architect. In order to simplify this process, Cisco Enterprise Solutions Engineering (ESE) has validated two reference architectures for the integration of Services Chassis into the Enterprise data center network.

Logical Design Goals

Network design often involves tradeoffs when choosing between design options, and each option has pros and cons. Cisco ESE pursued the following goals in the development of Services Chassis reference architectures for validation:

Seamless Service Integration

Provide for the insertion or removal of services into a chain for a specific class of server with the least amount of reconfiguration required.

Architecture Flexibility

Design models that can remain consistent in terms of connectivity requirements and flows, even if a specific module is run in a different mode, or a newer product is inserted into the same role at a future time.

Predictable Traffic Patterns

The design should optimize traffic paths first for the normal running state with all devices in place. Failover patterns should be optimized if possible but not at the expense of the normal state.

Consistent Network Reconvergence Times

A full high availability analysis was conducted on the reference models as part of the design validation process. This included simulated failure of each link or device in the primary traffic path, with an analysis of reconvergence times and failover traffic paths.

Focus on Frontend Services Between Client and Server

Customer data centers may contain multi-tier applications and specific requirements such as servers that require Layer 2 adjacency with services between the servers. These requirements can significantly impact design decisions. The validation of these reference models for Services Chassis integration focused primarily on client-to-server interaction.

Focus on the Most Common Data Center Services Being Deployed in the Enterprise

In surveys of Enterprise customers, Firewall and Server Load Balancing were the most common services being deployed in the data center. The Cisco Firewall Services Module (FWSM) and Application Control Engine Module (ACE) were chosen to represent these classes of services.

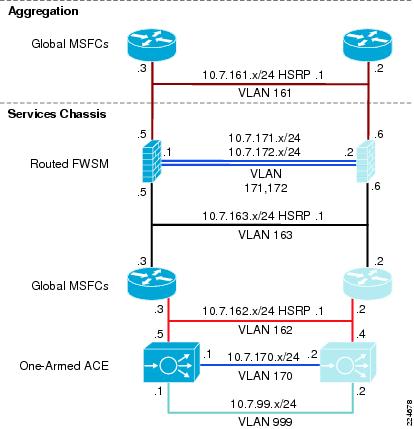

As a product of these design options and goals, two primary data center Services Chassis logical reference architectures have been validated. The first model is a simple Active-Standby architecture with no virtualization of services. The second model is a full Active-Active, virtualized architecture with multiple FWSM and ACE contexts, and VRF instances controlling the routing functions for these contexts. The standard physical Services Chassis model shown in Figure 2 above was used for all validation.

Service Chassis Logical Topologies

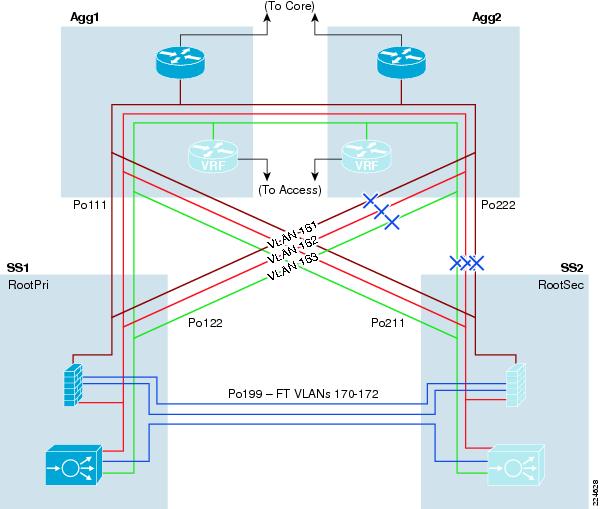

Active-Standby Service Chassis

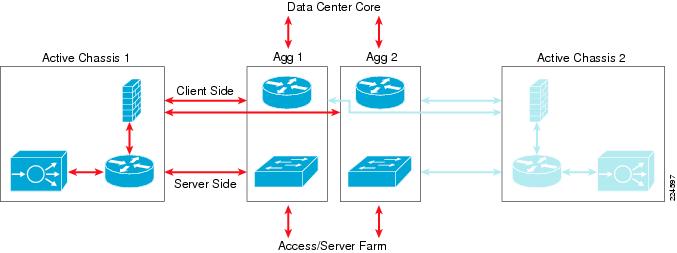

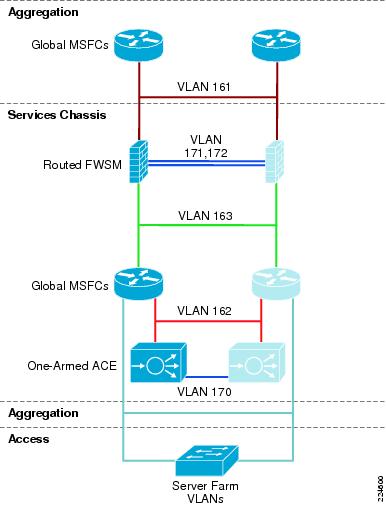

The first reference design model discussed is referred to as the Active-Standby Services Chassis model. This model focuses on simplicity of implementation, and builds an intentionally one-sided flow of traffic through the primary active Services Chassis. The secondary Services Chassis and its associated modules act purely as hot standby devices, present for fault tolerance in case the primary chassis or one of the modules fails. An illustration of the traffic flow for the Active-Standby model is provided in Figure 3.

Figure 3 Active-Standby Traffic Flow

Architecture Attributes

This design model was validated with the following characteristics:

Routed FWSM

A routed service device is conceptually easier to implement and troubleshoot, since there is a one-to-one correlation between VLANs and subnets, and a simplified Spanning Tree structure since the device is not forwarding BPDUs between VLANs.

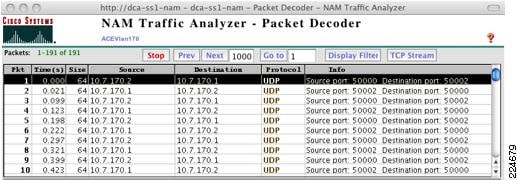

One-Armed ACE

The one-armed ACE can be introduced seamlessly into the network, and will not be in the path of traffic that does not need to reach the virtual IP (VIP) addresses. ACE failure or failover only impacts traffic that is being load-balanced or is using other ACE application services such as SSL acceleration. A traffic-diversion mechanism is required to ensure that both sides of a protocol exchange pass through the ACE; either Policy-Based Routing (PBR) or Source-Address Network Address Translation (Source-NAT) may be used. Source-NAT was chosen for the validation of this design because it is easier to configure and support than PBR.
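The way Source-NAT keeps both directions of an exchange on the ACE can be illustrated with a small model. The following is a minimal sketch, not ACE configuration: the `Packet` class, the function names, and all four addresses are hypothetical and chosen only for illustration, and a real device also tracks ports so that many clients can share one NAT address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str


# Hypothetical addresses chosen for illustration only.
CLIENT = "192.0.2.10"        # requesting client
VIP = "10.7.162.100"         # virtual IP hosted on the ACE
NAT_ADDR = "10.7.162.200"    # Source-NAT address owned by the ACE
REAL_SERVER = "10.7.180.11"  # load-balanced server in the server farm


def ace_client_to_server(pkt, nat_table):
    """Rewrite a client-to-VIP packet and remember the original client."""
    if pkt.dst != VIP:
        raise ValueError("packet is not addressed to the VIP")
    # Simplification: a real device also keys on ports.
    nat_table[(NAT_ADDR, REAL_SERVER)] = pkt.src
    return Packet(src=NAT_ADDR, dst=REAL_SERVER)


def server_reply(pkt):
    """The server answers whoever the packet appeared to come from."""
    return Packet(src=pkt.dst, dst=pkt.src)


def ace_server_to_client(pkt, nat_table):
    """Undo both translations on the return path."""
    client = nat_table[(pkt.dst, pkt.src)]
    return Packet(src=VIP, dst=client)


nat_table = {}
request = Packet(src=CLIENT, dst=VIP)
to_server = ace_client_to_server(request, nat_table)
reply = server_reply(to_server)
to_client = ace_server_to_client(reply, nat_table)
```

Because the server sees the NAT address as the source, its reply is addressed to the ACE rather than to the client, so no routing policy is needed to pull return traffic back through the module.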

Services Chassis Global MSFC as IP Default Gateway for Server Farm Subnets

Using the MSFC as default gateway for servers provides for the insertion or removal of services above the MSFC without altering the basic IP configuration of devices in the server farm. It also prevents the need to enable ICMP redirects or have load-balanced traffic traverse the FWSM twice during a flow.

Traffic Flow Between Service Modules and Clients

For client/server traffic, ingress and egress traffic on the client side is balanced across the global MSFCs of both Aggregation layer Catalyst 6500 switches.

Traffic Flow Between Service Modules and Server Farm

For client/server traffic, ingress and egress traffic on the server (Access layer) side is concentrated in one of the Aggregation layer switches that is configured as the IP default gateway for the server farm subnets.

Note

For server-to-server traffic, the traffic would be contained to a given access switch or be forwarded through the Aggregation layer if the servers are Layer 2 adjacent and on the same IP subnet. If the servers are on different subnets, the traffic needs to traverse the Services Chassis to forward between subnets. This model may not be optimal for server-to-server traffic flow between subnets. Consider the Active-Active Services Chassis model, which leverages VRFs in the Aggregation layer to streamline the flow of server-to-server traffic.

A full description of the VLANs, IP Subnets, and features used in the configuration of the Active-Standby design follows in the Active/Standby Service Chassis Design.

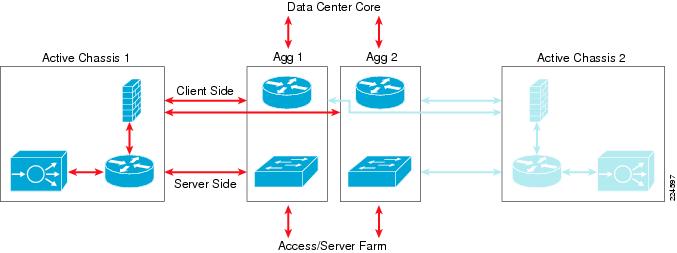

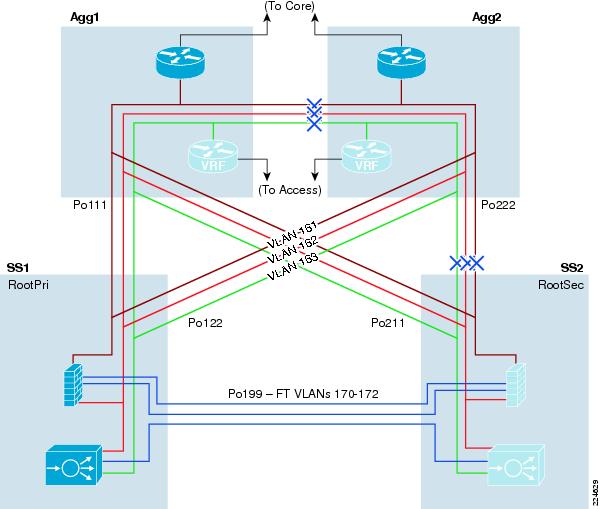

Active-Active Service Chassis

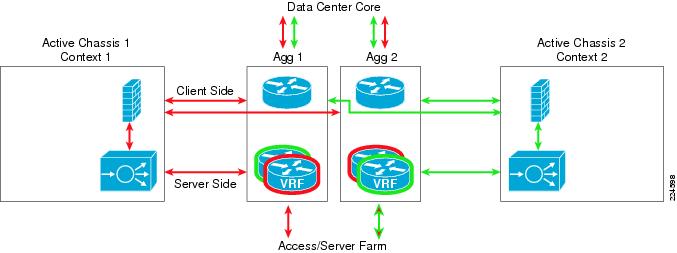

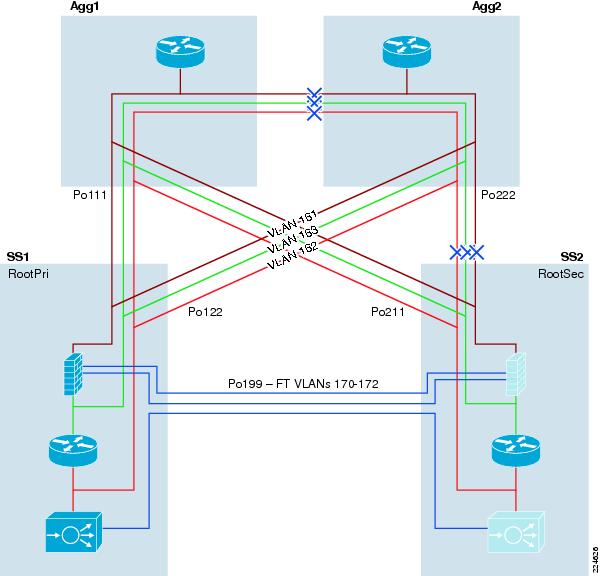

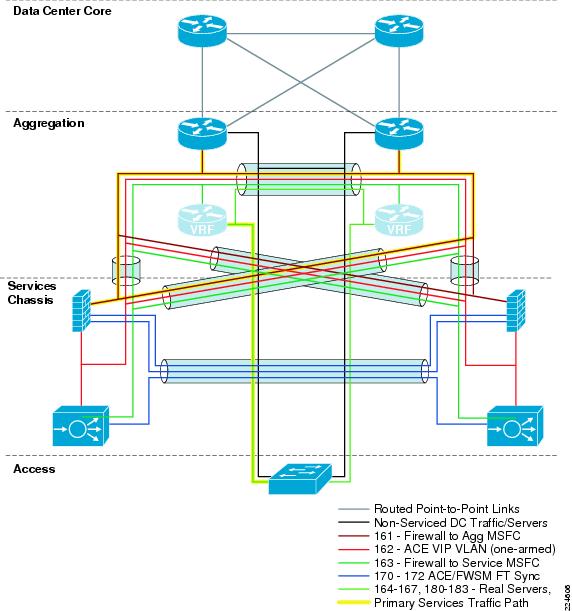

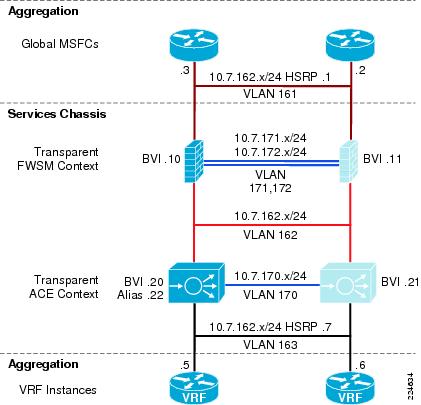

The second reference design model discussed is referred to as the Active-Active Services Chassis model. This model leverages the virtualization capabilities of the FWSM and ACE Modules to distribute a portion of the traffic across both Services Chassis. The traffic is not automatically equally balanced across the devices, but the network administrator has the ability to assign different server farm subnets to specific contexts, which may be done based on expected load or based on other organizational factors. Routing virtualization is also used in the active-active model through the implementation of VRF instances in the aggregation switches. In the validated active-active model, all Layer 3 processing takes place in the Aggregation layer, which simplifies the implementation of the Services Chassis by keeping them as pure Layer 2 connected adjunct switches. However, the model is flexible enough to support the implementation of a routed FWSM or ACE if it better supports specific customer requirements. An illustration of the traffic flow for the Active-Active model as validated is provided in Figure 4.

Figure 4 Active-Active Traffic Flow

Architecture Attributes

This design model was validated with the following characteristics:

Transparent FWSM

A transparent firewall requires less configuration than a routed firewall, since there is no routing protocol to configure or list of static routes to maintain. It requires only a single IP subnet on the bridge-group interface, and forwards BPDUs between bridging devices that live on attached segments. In that way it is truly transparent, and not a bridge itself. The interfaces of the transparent FWSM carry different VLAN numbers, so a transparent device is often said to be "stitching" or "chaining" VLANs together.

Note

The FWSM supports a maximum of eight bridge-group interfaces (BVI) per context.

Transparent ACE

The transparent ACE implementation works similarly to the FWSM, where multiple VLANs are stitched together to transport one IP subnet, and BPDUs are forwarded to allow adjacent switches to perform Spanning Tree calculations. Unlike the One-Armed ACE approach, a transparent ACE sits inline with traffic, and requires no traffic diversion mechanism to ensure that both sides of a protocol exchange pass through the device. The ACE supports a maximum of two Layer 2 interface VLANs per bridge-group and a maximum of two thousand BVIs per system.

Dual Active Contexts on the Services Modules

With the virtualization capabilities of the Cisco Catalyst 6500 Services Modules, two separate contexts have been created which behave as separate virtual devices. The first FWSM and ACE are primary for the first context, and standby for the second context. The second FWSM and ACE are primary for the second context, and standby for the first context. This allows modules on both sides of the design to be primary for a portion of the traffic, and allows the network administrator to distribute load across the topology instead of having one set of modules nearly idle in a pure-standby role.

Note

It is important to note that in an Active-Active design, network administrators must properly plan for failure events where one service module supports all of the active contexts. If the total traffic exceeds the capacity of the remaining service module, the potential to lose connections exists.
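The planning check described in this note amounts to simple arithmetic: the combined peak load of all contexts must fit on one module. The following is a minimal sketch; the function name, the capacity value, and the per-context loads are hypothetical placeholders, not rated figures for any service module.

```python
def fits_on_one_module(context_peak_gbps, module_capacity_gbps):
    """Return True if the combined peak load of all contexts fits on one module."""
    return sum(context_peak_gbps.values()) <= module_capacity_gbps


# Hypothetical figures; substitute measured peaks and the rated capacity
# of the service module actually deployed.
MODULE_CAPACITY_GBPS = 4.0
balanced = {"context1": 1.5, "context2": 2.0}
oversubscribed = {"context1": 2.5, "context2": 2.5}

balanced_ok = fits_on_one_module(balanced, MODULE_CAPACITY_GBPS)
oversubscribed_ok = fits_on_one_module(oversubscribed, MODULE_CAPACITY_GBPS)
```

In the second case each context fits comfortably on its own module during normal operation, yet a failover that places both on the surviving module would exceed its capacity.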

Aggregation Layer VRF Instances as IP Default Gateway for Server Farm Subnets

Using VRF instances for the default gateway for servers provides for the insertion or removal of services above the VRF without altering the basic IP configuration of devices in the server farm. It also provides for direct routing between server farm subnets through the Aggregation layer, without a requirement to drive traffic out to the Services Chassis for first-hop IP default gateway services. For the Active-Active design, a separate set of VRF instances was created for each of the two Services Modules contexts, to keep traffic flows segregated to the proper side of the design.

Traffic Flow Between Service Modules and Clients

For client/server traffic, ingress and egress traffic on the client side is balanced across the global MSFCs of both Aggregation layer Catalyst 6500 switches.

Traffic Flow Between Service Modules and Server Farm

For client/server traffic, ingress and egress traffic on the server (Access layer) side is concentrated in the VRF instance on one of the two Aggregation layer switches, whichever is configured as the IP default gateway for the server farm subnets. The Hot Standby Router Protocol (HSRP) gateway configuration was validated using Aggregation Switch 1 as primary for context 1, and Aggregation Switch 2 as primary for context 2.

Note

For server-to-server traffic, the traffic would be contained to a given Aggregation layer switch if the administrator has assigned the servers that needed to communicate to the same services context. If the servers have been assigned to different contexts, the server-to-server traffic flow would be forced through the services chain that is assigned to each context. This approach could be used to insert services between layers of a multi-tier application; however, special attention must be paid to the bandwidth required to ensure that the inter-switch links between the Aggregation layer and Services Chassis do not become saturated.

A full description of the VLANs, IP Subnets, and features used in the configuration of the Active-Active design follows in Active/Active Service Chassis Design.

Required Components

The hardware and software components listed in Table 1 were used in the construction of these validated design models.

Active/Standby Service Chassis Design

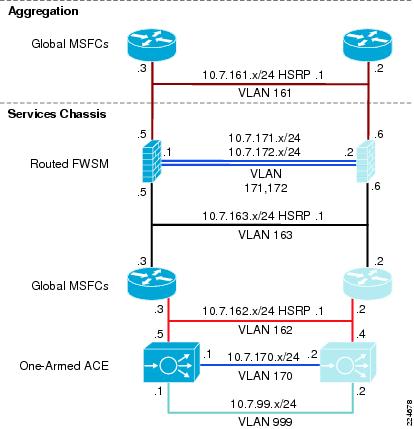

Infrastructure Description

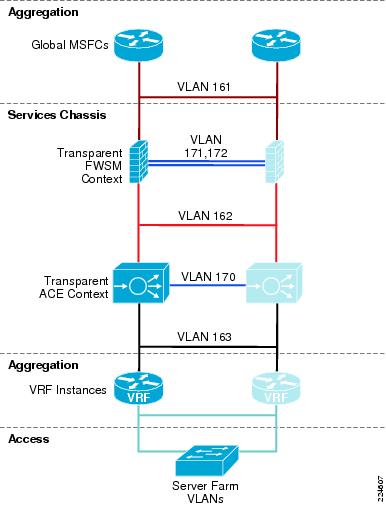

The Active-Standby Services Chassis model is a relatively simple model designed for ease of implementation, support, and troubleshooting. It is based on the dual-homed physical Services Chassis model discussed in Service Integration Approaches, which is illustrated in Figure 2. The implementation of services in the data center requires careful planning of traffic flows and logical constructs such as VLANs and IP subnets in order to control the flow of traffic through the service modules. The illustration in Figure 5 provides a view of the logical architecture of the Active-Standby model, overlaid on the physical infrastructure.

Figure 5 Active-Standby Combined Physical and Logical View

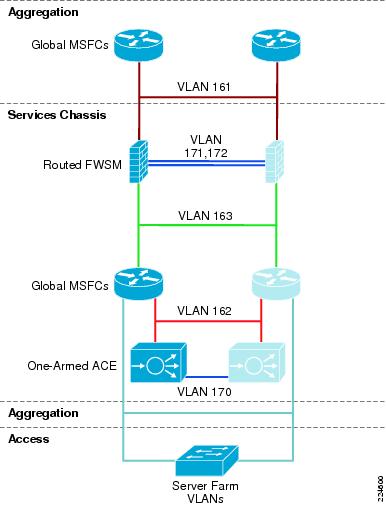

To analyze the flow of traffic through this topology, we can simplify the discussion by focusing initially on a purely logical diagram of the same topology, which is shown in Figure 6.

All of the data-path VLANs that are extended between the two Services Chassis must traverse the dual-homed links through the Aggregation layer. Ingress and egress VLANs that are the path to client and server connections must pass through the Aggregation layer to connect to the Core and Access layers of the network. Intermediate VLANs between layers of the services chain, such as VLANs 163 and 162 in Figure 6, are also extended to prevent any blackholing of traffic in failover situations. These intermediate VLANs are also extended across the Aggregation layer to keep the direct link between Services Chassis dedicated to failover and module state traffic. The Fault Tolerance VLANs that run directly between the pairs of Services Modules are the only VLANs that are extended across the physical link that runs directly between the two Services Chassis.

Figure 6 Active-Standby Logical Diagram

Following is a brief analysis of the function of each of the VLANs within the logical design. Since there are no transparent mode modules in this topology, each VLAN corresponds to a unique IP subnet.

•

Aggregation Global MSFC's to routed FWSM. This is shown as VLAN 161 in Figure 6. This VLAN is extended across the dual-homed physical links between the Services Chassis and Aggregation layer, and provides the ingress and egress path for traffic on the client side of the service modules.

•

FWSM Fault Tolerance links. These are shown as VLAN 171 and 172 in Figure 6, and are extended across the dedicated physical link between the two Services Chassis. They carry failover hello packets, state information, and allow the primary and secondary FWSM to keep their configurations synchronized.

•

Routed FWSM to Services Chassis Global MSFC's. This is shown as VLAN 163 in Figure 6. This VLAN is extended across the dual-homed physical links between the Services Chassis and Aggregation layer. The Services Chassis MSFC makes forwarding decisions to direct traffic received on this link directly to the server farm, or to the One-Armed ACE Module if a VIP address is the destination.

•

Services Chassis Global MSFC's to One-Armed ACE. This is shown as VLAN 162 in Figure 6. This is both the ingress and egress interface for traffic being serviced by the ACE Module. The ACE performs Source NAT, which changes the source address of packets that it is forwarding to the server farm. In this way, the return packets must also pass through the ACE to have their destination addresses translated back to that of the original requesting client node. This VLAN is extended across the dual-homed physical links between the Services Chassis and Aggregation layer.

•

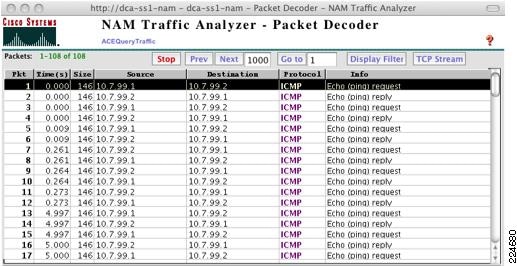

ACE Module Fault Tolerance link. This link is shown as VLAN 170 in Figure 6, and is extended across the dedicated physical link between the two Services Chassis. This link carries hello traffic and allows configuration synchronization between the two ACE Modules.

•

Services Chassis Global MSFC's to Server Farm VLANs. These VLANs are referenced as the "Server Farm VLANs", and are shown Figure 6. These VLANs are extended across the dual-homed links to the Aggregation layer, and also extend down into the Access layer to support server connectivity. In the reference topology, eight different VLANs carrying different types of serviced traffic (voice, firewalled-only data, SLB data) were configured; the actual number and purpose of VLANs deployed will be specific to a customer requirement.

Note

Not illustrated in Figure 6 is the possibility of having VLANs that carry non-serviced traffic. For server farm subnets that do not require FWSM or ACE services, a traditional hierarchical design data path may be used, with these VLANs terminating on the Aggregation layer and their IP default gateway services provided by the Aggregation layer global MSFCs.

The next section of this document discusses the physical layers of this model step-by-step and describes the features that are required to build this topology.

Core Layer

Overview

The Core layer of the Active-Standby Services Chassis model is primarily focused on stability and high-performance Layer 3 IP-packet forwarding. It provides a layer of insulation between the Spanning Tree domains at the data center Aggregation layer, and other places in the network. It is typically constructed of two Cisco Catalyst 6500 switches, with purely 10 Gigabit Ethernet or Gigabit EtherChannel interfaces, all configured in a routed mode. Depending on the scale or specific requirements of the Enterprise, this may represent a dedicated data center Core layer, or may actually be a shared Core where other Distribution or aggregation blocks connect, such as campus, WAN/branch, or Internet edge. The two switches in the Core layer of the Active-Standby Services Chassis model are highlighted in Figure 7.

Figure 7 Data Center Core Layer

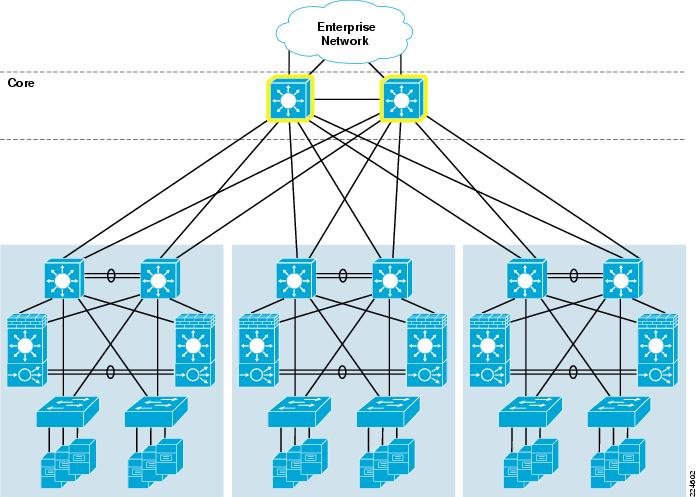

Scaling the Data Center

A dedicated data center Core layer also provides the capability to deploy multiple Aggregation layer blocks. This will allow the data center to scale to support larger numbers of Access layer switches due to greater Aggregation layer port count, which in turn translates to a greater number of servers that may be supported. There may also be operational factors that cause a network architect to deploy multiple aggregation blocks, such as after a merger of two companies or in a larger organization with multiple distinct business units that demand some physical separation of equipment for security or maintenance window purposes. An example of a data center network topology with multiple aggregation blocks is shown in Figure 8.

Figure 8 Data Center Core with Multiple Aggregation Blocks

If multiple aggregation blocks are connected to the data center Core, the best practice is to maintain the boundary between Layer 2 and Layer 3 within each distinct pair of aggregation switches, so that only routed links are extended to the core. Maintaining this boundary provides the ability to extend VLANs to multiple Access layer switches, but only within the confines of a given Aggregation layer block. This approach provides for greater stability, since a failure within a given Layer 2 Broadcast and Spanning Tree domain will be constrained to that aggregation block.

Services Chassis deployment in a data center with multiple aggregation blocks should be constrained to a separate pair of Services Chassis for each Aggregation layer switch pair. If greater services capacity is required, multiple pairs of Services Chassis may be used with a single aggregation block. It is a best practice not to attach a single pair of Services Chassis to multiple aggregation blocks, which could potentially result in joining two Layer 2 domains by inadvertent misconfiguration. In general, the total bandwidth capacity of an aggregation block is much greater than that of a given set of services, so when scaling a data center to multiple aggregation blocks multiple sets of Services Modules are typically required.

Features

IP Route Summarization

Routing protocol summarization is a common IP networking practice to keep routing tables small for faster reconvergence and greater stability. In the data center hierarchical network, summarization may be performed at the data center Core or the Aggregation layer. Summarization is recommended at the data center Core if it is a dedicated layer that is separate from the Enterprise core. The idea is to keep the Enterprise Core routing table as concise and stable as possible, and to limit the impact on the data center of routing changes happening in other places in the network, and vice versa. If a shared Enterprise Core is used, summarization is recommended at the Aggregation layer. Summarization is only possible if subnets were allocated so that they can be summarized into a single route. Example configurations in this document will show route summarization enabled only at the Aggregation layer.
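The dependence of summarization on address allocation can be checked with Python's standard `ipaddress` module. The following is a minimal sketch; the two subnet lists are hypothetical allocations, while the `10.7.0.0 0.0.63.255` range is the one that appears in the OSPF `network` statement used in this document's example configuration.

```python
import ipaddress


def summarize(subnets):
    """Collapse a list of subnet strings into the fewest covering prefixes."""
    nets = [ipaddress.ip_network(s) for s in subnets]
    return list(ipaddress.collapse_addresses(nets))


# Hypothetical, contiguous allocation: four /24s aligned on a /22 boundary.
aligned = ["10.7.0.0/24", "10.7.1.0/24", "10.7.2.0/24", "10.7.3.0/24"]
# Hypothetical, scattered allocation: the same number of /24s, not adjacent.
scattered = ["10.7.0.0/24", "10.7.2.0/24", "10.7.5.0/24", "10.7.9.0/24"]

aligned_summary = summarize(aligned)      # collapses to one prefix
scattered_summary = summarize(scattered)  # stays as four separate prefixes

# A wildcard (host) mask such as 0.0.63.255 can be parsed directly.
ospf_range = ipaddress.ip_network("10.7.0.0/0.0.63.255")
```

The aligned allocation can be advertised as a single route, while the scattered one cannot be reduced without also covering address space that was never assigned.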

OSPF Configuration

The Open Shortest Path First (OSPF) protocol is a popular IP Interior Gateway Protocol (IGP), which is often used to provide dynamic routing in Enterprise networks. OSPF Version 2 is standardized in RFC 2328. Common best-practice features recommended for configuration of OSPF in the data center model with a Services Chassis include:

• Hello and Dead timer adjustment. The hello and dead timers in OSPF control how often adjacent neighbors transmit hello packets to maintain the adjacency, and how long a neighbor can miss hello packets before it is considered dead and the adjacency is torn down. Cisco IOS offers OSPF timers down to millisecond values, but in the interest of stability in the data center environment a hello timer of 1 second and a dead timer of 3 seconds are recommended. The control plane protocol load on the Aggregation layer switches can be higher in the data center than in some other places in the network. The use of millisecond timers can potentially cause undesirable routing protocol peering flaps when other failover events occur in the network, which will in turn increase reconvergence times.

• OSPF Authentication. Authenticating OSPF neighbor routers with a pre-shared message digest key is the preferred way to mitigate the threat of unauthorized devices attempting to form routing adjacencies with data center infrastructure equipment. OSPF Authentication must be mutually configured on both routing neighbors, with a matching key phrase, to form an adjacency.

• Passive Interface Default. When configuring the OSPF router in Cisco IOS, change the default behavior for IP routed interfaces to passive. Then, turn off the passive function only on interfaces where an OSPF neighbor is expected in the design. In the Core layer, an OSPF neighbor is expected on every interface, other than possibly a management interface that is not participating in routing. The passive default has more bearing in the Aggregation layer, where there are many routed interfaces facing the Access layer that should not be enabled as active OSPF interfaces. However, it is cleaner to use passive default as a consistent best practice across all of the routing nodes in the network.

• Hard-coded Router ID. By default, OSPF chooses the highest loopback interface IP address in the router as the Router ID; if there are no loopbacks, it chooses the highest physical interface address. It is desirable to have OSPF Router IDs remain consistent and not change, both for routing stability and for troubleshooting purposes. Best practice is to create a loopback interface on the router to maintain a consistent Router ID, or to use the router-id command in IOS to hard-code this value.

• Auto-cost reference bandwidth adjustment. The default reference bandwidth of 100 Mbps causes 10 Gigabit, 1 Gigabit, and 100 Mbps interfaces to all have the same cost. Raising the reference bandwidth to 10,000 Mbps, the equivalent of 10 Gigabits, gives a 10 Gigabit Ethernet interface a cost of 1 and a 1-Gigabit interface a cost of 10.

• Throttle timers for SPF and LSA processing. These timer optimizations improve the response time for initial SPF calculation and LSA generation, while maintaining a dampening factor to reduce control plane load in a situation where an interface is flapping.
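The effect of the reference bandwidth adjustment can be worked through numerically. The following is a minimal sketch, assuming the commonly documented IOS behavior that interface cost is the reference bandwidth divided by the interface bandwidth, truncated to an integer with a minimum of 1; the function and variable names are illustrative only.

```python
def ospf_cost(reference_mbps, interface_mbps):
    """Interface cost: reference bandwidth / interface bandwidth, minimum 1."""
    return max(1, reference_mbps // interface_mbps)


INTERFACES_MBPS = {"10 Gigabit": 10_000, "1 Gigabit": 1_000, "100 Mbps": 100}

# Default reference bandwidth of 100 Mbps: every interface gets the same cost.
default_costs = {name: ospf_cost(100, bw) for name, bw in INTERFACES_MBPS.items()}

# Reference bandwidth raised to 10,000 Mbps: costs now reflect link speed.
tuned_costs = {name: ospf_cost(10_000, bw) for name, bw in INTERFACES_MBPS.items()}
```

With the default value, OSPF cannot prefer a 10 Gigabit path over a 100 Mbps path on cost alone; with the raised value the three link speeds receive distinct costs.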

The following are examples of interface and router OSPF configurations in Cisco IOS with these best practices optimizations applied:

interface TenGigabitEthernet4/1
 ip address 10.7.2.1 255.255.255.0
 ip pim sparse-mode
 ip ospf authentication message-digest
 ip ospf message-digest-key 1 md5 c1sc0
 ip ospf hello-interval 1
 ip ospf dead-interval 3
 ip igmp version 3

router ospf 7
 router-id 2.2.2.1
 log-adjacency-changes
 auto-cost reference-bandwidth 10000
 area 0 authentication message-digest
 timers throttle spf 10 100 5000
 timers throttle lsa all 10 100 5000
 passive-interface default
 no passive-interface TenGigabitEthernet4/1
 no passive-interface TenGigabitEthernet4/2
 network 10.7.0.0 0.0.63.255 area 0

EIGRP Configuration

Enhanced Interior Gateway Routing Protocol (EIGRP) is a Cisco-specific routing protocol that is commonly used as an IGP in the Enterprise environment. EIGRP does not require the area constructs that OSPF uses, and permits summarization to be configured on a per-interface basis that provides greater flexibility. Best practices for IP subnetting and address allocation should still be followed if summarization is desired. EIGRP provides convergence times that are equal to or slightly faster than OSPF in the validated topologies. Features to enable when leveraging EIGRP in the Active-Standby Services Chassis model include:

•

Hello Interval and Hold Time adjustment. The hello interval and hold time in EIGRP control how often neighbors transmit hello packets to maintain the adjacency, and how long a neighbor can miss hello packets before considering a neighbor to be dead and tearing down the adjacency. An EIGRP hello interval setting of one second, and a hold time setting of 3 seconds are recommended for fast reconvergence.

•

EIGRP Authentication. Authenticating EIGRP neighbor routers with a pre-shared message digest key is the preferred way to mitigate the threat of unauthorized devices attempting to form routing adjacencies with data center infrastructure equipment. EIGRP authentication must be mutually configured on both routing neighbors to form an adjacency, with a matching key phrase.

•

Passive Interface Default. When configuring the EIGRP router in Cisco IOS, change the default behavior for IP routed interfaces to passive. Then, turn off the passive function only on interfaces where an EIGRP neighbor is expected in the design. In the Core layer, an EIGRP neighbor should really be on all interfaces, other than possibly a management interface that is not participating in routing. The passive interface default has more bearing in the Aggregation layer, where there are many routed interfaces facing the access that should not be enabled as active EIGRP interfaces. However, it is cleaner to use passive default as a consistent best practice across all of the routing nodes in the network.

•

Disable Auto-Summary. EIGRP has the capability to automatically summarize routes at classful network boundaries. When a network administrator is configuring IP route summarization at the Core or Aggregation layer of a data center topology, this will often not coincide with a transition of classful network space. This is an optional setting, but it is a cleaner configuration to disable the automatic summarization and summarize at the interface level only where dictated by the logical design.
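The interface-level EIGRP authentication commands in this section refer to a key chain by name, and that key chain must be defined in global configuration on both neighbors. The following is a minimal sketch of such a definition. The key chain name eigrp matches the name used in the interface example in this section; the key number and key string are placeholders chosen for illustration and are not taken from the validated design.

```
! Key chain referenced by "ip authentication key-chain eigrp 7 eigrp"
! The key-string value below is a placeholder (assumption)
key chain eigrp
 key 1
  key-string EXAMPLE-SECRET
```

Both neighbors need the same key number and key string for the adjacency to form.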

The following are examples of interface and router EIGRP configurations in Cisco IOS with these best practices optimizations applied:

interface TenGigabitEthernet13/5
 ip address 10.7.1.2 255.255.255.0
 ip pim sparse-mode
 ip hello-interval eigrp 7 1
 ip hold-time eigrp 7 3
 ip authentication mode eigrp 7 md5
 ip authentication key-chain eigrp 7 eigrp
 ip igmp version 3

router eigrp 7
 passive-interface default
 no passive-interface TenGigabitEthernet13/5
 no passive-interface TenGigabitEthernet13/6
 network 10.0.0.0
 no auto-summary

Multicast Configuration

IP multicast is commonly leveraged by multimedia applications such as voice conferencing, video broadcasts, and video surveillance. Regardless of the specific application requirements, at a minimum a basic multicast configuration should be applied to all internetworking devices in the enterprise to ensure that any multicast streams that are introduced to a given IP subnet are not treated as broadcast due to lack of configuration. Depending on application specifics, devices in the data center server farm could be either sources or receivers of multicast streams. A basic multicast configuration requires the following steps:

Step 1

Enable IP multicast routing on all routers in the network.

Step 2

Enable IGMP version 3 on all routed interfaces on the network. Ensure that any required multicast peers are IGMP version 3 capable; if necessary, fall back to IGMP version 2 on specific interfaces. For example, the FWSM supports only IGMP version 2.

Step 3

Enable PIM sparse-mode on all routed interfaces on the network. PIM dense mode should be avoided due to its periodic flooding of multicast traffic. PIM sparse-dense mode may be used if using Auto-RP to facilitate Rendezvous Point discovery. On a routed FWSM, enabling PIM turns on sparse-mode behavior by default.

Step 4

Configure one or more routers in the network to act as a multicast Rendezvous Point. The RP allows multicast routers to discover the availability of sources on specific group addresses. An RP-to-group mapping agent is also required if using a dynamic mechanism for RP discovery.

Step 5

Configure an RP discovery mechanism for multicast routers. The RP address for the required multicast group addresses must be known to all multicast-capable routers in the path of a multicast stream. This address may be hard-coded in the routers if necessary. Several dynamic mechanisms also exist, such as Cisco Auto-RP, Auto-RP listener, and Bootstrap Router (BSR). BSR was used between the Core and Aggregation layers in the validation of the Services Chassis models. Static RP addressing was used on the FWSM and Services Chassis routers, since the FWSM could not participate in any dynamic RP discovery mechanism.

If hard-coded RP addresses are required in the network, use Anycast RP to provide RP redundancy. The dynamic RP discovery mechanisms allow redundant RPs to be configured with different IP addresses. In a network with hard-coded RP addresses, another mechanism must be used to allow for redundancy of the RP services. Anycast RP allows two different routers to carry the same IP address on a loopback interface, which provides a redundant physical set of RPs available at a single IP address. Another set of loopback interfaces and a Multicast Source Discovery Protocol (MSDP) peering relationship are used to allow the redundant RPs to replicate source information.
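The steps above, combined with the static Anycast RP option, can be sketched in Cisco IOS as follows. This is an illustrative outline only, not the validated configuration: the loopback numbers and all 192.0.2.x addresses are hypothetical, and the interface name is borrowed from the Core layer OSPF example.

```
! Step 1: enable multicast routing globally
ip multicast-routing
!
! Steps 2 and 3: IGMP version 3 and PIM sparse mode on a routed interface
interface TenGigabitEthernet4/1
 ip pim sparse-mode
 ip igmp version 3
!
! Steps 4 and 5 (static option): point every router at the shared RP address
! 192.0.2.1 is a hypothetical Anycast RP address
ip pim rp-address 192.0.2.1
!
! The remaining commands apply only to the two routers acting as RPs
interface Loopback1
 description Anycast RP address, identical on both RPs (hypothetical)
 ip address 192.0.2.1 255.255.255.255
 ip pim sparse-mode
!
interface Loopback2
 description Unique address used for MSDP peering (hypothetical)
 ip address 192.0.2.11 255.255.255.255
!
! Peer with the unique loopback of the other RP (hypothetical 192.0.2.12)
ip msdp peer 192.0.2.12 connect-source Loopback2
ip msdp originator-id Loopback2
```

The second RP would mirror this configuration with its own unique Loopback2 address and an MSDP peer statement pointing back at the first RP. Both loopback addresses need to be reachable through the IGP.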

Aggregation Layer

Overview

The Aggregation layer of the data center provides connectivity for the Access layer switches in the server farm, and aggregates them into a smaller number of interfaces to be connected into the Core layer. In most data center environments, the Aggregation layer is the transition point between the purely Layer 3 routed Core layer, and the Layer 2-switched Access layer. 802.1Q trunks extend the server farm VLANs between Access and Aggregation layers.

The Aggregation layer also provides a common connection point to insert services into the data flows between clients and servers, or between tiers of servers in a multi-tier application. In the Active-Standby Services Chassis model, the Services Chassis are dual-homed into the Aggregation layer with 802.1Q trunks similar to the way that Access layer switches are connected. An illustration highlighting the data center Aggregation layer is shown in Figure 9.

Figure 9 Data Center Aggregation Layer

Features

Layer 2

Port Channel Configuration

Port-Channel interfaces are configured in Cisco IOS to facilitate the bonding of multiple physical ports into a single logical interface; this is also referred to as an EtherChannel. Link Aggregation Control Protocol (LACP) is part of the IEEE 802.3ad specification and is a standards-based mechanism for two switches to negotiate the building of these bundled links. Cisco also supports Port Aggregation Protocol (PAgP), but LACP is recommended for standards compliance. LACP is configured using the keywords "active" and "passive" in the interface configuration. At least one end of the port-channel connection must be placed in "active" mode for channel negotiation to occur.

Cisco devices allocate traffic across members of an EtherChannel bundle using a hash distribution mechanism. Cisco IOS 12.2(33)SXH and later for the Catalyst 6500 supports an alternative hash-distribution algorithm called the adaptive algorithm. Use of the adaptive algorithm eliminates the reset of the port ASIC on each port in the channel when a single physical port is added to or deleted from the channel. The adaptive algorithm was shown to slightly improve network convergence times during single-port EtherChannel failovers during design validation. The adaptive algorithm may be enabled globally, or on a per-interface basis. If using a global configuration, ensure that all connected endpoints support the use of the adaptive algorithm. An example of port-channel configuration is shown below.

port-channel hash-distribution adaptive

interface Port-channel99
 switchport
 switchport trunk encapsulation dot1q
 switchport trunk allowed vlan 128-133,161-167,180-183,300-399,999
 switchport mode trunk
 spanning-tree guard loop

interface TenGigabitEthernet13/3
 switchport
 switchport trunk encapsulation dot1q
 switchport trunk allowed vlan 128-133,161-167,180-183,300-399,999
 switchport mode trunk
 channel-protocol lacp
 channel-group 99 mode active
 spanning-tree guard loop

Spanning Tree Configuration

The Active-Standby Services Chassis model was validated using a looped configuration with Access layer switches dual-homed into the Aggregation layer. The looped topology is required in order to facilitate extension of the server farm VLANs into the two redundant Services Chassis. When redundant connections are introduced into the network to provide Layer 2 redundancy, a loop-prevention mechanism is required to prevent broadcast and unknown destination packets from endlessly circling the network. Traditionally, Spanning Tree Protocol (STP), standardized in 802.1D, has provided this function.

Rapid Spanning Tree (RSTP)

RSTP, which is standardized in IEEE 802.1w, provides faster reconvergence than traditional STP and replaces the need to run the Cisco proprietary extensions of UplinkFast and BackboneFast to improve convergence times. Cisco's implementation of RSTP is known as Rapid Per-VLAN Spanning Tree (RPVST+, also known as PVRST+), which uses the features of 802.1w and implements a separate Spanning Tree instance for each active VLAN. RPVST+ is the recommended Spanning Tree implementation for the Active-Standby Services Chassis model to provide rapid reconvergence in the event of link or device failure.

Multiple Spanning Tree (MST)

MST, which is standardized in IEEE 802.1s, provides for the consolidation of multiple STP instances that all follow the same topology into a reduced number of STP instances. MST may be used in conjunction with 802.1w to support networks with very large numbers of VLANs, but was not implemented in the validation of this Services Chassis model.

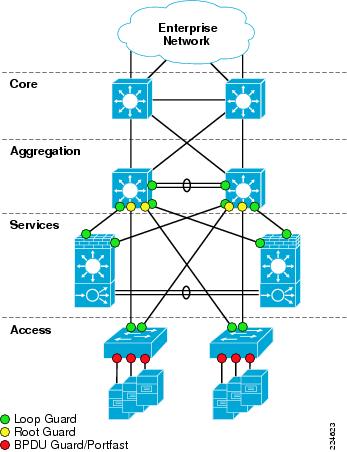

Loop Guard

Loop Guard is a Cisco-specific feature that provides additional protection against Layer 2 forwarding loops. Loop Guard should be enabled on Root and Alternate ports in the Spanning Tree topology. When Loop Guard detects that BPDUs are no longer being received on a non-designated port, the port is moved into a loop-inconsistent state instead of transitioning to the listening/learning/forwarding state. This prevents a Layer 2 loop from occurring in the event that a link becomes unidirectional or a node stops transmitting BPDUs for some reason. Loop Guard may also be configured globally, but port-specific configuration is preferred to ensure that it is only enabled where specifically necessary. An illustration of where to enable Loop Guard, Root Guard, and BPDU Guard Spanning Tree enhancements is shown in Figure 10.

Root Guard

Root Guard is a Cisco-specific feature which prevents a Layer 2 switched port from becoming a root port (a root port is a port which faces the Spanning Tree root switch). This feature is sometimes recommended on Aggregation layer ports that are facing the Access layer, to ensure that a misconfiguration on an Access layer switch cannot cause it to change the location of the Spanning Tree root switch (bridge) for a given VLAN or instance. There is an instance where use of Root Guard at the Aggregation layer may cause an issue in a hierarchical network design. If routing protocol summarization is in place at the Aggregation layer, and the inter-switch link between the two aggregation switches were to completely fail, traffic arriving at the non-root Aggregation layer switch would be black-holed (dropped) due to Root Guard blocking the possible alternate path to the Access layer. For this reason, Root Guard should be used with caution if IP route summarization is in use at the Aggregation layer. As a best practice, construct the Aggregation layer inter-switch link (ISL) Port Channel from multiple physical links residing on separate physical line cards to reduce the chance of total failure of the Port Channel. Building ISL EtherChannels with ports from different line cards is a best practice across the board for Enterprise network design.

Figure 10 Spanning Tree Feature Placement

Spanning Tree primary and secondary root assignments should be performed on the Aggregation layer switches for all server farm VLANs. This assignment follows the principles of classic hierarchical network design. Layer 2 STP root should be assigned to the same switch to which Layer 3 primary default gateway is assigned using HSRP or another First Hop Redundancy Protocol (FHRP). This aligns Layer 2 and Layer 3 paths in the network to eliminate any unnecessary hops. In the Active-Standby design, the topology is intentionally one-sided for simplicity of implementation and troubleshooting, so all server farm STP roots and HSRP primary routers should be configured on Aggregation 1, indicated on the left side of the topology.
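The Spanning Tree recommendations in this section can be sketched in Cisco IOS as shown below. This is an illustrative outline under stated assumptions rather than the validated configuration: VLAN 128 stands in for the full set of server farm VLANs, and the Access-facing interface name is hypothetical.

```
! Aggregation 1: RPVST+ and primary root for a server farm VLAN
spanning-tree mode rapid-pvst
spanning-tree vlan 128 root primary
!
! Aggregation 2: RPVST+ and secondary root for the same VLAN
spanning-tree mode rapid-pvst
spanning-tree vlan 128 root secondary
!
! Optional: Root Guard on an Aggregation layer port facing the Access layer
! (hypothetical port; see the caution on Root Guard and route summarization)
interface TenGigabitEthernet13/1
 spanning-tree guard root
```

Loop Guard is applied per port with spanning-tree guard loop, as in the port-channel example earlier in this section. A given port carries either Root Guard or Loop Guard, not both.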

Layer 3

IP Routing Best Practices

In general, the configuration of OSPF and EIGRP routing protocols in the Aggregation layer to peer with the Core layer follows the same best practices as the Core. Hello and dead timers, neighbor authentication, and passive interface default are all best practices common to both OSPF and EIGRP routing protocols. For OSPF specifically, hard-set Router ID, auto-cost reference bandwidth adjustment, and throttle timer adjustment are also recommended. Refer to the "Core Layer" section, for specifics on these recommendations.

IP Route Summarization

As mentioned in the "Core Layer" section, IP route summarization may be performed at the data center Aggregation or Core layer. Core layer IP route summarization is more appropriate when there is a dedicated data center Core which is separate from the Enterprise Core, typically when multiple Aggregation blocks exist in the data center. Aggregation layer IP route summarization is more appropriate when there is a shared Enterprise Core, and the data center contains only one Aggregation block with the switches performing a dual role as a collapsed Core and Aggregation layer for the data center.

IP route summarization is configured differently between the OSPF and EIGRP Routing protocols. EIGRP allows summarization anywhere in the topology that the IP addressing will allow. This is configured at the interface level, on every interface that advertises the route to the portion of the network being summarized. The following IOS configuration example illustrates EIGRP route summarization:

interface TenGigabitEthernet1/1
 ip address 10.7.3.2 255.255.255.0
 ip summary-address eigrp 7 10.7.128.0 255.255.192.0 5

OSPF requires an area structure, which is often mapped to chunks of IP address space that can be summarized at a bit boundary. The Area Border Router (ABR) defines the border between OSPF areas and is the logical point for IP route summarization. OSPF syntax in Cisco IOS allows route summarization to be performed directly in the router ospf portion of the configuration:

router ospf 7
 router-id 3.3.3.1
 area 71 range 10.7.128.0 255.255.192.0

When configuring an Area Border Router for OSPF in the data center, the interfaces facing the data center should be placed in a numbered, non-zero assigned OSPF area. This area identifier is used during route summarization. The interfaces pointing towards the Enterprise Core should be in Area 0, which defines the "backbone area" of the OSPF Autonomous System.
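A minimal sketch of how the area assignment and summarization fit together on an ABR is shown below. The network statements are assumptions added for illustration: the area 0 statement reuses the range from the Core layer example, and the area 71 statement assumes that the data center-facing interfaces are addressed out of the summarized block.

```
router ospf 7
 router-id 3.3.3.1
 ! Core-facing interfaces in the backbone area (assumed range)
 network 10.7.0.0 0.0.63.255 area 0
 ! Data center-facing interfaces in the non-zero area (assumed range)
 network 10.7.128.0 0.0.63.255 area 71
 ! Advertise a single summary toward area 0 in place of the component subnets
 area 71 range 10.7.128.0 255.255.192.0
```

The mask 255.255.192.0 corresponds to an 18-bit prefix, so the summary covers 10.7.128.0 through 10.7.191.255. That range includes the 10.7.128.0/24 subnet used for VLAN 128 in the HSRP example.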

Static Routing Requirements

The validated architecture for the Active-Standby Services Chassis model uses statically configured IP routes between the Aggregation layer and the routed-mode Firewall Services Module (FWSM). The FWSM is optimized as a security device, and while it does support OSPF, there are design advantages to the use of static IP routing at this layer. Details of this architecture decision are discussed in the Services Chassis portion of the Active-Standby model. Static IP routes are required for each of the server farm VLANs that have default gateway services on the Services Chassis MSFCs. When using static routes to the FWSM, the Aggregation layer MSFCs only use a dynamic routing protocol to peer with the Core layer.
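As an illustration of the static routing requirement, the sketch below shows one static route on an Aggregation layer MSFC for a single serviced server farm subnet. Both the subnet and the next-hop address are hypothetical documentation addresses; in a real deployment the next hop would be the FWSM address on the VLAN shared with the Aggregation layer.

```
! One route per serviced server farm subnet, pointing at the routed-mode FWSM
! 198.51.100.0/24 (subnet) and 192.0.2.10 (FWSM next hop) are hypothetical
ip route 198.51.100.0 255.255.255.0 192.0.2.10
```

The same pattern would be repeated for each server farm VLAN whose default gateway resides on the Services Chassis MSFCs.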

Non-Serviced Traffic Option

In many data centers, not all traffic destined to the server farm may be required to transit the services modules. The Active-Standby Services Chassis model supports the capability of configuring non-serviced server farm VLANs and IP subnets, which terminate directly on the Aggregation layer. This is a similar approach to the basic hierarchical network design without services. The Aggregation layer MSFCs provide the IP default gateway for these subnets, and the VLANs are not extended across the trunks to the Services Chassis. A given server farm VLAN and IP subnet should not be extended between the non-serviced and Services Chassis domains.

Default Gateway Redundancy

Redundant IP default gateway services may be provided using several available First Hop Redundancy Protocols (FHRPs). These protocols include Hot Standby Router Protocol (HSRP), Gateway Load Balancing Protocol (GLBP), and Virtual Router Redundancy Protocol (VRRP). For validation of the Active-Standby Services Chassis model, HSRP was chosen since it is widely deployed and stable. A single gateway address is desirable per subnet to support better control of traffic paths through the network when implementing services.

HSRP hello and dead timers should be configured to match the routing protocol timers being used in the network; a one-second hello and three-second dead timer are recommended. HSRP supports authentication of peers, which is desirable to prevent an unintended device from participating in the negotiation of active gateway. The priority needs to be set higher on the HSRP peer router that will act as the primary default gateway when all components in the design are up and running normally.

HSRP preemption is a feature that allows an HSRP peer with a higher priority to regain control of the shared IP and MAC address for gateway services. Preemption is desirable during the restoration of a device that had previously failed. However, when a previously failed switch is re-inserted into the network, it may attempt to preempt HSRP active status during the boot process, before all interfaces are ready to forward traffic. For that reason, a preemption delay of 180 seconds is recommended, to ensure that a switch is fully booted and all interfaces are functioning normally before HSRP active status is resumed. An example of HSRP interface configuration is shown below:

interface Vlan128
 ip address 10.7.128.3 255.255.255.0
 ip pim sparse-mode
 ip igmp version 3
 standby 1 ip 10.7.128.1
 standby 1 timers 1 3
 standby 1 priority 20
 standby 1 preempt delay minimum 180
 standby 1 authentication c1sc0

Multicast

See Core Layer for best practices on multicast configuration.

Services Chassis

The following section describes the physical and logical design of the Services Chassis in the Cisco data center architecture.

Overview

Physical Topology

High availability requirements in the data center demand that network services and service paths are deployed redundantly removing any one single point of failure. As a result, the service chassis switches are deployed in pairs dual-homed to the Aggregation layer switches and directly attached to one another. Figure 11 illustrates the physical layout of the Services Chassis design. In this example, each of the services switches is hosting a single FWSM and ACE service module.

The direct connection between the services switches is an 802.1Q trunk providing a direct and dedicated path for service module fault tolerant traffic, including:

•

Service device heartbeats and probes

•

Configuration synchronization

•

Replicated connection state information

Figure 11 Service Chassis Physical Connectivity

The Service Chassis Inter-Switch Link (ISL) does not support user data traffic; it is dedicated to fault tolerant control traffic between service modules. To improve resiliency, the Services Chassis ISL is configured as an EtherChannel, removing a single point of failure.

Note

It is recommended to distribute the connections between aggregation and services switches across physical linecards to improve infrastructure availability.

In this model, the Services Chassis employ 10 Gigabit Ethernet links to the Aggregation layer and in the fault tolerant ISL configuration. The introduction of 10 Gigabit Ethernet to the service chassis is not a strict requirement. However, given the current industry trends of data center and server consolidation, increasing server density and load within a single Aggregation layer block of the data center is expected; therefore, applications and network services should be sized appropriately.

The fault tolerant ISL bandwidth requirement is dependent on the number of service modules within the services switches and the amount of fault tolerant traffic generated by these devices on the link. The FWSM and the ACE do not require the bandwidth of 10 Gigabit Ethernet connections for fault tolerant traffic; in fact, a single Gigabit Ethernet connection would suffice for each. During testing, the available 10 Gigabit Ethernet ports on the Supervisor 720 were leveraged as the ISL between the Services Chassis.

Figure 11 shows the physical connectivity between the Services Chassis and the Aggregation layer switches. There are redundant physical paths present in this design. The following section details the underlying logical topology and the steps necessary to deploy a stable service chassis environment.

Note

Although not tested for this document, it is feasible to deploy a single service chassis switch leveraging redundant supervisors supporting a multitude of redundantly deployed network service devices for a single aggregation block in the data center.

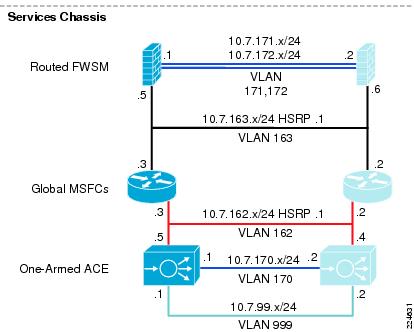

Logical Topology

Figure 12 illustrates the logical topology of the Active-Standby Services Chassis design. As shown in this drawing, the Services Chassis switches employ the following components and features:

•

Layer 2 switching

•

Layer 3 routing

•

The FWSM (routed mode)

•

The ACE (one-arm mode)

Figure 12 Active-Standby Logical Design

Layer 2

From a Layer 2 perspective the Services Chassis is supporting multiple VLANs created either for user data or fault tolerant traffic support. In Figure 12, VLANs 171 and 172 provide fault tolerant connectivity between the active and standby FWSM, while VLAN 170 provides a similar service for the virtual Active-Standby ACE contexts. These VLANs reside on the ISL between Services Chassis.

VLANs 161, 162 and 163 in Figure 12 support user data traffic between the FWSM, the MSFC and the ACE context. These VLANs span the services switches via the Aggregation layer. This is required to meet the Layer 2 adjacency requirements of a redundant service module deployment.

Figure 13 Active-Standby Traffic Flow

Features

Physical Connectivity

The Active-Standby Services Chassis model is based on a physical architecture with dual-homed trunks into the Aggregation layer, and a dedicated ISL between the Services Chassis for fault tolerance traffic. Multiple combinations of physical ports could be used to set up this connectivity. The types of ports that are used can affect the configuration requirements for the modules and Layer 2 switching features to ensure high availability. The Services Chassis models may often be used in migration scenarios where modules previously lived directly in the Aggregation layer switches, so spare equipment on hand may be what is being employed to build out the necessary connections. However, several factors should be considered carefully in establishing this connectivity.

•

Physical Throughput. The total maximum bandwidth capacity of the modules being deployed should be considered when planning the physical connectivity. The maximum throughput of the ACE Modules is on the order of 16 Gbps. The FWSM with software version 3.2 supports a maximum throughput of 5.5 Gbps. The physical bandwidth capacity of each of the dual-homed links should well exceed the capacity of the modules, or at least the expected capacity of the aggregate end servers that are passing their traffic through the modules.

•

Planned and Failover traffic paths. The Services Chassis model is inherently more complex at Layer 2 and Layer 3 than an Integrated Services model with services directly in the Aggregation layer, since there are four physical switches in the service topology instead of only two. Plan for the possibility that in certain failover scenarios, if dynamic routing protocols are used, a portion of the traffic may need to ingress and egress a given Services Chassis more than once. This is due to equal-cost multipath (ECMP) routing and should affect only a portion of the traffic; the use of static routes provides more granular path control and can help eliminate these scenarios. If the Services Chassis model is being adapted to serve in a multi-tier application environment, you may consider services both in front of a Web/Application server and also between the Web/Application server and a Database server. These additional traffic flows should be taken into account in bandwidth allocation.

•

Use of EtherChannel. The use of 10 Gigabit EtherChannel is recommended for the construction of the dual-homed links to the Aggregation layer; 1 Gigabit-based EtherChannel may suffice depending on customer bandwidth requirements and failover paths. The most robust configuration will include ports from two different line cards in the EtherChannel, so that an individual line card failure cannot take down the entire Port Channel interface.

•

Line Card Combinations. High availability and the potential link or device failures in the active path should be considered before completing Layer 2 and service module configuration. Following are some examples of combinations of line cards and considerations for their deployment.

–

Two 8 or 4-port 10-Gigabit cards per chassis. This is the most robust combination. Two or more ports of 10 Gigabit Ethernet should be used to form each EtherChannel connection into each Aggregation layer switch. Each EtherChannel should use ports that are spread across the two different line cards for high availability. Two additional ports of 10 Gigabit Ethernet should be used to build the fault tolerance ISL between Services Chassis.

–

One 8-port 10 Gigabit card per chassis. In this model, EtherChannel connections may still be used from each Services Chassis to each Aggregation layer switch. The dedicated fault tolerance EtherChannel should also be built from ports on the same 8-port 10 Gigabit linecard. An important aspect of this approach to consider is that if the linecard itself fails, it will bring down both data path EtherChannels as well as the fault tolerance link.

–

One 4-port 10 Gigabit card per chassis. In this model, one could choose to build the two dual-homed links each out of single 10-Gigabit ports as opposed to EtherChannel. This would leave two ports open to build the Fault Tolerance ISL. Another possible approach would be to use EtherChannel for the data path links, which would consume all of the 10-Gigabit ports on each line card to connect to both Aggregation layer switches. If two 1-Gigabit ports are available from each of the supervisors, these could then be used to build the fault tolerance Link.

Note

If the data path connections are being deployed using a single linecard that is separate from the fault tolerance links, one must consider and plan for an additional possible failure mode. If the linecard carrying the data path links were to fail, but the separately configured fault tolerance ISL remains up, the standby modules may not realize that the primary modules now have no data path connectivity. Interface monitoring on the service modules may be used to ensure that the primary modules relinquish their active status if this occurs. The fastest failover occurs with the use of interface monitoring in conjunction with firewall and Service Line Card (SVCLC) autostate. The implementation of interface monitoring with autostate also has some side effects which are discussed in the following section.

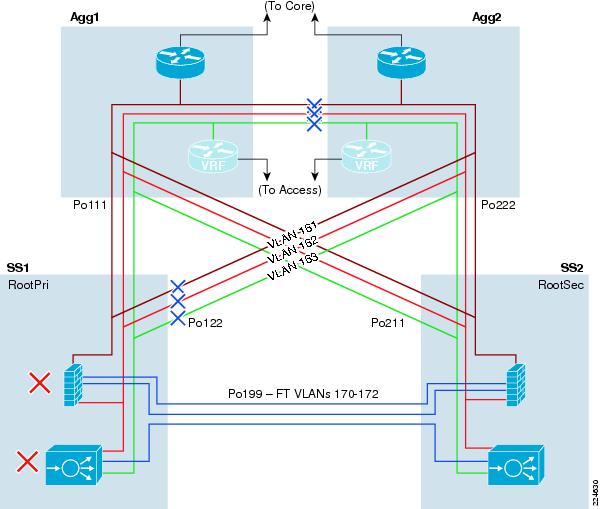

Interface Monitoring and Autostate

Standard autostate is a mechanism which is enabled by default in the Cisco Catalyst 6500 for the integrated Layer 3 routing engine, the Multilayer Switch Feature Card (MSFC). Autostate is used to notify the MSFC when there are no longer any active physical ports in a given VLAN. If a routed VLAN interface for this VLAN has been defined on the MSFC, the routed interface is moved to a "down" state if no physical ports in the switch are forwarding traffic for the VLAN. This mechanism allows the IP route for this VLAN to be removed from the routing table, and no longer advertised to IGP peers, until once again physical ports in the switch become active for that VLAN.

The services modules in the Cisco Catalyst 6500 support similar autostate mechanisms. These mechanisms are not enabled by default. For the FWSM, the keyword firewall is used when enabling autostate; for the ACE, the keyword svclc is used. Examples of the Cisco IOS configuration used to enable these capabilities are shown below.

firewall autostate
firewall multiple-vlan-interfaces
firewall module 4 vlan-group 1,2,3,
firewall vlan-group 1 146
firewall vlan-group 2 171,172
firewall vlan-group 3 161,163
analysis module 2 management-port access-vlan 146
svclc autostate
svclc multiple-vlan-interfaces
svclc module 5 vlan-group 1,52,162,999,
svclc vlan-group 52 170
svclc vlan-group 162 162
svclc vlan-group 999 999

Similar to standard MSFC autostate, firewall and svclc autostate will notify the FWSM and ACE respectively, if all of the physical interfaces carrying a specific VLAN address are down. If the module is using interface monitoring as a criterion for failover of the active status, the autostate will provide much faster failover of the modules than the standard mechanisms integrated into the modules.

The decision to run autostate is tightly tied to the Spanning Tree design of the services region of the network. The number of active interfaces in the Spanning Tree table for a given VLAN is used as the criterion for when the switch sends an autostate up or down notification to a service module. If standard hierarchical network design practices are followed, the Aggregation layer switches are set to be primary and secondary STP root. In this way, the path to the STP root for the primary services switch in the model is the link to Aggregation 1. If this link experiences a failure, one would expect traffic to reroute to Services Chassis 1 through Aggregation 2. However, if Services Chassis 1 sees its path to STP root change, then all ports on the VLAN briefly stop forwarding traffic while the Spanning Tree is recalculated. This brief outage also triggers an Autostate Down message from the Services Chassis 1 Layer 2 engine to the FWSM, causing the active status to transition to the secondary FWSM, and then back. This flapping of active status on the FWSM can significantly increase the reconvergence time for the failure of the Aggregation 1 to Services Chassis 1 link, or for the failure of Aggregation 1 itself.

To work around this issue, one may consider moving the STP root of only the service-specific VLANs from the Aggregation switches to the Services Chassis. This step should not be taken for the server farm VLANs themselves. Specific benefits and implications of this design decision are discussed in the "Services Chassis Spanning Tree Specifics" section.
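As a minimal sketch of this workaround, the STP priority for the services region VLANs could be lowered on the Services Chassis only. The VLAN range 161-163 matches the services region used in this guide. The priority values are illustrative assumptions, and the sketch assumes the Aggregation switches keep the default priority for these VLANs.

```
! Services Chassis 1: intended primary root for the services region VLANs only
! (priority value is an illustrative assumption)
spanning-tree vlan 161-163 priority 24576

! Services Chassis 2: intended secondary root for the same VLANs
! (priority value is an illustrative assumption)
spanning-tree vlan 161-163 priority 28672
```

The server farm VLANs are deliberately left out of the range, so their root placement on the Aggregation switches is unchanged. The resulting root bridge for each VLAN can be checked with show spanning-tree vlan 161.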

Layer 2

The Services Chassis leverage common networking features providing Layer 2 connectivity to the services modules allowing traffic to flow through the active modules. Best practices for configuration of these features have already been covered under the core and Aggregation sections of the Active-Standby Services Chassis model description. These features include:

•

PortChannel/EtherChannel with LACP and adaptive hash algorithm

•

Spanning Tree Loop Guard (enabled on dual-homed links of Services Chassis)

•

Rapid Per-VLAN Spanning Tree (RPVST)

Services Chassis Spanning Tree Specifics

The classical hierarchical network design model, with the Layer 2 / Layer 3 boundary at the Aggregation layer, dictates that STP priority be manipulated on the aggregation switches to force the location of a primary and secondary STP root switch. This creates a consistent, deterministic Spanning Tree root bridge location for each of the access switches in the Looped Triangle and Looped Square topologies that are discussed in the "Access Layer" section. When analyzing STP configuration for the services region, consider again the logical layout of the Active-Standby Services Chassis model as shown in Figure 14.

Figure 14 Active-Standby Services Chassis Logical Model

The VLANs between the service modules and the Aggregation layer, as well as the VLANs between layers of services, must all be extended between the two Services Chassis across the dual-homed physical links to the Aggregation. The set of VLANs defining the services region as shown in Figure 14 includes VLANs 161, 162, and 163. This VLAN extension provides the contiguous IP subnetting and failover paths required for high availability of the solution. If the classical hierarchical network design approach is taken when connecting the Active-Standby Services Chassis model, the resulting Spanning Trees for each of the VLANs in question will be similar to the illustration in Figure 15.

Figure 15 Services Region traditional Spanning Tree

As discussed earlier in the section on autostate and interface monitoring, there may be design factors that cause a network architect to consider moving the Spanning Tree root for the services region VLANs to the Services Chassis themselves. If this is done, the resulting Spanning Trees for these VLANs would look similar to Figure 16.

Figure 16 Services Region Altered STP Root

There are pros and cons to this design decision. Moving the Spanning Tree root for the services region VLANs will work around the issue of path to STP root changing and causing Autostate notification and Services module flapping if interface monitoring is in use. As shown in Figure 16, the movement of the Spanning Tree root also opens up the direct Layer 2 forwarding path between Agg2 and SS1. This provides for a cleaner flow of traffic through the network in an "all normal" state with all modules, switches and links in the topology up and running.

Note

SS2 in Figure 16 effectively has two equal-cost paths to reach the STP root switch, SS1. These are the paths through Agg1 and Agg2. When equal-cost paths exist in STP, the tie is broken based on the bridge ID, which is the MAC address of the switch. Ideally, the path through Agg1 should be open and the path through Agg2 should be blocked when using static routes, since the HSRP primary address that the static route points to will reside on Agg1. If STP does not converge that way because the MAC address of Agg2 is lower, increase the STP cost on the PortChannel connection to Agg2 to force the Spanning Tree to converge as shown in Figure 16. Use the spanning-tree cost command and increment the cost from the default cost of 1 to the value of 2.
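The cost adjustment described in this note might look like the following sketch on SS2. The PortChannel number is a hypothetical placeholder, not taken from the validated configuration. The cost value of 2 is the one given above.

```
! Services Chassis 2 (SS2): PortChannel toward Agg2
! Port-channel2 is a hypothetical interface number
interface Port-channel2
 ! Raise the cost above the default so the path through Agg1 is preferred
 spanning-tree cost 2
```

After the change, show spanning-tree vlan 161 on SS2 can be used to confirm which PortChannel is the root port and which is blocking.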

The downside of moving the Spanning Tree root to the Services Chassis is that, if OSPF is being used on the FWSM, it can cause suboptimal failover paths for a portion of the traffic in certain failure cases: traffic must ingress and egress the primary Services Chassis twice. If Services Chassis physical connectivity has been provisioned with adequate capacity as recommended, this may not be an issue for a backup path. One of the initial goals of this Services Chassis architecture analysis was to optimize traffic paths for the normal state of the network first, before focusing on failover cases. Also, if static routes are in use on a routed FWSM, as in the validation of this model, these suboptimal paths will not occur. Static routes prevent the FWSM from using OSPF Equal Cost Multi Path (ECMP) to balance traffic across both peering routers, and instead direct the traffic only to the active HSRP default gateway.

Note

Using static routes pointing to an HSRP default gateway address is not supported for multicast traffic through the FWSM in software version 3.2 as used for validating this architecture. If IP multicast is a requirement, running OSPF on the FWSM may be used as an alternate approach.

Layer 3

The Services Chassis also leverage common networking features providing Layer 3 routed connectivity to the services modules allowing traffic to flow through the Active modules. Best practices for configuration of these features have already been covered under the Core and Aggregation sections of the Active-Standby Services Chassis model description. These features include:

•

Hello and dead/hold timer adjustments

•

Neighbor authentication

•

Passive interface default

•

Hardset router ID

•

No Auto-Summary (EIGRP)

•

Auto-Cost Reference Bandwidth (OSPF)

•

Throttle Timer adjustment (OSPF)

•

Multicast best practices

•

HSRP Optimization

Note

The Layer 3 configurations in the Services Chassis exist primarily to segment the ACE VIP VLAN away from the true server farm VLANs. This also provides for local Route Health Injection of VIP host routes from the ACE to control SLB traffic flows, if dynamic routing on the FWSM is used. This approach provides additional security since the application clients need not know the true address space of the real servers. The non-SLB server farm subnets also leverage the Services Chassis MSFC as default gateway for consistency, and to provide flexibility to insert additional services into the service chain at a later time without requiring server farm default gateway changes. If ACE services are not required in the Services Chassis, the MSFC Layer 3 configuration may optionally be eliminated, and server farm VLANs may be terminated with their default gateway directly on the FWSM.

Specific Layer 3 optimizations are required for OSPF or static routing to be compatible with the configuration on the FWSM. Additional HSRP tracking features are required for aligning default gateway services with the active ACE modules in failover scenarios. Detailed configuration information for these requirements is provided in the FWSM- and ACE-specific sections that follow. Below is an example configuration of the Services Chassis MSFC with an OSPF configuration in place for peering with the FWSM, and the necessary redistribution statements and route-maps to support the server VLANs and RHI-injected statics.

router ospf 7
 router-id 4.4.4.1
 log-adjacency-changes
 auto-cost reference-bandwidth 10000
 area 71 authentication message-digest
 area 71 nssa
 timers throttle spf 10 100 5000
 timers throttle lsa all 10 100 5000
 redistribute connected metric 10 metric-type 1 subnets route-map OSPF
 redistribute static subnets route-map RHI-MAP
 passive-interface default
 no passive-interface Vlan163
 network 10.7.163.0 0.0.0.255 area 71
!
access-list 10 permit 10.7.162.100
access-list 100 deny ip 10.7.162.0 0.0.0.255 any
access-list 100 deny ip 172.0.0.0 0.255.255.255 any
access-list 100 permit ip any any
route-map OSPF permit 10
 match ip address 100
!
route-map RHI-MAP permit 10
 match ip address 10
 set metric-type type-1
!

FWSM

Overview

In the Active-Standby Services Chassis model, the FWSM is configured as a single context in routed mode, employing an active/standby fault tolerant configuration. In an active/standby configuration, only one of the firewall modules passes traffic; the other remains in a STANDBY READY state. As shown in Figure 17, the FWSM is a routed hop in the data center's logical topology. The firewall is positioned between the MSFCs at the Aggregation layer and the MSFCs on the local Services Chassis switches. The FWSM employs two VLAN interfaces for user traffic: the "north" public interface (VLAN 161) and the "south" private interface (VLAN 163). Secure access to the data center server farm is controlled via the FWSM policies associated with these interfaces, allowing network administrators to reliably apply firewall-based security via IP routing.
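As an illustration only, the two user-traffic interfaces on a routed, single-context FWSM could be defined along the following lines. The interface names (outside, inside), the security levels, and all IP addresses are assumptions made for this sketch and are not taken from the validated configuration. Only the VLAN numbers and the 10.7.163.0/24 subnet appear elsewhere in this guide.

```
! FWSM, routed mode, single context
! "north" public interface toward the Aggregation layer MSFCs
interface Vlan161
 nameif outside
 security-level 0
 ! Active and standby addresses are hypothetical
 ip address 10.7.161.5 255.255.255.0 standby 10.7.161.6
!
! "south" private interface toward the Services Chassis MSFCs
interface Vlan163
 nameif inside
 security-level 100
 ! Active and standby addresses are hypothetical
 ip address 10.7.163.5 255.255.255.0 standby 10.7.163.6
```

The standby address on each interface gives the STANDBY READY unit its own address on the same subnet, which the active unit takes over together with the active address on failover.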

Note

Although not validated in this model, the FWSM may also be positioned as the default gateway for the servers in the server farm. If the FWSM is leveraged as the default gateway for a server subnet, any server-to-server traffic between subnets would be firewalled as well. For example, n-tier applications using application-to-database server connectivity could be secured.

Note

Introducing additional service requirements may cause traffic to traverse the FWSM, or other service modules such as the ACE, multiple times, which may impact the overall capacity of the solution.

Figure 17 Active-Standby Combined Layer 2 and Layer 3 Topology

From its earliest release, the FWSM has supported static routes and dynamic routing protocols such as OSPF and RIP. In the FWSM 4.0 Release, EIGRP has been added to the list of routing features supported on the platform. The use of dynamic routing can reduce configuration complexity. However, this dynamic intelligence consumes firewall resources, because the firewall must also act as a router.

During testing, convergence times were decreased by removing the OSPF process and making use of static routes to direct traffic across the firewall context. Removing the delay of neighbor establishment and routing table loads from the failover process expedited convergence. Although static routes may add some management overhead, faster network convergence and the inherent security of predefined routes within the data center may outweigh that burden. Use of static routing also eliminates the Equal Cost Multi Path (ECMP) feature of dynamic routing. The elimination of ECMP for subnets carrying service traffic can help provide more granular control over traffic paths between the Aggregation and Services Chassis.
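A minimal sketch of the static routing approach on the FWSM is shown below. It assumes interface names outside (VLAN 161) and inside (VLAN 163), and it uses placeholder next-hop addresses standing in for the HSRP virtual addresses on the Aggregation and Services Chassis MSFCs. None of these names or next-hop addresses are taken from the validated configuration.

```
! FWSM, routed mode: static routes in place of an OSPF process
! Default route toward the active HSRP gateway at the Aggregation layer
! (10.7.161.1 is a hypothetical HSRP virtual address)
route outside 0.0.0.0 0.0.0.0 10.7.161.1 1

! Route for a subnet behind the Services Chassis MSFC
! (10.7.163.1 is a hypothetical HSRP virtual address on VLAN 163)
route inside 10.7.162.0 255.255.255.0 10.7.163.1 1
```

Because each destination has a single next hop, the FWSM has no equal-cost alternatives to balance across, which is the deterministic path behavior described above.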

Note

FWSM does not support dynamic routing in multiple context mode.

Note

As stated in the FWSM 3.2 configuration guide, Cisco recommends using the advanced routing capabilities of the upstream and downstream routers instead of relying on the FWSM for extensive routing needs.

As previously mentioned, the FWSM is in an active/standby configuration using two fault tolerant VLAN interfaces. The failover interface, in this example VLAN 172, is mandatory to provide a redundant stateless firewall deployment. For stateful failover, dedicate a VLAN interface to replicate state information to the standby unit, in this example VLAN 171. Each of these VLANs resides on the ISL between services switches and is not present at the Aggregation layer.

Catalyst 6500 IOS Implementation

The FWSM physically resides in the Cisco Catalyst 6500 switching platform. To leverage this integrated service the following tasks must be completed:

•

Create the VLANs to support traffic ingress and egress from the FWSM, and fault tolerance communication between the modules.

•

Allow the FWSM to leverage the autostate messaging capabilities of the Catalyst 6500 supervisor engine. Autostate reporting by the supervisor indicates the status of the physical interfaces of the VLANs associated with the FWSM on the switch, permitting Rapid Link Failure Detection by the FWSM. Rapid Link Failure Detection bypasses a number of interface monitoring tests, which expedites the failover process.

Note

The use of autostate and interface monitoring is optional if data and fault tolerant VLANs share the same physical interfaces. See the "Physical Connectivity" section.

•

Allow multiple VLAN interfaces (SVIs) to be connected with the FWSM if more than one VLAN with an SVI is leveraged by the FWSM. In this example, VLAN 146 (the management VLAN) and VLAN 163 each have an SVI on the Catalyst 6500; therefore, the multiple VLAN interface configuration is necessary for the firewall module.

•

Assign the VLANs to firewall VLAN groups

•

Assign the VLAN groups to the associated firewall module

The following example highlights the tasks defined above:

vlan 146
vlan 161
vlan 163
vlan 171
vlan 172
firewall autostate
firewall multiple-vlan-interfaces
firewall module 4 vlan-group 1,2,3,
firewall vlan-group 1 146
firewall vlan-group 2 171,172
firewall vlan-group 3 161,163

To verify the configuration of the FWSM from the perspective of the Catalyst switch, issue the following commands: show firewall module and show firewall vlan-group. In our example, the FWSM in slot 4 of the Services Chassis has three VLAN group assignments that are defined as follows:

dca-ss1#show firewall module
Module Vlan-groups
------ -----------
04     1,2,3
dca-ss1#show firewall vlan-group
Display vlan-groups created by both ACE module and FWSM
Group    Created by      vlans
-----    ----------      -----
    1          FWSM      146
    2          FWSM      171-172
    3          FWSM      161,163
   52           ACE      170
  162           ACE      162
  999           ACE      999

Note

The show firewall vlan-group command is equivalent to the show svclc vlan-group command; both detail the VLAN assignments of the ACE and FWSM service modules present in the chassis.

Fault Tolerant Implementation

The following section details the fault tolerant configuration of an active/standby pair of redundant stateful firewall modules.

•

Define the role of the primary FWSM unit and the corresponding secondary unit. This must be done on each FWSM prior to enabling failover.

•