Cisco Virtual Multi-Tenant Data Center Implementation Guide, Version 2.1

Updated: October 18, 2011

Chapter: Implementation Overview

Implementation Overview

This chapter describes the Cisco VMDC 2.1 architecture implementation. The following major sections are discussed:

• Infrastructure Implementation

• Additional Technology Implementation

• Additional Product Implementation

As a reference model, Cisco VMDC 2.1 is both flexible and extensible, and may need to be extended or modified to meet the requirements of a specific enterprise data center network.

Functional Components

The Cisco VMDC 2.1 data center network design is based on a proven layered approach, which has been tested and improved over the past several years in some of the largest data center implementations in the world. The layered approach is the basic foundation of the data center design that seeks to improve scalability, performance, flexibility, resiliency, and maintenance.

The four layers covered in this implementation guide are:

• Aggregation Layer

• Services Layer

• Access Layer/Virtual Access Layer

• Compute Layer

This guide also includes some sample implementation details for the following additional layers:

• Core Layer

• Management Layer

• Storage Layer

In addition to the layered datacenter design, the Cisco VMDC 2.1 network implementation is done with the following key operational parameters in mind.

High Availability through:

• Device redundancy

• Link redundancy

• Path redundancy

Performance and Scalability through:

• N×10 Gigabit Ethernet Switching Infrastructure

• vPC (Virtual Port Channels) and MEC (Multi-Chassis EtherChannels)

• Fast convergence

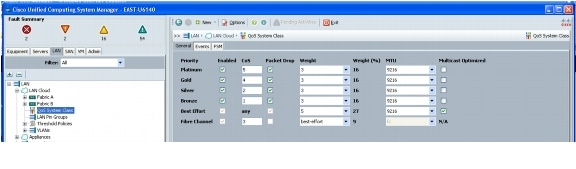

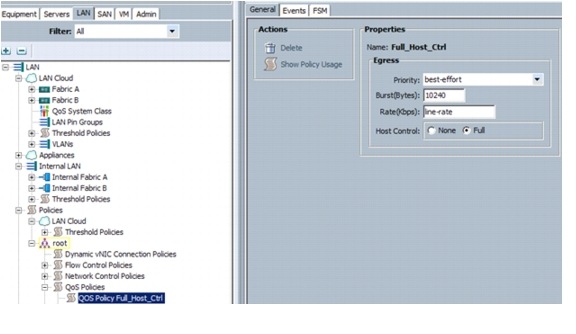

Service Assurance through:

• QoS classification and marking

• Traffic flow matching

• Bandwidth guarantees

• Rate limits

Tenant Separation at each network layer:

• Core Layer: Virtual Routing and Forwarding (VRF)

• Aggregation Layer: VRF, VLAN

• Services Layer: VRF, VLAN, and Virtual Device Contexts

• Access Layer: VLAN

• Virtual Access Layer: VLAN

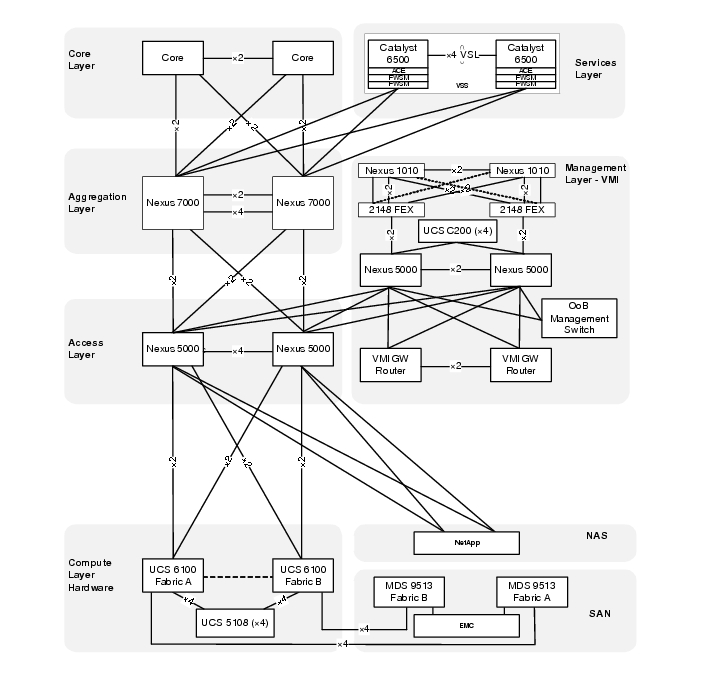

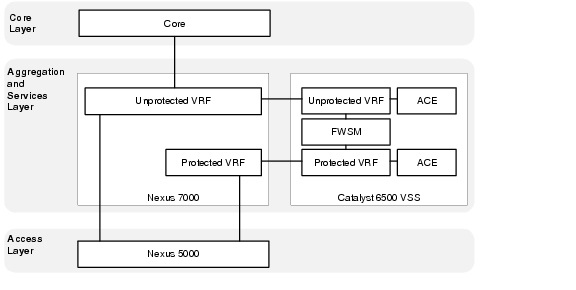

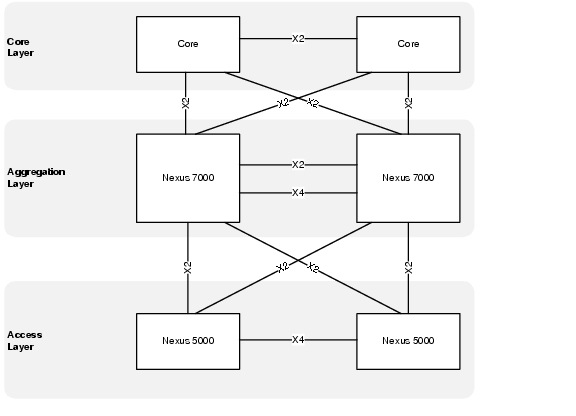

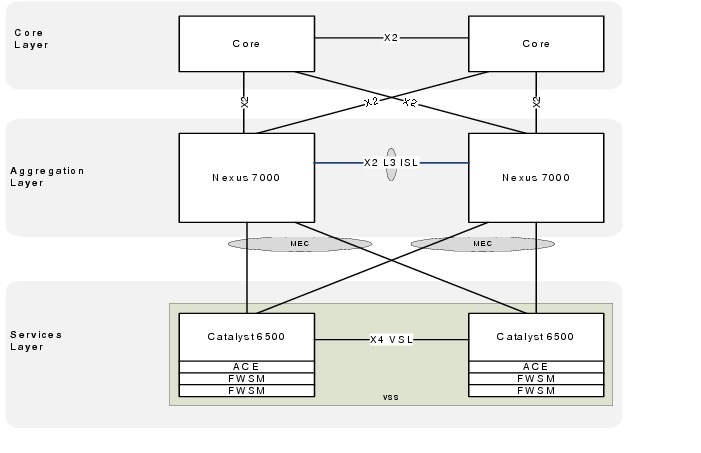

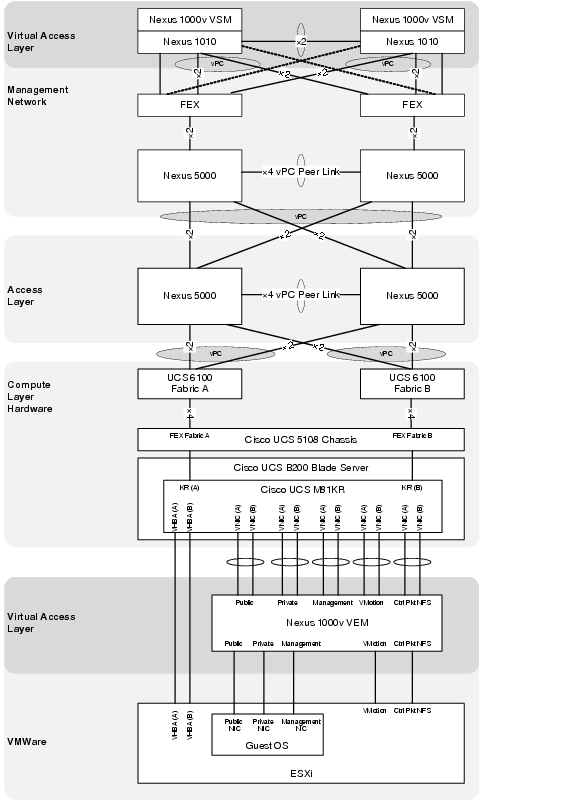

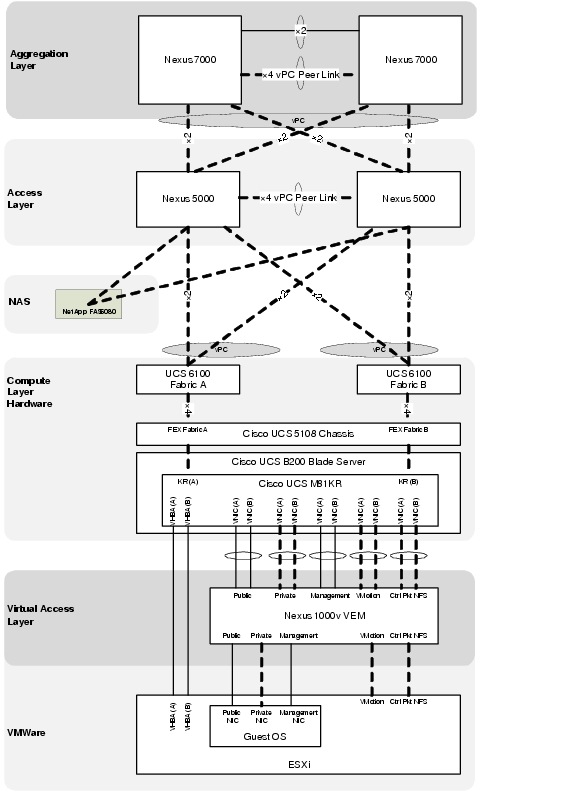

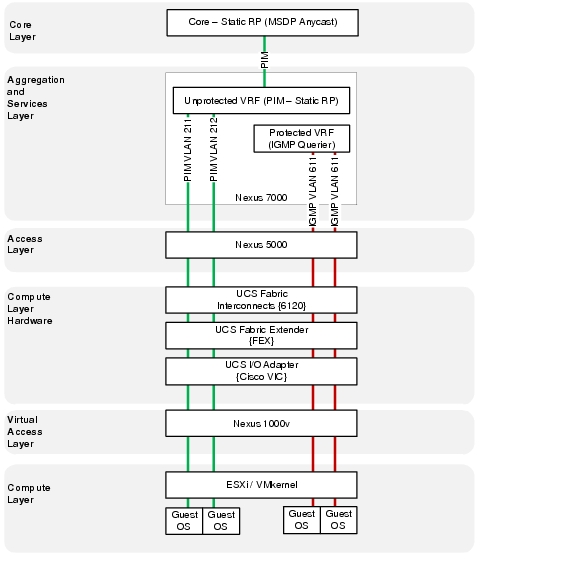

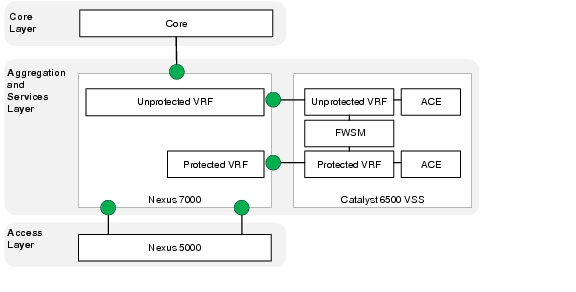

Physical Topology

The Cisco VMDC 2.1 network infrastructure deployment follows the standard Cisco three-tier hierarchical architecture model (core, aggregation, access). Figure 2-1 illustrates the overall Cisco VMDC 2.1 physical topology.

Figure 2-1 Cisco VMDC 2.1 Physical Topology

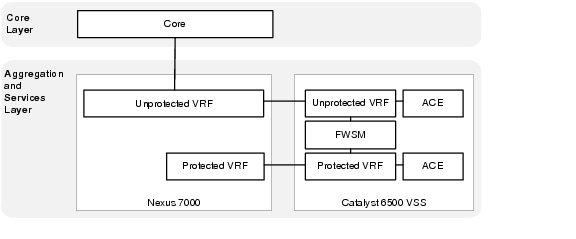

Logical Topology

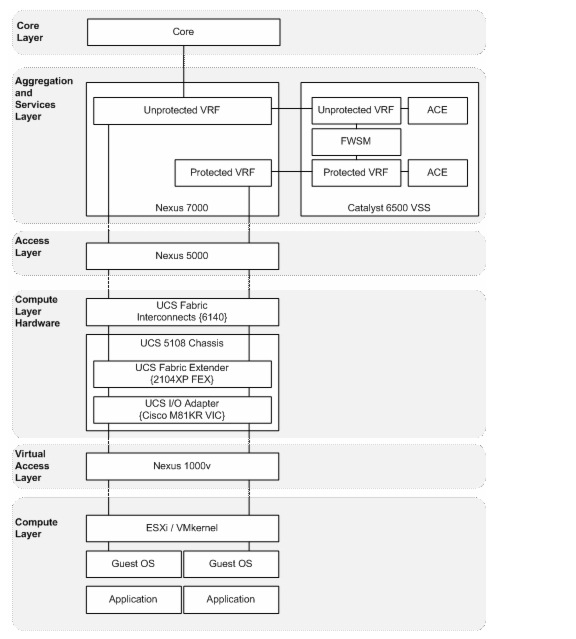

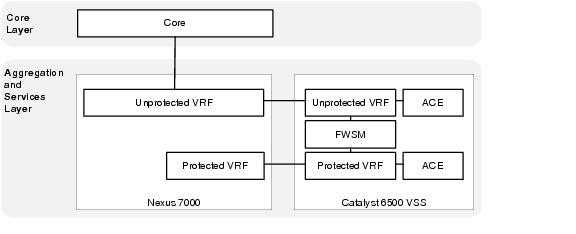

Logical topologies are bound to network protocols and describe how data is moved across the network. The Cisco VMDC 2.1 basic tenant concept is modeled after a simple datacenter structure containing a public/common server farm and a secure/private server farm.

Figure 2-2 represents the Cisco VMDC 2.1 logical tenant topology that is created on the physical topology in Figure 2-1.

Figure 2-2 Cisco VMDC 2.1 Logical Topology

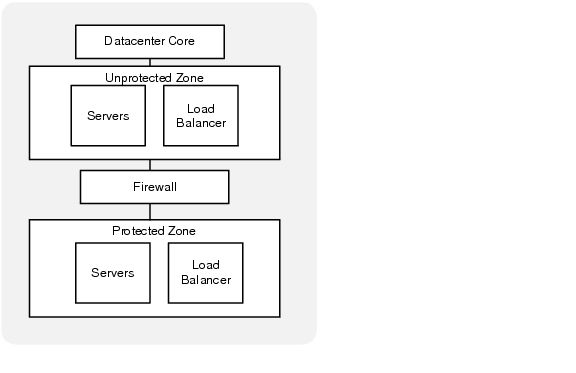

Tenant Model

The Cisco VMDC 2.1 tenant concept is modeled on a basic aggregation block containing both a common server farm and a secure server farm. Each tenant has an unprotected (public/common) zone and a protected (private/secure) zone.

Figure 2-3 depicts a logical view of a single tenant.

Figure 2-3 Single Tenant Logical Diagram

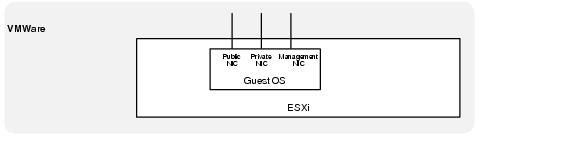

Each Server Virtual Machine (VM) deployed within a tenant protected or unprotected zone is assumed to have three network interfaces (NICs).

• Front End - These interfaces are used for external access (HTTP, HTTPS, etc.) to the server or cluster, which can be accessed by application servers or users that are submitting jobs or retrieving job results from the cluster.

• Back End - These interfaces provide inter-compute node communications (clustering) and potentially a back-end high-speed storage path (NFS). Typical requirements include low latency and high bandwidth and may also include jumbo frame support.

• Management - This interface provides out-of-band (OoB) management for the cloud administrative tools or remote access for server administration.

Figure 2-4 Virtual interfaces per VM

VLAN Allocation

In Cisco VMDC 2.1, the tenant VLAN scheme is flexible for different tenants or different tenant zones. The goal of the design was to allocate VLANs for different purposes spanning different devices or layers in the architecture. The Cisco VMDC 2.1 model tenant VLAN allocation was done as follows:

• 3 front-end (Public) VLANs

• 1 or 2 back-end (Private) VLANs

• 1 VM management VLAN

Note: Throughout this document, example configurations reference the VLANs in Table 2-1.
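The per-tenant allocation above (three front-end VLANs, one or two back-end VLANs, and one VM management VLAN) can be sketched as a small allocator. This is an illustrative sketch only: the function name, the consecutive numbering, and the starting VLAN ID of 201 are assumptions, not values taken from Table 2-1.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TenantVlans:
    """VLAN IDs assigned to one tenant, grouped by purpose."""
    front_end: List[int]
    back_end: List[int]
    management: int


def allocate_tenant_vlans(base: int, back_end_count: int = 1) -> TenantVlans:
    """Carve consecutive VLAN IDs for one tenant starting at `base`.

    `base` is a hypothetical starting VLAN ID, not a value from Table 2-1.
    """
    if back_end_count not in (1, 2):
        raise ValueError("the model uses 1 or 2 back-end VLANs per tenant")
    if base < 1:
        raise ValueError("VLAN IDs start at 1")
    front_end = [base, base + 1, base + 2]
    back_end = [base + 3 + i for i in range(back_end_count)]
    management = base + 3 + back_end_count
    if management > 4094:
        raise ValueError("VLAN ID exceeds 4094")
    return TenantVlans(front_end, back_end, management)


# Hypothetical tenant with two back-end VLANs.
tenant = allocate_tenant_vlans(base=201, back_end_count=2)
print(tenant)
```

A real deployment may number VLANs non-consecutively or share a management VLAN across tenants; the sketch only shows the count of VLANs per purpose.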

IP Addressing

In Cisco VMDC 2.1, the tenant IP addressing scheme is flexible and can support public or private addressing. The VRF segmentation also allows overlapping IP spaces for different tenants, given the assumption that complete path isolation is provided end to end. Table 2-2 is an example of tenant IP address modeling done in a private address block. If a tenant is assigned a contiguous block of subnets within the data center, the routing may be summarized when it is advertised to the rest of the network. In addition, only a small number of static routes are needed to provide reachability between a tenant's unprotected and protected zones.

Note: Throughout this document, example configurations reference the addresses in Table 2-2.
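To illustrate why a contiguous block of tenant subnets can be summarized, the following sketch uses the Python standard-library ipaddress module. The subnets are hypothetical and are not the addresses in Table 2-2.

```python
import ipaddress

# Hypothetical tenant subnets (not taken from Table 2-2): four contiguous /24s.
tenant_subnets = [
    ipaddress.ip_network("10.1.0.0/24"),
    ipaddress.ip_network("10.1.1.0/24"),
    ipaddress.ip_network("10.1.2.0/24"),
    ipaddress.ip_network("10.1.3.0/24"),
]

# collapse_addresses merges adjacent networks into the fewest covering prefixes.
summary = list(ipaddress.collapse_addresses(tenant_subnets))
print(summary)

# A non-contiguous subnet cannot be folded into the same summary route.
scattered = tenant_subnets + [ipaddress.ip_network("10.1.8.0/24")]
scattered_summary = list(ipaddress.collapse_addresses(scattered))
print(scattered_summary)
```

The four contiguous /24 subnets collapse into a single /22, while the scattered case still needs two advertisements.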

Virtual Routing and Forwarding (VRF)

In Cisco VMDC 2.1, Layer 3 separation between tenants and between tenant zones is accomplished using Virtual Routing and Forwarding (VRF). VRF instances allow multiple routing configurations in a single Layer 3 switch using separate virtual routing tables. By default, communication between VRF instances is not allowed to protect the privacy of each tenant zone.

Each tenant is assigned two VRF instances: one that forms the unprotected (public) zone and another that forms the protected (private) zone. Routing information is carried across all the hops in each tenant's Layer 3 domain, and each tenant's unprotected and protected VRF is mapped to one or more VLANs in a Layer 2 domain.
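The separation that VRF instances provide can be pictured as one routing table per VRF, with every lookup confined to a single table. The sketch below is a conceptual model only; the VRF names, prefixes, and interface names are hypothetical.

```python
import ipaddress
from typing import Dict, List, Optional, Tuple

# One routing table per VRF; VRF names and routes are hypothetical.
Route = Tuple[ipaddress.IPv4Network, str]
vrf_tables: Dict[str, List[Route]] = {
    "tenant1-unprotected": [(ipaddress.ip_network("10.1.1.0/24"), "Vlan201")],
    "tenant1-protected": [(ipaddress.ip_network("10.1.4.0/24"), "Vlan204")],
    # A second tenant reuses the same prefix without conflict.
    "tenant2-unprotected": [(ipaddress.ip_network("10.1.1.0/24"), "Vlan301")],
}


def lookup(vrf: str, destination: str) -> Optional[str]:
    """Longest-prefix match restricted to the routing table of one VRF."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, out) for net, out in vrf_tables[vrf] if addr in net]
    if not matches:
        return None  # no route: tables of other VRFs are never consulted
    return max(matches, key=lambda m: m[0].prefixlen)[1]


print(lookup("tenant1-unprotected", "10.1.1.10"))
print(lookup("tenant2-unprotected", "10.1.1.10"))
print(lookup("tenant1-unprotected", "10.1.4.10"))
```

The same destination resolves differently in each tenant's VRF, and a destination in the protected zone is unreachable from the unprotected VRF unless a route is explicitly added.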

Figure 2-5 depicts a completed logical topology showing the unprotected and protected VRFs extending into the services layer and the server VLANs as they extend to the access layer and then continue throughout the rest of the Layer 2 domain.

Figure 2-5 Cisco VMDC 2.1 Logical Topology

Routing

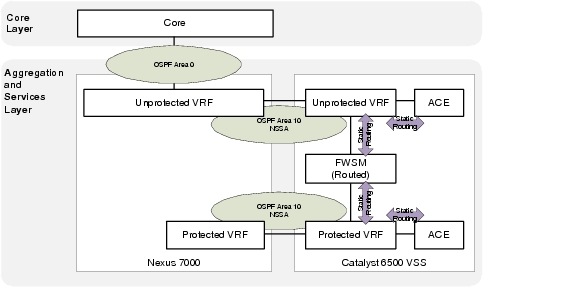

In Cisco VMDC 2.1, dynamic routing for each tenant is accomplished using OSPF as the interior gateway protocol. The remainder of the routing information is provided via static routes which are redistributed into OSPF at the Autonomous System Border Router (ASBR).

Not-so-stubby areas (NSSAs) are an extension of OSPF stub areas. Stub areas prevent the flooding of external link-state advertisements (LSAs) into the area, relying instead on default routes to external destinations. NSSAs are more flexible than stub areas in that an NSSA can import external routes into the OSPF routing domain.
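The difference between area types can be summarized in a small lookup table. This sketch encodes standard OSPF behavior in simplified form; the class and function names are illustrative, and the "totally" variants use the common Cisco terminology for areas that also suppress inter-area summaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AreaType:
    accepts_type5_external: bool        # AS-external LSAs are flooded into the area
    originates_type7: bool              # area can import external routes as type 7 LSAs
    accepts_inter_area_summaries: bool  # type 3 summaries other than a default route


AREA_TYPES = {
    "normal": AreaType(True, False, True),
    "stub": AreaType(False, False, True),
    "totally stubby": AreaType(False, False, False),
    "nssa": AreaType(False, True, True),
    "totally nssa": AreaType(False, True, False),
}


def can_redistribute_static(area: str) -> bool:
    """An ASBR inside the area can inject external (for example, static)
    routes only if the area carries type 5 or type 7 LSAs."""
    kind = AREA_TYPES[area]
    return kind.accepts_type5_external or kind.originates_type7


for name in AREA_TYPES:
    print(f"{name}: redistribution possible = {can_redistribute_static(name)}")
```

This is why an NSSA suits the tenant design: static routes can still be redistributed into OSPF at the ASBR, which a plain stub area would not permit.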

If the FWSM context is deployed in routed mode (recommended as the most flexible option), the unprotected zone becomes a true NSSA with a connection to Area 0. The protected zone's OSPF area behaves almost like a totally NSSA, because it has no connection to Area 0 and uses a default static route to exit to the unprotected zone. In Figure 2-6, two separate routing domains are connected via static routes on the FWSM.

Figure 2-6 Tenant Routing with FWSM in Routed Mode

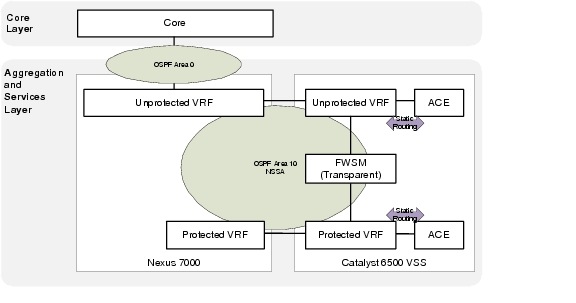

If the FWSM context is deployed in transparent mode, the unprotected and protected interfaces form an OSPF adjacency. The OSPF NSSA is extended through the FWSM, which forms a single routing domain. In this case, all routing information will be populated in both tenant zones.

Figure 2-7 Tenant Routing with FWSM in Transparent Mode

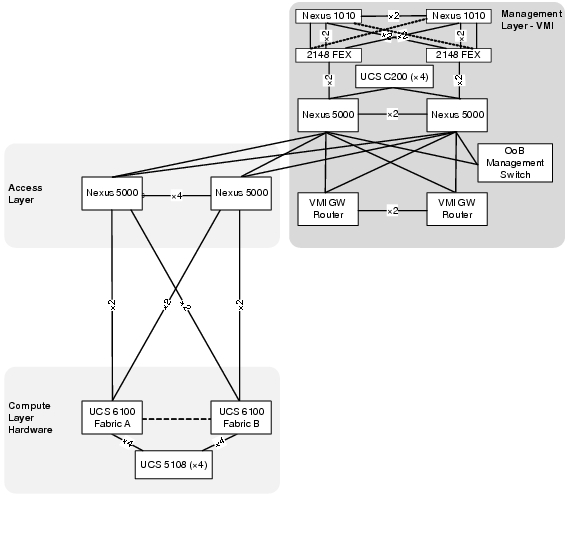

Management Implementation

The Cisco VMDC 2.1 solution does not focus on a specific management network architecture. The Virtual Management Infrastructure (VMI) described in this section illustrates an example deployment of a management network and how it integrates into the overall VMDC architecture.

Virtual Management Infrastructure (VMI)

Virtual Management Infrastructure (VMI) is a network that hosts additional infrastructure that employs a variety of tools, applications, and additional devices to assist human network managers in monitoring and maintaining the overall Cisco VMDC 2.1 architecture.

The software applications may include, but are not limited to, the following list:

• Unified Computing System Manager (UCSM)

• Cisco Fabric Manager (FM)

• VMware vSphere

• BMC orchestration tools

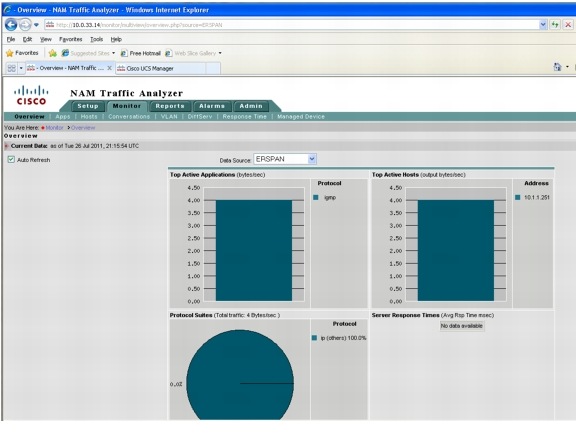

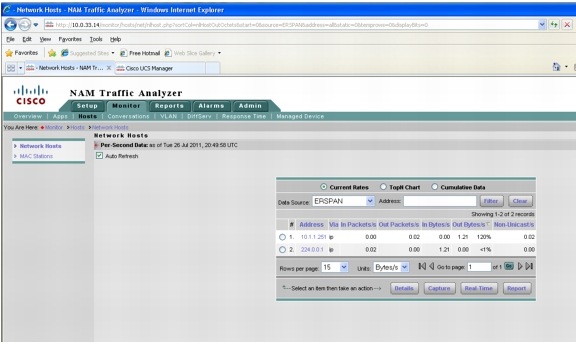

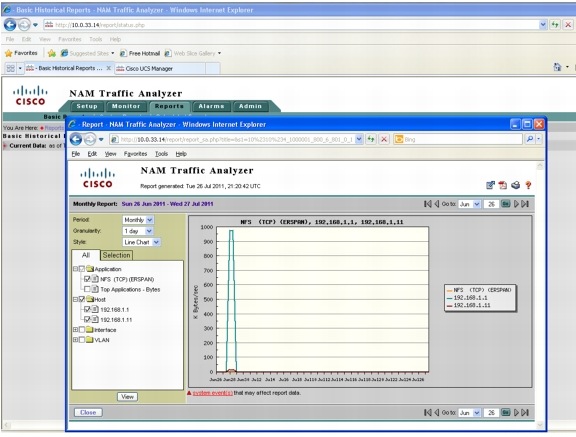

Additional hardware, such as the Nexus 1010 and Network Analysis Modules (NAM), would be deployed in VMI.

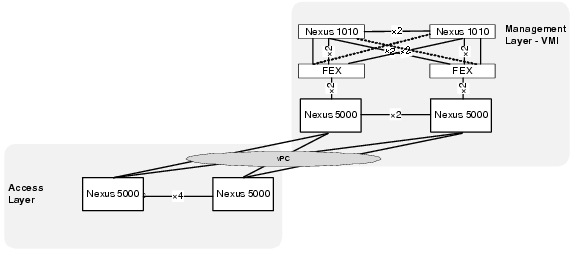

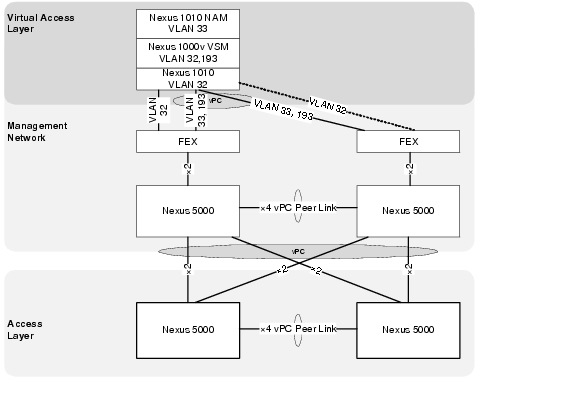

Physical Topology

All VMDC infrastructure devices use the local management VRF and mgmt 0 interface to provide Out-of-Band (OoB) management connection to the VMI OoB management switch.

VMI uses a separate distribution layer (gateway routers) to provide routing functionality between VMI VLANs and connectivity to any internal or external networks.

VMI also employs an additional pair of Nexus 5000 switches to serve as a dedicated access layer for the compute resources contained within VMI. The VMI access switches are directly connected to the Cisco VMDC 2.1 infrastructure via the VMDC Nexus 5000 access switches.

The required management VLANs are extended from VMI through the access layer and into the virtual access and compute layers providing Layer 2 adjacent connectivity to all ESXi hosts as well as all server virtual machines residing on UCS.

Figure 2-8 Cisco VMI Physical Topology

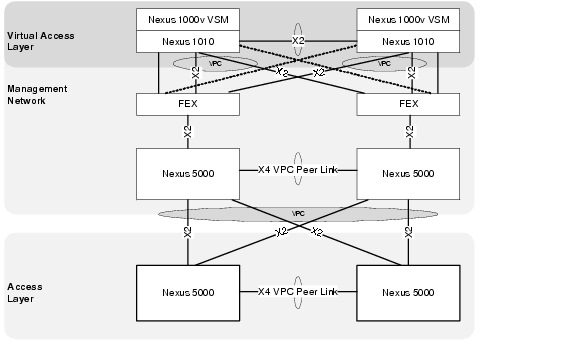

vPC Implementation

A virtual port channel (vPC) allows links that are physically connected to two different Cisco Nexus 5000 Series devices to appear as a single port channel to a third device. The third device can be a switch, server, or any other networking device that supports port channels. A vPC provides Layer 2 multipathing, which allows you to create redundancy and increase bisectional bandwidth by enabling multiple parallel paths between nodes and load balancing traffic across them.

Virtual PortChannels (vPCs) are configured at both the VMI aggregation and VMDC access layers to enable full, cross-sectional bandwidth utilization (40 Gbps) as depicted in Figure 2-9.

Figure 2-9 vPCs connecting VMDC to VMI

The vPC domain includes both vPC peer devices, the vPC peer keepalive link, the vPC peer link, and all the PortChannels in the vPC domain connected to the downstream device.

Example 2-1 VMI vPC Configurations on VMI Nexus 5000 A

vlan 14

name 10.0.14.0_VMI-VM

vlan 32

name VMDC_Device_Mgmt

vlan 33

name EAST-FWSM-Contexts

vlan 34

name EAST-ACE-Contexts

vlan 40

name 10.0.40.0_EAST-ACE1

vlan 41

name 10.0.41.0_EAST-ACE2

vlan 42

name 10.0.42.0_EAST-FWSM1_and_2

vlan 43

name 10.0.43.0_EAST-FWSM3_and_4

vlan 193

name EAST-N1KV-CTRL/PKT

vlan 300

name EAST-NEXUS-1010-CTRL/PKT

!

spanning-tree pathcost method long

spanning-tree vlan 14,32-38,40-47,193,300 priority 24576

!

vpc domain 1

role priority 1

peer-keepalive destination 10.0.14.5

!

interface port-channel1

description vPC PEER-LINK

switchport mode trunk

vpc peer-link

switchport trunk allowed vlan 14,32-38,40-47,193,300

spanning-tree port type network

speed 10000

!

interface port-channel20

description vPC TO EAST VMDC N5Ks

switchport mode trunk

vpc 20

switchport trunk allowed vlan 14,32-38,40-47,193

!

interface Ethernet1/9

switchport mode trunk

switchport trunk allowed vlan 14,32-38,40-47,193

channel-group 20 mode active

!

interface Ethernet1/10

switchport mode trunk

switchport trunk allowed vlan 14,32-38,40-47,193

channel-group 20 mode active

Example 2-2 VMI vPC Configurations on VMI Nexus 5000 B

vlan 14

name 10.0.14.0_VMI-VM

vlan 32

name VMDC_Device_Mgmt

vlan 33

name EAST-FWSM-Contexts

vlan 34

name EAST-ACE-Contexts

vlan 40

name 10.0.40.0_EAST-ACE1

vlan 41

name 10.0.41.0_EAST-ACE2

vlan 42

name 10.0.42.0_EAST-FWSM1_and_2

vlan 43

name 10.0.43.0_EAST-FWSM3_and_4

vlan 193

name EAST-N1KV-CTRL/PKT

vlan 300

name EAST-NEXUS-1010-CTRL/PKT

!

spanning-tree pathcost method long

spanning-tree vlan 14,32-38,40-47,193,300 priority 28672

!

vpc domain 1

role priority 2

peer-keepalive destination 10.0.14.4

!

interface port-channel1

description vPC PEER-LINK

switchport mode trunk

vpc peer-link

switchport trunk allowed vlan 14,32-38,40-47,193,300

spanning-tree port type network

speed 10000

!

interface port-channel20

description vPC TO EAST VMDC N5Ks

switchport mode trunk

vpc 20

switchport trunk allowed vlan 14,32-38,40-47,193

!

interface Ethernet1/9

switchport mode trunk

switchport trunk allowed vlan 14,32-38,40-47,193

channel-group 20 mode active

!

interface Ethernet1/10

switchport mode trunk

switchport trunk allowed vlan 14,32-38,40-47,193

channel-group 20 mode active
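The allowed-VLAN lists in Example 2-1 and Example 2-2 can be checked for consistency with a short script. The sketch below is an illustration, not a Cisco tool: it expands the VLAN strings copied from the examples and checks two common expectations, that every VLAN on the vPC is also carried on the peer link and that member ports match their port channel. The function and variable names are assumptions.

```python
from typing import Set


def expand_vlans(spec: str) -> Set[int]:
    """Expand an allowed-VLAN string such as '14,32-38,193' into a set of IDs."""
    vlans: Set[int] = set()
    for part in spec.split(","):
        if "-" in part:
            low, high = part.split("-")
            vlans.update(range(int(low), int(high) + 1))
        else:
            vlans.add(int(part))
    return vlans


# Allowed-VLAN lists copied from Example 2-1 and Example 2-2.
peer_link = expand_vlans("14,32-38,40-47,193,300")
vpc_20 = expand_vlans("14,32-38,40-47,193")
member_ports = {
    "Ethernet1/9": expand_vlans("14,32-38,40-47,193"),
    "Ethernet1/10": expand_vlans("14,32-38,40-47,193"),
}

# Every VLAN carried on the vPC should also be carried on the peer link.
missing_on_peer_link = vpc_20 - peer_link
# Member ports should match the port channel they belong to.
mismatched = [name for name, vlans in member_ports.items() if vlans != vpc_20]

print("VLANs missing on peer link:", sorted(missing_on_peer_link))
print("Mismatched member ports:", mismatched)
print("VLANs only on peer link:", sorted(peer_link - vpc_20))
```

In these examples VLAN 300 (Nexus 1010 control/packet) is carried only on the peer link and is not extended toward the VMDC access switches.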

VLAN Allocation

In Cisco VMDC 2.1, the management VLAN allocation used is as follows:

Note: Throughout this document, example management configurations reference the VLANs in Table 2-3.

IP Addressing

In Cisco VMDC 2.1, the management IP allocation used is as follows:

Note: Throughout this document, example management configurations reference the addresses in Table 2-4.

VMI Software

VMware vSphere

VMware vSphere is the virtualization hypervisor platform best integrated with the current Cisco Unified Computing System. The following vSphere components were used in VMI:

• ESXi 4.1

• vCenter 4.1

• vSphere Update Manager

• vSphere Management Agent 4.1

http://www.vmware.com/files/pdf/products/vsphere/VMware-vSphere-Standard-DataSheet-DS-EN.pdf

Unified Computing System Manager

Unify and Embed Management for Computing

http://www.cisco.com/en/US/products/ps10281/index.html

Fabric Manager Server Package

Infrastructure Implementation

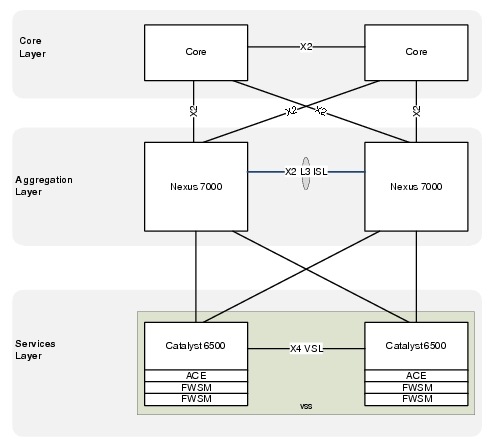

The Cisco VMDC 2.1 network infrastructure deployment models the standard Cisco three-tier hierarchical architecture model (core, aggregation, access) as described in the Datacenter 2.5 and 3.0 infrastructure design guides:

http://www.cisco.com/en/US/docs/solutions/Enterprise/Data_Center/DC_Infra2_5/DCI_SRND_2_5_book.html

http://www.cisco.com/en/US/docs/solutions/Enterprise/Data_Center/DC_3_0/DC-3_0_IPInfra.html

Figure 2-10 shows the basic layered design on which the rest of the Cisco VMDC 2.1 architecture is built.

Figure 2-10 Basic Three-Tier Data Center Design

Core Layer

From an overall architecture perspective, provisioning a dedicated pair of data center core switches insulates the core from the remainder of the data center network to improve routing stability and provide a future scale point for the data center topology. If requirements dictate a scenario that needs two or more aggregation blocks, a dedicated data center core network provides ease of deployment for scale expansion with no additional equipment in the data center network.

The Cisco VMDC 2.1 solution does not focus on the core layer; however, some relevant configuration pieces are included in this guide as reference.

Aggregation Layer (Nexus 7000)

The Cisco VMDC 2.1 aggregation layer is deployed using the Cisco Nexus 7000 next generation datacenter switching platform.

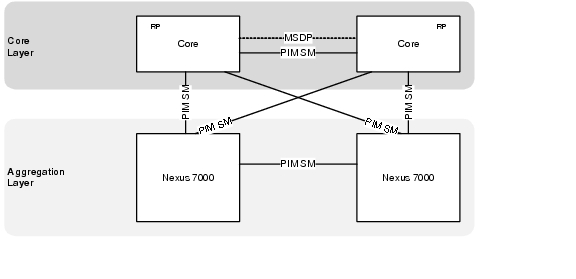

The aggregation layer provides a consolidation point where access layer switches are connected, and it delivers connectivity to the core and services layers of the data center. The aggregation layer provides the boundary between Layer 3 routed links and Layer 2 Ethernet broadcast domains as shown in Figure 2-11.

Figure 2-11 Aggregation Layer - Layer 3 and Layer 2 Boundaries

Nexus 7010 Module Details

The Cisco VMDC 2.1 solution was validated using the following Nexus 7010-compatible modules:

Example 2-3 Show Output for Nexus 7010 A Modules

DIST-N7010-A-EAST-DIST-A# show module

Mod Ports Module-Type Model Status

--- ----- ----------------------------------- ------------------ ----------

3 8 10 Gbps Ethernet XL Module N7K-M108X2-12L ok

4 8 10 Gbps Ethernet XL Module N7K-M108X2-12L ok

5 0 Supervisor module-1X N7K-SUP1 active *

6 0 Supervisor module-1X N7K-SUP1 ha-standby

Mod Sw Hw

--- -------------- ------

3 5.1(3) 1.1

4 5.1(3) 1.1

5 5.1(3) 1.1

6 5.1(3) 1.0

Mod MAC-Address(es) Serial-Num

--- -------------------------------------- ----------

3 00-26-51-c6-58-b4 to 00-26-51-c6-58-c0 JAF1339BDRJ

4 00-26-51-c6-7a-ec to 00-26-51-c6-7a-f8 JAF1339BDRR

5 00-22-55-77-ed-88 to 00-22-55-77-ed-90 JAB123400JY

6 00-26-51-c6-7a-58 to 00-26-51-c6-7a-60 JAF1342ARLB

Mod Online Diag Status

--- ------------------

3 Pass

4 Pass

5 Pass

6 Pass

Xbar Ports Module-Type Model Status

--- ----- ----------------------------------- ------------------ ----------

1 0 Fabric Module 1 N7K-C7010-FAB-1 ok

2 0 Fabric Module 1 N7K-C7010-FAB-1 ok

3 0 Fabric Module 1 N7K-C7010-FAB-1 ok

Xbar Sw Hw

--- -------------- ------

1 NA 1.0

2 NA 1.0

3 NA 1.0

Xbar MAC-Address(es) Serial-Num

--- -------------------------------------- ----------

1 NA JAB123400TK

2 NA JAB123400TJ

3 NA JAB1234002M

* this terminal session

Example 2-4 Show Output for Nexus 7010 B Modules

DIST-N7010-B-EAST-DIST-B# show module

Mod Ports Module-Type Model Status

--- ----- -------------------------------- ------------------ ------------

3 8 10 Gbps Ethernet XL Module N7K-M108X2-12L ok

4 8 10 Gbps Ethernet XL Module N7K-M108X2-12L ok

5 0 Supervisor module-1X N7K-SUP1 active *

6 0 Supervisor module-1X N7K-SUP1 ha-standby

Mod Sw Hw

--- -------------- ------

3 5.1(3) 1.1

4 5.1(3) 1.1

5 5.1(3) 1.1

6 5.1(3) 1.0

Mod MAC-Address(es) Serial-Num

--- -------------------------------------- ----------

3 c4-71-fe-d2-cf-2c to c4-71-fe-d2-cf-38 JAF1446BQNK

4 c4-71-fe-1b-d1-70 to c4-71-fe-1b-d1-7c JAF1444CHBE

5 00-22-55-77-ed-30 to 00-22-55-77-ed-38 JAB123400L8

6 00-22-55-77-71-f0 to 00-22-55-77-71-f8 JAB122501A6

Mod Online Diag Status

--- ------------------

3 Pass

4 Pass

5 Pass

6 Pass

Xbar Ports Module-Type Model Status

--- ----- -------------------------------- ------------------ ------------

1 0 Fabric Module 1 N7K-C7010-FAB-1 ok

2 0 Fabric Module 1 N7K-C7010-FAB-1 ok

3 0 Fabric Module 1 N7K-C7010-FAB-1 ok

Xbar Sw Hw

--- -------------- ------

1 NA 1.0

2 NA 1.0

3 NA 1.0

Xbar MAC-Address(es) Serial-Num

--- -------------------------------------- ----------

1 NA JAB121602E1

2 NA JAB1234002H

3 NA JAB123400T8

* this terminal session

VDC Implementation

In the Nexus 7000 switches, the default VDC has unique abilities, including the ability to create up to three additional VDCs per switch (for a total of four VDCs including the default).

In Cisco VMDC 2.1, the default VDC is reserved for administrative functions and a single non-default VDC is used for production network connections. The VDC configurations appear in Example 2-5 and Example 2-6.

This approach improves flexibility and security. You may grant administrative access into the non-default VDCs to perform configuration functions without exposing access to reload the switch or change software versions. There are no Layer 3 interfaces in the default VDC that are exposed to the production data network, and only the management interface is accessible through an out-of-band (OOB) management path. In this implementation, the default VDC is maintained as an administrative context that requires console access and/or separate security credentials.

Example 2-5 VDC Definition on the Nexus 7010 A Aggregation Switch Configuration

hostname DIST-N7010-A

!

! default VDC

!

vdc DIST-N7010-A id 1

limit-resource vlan minimum 16 maximum 4094

limit-resource monitor-session minimum 0 maximum 2

limit-resource monitor-session-erspan-dst minimum 0 maximum 23

limit-resource vrf minimum 2 maximum 1000

limit-resource port-channel minimum 0 maximum 768

limit-resource u4route-mem minimum 96 maximum 96

limit-resource u6route-mem minimum 24 maximum 24

limit-resource m4route-mem minimum 58 maximum 58

limit-resource m6route-mem minimum 8 maximum 8

!

! create production VDC

!

vdc EAST-DIST-A id 2

allocate interface Ethernet3/1-8

allocate interface Ethernet4/1-8

boot-order 1

limit-resource vlan minimum 16 maximum 4094

limit-resource monitor-session minimum 0 maximum 2

limit-resource monitor-session-erspan-dst minimum 0 maximum 23

limit-resource vrf minimum 2 maximum 1000

limit-resource port-channel minimum 0 maximum 768

limit-resource u4route-mem minimum 8 maximum 8

limit-resource u6route-mem minimum 4 maximum 4

limit-resource m4route-mem minimum 8 maximum 8

limit-resource m6route-mem minimum 3 maximum 3

Example 2-6 VDC Definition on the Nexus 7010 B Aggregation Switch Configuration

hostname DIST-N7010-B

!

! default VDC

!

vdc DIST-N7010-B id 1

limit-resource vlan minimum 16 maximum 4094

limit-resource monitor-session minimum 0 maximum 2

limit-resource monitor-session-erspan-dst minimum 0 maximum 23

limit-resource vrf minimum 2 maximum 1000

limit-resource port-channel minimum 0 maximum 768

limit-resource u4route-mem minimum 96 maximum 96

limit-resource u6route-mem minimum 24 maximum 24

limit-resource m4route-mem minimum 58 maximum 58

limit-resource m6route-mem minimum 8 maximum 8

!

! create production VDC

!

vdc EAST-DIST-B id 2

allocate interface Ethernet3/1-8

allocate interface Ethernet4/1-8

boot-order 1

limit-resource vlan minimum 16 maximum 4094

limit-resource monitor-session minimum 0 maximum 2

limit-resource monitor-session-erspan-dst minimum 0 maximum 23

limit-resource vrf minimum 2 maximum 1000

limit-resource port-channel minimum 0 maximum 768

limit-resource u4route-mem minimum 8 maximum 8

limit-resource u6route-mem minimum 4 maximum 4

limit-resource m4route-mem minimum 8 maximum 8

limit-resource m6route-mem minimum 3 maximum 3

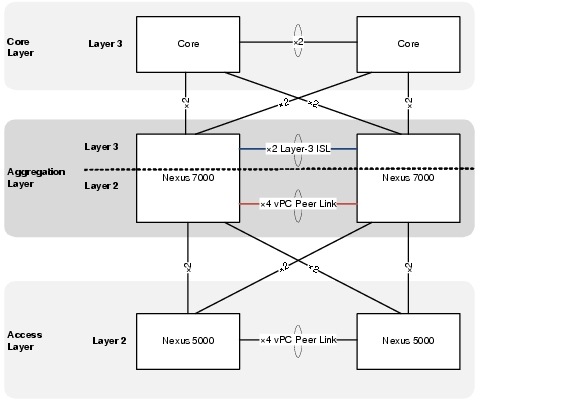

Dual Inter Switch Link Implementation

In Cisco VMDC 2.1, a dual inter switch link (ISL) design is used between the aggregation switches. The Layer 3 inter-switch link is a dedicated 2 x 10 Gb port channel that provides OSPF area contiguity. A separate, dedicated 4 x 10 Gb port channel forms the vPC peer link for Layer 2 connectivity to the access layer.

The physical connections are shown in Figure 2-12 and the switch output in Example 2-7.

Figure 2-12 Dual Inter Switch Link Design in Cisco VMDC 2.1

Example 2-7 Aggregation Dual Inter Switch Links (Nexus 7000 A)

! aggregation A side

DIST-N7010-A-EAST-DIST-A# show port-channel summary

Flags: D - Down P - Up in port-channel (members)

I - Individual H - Hot-standby (LACP only)

s - Suspended r - Module-removed

S - Switched R - Routed

U - Up (port-channel)

M - Not in use. Min-links not met

--------------------------------------------------------------------------------

Group Port- Type Protocol Member Ports

Channel

--------------------------------------------------------------------------------

1 Po1(RU) Eth LACP Eth3/3(P) Eth4/3(P)

2 Po2(SU) Eth LACP Eth3/7(P) Eth3/8(P) Eth4/7(P)

Eth4/8(P)

! aggregation B side

DIST-N7010-B-EAST-DIST-B# show port-channel summary

Flags: D - Down P - Up in port-channel (members)

I - Individual H - Hot-standby (LACP only)

s - Suspended r - Module-removed

S - Switched R - Routed

U - Up (port-channel)

M - Not in use. Min-links not met

--------------------------------------------------------------------------------

Group Port- Type Protocol Member Ports

Channel

--------------------------------------------------------------------------------

1 Po1(RU) Eth LACP Eth3/3(P) Eth4/3(P)

2 Po2(SU) Eth LACP Eth3/7(P) Eth3/8(P) Eth4/7(P)

Eth4/8(P)
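The output in Example 2-7 can be checked programmatically. The following sketch parses the two port-channel rows shown above, including the wrapped member line, and reports whether each channel is routed (R) or switched (S) and how many members are up. The column spacing in the sample text is approximated, and the parser is an illustration rather than a supported tool.

```python
import re
from typing import Dict

# Rows copied from Example 2-7; column spacing is approximated.
SAMPLE = """\
1     Po1(RU)     Eth      LACP      Eth3/3(P)    Eth4/3(P)
2     Po2(SU)     Eth      LACP      Eth3/7(P)    Eth3/8(P)    Eth4/7(P)
                                     Eth4/8(P)
"""

GROUP = re.compile(r"^\s*(\d+)\s+(Po\d+)\((\w+)\)")
MEMBER = re.compile(r"(Eth\d+/\d+)\((\w)\)")


def parse_summary(text: str) -> Dict[str, dict]:
    """Return {port-channel: {'flags': str, 'members': [(port, flag), ...]}}."""
    channels: Dict[str, dict] = {}
    current = None
    for line in text.splitlines():
        head = GROUP.match(line)
        if head:
            current = head.group(2)
            channels[current] = {"flags": head.group(3), "members": []}
        if current is not None:
            # Continuation lines carry extra members of the current channel.
            channels[current]["members"].extend(MEMBER.findall(line))
    return channels


channels = parse_summary(SAMPLE)
for name, info in channels.items():
    up = [port for port, flag in info["members"] if flag == "P"]
    layer = "Layer 3 (routed)" if "R" in info["flags"] else "Layer 2 (switched)"
    print(f"{name}: {layer}, {len(up)} x 10 Gb members up")
```

For the sample rows, Po1 is the routed 2 x 10 Gb link and Po2 is the switched 4 x 10 Gb vPC peer link, matching the dual inter switch link design described earlier.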

The Layer 2 vPC implementation is covered in the next section and the Layer 3 implementation is covered in Virtual Routing and Forwarding (VRF).

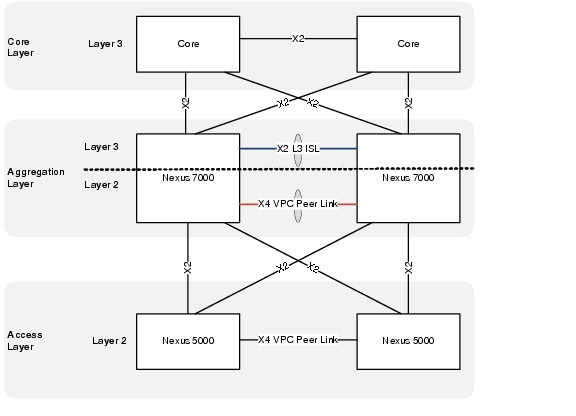

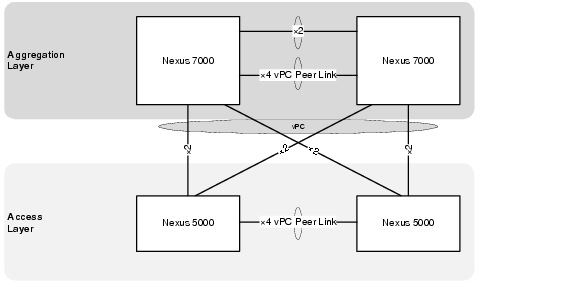

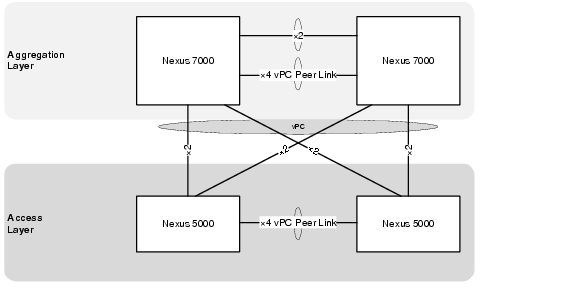

Virtual PortChannel Implementation (vPC)

A virtual port channel (vPC) allows links that are physically connected to two different Cisco Nexus 7000 Series devices to appear as a single port channel to a third device. The third device can be a switch, server, or any other networking device that supports port channels. A vPC provides Layer 2 multipathing, which allows you to create redundancy and increase bisectional bandwidth by enabling multiple parallel paths between nodes and load balancing traffic across them.

The vPC domain includes both vPC peer devices, the vPC peer keepalive link, the vPC peer link, and all the PortChannels in the vPC domain connected to the downstream device.

In Cisco VMDC 2.1, virtual PortChannels (vPCs) were configured in the aggregation and access layers enabling full, cross-sectional bandwidth utilization (80 Gbps) as depicted in Figure 2-13.

Figure 2-13 vPCs at the Access and Aggregation Layers

Cisco VMDC 2.1 also leverages two new vPC features added to NX-OS that improve scale and performance during convergence events. These features are peer switch and address resolution protocol (ARP) synchronization.

The vPC peer switch feature addresses the performance of spanning tree protocol (STP) convergence. It allows a pair of Cisco Nexus 7000 Series devices to appear as a single STP root in the Layer 2 topology. This feature eliminates the need to pin the STP root to the vPC primary switch and improves vPC convergence during vPC primary switch failures. To avoid loops, the vPC peer link is excluded from the STP computation. In vPC peer switch mode, STP BPDUs are sent from both vPC peer devices to avoid issues related to STP BPDU timeout on the downstream switches, which can cause traffic disruption.
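The effect of peer switch on root election can be sketched with a simplified model of STP bridge IDs. The priorities below come from the examples in this chapter (24576 and 28672 on the VMI Nexus 5000 pair, 8192 on both aggregation switches); the MAC addresses are hypothetical, and modeling peer switch as a single shared bridge ID is a simplifying assumption that ignores per-VLAN extended system IDs.

```python
from typing import List, NamedTuple


class Bridge(NamedTuple):
    name: str
    priority: int
    mac: str  # hypothetical MAC address, for illustration only


def bridge_id(bridge: Bridge) -> tuple:
    """Bridge ID compared by STP: priority first, then MAC address."""
    return (bridge.priority, int(bridge.mac.replace(":", ""), 16))


def elect_root(bridges: List[Bridge]) -> Bridge:
    """The bridge with the numerically lowest bridge ID becomes the root."""
    return min(bridges, key=bridge_id)


# Without peer switch: distinct priorities pin the root to one switch.
vmi_pair = [
    Bridge("VMI-N5K-A", 24576, "00:00:00:00:00:0a"),
    Bridge("VMI-N5K-B", 28672, "00:00:00:00:00:0b"),
]
root = elect_root(vmi_pair)
print("Root without peer switch:", root.name)

# With peer switch: both peers are modeled as advertising one shared bridge ID.
shared_mac = "00:00:00:00:00:c9"
aggregation_pair = [
    Bridge("EAST-DIST-A", 8192, shared_mac),
    Bridge("EAST-DIST-B", 8192, shared_mac),
]
distinct_roots = {bridge_id(b) for b in aggregation_pair}
print("Distinct root bridge IDs with peer switch:", len(distinct_roots))
```

Because downstream switches see only one root bridge ID in the second case, the loss of either peer does not force a new root election in this model.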

The ARP synchronization feature synchronizes ARP tables across vPC peers using the reliable transport mechanism of the Cisco Fabric Services over Ethernet (CFSoE) protocol. You must enable ip arp synchronize to support faster convergence of address tables between the vPC peers. This convergence is designed to overcome the delay involved in IPv4 ARP table restoration when the peer-link port channel flaps or when a vPC peer comes back online.

The current best practice is to use as much information as possible for input to the EtherChannel algorithm to achieve the best or most uniform utilization of EtherChannel members.
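The reasoning behind this practice can be shown with a toy model of member selection. In the sketch below, CRC32 stands in for the hardware hash (it is not the algorithm used by Nexus switches), and the flows are hypothetical: 1,000 sessions between the same two hosts that differ only in source port.

```python
import zlib
from typing import Iterable


def pick_member(fields: Iterable[object], member_count: int) -> int:
    """Map the chosen header fields to a member link index.

    CRC32 is a stand-in; it is not the hash used by Nexus hardware.
    """
    key = "|".join(str(f) for f in fields).encode()
    return zlib.crc32(key) % member_count


# Hypothetical flows: (src IP, dst IP, src port, dst port, VLAN).
flows = [("10.1.1.10", "10.1.4.20", 49152 + i, 443, 201) for i in range(1000)]

# Hashing on source and destination IP only: every flow has the same key.
ip_only = {pick_member(flow[:2], 4) for flow in flows}
# Hashing on IP, Layer 4 port, and VLAN: the keys differ per flow.
ip_port_vlan = {pick_member(flow, 4) for flow in flows}

print("Members used with IP-only input:", len(ip_only))
print("Members used with IP, port, and VLAN input:", len(ip_port_vlan))
```

With IP-only input, all traffic between the two hosts lands on one member of a four-link channel. Adding ports and VLAN spreads the flows across members, which is the motivation for the source-dest-ip-port-vlan setting used in Example 2-8 and Example 2-9.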

The Cisco VMDC 2.1 vPC validated configuration is in Example 2-8 and Example 2-9:

Example 2-8 Example vPC Configurations on Nexus 7010 Switches (7010 A Configuration)

! set load share algorithm in the default VDC

!

port-channel load-balance ethernet source-dest-ip-port-vlan

!

! STP specific configuration

!

spanning-tree pathcost method long

spanning-tree vlan 201-930 priority 8192

!

! configure vPC in the production VDC

!

vpc domain 201

peer-switch

role priority 16000

peer-keepalive destination 10.0.32.103 source 10.0.32.101

delay restore 60

peer-gateway

reload restore

ip arp synchronize

!

interface port-channel2

description vPC peerlink to EAST-DIST-B

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

spanning-tree port type network

service-policy type queuing output 10G-EGRESS-Q

vpc peer-link

!

interface Ethernet3/7

description To DIST-N7010-B-EAST-DIST-B Eth3/7

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet3/8

description To DIST-N7010-B-EAST-DIST-B Eth3/8

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet4/7

description To DIST-N7010-B-EAST-DIST-B Eth4/7

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet4/8

description To DIST-N7010-B-EAST-DIST-B Eth4/8

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

Example 2-9 Example vPC Configurations on Nexus 7010 Switches (7010 B Configuration)

! set load share algorithm in the default VDC

!

port-channel load-balance ethernet source-dest-ip-port-vlan

!

! STP specific configuration

!

spanning-tree pathcost method long

spanning-tree vlan 201-930 priority 8192

!

! configure vPC in the production VDC

!

vpc domain 201

peer-switch

role priority 32000

peer-keepalive destination 10.0.32.101 source 10.0.32.103

delay restore 60

peer-gateway

reload restore

ip arp synchronize

!

interface port-channel2

description vPC peerlink to EAST-DIST-A

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

spanning-tree port type network

service-policy type queuing output 10G-EGRESS-Q

vpc peer-link

!

interface Ethernet3/7

description To DIST-N7010-A-EAST-DIST-A Eth3/7

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet3/8

description To DIST-N7010-A-EAST-DIST-A Eth3/8

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet4/7

description To DIST-N7010-A-EAST-DIST-A Eth4/7

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown

!

interface Ethernet4/8

description To DIST-N7010-A-EAST-DIST-A Eth4/8

switchport

switchport mode trunk

switchport trunk allowed vlan 201-930

channel-group 2 mode active

no shutdown
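The two vPC peer configurations above are expected to mirror each other: same vPC domain, same STP priority (because peer-switch is enabled), and peer-keepalive source and destination addresses swapped. The following Python sketch shows one way to check that symmetry offline. The helper names (`vpc_settings`, `pair_problems`, `preferred_primary`) are hypothetical and are not part of any Cisco tool; the config lines are copied from the two examples. The role comparison assumes that the peer with the lower role priority value is preferred as vPC primary, which matches the primary role shown for the A switch in Example 2-10.

```python
# Illustrative only: a hypothetical consistency check for a vPC peer pair.
# The config text is copied from the two peer configurations above.

PEER_A = """\
spanning-tree vlan 201-930 priority 8192
vpc domain 201
role priority 16000
peer-keepalive destination 10.0.32.103 source 10.0.32.101
"""

PEER_B = """\
spanning-tree vlan 201-930 priority 8192
vpc domain 201
role priority 32000
peer-keepalive destination 10.0.32.101 source 10.0.32.103
"""


def vpc_settings(config):
    """Pull the few vPC-related values this check needs out of a config."""
    settings = {}
    for line in config.splitlines():
        words = line.split()
        if words[:2] == ["vpc", "domain"]:
            settings["domain"] = int(words[2])
        elif words[:2] == ["role", "priority"]:
            settings["role_priority"] = int(words[2])
        elif words[:1] == ["peer-keepalive"]:
            # Syntax in the examples: peer-keepalive destination <dst> source <src>
            settings["keepalive_dst"] = words[2]
            settings["keepalive_src"] = words[4]
        elif words[:2] == ["spanning-tree", "vlan"] and "priority" in words:
            settings["stp_priority"] = int(words[words.index("priority") + 1])
    return settings


def pair_problems(a, b):
    """Return a list of human-readable mismatches between two peers."""
    problems = []
    if a["domain"] != b["domain"]:
        problems.append("vPC domain IDs differ")
    if a["stp_priority"] != b["stp_priority"]:
        problems.append("STP priorities differ (peer-switch expects them equal)")
    mirrored = (a["keepalive_src"], a["keepalive_dst"]) == (
        b["keepalive_dst"],
        b["keepalive_src"],
    )
    if not mirrored:
        problems.append("peer-keepalive source/destination are not mirrored")
    return problems


def preferred_primary(a, b):
    """Assumption: the lower role priority value is preferred as primary."""
    return "A" if a["role_priority"] < b["role_priority"] else "B"


a, b = vpc_settings(PEER_A), vpc_settings(PEER_B)
print(pair_problems(a, b) or "peer configurations are consistent")
print("preferred primary:", preferred_primary(a, b))
```

A check like this only catches static configuration drift; the operational state still has to be confirmed on the switches.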

The following commands and related output in Example 2-10 can be used to verify that the vPC configuration is correct and functioning.

Example 2-10 Nexus 7000 vPC Verification

DIST-N7010-A-EAST-DIST-A# sho vpc brief

Legend:

(*) - local vPC is down, forwarding via vPC peer-link

vPC domain id : 201

Peer status : peer adjacency formed ok

vPC keep-alive status : peer is alive

Configuration consistency status : success

Per-vlan consistency status : success

Type-2 consistency status : success

vPC role : primary

Number of vPCs configured : 1

Peer Gateway : Enabled

Peer gateway excluded VLANs : -

Dual-active excluded VLANs : -

Graceful Consistency Check : Enabled

Auto-recovery status : Enabled (timeout = 240 seconds)

vPC Peer-link status

---------------------------------------------------------------------

id Port Status Active vlans

-- ---- ------ --------------------------------------------------

1 Po2 up 201-930

vPC status

----------------------------------------------------------------------

id Port Status Consistency Reason Active vlans

-- ---- ------ ----------- ------ ------------

202 Po202 up success success 211-213,221

-223,231-23

3,241-243,2

51-253,261-

263,271-273 ....

DIST-N7010-A-EAST-DIST-A# show vpc peer-keepalive

vPC keep-alive status : peer is alive

--Peer is alive for : (637975) seconds, (683) msec

--Send status : Success

--Last send at : 2011.07.28 23:58:10 602 ms

--Sent on interface : mgmt0

--Receive status : Success

--Last receive at : 2011.07.28 23:58:10 604 ms

--Received on interface : mgmt0

--Last update from peer : (0) seconds, (29) msec

vPC Keep-alive parameters

--Destination : 10.0.32.103

--Keepalive interval : 1000 msec

--Keepalive timeout : 5 seconds

--Keepalive hold timeout : 3 seconds

--Keepalive vrf : management

--Keepalive udp port : 3200

--Keepalive tos : 192

DIST-N7010-A-EAST-DIST-A#

DIST-N7010-A-EAST-DIST-A# sho vpc consistency-parameters global

Legend:

Type 1 : vPC will be suspended in case of mismatch

Name Type Local Value Peer Value

------------- ---- ---------------------- -----------------------

STP Mode 1 Rapid-PVST Rapid-PVST

STP Disabled 1 None None

STP MST Region Name 1 "" ""

STP MST Region Revision 1 0 0

STP MST Region Instance to 1

VLAN Mapping

STP Loopguard 1 Disabled Disabled

STP Bridge Assurance 1 Enabled Enabled

STP Port Type, Edge 1 Normal, Disabled, Normal, Disabled,

BPDUFilter, Edge BPDUGuard Disabled Disabled

STP MST Simulate PVST 1 Enabled Enabled

Interface-vlan admin up 2 211-213,221-223,231-23 211-213,221-223,231-23

3,241-243,251-253,261- 3,241-243,251-253,261-

263,271-273,281-283,61 263,271-273,281-283,61

1-613,621-623,631-633, 1-613,621-623,631-633,

641-643 641-643

Interface-vlan routing 2 211-213,221-223,231-23 211-213,221-223,231-23

capability 3,241-243,251-253,261- 3,241-243,251-253,261-

263,271-273,281-283,61 263,271-273,281-283,61

1-613 1-613

Allowed VLANs - 201-930 201-930

Local suspended VLANs - - -
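The status fields in the `show vpc brief` output lend themselves to a scripted health check. The sketch below is a minimal Python example, assuming the output has already been captured as text (how it is collected is out of scope here). The sample text is taken from Example 2-10, and the function names and the `EXPECTED` table are hypothetical choices for this illustration.

```python
# Illustrative only: parse "key : value" status lines from captured
# "show vpc brief" output and flag any field that is not healthy.

SHOW_VPC_BRIEF = """\
vPC domain id : 201
Peer status : peer adjacency formed ok
vPC keep-alive status : peer is alive
Configuration consistency status : success
Per-vlan consistency status : success
Type-2 consistency status : success
vPC role : primary
Number of vPCs configured : 1
Peer Gateway : Enabled
"""

# Values shown for a healthy vPC domain in Example 2-10.
EXPECTED = {
    "Peer status": "peer adjacency formed ok",
    "vPC keep-alive status": "peer is alive",
    "Configuration consistency status": "success",
    "Per-vlan consistency status": "success",
    "Type-2 consistency status": "success",
}


def parse_status(output):
    """Return a dict of the 'key : value' lines found in the output."""
    fields = {}
    for line in output.splitlines():
        key, separator, value = line.partition(" : ")
        if separator:
            fields[key.strip()] = value.strip()
    return fields


def failed_checks(fields):
    """Return the fields whose value differs from the expected healthy value."""
    return {
        key: fields.get(key)
        for key, wanted in EXPECTED.items()
        if fields.get(key) != wanted
    }


status = parse_status(SHOW_VPC_BRIEF)
print(failed_checks(status) or "all vPC status checks passed")
```

The same approach could be extended to the keepalive and consistency-parameter outputs, although the multi-column tables in those commands need more careful parsing than the simple split used here.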

Virtual Routing and Forwarding (VRF)

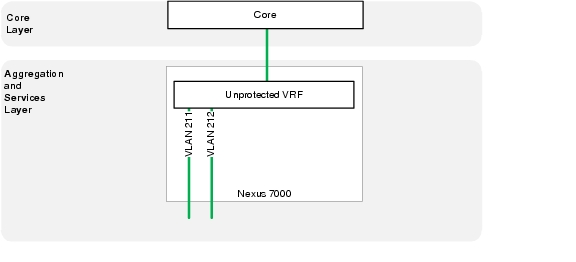

In the Cisco VMDC 2.1, the VRFs created in the aggregation layer provide Layer 3 separation between tenants and between tenant zones.

Figure 2-14 shows a logical representation of the zone separation for a single tenant.

Each tenant is deployed with two VRFs: unprotected and protected. Because routes propagate to all hops in a Layer 3 domain, the Services Layer is depicted for clarity (this concept is clarified in section 3.4.3.4). The tenant zone VRFs are then mapped to the VLANs where the virtual machines reside. By default, communication between VRF instances is prevented to protect the privacy of each tenant. The same default behavior applies to communication between tenant zones.

Figure 2-14 VRF Design

The following configurations are needed to provision a tenant Unprotected Zone VRF:

• VRF definition

• Unprotected Loopback Interface

• Dot1q sub-interface to Core A

• Dot1q sub-interface to Core B

• Dot1q sub-interface to Aggregation A/B

• Dot1q sub-interface to DSN

• Unprotected Public VLANs (front end)

• (Optional) Private VLANs (back end)

• OSPF routing process

Example 2-11 and Example 2-12 present the Unprotected VRF configurations.

Example 2-11 Nexus 7000 A Unprotected Zone VRF Configuration

vrf context T1U

!

interface loopback1

description RID for VRF T1Unprotected

vrf member T1U

ip address 10.1.31.11/32

ip router ospf 1 area 0.0.0.0

!

interface port-channel1

description L3 Link to EAST-DIST-B

interface port-channel1.1

description T1Unprotected PC Subif to DIST-B

encapsulation dot1q 3001

vrf member T1U

no ip redirects

ip address 10.1.28.17/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface port-channel101

description L3 link to EAST-CORE-A

interface port-channel101.1

description T1U PC Subif to CORE-A

encapsulation dot1q 3101

vrf member T1U

no ip redirects

ip address 10.1.28.1/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.0

no shutdown

!

interface port-channel102

description L3 link to EAST-CORE-B

interface port-channel102.1

description T1U PC Subif to CORE-B

encapsulation dot1q 3201

vrf member T1U

no ip redirects

ip address 10.1.28.9/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.0

no shutdown

!

interface port-channel103

description L3 link to EAST-VSS

no lacp graceful-convergence

interface port-channel103.1

description T1U PC Subif to VSS-A

encapsulation dot1q 3101

vrf member T1U

no ip redirects

ip address 10.1.28.45/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface Vlan211

no shutdown

description Tenant 1 Unprotected Public VLAN

vrf member T1U

no ip redirects

ip address 10.1.1.251/24

ip ospf passive-interface

ip router ospf 1 area 0.0.0.10

hsrp 1

preempt delay minimum 120

ip 10.1.1.253

!

router ospf 1

vrf T1U

router-id 10.1.31.11

area 0.0.0.10 nssa default-information-originate

max-metric router-lsa external-lsa on-startup 180 summary-lsa

area 0.0.0.10 range 10.1.0.0/16

log-adjacency-changes detail

timers throttle spf 10 100 5000

timers lsa-arrival 80

timers throttle lsa 10 100 5000

auto-cost reference-bandwidth 100 Gbps

Example 2-12 Nexus 7000 B Unprotected Zone VRF Configuration

vrf context T1U

!

interface loopback1

description RID for VRF T1Unprotected

vrf member T1U

ip address 10.1.31.12/32

ip router ospf 1 area 0.0.0.0

!

interface port-channel1

description L3 Link to EAST-DIST-A

interface port-channel1.1

description T1Unprotected PC Subif to DIST-A

encapsulation dot1q 3001

vrf member T1U

no ip redirects

ip address 10.1.28.18/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface port-channel101

description L3 link to EAST-CORE-B

interface port-channel101.1

description T1U PC Subif to CORE-B

encapsulation dot1q 3101

vrf member T1U

no ip redirects

ip address 10.1.28.5/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.0

no shutdown

!

interface port-channel102

description L3 link to EAST-CORE-A

interface port-channel102.1

description T1U PC Subif to CORE-A

encapsulation dot1q 3201

vrf member T1U

no ip redirects

ip address 10.1.28.13/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.0

no shutdown

!

interface port-channel104

description L3 link to EAST-VSS

no lacp graceful-convergence

interface port-channel104.1

description T1U PC Subif to VSS-A

encapsulation dot1q 3201

vrf member T1U

no ip redirects

ip address 10.1.28.49/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface Vlan211

no shutdown

description Tenant 1 Unprotected Frontend VLAN

vrf member T1U

no ip redirects

ip address 10.1.1.252/24

ip ospf passive-interface

ip router ospf 1 area 0.0.0.10

hsrp 1

preempt delay minimum 120

ip 10.1.1.253

!

router ospf 1

vrf T1U

router-id 10.1.31.12

area 0.0.0.10 nssa default-information-originate

max-metric router-lsa external-lsa on-startup 180 summary-lsa

area 0.0.0.10 range 10.1.0.0/16

log-adjacency-changes detail

timers throttle spf 10 100 5000

timers lsa-arrival 80

timers throttle lsa 10 100 5000

auto-cost reference-bandwidth 100 Gbps
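Each routed sub-interface in these examples sits on a /30 point-to-point subnet, so the two ends of a link must be distinct usable addresses in the same /30. The Python sketch below checks that for the aggregation-to-aggregation link (Example 2-11 and Example 2-12) and for the links toward the DSN (far-end addresses taken from Example 2-19). It also checks that the addresses fall inside the `area 0.0.0.10 range 10.1.0.0/16` summary. The function name and the link labels are hypothetical and are used only for this illustration.

```python
import ipaddress

# Both ends of each routed link, taken from this guide's examples.
P2P_LINKS = {
    "DIST-A Po1.1 to DIST-B Po1.1": ("10.1.28.17/30", "10.1.28.18/30"),
    "DIST-A Po103.1 to DSN Po103.1": ("10.1.28.45/30", "10.1.28.46/30"),
    "DIST-B Po104.1 to DSN Po104.1": ("10.1.28.49/30", "10.1.28.50/30"),
}

# Summary configured with "area 0.0.0.10 range 10.1.0.0/16".
AREA_10_RANGE = ipaddress.ip_network("10.1.0.0/16")


def valid_p2p_pair(end_a, end_b):
    """True if both addresses are distinct usable hosts in the same subnet."""
    a = ipaddress.ip_interface(end_a)
    b = ipaddress.ip_interface(end_b)
    usable = set(a.network.hosts())
    return a.network == b.network and a.ip != b.ip and {a.ip, b.ip} <= usable


for name, (end_a, end_b) in P2P_LINKS.items():
    in_range = ipaddress.ip_interface(end_a).ip in AREA_10_RANGE
    print(name, "| pair ok:", valid_p2p_pair(end_a, end_b), "| in range:", in_range)
```

A mismatch here (for example, two ends that land in different /30 blocks) would prevent the OSPF adjacency from forming on that sub-interface.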

The following configurations are needed to provision a tenant Protected Zone VRF:

• VRF definition

• Protected Loopback Interface

• Dot1q sub-interface to Aggregation A/B

• Dot1q sub-interface to DSN

• Public VLANs (front end)

• (Optional) Private VLANs (back end)

• OSPF routing process

Example 2-13 and Example 2-14 present the Protected Zone VRF configurations.

Example 2-13 Nexus 7000 A Protected VRF Configuration

vrf context T1P

!

interface loopback101

description RID for VRF T1Protected

vrf member T1P

ip address 10.1.63.11/32

ip router ospf 1 area 0.0.0.10

!

interface port-channel1

description L3 Link to EAST-DIST-B

interface port-channel1.101

description T1Protected PC Subif to DIST-B

encapsulation dot1q 3501

vrf member T1P

no ip redirects

ip address 10.1.60.17/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface port-channel103

description L3 link to EAST-VSS

no lacp graceful-convergence

interface port-channel103.101

description T1P PC Subif to VSS-A

encapsulation dot1q 3301

vrf member T1P

no ip redirects

ip address 10.1.60.45/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface Vlan611

no shutdown

description Tenant 1 Protected Frontend VLAN

vrf member T1P

no ip redirects

ip address 10.1.41.251/24

ip ospf passive-interface

ip router ospf 1 area 0.0.0.10

hsrp 1

preempt delay minimum 120

ip 10.1.41.253

!

router ospf 1

vrf T1P

router-id 10.1.63.11

area 0.0.0.10 nssa

max-metric router-lsa external-lsa on-startup 180 summary-lsa

log-adjacency-changes detail

timers throttle spf 10 100 5000

timers lsa-arrival 80

timers throttle lsa 10 100 5000

auto-cost reference-bandwidth 100 Gbps

Example 2-14 Nexus 7000 B Protected VRF Configuration

vrf context T1P

!

interface loopback101

description RID for VRF T1Protected

vrf member T1P

ip address 10.1.63.12/32

ip router ospf 1 area 0.0.0.10

!

interface port-channel1

description L3 Link to EAST-DIST-A

interface port-channel1.101

description T1Protected PC Subif to DIST-A

encapsulation dot1q 3501

vrf member T1P

no ip redirects

ip address 10.1.60.18/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface port-channel104

description L3 link to EAST-VSS

no lacp graceful-convergence

interface port-channel104.101

description T1P PC Subif to VSS-A

encapsulation dot1q 3401

vrf member T1P

no ip redirects

ip address 10.1.60.49/30

ip ospf cost 5

ip ospf network point-to-point

ip router ospf 1 area 0.0.0.10

no shutdown

!

interface Vlan611

no shutdown

description Tenant 1 Protected Frontend VLAN

vrf member T1P

no ip redirects

ip address 10.1.41.252/24

ip ospf passive-interface

ip router ospf 1 area 0.0.0.10

hsrp 1

preempt delay minimum 120

ip 10.1.41.253

!

router ospf 1

vrf T1P

router-id 10.1.63.12

area 0.0.0.10 nssa

max-metric router-lsa external-lsa on-startup 180 summary-lsa

log-adjacency-changes detail

timers throttle spf 10 100 5000

timers lsa-arrival 80

timers throttle lsa 10 100 5000

auto-cost reference-bandwidth 100 Gbps

Deployment Guidelines

Layer 2 Configuration

vPC

The following vPC deployment guidelines were identified:

• When vPC peer-switch is configured, make sure both peers use the same STP priority.

• Back-to-back, multi-layer vPC topologies require a unique domain ID for each vPC domain.

• Configure all the port channels in the vPC using LACP with the interfaces in active mode.

• Use default timers for HSRP and PIM configurations. There is no advantage in convergence times when using aggressive timers in vPC configurations.

• Configure a separate Layer 3 link for routing between the vPC peer devices, rather than using a VLAN switched virtual interface (SVI) for this purpose.

• In large scale environments, tune the vPC delay restore to improve convergence.

OSPF

The following OSPF deployment guidelines were identified:

• Use OSPF point-to-point mode on the 10G Ethernet links so the adjacency is always formed with the neighbor. There is no DR/BDR election in point-to-point mode. This configuration also gives the flexibility to configure a separate OSPF cost per point-to-point neighbor.

• OSPF hello and hold timers are left at default values.

• Use OSPF manual link costs on Layer 3 port channels to prevent cost changes when member links fail or are added to the bundle.

• OSPF throttle timers could be tuned further to achieve faster convergence.

• OSPF NSSA is used to allow importing of the static routes into the OSPF routing domain and also to limit the number of routes advertised from the aggregation layer to the services layer.

• If tenant subnetting allows, the OSPF area range command can be used to limit outbound prefix advertisements and potentially improve convergence.
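The guideline on manual link costs can be illustrated with a short calculation. The Python sketch below assumes the common OSPF convention that the automatic cost is the reference bandwidth divided by the interface bandwidth, rounded down, with a minimum of 1; the exact rounding on a given platform is an assumption here. It uses the 100 Gbps reference bandwidth from the examples and a port channel with two 10-Gigabit members, as on port-channel103. The function names are hypothetical.

```python
# Illustrative only: why a manual OSPF cost keeps the metric stable when a
# port-channel member fails. Assumes auto cost = reference / bandwidth.

REFERENCE_GBPS = 100   # "auto-cost reference-bandwidth 100 Gbps"
MEMBER_GBPS = 10       # each member link is 10 Gigabit Ethernet
MANUAL_COST = 5        # "ip ospf cost 5" on the sub-interfaces


def auto_cost(reference_gbps, interface_gbps):
    """Automatic OSPF cost under the assumed reference/bandwidth rule."""
    return max(1, reference_gbps // interface_gbps)


def effective_cost(active_members, manual_cost=None):
    """Cost seen by OSPF: the manual value if set, else the automatic one."""
    if manual_cost is not None:
        return manual_cost
    return auto_cost(REFERENCE_GBPS, active_members * MEMBER_GBPS)


for members in (2, 1):
    print(
        f"{members} member(s): auto cost {effective_cost(members)},"
        f" with manual cost {effective_cost(members, MANUAL_COST)}"
    )
```

Under these assumptions the automatic cost would move from 5 to 10 when one member fails, which could shift traffic to another path, while the configured cost stays at 5 in both cases.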

Traffic Flow Optimization

The following traffic flow optimization deployment guidelines were identified:

• Use Layer 3 and Layer 4 information to achieve optimum link utilization when using EtherChannel interconnections.

• Use Layer 3 routed equal-cost redundant paths and vary the input to the CEF hashing algorithm to improve load distribution.
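The benefit of including Layer 4 information in the hash input can be shown with a toy model. The Python sketch below is not the hashing algorithm used by the Nexus 7000 or Catalyst 6500; it substitutes a generic SHA-256 hash purely to illustrate the principle. The IP addresses, port numbers, and the `pick_member` function are hypothetical.

```python
import hashlib

# Illustrative only: a toy hash, NOT the platform's real load-balance algorithm.
N_LINKS = 4  # member links in the bundle


def pick_member(fields, n_links=N_LINKS):
    """Map a tuple of header fields to a member link index."""
    key = "|".join(str(field) for field in fields).encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % n_links


# 64 flows between the same two hosts, differing only in source port.
flows = [("10.1.1.10", "10.1.41.20", 49152 + i, 443) for i in range(64)]

# Hash on IP addresses only: every flow maps to the same member link.
links_l3 = {pick_member((src, dst)) for src, dst, sport, dport in flows}

# Hash on IP addresses and ports: flows spread over several member links.
links_l3_l4 = {pick_member(flow) for flow in flows}

print("links used with L3 only:", len(links_l3))
print("links used with L3 + L4:", len(links_l3_l4))
```

With address-only hashing, heavy traffic between one pair of hosts stays on a single member link; adding the port numbers gives the hash more entropy to work with.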

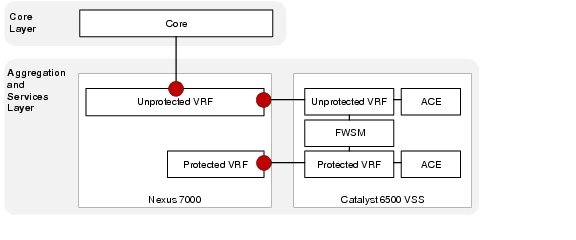

Service Insertion with the Datacenter Services Node (DSN)

In Cisco VMDC 2.1, the firewall and load balancing services are deployed using the Cisco Datacenter Services Node (DSN). This approach decouples the service modules from dependence on a specific aggregation switch.

With VSS, the ACE and FWSM modules will be in active-active mode, with each virtual context in active-standby mode on the designated service modules of each Cisco DSN.

Figure 2-15 Service Layer within DSN

Catalyst 6500 Module Details

The Cisco VMDC 2.1 solution includes the following Catalyst 6500-compatible modules:

Example 2-15 DSN VSS

EAST-VSS-A #show module switch all

Switch Number: 1 Role: Virtual Switch Standby

---------------------- -----------------------------

Mod Ports Card Type Model Serial No.

--- ----- -------------------------------------- ------------------ -----------

1 1 Application Control Engine Module ACE20-MOD-K9 SAD130902AD

2 8 CEF720 8 port 10GE with DFC WS-X6708-10GE SAL1414EH1R

3 8 CEF720 8 port 10GE with DFC WS-X6708-10GE SAL113702RH

4 6 Firewall Module WS-SVC-FWM-1 SAD08300G93

6 5 Supervisor Engine 720 10GE (Hot) VS-S720-10G SAL124052GU

7 6 Firewall Module WS-SVC-FWM-1 SAD090709YU

9 8 Network Analysis Module WS-SVC-NAM-2 SAD111101TX

Mod MAC addresses Hw Fw Sw Status

--- ---------------------------------- ------ ------------ ------------ -------

1 001f.ca7b.7052 to 001f.ca7b.7059 2.4 8.7(0.22)ACE A2(3.3) Ok

2 c47d.4f8f.f810 to c47d.4f8f.f817 2.1 12.2(18r)S1 12.2(33)SXI5 Ok

3 001a.6c9e.76a0 to 001a.6c9e.76a7 1.3 12.2(18r)S1 12.2(33)SXI5 Ok

4 0011.92b7.ac9c to 0011.92b7.aca3 3.0 7.2(1) 4.1(4) Ok

6 001c.58d0.f5d0 to 001c.58d0.f5d7 2.0 8.5(2) 12.2(33)SXI5 Ok

7 0003.e472.ab18 to 0003.e472.ab1f 3.0 7.2(1) 4.1(4) Ok

9 001b.2a4e.5ce8 to 001b.2a4e.5cef 4.2 7.2(1) 3.4(1a) Ok

Mod Sub-Module Model Serial Hw Status

---- --------------------------- ------------------ ----------- ------- -------

2 Distributed Forwarding Card WS-F6700-DFC3CXL SAL12426X4U 1.1 Ok

3 Distributed Forwarding Card WS-F6700-DFC3CXL SAL1136ZK52 1.0 Ok

6 Policy Feature Card 3 VS-F6K-PFC3CXL SAL1240509Z 1.0 Ok

6 MSFC3 Daughterboard VS-F6K-MSFC3 SAL124053J9 1.0 Ok

Mod Online Diag Status

---- -------------------

1 Pass

2 Pass

3 Pass

4 Pass

6 Pass

7 Pass

9 Pass

Switch Number: 2 Role: Virtual Switch Active

---------------------- -----------------------------

Mod Ports Card Type Model Serial No.

--- ----- -------------------------------------- ------------------ -----------

1 1 Application Control Engine Module ACE20-MOD-K9 SAD130801KH

2 8 CEF720 8 port 10GE with DFC WS-X6708-10GE SAL1152BFRV

3 8 CEF720 8 port 10GE with DFC WS-X6708-10GE SAL1414EH07

4 6 Firewall Module WS-SVC-FWM-1 SAD064502U5

6 5 Supervisor Engine 720 10GE (Active) VS-S720-10G SAL1204E26Z

7 6 Firewall Module WS-SVC-FWM-1 SAD080204M3

Mod MAC addresses Hw Fw Sw Status

--- ---------------------------------- ------ ------------ ------------ -------

1 001f.ca7b.6a52 to 001f.ca7b.6a59 2.4 8.7(0.22)ACE A2(3.3) Ok

2 001a.6c9f.0980 to 001a.6c9f.0987 1.3 12.2(18r)S1 12.2(33)SXI5 Ok

3 68ef.bde1.3838 to 68ef.bde1.383f 2.1 12.2(18r)S1 12.2(33)SXI5 Ok

4 0003.feaa.df80 to 0003.feaa.df87 1.1 7.2(1) 4.1(4) Ok

6 001e.7a58.3a10 to 001e.7a58.3a17 2.0 8.5(2) 12.2(33)SXI5 Ok

7 0003.fead.9c60 to 0003.fead.9c67 3.0 7.2(1) 4.1(4) Ok

Mod Sub-Module Model Serial Hw Status

---- --------------------------- ------------------ ----------- ------- -------

2 Distributed Forwarding Card WS-F6700-DFC3C SAL1203DD9U 1.0 Ok

3 Distributed Forwarding Card WS-F6700-DFC3CXL SAL12426X5C 1.1 Ok

6 Policy Feature Card 3 VS-F6K-PFC3CXL SAD120105YJ 1.0 Ok

6 MSFC3 Daughterboard VS-F6K-MSFC3 SAL1203D3YH 1.0 Ok

Mod Online Diag Status

---- -------------------

1 Pass

2 Pass

3 Pass

4 Pass

6 Pass

7 Pass

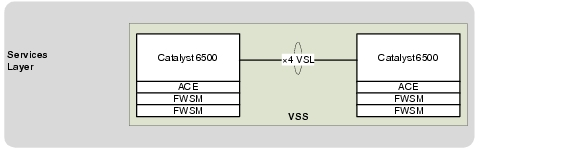

VSS Implementation

Virtual Switching System (VSS) combines two physical Cisco Catalyst 6500 Series Switches into one virtual switch.

Figure 2-16 Cisco Virtual Switching System

This configuration enables a unified control plane and allows both data planes to forward simultaneously. With VSS, the multi-chassis EtherChannel (MEC) capability is introduced, which allows a port channel to be defined across two physical switches.

Integrating VSS with Cisco DSN also increases the number of supported service modules from four per chassis to eight per VSS domain, which enables an active-active, highly available service chassis deployment.

For more information on VSS design, see the following link:

http://www.cisco.com/en/US/docs/solutions/Enterprise/Campus/VSS30dg/campusVSS_DG.html

Example 2-16 VSS Configuration

switch virtual domain 100

switch mode virtual

switch 1 priority 150

no dual-active detection pagp

no dual-active detection bfd

mac-address use-virtual

!

interface Port-channel1

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

switch virtual link 1

mls qos trust cos

no mls qos channel-consistency

port-channel port hash-distribution adaptive

!

interface Port-channel2

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

switch virtual link 2

mls qos trust cos

no mls qos channel-consistency

port-channel port hash-distribution adaptive

!

interface TenGigabitEthernet1/3/5

description To EAST-VSS-A 2/3/5

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 1 mode on

!

interface TenGigabitEthernet1/3/6

description To EAST-VSS-A 2/3/6

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 1 mode on

!

interface TenGigabitEthernet1/6/4

description To EAST-VSS-A 2/6/4

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 1 mode on

!

interface TenGigabitEthernet1/6/5

description To EAST-VSS-A 2/6/5

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 1 mode on

!

interface GigabitEthernet1/6/2

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event spanning-tree status

load-interval 30

dual-active fast-hello

end

!

interface TenGigabitEthernet2/3/5

description To EAST-VSS-A 1/3/5

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 2 mode on

!

interface TenGigabitEthernet2/3/6

description To EAST-VSS-A 1/3/6

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 2 mode on

!

interface TenGigabitEthernet2/6/4

description To EAST-VSS-A 1/6/4

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 2 mode on

!

interface TenGigabitEthernet2/6/5

description To EAST-VSS-A 1/6/5

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event bundle-status

load-interval 30

mls qos trust cos

channel-group 2 mode on

!

interface GigabitEthernet2/6/2

no switchport

no ip address

logging event link-status

logging event trunk-status

logging event spanning-tree status

load-interval 30

dual-active fast-hello

end

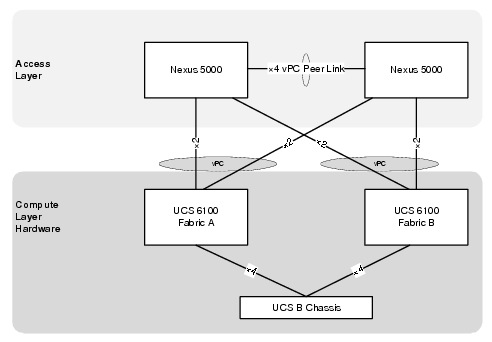

Multi-Chassis EtherChannel (MEC)

For the Cisco VMDC 2.1 solution, the Nexus 7010 aggregation switches interconnect to the Cisco DSN through the MEC running in the VSS.

Figure 2-17 Multi-Chassis EtherChannel Connections to DSN

Example 2-17 Catalyst 6500 DSN Configuration

interface Port-channel103

description L3 PC to EAST-DIST-A

no switchport

no ip address

logging event link-status

logging event bundle-status

load-interval 30

port-channel port hash-distribution adaptive

!

interface Port-channel104

description L3 PC to EAST-DIST-B

no switchport

no ip address

logging event link-status

logging event bundle-status

load-interval 30

port-channel port hash-distribution adaptive

!

interface TenGigabitEthernet1/2/1

description To DIST-N7010-A-EAST-DIST-A Eth 3/4

no switchport

no ip address

logging event bundle-status

load-interval 30

channel-group 103 mode active

!

interface TenGigabitEthernet1/3/1

description To DIST-N7010-B-EAST-DIST-B Eth 4/4

no switchport

no ip address

logging event bundle-status

load-interval 30

channel-group 104 mode active

!

interface TenGigabitEthernet2/2/1

description To DIST-N7010-A-EAST-DIST-A Eth 4/4

no switchport

no ip address

logging event bundle-status

load-interval 30

channel-group 103 mode active

!

interface TenGigabitEthernet2/3/1

description To DIST-N7010-B-EAST-DIST-B Eth 3/4

no switchport

no ip address

logging event bundle-status

load-interval 30

channel-group 104 mode active

Example 2-18 Nexus 7010 Configuration

! EAST-DIST-A

interface port-channel103

description L3 link to EAST-VSS

no lacp graceful-convergence

!

interface Ethernet3/4

description To EAST-VSS-A Ten1/2/1

channel-group 103 mode active

no shutdown

!

interface Ethernet4/4

description To EAST-VSS-A Ten2/2/1

channel-group 103 mode active

no shutdown

!

! EAST-DIST-B

!

interface port-channel104

description L3 link to EAST-VSS

no lacp graceful-convergence

!

interface Ethernet3/4

description To EAST-VSS-A Ten2/3/1

channel-group 104 mode active

no shutdown

!

interface Ethernet4/4

description To EAST-VSS-A Ten1/3/1

channel-group 104 mode active

no shutdown
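For a port channel to behave as a MEC, its member links must terminate on both VSS chassis. In the Catalyst 6500 VSS interface naming used in Example 2-17, the first number of the interface identifier is the switch (chassis) number. The Python sketch below groups the member interfaces by channel group and reports which chassis each group spans. The function name is hypothetical, and the membership table is copied from Example 2-17.

```python
import re

# Member interface -> channel group, copied from Example 2-17.
MEMBERS = {
    "TenGigabitEthernet1/2/1": 103,
    "TenGigabitEthernet1/3/1": 104,
    "TenGigabitEthernet2/2/1": 103,
    "TenGigabitEthernet2/3/1": 104,
}


def chassis_per_channel(members):
    """Return {channel group: set of VSS switch numbers with a member link}."""
    spans = {}
    for interface, group in members.items():
        # Interface format is <type><switch>/<module>/<port>.
        match = re.search(r"(\d+)/\d+/\d+$", interface)
        if match is None:
            raise ValueError(f"unexpected interface name: {interface}")
        spans.setdefault(group, set()).add(int(match.group(1)))
    return spans


for group, chassis in sorted(chassis_per_channel(MEMBERS).items()):
    status = "spans both chassis" if chassis == {1, 2} else "single chassis only"
    print(f"Port-channel{group}: {status}")
```

A group that reports a single chassis would still form a port channel, but it would lose the chassis-level redundancy that the MEC design is meant to provide.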

Virtual Routing and Forwarding (VRF)

In Cisco VMDC 2.1, the DSN uses a dual-homed routed approach for data path connectivity to the redundant aggregation layer switches. The FWSM and ACE modules operate in routed mode. Each tenant is deployed with two VRFs: unprotected and protected. Routes propagate to all hops in a Layer 3 domain, and the VLANs used by the FWSM and ACE service modules are then mapped to the unprotected and protected VRFs. By default, communication between VRF instances, and between tenant zones, is prevented to protect the privacy of each tenant.

Figure 2-18 VRFs and DSN Interconnections

The following configurations are required for each zone:

Unprotected Zone VRF

• VRF definition

• Unprotected Loopback Interface

• Dot1q sub-interface to Aggregation A

• Dot1q sub-interface to Aggregation B

• Unprotected ACE VLAN

• FWSM Public side VLAN

• OSPF routing process

• Static route to Protected Zone subnet

• Static routes to ACE VIP and SNAT subnets

Example 2-19 is an example unprotected VRF configuration.

Example 2-19 DSN Unprotected VRF Configuration

ip vrf T1U

description Tenant 1 Unprotected

rd 10.1.31.13:13

!

interface Loopback1

description RID for VRF T1U

ip vrf forwarding T1U

ip address 10.1.31.13 255.255.255.255

!

interface Port-channel103

description L3 PC to EAST-DIST-A

no switchport

no ip address

logging event link-status

logging event bundle-status

load-interval 30

port-channel port hash-distribution adaptive

!

interface Port-channel103.1

encapsulation dot1Q 3101

ip vrf forwarding T1U

ip address 10.1.28.46 255.255.255.252

ip ospf network point-to-point

!

interface Port-channel104

description L3 PC to EAST-DIST-B

!

interface Port-channel104.1

encapsulation dot1Q 3201

ip vrf forwarding T1U

ip address 10.1.28.50 255.255.255.252

ip ospf network point-to-point

!

interface Vlan211

description Tenant 1 ACE Unprotected VLAN

ip vrf forwarding T1U

ip address 10.1.28.129 255.255.255.248

load-interval 30

!

interface Vlan212

description Tenant 1 FWSM Outside Unprotected VLAN

ip vrf forwarding T1U

ip address 10.1.28.161 255.255.255.248

load-interval 30

!

router ospf 1 vrf T1U

router-id 10.1.31.13

log-adjacency-changes

auto-cost reference-bandwidth 100000

capability vrf-lite

area 0.0.0.10 nssa

timers throttle spf 10 100 5000

timers throttle lsa all 10 100 5000

timers lsa arrival 80

redistribute static subnets route-map T1U

passive-interface Vlan211

passive-interface Vlan212

network 10.1.28.44 0.0.0.3 area 0.0.0.10

network 10.1.28.48 0.0.0.3 area 0.0.0.10

network 10.1.28.128 0.0.0.7 area 0.0.0.10

network 10.1.28.160 0.0.0.7 area 0.0.0.10

network 10.1.31.13 0.0.0.0 area 0.0.0.10

!

ip route vrf T1U 10.1.24.0 255.255.255.0 10.1.28.130

ip route vrf T1U 10.1.26.0 255.255.255.0 10.1.28.130

ip route vrf T1U 10.1.32.0 255.255.224.0 10.1.28.163

!

route-map T1U permit 10

match ip address 101

!

access-list 101 permit ip 10.1.24.0 0.0.0.255 any

access-list 101 permit ip 10.1.26.0 0.0.0.255 any

access-list 101 permit ip 10.1.32.0 0.0.31.255 any
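In Example 2-19, static routes are redistributed into OSPF through route-map T1U, which matches access list 101. A static route that is missing from the access list would not be redistributed. The Python sketch below is a simplified offline check that each static route's network address falls inside one of the access list source ranges. It ignores the destination part of the extended access list (which is `any` in this example) and is not a model of how IOS evaluates the route map. The function names are hypothetical.

```python
import ipaddress

# (network, netmask) from the "ip route vrf T1U" lines in Example 2-19.
STATIC_ROUTES = [
    ("10.1.24.0", "255.255.255.0"),
    ("10.1.26.0", "255.255.255.0"),
    ("10.1.32.0", "255.255.224.0"),
]

# (source, wildcard mask) from the "access-list 101" lines in Example 2-19.
ACL_101_SOURCES = [
    ("10.1.24.0", "0.0.0.255"),
    ("10.1.26.0", "0.0.0.255"),
    ("10.1.32.0", "0.0.31.255"),
]


def network(address, mask):
    """Build a network from an address plus a netmask or wildcard mask."""
    return ipaddress.ip_network(f"{address}/{mask}")


def unmatched_routes(routes, acl_sources):
    """Return the static routes not covered by any access list entry."""
    acl_networks = [network(source, wildcard) for source, wildcard in acl_sources]
    missing = []
    for address, mask in routes:
        route = network(address, mask)
        if not any(route.network_address in acl for acl in acl_networks):
            missing.append(str(route))
    return missing


print(unmatched_routes(STATIC_ROUTES, ACL_101_SOURCES) or "all static routes matched")
```

The same check applies to the protected VRF by substituting its static routes and access list entries.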

Protected Zone VRF

• VRF definition

• Protected Loopback Interface

• Dot1q sub-interface to Aggregation A

• Dot1q sub-interface to Aggregation B

• Protected ACE VLAN

• FWSM Private side VLAN

• OSPF routing process

• Default Static route to Unprotected Zone

• Static routes to ACE VIP and SNAT subnets

Example 2-20 is an example protected VRF configuration.

Example 2-20 DSN Protected VRF Configuration

ip vrf T1P

description Tenant 1 Protected

rd 10.1.63.13:13

!

interface Loopback101

description RID for VRF T1P

ip vrf forwarding T1P

ip address 10.1.63.13 255.255.255.255

!

interface Port-channel103

description L3 PC to EAST-DIST-A

no switchport

no ip address

logging event link-status

logging event bundle-status

load-interval 30

port-channel port hash-distribution adaptive

!

interface Port-channel103.101

encapsulation dot1Q 3301

ip vrf forwarding T1P

ip address 10.1.60.46 255.255.255.252

ip ospf network point-to-point

!

interface Port-channel104

description L3 PC to EAST-DIST-B

!

interface Port-channel104.101

encapsulation dot1Q 3401

ip vrf forwarding T1P

ip address 10.1.60.50 255.255.255.252

ip ospf network point-to-point

!

interface Vlan611

description Tenant 1 ACE Protected VLAN

ip vrf forwarding T1P

ip address 10.1.60.129 255.255.255.248

load-interval 30

!

interface Vlan612

description Tenant 1 FWSM Inside Protected VLAN

ip vrf forwarding T1P

ip address 10.1.60.161 255.255.255.248

load-interval 30

!

router ospf 101 vrf T1P

router-id 10.1.63.13

log-adjacency-changes

auto-cost reference-bandwidth 100000

capability vrf-lite

area 0.0.0.10 nssa default-information-originate

timers throttle spf 10 100 5000

timers throttle lsa all 10 100 5000

timers lsa arrival 80

redistribute static subnets route-map T1P

passive-interface Vlan611

passive-interface Vlan612

network 10.1.60.44 0.0.0.3 area 0.0.0.10

network 10.1.60.48 0.0.0.3 area 0.0.0.10

network 10.1.60.128 0.0.0.7 area 0.0.0.10

network 10.1.60.160 0.0.0.7 area 0.0.0.10

network 10.1.63.13 0.0.0.0 area 0.0.0.10

!

ip route vrf T1P 0.0.0.0 0.0.0.0 10.1.60.163

ip route vrf T1P 10.1.56.0 255.255.255.0 10.1.60.130

ip route vrf T1P 10.1.58.0 255.255.255.0 10.1.60.130

!

route-map T1P permit 10

match ip address 2101

!

access-list 2101 permit ip 10.1.56.0 0.0.0.255 any

access-list 2101 permit ip 10.1.58.0 0.0.0.255 any
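
The addressing in Example 2-20 can be sanity-checked offline before it is applied. The following is a minimal Python sketch, not part of the validated design. It assumes the values are copied by hand from the example. The helper names (`wildcard_to_network`, `next_hop_interface`, `uncovered_interfaces`, `statics_not_in_acl`) are hypothetical. It checks that each static-route next hop sits on a connected VLAN, that every interface address is covered by an OSPF network statement, and that the non-default static routes fall inside the source ranges of ACL 2101.

```python
import ipaddress

# Addresses copied from Example 2-20 (VRF T1P). The dictionary and list
# layouts are illustrative assumptions, not a Cisco data model.
CONNECTED = {
    "Loopback101": "10.1.63.13/32",
    "Port-channel103.101": "10.1.60.46/30",
    "Port-channel104.101": "10.1.60.50/30",
    "Vlan611": "10.1.60.129/29",
    "Vlan612": "10.1.60.161/29",
}
STATIC_ROUTES = [
    ("0.0.0.0/0", "10.1.60.163"),
    ("10.1.56.0/24", "10.1.60.130"),
    ("10.1.58.0/24", "10.1.60.130"),
]
OSPF_NETWORKS = [
    ("10.1.60.44", "0.0.0.3"),
    ("10.1.60.48", "0.0.0.3"),
    ("10.1.60.128", "0.0.0.7"),
    ("10.1.60.160", "0.0.0.7"),
    ("10.1.63.13", "0.0.0.0"),
]
ACL_2101 = [("10.1.56.0", "0.0.0.255"), ("10.1.58.0", "0.0.0.255")]


def wildcard_to_network(address, wildcard):
    """Convert an address plus a contiguous wildcard mask to an IPv4Network."""
    host_bits = int(ipaddress.IPv4Address(wildcard))
    prefix = 32 - host_bits.bit_length()
    if host_bits != (1 << (32 - prefix)) - 1:
        raise ValueError(f"non-contiguous wildcard mask: {wildcard}")
    return ipaddress.ip_network(f"{address}/{prefix}")


def next_hop_interface(next_hop):
    """Return the connected interface whose subnet contains the next hop."""
    hop = ipaddress.ip_address(next_hop)
    for name, cidr in CONNECTED.items():
        if hop in ipaddress.ip_interface(cidr).network:
            return name
    return None


def uncovered_interfaces():
    """List interfaces whose address matches no OSPF network statement."""
    nets = [wildcard_to_network(a, w) for a, w in OSPF_NETWORKS]
    return [
        name
        for name, cidr in CONNECTED.items()
        if not any(ipaddress.ip_interface(cidr).ip in n for n in nets)
    ]


def statics_not_in_acl():
    """List non-default static prefixes outside every ACL 2101 source range."""
    acl_nets = [wildcard_to_network(a, w) for a, w in ACL_2101]
    missing = []
    for prefix, _next_hop in STATIC_ROUTES:
        net = ipaddress.ip_network(prefix)
        if net.prefixlen == 0:
            continue  # the default route is not listed in ACL 2101
        if not any(net.network_address in acl for acl in acl_nets):
            missing.append(prefix)
    return missing


if __name__ == "__main__":
    for prefix, hop in STATIC_ROUTES:
        print(prefix, "via", hop, "on", next_hop_interface(hop))
    print("Interfaces outside OSPF:", uncovered_interfaces())
    print("Static routes outside ACL 2101:", statics_not_in_acl())
```

With the values from Example 2-20, both list checks come back empty, and each next hop resolves to either Vlan611 (ACE) or Vlan612 (FWSM).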

Application Control Engine (ACE)

In the VMDC 2.1 solution, the Cisco ACE modules provide the following features:

• Virtualization (context and resource allocation)

• Redundancy (active-active context failover)

• Load balancing (protocols, stickiness)

• Source NAT (static and dynamic NAT)

The first step in deploying the Cisco ACE module in the Cisco VMDC 2.1 network is to allocate the VLANs that the module will use from the DSN. Use the svclc vlan-group command to assign VLANs to VLAN groups, and use the svclc switch command to apply those VLAN groups to the Cisco ACE module.

Example 2-21 VLAN Allocation on the Cisco ACE Module (Catalyst 6500 VSS Configuration)

svclc multiple-vlan-interfaces

svclc switch 1 module 1 vlan-group 1,2

svclc switch 2 module 1 vlan-group 1,2

svclc vlan-group 1 3-6,32,40-43,132

svclc vlan-group 2 211,221,231,241,251,261,271,281,611,621,631,641,651,671

svclc vlan-group 2 681
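
A VLAN that an ACE context tries to allocate must first be present in a VLAN group assigned to the module. The following Python sketch expands the group definitions from Example 2-21 and checks them against the VLANs used in the ACE examples in this section (32, 40, 132, 211, and 611). It is illustrative only. The function and variable names are hypothetical, and the values are transcribed by hand from the configuration.

```python
def expand_vlans(spec):
    """Expand a VLAN list such as '3-6,32,40-43' into a set of integers."""
    vlans = set()
    for part in spec.split(","):
        if "-" in part:
            low, high = part.split("-")
            vlans.update(range(int(low), int(high) + 1))
        else:
            vlans.add(int(part))
    return vlans


# svclc vlan-group lines from Example 2-21. Group 2 is split across two
# lines in the configuration, so the two lists are merged here.
VLAN_GROUPS = {
    1: expand_vlans("3-6,32,40-43,132"),
    2: expand_vlans(
        "211,221,231,241,251,261,271,281,611,621,631,641,651,671"
    ) | expand_vlans("681"),
}

# svclc switch <n> module <m> vlan-group lines: (switch, module) -> groups.
MODULE_GROUPS = {(1, 1): [1, 2], (2, 1): [1, 2]}


def vlans_for_module(switch, module):
    """Return every VLAN made available to the given ACE module."""
    allowed = set()
    for group in MODULE_GROUPS.get((switch, module), []):
        allowed |= VLAN_GROUPS[group]
    return allowed


def missing_vlans(switch, module, required):
    """Return the required VLANs that the module has not been given."""
    return sorted(set(required) - vlans_for_module(switch, module))


if __name__ == "__main__":
    # Management, shared, FT, and Tenant 1 VLANs used by the ACE examples.
    required = [32, 40, 132, 211, 611]
    for key in MODULE_GROUPS:
        print(key, "missing:", missing_vlans(*key, required))
```

Because both VSS chassis receive the same groups, the check returns an empty list for each module.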

After the VLANs are allocated, the contexts for the unprotected and protected tenant zones are created, as shown in Example 2-22, Example 2-23, Example 2-24, and Example 2-25.

Example 2-22 Example Tenant Contexts on the Cisco ACE Module (System Context Configuration)

EAST-ACE-A# show run

Generating configuration....

!

logging enable

logging timestamp

logging trap 7

logging supervisor 7

logging host 172.18.177.178 udp/514

!

peer hostname EAST-ACE-B

!

login timeout 0

line vty

session-limit 100

hostname EAST-ACE-A

boot system image:c6ace-t1k9-mz.A2_3_3.bin

!

clock timezone standard EST

!

class-map type management match-any REMOTE-ACCESS_ALL

description "Remote Management for ALL"

2 match protocol telnet any

3 match protocol ssh any

4 match protocol icmp any

5 match protocol http any

6 match protocol snmp any

7 match protocol https any

8 match protocol kalap-udp any

!

policy-map type management first-match REMOTE-MGMT

class REMOTE-ACCESS_ALL

permit

!

interface vlan 32

ip address 10.0.32.112 255.255.255.0

peer ip address 10.0.32.113 255.255.255.0

mtu 1500

service-policy input REMOTE-MGMT

no shutdown

!

ft interface vlan 132

ip address 10.0.132.112 255.255.255.0

peer ip address 10.0.132.113 255.255.255.0

no shutdown

!

ft peer 1

heartbeat interval 300

heartbeat count 10

ft-interface vlan 132

!

ft group 100

peer 1

priority 250

peer priority 200

associate-context Admin

inservice

!

ip route 0.0.0.0 0.0.0.0 10.0.32.1

!

context T1U

allocate-interface vlan 40

allocate-interface vlan 211

!

snmp-server community public group Network-Monitor

!

snmp-server enable traps slb serverfarm

snmp-server enable traps snmp coldstart

snmp-server enable traps virtual-context

snmp-server enable traps license

snmp-server enable traps slb vserver

snmp-server enable traps slb real

snmp-server enable traps syslog

snmp-server enable traps snmp authentication

snmp-server enable traps snmp linkup

snmp-server enable traps snmp linkdown

!

ft group 1

peer 1

priority 250

peer priority 200

associate-context T1U

inservice

!

Example 2-23 Example Tenant Contexts on the Cisco ACE Module (Unprotected Tenant Context Configuration)

EAST-ACE-A/T1U# show run

Generating configuration....

!

logging enable

logging timestamp

logging host 10.0.32.34 udp/514

!

access-list anyone line 10 extended permit ip any any

!

probe icmp PROBE_ICMP

interval 10

faildetect 2

passdetect interval 10

!

rserver host Avalanche-VM1

description Avalanche Server-1

ip address 10.1.3.230

inservice

rserver host Avalanche-VM2

description Avalanche Server-2

ip address 10.1.3.231

inservice

rserver host Avalanche-VM3

description Avalanche Server-3

ip address 10.1.3.232

inservice

!

serverfarm host Avalanche-sfarm1

predictor leastconns

rserver Avalanche-VM1

inservice

rserver Avalanche-VM2

inservice

rserver Avalanche-VM3

inservice

!

parameter-map type connection 5min_IDLE

slowstart

set timeout inactivity 300

!

class-map type management match-any REMOTE-ACCESS_ALL

description Remote Management for ALL

2 match protocol telnet any

3 match protocol ssh any

4 match protocol icmp any

5 match protocol http any

6 match protocol snmp any

7 match protocol https any

8 match protocol kalap-udp any

class-map match-any VIP_10.1.24.101_udp:53

2 match virtual-address 10.1.24.101 udp eq domain

class-map match-any VIP_10.1.24.102_tcp:80

2 match virtual-address 10.1.24.102 tcp eq www

!

policy-map type management first-match REMOTE-MGMT

class REMOTE-ACCESS_ALL

permit

!

policy-map type loadbalance first-match Avalanche-LBPM1

class class-default

serverfarm Avalanche-sfarm1

policy-map type loadbalance first-match Avalanche-LBPM2

class class-default

serverfarm Avalanche-sfarm1

!

policy-map multi-match Avalanche-VM-Policy

class VIP_10.1.24.101_udp:53

loadbalance vip inservice

loadbalance policy Avalanche-LBPM1

loadbalance vip icmp-reply active

nat dynamic 53 vlan 211

connection advanced-options 5min_IDLE

class VIP_10.1.24.102_tcp:80

loadbalance vip inservice

loadbalance policy Avalanche-LBPM2

loadbalance vip icmp-reply active

nat dynamic 80 vlan 211

connection advanced-options 5min_IDLE

!

interface vlan 40

ip address 10.0.40.5 255.255.255.0

alias 10.0.40.4 255.255.255.0

peer ip address 10.0.40.6 255.255.255.0

access-group input anyone

service-policy input REMOTE-MGMT

no shutdown

!

interface vlan 211

ip address 10.1.28.131 255.255.255.248

alias 10.1.28.130 255.255.255.248

peer ip address 10.1.28.132 255.255.255.248

access-group input anyone

nat-pool 53 10.1.26.1 10.1.26.1 netmask 255.255.255.0 pat

nat-pool 80 10.1.26.2 10.1.26.2 netmask 255.255.255.0 pat

service-policy input Avalanche-VM-Policy

service-policy input REMOTE-MGMT

no shutdown

!

ip route 0.0.0.0 0.0.0.0 10.1.28.129

ip route 10.0.32.0 255.255.240.0 10.0.40.1

ip route 172.18.0.0 255.255.0.0 10.0.40.1

!

snmp-server community public group Network-Monitor

!

snmp-server enable traps snmp authentication

snmp-server enable traps snmp linkup

snmp-server enable traps snmp linkdown
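
The VIP and source NAT addresses in the T1U context are reachable only if the DSN routes them to the ACE alias address on VLAN 211. The following Python sketch cross-checks the ACE values in Example 2-23 against the VRF T1U static routes on the DSN. It assumes the values are transcribed by hand, and it uses a simplified longest-prefix match rather than actual router behavior. All names in the code are hypothetical.

```python
import ipaddress

# "ip route vrf T1U" statements from the DSN unprotected VRF configuration.
DSN_T1U_STATIC = [
    ("10.1.24.0/24", "10.1.28.130"),
    ("10.1.26.0/24", "10.1.28.130"),
    ("10.1.32.0/19", "10.1.28.163"),
]

# Values from the ACE T1U context in Example 2-23.
ACE_VLAN211 = ipaddress.ip_interface("10.1.28.131/29")
ACE_VLAN211_ALIAS = "10.1.28.130"
ACE_DEFAULT_GATEWAY = "10.1.28.129"
VIPS = ["10.1.24.101", "10.1.24.102"]
NAT_POOLS = {53: "10.1.26.1", 80: "10.1.26.2"}


def dsn_next_hop(destination):
    """Return the next hop of the longest matching static route, or None."""
    address = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in DSN_T1U_STATIC
        if address in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None
    return max(matches, key=lambda match: match[0].prefixlen)[1]


def misrouted_addresses():
    """List VIP or NAT addresses the DSN would not send to the ACE alias."""
    candidates = VIPS + list(NAT_POOLS.values())
    return [a for a in candidates if dsn_next_hop(a) != ACE_VLAN211_ALIAS]


def gateway_on_vlan211():
    """Check that the ACE default gateway is inside the VLAN 211 subnet."""
    return ipaddress.ip_address(ACE_DEFAULT_GATEWAY) in ACE_VLAN211.network


if __name__ == "__main__":
    for address in VIPS + list(NAT_POOLS.values()):
        print(address, "->", dsn_next_hop(address))
    print("Misrouted:", misrouted_addresses())
    print("Gateway on VLAN 211:", gateway_on_vlan211())
```

With the values shown, both VIPs and both NAT pool addresses resolve to 10.1.28.130, the alias shared by the active and standby ACE modules.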

Example 2-24 Example Tenant Contexts on the Cisco ACE Module (System Context Configuration)

context T1P

allocate-interface vlan 40

allocate-interface vlan 611

!

ft group 101

peer 1

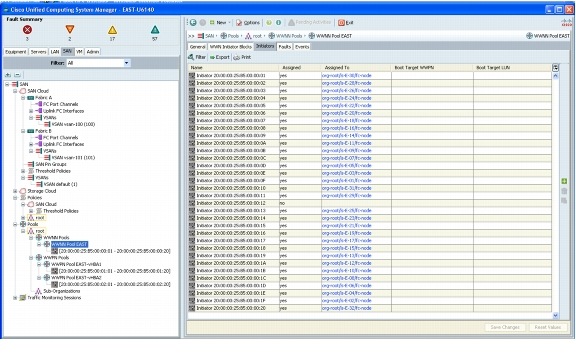

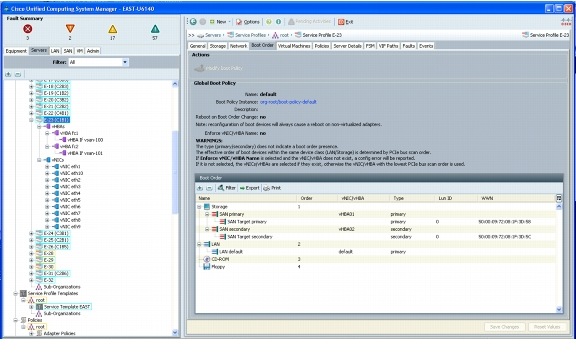

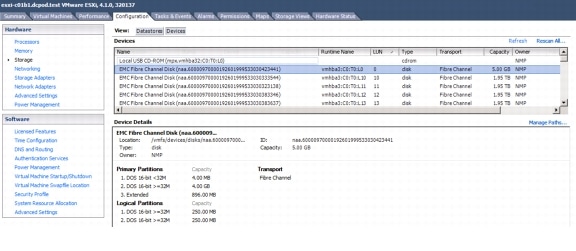

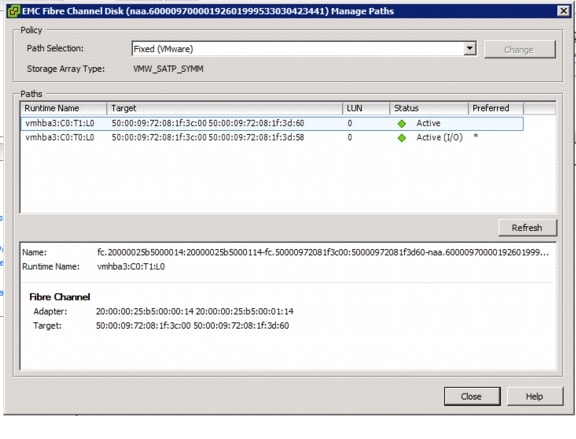

priority 250