Cisco Overlay Transport Virtualization Technology Introduction and Deployment Considerations

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- Updated:

- October 28, 2010

Chapter: OTV Technology Introduction and Deployment Considerations

- OTV Technology Primer

- OTV Terminology

- Control Plane Considerations

- Data Plane: Unicast Traffic

- Data Plane: Multicast Traffic

- Data Plane: Broadcast Traffic

- Failure Isolation

- Multi-Homing

- Traffic Load Balancing

- QoS Considerations

- FHRP Isolation

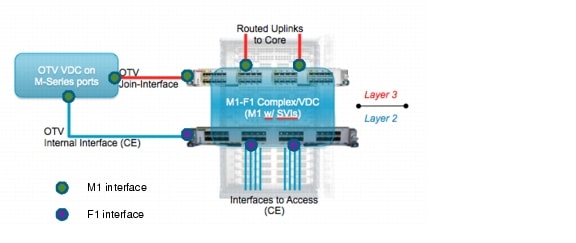

- OTV and SVIs Coexistence

- OTV Scalability Considerations

- OTV Hardware Support and Licensing Information

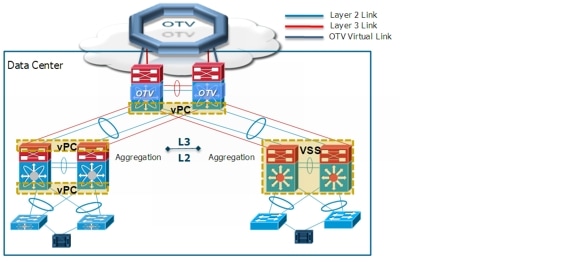

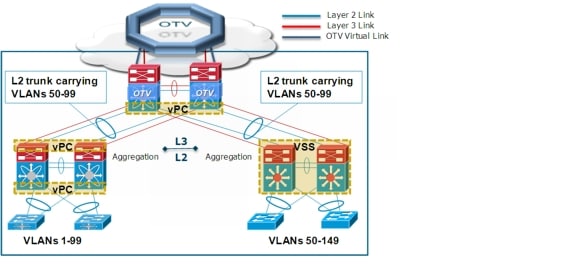

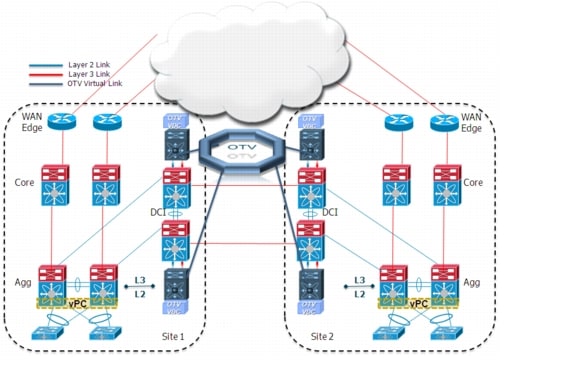

- OTV Deployment Options

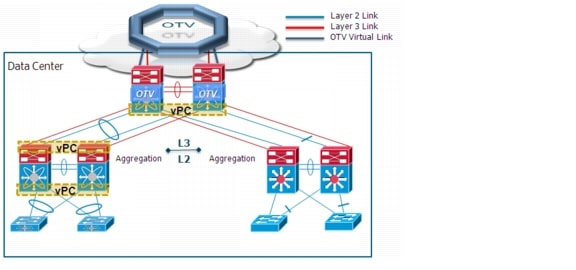

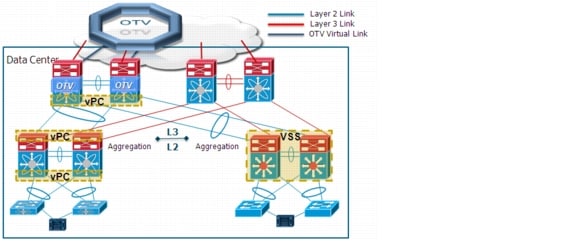

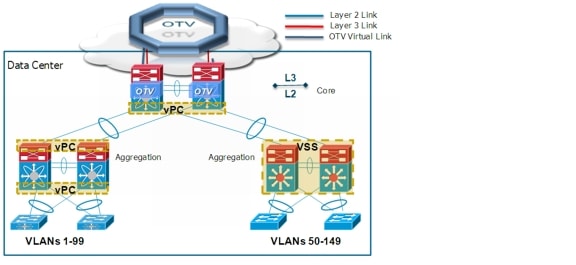

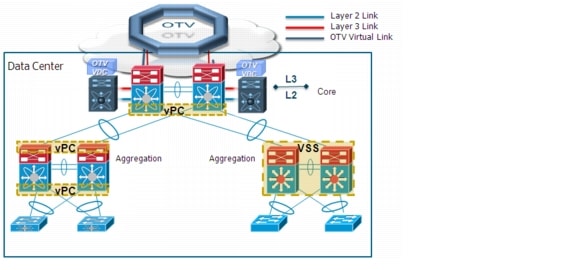

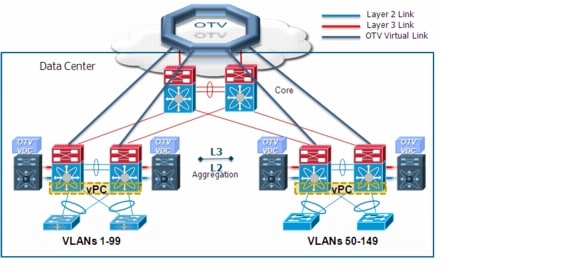

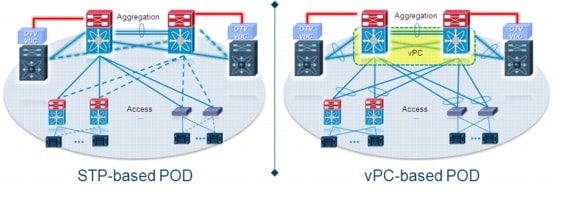

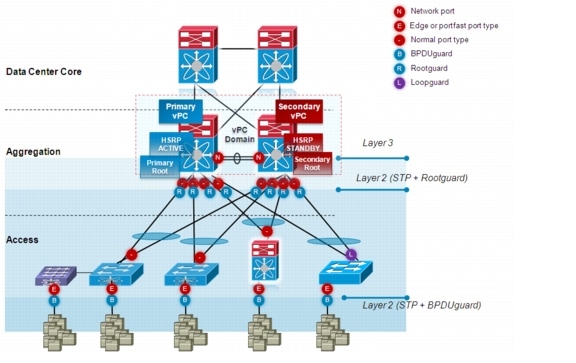

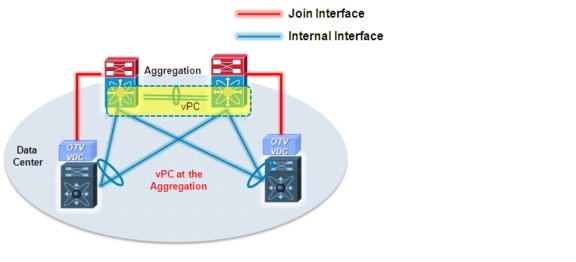

- Deploying OTV at the DC Aggregation

OTV Technology Introduction and Deployment Considerations

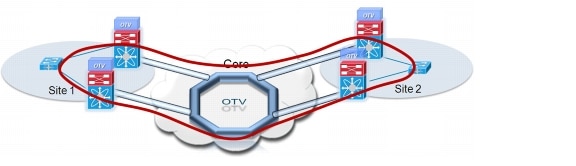

This document introduces a Cisco innovative LAN extension technology called Overlay Transport Virtualization (OTV). OTV is an IP-based functionality that has been designed from the ground up to provide Layer 2 extension capabilities over any transport infrastructure: Layer 2 based, Layer 3 based, IP switched, label switched, and so on. The only requirement from the transport infrastructure is providing IP connectivity between remote data center sites. In addition, OTV provides an overlay that enables Layer 2 connectivity between separate Layer 2 domains while keeping these domains independent and preserving the fault-isolation, resiliency, and load-balancing benefits of an IP-based interconnection.

As of this writing, the Nexus 7000 is the only Cisco platform supporting OTV. All the technology and deployment considerations contained in this paper focus on positioning the Nexus 7000 platforms inside the data center to establish Layer 2 connectivity between remote sites. OTV support on Nexus 7000 platforms has been introduced from the NX-OS 5.0(3) software release. When necessary, available OTV features will be identified in the current release or mentioned as a future roadmap function. This document will be periodically updated every time a software release introduces significant new functionality.

OTV Technology Primer

Before discussing OTV in detail, it is worth differentiating this technology from traditional LAN extension solutions such as EoMPLS and VPLS.

OTV introduces the concept of "MAC routing," which means a control plane protocol is used to exchange MAC reachability information between network devices providing LAN extension functionality. This is a significant shift from Layer 2 switching that traditionally leverages data plane learning, and it is justified by the need to limit flooding of Layer 2 traffic across the transport infrastructure. As emphasized throughout this document, Layer 2 communications between sites resembles routing more than switching. If the destination MAC address information is unknown, then traffic is dropped (not flooded), preventing waste of precious bandwidth across the WAN.

OTV also introduces the concept of dynamic encapsulation for Layer 2 flows that need to be sent to remote locations. Each Ethernet frame is individually encapsulated into an IP packet and delivered across the transport network. This eliminates the need to establish virtual circuits, called Pseudowires, between the data center locations. Immediate advantages include improved flexibility when adding or removing sites to the overlay, more optimal bandwidth utilization across the WAN (specifically when the transport infrastructure is multicast enabled), and independence from the transport characteristics (Layer 1, Layer 2 or Layer 3).

Finally, OTV provides a native built-in multi-homing capability with automatic detection, critical to increasing high availability of the overall solution. Two or more devices can be leveraged in each data center to provide LAN extension functionality without running the risk of creating an end-to-end loop that would jeopardize the overall stability of the design. This is achieved by leveraging the same control plane protocol used for the exchange of MAC address information, without the need of extending the Spanning-Tree Protocol (STP) across the overlay.

The following sections detail the OTV technology and introduce alternative design options for deploying OTV within, and between, data centers.

OTV Terminology

Before learning how OTV control and data planes work, you must understand OTV specific terminology.

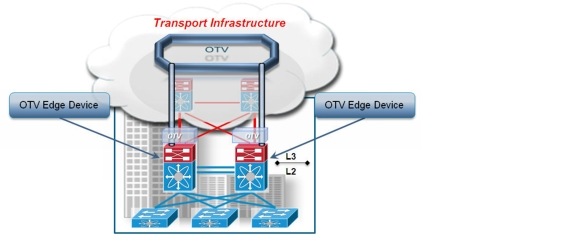

Edge Device

The edge device (Figure 1-1) performs OTV functions: it receives the Layer 2 traffic for all VLANs that need to be extended to remote locations and dynamically encapsulates the Ethernet frames into IP packets that are then sent across the transport infrastructure.

Figure 1-1 OTV Edge Device

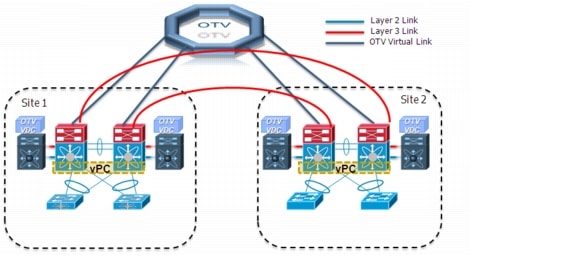

It is expected that at least two OTV edge devices are deployed at each data center site to improve the resiliency, as discussed more fully in Multi-Homing.

Finally, the OTV edge device can be positioned in different parts of the data center. The choice depends on the site network topology. Figure 1-1 shows edge device deployment at the aggregation layer.

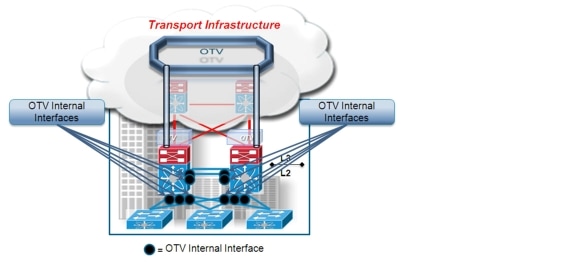

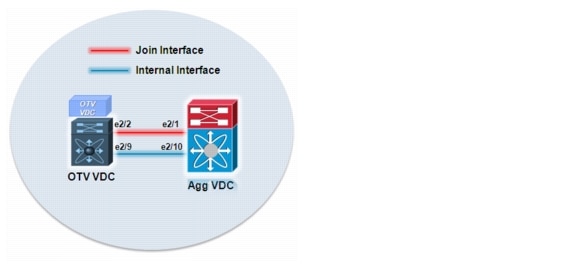

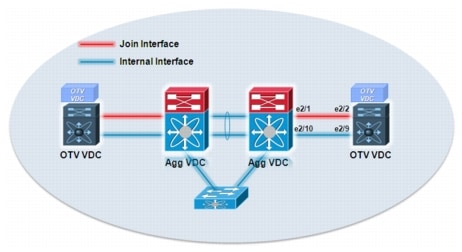

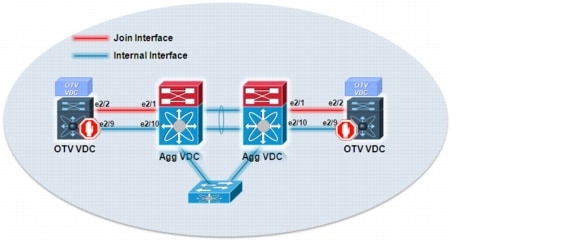

Internal Interfaces

To perform OTV functionality, the edge device must receive the Layer 2 traffic for all VLANs that need to be extended to remote locations. The Layer 2 interfaces, where the Layer 2 traffic is usually received, are named internal interfaces (Figure 1-2).

Figure 1-2 OTV Internal Interfaces

Internal interfaces are regular Layer 2 interfaces configured as access or trunk ports. Trunk configuration is typical given the need to concurrently extend more than one VLAN across the overlay. There is no need to apply OTV-specific configuration to these interfaces. Also, typical Layer 2 functions (like local switching, spanning-tree operation, data plane learning, and flooding) are performed on the internal interfaces. Figure 1-2 shows Layer 2 trunks that are considered internal interfaces which are usually deployed between the edge devices also.

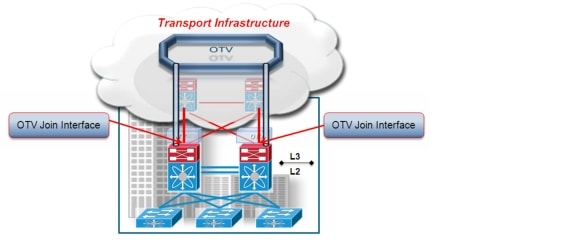

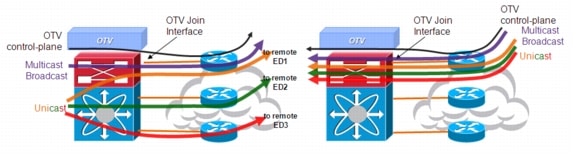

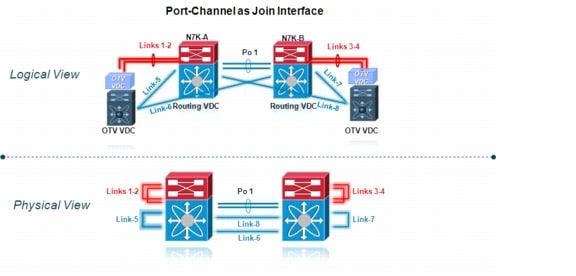

Join Interface

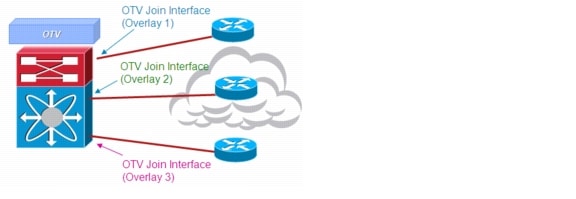

The Join interface (Figure 1-3) is used to source the OTV encapsulated traffic and send it to the Layer 3 domain of the data center network.

Figure 1-3 OTV Join Interface

The Join interface is a Layer 3 entity and with the current NX-OS release can only be defined as a physical interface (or subinterface) or as a logical one (i.e. Layer 3 port channel or Layer 3 port channel subinterface). A single Join interface can be defined and associated with a given OTV overlay. Multiple overlays can also share the same Join interface.

Note ![]() Support for loopback interfaces as OTV Join interfaces is planned for a future NX-OS release.

Support for loopback interfaces as OTV Join interfaces is planned for a future NX-OS release.

The Join interface is used by the edge device for different purposes:

•![]() "Join" the Overlay network and discover the other remote OTV edge devices.

"Join" the Overlay network and discover the other remote OTV edge devices.

•![]() Form OTV adjacencies with the other OTV edge devices belonging to the same VPN.

Form OTV adjacencies with the other OTV edge devices belonging to the same VPN.

•![]() Send/receive MAC reachability information.

Send/receive MAC reachability information.

•![]() Send/receive unicast and multicast traffic.

Send/receive unicast and multicast traffic.

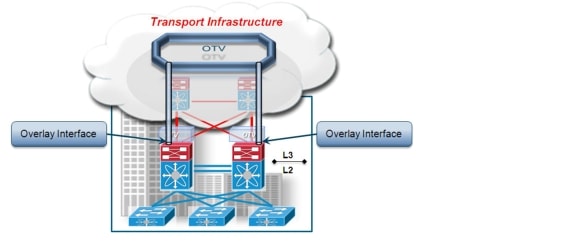

Overlay Interface

The Overlay interface (Figure 1-4) is a logical multi-access and multicast-capable interface that must be explicitly defined by the user and where the entire OTV configuration is applied.

Figure 1-4 OTV Overlay Interface

Every time the OTV edge device receives a Layer 2 frame destined for a remote data center site, the frame is logically forwarded to the Overlay interface. This instructs the edge device to perform the dynamic OTV encapsulation on the Layer 2 packet and send it to the Join interface toward the routed domain.

Control Plane Considerations

As mentioned, one fundamental principle on which OTV operates is the use of a control protocol running between the OTV edge devices to advertise MAC address reachability information instead of using data plane learning. However, before MAC reachability information can be exchanged, all OTV edge devices must become "adjacent" to each other from an OTV perspective. This can be achieved in two ways, depending on the nature of the transport network interconnecting the various sites:

•![]() If the transport is multicast enabled, a specific multicast group can be used to exchange the control protocol messages between the OTV edge devices.

If the transport is multicast enabled, a specific multicast group can be used to exchange the control protocol messages between the OTV edge devices.

•![]() If the transport is not multicast enabled, an alternative deployment model is available starting from NX-OS release 5.2(1), where one (or more) OTV edge device can be configured as an "Adjacency Server" to which all other edge devices register and communicates to them the list of devices belonging to a given overlay.

If the transport is not multicast enabled, an alternative deployment model is available starting from NX-OS release 5.2(1), where one (or more) OTV edge device can be configured as an "Adjacency Server" to which all other edge devices register and communicates to them the list of devices belonging to a given overlay.

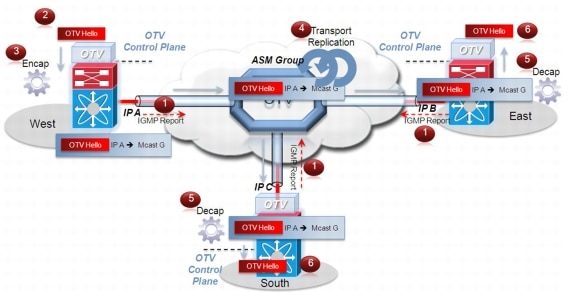

Multicast Enabled Transport Infrastructure

Assuming the transport is multicast enabled, all OTV edge devices can be configured to join a specific ASM (Any Source Multicast) group where they simultaneously play the role of receiver and source. If the transport is owned by a Service Provider, for example, the Enterprise will have to negotiate the use of this ASM group with the SP.

.

Figure 1-5 shows the overall sequence of steps leading to the discovery of all OTV edge devices belonging to the same overlay.

Figure 1-5 OTV Neighbor Discovery

Step 1 ![]() Each OTV edge device sends an IGMP report to join the specific ASM group used to carry control protocol exchanges (group G in this example). The edge devices join the group as hosts, leveraging the Join interface. This happens without enabling PIM on this interface. The only requirement is to specify the ASM group to be used and associate it with a given Overlay interface.

Each OTV edge device sends an IGMP report to join the specific ASM group used to carry control protocol exchanges (group G in this example). The edge devices join the group as hosts, leveraging the Join interface. This happens without enabling PIM on this interface. The only requirement is to specify the ASM group to be used and associate it with a given Overlay interface.

Step 2 ![]() The OTV control protocol running on the left OTV edge device generates Hello packets that need to be sent to all other OTV edge devices. This is required to communicate its existence and to trigger the establishment of control plane adjacencies.

The OTV control protocol running on the left OTV edge device generates Hello packets that need to be sent to all other OTV edge devices. This is required to communicate its existence and to trigger the establishment of control plane adjacencies.

Step 3 ![]() The OTV Hello messages need to be sent across the logical overlay to reach all OTV remote devices. For this to happen, the original frames must be OTV-encapsulated, adding an external IP header. The source IP address in the external header is set to the IP address of the Join interface of the edge device, whereas the destination is the multicast address of the ASM group dedicated to carry the control protocol. The resulting multicast frame is then sent to the Join interface toward the Layer 3 network domain.

The OTV Hello messages need to be sent across the logical overlay to reach all OTV remote devices. For this to happen, the original frames must be OTV-encapsulated, adding an external IP header. The source IP address in the external header is set to the IP address of the Join interface of the edge device, whereas the destination is the multicast address of the ASM group dedicated to carry the control protocol. The resulting multicast frame is then sent to the Join interface toward the Layer 3 network domain.

Step 4 ![]() The multicast frames are carried across the transport and optimally replicated to reach all the OTV edge devices that joined that multicast group G.

The multicast frames are carried across the transport and optimally replicated to reach all the OTV edge devices that joined that multicast group G.

Step 5 ![]() The receiving OTV edge devices decapsulate the packets.

The receiving OTV edge devices decapsulate the packets.

Step 6 ![]() The Hellos are passed to the control protocol process.

The Hellos are passed to the control protocol process.

The same process occurs in the opposite direction and the end result is the creation of OTV control protocol adjacencies between all edge devices. The use of the ASM group as a vehicle to transport the Hello messages allows the edge devices to discover each other as if they were deployed on a shared LAN segment. The LAN segment is basically implemented via the OTV overlay.

Two important considerations for OTV control protocol are as follows:

1. ![]() This protocol runs as an "overlay" control plane between OTV edge devices which means there is no dependency with the routing protocol (IGP or BGP) used in the Layer 3 domain of the data center, or in the transport infrastructure.

This protocol runs as an "overlay" control plane between OTV edge devices which means there is no dependency with the routing protocol (IGP or BGP) used in the Layer 3 domain of the data center, or in the transport infrastructure.

2. ![]() The OTV control plane is transparently enabled in the background after creating the OTV Overlay interface and does not require explicit configuration. Tuning parameters, like timers, for the OTV protocol is allowed, but this is expected to be more of a corner case than a common requirement.

The OTV control plane is transparently enabled in the background after creating the OTV Overlay interface and does not require explicit configuration. Tuning parameters, like timers, for the OTV protocol is allowed, but this is expected to be more of a corner case than a common requirement.

Note ![]() The routing protocol used to implement the OTV control plane is IS-IS. It was selected because it is a standard-based protocol, originally designed with the capability of carrying MAC address information in the TLV. In the rest of this document, the control plane protocol will be generically called "OTV protocol".

The routing protocol used to implement the OTV control plane is IS-IS. It was selected because it is a standard-based protocol, originally designed with the capability of carrying MAC address information in the TLV. In the rest of this document, the control plane protocol will be generically called "OTV protocol".

From a security perspective, it is possible to leverage the IS-IS HMAC-MD5 authentication feature to add an HMAC-MD5 digest to each OTV control protocol message. The digest allows authentication at the IS-IS protocol level, which prevents unauthorized routing message from being injected into the network routing domain. At the same time, only authenticated devices will be allowed to successfully exchange OTV control protocol messages between them and hence to become part of the same Overlay network.

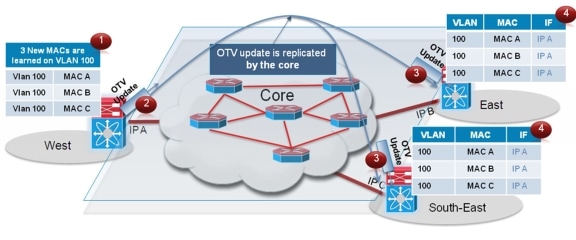

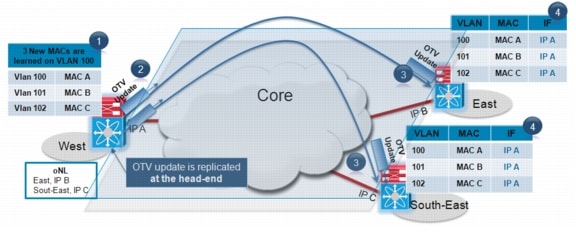

Once OTV edge devices have discovered each other, it is then possible to leverage the same mechanism to exchange MAC address reachability information, as shown in Figure 1-6.

Figure 1-6 MAC Address Advertisement

Step 1 ![]() The OTV edge device in the West data center site learns new MAC addresses (MAC A, B and C on VLAN 100) on its internal interface. This is done via traditional data plan learning.

The OTV edge device in the West data center site learns new MAC addresses (MAC A, B and C on VLAN 100) on its internal interface. This is done via traditional data plan learning.

Step 2 ![]() An OTV Update message is created containing information for MAC A, MAC B and MAC C. The message is OTV encapsulated and sent into the Layer 3 transport. Once again, the IP destination address of the packet in the outer header is the multicast group G used for control protocol exchanges.

An OTV Update message is created containing information for MAC A, MAC B and MAC C. The message is OTV encapsulated and sent into the Layer 3 transport. Once again, the IP destination address of the packet in the outer header is the multicast group G used for control protocol exchanges.

Step 3 ![]() The OTV Update is optimally replicated in the transport and delivered to all remote edge devices which decapsulate it and hand it to the OTV control process.

The OTV Update is optimally replicated in the transport and delivered to all remote edge devices which decapsulate it and hand it to the OTV control process.

Step 4 ![]() The MAC reachability information is imported in the MAC Address Tables (CAMs) of the edge devices. As noted in Figure 1-6, the only difference with a traditional CAM entry is that instead of having associated a physical interface, these entries refer the IP address of the Join interface of the originating edge device.

The MAC reachability information is imported in the MAC Address Tables (CAMs) of the edge devices. As noted in Figure 1-6, the only difference with a traditional CAM entry is that instead of having associated a physical interface, these entries refer the IP address of the Join interface of the originating edge device.

Note ![]() MAC table content shown in Figure 1-6 is an abstraction used to explain OTV functionality.

MAC table content shown in Figure 1-6 is an abstraction used to explain OTV functionality.

The same control plane communication is also used to withdraw MAC reachability information. For example, if a specific network entity is disconnected from the network, or stops communicating, the corresponding MAC entry would eventually be removed from the CAM table of the OTV edge device. This occurs by default after 30 minutes on the OTV edge device. The removal of the MAC entry triggers an OTV protocol update so that all remote edge devices delete the same MAC entry from their respective tables.

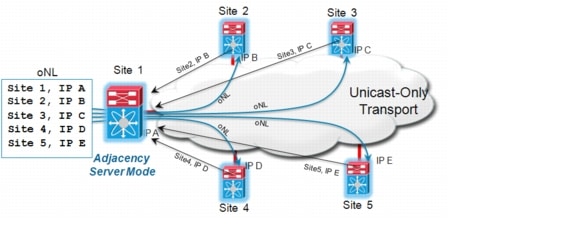

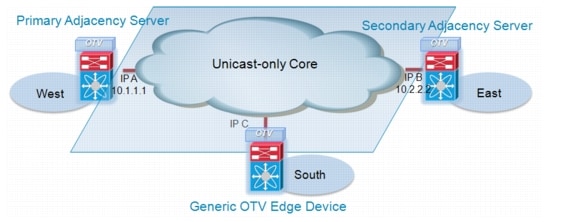

Unicast-Only Transport Infrastructure (Adjacency-Server Mode)

Starting with NX-OS 5.2(1) release, OTV can be deployed with unicast-only transport. As previously described, a multicast enabled transport infrastructure lets a single OTV update or Hello packet reach all other OTV devices by virtue of leveraging a specific multicast control group address.

The OTV control plane over a unicast-only transport works exactly the same way as OTV with multicast mode. The only difference is that each OTV devices would need to create multiple copies of each control plane packet and unicast them to each remote OTV device part of the same logical overlay. Because of this head-end replication behavior, leveraging a multicast enabled transport remains the recommended way of deploying OTV in cases where several DC sites are involved. At the same time, the operational simplification brought by the unicast-only model (removing the need for multicast deployment) can make this deployment option very appealing in scenarios where LAN extension connectivity is required only between few (2-3) DC sites.

To be able to communicate with all the remote OTV devices, each OTV node needs to know a list of neighbors to replicate the control packets to. Rather than statically configuring in each OTV node the list of all neighbors, a simple dynamic means is used to provide this information. This is achieved by designating one (or more) OTV Edge device to perform a specific role, named Adjacency Server. Every OTV device wishing to join a specific OTV logical overlay, needs to first "register" with the Adjacency Server (by start sending OTV Hello messages to it). All other OTV neighbor addresses are discovered dynamically through the Adjacency Server. Thereby, when the OTV service needs to be extended to a new DC site, only the OTV edge devices for the new site need to be configured with the Adjacency Server addresses. No other sites need additional configuration.

The reception of the Hello messages from all the OTV edge devices helps the Adjacency Server to build up the list of all the OTV devices that should be part of the same overlay (named unicast-replication-list). This list is periodically sent in unicast fashion to all the listed OTV devices, so that they can dynamically be aware about all the OTV neighbors in the network.

In Figure 1-7, the OTV Edge device on Site-1 is configured as Adjacency Server. All other OTV edge devices register to this Adjacency Server, which in turn sends the entire neighbor list to each OTV client periodically by means of OTV Hellos.

Figure 1-7 Adjacency Server Functionality

Figure 1-8 shows the overall sequence of steps leading to the establishment of OTV control plane adjacencies between all the OTV edge devices belonging to the same overlay.

Figure 1-8 Creation of OTV Control Plane Adjacencies (Unicast Core)

Step 1 ![]() The OTV control protocol running on the left OTV edge device generates Hello packets that need to be sent to all other OTV edge devices. This is required to communicate its existence and to trigger the establishment of control plane adjacencies.

The OTV control protocol running on the left OTV edge device generates Hello packets that need to be sent to all other OTV edge devices. This is required to communicate its existence and to trigger the establishment of control plane adjacencies.

Step 2 ![]() The OTV Hello messages need to be sent across the logical overlay to reach all OTV remote devices. For this to happen, the left OTV device must perform head-end replication, creating one copy of the Hello message for each remote OTV device part of the unicast-replication-list previously received from the Adjacency Server. Each of these frames must then be OTV-encapsulated, adding an external IP header. The source IP address in the external header is set to the IP address of the Join interface of the local edge device, whereas the destination is the Join interface address of a remote OTV edge device. The resulting unicast frames are then sent out the Join interface toward the Layer 3 network domain.

The OTV Hello messages need to be sent across the logical overlay to reach all OTV remote devices. For this to happen, the left OTV device must perform head-end replication, creating one copy of the Hello message for each remote OTV device part of the unicast-replication-list previously received from the Adjacency Server. Each of these frames must then be OTV-encapsulated, adding an external IP header. The source IP address in the external header is set to the IP address of the Join interface of the local edge device, whereas the destination is the Join interface address of a remote OTV edge device. The resulting unicast frames are then sent out the Join interface toward the Layer 3 network domain.

Step 3 ![]() The unicast frames are routed across the unicast-only transport infrastructure and delivered to their specific destination sites.

The unicast frames are routed across the unicast-only transport infrastructure and delivered to their specific destination sites.

Step 4 ![]() The receiving OTV edge devices decapsulate the packets.

The receiving OTV edge devices decapsulate the packets.

Step 5 ![]() The Hellos are passed to the control protocol process.

The Hellos are passed to the control protocol process.

The same process occurs on each OTV edge device and the end result is the creation of OTV control protocol adjacencies between all edge devices.

The same considerations around the OTV control protocol characteristics already discussed for the multicast transport option still hold valid here (please refer to the previous section for more details).

Once the OTV edge devices have discovered each other, it is then possible to leverage a similar mechanism to exchange MAC address reachability information, as shown in Figure 1-9.

Figure 1-9 MAC Address Advertisement (Unicast Core)

Step 1 ![]() The OTV edge device in the West data center site learns new MAC addresses (MAC A, B and C on VLAN 100, 101 and 102) on its internal interface. This is done via traditional data plan learning.

The OTV edge device in the West data center site learns new MAC addresses (MAC A, B and C on VLAN 100, 101 and 102) on its internal interface. This is done via traditional data plan learning.

Step 2 ![]() An OTV Update message containing information for MAC A, MAC B and MAC C is created for each remote OTV edge device (head-end replication). These messages are OTV encapsulated and sent into the Layer 3 transport. Once again, the IP destination address of the packet in the outer header is the Join interface address of each specific remote OTV device.

An OTV Update message containing information for MAC A, MAC B and MAC C is created for each remote OTV edge device (head-end replication). These messages are OTV encapsulated and sent into the Layer 3 transport. Once again, the IP destination address of the packet in the outer header is the Join interface address of each specific remote OTV device.

Step 3 ![]() The OTV Updates are routed in the unicast-only transport and delivered to all remote edge devices which decapsulate them and hand them to the OTV control process.

The OTV Updates are routed in the unicast-only transport and delivered to all remote edge devices which decapsulate them and hand them to the OTV control process.

Step 4 ![]() The MAC reachability information is imported in the MAC Address Tables (CAMs) of the edge devices. As noted above, the only difference with a traditional CAM entry is that instead of having associated a physical interface, these entries refer the IP address (IP A) of the Join interface of the originating edge device.

The MAC reachability information is imported in the MAC Address Tables (CAMs) of the edge devices. As noted above, the only difference with a traditional CAM entry is that instead of having associated a physical interface, these entries refer the IP address (IP A) of the Join interface of the originating edge device.

A pair of Adjacency Servers can be deployed for redundancy purposes. These Adjacency Server devices are completely stateless between them, which implies that every OTV edge device (OTV clients) should register its existence with both of them. For this purpose, the primary and secondary Adjacency Servers are configured in each OTV edge device. However, an OTV client will not process an alternate server's replication list until it detects that the primary Adjacency Server has timed out. Once that happens, each OTV edge device will start using the replication list from the secondary Adjacency Server and push the difference to OTV. OTV will stale the replication list entries with a timer of 10 minutes. If the Primary Adjacency Server comes back up within 10 mins, OTV will always revert back to the primary replication list. In case the Primary Adjacency Server comes back up after replication list is deleted, a new replication list will be pushed by the Primary after learning all OTV neighbors by means of OTV Hellos that are sent periodically.

OTV also uses graceful exit of Adjacency Server. When a Primary Adjacency Server is de-configured or is rebooted, it can let its client know about it and can exit gracefully. Following this, all OTV clients can start using alternate Server's replication list without waiting for primary Adjacency Server to time out.

For more information around Adjacency Server configuration, please refer to "OTV Configuration" section.

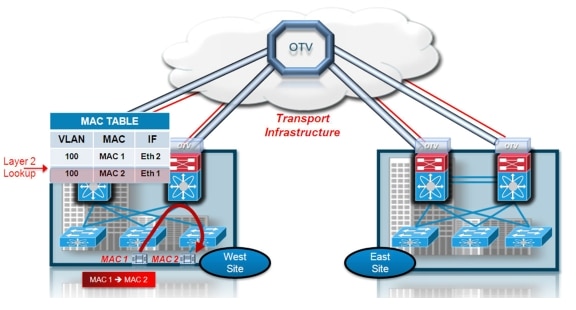

Data Plane: Unicast Traffic

Once the control plane adjacencies between the OTV edge devices are established and MAC address reachability information is exchanged, traffic can start flowing across the overlay. Focusing initially on unicast traffic, it is worthwhile to distinguish between intra-site and inter-site Layer 2 communication (Figure 1-10).

Figure 1-10 Intra Site Layer 2 Unicast Traffic

Figure 1-10 depicts intra-site unicast communication: MAC 1 (Server 1) needs to communicate with MAC 2 (Server 2), both belonging to the same VLAN. When the frame is received at the aggregation layer device (which in this case is also deployed as the OTV edge device), the usual Layer 2 lookup is performed to determine how to reach the MAC 2 destination. Information in the MAC table points out a local interface (Eth 1), so the frame is delivered by performing classical Ethernet local switching. A different mechanism is required to establish Layer 2 communication between remote sites (Figure 1-11).

Figure 1-11 Inter Site Layer 2 Unicast Traffic

The following procedure details that relationship:

Step 1 ![]() The Layer 2 frame is received at the aggregation layer, or OTV edge device. A traditional Layer 2 lookup is performed, but this time the MAC 3 information in the MAC table does not point to a local Ethernet interface but to the IP address of the remote OTV edge device that advertised the MAC reachability information.

The Layer 2 frame is received at the aggregation layer, or OTV edge device. A traditional Layer 2 lookup is performed, but this time the MAC 3 information in the MAC table does not point to a local Ethernet interface but to the IP address of the remote OTV edge device that advertised the MAC reachability information.

Step 2 ![]() The OTV edge device encapsulates the original Layer 2 frame: the source IP of the outer header is the IP address of its Join interface, whereas the destination IP is the IP address of the Join interface of the remote edge device.

The OTV edge device encapsulates the original Layer 2 frame: the source IP of the outer header is the IP address of its Join interface, whereas the destination IP is the IP address of the Join interface of the remote edge device.

Step 3 ![]() The OTV encapsulated frame (a regular unicast IP packet) is carried across the transport infrastructure and delivered to the remote OTV edge device.

The OTV encapsulated frame (a regular unicast IP packet) is carried across the transport infrastructure and delivered to the remote OTV edge device.

Step 4 ![]() The remote OTV edge device decapsulates the frame exposing the original Layer 2 packet.

The remote OTV edge device decapsulates the frame exposing the original Layer 2 packet.

Step 5 ![]() The edge device performs another Layer 2 lookup on the original Ethernet frame and discovers that it is reachable through a physical interface, which means it is a MAC address local to the site.

The edge device performs another Layer 2 lookup on the original Ethernet frame and discovers that it is reachable through a physical interface, which means it is a MAC address local to the site.

Step 6 ![]() The frame is delivered to the MAC 3 destination.

The frame is delivered to the MAC 3 destination.

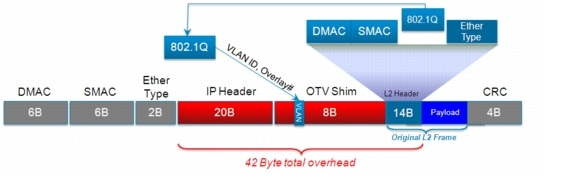

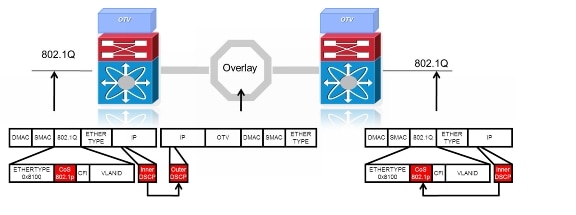

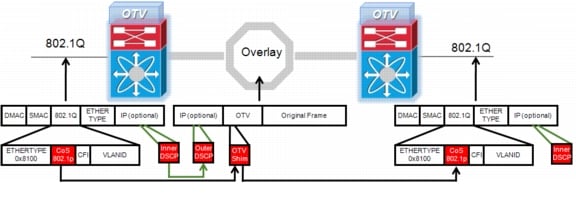

Given that Ethernet frames are carried across the transport infrastructure after being OTV encapsulated, some considerations around MTU are necessary. Figure 1-12 highlights OTV Data Plane encapsulation performed on the original Ethernet frame.

Figure 1-12 OTV Data Plane Encapsulation

In the first implementation, the OTV encapsulation increases the overall MTU size of 42 bytes. This is the result of the operation of the Edge Device that removes the CRC and the 802.1Q fields from the original Layer 2 frame and adds an OTV Shim (containing also the VLAN and Overlay ID information) and an external IP header.

Also, all OTV control and data plane packets originate from an OTV Edge Device with the "Don't Fragment" (DF) bit set. In a Layer 2 domain the assumption is that all intermediate LAN segments support at least the configured interface MTU size of the host. This means that mechanisms like Path MTU Discovery (PMTUD) are not an option in this case. Also, fragmentation and reassembly capabilities are not available on Nexus 7000 platforms. Consequently, increasing the MTU size of all the physical interfaces along the path between the source and destination endpoints to account for introducing the extra 42 bytes by OTV is recommended.

Note ![]() This is not an OTV specific consideration, since the same challenge applies to other Layer 2 VPN technologies, like EoMPLS or VPLS.

This is not an OTV specific consideration, since the same challenge applies to other Layer 2 VPN technologies, like EoMPLS or VPLS.

Data Plane: Multicast Traffic

In certain scenarios there may be the requirement to establish Layer 2 multicast communication between remote sites. This is the case when a multicast source sending traffic to a specific group is deployed in a given VLAN A in site 1, whereas multicast receivers belonging to the same VLAN A are placed in remote sites 2 and 3 and need to receive traffic for that same group.

Similarly to what is done for the OTV control plane, we need to distinguish the two scenarios where the transport infrastructure is multicast enabled, or not, for the data plane.

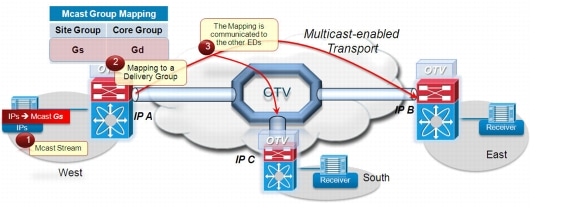

Multicast Enabled Transport Infrastructure

The Layer 2 multicast traffic must flow across the OTV overlay, and to avoid suboptimal head-end replication, a specific mechanism is required to ensure that multicast capabilities of the transport infrastructure can be leveraged.

The idea is to use a set of Source Specific Multicast (SSM) groups in the transport to carry these Layer 2 multicast streams. These groups are independent from the ASM group previously introduced to transport the OTV control protocol between sites.

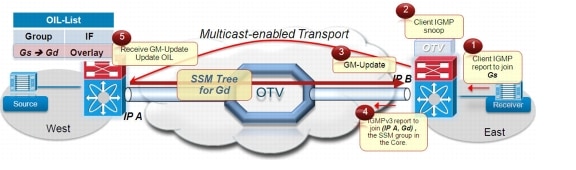

Figure 1-13 shows the steps occurring once a multicast source is activated in a given data center site.

Figure 1-13 Multicast Source Streaming to Group Gs

Step 1 ![]() A multicast source is activated on the West side and starts streaming traffic to the group Gs.

A multicast source is activated on the West side and starts streaming traffic to the group Gs.

Step 2 ![]() The local OTV edge device receives the first multicast frame and creates a mapping between the group Gs and a specific SSM group Gd available in the transport infrastructure. The range of SSM groups to be used to carry Layer 2 multicast data streams are specified during the configuration of the Overlay interface. Refer to OTV Configuration for details.

The local OTV edge device receives the first multicast frame and creates a mapping between the group Gs and a specific SSM group Gd available in the transport infrastructure. The range of SSM groups to be used to carry Layer 2 multicast data streams are specified during the configuration of the Overlay interface. Refer to OTV Configuration for details.

Step 3 ![]() The OTV control protocol is used to communicate the Gs-to-Gd mapping to all remote OTV edge devices. The mapping information specifies the VLAN (VLAN A) to which the multicast source belongs and the IP address of the OTV edge device that created the mapping.

The OTV control protocol is used to communicate the Gs-to-Gd mapping to all remote OTV edge devices. The mapping information specifies the VLAN (VLAN A) to which the multicast source belongs and the IP address of the OTV edge device that created the mapping.

Figure 1-14 shows steps that occur once a receiver, deployed in the same VLAN A of the multicast source, decides to join the multicast stream Gs.

Figure 1-14 Receiver Joining the Multicast Group Gs

Step 1 ![]() The client sends an IGMP report inside the East site to join the Gs group.

The client sends an IGMP report inside the East site to join the Gs group.

Step 2 ![]() The OTV edge device snoops the IGMP message and realizes there is an active receiver in the site interested in group Gs, belonging to VLAN A.

The OTV edge device snoops the IGMP message and realizes there is an active receiver in the site interested in group Gs, belonging to VLAN A.

Step 3 ![]() The OTV Device sends an OTV control protocol message to all the remote edge devices to communicate this information.

The OTV Device sends an OTV control protocol message to all the remote edge devices to communicate this information.

Step 4 ![]() The remote edge device in the West side receives the GM-Update and updates its Outgoing Interface List (OIL) with the information that group Gs needs to be delivered across the OTV overlay.

The remote edge device in the West side receives the GM-Update and updates its Outgoing Interface List (OIL) with the information that group Gs needs to be delivered across the OTV overlay.

Step 5 ![]() Finally, the edge device in the East side finds the mapping information previously received from the OTV edge device in the West side identified by the IP address IP A. The East edge device, in turn, sends an IGMPv3 report to the transport to join the (IP A, Gd) SSM group. This allows building an SSM tree (group Gd) across the transport infrastructure that can be used to deliver the multicast stream Gs.

Finally, the edge device in the East side finds the mapping information previously received from the OTV edge device in the West side identified by the IP address IP A. The East edge device, in turn, sends an IGMPv3 report to the transport to join the (IP A, Gd) SSM group. This allows building an SSM tree (group Gd) across the transport infrastructure that can be used to deliver the multicast stream Gs.

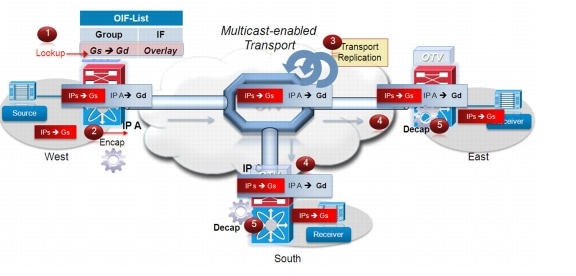

Figure 1-15 finally shows how multicast traffic is actually delivered across the OTV overlay.

Figure 1-15 Delivery of the Multicast Stream Gs

Step 1 ![]() The OTV edge device receives the stream Gs (sourced by IPs) and determines by looking at the OIL that there are receivers interested in group Gs that are reachable through the overlay.

The OTV edge device receives the stream Gs (sourced by IPs) and determines by looking at the OIL that there are receivers interested in group Gs that are reachable through the overlay.

Step 2 ![]() The edge device encapsulates the original multicast frame. The source in the outer IP header is the IP A address identifying itself, whereas the destination is the Gd SSM group dedicated to the delivery of multicast data.

The edge device encapsulates the original multicast frame. The source in the outer IP header is the IP A address identifying itself, whereas the destination is the Gd SSM group dedicated to the delivery of multicast data.

Step 3 ![]() The multicast stream Gd flows across the SSM tree previously built across the transport infrastructure and reaches all the remote sites with receivers interested in getting the Gs stream.

The multicast stream Gd flows across the SSM tree previously built across the transport infrastructure and reaches all the remote sites with receivers interested in getting the Gs stream.

Step 4 ![]() The remote OTV edge devices receive the packets.

The remote OTV edge devices receive the packets.

Step 5 ![]() The packets are decapsulated and delivered to the interested receivers belonging to each given site.

The packets are decapsulated and delivered to the interested receivers belonging to each given site.

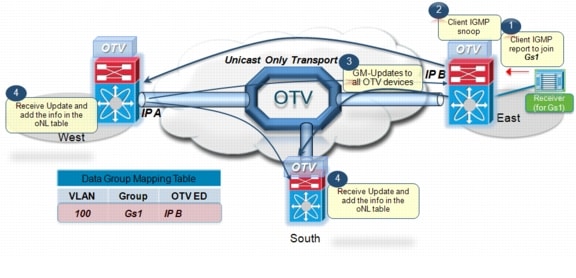

Unicast-Only Transport Infrastructure (Adjacency-Server Mode)

When multicast capabilities are not available in the transport infrastructure, Layer 2 multicast traffic can be sent across the OTV overlay by leveraging head-end replication from the OTV device deployed in the DC site where the multicast source is located. However, similarly to what discussed above for the multicast transport scenario, a specific mechanism based on IGMP Snooping is still available to ensure Layer 2 multicast packets are sent only to remote DC sites where active receivers interested in that flow are connected. This behavior allows reducing the amount of required head-end replication, and it is highlighted in Figure 1-16.

Figure 1-16 Receiver Joining the Multicast Group Gs (Unicast Core)

Step 1 ![]() The client sends an IGMP report inside the East site to join the Gs group.

The client sends an IGMP report inside the East site to join the Gs group.

Step 2 ![]() The OTV edge device snoops the IGMP message and realizes there is an active receiver in the site interested in group Gs, belonging to VLAN 100.

The OTV edge device snoops the IGMP message and realizes there is an active receiver in the site interested in group Gs, belonging to VLAN 100.

Step 3 ![]() The OTV Device sends an OTV control protocol message (GM-Update) to each remote edge devices (belonging to the unicast list) to communicate this information.

The OTV Device sends an OTV control protocol message (GM-Update) to each remote edge devices (belonging to the unicast list) to communicate this information.

Step 4 ![]() The remote edge devices receive the GM-Update message and update their "Data Group Mapping Table" with the information that a receiver interested in multicast group Gs1 is now connected to the site reachable via the OTV device identified by the IP B address.

The remote edge devices receive the GM-Update message and update their "Data Group Mapping Table" with the information that a receiver interested in multicast group Gs1 is now connected to the site reachable via the OTV device identified by the IP B address.

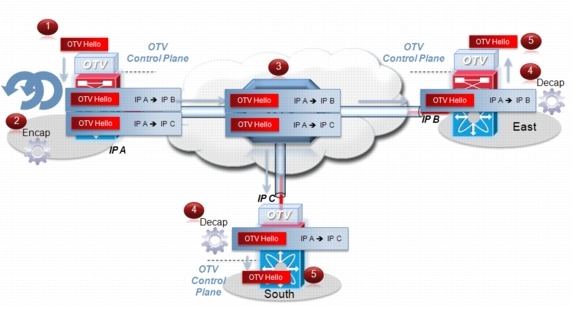

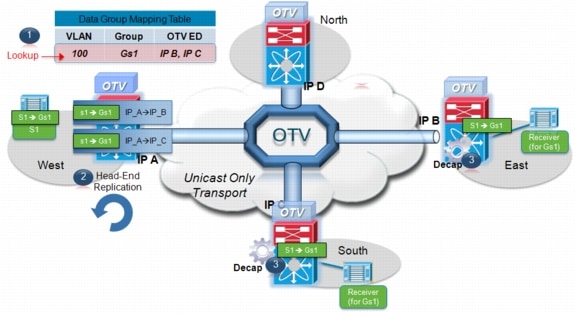

Figure 1-17 highlights how Layer 2 multicast traffic is actually delivered across the OTV overlay.

Figure 1-17 Delivery of the Layer 2 Multicast Stream Gs (Unicast Core)

Step 1 ![]() The multicast traffic destined to Gs1 and generated by a source deployed in the West site reaches the left OTV Edge Device. An OIF lookup takes place in the "Data Group Mapping Table". The table shows that there are receivers across the Overlay (in this example connected to the East and South sites).

The multicast traffic destined to Gs1 and generated by a source deployed in the West site reaches the left OTV Edge Device. An OIF lookup takes place in the "Data Group Mapping Table". The table shows that there are receivers across the Overlay (in this example connected to the East and South sites).

Step 2 ![]() The left edge device performs head-end replication and create two unicast IP packets (by encapsulating the original Layer 2 multicast frame). The source in the outer IP header is the IP A address identifying itself, whereas the destinations are the IP addresses identifying the Join interfaces of the OTV devices in East and South sites (IP B and IP C).

The left edge device performs head-end replication and create two unicast IP packets (by encapsulating the original Layer 2 multicast frame). The source in the outer IP header is the IP A address identifying itself, whereas the destinations are the IP addresses identifying the Join interfaces of the OTV devices in East and South sites (IP B and IP C).

Step 3 ![]() The unicast frames are routed across the transport infrastructure and properly delivered to the remote OTV devices, which decapsulate the frames and deliver them to the interested receivers belonging to the site. Notice how the Layer 2 multicast traffic delivery is optimized, since no traffic is sent to the North site (since no interested receivers are connected there).

The unicast frames are routed across the transport infrastructure and properly delivered to the remote OTV devices, which decapsulate the frames and deliver them to the interested receivers belonging to the site. Notice how the Layer 2 multicast traffic delivery is optimized, since no traffic is sent to the North site (since no interested receivers are connected there).

Step 4 ![]() The remote OTV edge devices receive the packets.

The remote OTV edge devices receive the packets.

Data Plane: Broadcast Traffic

Finally, it is important to highlight that a mechanism is required so that Layer 2 broadcast traffic can be delivered between sites across the OTV overlay. Failure Isolation details how to limit the amount of broadcast traffic across the transport infrastructure, but some protocols, like Address Resolution Protocol (ARP), would always mandate the delivery of broadcast packets.

In the current OTV software release, When a multicast enabled transport infrastructure is available, the current NX-OS software release broadcast frames are sent to all remote OTV edge devices by leveraging the same ASM multicast group in the transport already used for the OTV control protocol. Layer 2 broadcast traffic will then be handled exactly the same way as the OTV Hello messages shown in Figure 1-5.

For unicast-only transport infrastructure deployments, head-end replication performed on the OTV device in the site originating the broadcast would ensure traffic delivery to all the remote OTV edge devices part of the unicast-only list.

Failure Isolation

One of the main requirements of every LAN extension solution is to provide Layer 2 connectivity between remote sites without giving up the advantages of resiliency, stability, scalability, and so on, obtained by interconnecting sites through a routed transport infrastructure.

OTV achieves this goal by providing four main functions: Spanning Tree (STP) isolation, Unknown Unicast traffic suppression, ARP optimization, and broadcast policy control.

STP Isolation

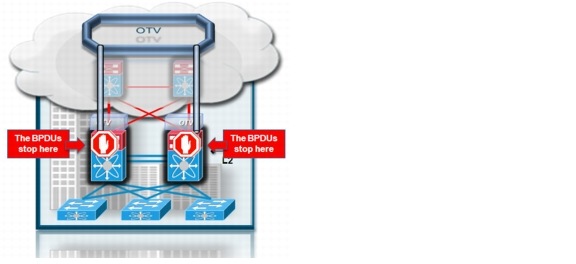

Figure 1-18 shows how OTV, by default, does not transmit STP Bridge Protocol Data Units (BPDUs) across the overlay. This is a native function that does not require the use of an explicit configuration, such as BPDU filtering, and so on. This allows every site to become an independent STP domain: STP root configuration, parameters, and the STP protocol flavor can be decided on a per-site basis.

Figure 1-18 OTV Spanning Tree Isolation

This fundamentally limits the fate sharing between data center sites: a STP problem in the control plane of a given site would not produce any effect on the remote data centers.

Limiting the extension of STP across the transport infrastructure potentially creates undetected end-to-end loops that would occur when at least two OTV edge devices are deployed in each site, inviting a common best practice to increase resiliency of the overall solution. Multi-Homing details how OTV prevents the creation of end-to-end loops without sending STP frames across the OTV overlay.

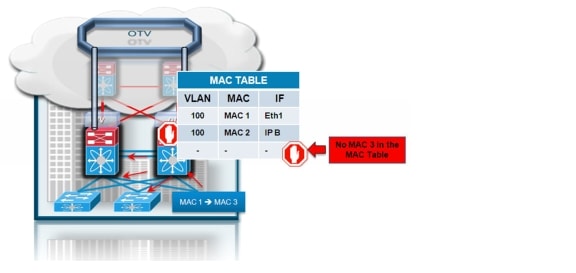

Unknown Unicast Handling

The introduction of an OTV control protocol allows advertising MAC address reachability information between the OTV edge devices and mapping MAC address destinations to IP next hops that are reachable through the network transport. The consequence is that the OTV edge device starts behaving like a router instead of a Layer 2 bridge, since it forwards Layer 2 traffic across the overlay if it has previously received information on how to reach that remote MAC destination. Figure 1-19 shows this behavior.

Figure 1-19 OTV Unknown Unicast Handling

When the OTV edge device receives a frame destined to MAC 3, it performs the usual Layer 2 lookup in the MAC table. Since it does not have information for MAC 3, Layer 2 traffic is flooded out the internal interfaces, since they behave as regular Ethernet interfaces, but not via the overlay.

Note ![]() This behavior of OTV is important to minimize the effects of a server misbehaving and generating streams directed to random MAC addresses. This could occur as a result of a DoS attack as well.

This behavior of OTV is important to minimize the effects of a server misbehaving and generating streams directed to random MAC addresses. This could occur as a result of a DoS attack as well.

The assumption is that there are no silent or unidirectional devices in the network, so sooner or later the local OTV edge device will learn an address and communicate it to the remaining edge devices through the OTV protocol. To support specific applications, like Microsoft Network Load Balancing Services (NLBS) which require the flooding of Layer 2 traffic to function, a configuration knob is provided to enable selective flooding. Individual MAC addresses can be statically defined so that Layer 2 traffic destined to them can be flooded across the overlay, or broadcast to all remote OTV edge devices, instead of being dropped. The expectation is that this configuration would be required in very specific corner cases, so that the default behavior of dropping unknown unicast would be the usual operation model.

Note ![]() Behavior with the current NX-OS release

Behavior with the current NX-OS release

The configuration knob that allows for selective unicast flooding is not available in the current OTV software release. Consequently, all unknown unicast frames will not be forwarded across the logical overlay.

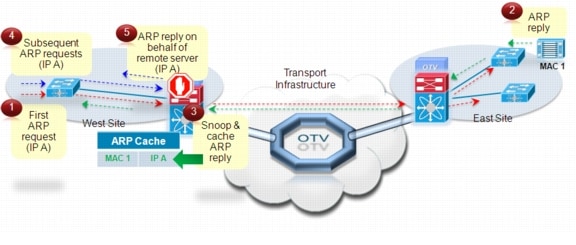

ARP Optimization

Another function that reduces the amount of traffic sent across the transport infrastructure is ARP optimization. Figure 1-20 depicts the OTV ARP optimization process:

Figure 1-20 OTV ARP Optimization

Step 1 ![]() A device in the West site sources an ARP request to determine the MAC of the host with address IP A.

A device in the West site sources an ARP request to determine the MAC of the host with address IP A.

Step 2 ![]() The ARP request is a Layer 2 broadcast frame and it is sent across the OTV overlay to all remote sites eventually reaching the machine with address IP A, which creates an ARP reply message. The ARP reply is sent back to the originating host in the West data center.

The ARP request is a Layer 2 broadcast frame and it is sent across the OTV overlay to all remote sites eventually reaching the machine with address IP A, which creates an ARP reply message. The ARP reply is sent back to the originating host in the West data center.

Step 3 ![]() The OTV edge device in the original West site is capable of snooping the ARP reply and caches the contained mapping information (MAC 1, IP A) in a local data structure named ARP Neighbor-Discovery (ND) Cache.

The OTV edge device in the original West site is capable of snooping the ARP reply and caches the contained mapping information (MAC 1, IP A) in a local data structure named ARP Neighbor-Discovery (ND) Cache.

Step 4 ![]() A subsequent ARP request is originated from the West site for the same IP A address.

A subsequent ARP request is originated from the West site for the same IP A address.

Step 5 ![]() The request is not forwarded to the remote sites but is locally answered by the local OTV edge device on behalf of the remote device IP A.

The request is not forwarded to the remote sites but is locally answered by the local OTV edge device on behalf of the remote device IP A.

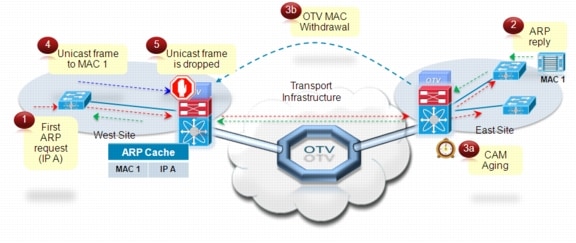

Because of this ARP caching behavior, you should consider the interactions between ARP and CAM table aging timers, since incorrect settings may lead to black-holing traffic. Figure 1-21 shows the ARP aging timer is longer than the CAM table aging timer. This is also a consequence of the OTV characteristic of dropping unknown unicast frames.

Figure 1-21 Traffic Black-Holing Scenario

The following steps explain the traffic black-holing scenario.

Step 1 ![]() A device in the West site sources an ARP request to determine the MAC of the host with address IP A.

A device in the West site sources an ARP request to determine the MAC of the host with address IP A.

Step 2 ![]() The ARP request is a Layer 2 broadcast frame and it is sent across the OTV overlay to all remote sites eventually reaching the machine with address IP A, which creates an ARP reply message. The ARP reply is sent back to the originating host in the West data center. This information is snooped and added to the ARP cache table in the OTV edge device in the West side.

The ARP request is a Layer 2 broadcast frame and it is sent across the OTV overlay to all remote sites eventually reaching the machine with address IP A, which creates an ARP reply message. The ARP reply is sent back to the originating host in the West data center. This information is snooped and added to the ARP cache table in the OTV edge device in the West side.

Step 3 ![]() MAC 1 host stops communicating, hence the CAM aging timer for the MAC 2 entry expires on the East OTV edge device. This triggers an OTV Update sent to the edge device in the West site, so that it can remove the MAC 1 entry as well. This does not affect the entry in the ARP cache, since we assume the ARP aging timer is longer than the CAM.

MAC 1 host stops communicating, hence the CAM aging timer for the MAC 2 entry expires on the East OTV edge device. This triggers an OTV Update sent to the edge device in the West site, so that it can remove the MAC 1 entry as well. This does not affect the entry in the ARP cache, since we assume the ARP aging timer is longer than the CAM.

Step 4 ![]() A Host in the West Site sends a unicast frame directed to the host MAC 1.

A Host in the West Site sends a unicast frame directed to the host MAC 1.

Step 5 ![]() The unicast frame is received by the West OTV edge device, which has a valid ARP entry in the cache for that destination. However, the lookup for MAC 1 in the CAM table does not produce a hit, resulting in a dropped frame.

The unicast frame is received by the West OTV edge device, which has a valid ARP entry in the cache for that destination. However, the lookup for MAC 1 in the CAM table does not produce a hit, resulting in a dropped frame.

The ARP aging timer on the OTV edge devices should always be set lower than the CAM table aging timer. The defaults on Nexus 7000 platforms for these timers are shown below:

•![]() OTV ARP aging-timer: 480 seconds / 8 minutes

OTV ARP aging-timer: 480 seconds / 8 minutes

•![]() MAC aging-timer: 1800 seconds / 30 minutes

MAC aging-timer: 1800 seconds / 30 minutes

Note ![]() It is worth noting how the ARP aging timer on the most commonly found operating systems (Win2k, XP, 2003, Vista, 2008, 7, Solaris, Linux, MacOSX) is actually lower than the 30 minutes default MAC aging-timer. This implies that the behavior shown in Figure 1-21 would never occur in a real deployment scenario, because the host would re-ARP before aging out an entry which would trigger an update of the CAM table, hence maintaining the OTV route.

It is worth noting how the ARP aging timer on the most commonly found operating systems (Win2k, XP, 2003, Vista, 2008, 7, Solaris, Linux, MacOSX) is actually lower than the 30 minutes default MAC aging-timer. This implies that the behavior shown in Figure 1-21 would never occur in a real deployment scenario, because the host would re-ARP before aging out an entry which would trigger an update of the CAM table, hence maintaining the OTV route.

In deployments where the hosts default gateway is placed on a device different than the Nexus 7000 it is important to set the ARP aging-timer of the device to a value lower than its MAC aging-timer.

Broadcast Policy Control

In addition to the previously described ARP optimization, OTV will provide additional functionality such as broadcast suppression, broadcast white-listing, and so on, to reduce the amount of overall Layer 2 broadcast traffic sent across the overlay. Details will be provided upon future functional availability.

Multi-Homing

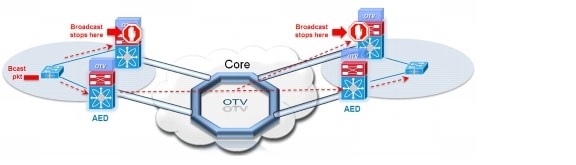

One key function built in the OTV protocol is multi-homing where two (or more) OTV edge devices provide LAN extension services to a given site. As mentioned, this redundant node deployment, combined with the fact that STP BPDUs are not sent across the OTV overlay, may lead to the creation of an end-to-end loop, Figure 1-22.

Figure 1-22 Creation of an End-to-End STP Loop

The concept of Authoritative edge device (AED) is introduced to avoid the situation depicted in Figure 1-22. The AED has two main tasks:

1. ![]() Forwarding Layer 2 traffic (unicast, multicast and broadcast) between the site and the overlay (and vice versa).

Forwarding Layer 2 traffic (unicast, multicast and broadcast) between the site and the overlay (and vice versa).

2. ![]() Advertising MAC reachability information to the remote edge devices.

Advertising MAC reachability information to the remote edge devices.

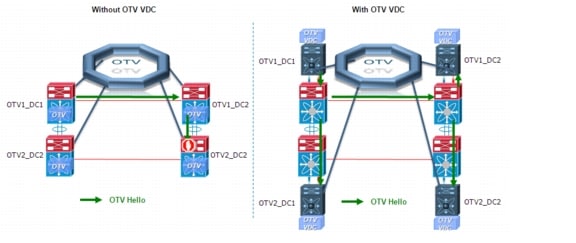

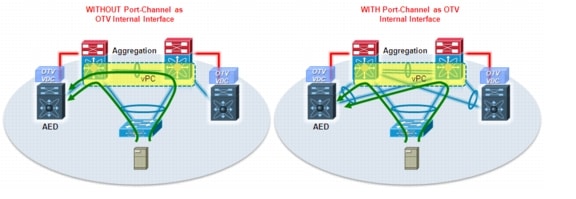

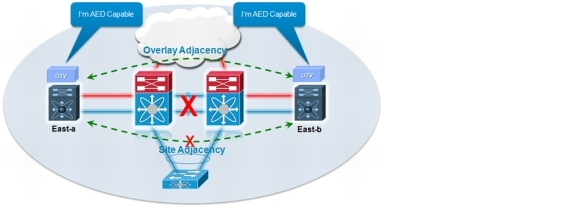

The AED role is negotiated, on a per-VLAN basis, between all the OTV edge devices belonging to the same site (that is, characterized by the same Site ID). Prior to NX-OS release 5.2(1), OTV used a VLAN called "Site VLAN" within a site to detect and establish adjacencies with other OTV edge devices as shown in Figure 1-23. OTV used this site adjacencies as an input to determine Authoritative Edge devices for the VLANS being extended from the site.

Figure 1-23 Establishment of Internal Peering

The Site VLAN should be carried on multiple Layer 2 paths internal to a given site, to increase the resiliency of this internal adjacency (including vPC connections eventually established with other edge switches). However, the mechanism of electing Authoritative Edge device (AED) solely based on the communication established on the site VLAN may create situations (resulting from connectivity issues or misconfiguration), where OTV edge devices belonging to the same site can fail to detect one another and thereby ending up in an "active/active" mode (for the same data VLAN). This could ultimately result in the creation of a loop scenario.

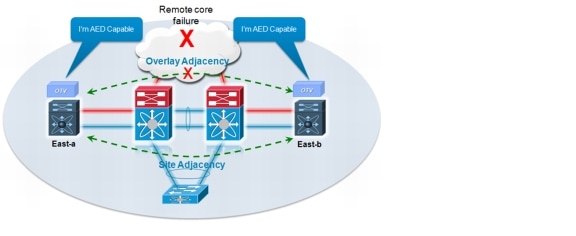

To address this concern, starting with 5.2 (1) NX-OS release, each OTV device maintains dual adjacencies with other OTV edge devices belonging to the same DC site. OTV edge devices continue to use the site VLAN for discovering and establishing adjacency with other OTV edge device in a site. This adjacency is called Site Adjacency.

In addition to the Site Adjacency, OTV devices also maintain a second adjacency, named "Overlay Adjacency", established via the Join interfaces across the Layer 3 network domain. In order to enable this new functionality, it is now mandatory to configure each OTV device also with a site-identifier value. All edge devices that are in the same site must be configured with the same site-identifier. This site-identifier is advertised in IS-IS hello packets sent over both the overlay as well as on the site VLAN. The combination of the site-identifier and the IS-IS system-id is used to identify a neighbor edge device in the same site.

Note ![]() The Overlay interface on an OTV edge device is forced in a "down" state until a site-identifier is configured. This must be kept into consideration when performing an ISSU upgrade to 5.2(1) from a pre-5.2(1) NX-OS software release, because that would result in OTV not being functional anymore once the upgrade is completed.

The Overlay interface on an OTV edge device is forced in a "down" state until a site-identifier is configured. This must be kept into consideration when performing an ISSU upgrade to 5.2(1) from a pre-5.2(1) NX-OS software release, because that would result in OTV not being functional anymore once the upgrade is completed.

The dual site adjacency state (and not simply the Site Adjacency established on the site VLAN) is now used to determine the Authoritative Edge Device role for each extended data VLAN. Each OTV edge device can now proactively inform their neighbors in a local site about their capability to become Authoritative Edge Device (AED) and its forwarding readiness. In other words, if something happens on an OTV device that prevents it from performing its LAN extension functionalities, it can now inform its neighbor about this and let itself excluded from the AED election process.

An explicit AED capability notification allows the neighbor edge devices to get a fast and reliable indication of failures and to determine AED status accordingly in the consequent AED election, rather than solely depending on the adjacency creation and teardown. The forwarding readiness may change due to local failures such as the site VLAN or the extended VLANs going down or the join-interface going down, or it may be intentional such as when the edge device is starting up and/or initializing. Hence, the OTV adjacencies may be up but OTV device may not be ready to forward traffic. The edge device also triggers a local AED election when its forwarding readiness changes. As a result of its AED capability going down, it will no longer be AED for its VLANs.

The AED capability change received from a neighboring edge device in the same site influences the AED assignment, and hence will trigger an AED election. If a neighbor indicates that it is not AED capable, it will not be considered as active in the site. An explicit AED capability down notification received over either the site or the overlay adjacency will bring down the neighbor's dual site adjacency state into inactive state and the resulting AED election will not assign any VLANs to that neighbor.

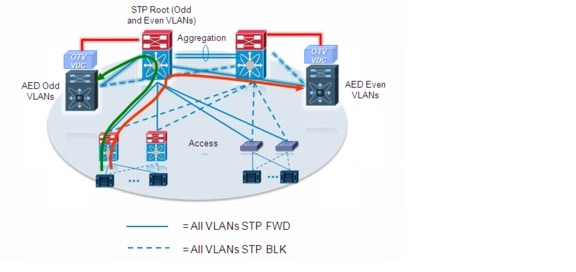

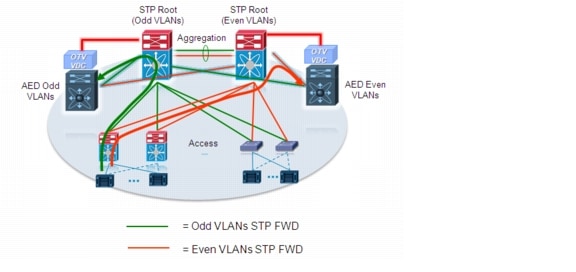

As mentioned above, the single site adjacency (pre 5.2(1) releases) or dual site adjacencies (from 5.2(1) release) are used to negotiate the Authoritative Edge Device role. A deterministic algorithm is implemented to split the AED role for Odd and Evan VLANs between two OTV Edge Devices. More specifically, the Edge Device identified by a lower System-ID will become Authoritative for all the even extended VLANs, whereas the device with higher System-ID will "own" the odd extended VLANs. This behavior is hardware enforced and cannot be tuned in the current NX-OS release.

Note ![]() The specific OTV edge device System-ID can be visualized using the show otv site CLI command.

The specific OTV edge device System-ID can be visualized using the show otv site CLI command.

Figure 1-24 shows how the definition of the AED role in each site prevents the creation of end-to-end STP loops.

Figure 1-24 Prevention of End-to-End STP Loops

Assume, for example, a Layer 2 broadcast frame is generated in the left data center. The frame is received by both OTV edge devices however, only the AED is allowed to encapsulate the frame and send it over the OTV overlay. All OTV edge devices in remote sites will also receive the frame via the overlay, since broadcast traffic is delivered via the multicast group used for the OTV control protocol, but only the AED is allowed to decapsulate the frame and send it into the site.

If an AED re-election was required in a specific failure condition or misconfiguration scenario, the following sequence of events would be triggered to re-establish traffic flows both for inbound and outbound directions:

1. ![]() The OTV control protocol hold timer expires or an OTV edge device receives an explicit AED capability notification from the current AED.

The OTV control protocol hold timer expires or an OTV edge device receives an explicit AED capability notification from the current AED.

2. ![]() One of the two edge devices becomes an AED for all extended VLANs.

One of the two edge devices becomes an AED for all extended VLANs.

3. ![]() The newly elected AED imports in its CAM table all the remote MAC reachability information. This information is always known by the non-AED device, since it receives it from the MAC advertisement messages originated from the remote OTV devices. However, the MAC reachability information for each given VLAN is imported into the CAM table only if the OTV edge device has the AED role for that VLAN.

The newly elected AED imports in its CAM table all the remote MAC reachability information. This information is always known by the non-AED device, since it receives it from the MAC advertisement messages originated from the remote OTV devices. However, the MAC reachability information for each given VLAN is imported into the CAM table only if the OTV edge device has the AED role for that VLAN.

4. ![]() The newly elected AED starts learning the MAC addresses of the locally connected network entities and communicates this information to the remote OTV devices by leveraging the OTV control protocol.

The newly elected AED starts learning the MAC addresses of the locally connected network entities and communicates this information to the remote OTV devices by leveraging the OTV control protocol.

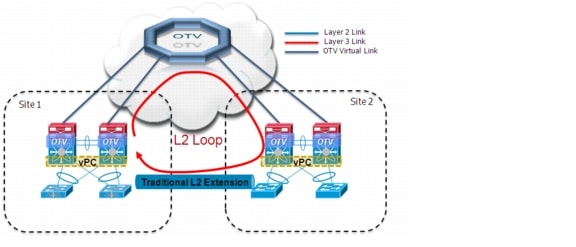

The same loop avoidance mechanism discussed above would be used in site merging scenarios, where a back-door Layer 2 connection exists between data centers. This could happen for example when migrating the LAN extension solution from a traditional one to OTV. Figure 1-25 highlights the fact that during the migration phase it may be possible to create an end-to-end loop between sites. This is a consequence of the design recommendation of preventing STP BPDUs from being sent across the DCI connection.

Figure 1-25 Creation of an End-to-End Loop

To avoid the creation of the end-to-end loop, depicted in Figure 1-25, and minimize the outage for Layer 2 traffic during the migration phase from a traditional DCI solution to OTV, the following step-by-step procedure should be followed (refer to the "OTV Configuration" section for more configuration details):

•![]() Ensure that the same site VLAN is globally defined on the OTV devices deployed in the two data center sites.

Ensure that the same site VLAN is globally defined on the OTV devices deployed in the two data center sites.

•![]() Make sure that the site VLAN is added to the set of VLANs carried via the traditional LAN extension solution. This is critical, because it will allow OTV to detect the already existent Layer 2 connection. For this to happen, it is also important to ensure that the OTV edge devices are adjacent to each other on the site VLAN (i.e. a Layer 2 path exists between these devices through the existing DCI connection).

Make sure that the site VLAN is added to the set of VLANs carried via the traditional LAN extension solution. This is critical, because it will allow OTV to detect the already existent Layer 2 connection. For this to happen, it is also important to ensure that the OTV edge devices are adjacent to each other on the site VLAN (i.e. a Layer 2 path exists between these devices through the existing DCI connection).

•![]() From 5.2(1) release, makes sure also that the same site-identifier is configured for all the OTV devices belonging to the same site.

From 5.2(1) release, makes sure also that the same site-identifier is configured for all the OTV devices belonging to the same site.

•![]() Create the Overlay configuration on the first edge device in site 1, but do not extend any VLAN for the moment. Do not worry about enabling OTV on the second edge device belonging to the same site yet.

Create the Overlay configuration on the first edge device in site 1, but do not extend any VLAN for the moment. Do not worry about enabling OTV on the second edge device belonging to the same site yet.

•![]() Create the Overlay configuration on the first edge device in site 2, but do not extend any VLAN for the moment. Do not worry about enabling OTV on the second edge device belonging to the same site yet.

Create the Overlay configuration on the first edge device in site 2, but do not extend any VLAN for the moment. Do not worry about enabling OTV on the second edge device belonging to the same site yet.

•![]() Make sure the OTV edge devices in site 1 and site 2 establish an internal adjacency on the site VLAN (use the "show otv site" CLI command for that). Assuming the internal adjacency is established, OTV will consider the two sites as merged in a single one. The two edge devices will then negotiate the AED role, splitting between them odd and even VLANs (as previously discussed).

Make sure the OTV edge devices in site 1 and site 2 establish an internal adjacency on the site VLAN (use the "show otv site" CLI command for that). Assuming the internal adjacency is established, OTV will consider the two sites as merged in a single one. The two edge devices will then negotiate the AED role, splitting between them odd and even VLANs (as previously discussed).

•![]() Configure the VLANs that need to be extended through the OTV Overlay (using the "otv extend-vlan" command) on the OTV edge devices in site 1 and site 2. Notice that even if the two OTV devices are now adjacent also via the overlay (this can be verified with the "show otv adjacency" command) the VLAN extension between sites is still happening only via the traditional Layer 2 connection.

Configure the VLANs that need to be extended through the OTV Overlay (using the "otv extend-vlan" command) on the OTV edge devices in site 1 and site 2. Notice that even if the two OTV devices are now adjacent also via the overlay (this can be verified with the "show otv adjacency" command) the VLAN extension between sites is still happening only via the traditional Layer 2 connection.

•![]() Disable the traditional Layer 2 extension solution. This will cause the OTV devices to lose the internal adjacency established via the site VLAN and to detect a "site partition" scenario. After a short convergence window where Layer 2 traffic between sites is briefly dropped, VLANs will start being extended only via the OTV overlay. It is worth noticing that at this point each edge device will assume the AED role for all the extended VLANs.

Disable the traditional Layer 2 extension solution. This will cause the OTV devices to lose the internal adjacency established via the site VLAN and to detect a "site partition" scenario. After a short convergence window where Layer 2 traffic between sites is briefly dropped, VLANs will start being extended only via the OTV overlay. It is worth noticing that at this point each edge device will assume the AED role for all the extended VLANs.

•![]() OTV is now running in single-homed mode (one edge device per site) and it is then possible to improve the resiliency of the solution by enabling OTV on the second edge device existing in each data center site.

OTV is now running in single-homed mode (one edge device per site) and it is then possible to improve the resiliency of the solution by enabling OTV on the second edge device existing in each data center site.

Traffic Load Balancing

As mentioned, the election of the AED is paramount to eliminating risk of creating end-to-end loops. The first immediate consequence is that all Layer 2 multicast and broadcast streams need to be handled by the AED device, leading to a per-VLAN load-balancing scheme for these traffic flows.

The exact same considerations are valid for unicast traffic when considering the current NX-OS release of OTV. Only the AED is allowed to forward unicast traffic in and out a given site. However, an improvement behavior is planned for a future release to provide per-flow load-balancing of unicast traffic. Figure 1-26 shows that this behavior can be achieved every time Layer 2 flows are received by the OTV edge devices over a vPC connection.

Figure 1-26 Unicast Traffic Load Balancing

The vPC peer-link is leveraged in the initial release to steer the traffic to the AED device. In future releases where the edge device does not play the AED role, a given VLAN will be allowed to forward unicast traffic to the remote sites via the OTV overlay, providing a desired per-flow load-balancing behavior.

Another consideration for traffic load balancing is on a per-device level: to understand the traffic behavior it is important to clarify that in the current Nexus 7000 hardware implementation, the OTV edge device encapsulates the entire original Layer 2 frame into an IP packet. This means that there is no Layer 4 (port) information available to compute the hash determining which link to source the traffic from. This consideration is relevant in a scenario where the OTV edge device is connected to the Layer 3 domain by leveraging multiple routed uplinks, as highlighted in Figure 1-27.

Figure 1-27 Inbound and Outbound Traffic Paths

In the example above, it is important to distinguish between egress/ingress direction and unicast/multicast (broadcast) traffic flows.

•![]() Egress unicast traffic: this is destined to the IP address of a remote OTV edge device Join interface. All traffic flows sent by the AED device to a given remote site are characterized by the same source_IP and dest_IP values in the outer IP header of the OTV encapsulated frames. This means that hashing performed by the Nexus 7000 platform acting as the edge device would always select the same physical uplink, even if the remote destination was known with the same metric (equal cost path) via multiple Layer 3 links (notice that the link selected may not necessarily be the Join Interface). However, traffic flows sent to different remote sites will use a distinct dest_IP value, hence it is expected that a large number of traffic flows will be load-balanced across all available Layer 3 links, as shown on the left in Figure 1-27.

Egress unicast traffic: this is destined to the IP address of a remote OTV edge device Join interface. All traffic flows sent by the AED device to a given remote site are characterized by the same source_IP and dest_IP values in the outer IP header of the OTV encapsulated frames. This means that hashing performed by the Nexus 7000 platform acting as the edge device would always select the same physical uplink, even if the remote destination was known with the same metric (equal cost path) via multiple Layer 3 links (notice that the link selected may not necessarily be the Join Interface). However, traffic flows sent to different remote sites will use a distinct dest_IP value, hence it is expected that a large number of traffic flows will be load-balanced across all available Layer 3 links, as shown on the left in Figure 1-27.

Note ![]() The considerations above are valid only in the presence of equal cost routes. If the remote OTV edge device was reachable with a preferred metric out of a specific interface, all unicast traffic directed to that site would use that link, independently from where the Join Interface is defined. Also, the same considerations apply also to deployment scenarios where a Layer 3 Port-channel is defined as OTV Join Interface.

The considerations above are valid only in the presence of equal cost routes. If the remote OTV edge device was reachable with a preferred metric out of a specific interface, all unicast traffic directed to that site would use that link, independently from where the Join Interface is defined. Also, the same considerations apply also to deployment scenarios where a Layer 3 Port-channel is defined as OTV Join Interface.

•![]() Egress multicast/broadcast and control plane traffic: independently from the number of equal cost paths available on a given edge device, multicast, broadcast and control plane traffic is always going to be sourced from the defined Join interface.

Egress multicast/broadcast and control plane traffic: independently from the number of equal cost paths available on a given edge device, multicast, broadcast and control plane traffic is always going to be sourced from the defined Join interface.

•![]() Ingress unicast traffic: all the incoming unicast traffic will always be received on the Join interface, since it is destined to this interface IP address, as shown on the right in Figure 1-27.

Ingress unicast traffic: all the incoming unicast traffic will always be received on the Join interface, since it is destined to this interface IP address, as shown on the right in Figure 1-27.

•![]() Ingress multicast/broadcast and control plane traffic: independently from the number of equal cost paths available on a given edge device, multicast, broadcast and control plane traffic must always be received on the defined Join interface. If in fact control plane messages were delivered to a different interface, they would be dropped and this would prevent OTV from becoming fully functional.

Ingress multicast/broadcast and control plane traffic: independently from the number of equal cost paths available on a given edge device, multicast, broadcast and control plane traffic must always be received on the defined Join interface. If in fact control plane messages were delivered to a different interface, they would be dropped and this would prevent OTV from becoming fully functional.

Load balancing behavior will be modified once loopback interfaces (or multiple physical interfaces) will be supported as OTV Join interfaces allowing the load-balance of unicast and multicast traffic across multiple links connecting the OTV edge device to the routed network domain.

In the meantime, a possible workaround to improve the load-balancing of OTV traffic consists in leveraging multiple OTV overlays on the same edge device and spread the extended VLANs between them. This concept is highlighted in Figure 1-28.

Figure 1-28 Use of Multiple TV Overlays

In the scenario above, all traffic (unicast, multicast, broadcast, control plane) belonging to a given Overlay will always be sent and received on the same physical link configured as Join Interface, independent from the remote site it is destined to. This means that if the VLANs that need to be extended are spread across the 3 defined Overlays, an overall 3 way load-balancing would be achieved for all traffic even in point-to-point deployments where all the traffic is sent to a single remote data center site.

QoS Considerations

To clarify the behavior of OTV from a QoS perspective, we should distinguish between control and data plane traffic.

•![]() Control Plane: The control plane frames are always originated by the OTV edge device and statically marked with a CoS = 6/DSCP = 48.

Control Plane: The control plane frames are always originated by the OTV edge device and statically marked with a CoS = 6/DSCP = 48.

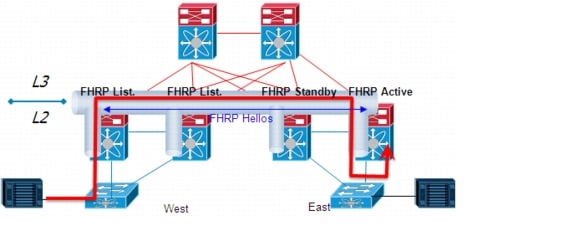

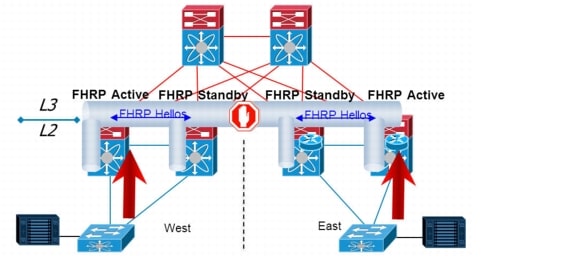

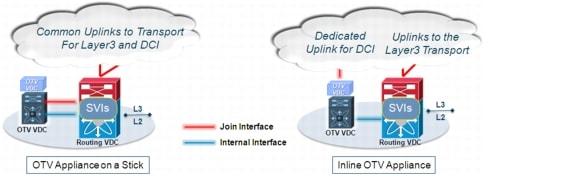

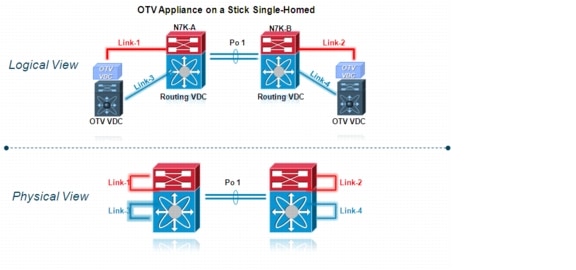

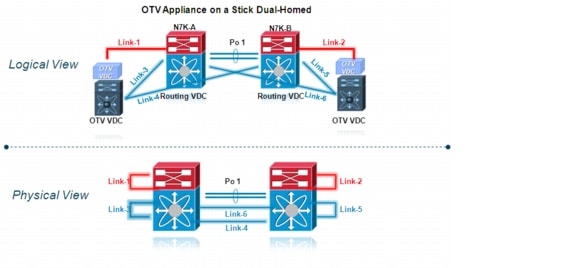

•![]() Data Plane: The assumption is that the Layer 2 frames received by the edge device to be encapsulated have already been properly marked (from a CoS and DCSP perspective).