Introduction

This document describes how to configure the Quality of Service (QoS) Offload feature on the Cisco 9000 Series Aggregated Services Router (ASR9K) platform. The purpose, application, and limitations of the feature are also described.

Requirements

Ensure that your system meets these requirements before you attempt this configuration:

- One or both of these satellite Package Installation Envelopes (PIEs) for the specific satellite hardware must be installed and activated:

  - asr9k-asr9000v-nV-px.pie-5.1.1

  - asr9k-asr901-nV-px.pie-5.1.2

- The satellite must have updated software and Field-Programmable Devices (FPDs).

Components Used

The information in this document is based on these software and hardware versions:

- Cisco IOS® XR Version 5.1.1 on the ASR9K for the ASR-9000v.

- Cisco IOS XR Version 5.1.2 on the ASR9K for the ASR-901.

Note: The QoS Offload feature on the ASR-903 is not officially supported at this time.

The information in this document was created from the devices in a specific lab environment. All of the devices used in this document started with a cleared (default) configuration. If your network is live, make sure that you understand the potential impact of any command.

Background Information

QoS Offload Overview

The Inter-Chassis Link (ICL) between the satellite and the ASR9K (typically 10 Gbps) can easily become saturated by the access interfaces on the satellite itself. The QoS Offload feature provides QoS capabilities in hardware on the satellite itself (as opposed to on the ASR9K host) in order to prevent the loss of critical data on the ICL in times of congestion.

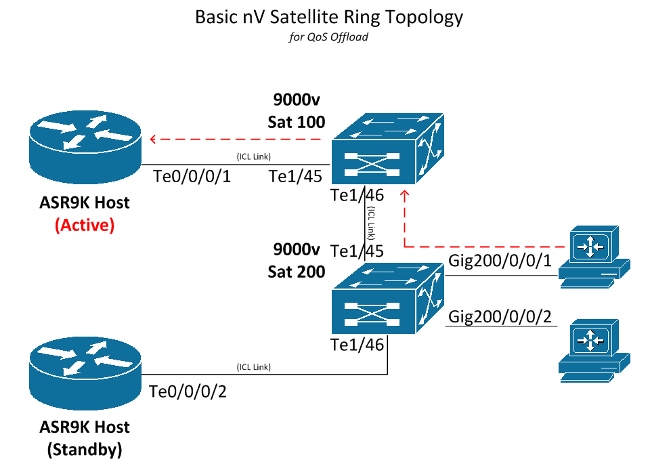

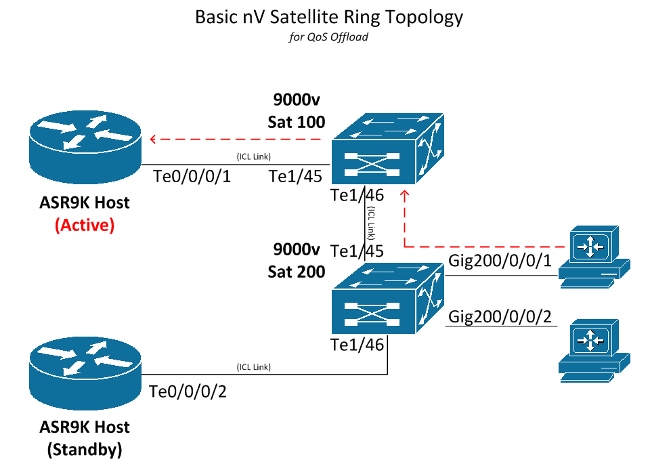

The QoS Offload feature was introduced in order to protect traffic over the ICL from congestion in the direction from the satellite access port to the ASR9K, as denoted by the dashed red arrows in the next image. An understanding of this direction of protection helps explain some of the limitations and helps when you design the QoS implementation.

Critical Processes for QoS Offload

This section describes the two critical processes that are used for QoS offload.

Interface Control Plane Extender (icpe_cpm) Process

The Interface Control Plane Extender (ICPE) process manages the Satellite Discovery and Control (SDAC) protocol, which provides the communication channel between the ASR9K host and the satellite.

QoS Policy-Manager (qos_ma) Process

The QoS policy-manager process performs these actions:

- Verifies and stores the class-maps and policy-maps in a database on the Route Switch Processor (RSP).

- Maintains a database of satellite interface to service-policy mappings.

- Periodically collects the QoS statistics from the satellite boxes for offloaded service-policies.

- Runs on all nodes where control-plane interfaces exist, which includes both RSPs and Line Cards (LCs).

Configure

Use this section in order to configure the QoS Offload feature on the ASR9K.

QoS Offload Configuration

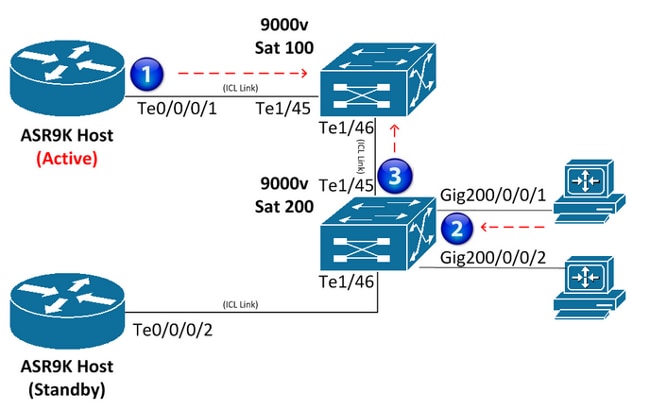

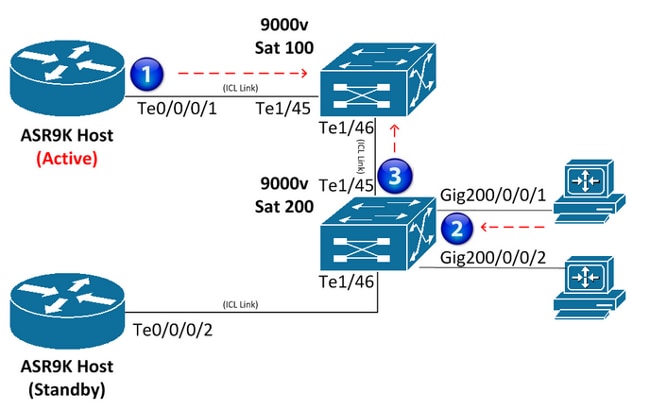

This diagram serves as a visual representation of the location in which the service-policy is installed:

Satellite Access Interface

Here is an example configuration on the satellite access interface:

interface GigabitEthernet200/0/0/1

service-policy output NQoSOff_Out

service-policy input NQoSOff_In

nv

service-policy input ACCESS

Note: The service-policy output NQoSOff_Out applies to non-QoS offload traffic that is transmitted from the ASR9K ICL interface to the satellite access interface (1), and the service-policy input NQoSOff_In applies to non-QoS offload traffic that is received on the ASR9K from the satellite access interface (1). The service-policy input ACCESS, configured under nv, applies to the QoS offload traffic that is received on the satellite access interface from the PC (2).

ICL Interface

Here is an example configuration on the ICL interface:

interface TenGigE0/0/0/1

service-policy output NOT_SUPPORTED

service-policy input NOT_SUPPORTED

nv

satellite-fabric-link network

redundancy

iccp-group 1

!

satellite 200

service-policy output ICL_OFFLOAD

remote-ports GigabitEthernet 0/0/1-2

Note: The service-policy output and input commands applied directly under this interface are NOT_SUPPORTED; refer to the next section and design carefully. The service-policy output ICL_OFFLOAD, configured under nv, applies to the QoS offload traffic that is sent from the satellite ICL to the ASR9K (3).

ICL Oversubscription

The QoS service-policies are not supported directly on the ICL interfaces (non-QoS offload). Thus, care must be taken so that you do not oversubscribe the satellite ICL interfaces. This section provides two methods that are used in order to prevent ICL oversubscription. The first method restricts the number of access interfaces for each ICL so that congestion is not possible. The second method applies shapers to each access interface so that the sum of all the shapers does not exceed the bandwidth of the ICL.

Restrict Access Interfaces for Each ICL

In order to support fifteen 1-Gbps connections on a satellite (for a potential of 15 Gbps traffic) without packet drops during congestion, two separate 10-Gbps ICL links must be configured. Map the first ten 1-Gbps satellite access interfaces to one 10-Gbps ICL connection, and the next five 1-Gbps satellite access interfaces to the second 10-Gbps ICL connection. Other combinations are possible as long as the number of access interfaces mapped to each 10-Gbps ICL does not exceed ten.

Here is an example configuration:

interface TenGigE0/0/0/1

description ICL_LINK_1_FOR_SAT100

nv

satellite-fabric-link network

satellite 100

remote-ports GigabitEthernet 0/0/0-9

!

interface TenGigE0/0/0/2

description ICL_LINK_2_FOR_SAT100

nv

satellite-fabric-link network

satellite 100

remote-ports GigabitEthernet 0/0/10-14
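As one illustration of the other combinations mentioned above, the fifteen access ports could be split eight and seven across the two ICLs. This is only a sketch that reuses the interface names and satellite ID from the previous example; the 8/7 split is an assumption chosen for illustration. Neither ICL carries more than ten 1-Gbps access ports, so neither can be oversubscribed.

```
interface TenGigE0/0/0/1
 description ICL_LINK_1_FOR_SAT100
 nv
  satellite-fabric-link network
   satellite 100
    ! 8 x 1 Gbps = 8 Gbps offered to a 10-Gbps ICL
    remote-ports GigabitEthernet 0/0/0-7
   !
  !
 !
!
interface TenGigE0/0/0/2
 description ICL_LINK_2_FOR_SAT100
 nv
  satellite-fabric-link network
   satellite 100
    ! 7 x 1 Gbps = 7 Gbps offered to a 10-Gbps ICL
    remote-ports GigabitEthernet 0/0/8-14
   !
  !
 !
!
```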

Apply Shapers on Access Interfaces

The second method that is used in order to prevent oversubscription is to apply a shaper directly to each satellite access interface (GigE100/0/0/9, for example) so that the access interfaces cannot collectively schedule more than the ICL line rate across the ICL to the satellite. For example, with a single 10-Gbps ICL, if a 500-Mbps shaper is applied to each of twenty GigabitEthernet satellite interfaces, then no more than 10 Gbps (500 Mbps x 20) is ever scheduled to traverse the ICL.

Here is an example configuration:

interface TenGigE0/0/0/1

nv

satellite-fabric-link network

satellite 100

remote-ports GigabitEthernet 0/0/0-19

!

interface GigE100/0/0/0 (For all Gi100/0/0/0-19)

service-policy output 500MBPS_SHAPE

Note: Full Modular QoS CLI (MQC) functionality is provided for non-QoS offload on satellite access interfaces that are virtual entities on the ASR9K host.
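The example above references a policy-map named 500MBPS_SHAPE without showing its definition. A minimal sketch of what such a policy might look like follows, assuming a flat shaper on class-default; this document does not give the actual definition, so treat the contents as an assumption. Because the policy is attached under the access interface itself (not under nv), it is a non-offload policy.

```
! Hypothetical definition; only the policy name appears in the example above.
policy-map 500MBPS_SHAPE
 class class-default
  ! Shape all traffic sent toward the satellite access port to 500 Mbps
  shape average 500 mbps
 !
 end-policy-map
!
interface GigabitEthernet100/0/0/0
 service-policy output 500MBPS_SHAPE
!
```

With the same policy attached to all twenty access interfaces that map to one 10-Gbps ICL, the shapers sum to 20 x 500 Mbps = 10 Gbps, which does not exceed the ICL bandwidth.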

Protect Control-Plane Traffic Over ICL

This section provides a configuration example that protects network control-plane traffic received on a satellite access interface as it traverses the ICL. It demonstrates one way in which this can be accomplished:

Satellite Access Interface Config:

class-map match-any routing

match precedence 6

policy-map Protect_NCP

class routing

set qos-group 4

!

class class-default

set qos-group 0

interface Gi100/0/0/1

description Satellite Access Interface

service-policy input Protect_NCP

ICL Interface Config:

class-map match-any qos-group-4

match qos-group 4

policy-map ICL-Policy

class qos-group-4

bandwidth remaining percent 5

!

class class-default

bandwidth remaining percent 90

interface TenGigE0/0/0/1

description Satellite ICL

nv

satellite-fabric-link network

redundancy

iccp-group 1

!

satellite 100

service-policy output ICL-Policy

In the previous configuration example, the Protect_NCP policy-map matches all packets with an IP Precedence of 6 and assigns them to internal QoS group 4. When those packets egress on the ICL towards the ASR9K host, they are protected by the bandwidth reservation that is configured for the qos-group-4 class in the ICL-Policy policy-map.

Reminder: A QoS group is not an actual marking on the ToS-byte of the packet, but rather an internal marking that only has local significance to the satellite and ASR9K host.

IMPORTANT! Only QoS groups 1, 2, 4, and 5 can be user-defined when you use QoS Offload. QoS groups 3, 6, and 7 are reserved for underlying functionality that is specific to the nV satellite and must never be used. QoS group 0 is reserved for class-default traffic.
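To illustrate the QoS group rule above, the following sketch extends the earlier example with a second protected class that uses QoS group 2, one of the user-definable groups. The names voice, qos-group-2, Protect_NCP_Voice, and ICL-Policy-2, the choice of IP Precedence 5, and the percentages are all assumptions for illustration. Only match and set actions that already appear in the previous example are used; whether a given action is supported on a particular satellite should still be checked against the supported capabilities.

```
! Policy for the satellite access interface (input direction)
class-map match-any routing
 match precedence 6
 end-class-map
!
class-map match-any voice
 match precedence 5
 end-class-map
!
policy-map Protect_NCP_Voice
 class routing
  set qos-group 4
 !
 class voice
  ! Group 2 is user-definable; groups 3, 6, and 7 are reserved
  set qos-group 2
 !
 class class-default
  set qos-group 0
 !
 end-policy-map
!
! Policy for the satellite ICL (output direction)
class-map match-any qos-group-4
 match qos-group 4
 end-class-map
!
class-map match-any qos-group-2
 match qos-group 2
 end-class-map
!
policy-map ICL-Policy-2
 class qos-group-4
  bandwidth remaining percent 5
 !
 class qos-group-2
  bandwidth remaining percent 5
 !
 class class-default
  bandwidth remaining percent 90
 !
 end-policy-map
!
```

These policies would be attached in the same places as Protect_NCP and ICL-Policy in the previous example.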

QoS Offload Limitations

This section describes the limitations of the QoS Offload feature.

Service-Policy Placement Restrictions

QoS offload is implemented in order to offer QoS capabilities from the direction of the satellite access port towards the ASR9K host. These placement restrictions apply:

- A QoS service-policy cannot be placed directly on an ASR9K ICL interface for offload or non-offload.

- Egress (output) service-policies are only supported for QoS offload on the satellite ICL interfaces that face the active host.

- Ingress (input) service-policies are only supported for QoS offload on the satellite access port interfaces or bundles for traffic that is received directly on the satellite access interface or bundle. In the event of a bundle, the QoS policy is installed on each member on a per-link basis.

- An offloaded service-policy cannot be applied to a sub-interface.

Supported QoS Offload Capabilities

The supported QoS offload capabilities are documented in the Supported Platform-Specific Information for QoS Offload section of the Cisco ASR 9000 Series Aggregation Services Router Modular Quality of Service Configuration Guide, Release 5.1.x.

Note: There is currently no support for Simple Network Management Protocol (SNMP)-related QoS offload statistics.

Non-QoS Offload Limitations on Satellite Access Interfaces

This section describes the non-QoS offload limitations on the satellite access interfaces.

Service-Policy Placement Restrictions

These service-policy placement restrictions apply to non-QoS offload on satellite access interfaces:

- The ingress and egress service-policies can be applied under the actual access port configuration (not nv). These policies are not offloaded, and packets are queued before they are placed on the wire from the ASR9K to the satellite.

- A QoS service-policy cannot be placed directly on an ASR9K ICL interface for offload or non-offload.

Service Policy Topology Restrictions

For hub and spoke topologies, tri-level (grandparent, parent, and child) QoS policies are supported. For the newer topologies, Ring and Layer 2 (L2) Fabric, only dual-level QoS policies are supported.
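As a sketch of the dual-level case, the following non-offload policy nests a child queuing policy under a parent shaper on a satellite access interface. All policy and class names, the shaper rate, and the percentages are assumptions for illustration. The policy is applied under the access interface itself (not under nv), so it is not offloaded.

```
class-map match-any routing
 match precedence 6
 end-class-map
!
! Child level: divide the parent's shaped bandwidth between classes
policy-map CHILD_QUEUING
 class routing
  bandwidth remaining percent 10
 !
 class class-default
  bandwidth remaining percent 90
 !
 end-policy-map
!
! Parent level: shape the aggregate, then hand off to the child policy
policy-map PARENT_500M
 class class-default
  shape average 500 mbps
  service-policy CHILD_QUEUING
 !
 end-policy-map
!
interface GigabitEthernet100/0/0/1
 service-policy output PARENT_500M
!
```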

Verify

Use this section in order to confirm that your QoS offload configuration works properly.

The Output Interpreter Tool (registered customers only) supports certain show commands. Use the Output Interpreter Tool in order to view an analysis of show command output.

QoS Offload Policy Installation on Satellite

Enter the show qos status interface command with the nv satellite option in order to determine whether an offloaded QoS policy has been installed correctly in the satellite hardware. If the status in the command output shows ACTIVE, then installation of the offloaded QoS policy is successful. If the status in the output shows INACTIVE, the installation has failed, and the output includes a failure description.

If a failure occurs, the cause is often either a problem with the actual ICL link or a QoS policy that is supported in the IOS XR software version that the ASR9K host runs but is not supported on the actual satellite. Refer to the Supported QoS Offload Capabilities section of this document for more information.

If the status in the command output shows an In-Progress state, it indicates that the satellite connection was lost. In this intermediate state between active and inactive, the QoS policy has not been successfully offloaded.

Here are two example outputs that show a successful offload and a failed offload:

OUTPUT:

RP/0/RSP0/CPU0:ASR9001#show qos status interface gig 0/0/0/0 nv satellite 100

Wed Apr 16 23:50:46.575 UTC

GigabitEthernet0/0/0/0 direction input: Service Policy not installed

GigabitEthernet0/0/0/0 Satellite: 100 output: test-1

Last Operation Attempted : ADD

Status : ACTIVE

OUTPUT:

RP/0/RSP0/CPU0:ASR9001#show qos status interface gig 0/0/0/0 nv satellite 100

Wed Apr 16 23:51:34.272 UTC

GigabitEthernet0/0/0/0 direction input: Service Policy not installed

GigabitEthernet0/0/0/0 Satellite: 100 output: test-2

Last Operation Attempted : ADD

Status : INACTIVE

Failure description :Apply Servicepolicy: Handle Add Request AddSP

test-2 CliParserWrapper:

Remove shape action under class-default first.

QoS Statistics of Offloaded QoS Policy on Satellite Access Interface

Enter these commands in order to view or clear the statistics of a QoS policy map that is applied on the remote satellite access interface:

- show policy-map interface Gi100/0/0/9 input nv

- clear qos counters interface Gi100/0/0/9 input nv

QoS Statistics of Offloaded QoS Policy on Satellite ICL Interface

Enter these commands in order to view or clear the statistics of a QoS policy map that is applied on the remote satellite ICL interface:

- show policy-map interface Ten0/0/0/1 output nv satellite-fabric-link 100

- clear qos counters interface Ten0/0/0/1 output nv satellite-fabric-link 100

Note: The QoS statistics are updated every thirty seconds to the ASR9K host.

Troubleshoot

Enter these commands in order to collect debug information when you attempt to troubleshoot the QoS Offload feature or when you open a Cisco Technical Assistance Center (TAC) service request:

- show policymgr process trace [all|intermittent|critical]

- show tech qos

- show policy-lib trace [all|critical|intermittent]

- show policy-lib trace client <client-name> location <loc>

- show app-obj trace

- show app-obj db <db_name> jid <jid> location <loc>

- show qos-ma trace

Note: The <db_name> is either the class_map_qos_db or the policy_map_qos_db.

Known Defects

For information about a known defect that relates to the information provided in this document, refer to Cisco bug ID CSCuj87492 - service-policy option under non-satether interface nv should be removed. This defect was raised in order to remove the nv option from non-satellite interfaces.
