Cisco IOS XR Multicast Configuration Guide for the Cisco CRS Router, Release 5.1.x

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- Updated:

- May 18, 2016

Chapter: Implementing Multicast Routing on Cisco IOS XR Software

- Prerequisites for Implementing Multicast Routing

- Information About Implementing Multicast Routing

- Key Protocols and Features Supported in the Cisco IOS XR Software Multicast Routing Implementation

- Multicast Routing Functional Overview

- PIM-SM, PIM-SSM, and PIM-BIDIR

- Internet Group Management Protocol and Multicast Listener Discovery

- Protocol Independent Multicast

- PIM Shared Tree and Source Tree (Shortest Path Tree)

- Multicast-Intact

- Designated Routers

- Rendezvous Points

- Auto-RP

- PIM Bootstrap Router

- Reverse-Path Forwarding

- Multicast VPN

- Multitopology Routing

- Multicast VPN Extranet Routing

- MVPN Bidirectional Overview

- Multicast VPN Hub and Spoke Topology

- Label Switched Multicast (LSM) Multicast Label Distribution Protocol (mLDP) based Multicast VPN (mVPN) Support

- Next-Generation Multicast VPN

- PE-PE Ingress Replication

- Multicast Source Discovery Protocol

- Multicast Nonstop Forwarding

- Multicast Configuration Submodes

- Understanding Interface Configuration Inheritance

- Understanding Interface Configuration Inheritance Disablement

- Understanding Enabling and Disabling Interfaces

- Multicast Routing Information Base

- Multicast Forwarding Information Base

- MSDP MD5 Password Authentication

- How to Implement Multicast Routing

- Configuring PIM-SM and PIM-SSM

- Configuring Native PIM BIDIR

- Configuring MVPN GRE BIDIR

- Configuring MVPN MLDP BIDIR

- Configuring a Static RP and Allowing Backward Compatibility

- Configuring Auto-RP to Automate Group-to-RP Mappings

- Configuring the Bootstrap Router

- Calculating Rates per Route

- Configuring Multicast Nonstop Forwarding

- Configuring Multicast VPN

- Prerequisites for Multicast VPN

- Restrictions for Multicast VPN for Multicast Routing

- Enabling a VPN for Multicast Routing

- Specifying the PIM VRF Instance

- Specifying the IGMP VRF Instance

- Configuring the MDT Source per VRF

- Configuring Label Switched Multicast

- Verification of LSM mLDP based MVPN Configuration

- Configuring Multitopology Routing

- Configuring MVPN Extranet Routing

- Interconnecting PIM-SM Domains with MSDP

- Controlling Source Information on MSDP Peer Routers

- Configuring MSDP MD5 Password Authentication

- Configuring VRF for MSDP

- Multicast only fast reroute (MoFRR)

- MSDP Anycast RP Configuration on Cisco IOS XR Software: Example

- Bidir-PIM Configuration on Software: Example

- Calculating Rates per Route: Example

- Preventing Auto-RP Messages from Being Forwarded on Software: Example

- Inheritance in MSDP on Software: Example

- MSDP-VRF: Example

- Configuring Route Policy for Static RPF: Example

- Configuring IPv4 Multicast VPN: Example

- Configuration Examples for MVPN Profiles

- Configuring Multitopology Routing: Example

- Configuring MVPN Extranet Routing: Example

- Configuring Multicast Hub and Spoke Topology: Example

- Configuring LSM based MLDP: Examples

Implementing

Multicast Routing on Cisco IOS XR Software

Multicast routing is a bandwidth-conserving technology that reduces traffic by simultaneously delivering a single stream of information to potentially thousands of corporate recipients and homes. Applications that take advantage of multicast routing include video conferencing, corporate communications, distance learning, and distribution of software, stock quotes, and news.

This document assumes that you are familiar with IPv4 and IPv6 multicast routing configuration tasks and concepts for Cisco IOS XR Software .

Multicast routing allows a host to send packets to a subset of all hosts as a group transmission rather than to a single host, as in unicast transmission, or to all hosts, as in broadcast transmission. The subset of hosts is known as group members and are identified by a single multicast group address that falls under the IP Class D address range from 224.0.0.0 through 239.255.255.255.

For detailed conceptual information about multicast routing and complete descriptions of the multicast routing commands listed in this module, you can refer to the Related Documents.

Feature History for Configuring Multicast Routing on the Cisco CRS Routers

|

Release |

Modification |

|---|---|

|

Release 2.0 |

This feature was introduced. |

|

Release 3.2 |

Support was added for the for IPv6 routing protocol and for the bootstrap router (BSR) feature. |

|

Release 3.5.0 |

Multicast VPNv4 was supported. |

|

Release 3.7.0 |

The following new features or functionality were added: |

|

Release 3.9.0 |

Support was added for these features: |

|

Release 4.0.0 |

Support for Auto-RP Lite and MVPN Hub and Spoke Topology were added. |

|

Release 4.1.1 |

Support for Label Switched Multicast (LSM) Multicast Label Distribution Protocol (mLDP) based Multicast VPN (mVPN) was added. |

|

Release 4.2.1 |

Support was added for these features: |

|

Release 6.1.2 |

MVPN IPv6 over IPv4 GRE feature was introduced. |

- Prerequisites for Implementing Multicast Routing

- Information About Implementing Multicast Routing

- How to Implement Multicast Routing

- Multicast only fast reroute (MoFRR)

- Configuring Route Policy for Static RPF

- Point-to-Multipoint Traffic Engineering Label-Switched Multicast

- Configuration Examples for Implementing Multicast Routing on Software

- Additional References

Prerequisites for Implementing Multicast Routing

-

You must install and activate the multicast pie.

- For detailed information about optional PIE installation, see Cisco IOS XR Getting Started Guide for the Cisco CRS Router

-

For MLDP, an MPLS PIE has to be installed.

-

You must be in a user group associated with a task group that includes the proper task IDs. The command reference guides include the task IDs required for each command. If you suspect user group assignment is preventing you from using a command, contact your AAA administrator for assistance.

-

You must be familiar with IPv4 and IPv6 multicast routing configuration tasks and concepts.

-

Unicast routing must be operational.

-

To enable multicast VPN, you must configure a VPN routing and forwarding (VRF) instance.

Information About Implementing Multicast Routing

- Key Protocols and Features Supported in the Cisco IOS XR Software Multicast Routing Implementation

- Multicast Routing Functional Overview

- PIM-SM, PIM-SSM, and PIM-BIDIR

- Internet Group Management Protocol and Multicast Listener Discovery

- Protocol Independent Multicast

- PIM Shared Tree and Source Tree (Shortest Path Tree)

- Multicast-Intact

- Designated Routers

- Rendezvous Points

- Auto-RP

- PIM Bootstrap Router

- Reverse-Path Forwarding

- Multicast VPN

- Multitopology Routing

- Multicast VPN Extranet Routing

- MVPN Bidirectional Overview

- Multicast VPN Hub and Spoke Topology

- Label Switched Multicast (LSM) Multicast Label Distribution Protocol (mLDP) based Multicast VPN (mVPN) Support

- Next-Generation Multicast VPN

- PE-PE Ingress Replication

- Multicast Source Discovery Protocol

- Multicast Nonstop Forwarding

- Multicast Configuration Submodes

- Understanding Interface Configuration Inheritance

- Understanding Interface Configuration Inheritance Disablement

- Understanding Enabling and Disabling Interfaces

- Multicast Routing Information Base

- Multicast Forwarding Information Base

- MSDP MD5 Password Authentication

Key Protocols and Features Supported in the Cisco IOS XR Software Multicast Routing Implementation

|

Feature |

IPv4 Support |

IPv6 Support |

|---|---|---|

|

Dynamic host registration |

Yes (IGMP v1/2/3) |

Yes (MLD v1/2) |

|

Explicit tracking of hosts, groups, and channels |

Yes (IGMP v3) |

Yes (MLD v2) |

|

PIM-SM1 |

Yes |

Yes |

|

PIM-SSM2 |

Yes |

Yes |

|

PIM-Bidir3 |

Yes |

Yes |

|

Auto-RP |

Yes |

No |

|

Multicast VPN |

Yes |

Yes4 |

|

BSR5 |

Yes |

Yes |

|

MSDP6 |

Yes |

No |

|

BGP7 |

Yes |

Yes |

|

Multicast NSF8 |

Yes |

Yes |

|

OOR handling9 |

Yes |

No |

Multicast Routing Functional Overview

Traditional IP communication allows a host to send packets to a single host (unicast transmission) or to all hosts (broadcast transmission). Multicast provides a third scheme, allowing a host to send a single data stream to a subset of all hosts (group transmission) at about the same time. IP hosts are known as group members.

Packets delivered to group members are identified by a single multicast group address. Multicast packets are delivered to a group using best-effort reliability, just like IP unicast packets.

The multicast environment consists of senders and receivers. Any host, regardless of whether it is a member of a group, can send to a group. However, only the members of a group receive the message.

A multicast address is chosen for the receivers in a multicast group. Senders use that group address as the destination address of a datagram to reach all members of the group.

Membership in a multicast group is dynamic; hosts can join and leave at any time. There is no restriction on the location or number of members in a multicast group. A host can be a member of more than one multicast group at a time.

How active a multicast group is and what members it has can vary from group to group and from time to time. A multicast group can be active for a long time, or it may be very short-lived. Membership in a group can change constantly. A group that has members may have no activity.

Routers use the Internet Group Management Protocol (IGMP) (IPv4) and Multicast Listener Discovery (MLD) (IPv6) to learn whether members of a group are present on their directly attached subnets. Hosts join multicast groups by sending IGMP or MLD report messages.

Many multimedia applications involve multiple participants. Multicast is naturally suitable for this communication paradigm.

Multicast Routing Implementation

Cisco IOS XR Software supports the following protocols to implement multicast routing:

-

IGMP and MLD are used (depending on the IP protocol) between hosts on a LAN and the routers on that LAN to track the multicast groups of which hosts are members.

-

Protocol Independent Multicast in sparse mode (PIM-SM) is used between routers so that they can track which multicast packets to forward to each other and to their directly connected LANs.

-

Protocol Independent Multicast in Source-Specific Multicast (PIM-SSM) is similar to PIM-SM with the additional ability to report interest in receiving packets from specific source addresses (or from all but the specific source addresses), to an IP multicast address.

-

PIM-SSM is made possible by IGMPv3 and MLDv2. Hosts can now indicate interest in specific sources using IGMPv3 and MLDv2. SSM does not require a rendezvous point (RP) to operate.

-

PIM Bidirectional is a variant of the Protocol Independent Multicast suit of routing protocols for IP multicast. PIM-BIDIR is designed to be used for many-to-many applications within individual PIM domains.

This image shows IGMP/MLD and PIM-SM operating in a multicast environment.

PIM-SM, PIM-SSM, and PIM-BIDIR

Protocl Independent Multicast (PIM) is a multicast routing protocol used to create multicast distribution trees, which are used to forward multicast data packets. PIM is an efficient IP routing protocol that is “independent” of a routing table, unlike other multicast protocols such as Multicast Open Shortest Path First (MOSPF) or Distance Vector Multicast Routing Protocol (DVMRP).

Cisco IOS XR Software supports Protocol Independent Multicast in sparse mode (PIM-SM), Protocol Independent Multicast in Source-Specific Multicast (PIM-SSM), and Protocol Independent Multicast in Bi-directional mode (BIDIR) permitting these modes to operate on your router at the same time.

PIM-SM and PIM-SSM supports one-to-many applications by greatly simplifying the protocol mechanics for deployment ease. Bidir PIM helps deploy emerging communication and financial applications that rely on a many-to-many applications model. BIDIR PIM enables these applications by allowing them to easily scale to a very large number of groups and sources by eliminating the maintenance of source state.

- PIM-SM Operations

- PIM-SSM Operations

- PIM-Bidirectional Operations

- Restrictions for PIM-SM and PIM-SSM, and PIM BIDIR

PIM-SM Operations

PIM in sparse mode operation is used in a multicast network when relatively few routers are involved in each multicast and these routers do not forward multicast packets for a group, unless there is an explicit request for the traffic.

For more information about PIM-SM, see the PIM-Sparse Mode.

PIM-SSM Operations

PIM in Source-Specific Multicast operation uses information found on source addresses for a multicast group provided by receivers and performs source filtering on traffic.

-

By default, PIM-SSM operates in the 232.0.0.0/8 multicast group range for IPv4 and ff3x::/32 (where x is any valid scope) in IPv6. To configure these values, use the ssm range command.

-

If SSM is deployed in a network already configured for PIM-SM, only the last-hop routers must be upgraded with Cisco IOS XR Software that supports the SSM feature.

-

No MSDP SA messages within the SSM range are accepted, generated, or forwarded.

PIM-Bidirectional Operations

PIM Bidirectional (BIDIR) has one shared tree from sources to RP and from RP to receivers. This is unlike the PIM-SM, which is unidirectional by nature with multiple source trees - one per (S,G) or a shared tree from receiver to RP and multiple SG trees from RP to sources.

Restrictions for PIM-SM and PIM-SSM, and PIM BIDIR

Interoperability with SSM

PIM-SM operations within the SSM range of addresses change to PIM-SSM. In this mode, only PIM (S,G) join and prune messages are generated by the router, and no (S,G) RP shared tree or (*,G) shared tree messages are generated.

IGMP Version

To report multicast memberships to neighboring multicast routers, hosts use IGMP, and all routers on the subnet must be configured with the same version of IGMP.

A router running Cisco IOS XR Software does not automatically detect Version 1 systems. You must use the version command in router IGMP configuration submode to configure the IGMP version.

MLD Version

To report multicast memberships to neighboring multicast routers, routers use MLD, and all routers on the subnet must be configured with the same version of MLD.

PIM-BIDIR Restrictions

-

PIM SSM is not supported in the core for BIDIR traffic in the MVRF.

-

Anycast RP is not supported for BIDIR in the MVRF and in native.

-

Data MDT is not supported for BIDIR in the MVRF.

-

Extranet is not supported for BIDIR traffic.

-

MVPN BIDIR in the core is not supported.

-

The SM scale is about 350 VRFs per system and the maximum BIDIR scale is expected to be around 10% of SM scale. Thus, the BIDIR scale is about 35 VRFs.

E3 Linecard Limitations

-

Only 1k BIDIR routes (without qos) are supported on E3.

-

If multicast QoS is applied, then we recommend having only <= 1k routes.

-

Only 10 egress interfaces are supported.

-

If Multicast QoS is enabled on E3, then policy can have a maximum of 5 queuing classes, (including class default), assuming 1k scale.

Note | It is recommended to have less BIDIR scale on E3 Linecard, as it requires lot of resources to support BIDIR support such as MGID usage, TLU memory, and CPU utilization. |

Internet Group Management Protocol and Multicast Listener Discovery

Cisco IOS XR Software provides support for Internet Group Management Protocol (IGMP) over IPv4 and Multicast Listener Discovery (MLD) over IPv6.

IGMP (and MLD) provide a means for hosts to indicate which multicast traffic they are interested in and for routers to control and limit the flow of multicast traffic throughout the network. Routers build state by means of IGMP and MLD messages; that is, router queries and host reports.

A set of queries and hosts that receive multicast data streams from the same source is called a multicast group. Hosts use IGMP and MLD messages to join and leave multicast groups.

Note | IGMP messages use group addresses, which are Class D IP addresses. The high-order four bits of a Class D address are 1110. Host group addresses can be in the range 224.0.0.0 to 239.255.255.255. The address 224.0.0.0 is guaranteed not to be assigned to any group. The address 224.0.0.1 is assigned to all systems on a subnet. The address 224.0.0.2 is assigned to all routers on a subnet. |

IGMP and MLD Versions

The following points describe IGMP versions 1, 2, and 3:

-

IGMP Version 1 provides for the basic query-response mechanism that allows the multicast router to determine which multicast groups are active and for other processes that enable hosts to join and leave a multicast group.

-

IGMP Version 2 extends IGMP allowing such features as the IGMP query timeout and the maximum query-response time. See RFC 2236.

Note | MLDv1 provides the same functionality (under IPv6) as IGMP Version 2. |

-

IGMP Version 3 permits joins and leaves for certain source and group pairs instead of requesting traffic from all sources in the multicast group.

Note | MLDv2 provides the same functionality (under IPv6) as IGMP Version 3. |

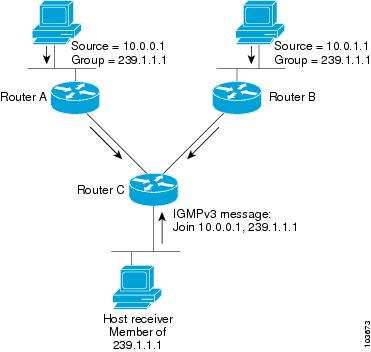

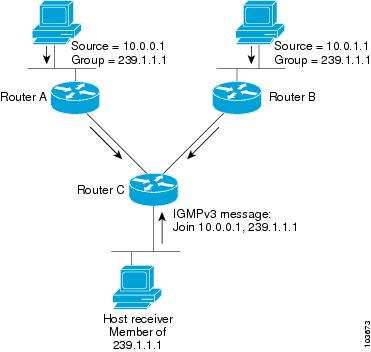

IGMP Routing Example

Figure 1 illustrates two sources, 10.0.0.1 and 10.0.1.1, that are multicasting to group 239.1.1.1. The receiver wants to receive traffic addressed to group 239.1.1.1 from source 10.0.0.1 but not from source 10.0.1.1. The host must send an IGMPv3 message containing a list of sources and groups (S, G) that it wants to join and a list of sources and groups (S, G) that it wants to leave. Router C can now use this information to prune traffic from Source 10.0.1.1 so that only Source 10.0.0.1 traffic is being delivered to

Router C.

Note | When configuring IGMP, ensure that all systems on the subnet support the same IGMP version. The router does not automatically detect Version 1 systems. Configure the router for Version 2 if your hosts do not support Version 3. |

Protocol Independent Multicast

Protocol Independent Multicast (PIM) is a routing protocol designed to send and receive multicast routing updates. Proper operation of multicast depends on knowing the unicast paths towards a source or an RP. PIM relies on unicast routing protocols to derive this reverse-path forwarding (RPF) information. As the name PIM implies, it functions independently of the unicast protocols being used. PIM relies on the Routing Information Base (RIB) for RPF information.

If the multicast subsequent address family identifier (SAFI) is configured for Border Gateway Protocol (BGP), or if multicast intact is configured, a separate multicast unicast RIB is created and populated with the BGP multicast SAFI routes, the intact information, and any IGP information in the unicast RIB. Otherwise, PIM gets information directly from the unicast SAFI RIB. Both multicast unicast and unicast databases are outside of the scope of PIM.

The Cisco IOS XR implementation of PIM is based on RFC 4601 Protocol Independent Multicast - Sparse Mode (PIM-SM): Protocol Specification. For more information, see RFC 4601 and the Protocol Independent Multicast (PIM): Motivation and Architecture Internet Engineering Task Force (IETF) Internet draft.

Note | Cisco IOS XR Software supports PIM-SM, PIM-SSM, PIM Bidir, and PIM Version 2 only. PIM Version 1 hello messages that arrive from neighbors are rejected. |

PIM-Sparse Mode

Typically, PIM in sparse mode (PIM-SM) operation is used in a multicast network when relatively few routers are involved in each multicast. Routers do not forward multicast packets for a group, unless there is an explicit request for traffic. Requests are accomplished using PIM join messages, which are sent hop by hop toward the root node of the tree. The root node of a tree in PIM-SM is the rendezvous point (RP) in the case of a shared tree or the first-hop router that is directly connected to the multicast source in the case of a shortest path tree (SPT). The RP keeps track of multicast groups, and the sources that send multicast packets are registered with the RP by the first-hop router of the source.

As a PIM join travels up the tree, routers along the path set up the multicast forwarding state so that the requested multicast traffic is forwarded back down the tree. When multicast traffic is no longer needed, a router sends a PIM prune message up the tree toward the root node to prune (or remove) the unnecessary traffic. As this PIM prune travels hop by hop up the tree, each router updates its forwarding state appropriately. Ultimately, the forwarding state associated with a multicast group or source is removed. Additionally, if prunes are not explicitly sent, the PIM state will timeout and be removed in the absence of any further join messages.

PIM-SM is the best choice for multicast networks that have potential members at the end of WAN links.

PIM-Source Specific Multicast

In many multicast deployments where the source is known, protocol-independent multicast-source-specific multicast (PIM-SSM) mapping is the obvious multicast routing protocol choice to use because of its simplicity. Typical multicast deployments that benefit from PIM-SSM consist of entertainment-type solutions like the ETTH space, or financial deployments that completely rely on static forwarding.

PIM-SSM is derived from PIM-SM. However, whereas PIM-SM allows for the data transmission of all sources sending to a particular group in response to PIM join messages, the SSM feature forwards traffic to receivers only from those sources that the receivers have explicitly joined. Because PIM joins and prunes are sent directly towards the source sending traffic, an RP and shared trees are unnecessary and are disallowed. SSM is used to optimize bandwidth utilization and deny unwanted Internet broadcast traffic. The source is provided by interested receivers through IGMPv3 membership reports.

In SSM, delivery of datagrams is based on (S,G) channels. Traffic for one (S,G) channel consists of datagrams with an IP unicast source address S and the multicast group address G as the IP destination address. Systems receive traffic by becoming members of the (S,G) channel. Signaling is not required, but receivers must subscribe or unsubscribe to (S,G) channels to receive or not receive traffic from specific sources. Channel subscription signaling uses IGMP to include mode membership reports, which are supported only in Version 3 of IGMP (IGMPv3).

To run SSM with IGMPv3, SSM must be supported on the multicast router, the host where the application is running, and the application itself. Cisco IOS XR Software allows SSM configuration for an arbitrary subset of the IP multicast address range 224.0.0.0 through 239.255.255.255. When an SSM range is defined, existing IP multicast receiver applications do not receive any traffic when they try to use addresses in the SSM range, unless the application is modified to use explicit (S,G) channel subscription.

PIM-Bidirectional Mode

Note | In Cisco IOS XR Release 4.2.1, Anycast RP is not supported on PIM Bidirectional mode. |

PIM-BIDIR is designed to be used for many-to-many applications within individual PIM domains. Multicast groups in bidirectional mode can scale to an arbitrary number of sources without incurring overhead due to the number of sources. PIM-BIDIR is derived from the mechanisms of PIM-sparse mode (PIM-SM) and shares many SPT operations. PIM-BIDIR also has unconditional forwarding of source traffic toward the RP upstream on the shared tree, but no registering process for sources as in PIM-SM. These modifications are necessary and sufficient to allow forwarding of traffic in all routers solely based on the (*, G) multicast routing entries. This feature eliminates any source-specific state and allows scaling capability to an arbitrary number of sources.

The traditional PIM protocols (dense-mode and sparse-mode) provided two models for forwarding multicast packets, source trees and shared trees. Source trees are rooted at the source of the traffic while shared trees are rooted at the rendezvous point. Source trees achieve the optimum path between each receiver and the source at the expense of additional routing information: an (S,G) routing entry per source in the multicast routing table. The shared tree provides a single distribution tree for all of the active sources. This means that traffic from different sources traverse the same distribution tree to reach the interested receivers, therefore reducing the amount of routing state in the network. This shared tree needs to be rooted somewhere, and the location of this root is the rendezvous point. PIM BIDIR uses shared trees as their main forwarding mechanism.

The algorithm to elect the designated forwarder is straightforward, all the PIM neighbors in a subnet advertise their unicast route to the rendezvous point and the router with the best route is elected. This effectively builds a shortest path between every subnet and the rendezvous point without consuming any multicast routing state (no (S,G) entries are generated). The designated forwarder election mechanism expects all of the PIM neighbors to be BIDIR enabled. In the case where one of more of the neighbors is not a BIDIR capable router, the election fails and BIDIR is disabled in that subnet.

PIM Shared Tree and Source Tree (Shortest Path Tree)

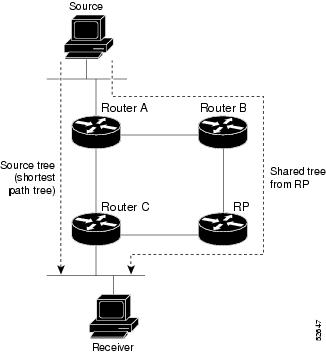

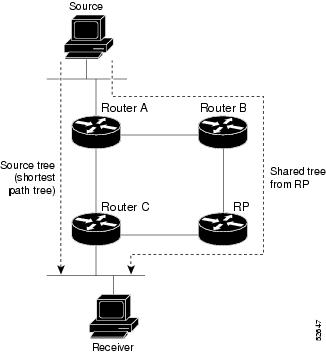

In PIM-SM, the rendezvous point (RP) is used to bridge sources sending data to a particular group with receivers sending joins for that group. In the initial setup of state, interested receivers receive data from senders to the group across a single data distribution tree rooted at the RP. This type of distribution tree is called a shared tree or rendezvous point tree (RPT) as illustrated in Figure 1 . Data from senders is delivered to the RP for distribution to group members joined to the shared tree.

Unless the spt-threshold infinity command is configured, this initial state gives way as soon as traffic is received on the leaf routers (designated router closest to the host receivers). When the leaf router receives traffic from the RP on the RPT, the router initiates a switch to a data distribution tree rooted at the source sending traffic. This type of distribution tree is called a shortest path tree or source tree. By default, the Cisco IOS XR Software switches to a source tree when it receives the first data packet from a source.

The following process describes the move from shared tree to source tree in more detail:

-

Receiver joins a group; leaf Router C sends a join message toward RP.

-

RP puts link to Router C in its outgoing interface list.

-

Source sends data; Router A encapsulates data in Register and sends it to RP.

-

RP forwards data down the shared tree to Router C and sends a join message toward Source. At this point, data may arrive twice at the RP, once encapsulated and once natively.

-

When data arrives natively (unencapsulated) at RP, RP sends a register-stop message to Router A.

-

By default, receipt of the first data packet prompts Router C to send a join message toward Source.

-

When Router C receives data on (S,G), it sends a prune message for Source up the shared tree.

-

RP deletes the link to Router C from outgoing interface of (S,G). RP triggers a prune message toward Source.

Join and prune messages are sent for sources and RPs. They are sent hop by hop and are processed by each PIM router along the path to the source or RP. Register and register-stop messages are not sent hop by hop. They are exchanged using direct unicast communication between the designated router that is directly connected to a source and the RP for the group.

Tip | The spt-threshold infinity command lets you configure the router so that it never switches to the shortest path tree (SPT). |

Multicast-Intact

The multicast-intact feature provides the ability to run multicast routing (PIM) when Interior Gateway Protocol (IGP) shortcuts are configured and active on the router. Both Open Shortest Path First, version 2 (OSPFv2), and Intermediate System-to-Intermediate System (IS-IS) support the multicast-intact feature. Multiprotocol Label Switching Traffic Engineering (MPLS-TE) and IP multicast coexistence is supported in Cisco IOS XR Software by using the mpls traffic-eng multicast-intact IS-IS or OSPF router command. See Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router for information on configuring multicast intact using IS-IS and OSPF commands.

You can enable multicast-intact in the IGP when multicast routing protocols (PIM) are configured and IGP shortcuts are configured on the router. IGP shortcuts are MPLS tunnels that are exposed to IGP. The IGPs route the IP traffic over these tunnels to destinations that are downstream from the egress router of the tunnel (from an SPF perspective). PIM cannot use IGP shortcuts for propagating PIM joins because reverse path forwarding (RPF) cannot work across a unidirectional tunnel.

When you enable multicast-intact on an IGP, the IGP publishes a parallel or alternate set of equal-cost next-hops for use by PIM. These next-hops are called mcast-intact next-hops. The mcast-intact next-hops have the following attributes:

-

They are guaranteed not to contain any IGP shortcuts.

-

They are not used for unicast routing but are used only by PIM to look up an IPv4 next hop to a PIM source.

-

They are not published to the Forwarding Information Base (FIB).

-

When multicast-intact is enabled on an IGP, all IPv4 destinations that were learned through link-state advertisements are published with a set equal-cost mcast-intact next-hops to the RIB. This attribute applies even when the native next-hops have no IGP shortcuts.

-

In IS-IS, the max-paths limit is applied by counting both the native and mcast-intact next-hops together. (In OSPFv2, the behavior is slightly different.)

Designated Routers

Cisco routers use PIM-SM to forward multicast traffic and follow an election process to select a designated router (DR) when there is more than one router on a LAN segment.

The designated router is responsible for sending PIM register and PIM join and prune messages toward the RP to inform it about host group membership.

If there are multiple PIM-SM routers on a LAN, a designated router must be elected to avoid duplicating multicast traffic for connected hosts. The PIM router with the highest IP address becomes the DR for the LAN unless you choose to force the DR election by use of the dr-priority command. The DR priority option allows you to specify the DR priority of each router on the LAN segment (default priority = 1) so that the router with the highest priority is elected as the DR. If all routers on the LAN segment have the same priority, the highest IP address is again used as the tiebreaker.

Figure 1 illustrates what happens on a multiaccess segment. Router A (10.0.0.253) and Router B (10.0.0.251) are connected to a common multiaccess Ethernet segment with Host A (10.0.0.1) as an active receiver for Group A. As the Explicit Join model is used, only Router A, operating as the DR, sends joins to the RP to construct the shared tree for Group A. If Router B were also permitted to send (*, G) joins to the RP, parallel paths would be created and Host A would receive duplicate multicast traffic. When Host A begins to source multicast traffic to the group, the DR’s responsibility is to send register messages to the RP. Again, if both routers were assigned the responsibility, the RP would receive duplicate multicast packets.

If the DR fails, the PIM-SM provides a way to detect the failure of Router A and to elect a failover DR. If the DR (Router A) were to become inoperable, Router B would detect this situation when its neighbor adjacency with Router A timed out. Because Router B has been hearing IGMP membership reports from Host A, it already has IGMP state for Group A on this interface and immediately sends a join to the RP when it becomes the new DR. This step reestablishes traffic flow down a new branch of the shared tree using Router B. Additionally, if Host A were sourcing traffic, Router B would initiate a new register process immediately after receiving the next multicast packet from Host A. This action would trigger the RP to join the SPT to Host A, using a new branch through Router B.

Tip | Two PIM routers are neighbors if there is a direct connection between them. To display your PIM neighbors, use the show pim neighbor command in EXEC mode. |

Note | DR election process is required only on multiaccess LANs. The last-hop router directly connected to the host is the DR. |

Rendezvous Points

When PIM is configured in sparse mode, you must choose one or more routers to operate as a rendezvous point (RP). A rendezvous point is a single common root placed at a chosen point of a shared distribution tree, as illustrated in Figure 1. A rendezvous point can be either configured statically in each box or learned through a dynamic mechanism.

PIM DRs forward data from directly connected multicast sources to the rendezvous point for distribution down the shared tree. Data is forwarded to the rendezvous point in one of two ways:

-

Encapsulated in register packets and unicast directly to the rendezvous point by the first-hop router operating as the DR.

-

Multicast forwarded by the RPF forwarding algorithm, described in the Reverse-Path Forwarding, if the rendezvous point has itself joined the source tree.

The rendezvous point address is used by first-hop routers to send PIM register messages on behalf of a host sending a packet to the group. The rendezvous point address is also used by last-hop routers to send PIM join and prune messages to the rendezvous point to inform it about group membership. You must configure the rendezvous point address on all routers (including the rendezvous point router).

A PIM router can be a rendezvous point for more than one group. Only one rendezvous point address can be used at a time within a PIM domain. The conditions specified by the access list determine for which groups the router is a rendezvous point.

You can either manually configure a PIM router to function as a rendezvous point or allow the rendezvous point to learn group-to-RP mappings automatically by configuring Auto-RP or BSR. (For more information, see the Auto-RP section that follows and PIM Bootstrap Router.)

Auto-RP

Automatic route processing (Auto-RP) is a feature that automates the distribution of group-to-RP mappings in a PIM network. This feature has these benefits:

-

It is easy to use multiple RPs within a network to serve different group ranges.

-

It allows load splitting among different RPs.

-

It facilitates the arrangement of RPs according to the location of group participants.

-

It avoids inconsistent, manual RP configurations that might cause connectivity problems.

Multiple RPs can be used to serve different group ranges or to serve as hot backups for each other. To ensure that Auto-RP functions, configure routers as candidate RPs so that they can announce their interest in operating as an RP for certain group ranges. Additionally, a router must be designated as an RP-mapping agent that receives the RP-announcement messages from the candidate RPs, and arbitrates conflicts. The RP-mapping agent sends the consistent group-to-RP mappings to all remaining routers. Thus, all routers automatically determine which RP to use for the groups they support.

Tip | By default, if a given group address is covered by group-to-RP mappings from both static RP configuration, and is discovered using Auto-RP or PIM BSR, the Auto-RP or PIM BSR range is preferred. To override the default, and use only the RP mapping, use the rp-address override keyword. |

Note | If you configure PIM in sparse mode and do not configure Auto-RP, you must statically configure an RP as described in the Configuring a Static RP and Allowing Backward Compatibility. When router interfaces are configured in sparse mode, Auto-RP can still be used if all routers are configured with a static RP address for the Auto-RP groups. |

Note | Auto-RP is not supported on VRF interfaces. Auto-RP Lite allows you to configure auto-RP on the CE router. It allows the PE router that has the VRF interface to relay auto-RP discovery, and announce messages across the core and eventually to the remote CE. Auto-RP is supported in only the IPv4 address family. |

PIM Bootstrap Router

The PIM bootstrap router (BSR) provides a fault-tolerant, automated RP discovery and distribution mechanism that simplifies the Auto-RP process. This feature is enabled by default allowing routers to dynamically learn the group-to-RP mappings.

PIM uses the BSR to discover and announce RP-set information for each group prefix to all the routers in a PIM domain. This is the same function accomplished by Auto-RP, but the BSR is part of the PIM Version 2 specification. The BSR mechanism interoperates with Auto-RP on Cisco routers.

To avoid a single point of failure, you can configure several candidate BSRs in a PIM domain. A BSR is elected among the candidate BSRs automatically. Candidates use bootstrap messages to discover which BSR has the highest priority. The candidate with the highest priority sends an announcement to all PIM routers in the PIM domain that it is the BSR.

Routers that are configured as candidate RPs unicast to the BSR the group range for which they are responsible. The BSR includes this information in its bootstrap messages and disseminates it to all PIM routers in the domain. Based on this information, all routers are able to map multicast groups to specific RPs. As long as a router is receiving the bootstrap message, it has a current RP map.

Reverse-Path Forwarding

Reverse-path forwarding (RPF) is an algorithm used for forwarding multicast datagrams. It functions as follows:

-

If a router receives a datagram on an interface it uses to send unicast packets to the source, the packet has arrived on the RPF interface.

-

If the packet arrives on the RPF interface, a router forwards the packet out the interfaces present in the outgoing interface list of a multicast routing table entry.

-

If the packet does not arrive on the RPF interface, the packet is silently discarded to prevent loops.

PIM uses both source trees and RP-rooted shared trees to forward datagrams; the RPF check is performed differently for each, as follows:

-

If a PIM router has an (S,G) entry present in the multicast routing table (a source-tree state), the router performs the RPF check against the IP address of the source for the multicast packet.

-

If a PIM router has no explicit source-tree state, this is considered a shared-tree state. The router performs the RPF check on the address of the RP, which is known when members join the group.

Sparse-mode PIM uses the RPF lookup function to determine where it needs to send joins and prunes. (S,G) joins (which are source-tree states) are sent toward the source. (*,G) joins (which are shared-tree states) are sent toward the RP.

Multicast VPN

Multicast VPN (MVPN) provides the ability to dynamically provide multicast support over MPLS networks. MVPN introduces an additional set of protocols and procedures that help enable a provider to support multicast traffic in a VPN.

Note | PIM-Bidir is not supported on MVPN. |

-

Rosen GRE (native): MVPN uses GRE with unique multicast distribution tree (MDT) forwarding to enable scalability of native IP Multicast in the core network. MVPN introduces multicast routing information to the VPN routing and forwarding table (VRF), creating a Multicast VRF. In Rosen GRE, the MCAST customer packets (c-packets) are encapsulated into the provider MCAST packets (p-packets), so that the PIM protocol is enabled in the provider core, and mrib/mfib is used for forwarding p-packets in the core.

-

MLDP ones (Rosen, partition): MVPN allows a service provider to configure and support multicast traffic in an MPLS VPN environment. This type supports routing and forwarding of multicast packets for each individual VPN routing and forwarding (VRF) instance, and it also provides a mechanism to transport VPN multicast packets across the service provider backbone. In the MLDP case, the regular label switch path forwarding is used, so core does not need to run PIM protocol. In this scenario, the c-packets are encapsulated in the MPLS labels and forwarding is based on the MPLS Label Switched Paths (LSPs) ,similar to the unicast case.

In both the above types, the MVPN service allows you to build a Protocol Independent Multicast (PIM) domain that has sources and receivers located in different sites.

To provide Layer 3 multicast services to customers with multiple distributed sites, service providers look for a secure and scalable mechanism to transmit customer multicast traffic across the provider network. Multicast VPN (MVPN) provides such services over a shared service provider backbone, using native multicast technology similar to BGP/MPLS VPN.

MVPN emulates MPLS VPN technology in its adoption of the multicast domain (MD) concept, in which provider edge (PE) routers establish virtual PIM neighbor connections with other PE routers that are connected to the same customer VPN. These PE routers thereby form a secure, virtual multicast domain over the provider network. Multicast traffic is then transmitted across the core network from one site to another, as if the traffic were going through a dedicated provider network.

Multi-instance BGP is supported on multicast and MVPN. Multicast-related SAFIs can be configured on multiple BGP instances.

- Multicast VPN Routing and Forwarding

- Multicast Distribution Tree Tunnels

- InterAS Support on Multicast VPN

- BGP Requirements

Multicast VPN Routing and Forwarding

Dedicated multicast routing and forwarding tables are created for each VPN to separate traffic in one VPN from traffic in another.

The VPN-specific multicast routing and forwarding database is referred to as MVRF. On a PE router, an MVRF is created when multicast is enabled for a VRF. Protocol Independent Multicast (PIM), and Internet Group Management Protocol (IGMP) protocols run in the context of MVRF, and all routes created by an MVRF protocol instance are associated with the corresponding MVRF. In addition to VRFs, which hold VPN-specific protocol states, a PE router always has a global VRF instance, containing all routing and forwarding information for the provider network.

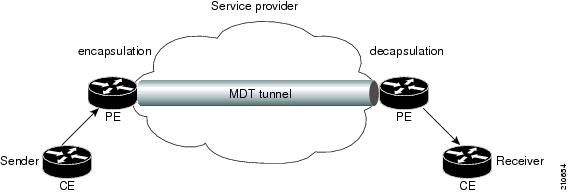

Multicast Distribution Tree Tunnels

The multicast distribution tree (MDT) can span multiple customer sites through provider networks, allowing traffic to flow from one source to multiple receivers. For MLDP, the MDT tunnel trees are called as Labeled MDT (LMDT).

Secure data transmission of multicast packets sent from the customer edge (CE) router at the ingress PE router is achieved by encapsulating the packets in a provider header and transmitting the packets across the core. At the egress PE router, the encapsulated packets are decapsulated and then sent to the CE receiving routers.

Multicast distribution tree (MDT) tunnels are point-to-multipoint. A MDT tunnel interface is an interface that MVRF uses to access the multicast domain. It can be deemed as a passage that connects an MVRF and the global MVRF. Packets sent to an MDT tunnel interface are received by multiple receiving routers. Packets sent to an MDT tunnel interface are encapsulated, and packets received from a MDT tunnel interface are decapsulated.

Encapsulating multicast packets in a provider header allows PE routers to be kept unaware of the packets’ origin—all VPN packets passing through the provider network are viewed as native multicast packets and are routed based on the routing information in the core network. To support MVPN, PE routers only need to support native multicast routing.

MVPN also supports optimized VPN traffic forwarding for high-bandwidth applications that have sparsely distributed receivers. A dedicated multicast group can be used to encapsulate packets from a specific source, and an optimized MDT can be created to send traffic only to PE routers connected to interested receivers. This is referred to as data MDT.

InterAS Support on Multicast VPN

The Multicast VPN Inter-AS Support feature enables service providers to provide multicast connectivity to VPN sites that span across multiple autonomous systems. This feature was added to MLDP profile that enables Multicast Distribution Trees (MDTs), used for Multicast VPNs (MVPNs), to span multiple autonomous systems.

There are two types of MVPN inter-AS deployment scenarios:

-

Single-Provider Inter-AS—A service provider whose internal network consists of multiple autonomous systems.

-

Intra-Provider Inter-AS—Multiple service providers that need to coordinate their networks to provide inter-AS support.

To establish a Multicast VPN between two autonomous systems, a MDT-default tunnel must be setup between the two PE routers. The PE routers accomplish this by joining the configured MDT-default group. This MDT-default group is configured on the PE router and is unique for each VPN. The PIM sends the join based on the mode of the groups, which can be PIM SSM, bidir, or sparse mode.

Note | PIM-Bidir is not supported on MVPN. |

Benefits of MVPN Inter-AS Support

The MVPN Inter-AS Support feature provides these benefits to service providers:

InterAS Option A

InterAS Option A is the basic Multicast VPN configuration option. In this option, the PE router partially plays the Autonomous System Border Router (ASBR) role in each Autonomous System (AS). Such a PE router in each AS is directly connected through multiple VRF bearing subinterfaces. MPLS label distribution protocol need not run between these InterAS peering PE routers. However, an IGP or BGP protocol can be used for route distribution under the VRF.

The Option A model assumes direct connectivity between PE routers of different autonomous systems. The PE routers are attached by multiple physical or logical interfaces, each of which is associated with a given VPN (through a VRF instance). Each PE router, therefore, treats the adjacent PE router like a customer edge (CE) router. The standard Layer 3 MPLS VPN mechanisms are used for route redistribution with each autonomous system; that is, the PEs use exterior BGP (eBGP) to distribute unlabeled IPv4 addresses to each other.

Note | Option A allows service providers to isolate each autonomous system from the other. This provides better control over routing exchanges and security between the two networks. However, Option A is considered the least scalable of all the inter-AS connectivity options. |

BGP Requirements

PE routers are the only routers that need to be MVPN-aware and able to signal remote PEs with information regarding the MVPN. It is fundamental that all PE routers have a BGP relationship with each other, either directly or through a route reflector, because the PE routers use the BGP peering address information to derive the RPF PE peer within a given VRF.

PIM-SSM MDT tunnels cannot be set up without a configured BGP MDT address-family, because you establish the tunnels, using the BGP connector attribute.

See the Implementing BGP on Cisco IOS XR Software module of the Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router for information on BGP support for Multicast VPN.

Multitopology Routing

Multitopology routing allows you to manipulate network traffic flow when desirable (for example, to broadcast duplicate video streams) to flow over non-overlapping paths.

At the core of multitopology routing technology is router space infrastructure (RSI). RSI manages the global configuration of routing tables. These tables are hierarchically organized into VRF tables under logical routers. By default, RSI creates tables for unicast and multicast for both IPv4 and IPv6 under the default VRF. Using multitopology routing, you can configure named topologies for the default VRF.

PIM uses a routing policy that supports matching on source or group address to select the topology in which to look up the reverse-path forwarding (RPF) path to the source. If you do not configure a policy, the existing behavior (to select a default table) remains in force.

Currently, IS-IS and PIM routing protocols alone support multitopology-enabled network.

For information on how to configure multitopology routing, see Configuring Multitopology Routing.

Multicast VPN Extranet Routing

Multicast VPN (MVPN) extranet routing lets service providers distribute IP multicast content from one enterprise site to another across a multicast VRF. In other words, this feature provides capability to seamlessly hop VRF boundaries to distribute multicast content end to end.

Unicast extranet can be achieved simply by configuring matching route targets across VRFs. However, multicast extranet requires such configuration to resolve route lookups across VRFs in addition to the following:

-

Maintain multicast topology maps across VRFs.

-

Maintain multicast distribution trees to forward traffic across VRFs.

- Information About Extranets

- Information About the Extranet MVPN Routing Topology

- RPF Policies in an Extranet

Information About Extranets

An extranet can be viewed as part of an enterprise intranet that is extended to users outside the enterprise. A VPN is used as a way to do business with other enterprises and with customers, such as selling products and maintaining strong business partnerships. An extranet is a VPN that connects to one or more corporate sites to external business partners or suppliers to securely share a designated part of the enterprise’s business information or operations.

MVPN extranet routing can be used to solve such business problems as:

-

Inefficient content distribution between enterprises.

-

Inefficient content distribution from service providers or content providers to their enterprise VPN customers.

MVPN extranet routing provides support for IPv4 and IPv6 address family.

An extranet network requires the PE routers to pass traffic across VRFs (labeled “P” in Figure 1). Extranet networks can run either IPv4 or IPv6, but the core network always runs only IPv4 active multicast.

Note | Multicast extranet routing is not supported on BVI interfaces. |

Extranet Components

MVRF—Multicast VPN routing and forwarding (VRF) instance. An MVRF is a multicast-enabled VRF. A VRF consists of an IP routing table, a derived forwarding table, a set of interfaces that use the forwarding table, and a set of rules and routing protocols that determine what goes into the forwarding table. In general, a VRF includes the routing information that defines a customer VPN site that is attached to a provider edge (PE) router.

Source MVRF—An MVRF that can reach the source through a directly connected customer edge (CE) router.

Receiver MVRF—An MVRF to which receivers are connected through one or more CE devices.

Source PE—A PE router that has a multicast source behind a directly connected CE router.

Receiver PE—A PE router that has one or more interested receivers behind a directly connected CE router.

Information About the Extranet MVPN Routing Topology

In unicast routing of peer-to-peer VPNs, BGP routing protocol is used to advertise VPN IPv4 and IPv6 customer routes between provider edge (PE) routers. However, in an MVPN extranet peer-to-peer network, PIM RPF is used to determine whether the RPF next hop is in the same or a different VRF and whether that source VRF is local or remote to the PE.

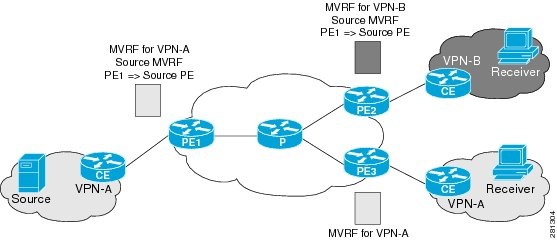

Source MVRF on a Receiver PE Router

To provide extranet MVPN services to enterprise VPN customers by configuring a source MVRF on a receiver PE router, you would complete the following procedure:

-

On a receiver PE router that has one or more interested receivers in an extranet site behind a directly connected CE router, configure an MVRF that has the same default MDT group as the site connected to the multicast source.

-

On the receiver PE router, configure the same unicast routing policy to import routes from the source MVRF to the receiver MVRF.

If the originating MVRF of the RPF next hop is local (source MVRF at receiver PE router), the join state of the receiver VRFs propagates over the core by using the default multicast distribution tree (MDT) of the source VRF. Figure 1 illustrates the flow of multicast traffic in an extranet MVPN topology where the source MVRF is configured on a receiver PE router (source at receiver MVRF topology). An MVRF is configured for VPN-A and VPN-B on PE2, a receiver PE router. A multicast source behind PE1, the source PE router, is sending out a multicast stream to the MVRF for VPN-A, and there are interested receivers behind PE2, the receiver PE router for VPN-B, and also behind PE3, the receiver PE router for VPN-A. After PE1 receives the packets from the source in the MVRF for VPN-A, it replicates and forwards the packets to PE2 and PE3. The packets received at PE2 in VPN-A are decapsulated and replicated to receivers in VPN-B.

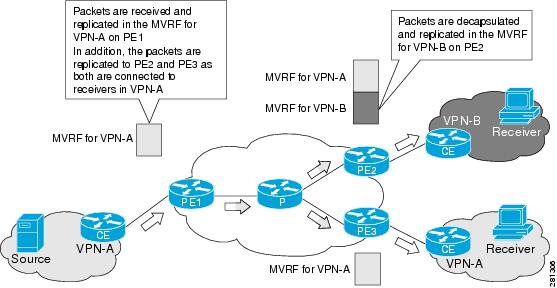

Receiver MVRF on the Source PE Router

To provide extranet MVPN services to enterprise VPN customers by configuring the receiver MVRF on the source PE router, complete the following procedure:

-

For each extranet site, you would configure an additional MVRF on the source PE router, which has the same default MDT group as the receiver MVRF, if the MVRF is not already configured on the source PE.

-

In the receiver MVRF configuration, you would configure the same unicast routing policy on the source and receiver PE routers to import routes from the source MVRF to the receiver MVRF.

If the originating MVRF of the RPF next-hop is remote (receiver MVRF on the source PE router), then the join state of receiver VRFs propagates over the core through the MDT of each receiver.

Figure 2 illustrates the flow of multicast traffic in an extranet MVPN topology where a receiver MVRF is configured on the source PE router. An MVRF is configured for VPN-A and VPN-B on PE1, the source PE router. A multicast source behind PE1 is sending out a multicast stream to the MVRF for VPN-A, and there are interested receivers behind PE2 and PE3, the receiver PE routers for VPN-B and VPN-A, respectively. After PE1 receives the packets from the source in the MVRF for VPN-A, it independently replicates and encapsulates the packets in the MVRF for VPN-A and VPN-B and forwards the packets. After receiving the packets from this source, PE2 and PE3 decapsulate and forward the packets to the respective MVRFs.

For more information, see also Configuring MVPN Extranet Routing and Configuring MVPN Extranet Routing: Example.

RPF Policies in an Extranet

RPF policies can be configured in receiver VRFs to bypass RPF lookup in receiver VRFs and statically propagate join states to specified source VRF. Such policies can be configured to pick a source VRF based on either multicast group range, multicast source range, or RP address.

For more information about configuration of RFP policies in extranets, see Configuring RPL Policies in Receiver VRFs to Propagate Joins to a Source VRF: Example and Configuring RPL Policies in Receiver VRFs on Source PE Routers to Propagate Joins to a Source VRF: Example.

MVPN Bidirectional Overview

MVPN Bidirectional (BIDIR) uses GRE or MLDP MS-PMSI (Partitioned MDT) to support BIDIR in MVPN. This functionality allows each RP-PE to announce a BGP AD route with a unique core group that is used by the RP-PE for its partitioned MDT traffic. The core group is also configured and each PE that has the RP mapping for the RP joins this group. All BIDIR sources are sending traffic to a group G along with encapsulated source traffic as its own address. The group G of the partitioned-MDT corresponds to the correct RP-PE (the PE via which the RP is reachable). Since there may be more than one partitioned-MDTs carrying traffic for group G, receiver-PEs need to implement a strict RPF check based on the core group address G of the partitioned-MDT.

The root of the partitioned-MDT (RP-PE) acts as the Designated Forwarders (DF) on its tree. BIDIR uses the concept of Designated Forwarders (DF) for forwarding. A single DF for a particular PIM-BIDIR group exists on every link within a PIM domain. DF is the router on the link with the best Unicast route to the RP.

Note | There are no interoperability issues even if there are PE routers in the network that do not support BIDIR. |

Multicast VPN Hub and Spoke Topology

Hub and spoke topology is an interconnection of two categories of sites — Hub sites and Spoke sites. The routes advertised across sites are such that they achieve connectivity in a restricted hub and spoke fashion. A spoke can interact only with its hub because the rest of the network (that is, other hubs and spokes) appears hidden behind the hub.

The hub and spoke topology can be adopted for these reasons:

-

Spoke sites of a VPN customer receives all their traffic from a central (or Hub) site hosting services such as server farms.

-

Spoke sites of a VPN customer requires all the connectivity between its spoke sites through a central site. This means that the hub site becomes a transit point for interspoke connectivity.

-

Spoke sites of a VPN customer do not need any connectivity between spoke sites. Hubs can send and receive traffic from all sites but spoke sites can send or receive traffic only to or from Hub sites.

Note | Both Cisco CRS and Cisco XR 12000 Series routers support MVPN v4 Hub-and-spoke implementation. But MVPNv6 Hub-and-spoke is not supported on Cisco CRS Router. |

Realizing the Hub and Spoke Topology

Hub and Spoke implementation leverages the infrastructure built for MVPN Extranet. The regular MVPN follows the model in which packets can flow from any site to the other sites. But Hub and Spoke MVPN will restrict traffic flows based on their subscription.

A site can be considered to be a geographic location with a group of CE routers and other devices, such as server farms, connected to PE routers by PE-CE links for VPN access. Either every site can be placed in a separate VRF, or multiple sites can be combined in one VRF on the PE router.

By provisioning every site in a separate VRF, you can simplify the unicast and multicast Hub and Spoke implementation. Such a configuration brings natural protection from traffic leakage - from one spoke site to another. Cisco IOS XR Software implementation of hub and spoke follows the one- site-to-one VRF model. Any site can be designated as either a hub or spoke site, based on how the import or export of routes is setup. Multiple hub and spoke sites can be collated on a given PE router.

Unicast Hub and Spoke connectivity is achieved by the spoke sites importing routes from only Hub sites, and Hub sites importing routes from all sites. As the spoke sites do not exchange routes, spoke to spoke site traffic cannot flow. If interspoke connectivity is required, hubs can choose to re-inject routes learned from one spoke site into other spoke site.

MVPN Hub and Spoke is achieved by separating core tunnels, for traffic sourced from hub sites, and spoke sites. MDT hub is the tunnel carrying traffic sourced from all Hub sites, and MDT spoke carries traffic sourced from all spoke sites. Such tunnel end-points are configured on all PEs participating in hub and spoke topology. If spoke sites do not host any multicast sources or RPs, provisioning of MDT Spoke can be completely avoided at all such routers.

Once these tunnels are provisioned, multicast traffic path will be policy routed in this manner:

-

Hub sites will send traffic to only MDT Hub.

-

Spoke sites will send traffic to only MDT Spoke.

-

Hub sites will receive traffic from both tunnels.

-

Spoke sites will receive traffic from only MDT Hub.

These rules ensure that hubs and spokes can send and receive traffic to or from each other, but direct spoke to spoke communication does not exist. If required, interspoke multicast can flow by turning around the traffic at Hub sites.

These enhancements are made to the Multicast Hub and Spoke topology in Cisco IOS XR Software Release 4.0:

-

Auto-RP and BSR are supported across VRFs that are connected through extranet. It is no longer restricted to using static RP only.

-

MP-BGP can publish matching import route-targets while passing prefix nexthop information to RIB.

-

Route policies can use extended community route targets instead of IP address ranges.

-

Support for extranet v4 data mdt was included so that data mdt in hub and spoke can be implemented.

Label Switched Multicast (LSM) Multicast Label Distribution Protocol (mLDP) based Multicast VPN (mVPN) Support

Label Switch Multicast (LSM) is MPLS technology extensions to support multicast using label encapsulation. Next-generation MVPN is based on Multicast Label Distribution Protocol (mLDP), which can be used to build P2MP and MP2MP LSPs through a MPLS network. These LSPs can be used for transporting both IPv4 and IPv6 multicast packets, either in the global table or VPN context.

- Benefits of LSM MLDP based MVPN

- Configuring MLDP MVPN

- P2MP and MP2MP Label Switched Paths

- Packet Flow in mLDP-based Multicast VPN

- Realizing a mLDP-based Multicast VPN

- Characteristics of mLDP Profiles

- Summary of Supported MVPN Profiles

- Configuration Process for MLDP MVPN (Intranet)

Benefits of LSM MLDP based MVPN

LSM provides these benefits when compared to GRE core tunnels that are currently used to transport customer traffic in the core:

Configuring MLDP MVPN

The MLDP MVPN configuration enables IPv4 multicast packet delivery using MPLS. This configuration uses MPLS labels to construct default and data Multicast Distribution Trees (MDTs). The MPLS replication is used as a forwarding mechanism in the core network. For MLDP MVPN configuration to work, ensure that the global MPLS MLDP configuration is enabled. To configure MVPN extranet support, configure the source multicast VPN Routing and Forwarding (mVRF) on the receiver Provider Edge (PE) router or configure the receiver mVRF on the source PE. MLDP MVPN is supported for both intranet and extranet.

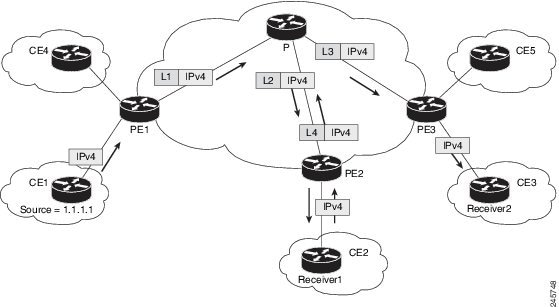

P2MP and MP2MP Label Switched Paths

mLDP is an application that sets up Multipoint Label Switched Paths (MP LSPs) in MPLS networks without requiring multicast routing protocols in the MPLS core. mLDP constructs the P2MP or MP2MP LSPs without interacting with or relying upon any other multicast tree construction protocol. Using LDP extensions for MP LSPs and Unicast IP routing, mLDP can setup MP LSPs. The two types of MP LSPs that can be setup are Point-to-Multipoint (P2MP) and Multipoint-to-Multipoint (MP2MP) type LSPs.

A P2MP LSP allows traffic from a single root (ingress node) to be delivered to a number of leaves (egress nodes), where each P2MP tree is uniquely identified with a 2-tuple (root node address, P2MP LSP identifier). A P2MP LSP consists of a single root node, zero or more transit nodes, and one or more leaf nodes, where typically root and leaf nodes are PEs and transit nodes are P routers. A P2MP LSP setup is receiver-driven and is signaled using mLDP P2MP FEC, where LSP identifier is represented by the MP Opaque Value element. MP Opaque Value carries information that is known to ingress LSRs and Leaf LSRs, but need not be interpreted by transit LSRs. There can be several MP LSPs rooted at a given ingress node, each with its own identifier.

A MP2MP LSP allows traffic from multiple ingress nodes to be delivered to multiple egress nodes, where a MP2MP tree is uniquely identified with a 2-tuple (root node address, MP2MP LSP identifier). For a MP2MP LSP, all egress nodes, except the sending node, receive a packet sent from an ingress node.

A MP2MP LSP is similar to a P2MP LSP, but each leaf node acts as both an ingress and egress node. To build an MP2MP LSP, you can setup a downstream path and an upstream path so that:

Packet Flow in mLDP-based Multicast VPN

For each packet coming in, MPLS creates multiple out-labels. Packets from the source network are replicated along the path to the receiver network. The CE1 router sends out the native IP multicast traffic. The PE1 router imposes a label on the incoming multicast packet and replicates the labeled packet towards the MPLS core network. When the packet reaches the core router (P), the packet is replicated with the appropriate labels for the MP2MP default MDT or the P2MP data MDT and transported to all the egress PEs. Once the packet reaches the egress PE, the label is removed and the IP multicast packet is replicated onto the VRF interface.

Realizing a mLDP-based Multicast VPN

There are different ways a Label Switched Path (LSP) built by mLDP can be used depending on the requirement and nature of application such as:

P2MP LSPs for global table transit Multicast using in-band signaling.

P2MP/MP2MP LSPs for MVPN based on MI-PMSI or Multidirectional Inclusive Provider Multicast Service Instance (Rosen Draft).

P2MP/MP2MP LSPs for MVPN based on MS-PMSI or Multidirectional Selective Provider Multicast Service Instance (Partitioned E-LAN).

The Cisco CRS Router performs the following important functions for the implementation of MLDP:

Characteristics of mLDP Profiles

The characteristics of various mLDP profiles are listed in this section.

Profile 1:Rosen-mLDP (with no BGP-AD)

These are the characteristics of this profile:

-

MP2MP mLDP trees are used in the core.

-

VPN-ID is used as the VRF distinguisher.

-

Configuration based on Default MDTs.

-

Same Default-MDT core-tree used for IPv4 and IPv6 traffic.

-

Data-MDT announcements sent by PIM (over Default-MDT).

-

The multicast traffic can either be SM or SSM.

-

Inter-AS Options A, B, and C are supported. Connector Attribute is announced in VPN-IP routes.

Profile 2:MS-PMSI-mLDP-MP2MP (No BGP-AD)

These are the characteristics of this profile:

Profile 3:Rosen-GRE with BGP-AD

These are the characteristics of this profile:

-

PIM-trees are used in the core. The data encapsulation method used is GRE.

-

SM, SSM , or Bidir used in the core.

-

Configuration is based on Default-MDTs.

-

The multicast traffic can be SM or SSM.

-

MoFRR in the core is supported.

-

Extranet, Hub and Spoke, CsC, Customer-RP-discovery (Embedded-RP, AutoRP and BSR) are supported.

-

Inter-AS Options A, B, and C are supported. VRF-Route-Import EC is announced in VPN-IP routes.

Profile 4: MS-PMSI-mLDP-MP2MP with BGP-AD

These are the characteristics of this profile:

Profile 5: MS-PMSI-mLDP-P2MP with BGP-AD

These are the characteristics of this profile:

Profile 6: VRF In-band Signaling (No BGP-AD)

These are the characteristics of this profile:

Profile 7: Global Inband Signalling

These are the characteristics of this profile:

-

P2MP mLDP inband tree in the core; no C-multicast Routing.

-

Customer traffic can be SM S,G or SSM.

-

Support for global table S,Gs on PEs.

For more information on MLDP implementation and OAM concepts, see the Cisco IOS XR MPLS Configuration Guide for the Cisco CRS Router

Profile 8: Global P2MP-TE

These are the characteristics of this profile:

Profile 9: Rosen-mLDP with BGP-AD

These are the characteristics of this profile:

-

Single MP2MP mLDP core-tree as the Default-MDT, with PIM C-multicast Routing.

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

-

RIB-Extranet, RPL-Extranet, Hub & Spoke supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

Profile 10 : VRF Static-P2MP-TE with BGP AD

These are the characteristics of this profile:

Profile 11 : Rosen-PIM/GRE with BGP C-multicast Routing

These are the characteristics of this profile:

- PIM-trees in the core, data encapsulation is GRE, BGP C-multicast Routing.

- Static config of (S,G) required on Head-end PE.

- For PIM-SSM core-tree and PIM-SM core-tree with no spt-infinity, all UMH options are supported.

-

For PIM-SM core-tree with spt-infinity case, only SFS (Highest PE or Hash-of-BGP-paths) is supported. Hash of installed-paths method is not supported.

-

Default and Data MDTs supported.

-

Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

-

Inter-AS Option A supported. Options B and C not supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 12 : Rosen-mLDP-P2MP with BGP C-multicast Routing

These are the characteristics of this profile:

- Full mesh of P2MP mLDP core-tree as the Default-MDT, with BGP C-multicast Routing.

- All UMH options supported.

- Default and Data MDT supported.

- Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

- RPL-Tail-end-Extranet supported.

- Inter-AS Option A, B and C supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 13 : Rosen-mLDP-MP2MP with BGP C-multicast Routing

These are the characteristics of this profile:

- Single MP2MP mLDP core-tree as the Default-MDT, with BGP C-multicast Routing.

-

Only SFS (Highest PE or Hash-of-BGP-paths) is supported. Hash of Installed-paths method is not supported.

-

Default and Data MDT supported.

- Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

- RIB-Tail-end-Extranet, RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

-

Inter-AS Option A, B and C supported. For Options B and C, Root has to be on a PE or the roor-address reachability has to be leaked across all autonomous systems.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 14 : MP2MP-mLDP-P2MP with BGP C-multicast Routing

These are the characteristics of this profile:

- Full mesh of P2MP mLDP core-tree as the Default-MDT, with BGP C-multicast Routing.

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

- RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A, B and C supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 15 : MP2MP-mLDP-MP2MP with BGP C-multicast Routing

These are the characteristics of this profile:

- Full mesh of MP2MP mLDP core-tree as the Default-MDT, with BGP C-multicast Routing.

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

- RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A, B and C supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 16 : Rosen-Static-P2MP-TE with BGP C-multicast Routing

These are the characteristics of this profile:

- Full mesh of Static-P2MP-TE core-trees, as the Default-MDT, with BGP C-multicast Routing.

-

All UMH options supported.

-

Support for Data MDT, Default MDT.

-

Customer traffic can be SM, SSM .

- RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A supported. Options B and C not supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Note | Whenever multicast stream crosses configured threshold on encap PE(Head PE), S-PMSI is announced. Core tunnel is static P2MP-TE tunnel configured under route-policy for the stream. Static P2MP-TE data mdt is implemented in such a way that it can work with dynamic data mdt, dynamic default mdtand default static P2MP. |

Profile 17: Rosen-mLDP-P2MP with BGP AD/PIM C-multicast Routing

These are the characteristics of this profile:

- Full mesh of P2MP mLDP core-tree as the Default-MDT, with PIM C-multicast Routing.

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir (separate-partitioned-mdt).

-

RPL-Extranet, Hub & Spoke supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A, B and C supported.

Profile 18 : Rosen-Static-P2MP-TE with BGP AD/PIM C-multicast Routing

These are the characteristics of this profile:

-

Full mesh of Static-P2MP-TE core-trees, as the Default-MDT, with PIM C-multicast Routing.

-

All UMH options supported.

-

Default MDT supported; Data MDT is not supported.

-

Customer traffic can be SM, SSM .

-

RPL-Extranet, Hub & Spoke supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A supported. Options B and C not supported.

Profile 20 : Rosen-P2MP-TE with BGP AD/PIM C-multicast Routing

These are the characteristics of this profile:

Profile 22 : Rosen-P2MP-TE with BGP C-multicast Routing

These are the characteristics of this profile:

- Dynamic P2MP-TE tunnels with BGP C-multicast Routing

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir.

- RIB-Tail-end-Extranet, RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A and C- supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Profile 24: Partitioned-P2MP-TE with BGP AD/PIM C-multicast Routing

These are the characteristics of this profile:

-

Dynamic P2MP-TE tunnels setup on demand, with PIM C-multicast Routing

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM , or Bidir.

- RPL-Extranet, Hub & Spoke supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A and C- supported.

Profile 26 : Partitioned-P2MP-TE with BGP C-multicast Routing

These are the characteristics of this profile:

-

Dynamic P2MP-TE tunnels with BGP C-multicast Routing

-

All UMH options supported.

-

Default and Data MDT supported.

-

Customer traffic can be SM, SSM.

- RIB-Tail-end-Extranet, RPL-Tail-end-Extranet supported.

-

Customer-RP-discovery (Embedded-RP, AutoRP & BSR) is supported.

- Inter-AS Option A and C- supported.

-

All PEs must have a unique BGP Route Distinguisher (RD) value. To configure BGP RD value, refer Cisco IOS XR Routing Configuration Guide for the Cisco CRS Router .

Configuration rules for profiles