Overview of Line Cards and PLIMs

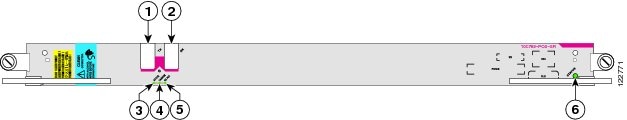

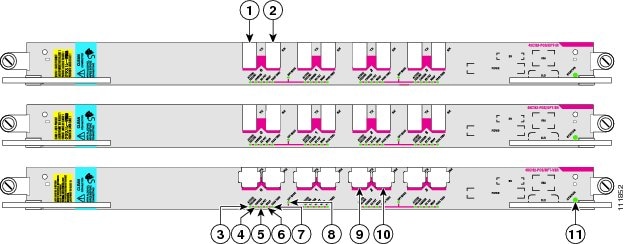

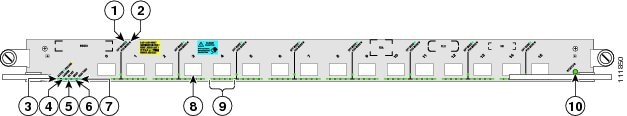

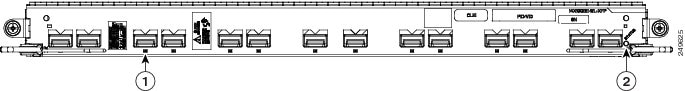

The MSC, FP, and LSP cards, also called line cards, are the Layer 3 forwarding engines in the CRS 16-slot routing system. Each MSC, FP, or LSP is paired with a corresponding physical layer interface module (PLIM, which is also sometimes called a line card) that contains the physical interfaces for the line card. An MSC, FP, or LSP can be paired with different types of PLIMs to provide a variety of packet interfaces.

- The MSC card is available in the following versions: CRS-MSC (end-of-sale), CRS-MSC-B, CRS-MSC-140G, and CRS-MSC-X/CRS-MSC-L (200G mode).

- The FP card is available in the following versions: CRS-FP140 and CRS-FP-X/CRS-FP-X-L (200G mode).

- The LSP card is: CRS-LSP.

Note: For CRS-X next-generation line cards and fabric cards, we recommend that you use a modular configuration power system in the chassis. See Modular Configuration Power Supply.

Note: See Hardware Compatibility for information about CRS fabric, MSC, and PLIM component compatibility.

Each line card and its associated PLIM implement Layer 1 through Layer 3 functionality, which consists of physical layer framers and optics, MAC framing and access control, and packet lookup and forwarding capability. The line cards deliver line-rate performance (up to 200 Gbps aggregate bandwidth). Additional services, such as Class of Service (CoS) processing, multicast, and Traffic Engineering (TE), along with statistics gathering, are also performed at the 200 Gbps line rate.

Line cards support several forwarding protocols, including IPv4, IPv6, and MPLS. Note that the route processor (RP) performs routing protocol functions and routing table distribution, while the MSC, FP, and LSP actually forward the data packets.

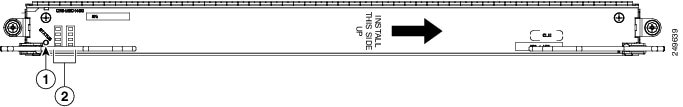

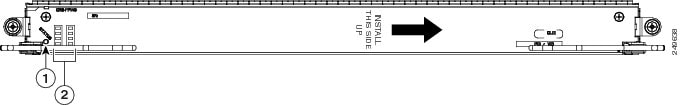

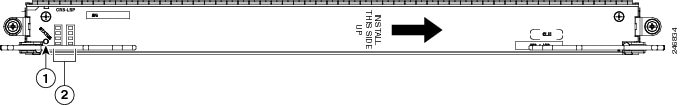

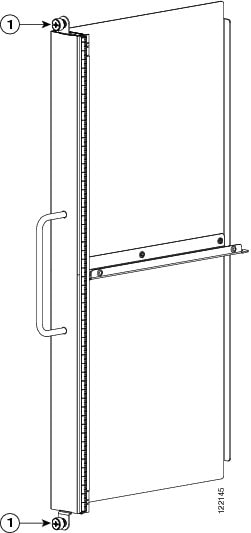

Line cards and PLIMs are installed on opposite sides of the line card chassis and mate through the line card chassis midplane. Each MSC/PLIM pair is installed in corresponding slots on opposite sides of the chassis.

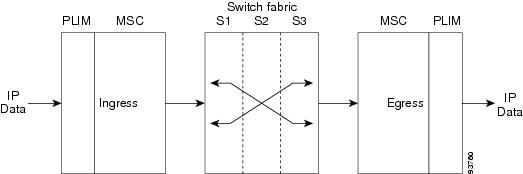

The following figure shows how the MSC takes ingress data through its associated PLIM and forwards the data to the switch fabric, where the data is switched to another MSC, FP, or LSP, which passes the egress data out through its associated PLIM.

Data streams are received from the line side (ingress) through optic interfaces on the PLIM. The data streams terminate on the PLIMs. Frames and packets are mapped based on the Layer 2 (L2) headers.

The line card converts packets to and from cells and provides a common interface between the routing system switch fabric and the assorted PLIMs. The PLIM provides the interface to user IP data. PLIMs perform Layer 1 and Layer 2 functions, such as framing, clock recovery, serialization and deserialization, channelization, and optical interfacing. Different PLIMs provide a range of optical interfaces, such as very-short-reach (VSR), intermediate-reach (IR), or long-reach (LR).

An eight-byte PLIM header is built for packets entering the fabric. The PLIM header includes the port number, the packet length, and some summarized layer-specific data. The L2 header is replaced with the PLIM header, and the packet is passed to the MSC for feature application and forwarding.

The transmit path is essentially the opposite of the receive path. Packets are received on the drop side (egress) from the line card through the chassis midplane. The Layer 2 header is rebuilt based on the eight-byte PLIM header received from the line card. The packet is then forwarded to the appropriate Layer 1 devices for framing and transmission on the fiber.
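The header replacement described above can be sketched in a few lines of Python. This is only an illustration: the document states that the PLIM header is eight bytes and carries the port number, the packet length, and summarized layer-specific data, but it does not give the field widths or ordering. The layout below (2-byte port, 2-byte length, 4-byte summary, big-endian) and the function names are assumptions made for the example.

```python
import struct

# Hypothetical layout (not from the hardware documentation):
# 2-byte port number, 2-byte packet length, 4-byte summary field, big-endian.
PLIM_HEADER = struct.Struct(">HHI")  # packs to exactly eight bytes


def replace_l2_header(frame: bytes, l2_len: int, port: int, summary: int = 0) -> bytes:
    """Strip the first l2_len bytes (the L2 header) and prepend a PLIM header."""
    payload = frame[l2_len:]
    return PLIM_HEADER.pack(port, len(payload), summary) + payload


def parse_plim_header(packet: bytes):
    """Return (port, length, summary, payload) from a PLIM-framed packet."""
    port, length, summary = PLIM_HEADER.unpack_from(packet)
    return port, length, summary, packet[PLIM_HEADER.size:]
```

For example, `replace_l2_header(ethernet_frame, 14, port=3)` would drop a 14-byte Ethernet header and tag the remaining payload as arriving on port 3; on the egress side, `parse_plim_header` recovers the fields needed to rebuild the Layer 2 header.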

A control interface on the PLIM is responsible for configuration, optic control and monitoring, performance monitoring, packet counts, error-packet counts, and low-level operations of the card, such as PLIM card recognition, power-up of the card, and voltage and temperature monitoring.

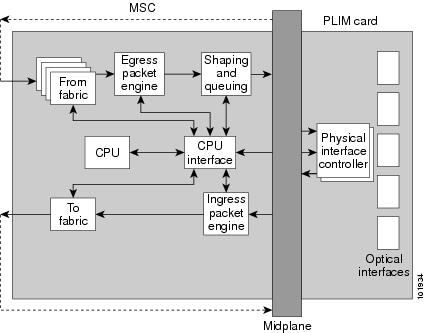

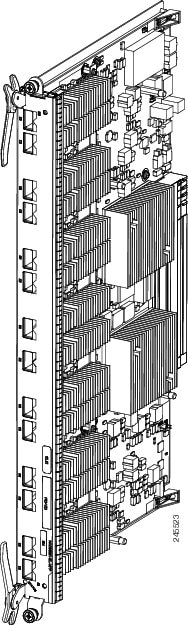

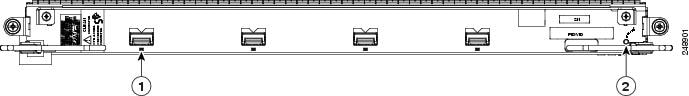

The following figure is a simple block diagram of the major components of an MSC and PLIM pair. These components are described in the sections that follow. This diagram also applies to the FP and LSP line cards.

PLIM Physical Interface Module on Ingress

As shown in the figure above, received data enters a PLIM from the physical optical interface. The data is routed to the physical interface controller, which provides the interface between the physical ports and the Layer 3 function of the line card. For receive (ingress) data, the physical interface controller performs the following functions:

- Multiplexes the data from the physical ports and transfers it to the ingress packet engine through the line card chassis midplane.

- Buffers incoming data, if necessary, to accommodate back-pressure from the packet engine.

- Provides Gigabit Ethernet specific functions, such as:

- VLAN accounting and filtering database

- Mapping of VLAN subports

MSC Ingress Packet Engine

The ingress packet engine performs packet processing on the received data. It makes the forwarding decision and places the data into a rate-shaping queue in the “to fabric” section. To perform Layer 3 forwarding, the packet engine performs the following functions:

- Classifies packets by protocol type and parses the appropriate headers on which to do the forwarding lookup

- Runs a lookup algorithm to determine the appropriate output interface to which to route the data

- Performs access control list filtering

- Maintains per-interface and per-protocol byte-and-packet statistics

- Maintains NetFlow accounting

- Implements a flexible dual-bucket policing mechanism
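The dual-bucket policing listed above can be illustrated with a small Python sketch. The document does not describe the hardware algorithm, so this example assumes a color-blind policer in the style of the two-rate three-color marker: one bucket for a committed rate and one for a peak rate. The class name, parameter names, and result labels are hypothetical.

```python
class DualBucketPolicer:
    """Two token buckets: committed (cir/cbs) and peak (pir/pbs).

    Rates are in bytes per second and burst sizes in bytes. Time is passed
    in explicitly so the behavior is deterministic.
    """

    def __init__(self, cir, cbs, pir, pbs):
        self.cir, self.cbs = cir, cbs
        self.pir, self.pbs = pir, pbs
        self.tc, self.tp = cbs, pbs  # both buckets start full
        self.last = 0.0

    def police(self, size, now):
        # Refill both buckets for the elapsed time, capped at the burst size.
        elapsed = now - self.last
        self.last = now
        self.tc = min(self.cbs, self.tc + elapsed * self.cir)
        self.tp = min(self.pbs, self.tp + elapsed * self.pir)

        if size > self.tp:
            return "violate"      # over the peak rate
        self.tp -= size
        if size > self.tc:
            return "exceed"       # within peak, over committed
        self.tc -= size
        return "conform"          # within both rates
```

A policer built as `DualBucketPolicer(cir=1000, cbs=1500, pir=2000, pbs=3000)` classifies three back-to-back 1500-byte packets at time 0 as conform, exceed, and violate in turn, because the committed bucket empties after the first packet and the peak bucket after the second.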

MSC To Fabric Section and Queuing

The “to fabric” section of the board takes packets from the ingress packet engine, segments them into fabric cells, and distributes (sprays) the cells into the eight planes of the switch fabric. Because each MSC has multiple connections per plane, the “to fabric” section distributes the cells over the links within a fabric plane. The chassis midplane provides the path between the “to fabric” section and the switch fabric (as shown by the dotted line in Figure 2).

Queuing in the “to fabric” section is organized in two levels:

- The first level performs ingress shaping and queuing, with a rate-shaping set of queues that are normally used for input rate-shaping (that is, per input port or per subinterface within an input port), but can also be used for other purposes, such as to shape high-priority traffic.

- The second level consists of a set of destination queues where each destination queue maps to a destination line card, plus a multicast destination.

Note that the flexible queues are programmable through the Cisco IOS XR software.
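The segmentation and spraying behavior described in this section can be sketched as follows. The eight fabric planes come from the text; the cell payload size, the number of links per plane, and the simple round-robin order are assumptions chosen for illustration and are not taken from the hardware specification.

```python
NUM_PLANES = 8       # from the text: eight switch fabric planes
CELL_PAYLOAD = 128   # assumed payload bytes per cell (illustrative only)


def segment(packet: bytes, cell_payload: int = CELL_PAYLOAD):
    """Split a packet into fixed-size cell payloads (the last may be short)."""
    return [packet[i:i + cell_payload] for i in range(0, len(packet), cell_payload)]


class Sprayer:
    """Round-robin cells over planes, then over the links within a plane."""

    def __init__(self, planes: int = NUM_PLANES, links_per_plane: int = 2):
        self.planes = planes
        self.links = links_per_plane  # assumed value, hypothetical
        self._next = 0                # running cell counter across packets

    def spray(self, packet: bytes):
        out = []
        for seq, cell in enumerate(segment(packet)):
            plane = self._next % self.planes
            link = (self._next // self.planes) % self.links
            self._next += 1
            out.append((plane, link, seq, cell))
        return out
```

Each tuple carries a sequence number so that a receiver can put the cells back in order; the real cell format and reassembly mechanism are not described in this document.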

MSC From Fabric Section

The “from fabric” section of the board receives cells from the switch fabric and reassembles the cells into IP packets. The section then places the IP packets in one of its 8K egress queues, which helps the section adjust for the speed variations between the switch fabric and the egress packet engine. Egress queues are serviced using a modified deficit round-robin (MDRR) algorithm. The dotted line in Figure 2 indicates the path from the midplane to the “from fabric” section.
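As a rough illustration of how MDRR-style servicing works, the sketch below implements classic deficit round-robin with one additional strict-priority queue. This is a simplified model under stated assumptions, not the scheduler used on the card: the quantum values, the single priority queue, and all names are hypothetical, and the document does not specify how the 8K egress queues are weighted.

```python
from collections import deque


class MdrrScheduler:
    """Deficit round-robin over several queues plus one strict-priority queue."""

    def __init__(self, quanta):
        self.quanta = list(quanta)                  # bytes credited per round
        self.queues = [deque() for _ in self.quanta]
        self.deficit = [0] * len(self.quanta)
        self.priority = deque()

    def enqueue(self, qid, pkt_len):
        self.queues[qid].append(pkt_len)

    def enqueue_priority(self, pkt_len):
        self.priority.append(pkt_len)

    def service_round(self):
        """Run one round and return the (queue, length) pairs that were sent."""
        sent = []
        while self.priority:                        # priority traffic goes first
            sent.append(("prio", self.priority.popleft()))
        for qid, q in enumerate(self.queues):
            if not q:
                self.deficit[qid] = 0               # idle queues keep no credit
                continue
            self.deficit[qid] += self.quanta[qid]
            while q and q[0] <= self.deficit[qid]:
                length = q.popleft()
                self.deficit[qid] -= length
                sent.append((qid, length))
            if not q:
                self.deficit[qid] = 0
        return sent
```

A queue with a larger quantum drains more bytes per round, which is how relative bandwidth shares are expressed; unused credit carries over only while the queue stays backlogged.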

MSC Egress Packet Engine

The transmit (egress) packet engine performs a lookup on the IP address or MPLS label of the egress packet based on the information in the ingress MSC buffer header and on additional information in its internal tables. It also performs transmit-side features such as output committed access rate (CAR), access lists, DiffServ policing, and MAC layer encapsulation.

Shaping and Queuing Function

The transmit packet engine sends the egress packet to the shaping and queuing function (shape and regulate queues function), which contains the output queues. Here the queues are mapped to ports and classes of service (CoS) within a port. Random early-detection algorithms perform active queue management to maintain low average queue occupancies and delays.
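The random early-detection behavior mentioned above can be sketched as a drop probability that rises linearly between two thresholds of the average queue depth. This is a minimal model of basic RED; the thresholds, the averaging weight, and the class and method names are assumptions, and refinements such as the count-based probability adjustment are omitted. The document does not state which RED variant or parameters the card uses.

```python
import random


class RedQueue:
    """Simplified random early detection on an averaged queue depth."""

    def __init__(self, min_th, max_th, max_p, weight=0.002, rng=None):
        self.min_th, self.max_th, self.max_p = min_th, max_th, max_p
        self.weight = weight              # EWMA weight for the average depth
        self.rng = rng or random.Random()
        self.avg = 0.0
        self.depth = 0

    def drop_probability(self):
        if self.avg < self.min_th:
            return 0.0                    # short queue: never drop
        if self.avg >= self.max_th:
            return 1.0                    # long queue: always drop
        span = self.max_th - self.min_th
        return self.max_p * (self.avg - self.min_th) / span

    def offer(self):
        """Return True if the arriving packet is queued, False if dropped."""
        self.avg = (1 - self.weight) * self.avg + self.weight * self.depth
        if self.rng.random() < self.drop_probability():
            return False
        self.depth += 1
        return True

    def dequeue(self):
        if self.depth:
            self.depth -= 1
```

Dropping a small fraction of packets early, before the queue is full, is what keeps the average occupancy and delay low, as the paragraph above describes.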

PLIM Physical Interface Section on Egress

On the transmit (egress) path, the physical interface controller provides the interface between the line card and the physical ports on the PLIM. For the egress path, the controller performs the following functions:

- Support for the physical ports. Each physical interface controller can support up to four physical ports and there can be up to four physical interface controllers on a PLIM.

- Queuing for the ports

- Back-pressure signalling for the queues

- Dynamically shared buffer memory for each queue

- A loopback function where transmitted data can be looped back to the receive side

MSC CPU and CPU Interface

As shown in Figure 2, the MSC contains a central processing unit (CPU) that performs the following tasks:

- MSC configuration

- Management

- Protocol control

The CPU subsystem includes:

- CPU chip

- Layer 3 cache

- NVRAM

- Flash boot PROM

- Memory controller

- Memory, a dual in-line memory module (DIMM) socket, providing the following:

- Up to 2 GB of 133 MHz DDR SDRAM on the CRS-MSC

- Up to 2 GB of 166 MHz DDR SDRAM on the CRS-MSC-B

- Up to 8 GB of 533 MHz DDR2 SDRAM on the CRS-MSC-140G

- Up to 15 GB of 533 MHz DDR3 DIMM on the CRS-MSC-X

The CPU interface provides the interface between the CPU subsystem and the other ASICs on the MSC and PLIM.

The MSC also contains a service processor (SP) module that provides:

- MSC and PLIM power-up sequencing

- Reset sequencing

- JTAG configuration

- Power monitoring

The SP, CPU subsystem, and CPU interface module work together to perform housekeeping, communication, and control plane functions for the MSC. The SP controls card power-up, environmental monitoring, and Ethernet communication with the line card chassis RPs.

The CPU subsystem performs a number of control plane functions, including FIB download receive, local PLU and TLU management, statistics gathering and performance monitoring, and MSC ASIC management and fault-handling.

The CPU interface module drives high-speed communication ports to all ASICs on the MSC and PLIM. The CPU talks to the CPU interface module through a high-speed bus attached to its memory controller.
