VMware vSphere 5.0 Built on FlexPod

Table Of Contents

About Cisco Validated Design (CVD) Program

VMware vSphere 5.0 Built On FlexPod

Benefits of the Cisco Unified Computing System

Benefits of Cisco Nexus 5548UP

Benefits of the NetApp FAS Family of Storage Controllers

Benefits of NetApp OnCommand Unified Manager Software

Benefits of VMware vSphere with the NetApp Virtual Storage Console

NetApp FAS3240 Deployment Procedure: Part 1

Assign Controller Disk Ownership

Install Data ONTAP to Onboard Flash Storage

Harden Storage System Logins and Security

Enable Active-Active Controller Configuration Between Two Storage Systems

Set Up Storage System NTP Time Synchronization and CDP Enablement

Create an SNMP Requests Role and Assign SNMP Login Privileges

Create an SNMP Management Group and Assign an SNMP Request Role

Create an SNMP User and Assign It to an SNMP Management Group

Set Up SNMP v1 Communities on Storage Controllers

Set Up SNMP Contact Information for Each Storage Controller

Set SNMP Location Information for Each Storage Controller

Reinitialize SNMP on Storage Controllers

Initialize NDMP on the Storage Controllers

Export NFS Infrastructure Volumes to ESXi Servers

Cisco Unified Computing System Deployment Procedure

Perform Initial Setup of the Cisco UCS C-Series Blade Servers

Perform Initial Setup of the Cisco UCS 6248 Fabric Interconnects

Upgrade the Cisco UCS Manager Software to Version 2.0(4a)

Add a Block of IP Addresses for KVM Access

Synchronize Cisco Unified Computing System to NTP

Edit the Chassis Discovery Policy

Enable Server and Uplink Ports

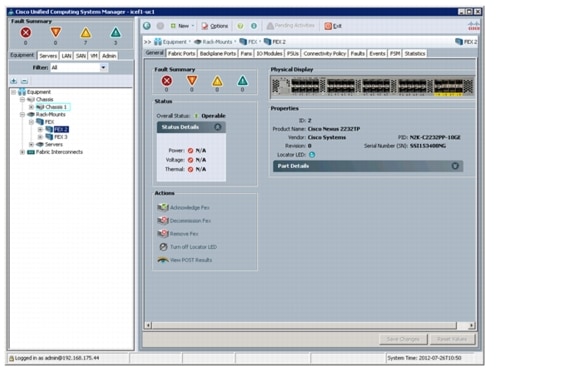

Acknowledge Cisco UCS Chassis and FEX

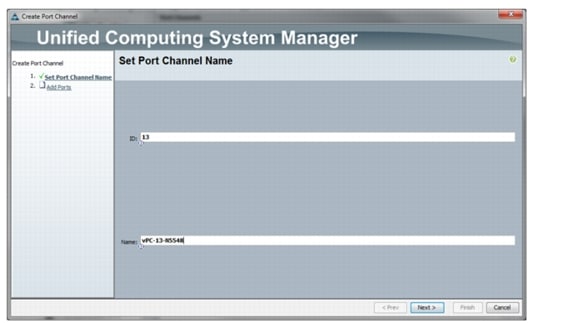

Create Uplink PortChannels to the Cisco Nexus 5548 Switches

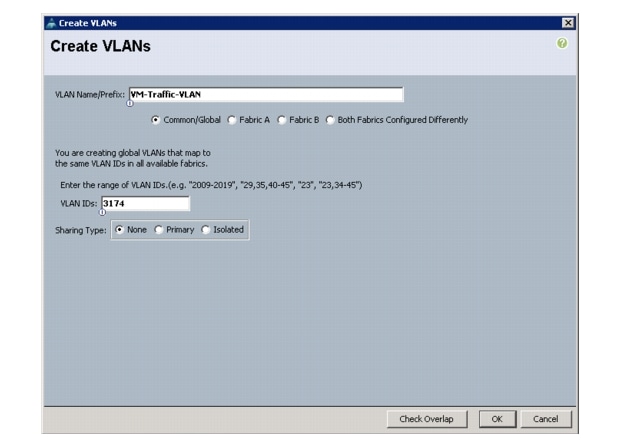

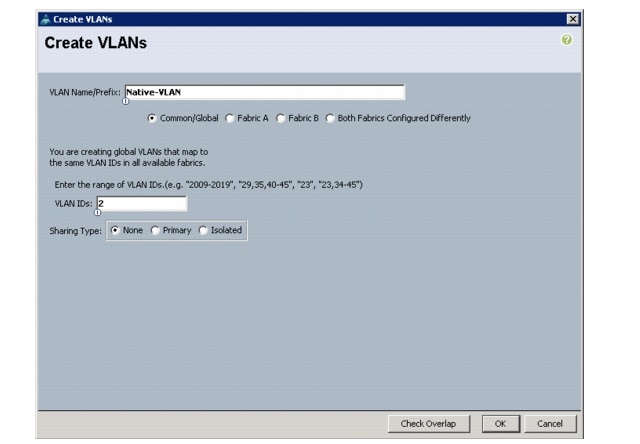

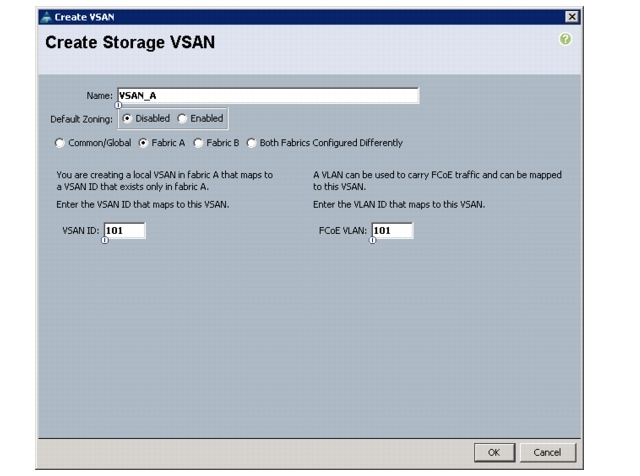

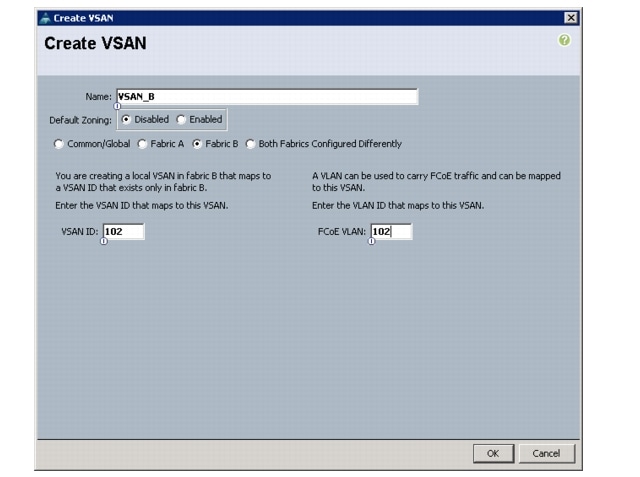

Create VSANs and SAN PortChannels

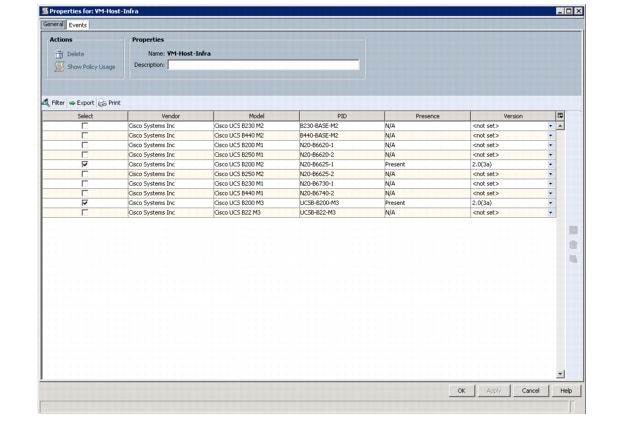

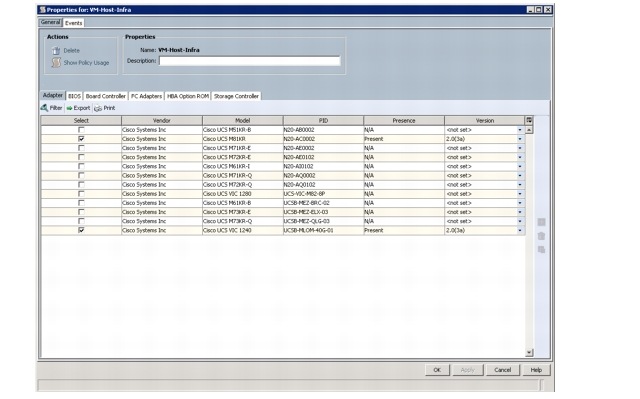

Create a Firmware Management Package

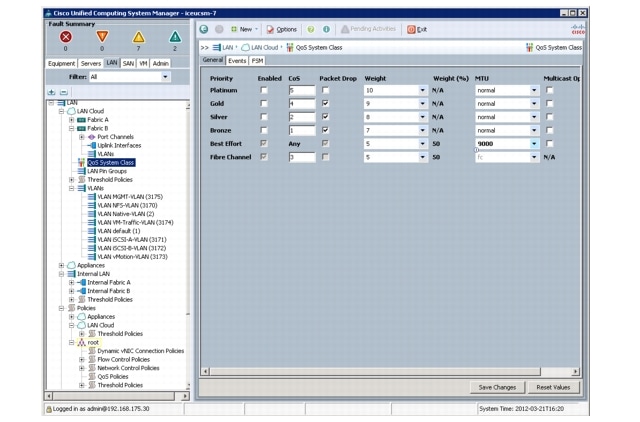

Set Jumbo Frames in Cisco UCS Fabric

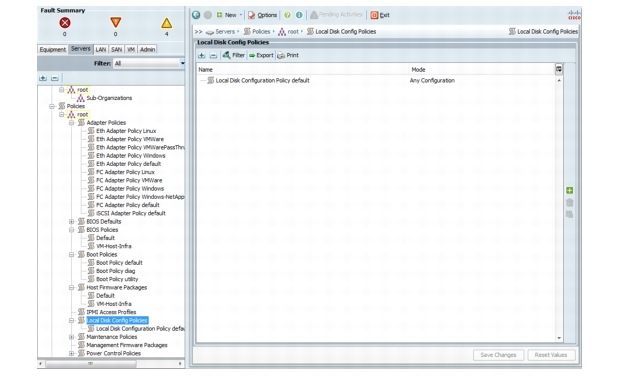

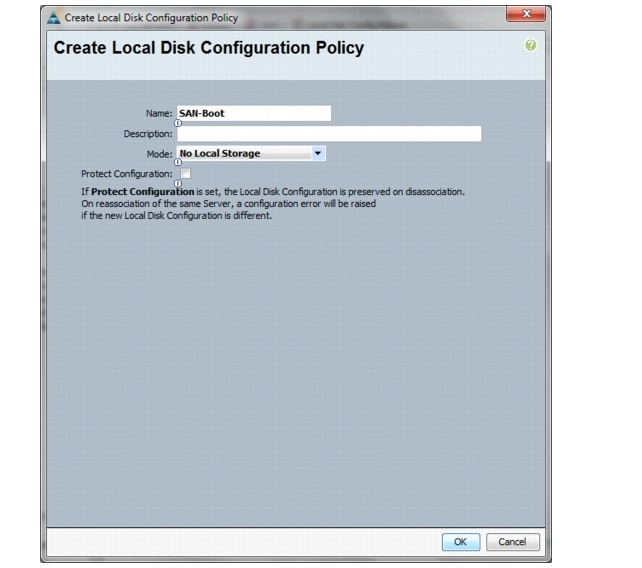

Create a Local Disk Configuration Policy (Optional)

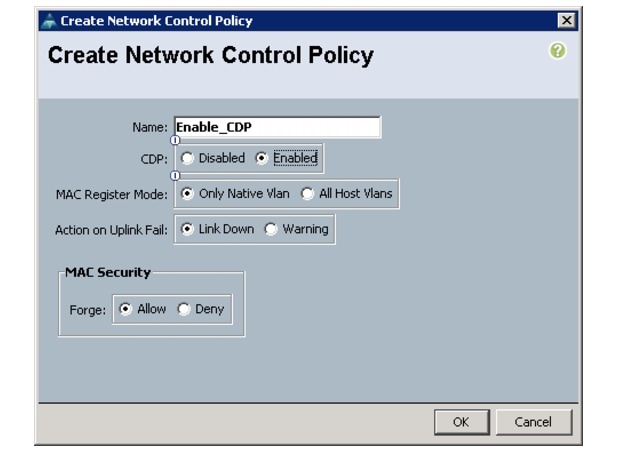

Create a Network Control Policy for Cisco Discovery Protocol (CDP)

Create a Server Pool Qualification Policy (Optional)

Create vNIC / vVHBA Placement Policy for Virtual Machine Infrastructure Hosts

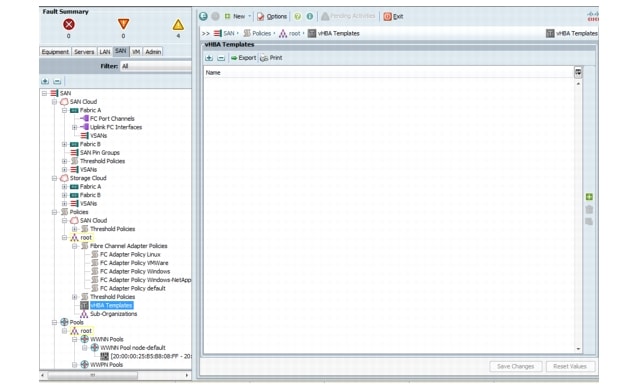

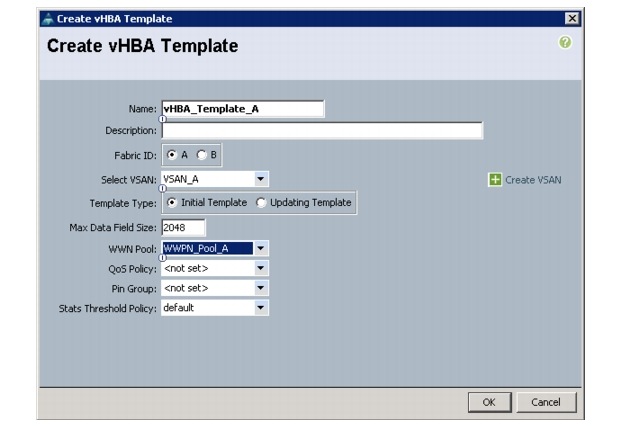

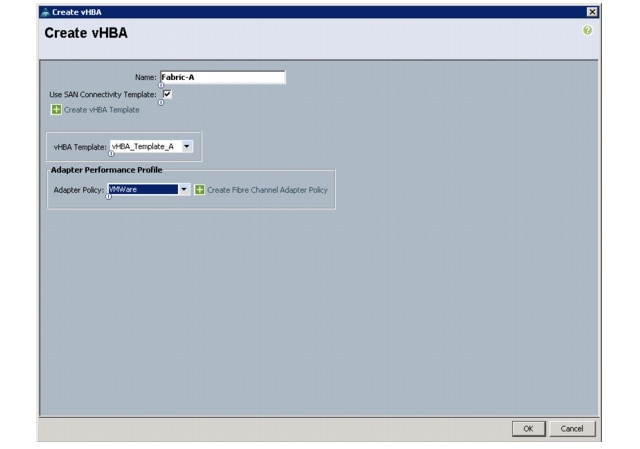

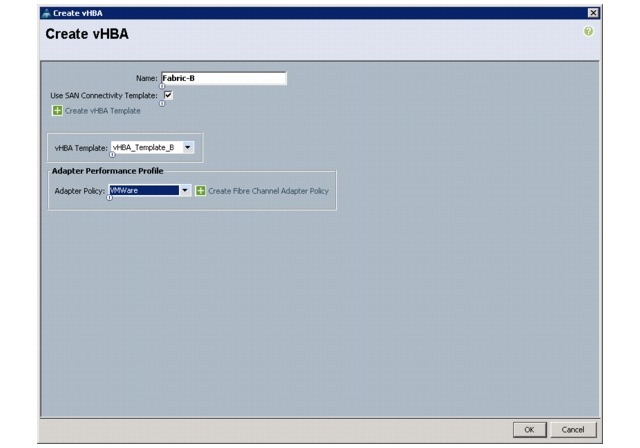

Create vHBA Templates for Fabric A and B

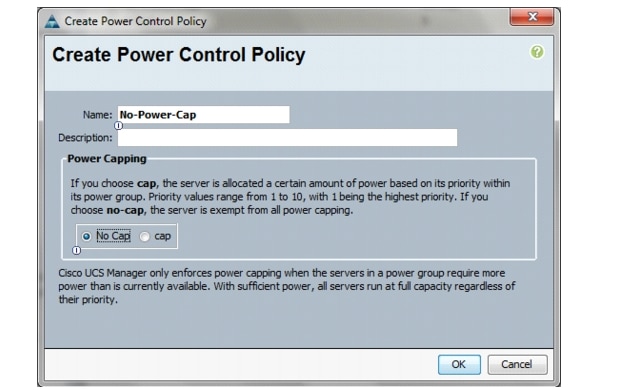

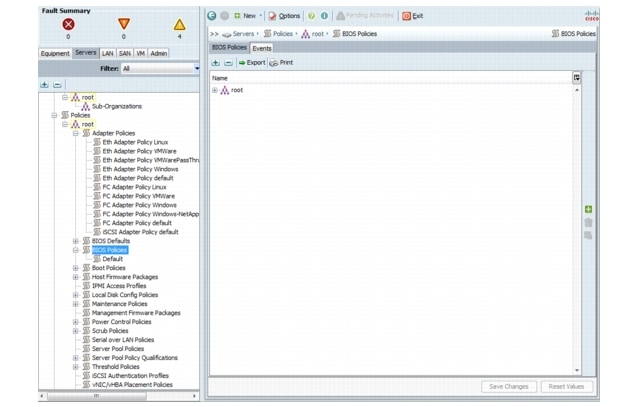

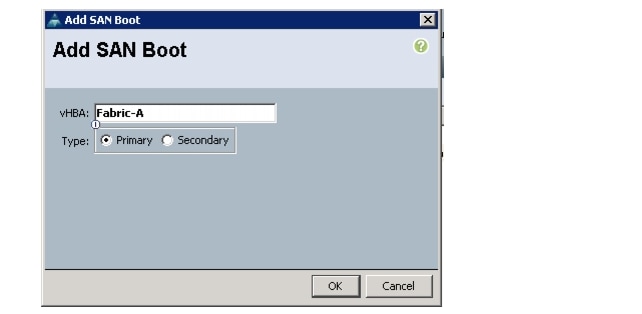

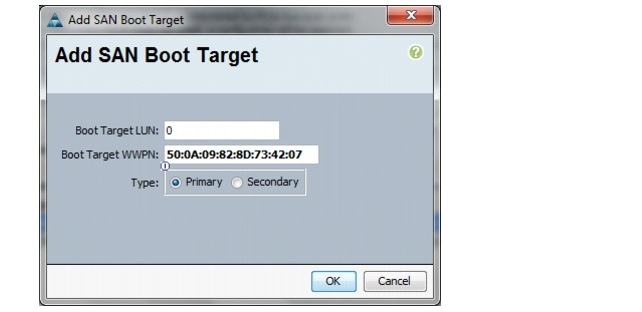

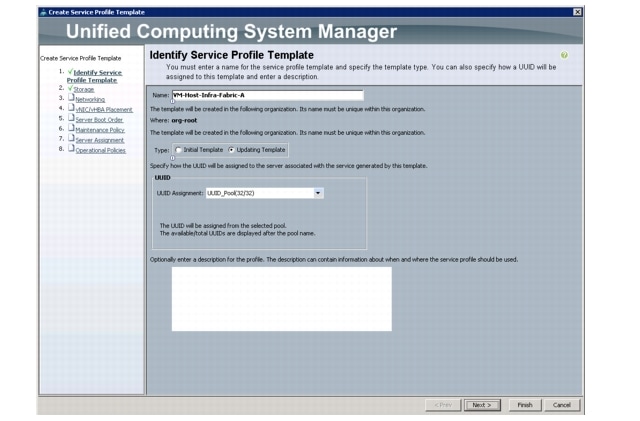

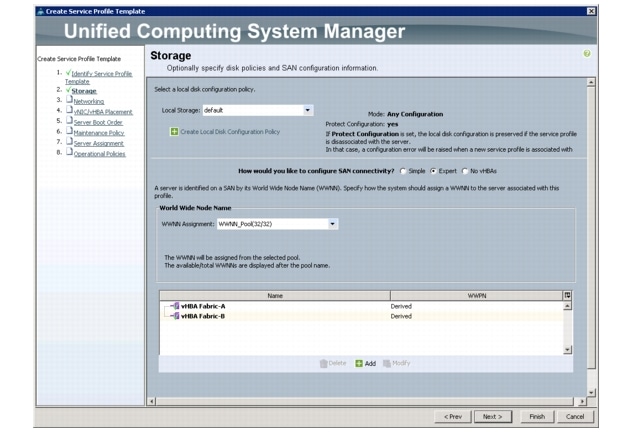

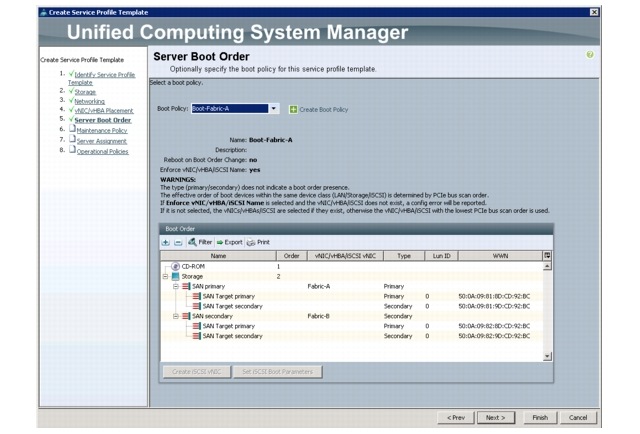

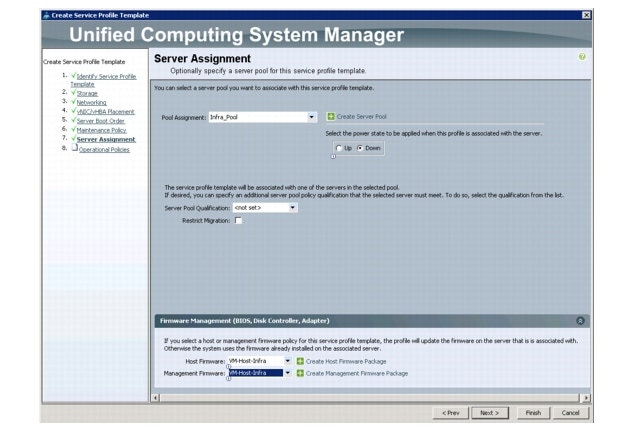

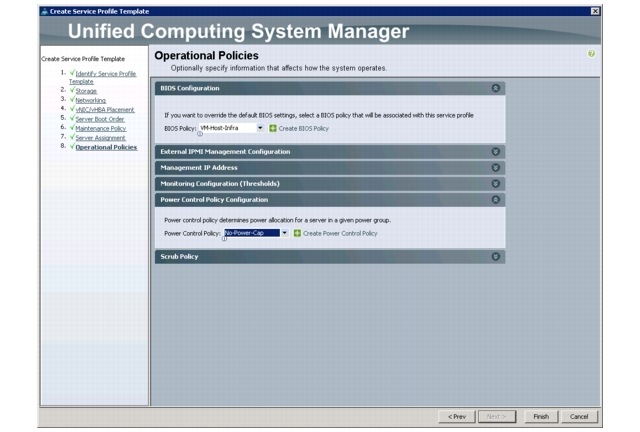

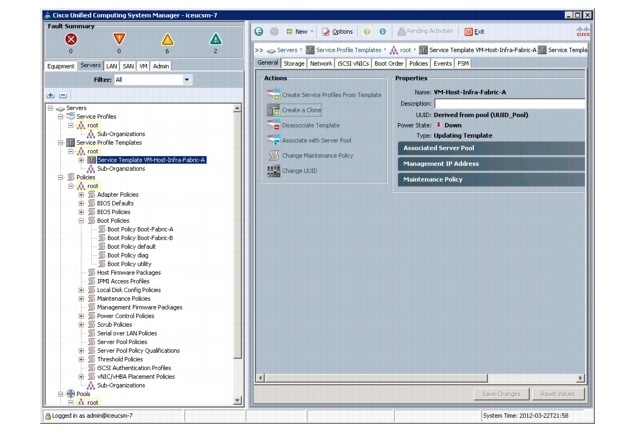

Create Service Profile Templates

Add More Servers to the FlexPod Unit

Cisco Nexus 5548 Deployment Procedure

Set up Initial Cisco Nexus 5548 Switch

Enable Appropriate Cisco Nexus Features

Add Individual Port Descriptions for Troubleshooting

Add Port Channel Configurations

Configure Virtual Port Channels

Configure Ports for the Cisco Nexus 1010 Virtual Appliances

Create VSANs, Assign FCoE Ports, Turn on FCoE Ports

Create Device Aliases and Create Zones for FCoE Devices

Create VSANs, Assign FC Ports, Turn on FC Ports

Create Device Aliases and Create Zones for FC Devices

Uplink into Existing Network Infrastructure

NetApp FAS3240 Deployment Procedure: Part 2

Add Infrastructure Host Boot LUNs

VMware ESXi 5.0 Deployment Procedure

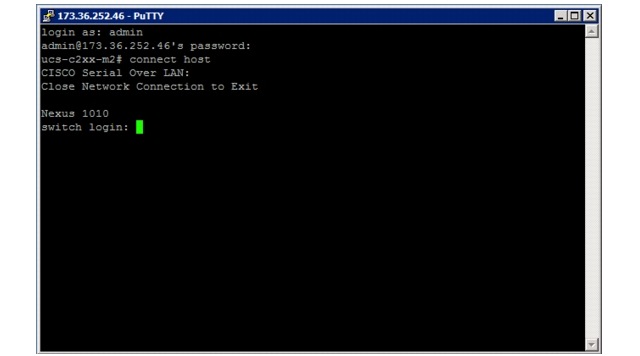

Log in to the Cisco UCS 6200 Fabric Interconnects

Set Up the ESXi Hosts' Management Networking

Set Up Each ESXi Host's Management Networking

Download VMware vSphere Client and vSphere Remote Command Line

Log in to VMware ESXi Host Using VMware vSphere Client

Load Updated Cisco VIC enic Driver Version

Set up VMkernel Ports and Virtual Switch

Move the VM Swap File Location

VMware vCenter 5.0 Deployment Procedure

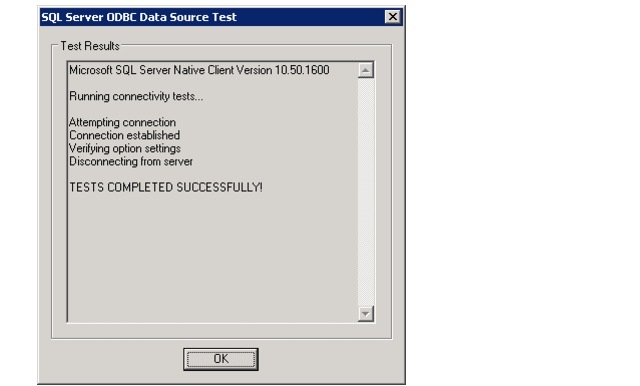

Build a Microsoft SQL Server VM

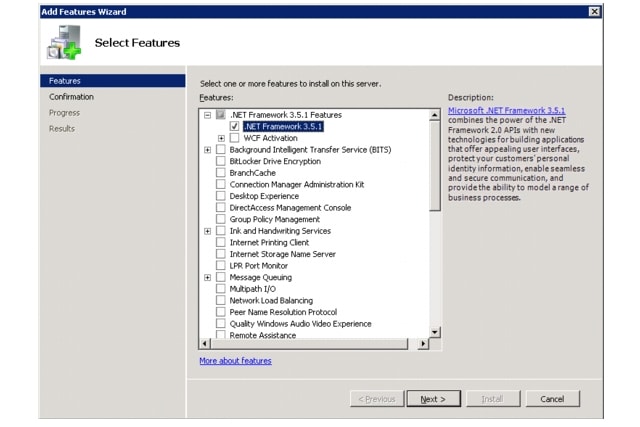

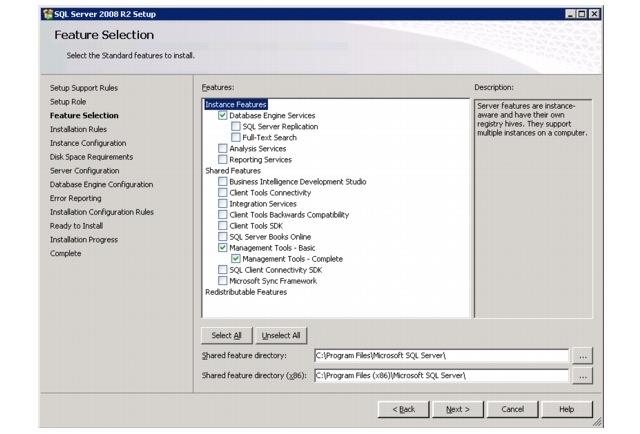

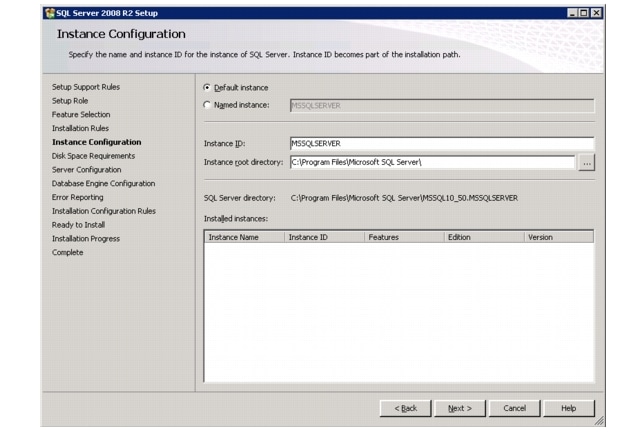

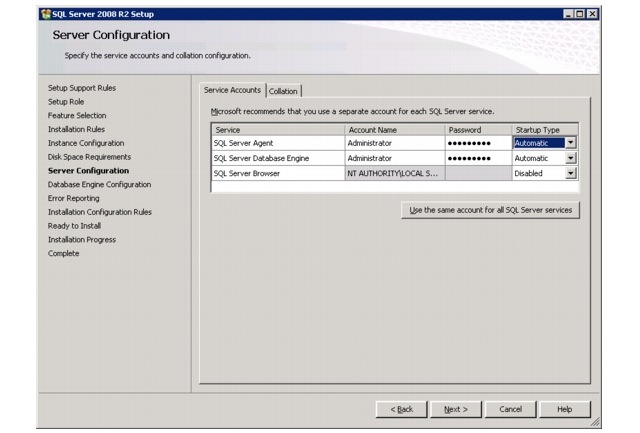

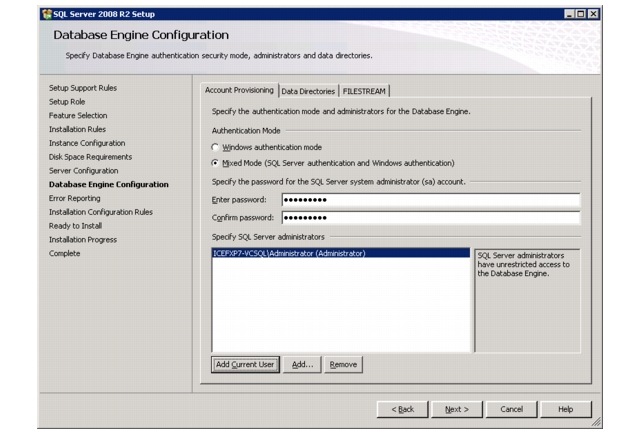

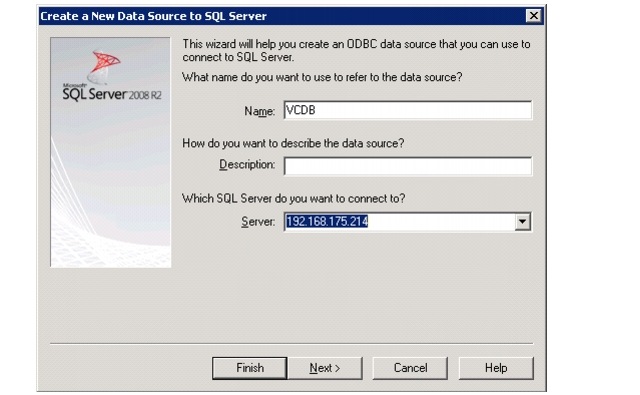

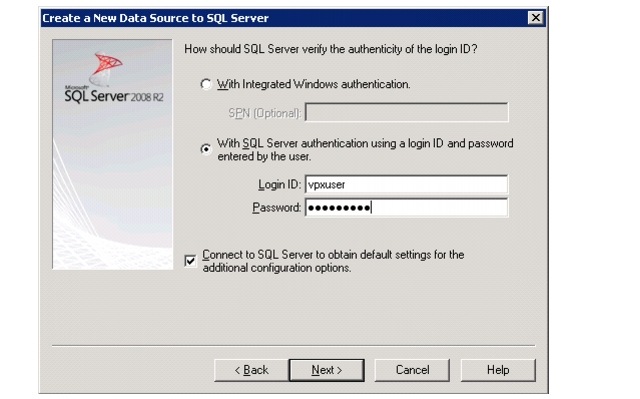

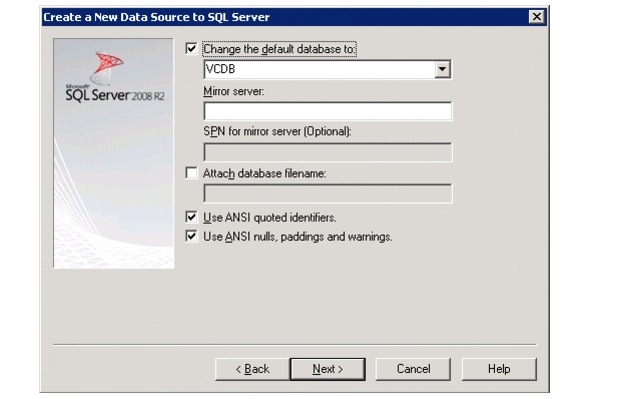

Install Microsoft SQL Server 2008 R2

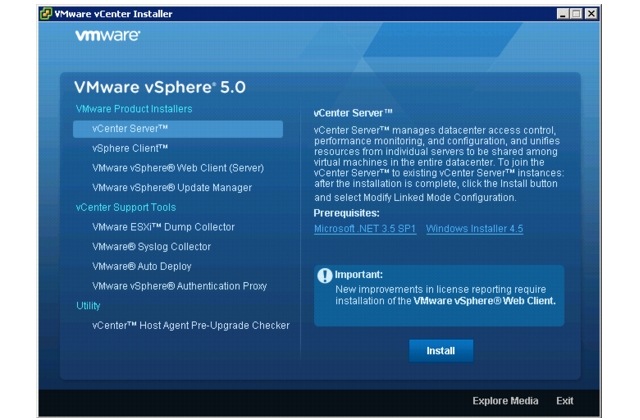

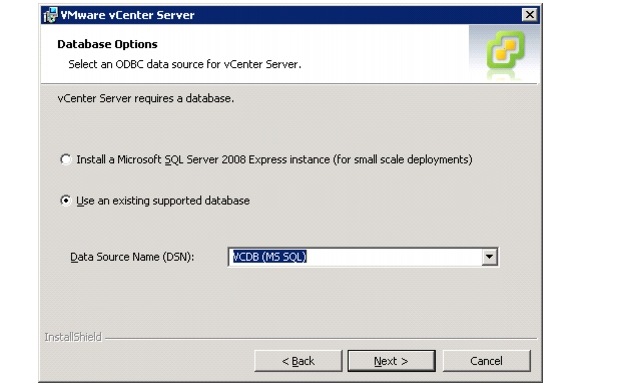

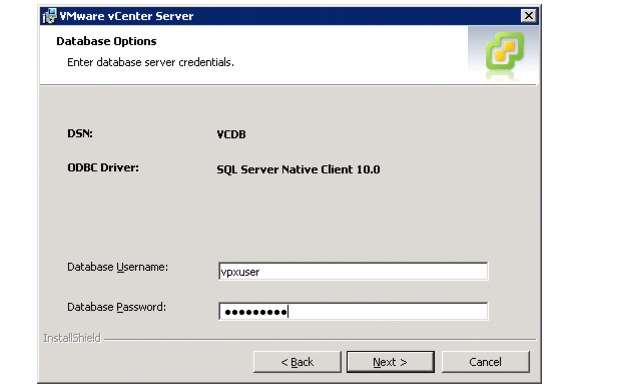

Build a VMware vCenter Virtual Machine

Cisco Nexus 1010-X and 1000v Deployment Procedure

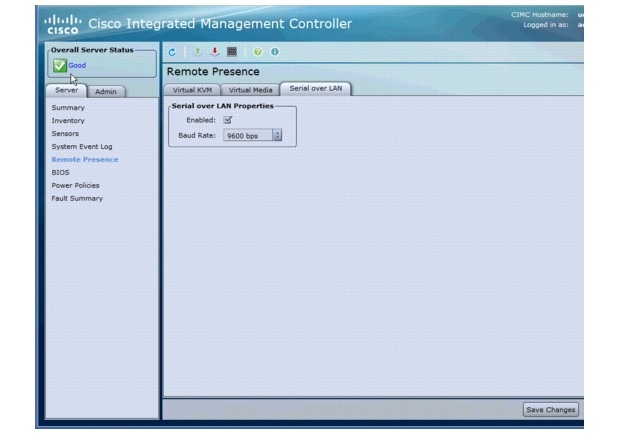

Configure the CIMC Interface on Both Nexus 1010-Xs

Configure Serial over LAN for both Nexus 1010-Xs

Configure the Nexus 1010-X Virtual Appliances

Setup the Primary Cisco Nexus 1000v VSM

Set Up the Secondary Cisco Nexus 1000v VSM

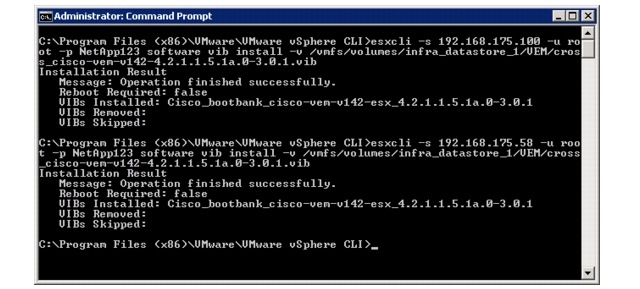

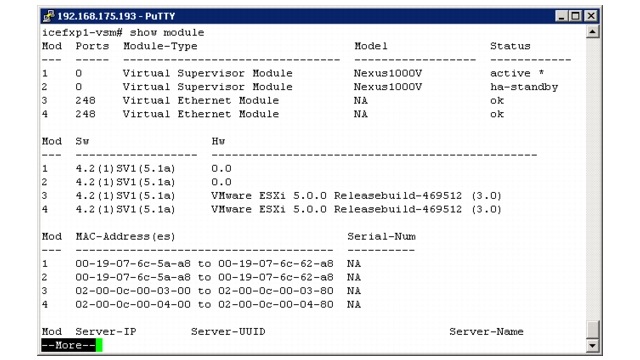

Install the Virtual Ethernet Module (VEM) on each ESXi Host

Register the Nexus 1000v as a vCenter Plugin

Base Configuration of the Primary VSM

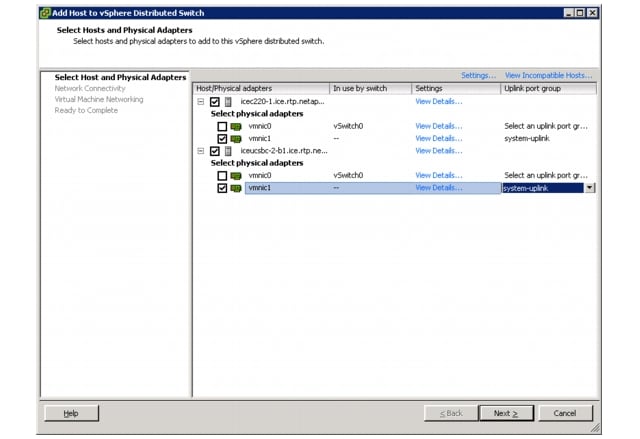

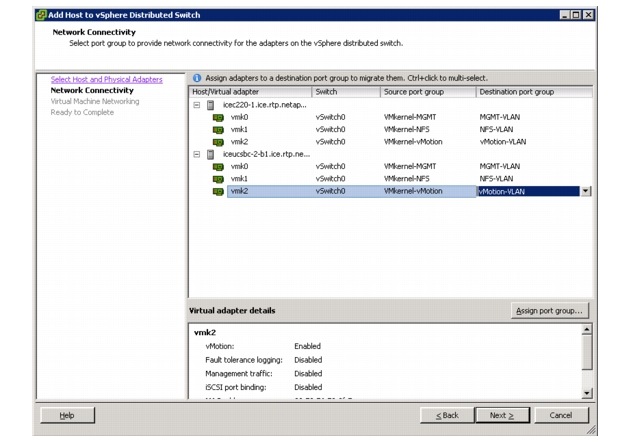

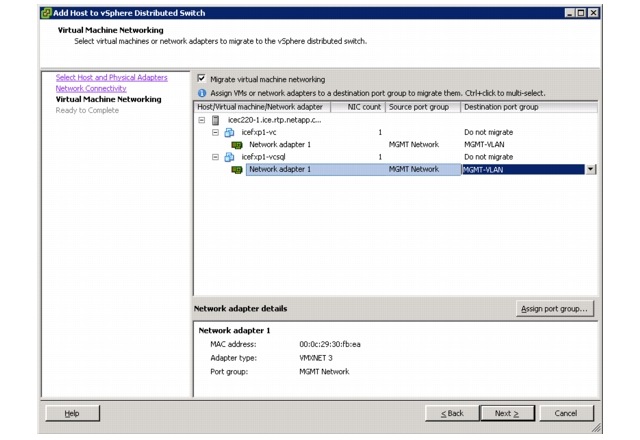

Migrate the ESXi Hosts' Networking to the Nexus 1000v

NetApp Virtual Storage Console Deployment Procedure

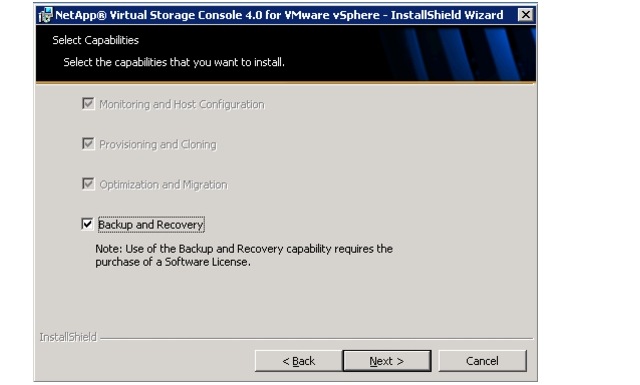

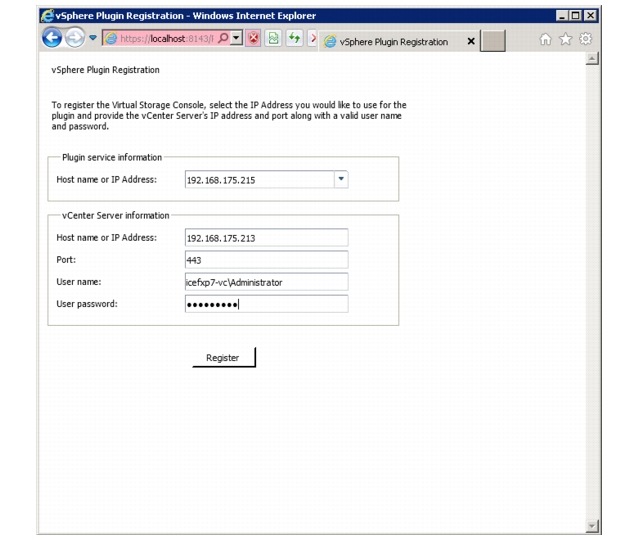

Installing NetApp Virtual Storage Console 4.0

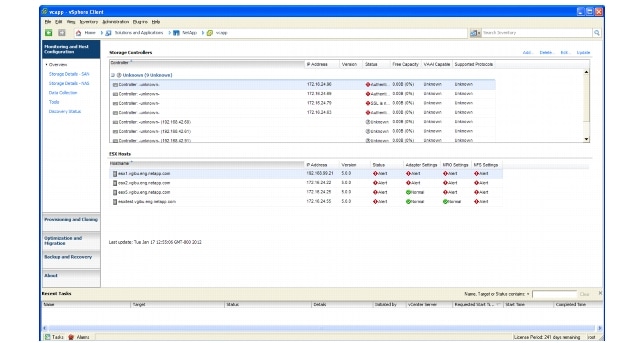

Discover and Add Storage Resources

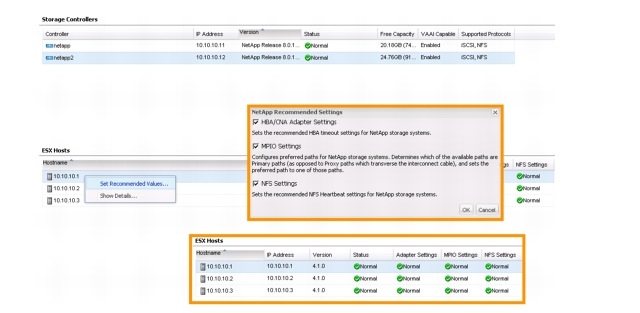

Optimal Storage Settings for ESXi Hosts

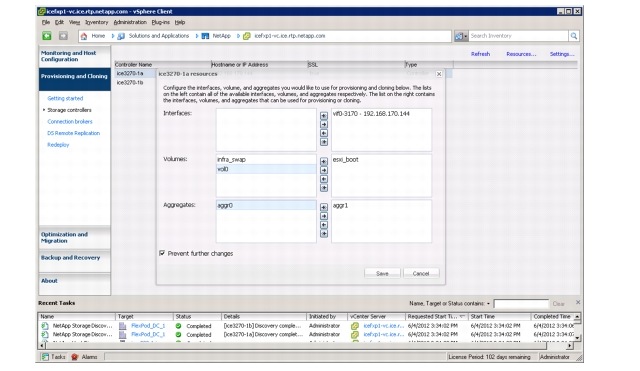

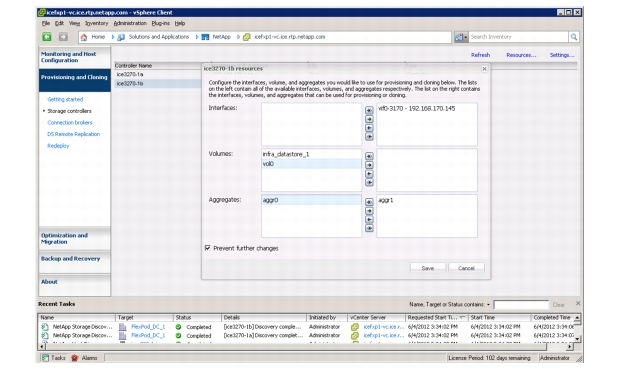

Provisioning and Cloning Setup

NetApp OnCommand Deployment Procedure

Manually Add Storage Controllers into DataFabric Manager

Run Diagnostics for Verifying DataFabric Manager Communication

Configure Additional Operations Manager Alerts

Deploy the NetApp OnCommand Host Package

Set a Shared Lock Directory to Coordinate Mutually Exclusive Activities on Shared Resources

Install NetApp OnCommand Windows PowerShell Cmdlets

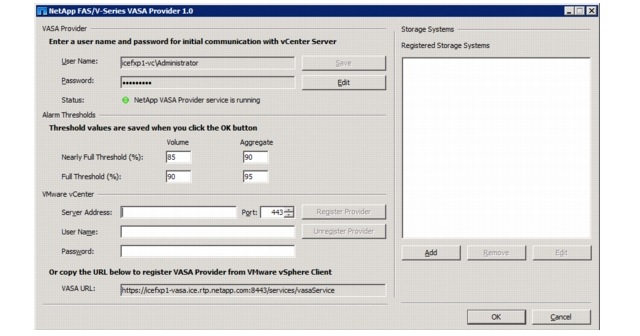

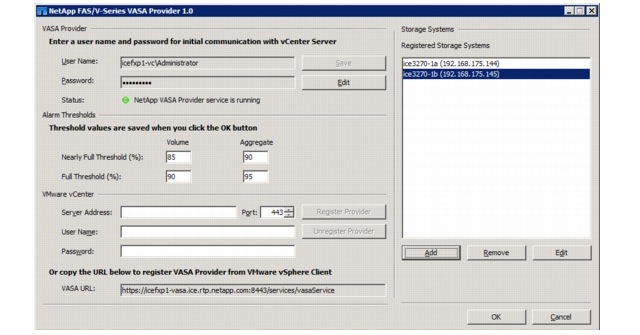

NetApp VASA Provider Deployment Procedure

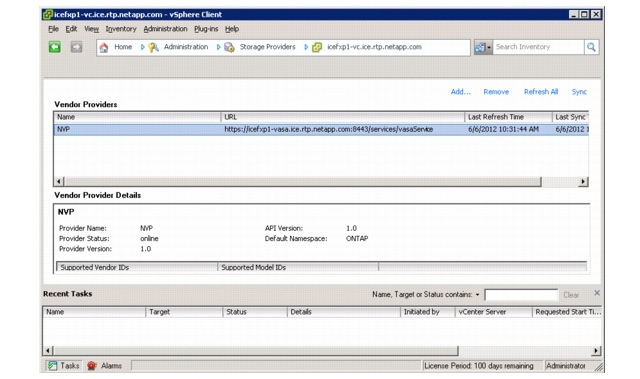

Verify VASA Provider in vCenter

VMware vSphere 5.0 Built On FlexPod

Last Updated: November 1, 2012

Building Architectures to Solve Business Problems

About the Authors

John Kennedy, Technical Leader, Cisco

John Kennedy is a technical marketing engineer in the Server Access and Virtualization Technology group. Currently, John is focused on the validation of FlexPod architecture while contributing to future SAVTG products. John spent two years in the Systems Development unit at Cisco, researching methods of implementing long-distance vMotion for use in the Data Center Interconnect Cisco Validated Designs. Previously, John worked at VMware for eight and a half years as a senior systems engineer supporting channel partners outside the United States and serving on the HP Alliance team. He is a VMware Certified Professional on every version of VMware ESX and ESXi, vCenter, and Virtual Infrastructure, including vSphere 5. He has presented at various industry conferences.

John George, Reference Architect, Infrastructure and Cloud Engineering, NetApp

John George is a reference architect in the NetApp Infrastructure and Cloud Engineering team and is focused on developing, validating, and supporting cloud infrastructure solutions that include NetApp products. Before assuming his current role, he supported and administered Nortel's worldwide training network and VPN infrastructure. John holds a Master's Degree in Computer Engineering from Clemson University.

Ganesh Kamath, Technical Marketing Engineer, NetApp

Ganesh Kamath is a technical architect in the NetApp TSP Solutions Engineering team focused on architecting and validating solutions for TSPs based on NetApp products. Ganesh's diverse experiences at NetApp include working as a technical marketing engineer as well as a member of the NetApp Rapid Response Engineering team, qualifying specialized solutions for our most demanding customers.

Lindsey Street, Systems Architect, Infrastructure and Cloud Engineering, NetApp

Lindsey Street is a systems architect in the NetApp Infrastructure and Cloud Engineering team. She focuses on the architecture, implementation, compatibility, and security of innovative vendor technologies to develop competitive and high-performance end-to-end cloud solutions for customers. Lindsey started her career in 2006 at Nortel as an interoperability test engineer, testing customer equipment interoperability for certification. Lindsey has her Bachelor of Science degree in computer networking and her Master of Science degree in Information Security from East Carolina University.

Chris Reno, Reference Architect, Infrastructure and Cloud Engineering, NetApp

Chris Reno is a reference architect in the NetApp Infrastructure and Cloud Engineering team and is focused on creating, validating, supporting, and evangelizing solutions based on NetApp products. Chris has a Bachelor of Science degree in International Business and Finance and a Bachelor of Arts degree in Spanish from the University of North Carolina-Wilmington, and he holds numerous industry certifications.

About Cisco Validated Design (CVD) Program

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

The Cisco implementation of TCP header compression is an adaptation of a program developed by the University of California, Berkeley (UCB) as part of UCB's public domain version of the UNIX operating system. All rights reserved. Copyright © 1981, Regents of the University of California.

Cisco and the Cisco Logo are trademarks of Cisco Systems, Inc. and/or its affiliates in the U.S. and other countries. A listing of Cisco's trademarks can be found at http://www.cisco.com/go/trademarks. Third party trademarks mentioned are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (1005R)

Any Internet Protocol (IP) addresses and phone numbers used in this document are not intended to be actual addresses and phone numbers. Any examples, command display output, network topology diagrams, and other figures included in the document are shown for illustrative purposes only. Any use of actual IP addresses or phone numbers in illustrative content is unintentional and coincidental.

© 2012 Cisco Systems, Inc. All rights reserved.

VMware vSphere 5.0 Built On FlexPod

Overview

Industry trends indicate a vast data center transformation toward shared infrastructures. By using virtualization, enterprise customers have embarked on the journey to the cloud by moving away from application silos and toward shared infrastructure, thereby increasing agility and reducing costs. Cisco and NetApp have partnered to deliver FlexPod®, which serves as the foundation for a variety of workloads and enables efficient architectural designs that are based on customer requirements.

Audience

This document describes the architecture and deployment procedures of an infrastructure composed of Cisco Unified Computing System™, NetApp®, and VMware® virtualization that uses FCoE-based storage serving NAS and SAN protocols. The intended audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers who want to deploy the core FlexPod architecture.

Architecture

The FlexPod architecture is highly modular or "podlike." Although each customer's FlexPod unit varies in its exact configuration, once a FlexPod unit is built, it can easily be scaled as requirements and demand change. The unit can be scaled both up (adding resources to a FlexPod unit) and out (adding more FlexPod units).

Specifically, FlexPod is a defined set of hardware and software that serves as an integrated foundation for all virtualization solutions. VMware vSphere 5.0 Built On FlexPod includes NetApp storage, Cisco networking, the Cisco Unified Computing System (Cisco UCS®), and VMware vSphere® software in a single package. The design is flexible enough that the networking, computing, and storage can fit in one data center rack or be deployed according to a customer's data center design. Port density enables the networking components to accommodate multiple configurations of this kind.

One benefit of the FlexPod architecture is the ability to customize or "flex" the environment to suit a customer's requirements. This is why the reference architecture detailed in this document highlights the resiliency, cost benefit, and ease of deployment of an FCoE-based storage solution. A storage system capable of serving multiple protocols across a single interface allows for customer choice and investment protection because it truly is a wire-once architecture.

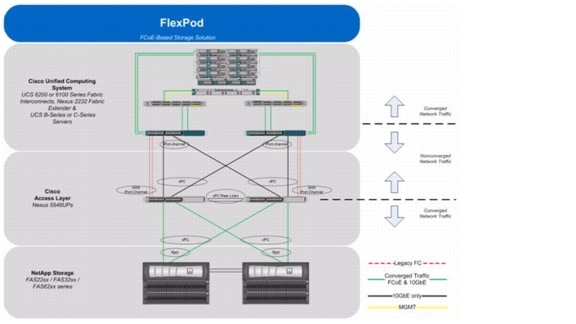

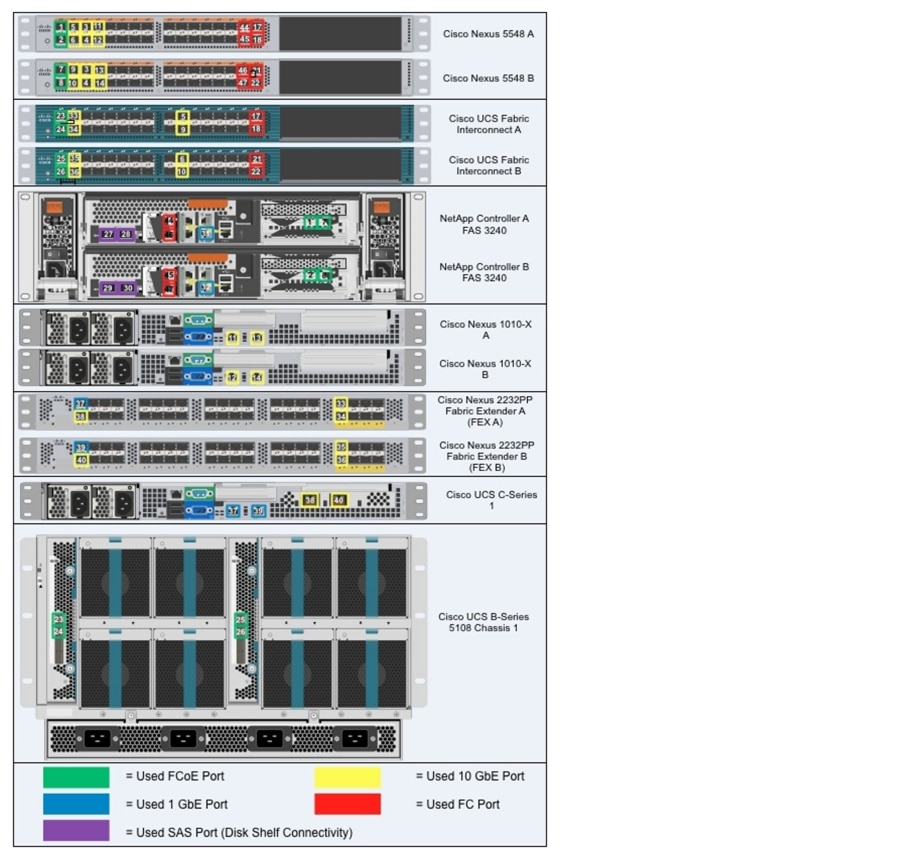

Figure 1 shows the VMware vSphere 5.0 Built On FlexPod components and the network connections for a configuration with FCoE-based storage. This design uses the Cisco Nexus® 5548UP, Cisco Nexus 2232PP FEX, and Cisco UCS C-Series and B-Series with the Cisco UCS virtual interface card (VIC) and the NetApp FAS family of storage controllers connected in a highly available design using Cisco Virtual Port Channels (vPCs). This infrastructure is deployed to provide FCoE-booted hosts with file- and block-level access to shared storage datastores. The reference architecture reinforces the "wire-once" strategy, because as additional storage is added to the architecture, whether FC, FCoE, or 10GbE, no recabling is required from the hosts to the Cisco UCS fabric interconnect.

Figure 1 VMware vSphere Built on FlexPod Components with FCoE Boot Topology

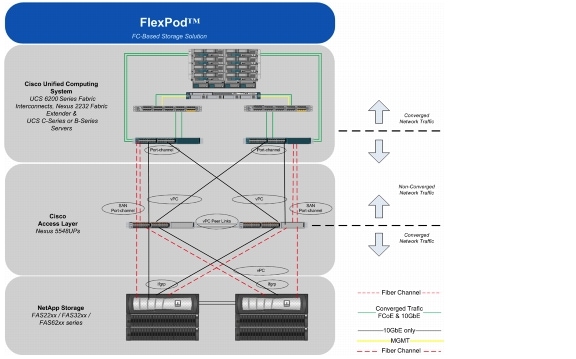

Figure 2 VMware vSphere Built on FlexPod with FC Boot Topology

The reference configuration includes:

• Two Cisco Nexus 5548UP switches
• Two Cisco Nexus 2232PP fabric extenders
• Two Cisco UCS 6248UP fabric interconnects
• Support for 16 Cisco UCS C-Series servers without any additional networking components
• Support for 8 Cisco UCS B-Series servers without any additional blade server chassis
• Support for hundreds of Cisco UCS C-Series and B-Series servers by way of additional fabric extenders and blade server chassis
• One NetApp FAS3240-A (HA pair)

Storage is provided by a NetApp FAS3240-A (HA configuration in a single chassis). All system and network links feature redundancy, providing end-to-end high availability (HA). For server virtualization, the deployment includes VMware vSphere. Although this is the base design, each of the components can be scaled flexibly to support specific business requirements. For example, more (or different) servers or even blade chassis can be deployed to increase compute capacity, additional disk shelves can be deployed to improve I/O capacity and throughput, and special hardware or software features can be added to introduce new capabilities.
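The reference configuration listed above can be captured as a small data structure, which is sometimes handy when checking whether a planned deployment still fits the base design. The following is a minimal sketch only: the class name, the `needs_expansion` helper, and the dictionary keys are hypothetical and are not part of any Cisco or NetApp tool. The component names and counts are taken from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """One line item of the base hardware (hypothetical helper type)."""
    name: str
    quantity: int


# Base hardware from the reference configuration list.
REFERENCE_CONFIG = [
    Component("Cisco Nexus 5548UP switch", 2),
    Component("Cisco Nexus 2232PP fabric extender", 2),
    Component("Cisco UCS 6248UP fabric interconnect", 2),
    Component("NetApp FAS3240-A (HA pair)", 1),
]

# Server counts the base design supports without additional
# networking components or blade server chassis.
BASE_SERVER_CAPACITY = {"C-Series": 16, "B-Series": 8}


def needs_expansion(c_series: int, b_series: int) -> bool:
    """Return True if the requested server counts exceed the base design."""
    return (
        c_series > BASE_SERVER_CAPACITY["C-Series"]
        or b_series > BASE_SERVER_CAPACITY["B-Series"]
    )
```

For example, `needs_expansion(16, 8)` is false, while `needs_expansion(20, 8)` is true, which corresponds to the case where additional fabric extenders would be required.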

This document guides you through the low-level steps for deploying the base architecture, as shown in Figure 1. These procedures cover everything from physical cabling to compute and storage configuration to configuring virtualization with VMware vSphere.

FlexPod Benefits

One of the founding design principles of the FlexPod architecture is flexibility. Previous FlexPod architectures have highlighted FCoE- or FC-based storage solutions as well as IP-based storage solutions in addition to showcasing a variety of application workloads. This particular FlexPod architecture is a predesigned configuration that is built on the Cisco Unified Computing System (Cisco UCS), the Cisco Nexus family of data center switches, NetApp FAS storage components, and VMware virtualization software. FlexPod is a base configuration, but it can scale up for greater performance and capacity, and it can scale out for environments that require consistent, multiple deployments. FlexPod has the flexibility to be sized and optimized to accommodate many different use cases. These use cases can be layered on an infrastructure that is architected based on performance, availability, and cost requirements.

FlexPod is a platform that can address current virtualization needs and simplify the evolution to an IT as a service (ITaaS) infrastructure. The VMware vSphere 5.0 Built On FlexPod solution can help improve agility and responsiveness, reduce total cost of ownership (TCO), and increase business alignment and focus.

This document focuses on deploying an infrastructure that is capable of supporting VMware vSphere, VMware vCenter™ with NetApp plug-ins, and NetApp OnCommand® as the foundation for virtualized infrastructure. Additionally, this document details a use case for those who want to design an architecture with FCoE-based storage using storage protocols such as iSCSI, FCoE, CIFS, and NFS. For a detailed study of several practical solutions deployed on FlexPod, refer to the NetApp Technical Report 3884: FlexPod Solutions Guide.

Benefits of the Cisco Unified Computing System

Cisco Unified Computing System is the first converged data center platform that combines industry-standard, x86-architecture servers with networking and storage access into a single converged system. The system is entirely programmable using unified, model-based management to simplify and speed deployment of enterprise-class applications and services running in bare-metal, virtualized, and cloud computing environments.

The system's x86-architecture rack-mount and blade servers are powered by Intel® Xeon® processors. These industry-standard servers deliver world-record performance to power mission-critical workloads. Cisco servers, combined with a simplified, converged architecture, drive better IT productivity and superior price/performance for lower total cost of ownership (TCO). Building on Cisco's strength in enterprise networking, Cisco's Unified Computing System is integrated with a standards-based, high-bandwidth, low-latency, virtualization-aware unified fabric. The system is wired once to support the desired bandwidth and carries all Internet protocol, storage, inter-process communication, and virtual machine traffic with security isolation, visibility, and control equivalent to physical networks. The system meets the bandwidth demands of today's multicore processors, eliminates costly redundancy, and increases workload agility, reliability, and performance.

Cisco Unified Computing System is designed from the ground up to be programmable and self integrating. A server's entire hardware stack, ranging from server firmware and settings to network profiles, is configured through model-based management. With Cisco virtual interface cards, even the number and type of I/O interfaces is programmed dynamically, making every server ready to power any workload at any time. With model-based management, administrators manipulate a model of a desired system configuration, associate a model's service profile with hardware resources, and the system configures itself to match the model. This automation speeds provisioning and workload migration with accurate and rapid scalability. The result is increased IT staff productivity, improved compliance, and reduced risk of failures due to inconsistent configurations.
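The idea of model-based management described above can be illustrated with a short conceptual sketch: a service profile holds the desired configuration, and associating it with a server makes the hardware match the model. This is not the Cisco UCS Manager API. The `ServiceProfile`, `Server`, and `associate` names, the slot label, and the attribute values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ServiceProfile:
    """Hypothetical model of the desired server configuration."""
    name: str
    firmware: str
    vnics: int
    vhbas: int


@dataclass
class Server:
    """Hypothetical physical server that has no identity until associated."""
    slot: str
    firmware: Optional[str] = None
    vnics: int = 0
    vhbas: int = 0
    profile: Optional[str] = None


def associate(profile: ServiceProfile, server: Server) -> Server:
    """Configure the server so that it matches the model in the profile."""
    server.firmware = profile.firmware
    server.vnics = profile.vnics
    server.vhbas = profile.vhbas
    server.profile = profile.name
    return server


# Illustrative values only; real profiles carry many more settings.
profile = ServiceProfile(name="esxi-host-01", firmware="example-fw", vnics=2, vhbas=2)
blade = associate(profile, Server(slot="chassis-1/blade-1"))
```

In this sketch the administrator edits only the profile; the server's settings are derived from it, which is the property that makes configurations consistent across servers.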

Cisco Fabric Extender technology reduces the number of system components to purchase, configure, manage, and maintain by condensing three network layers into one. This represents a radical simplification over traditional systems, reducing capital and operating costs while increasing business agility, simplifying and speeding deployment, and improving performance.

Cisco Unified Computing System helps organizations go beyond efficiency: it helps them become more effective through technologies that breed simplicity rather than complexity. The result is flexible, agile, high-performance, self-integrating information technology, reduced staff costs with increased uptime through automation, and more rapid return on investment.

This reference architecture highlights the use of the Cisco UCS C200-M2, Cisco UCS C220-M3, Cisco UCS B200-M2, Cisco UCS B200-M3, the Cisco UCS 6248UP, and the Nexus 2232PP FEX to provide a resilient server platform balancing simplicity, performance, and density for production-level virtualization. Also highlighted in this architecture is the use of Cisco UCS service profiles that enable FCoE boot of the native operating system. Coupling service profiles with unified storage delivers on-demand stateless computing resources in a highly scalable architecture.

Recommended support documents include:

• Cisco Unified Computing System: http://www.cisco.com/en/US/products/ps10265/index.html
• Cisco Unified Computing System C-Series Servers: http://www.cisco.com/en/US/products/ps10493/index.html
• Cisco Unified Computing System B-Series Servers: http://www.cisco.com/en/US/products/ps10280/index.html

Benefits of Cisco Nexus 5548UP

The Cisco Nexus 5548UP Switch delivers innovative architectural flexibility, infrastructure simplicity, and business agility, with support for networking standards. For traditional, virtualized, unified, and high-performance computing (HPC) environments, it offers a long list of IT and business advantages, including:

Architectural Flexibility

• Unified ports that support traditional Ethernet, Fibre Channel (FC), and Fibre Channel over Ethernet (FCoE)
• Synchronizes system clocks with accuracy of less than one microsecond, based on IEEE 1588
• Offers converged Fabric extensibility, based on emerging standard IEEE 802.1BR, with Fabric Extender (FEX) Technology portfolio, including:
  • Cisco Nexus 2000 FEX
  • Adapter FEX
  • VM-FEX

Infrastructure Simplicity

• Common high-density, high-performance, data-center-class, fixed-form-factor platform
• Consolidates LAN and storage
• Supports any transport over an Ethernet-based fabric, including Layer 2 and Layer 3 traffic
• Supports storage traffic, including iSCSI, NAS, FC, RoE, and IBoE
• Reduces management points with FEX Technology

Business Agility

• Meets diverse data center deployments on one platform
• Provides rapid migration and transition for traditional and evolving technologies
• Offers performance and scalability to meet growing business needs

Specifications At-a-Glance

• A 1-rack-unit, 1/10 Gigabit Ethernet switch
• 32 fixed Unified Ports on base chassis and one expansion slot totaling 48 ports
• The slot can support any of the three modules: Unified Ports, 1/2/4/8 native Fibre Channel, and Ethernet or FCoE
• Throughput of up to 960 Gbps
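The throughput figure is consistent with the port counts in the list above, as the short calculation below shows. It is a sketch under two assumptions that the list does not state explicitly: that the expansion slot contributes the 16 ports needed to reach 48, and that the throughput figure counts every port at 10 Gbps in both directions (full duplex).

```python
FIXED_PORTS = 32          # fixed Unified Ports on the base chassis
EXPANSION_PORTS = 16      # assumption: 48 total ports minus 32 fixed ports
PORT_SPEED_GBPS = 10      # each port running at 10 Gigabit Ethernet
DIRECTIONS = 2            # assumption: full duplex, counted in both directions

total_ports = FIXED_PORTS + EXPANSION_PORTS
throughput_gbps = total_ports * PORT_SPEED_GBPS * DIRECTIONS

print(total_ports, throughput_gbps)  # prints "48 960"
```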

This reference architecture highlights the use of the Cisco Nexus 5548UP. As mentioned, this platform is capable of serving as the foundation for wire-once, unified fabric architectures. This document provides guidance for an architecture capable of delivering storage protocols including iSCSI, FC, FCoE, CIFS, and NFS. With the storage protocols license enabled on the Nexus 5548UP, end users can take advantage of a full-featured SAN switch as well as traditional Ethernet networking.

Recommended support documents include:

• Cisco Nexus 5000 Family of switches: http://www.cisco.com/en/US/products/ps9670/index.html

Benefits of the NetApp FAS Family of Storage Controllers

The NetApp Unified Storage Architecture offers customers an agile and scalable storage platform. All NetApp storage systems use the Data ONTAP® operating system to provide SAN (FCoE, FC, iSCSI), NAS (CIFS, NFS), and primary and secondary storage in a single unified platform so that all workloads can be hosted on the same storage array. A single process for activities such as installation, provisioning, mirroring, backup, and upgrading is used throughout the entire product line, from the entry level to enterprise-class controllers. Having a single set of software and processes simplifies even the most complex enterprise data management challenges. Unifying storage and data management software and processes streamlines data ownership, enables companies to adapt to their changing business needs without interruption, and reduces total cost of ownership.

This reference architecture focuses on the use case of using FCoE-based storage to solve customers' challenges and meet their needs in the data center. Specifically this entails FCoE boot of Cisco UCS hosts; provisioning of virtual machine datastores by using NFS; and application access through FCoE, iSCSI, CIFS, or NFS, all while using NetApp unified storage.

In a shared infrastructure, the availability and performance of the storage infrastructure are critical because storage outages or performance issues can affect thousands of users. The storage architecture must provide a high level of availability and performance. NetApp and its technology partners have developed a variety of best-practice documents that cover these requirements in detail.

This reference architecture highlights the use of the NetApp FAS3200 product line, specifically the FAS3240-A with an FCoE adapter card, Flash Cache, and SAS storage.

Recommended support documents include:

• NetApp storage systems: www.netapp.com/us/products/storage-systems/
• NetApp FAS3200 storage systems: www.netapp.com/us/products/storage-systems/fas3200/
• NetApp TR-3437: Storage Best Practices and Resiliency Guide
• NetApp TR-3450: Active-Active Controller Overview and Best Practices Guidelines
• NetApp TR-3749: NetApp and VMware vSphere Storage Best Practices
• NetApp TR-3884: FlexPod Solutions Guide
• NetApp TR-3824: MS Exchange 2010 Best Practices Guide

Benefits of NetApp OnCommand Unified Manager Software

NetApp OnCommand management software delivers efficiency savings by unifying storage operations, provisioning, and protection for both physical and virtual resources. The key product benefits that create this value include:

• Simplicity. A single unified approach and a single set of tools to manage both the physical world and the virtual world as you move to a services model to manage your service delivery. This makes NetApp the most effective storage for the virtualized data center. It has a single configuration repository for reporting, event logs, and audit logs.

• Efficiency. Automation and analytics capabilities deliver storage and service efficiency, reducing IT capex and opex spend by up to 50%.

• Flexibility. With tools that let you gain visibility and insight into your complex multiprotocol, multivendor environments and open APIs that let you integrate with third-party orchestration frameworks and hypervisors, OnCommand offers a flexible solution that helps you rapidly respond to changing demands.

OnCommand gives you visibility across your storage environment by continuously monitoring and analyzing its health. You get a view of what is deployed and how it is being used, enabling you to improve your storage capacity utilization and increase the productivity and efficiency of your IT administrators. The unified dashboard also gives at-a-glance status and metrics, making it far more efficient than having to use multiple resource management tools.

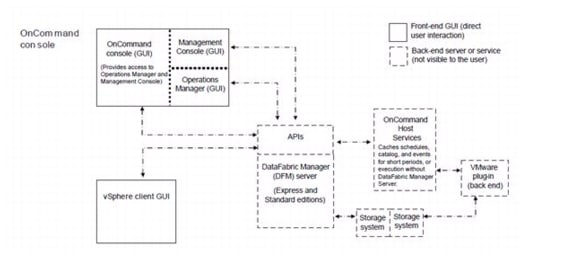

Figure 3 OnCommand Architecture

OnCommand Host Package

You can discover, manage, and protect virtual objects after installing the NetApp OnCommand Host Package software. The components that make up the OnCommand Host Package are:

• OnCommand host service VMware plug-in. A plug-in that receives and processes events in a VMware environment, including discovering, restoring, and backing up virtual objects such as virtual machines and datastores. This plug-in executes the events received from the host service.

• Host service. The host service software includes plug-ins that enable the NetApp DataFabric® Manager server to discover, back up, and restore virtual objects, such as virtual machines and datastores. The host service also enables you to view virtual objects in the OnCommand console. It enables the DataFabric Manager server to forward requests, such as the request for a restore operation, to the appropriate plug-in, and to send the final results of the specified job to that plug-in. When you make changes to the virtual infrastructure, automatic notification is sent from the host service to the DataFabric Manager server. You must register at least one host service with the DataFabric Manager server before you can back up or restore data.

• Host service Windows PowerShell™ cmdlets. Cmdlets that perform virtual object discovery, local restore operations, and host configuration when the DataFabric Manager server is unavailable.

Management tasks performed in the virtual environment by using the OnCommand console include:

• Create a dataset and then add virtual machines or datastores to the dataset for data protection.
• Assign local protection and, optionally, remote protection policies to the dataset.
• View storage details and space details for a virtual object.
• Perform an on-demand backup of a dataset.
• Mount existing backups onto an ESX® server to support tasks such as backup verification, single file restore, and restoration of a virtual machine to an alternate location.
• Restore data from local and remote backups, as well as from backups made before the introduction of OnCommand management software.

Storage Service Catalog

The Storage Service Catalog, a component of OnCommand, is a key NetApp differentiator for service automation. It lets you integrate storage provisioning policies, data protection policies, and storage resource pools into a single service offering that administrators can choose when provisioning storage. This automates much of the provisioning process, and it also automates a variety of storage management tasks associated with the policies.

The Storage Service Catalog provides a layer of abstraction between the storage consumer and the details of the storage configuration, creating "storage as a service." The service levels defined with the Storage Service Catalog automatically specify and map policies to the attributes of your pooled storage infrastructure. This higher level of abstraction between service levels and physical storage lets you eliminate complex, manual work, encapsulating storage and operational processes together for optimal, flexible, dynamic allocation of storage.

The service catalog approach also incorporates the use of open APIs into other management suites, which leads to strong ecosystem integration.
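The abstraction that the Storage Service Catalog provides can be pictured as a lookup from a service level to a bundle of policies and a resource pool. The sketch below is conceptual and does not use any OnCommand API. The service-level names, policy names, pool name, and the `provision` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageService:
    """Hypothetical bundle of what one service level implies."""
    provisioning_policy: str
    protection_policy: str
    resource_pool: str


# Hypothetical catalog: the consumer sees only the service-level names.
CATALOG = {
    "gold": StorageService("thin-nfs", "mirror-and-backup", "pool-a"),
    "bronze": StorageService("thin-nfs", "local-backup-only", "pool-a"),
}


def provision(service_level: str, size_gb: int) -> dict:
    """Expand a service level into the settings a storage request would carry."""
    service = CATALOG[service_level]
    return {
        "size_gb": size_gb,
        "provisioning_policy": service.provisioning_policy,
        "protection_policy": service.protection_policy,
        "resource_pool": service.resource_pool,
    }


request = provision("gold", 500)
```

The consumer chooses only a service level and a size; the mapping to policies and pooled storage stays in the catalog, which is the layer of abstraction the text describes.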

FlexPod Management Solutions

The FlexPod platform provides open APIs for easy integration with a broad range of management tools. NetApp and Cisco work with trusted partners to provide a variety of management solutions. Products designated as Validated FlexPod Management Solutions must pass extensive testing in Cisco and NetApp labs against a broad set of functional and design requirements. Validated solutions for automation and orchestration provide unified, turnkey functionality. Now you can deploy IT services in minutes instead of weeks by reducing complex, multiadministrator processes to repeatable workflows that are easily adaptable. The following list details the current vendors for these solutions.

Note

Some of the following links are available only to partners and customers.

•

CA

http://solutionconnection.netapp.com/CA-Infrastructure-Provisioning-for-FlexPod.aspx

http://www.youtube.com/watch?v=mmkNUvVZY94

•

Cloupia

http://solutionconnection.netapp.com/cloupia-unified-infrastructure-controller.aspx

http://www.cloupia.com/en/flexpodtoclouds/videos/Cloupia-FlexPod-Solution-Overview.html

•

Gale Technologies

http://solutionconnection.netapp.com/galeforce-turnkey-cloud-solution.aspx

http://www.youtube.com/watch?v=ylf81zjfFF0

Products designated as FlexPod Management Solutions have demonstrated the basic ability to interact with all components of the FlexPod platform. Vendors for these solutions currently include BMC Software Business Service Management, Cisco Intelligent Automation for Cloud, DynamicOps, FireScope, Nimsoft, and Zenoss. Recommended documents include:

•

https://solutionconnection.netapp.com/flexpod.aspx

•

http://www.netapp.com/us/communities/tech-ontap/tot-building-a-cloud-on-flexpod-1203.html

Benefits of VMware vSphere with the NetApp Virtual Storage Console

VMware vSphere, coupled with the NetApp Virtual Storage Console (VSC), serves as the foundation for VMware virtualized infrastructures. vSphere 5.0 offers significant enhancements that can be employed to solve real customer problems. Virtualization reduces costs and maximizes IT efficiency, increases application availability and control, and empowers IT organizations with choice. VMware vSphere delivers these benefits as the trusted platform for virtualization as demonstrated by its contingent of more than 300,000 customers worldwide.

VMware vCenter Server is the best way to manage and use the power of virtualization. A vCenter domain manages and provisions resources for all the ESX hosts in the given data center. The ability to license various features in vCenter at differing price points allows customers to choose the package that best serves their infrastructure needs.

The VSC is a vCenter plug-in that provides end-to-end virtual machine (VM) management and awareness for VMware vSphere environments running on top of NetApp storage. The following core capabilities make up the plug-in:

•

Storage and ESXi™ host configuration and monitoring by using Monitoring and Host Configuration

•

Datastore provisioning and VM cloning by using Provisioning and Cloning

•

Backup and recovery of VMs and datastores by using Backup and Recovery

•

Online alignment and single and group migrations of VMs into new or existing VMFS datastores by using Optimization and Migration

Because the VSC is a vCenter plug-in, all vSphere clients that connect to vCenter can access VSC. This availability is different from a client-side plug-in that must be installed on every vSphere client.

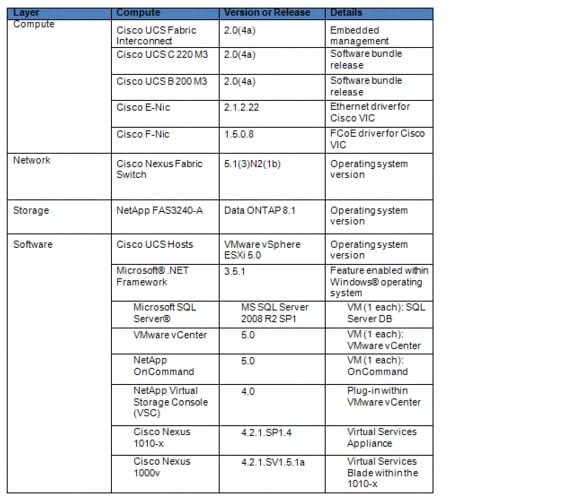

Software Revisions

It is important to note the software versions used in this document. Table 1 details the software revisions used throughout this document.

Table 1 Software Revisions

Configuration Guidelines

This document provides details for configuring a fully redundant, highly available configuration for a FlexPod unit with IP-based storage. Therefore, reference is made to which component is being configured with each step, either A or B. For example, controller A and controller B are used to identify the two NetApp storage controllers that are provisioned with this document, and Nexus A and Nexus B identify the pair of Cisco Nexus switches that are configured. The Cisco UCS fabric interconnects are similarly configured. Additionally, this document details steps for provisioning multiple Cisco UCS hosts, and these are identified sequentially: VM-Host-Infra-01, VM-Host-Infra-02, and so on. Finally, to indicate that you should include information pertinent to your environment in a given step, <text> appears as part of the command structure. See the following example for the vlan create command:

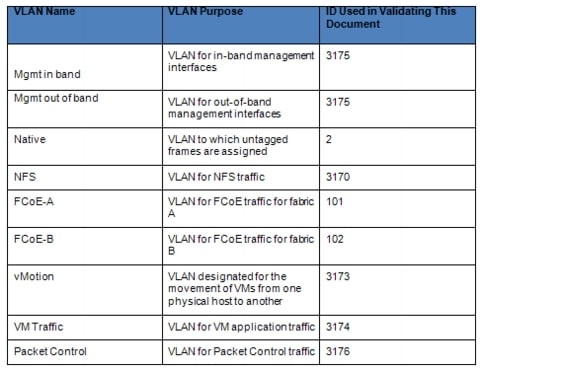

controller A> vlan create

Usage:

vlan create [-g {on|off}] <ifname> <vlanid_list>

vlan add <ifname> <vlanid_list>

vlan delete -q <ifname> [<vlanid_list>]

vlan modify -g {on|off} <ifname>

vlan stat <ifname> [<vlanid_list>]

Example:

controller A> vlan create vif0 <management VLAN ID>

This document is intended to enable you to fully configure the customer environment. In this process, various steps require you to insert customer-specific naming conventions, IP addresses, and VLAN schemes, as well as to record appropriate MAC addresses. Table 2 describes the VLANs necessary for deployment as outlined in this guide. The VM-Mgmt VLAN is used for management interfaces of the VMware vSphere hosts. Table 3 lists the VSANs necessary for deployment as outlined in this guide.

Table 4 lists the configuration variables that are used throughout this document. This table can be completed based on the specific site variables and used in implementing the document configuration steps.
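The placeholder convention described above can be applied mechanically once the site variables have been recorded. The following is a minimal Python sketch, not part of the validated procedure: the helper name render, the dictionary site_variables, and the VLAN ID 3175 are all hypothetical and chosen only for illustration.

```python
import re

# Matches placeholders written as <name>, for example <management VLAN ID>.
PLACEHOLDER = re.compile(r"<([^<>]+)>")


def render(template: str, site: dict[str, str]) -> str:
    """Replace every <name> placeholder with its value from `site`.

    Raises KeyError if the template names a variable that has not been
    recorded, so an incomplete worksheet is caught before a command is run.
    """
    def substitute(match: re.Match) -> str:
        name = match.group(1).strip()
        return str(site[name])

    return PLACEHOLDER.sub(substitute, template)


# Hypothetical site values; replace with the entries from your own worksheet.
site_variables = {"management VLAN ID": "3175"}

command = render("vlan create vif0 <management VLAN ID>", site_variables)
print(command)
```

Failing on an unknown placeholder, rather than leaving it in the command, is a deliberate choice in this sketch: a command that still contains <text> should never reach a controller prompt.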

Note

If you use separate in-band and out-of-band management VLANs, you must create a Layer 3 route between these VLANs. For this validation, a common management VLAN was used.

Table 2 Necessary VLANs

Table 3 Necessary VSANs

Table 4 Configuration Variables

Note

In this document, management IPs and host names must be assigned for the following components:

•

NetApp storage controllers A and B

•

Cisco UCS fabric interconnects A and B and the Cisco UCS cluster

•

Cisco Nexus 5548s A and B

•

VMware ESXi hosts

•

VMware vCenter SQL Server virtual machine

•

VMware vCenter virtual machine

•

NetApp Virtual Storage Console or OnCommand virtual machine

For all host names except the virtual machine host names, the IP addresses must be preconfigured in the local DNS server. Additionally, the NFS IP addresses of the NetApp storage systems are used to monitor the storage systems from OnCommand DataFabric Manager. In this validation, a management host name was assigned to each storage controller (for example, ice3240-1a-m) and provisioned in DNS. A host name was also assigned for each controller in the NFS VLAN (for example, ice3240-1a) and provisioned in DNS. This NFS VLAN host name was then used when the storage system was added to OnCommand DataFabric Manager.

Deployment

This document describes the steps to deploy base infrastructure components as well as to provision VMware vSphere as the foundation for virtualized workloads. When you finish these deployment steps, you will be prepared to provision applications on top of a VMware virtualized infrastructure. The outlined procedure contains the following steps:

•

Initial NetApp controller configuration

•

Initial Cisco UCS configuration

•

Initial Cisco Nexus configuration

•

Creation of necessary VLANs for management, basic functionality, and virtualized infrastructure specific to VMware

•

Creation of necessary VSANs for booting of the Cisco UCS hosts

•

Creation of necessary vPCs to provide high availability among devices

•

Creation of necessary service profile pools: MAC, UUID, server, and so forth

•

Creation of necessary service profile policies: adapter, boot, and so forth

•

Creation of two service profile templates from the created pools and policies: one each for fabric A and B

•

Provisioning of two servers from the created service profiles in preparation for OS installation

•

Initial configuration of the infrastructure components residing on the NetApp controller

•

Installation of VMware vSphere 5.0

•

Installation and configuration of VMware vCenter

•

Enabling of NetApp Virtual Storage Console (VSC)

•

Configuration of NetApp OnCommand

•

Configuration of NetApp vStorage APIs for Storage Awareness (VASA) Provider

The VMware vSphere built on FlexPod architecture is flexible; therefore, the configuration detailed in this section can vary for customer implementations, depending on specific requirements. Although customer implementations can deviate from the following information, the best practices, features, and configurations described in this section should be used as a reference for building a customized VMware vSphere built on FlexPod solution.

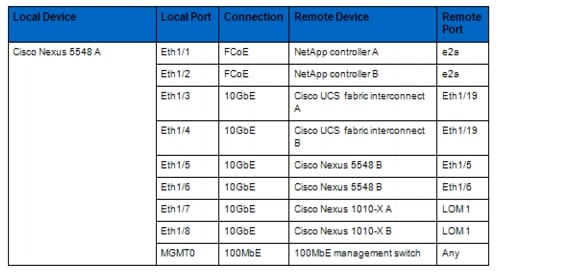

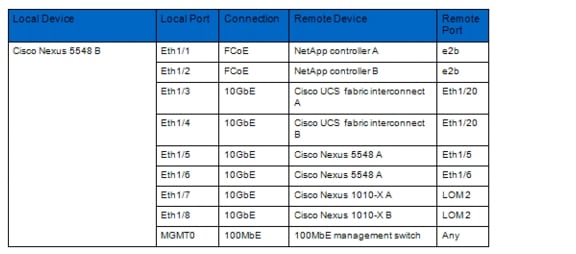

Cabling Information

The information in this section is provided as a reference for cabling the physical equipment in a FlexPod environment. To simplify cabling requirements, the tables include both local and remote device and port locations.

The tables in this section contain details for the prescribed and supported configuration of the NetApp FAS3240-A running Data ONTAP 8.1. This configuration uses a dual-port FCoE adapter, built-in FC ports, and external SAS disk shelves. For any modifications of this prescribed architecture, consult the NetApp Interoperability Matrix Tool (IMT).

This document assumes that out-of-band management ports are plugged into an existing management infrastructure at the deployment site. These interfaces will be used in various configuration steps.

Be sure to follow the cabling directions in this section. Failure to do so will require changes to the deployment procedures that follow, because they reference specific port locations.

It is possible to order a FAS3240-A system in a different configuration from what is prescribed in the tables in this section. Before starting, be sure that the configuration matches the descriptions in the tables and diagrams in this section.

Figure 4 shows a FlexPod cabling diagram. The labels indicate connections to endpoints rather than port numbers on the physical device. For example, connection 1 is an FCoE port connected from NetApp controller A to Cisco Nexus 5548 A. SAS connections 27, 28, 29, and 30 as well as ACP connections 31 and 32 should be connected to the NetApp storage controller and disk shelves according to best practices for the specific storage controller and disk shelf quantity. Additionally, this paper assumes the FCoE adapter is installed in the second PCI slot of the controller. If the FCoE card is not installed in slot 2 but is installed in a slot according to best practices based on the quantity of add-on cards, modify the card name as appropriate.

Note

Cables necessary for FC configuration are optional when implementing FCoE, and are identified by green text. For disk shelf cabling, refer to the Universal SAS and ACP Cabling Guide at https://library.netapp.com/ecm/ecm_get_file/ECMM1280392.

Figure 4 Cabling

Table 5 Cisco Nexus 5548 A Ethernet Cabling Information

Note

For devices requiring GbE connectivity, use the GbE copper SFPs (GLC-T=).

Table 6 Cisco Nexus 5548 B Ethernet Cabling Information

Note

For devices requiring GbE connectivity, use the GbE copper SFPs (GLC-T=).

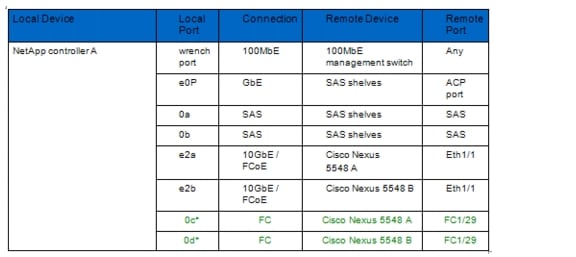

Table 7 NetApp Controller A Cabling Information

Table 8 NetApp Controller B Cabling Information

Note

Connections denoted with * are necessary for FC configuration only.

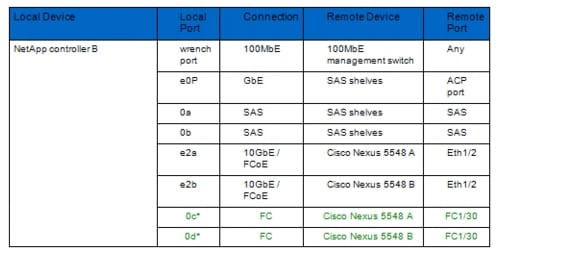

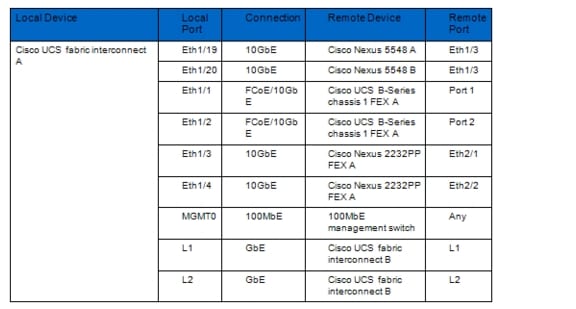

Table 9 Cisco UCS Fabric Interconnect A Ethernet Cabling Information

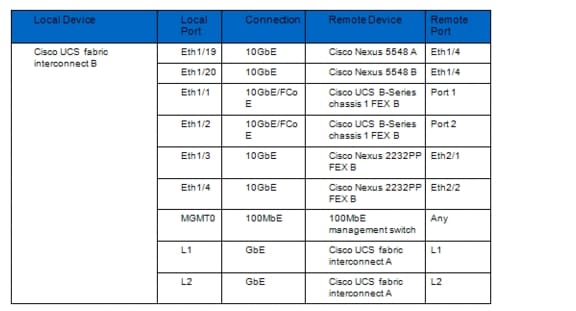

Table 10 Cisco UCS Fabric Interconnect B Ethernet Cabling Information

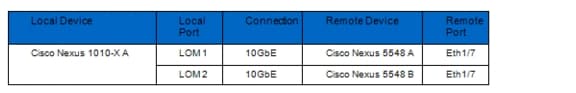

Table 11 Cisco Nexus 1010-X A Ethernet Cabling Information

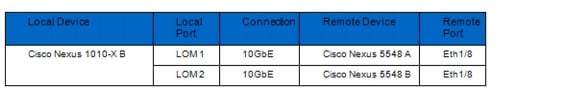

Table 12 Cisco Nexus 1010-X B Ethernet Cabling Information

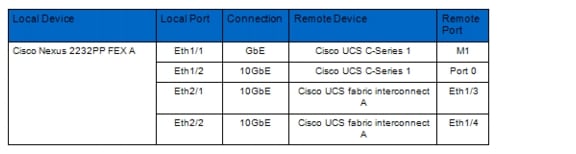

Table 13 Cisco Nexus 2232PP Fabric Extender A (FEX A) Ethernet Cabling Information

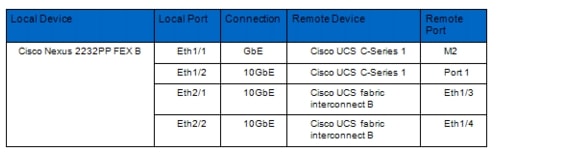

Table 14 Cisco Nexus 2232PP Fabric Extender B (FEX B) Ethernet Cabling Information

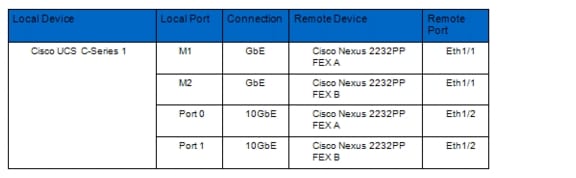

Table 15 Cisco UCS C-Series 1 Ethernet Cabling Information

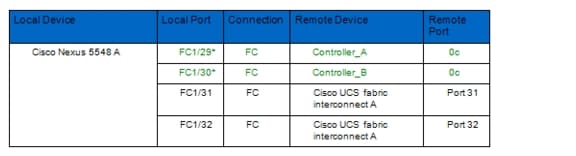

Table 16 Cisco Nexus 5548 A Fibre Channel Cabling Information

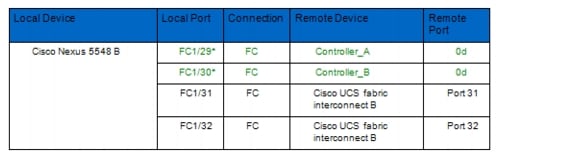

Table 17 Cisco Nexus 5548 B Fibre Channel Cabling Information

Note

Connections denoted with * are necessary for FC configuration only.

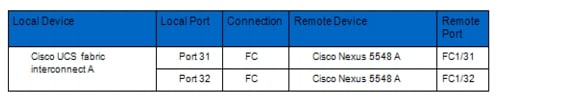

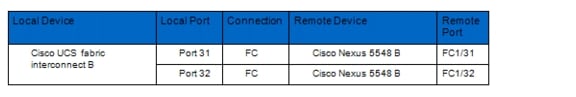

Table 18 Cisco UCS Fabric Interconnect A Fibre Channel Cabling Information

Table 19 Cisco UCS Fabric Interconnect B Fibre Channel Cabling Information

NetApp FAS3240 Deployment Procedure: Part 1

This section provides a detailed procedure for configuring the NetApp FAS3240-A for use in a VMware vSphere built on FlexPod solution. These steps should be followed precisely. Failure to do so could result in an improper configuration.

Note

The configuration steps described in this section provide guidance for configuring the FAS3240-A running Data ONTAP 8.1.

Assign Controller Disk Ownership

These steps provide details for assigning disk ownership and disk initialization and verification.

Note

Typical best practices should be followed when determining the number of disks to assign to each controller head. You may choose to assign a disproportionate number of disks to a given storage controller in an HA pair, depending on the intended workload. In this reference architecture, half the total number of disks in the environment is assigned to one controller and the remainder to its partner. Divide the number of disks in half and use the result in the following command for <# of disks>.
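The even split used in this reference architecture is simple arithmetic. The short Python sketch below shows one way to compute the value for <# of disks>; the function name split_disks is hypothetical, and giving the extra disk to controller B when the total is odd is an assumption of this sketch, not a rule from this guide.

```python
def split_disks(total_disks: int) -> tuple[int, int]:
    """Return (disks for controller A, disks for controller B).

    Controller A receives half of the disks, rounded down; controller B
    receives the remainder. The rounding choice is an assumption.
    """
    if total_disks < 0:
        raise ValueError("total_disks must not be negative")
    controller_a = total_disks // 2
    controller_b = total_disks - controller_a
    return controller_a, controller_b


# Hypothetical example: 48 disks reported by "disk show -a".
disks_a, disks_b = split_disks(48)
print(f"disk assign -n {disks_a}")  # entered on controller A
print(f"disk assign -n {disks_b}")  # entered on controller B
```

If the intended workload calls for an uneven split, substitute the two counts directly instead of computing them this way.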

Controller A

1.

If the controller is at a LOADER-A> prompt, enter autoboot to start Data ONTAP. During controller boot, when prompted for the Boot Menu, press Ctrl-C.

2.

At the menu prompt, select option 5 for Maintenance mode boot.

3.

If prompted with Continue to boot? enter Yes.

4.

Enter ha-config show to verify that the controller and chassis configuration is ha.

Note

If either component is not in HA mode, use the ha-config modify command to put the components in HA mode.

5.

Enter disk show. No disks should be assigned to the controller.

6.

To determine the total number of disks connected to the storage system, enter disk show -a.

7.

Enter disk assign -n <# of disks>.

Note

This reference architecture allocates half the disks to each controller. Workload design could dictate different percentages, however.

8.

Enter halt to halt the controller and exit Maintenance mode.

9.

If the controller stops at a LOADER-A> prompt, enter autoboot to start Data ONTAP.

10.

During controller boot, when prompted, press Ctrl-C.

11.

At the menu prompt, select option 4 for "Clean configuration and initialize all disks."

12.

The installer asks if you want to zero the disks and install a new file system. Enter y.

13.

A warning is displayed that this will erase all of the data on the disks. Enter y to confirm that this is what you want to do.

Note

The initialization and creation of the root volume can take 75 minutes or more to complete, depending on the number of disks attached. When initialization is complete, the storage system reboots.

Controller B

1.

If the controller is at a LOADER-B> prompt, enter autoboot to start Data ONTAP. During controller boot, when prompted, press Ctrl-C for the special boot menu.

Note

If you are using two controllers in separate chassis, the prompt will be LOADER-A>.

2.

At the menu prompt, select option 5 for Maintenance mode boot.

3.

If prompted with Continue to boot? enter Yes.

4.

Enter ha-config show to verify that the controller and chassis configuration is ha.

Note

If either component is not in HA mode, use the ha-config modify command to put the components in HA mode.

5.

Enter disk show. No disks should be assigned to this controller.

6.

To determine the total number of disks connected to the storage system, enter disk show -a. This will now show the number of remaining unassigned disks connected to the controller.

7.

Enter disk assign -n <# of disks> to assign the remaining disks to the controller.

8.

Enter halt to halt the controller and exit Maintenance mode.

9.

If the controller stops at a LOADER-B> prompt, enter autoboot to start Data ONTAP.

10.

During controller boot, when prompted, press Ctrl-C for the boot menu.

11.

At the menu prompt, select option 4 for "Clean configuration and initialize all disks."

12.

The installer asks if you want to zero the disks and install a new file system. Enter y.

13.

A warning is displayed that this will erase all of the data on the disks. Enter y to confirm that this is what you want to do.

Note

The initialization and creation of the root volume can take 75 minutes or more to complete, depending on the number of disks attached. When initialization is complete, the storage system reboots.

Set Up Data ONTAP 8.1

These steps provide details for setting up Data ONTAP 8.1.

Controller A and Controller B

1.

After the disk initialization and the creation of the root volume, Data ONTAP setup begins.

2.

Enter the host name of the storage system.

3.

Enter n for enabling IPv6.

4.

Enter y for configuring interface groups.

5.

Enter 1 for the number of interface groups to configure.

6.

Name the interface vif0.

7.

Enter l to specify the interface as LACP.

8.

Enter i to specify IP load balancing.

9.

Enter 2 for the number of links for vif0.

10.

Enter e2a for the name of the first link.

11.

Enter e2b for the name of the second link.

12.

Press Enter to accept the blank IP address for vif0.

13.

Enter n for interface group vif0 taking over a partner interface.

14.

Press Enter to accept the blank IP address for e0a.

15.

Enter n for interface e0a taking over a partner interface.

16.

Press Enter to accept the blank IP address for e0b.

17.

Enter n for interface e0b taking over a partner interface.

18.

Enter the IP address of the out-of-band management interface, e0M.

19.

Enter the net mask for e0M.

20.

Enter y for interface e0M taking over a partner IP address during failover.

21.

Enter e0M for the name of the interface to be taken over.

22.

Press Enter to accept the flow control as full.

23.

Enter n to decline continuing setup through the Web interface.

24.

Enter the IP address for the default gateway for the storage system.

25.

Enter the IP address for the administration host.

26.

Enter the local time zone (such as PST, MST, CST, or EST, or a Linux® time zone format; for example, America/New_York).

27.

Enter the location for the storage system.

28.

Press Enter to accept the default root directory for HTTP files [/home/http].

29.

Enter y to enable DNS resolution.

30.

Enter the DNS domain name.

31.

Enter the IP address for the first name server.

32.

Enter n to finish entering DNS servers, or enter y to add up to two more DNS servers.

33.

Enter n for running the NIS client.

34.

Press Enter to acknowledge the AutoSupport™ message.

35.

Enter y to configure the SP LAN interface.

36.

Enter n for setting up DHCP on the SP LAN interface.

37.

Enter the IP address for the SP LAN interface.

38.

Enter the net mask for the SP LAN interface.

39.

Enter the IP address for the default gateway for the SP LAN interface.

40.

Enter the fully qualified domain name for the mail host to receive SP messages and AutoSupport.

41.

Enter the IP address for the mail host to receive SP messages and AutoSupport.

Note

If you make a mistake during setup, press Ctrl+C to get a command prompt. Enter setup and run the setup script again. Alternatively, you can complete the setup script and at the end enter setup to redo the setup script. If you redo the setup script, you must use the passwd command at the end of setup to input the administrative password. You will not be automatically prompted to do so. At the end of the setup script, the storage system must be rebooted for changes to take effect.

42.

Enter the new administrative (root) password.

43.

Enter the new administrative (root) password again to confirm.

44.

Log in to the storage system with the new administrative password.

Install Data ONTAP to Onboard Flash Storage

The following steps describe installing Data ONTAP to the onboard flash storage.

Controller A and Controller B

1.

To install the Data ONTAP image to the onboard flash device, enter software install and indicate the HTTP or HTTPS Web address of the NetApp Data ONTAP 8.1 flash image; for example, http://192.168.175.5/81_q_image.tgz.

2.

Enter download and press Enter to download the software to the flash device.

Harden Storage System Logins and Security

The following steps describe hardening the storage system logins and security.

Controller A and Controller B

1.

Enter secureadmin disable ssh.

2.

Enter secureadmin setup -f ssh to enable SSH on the storage controller.

3.

If prompted, enter yes to rerun SSH setup.

4.

Accept the default values for SSH1.x protocol.

5.

Enter 1024 for SSH2 protocol.

6.

If the information specified is correct, enter yes to create the SSH keys.

7.

Enter options telnet.enable off to disable telnet on the storage controller.

8.

Enter secureadmin setup ssl to enable SSL on the storage controller.

9.

If prompted, enter yes to rerun SSL setup.

10.

Enter the country name code, state or province name, locality name, organization name, and organization unit name.

11.

Enter the fully qualified domain name of the storage system.

12.

Enter the administrator's e-mail address.

13.

Accept the default for days until the certificate expires.

14.

Enter 1024 for the SSL key length.

15.

Enter options httpd.admin.enable off to disable HTTP access to the storage system.

16.

Enter options httpd.admin.ssl.enable on to enable secure access to the storage system.

Install the Required Licenses

The following steps provide details about storage licenses that are used to enable features in this reference architecture. A variety of licenses come installed with the Data ONTAP 8.1 software.

Note

The following licenses are needed to deploy this reference architecture:

•

cluster (cf): To configure storage controllers into an HA pair

•

FCP: To enable the FCP protocol

•

nfs: To enable the NFS protocol

•

flex_clone: To enable the provisioning of NetApp FlexClone® volumes and files

Controller A and Controller B

1.

Enter license add <necessary licenses> to add licenses to the storage system.

2.

Enter license to double-check the installed licenses.

3.

Enter reboot to reboot the storage controller.

4.

Log back in to the storage controller with the root password.

Enable Licensed Features

The following steps provide details for enabling licensed features.

Controller A and Controller B

1.

Enter options licensed_feature.multistore.enable on.

2.

Enter options licensed_feature.nearstore_option.enable on.

Enable Active-Active Controller Configuration Between Two Storage Systems

This step provides details for enabling active-active controller configuration between the two storage systems.

Controller A Only

1.

Enter cf enable and press Enter to enable active-active controller configuration.

Start FCP

This step provides details for enabling the FCP protocol.

Controller A and Controller B

1.

Enter fcp start.

2.

Record the WWPN or FC port name for later use by typing fcp show adapters.

Note

If using FC instead of FCoE between storage and the network and there are no available target ports, reconfiguration is necessary.

3.

Type fcadmin config.

Note

Only FC ports that are configured as targets can be used to connect to initiator hosts on the SAN.

4.

Type fcadmin config -t target 0c.

5.

Type fcadmin config -t target 0d.

Note

If an initiator port is made into a target port, a reboot is required. NetApp recommends rebooting after completing the entire configuration because other configuration steps might also require a reboot.

Set Up Storage System NTP Time Synchronization and CDP Enablement

The following steps provide details for setting up storage system NTP time synchronization and enabling Cisco Discovery Protocol (CDP).

Controller A and Controller B

1.

To set the storage system time to the actual time, enter date CCyymmddhhmm, where CCyy is the four-digit year, the first mm is the two-digit month, dd is the two-digit day of the month, hh is the two-digit hour, and the second mm is the two-digit minute.

2.

Enter options timed.proto ntp to synchronize with an NTP server.

3.

Enter options timed.servers <NTP server IP> to add the NTP server to the storage system list.

4.

Enter options timed.enable on to enable NTP synchronization on the storage system.

5.

Enter options cdpd.enable on.
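The CCyymmddhhmm argument is easy to mistype, so it can help to generate it. The following Python sketch builds the five commands from the steps above in order; the function name time_sync_commands, the example timestamp, and the NTP server address 192.0.2.10 (from a documentation address range) are hypothetical.

```python
from datetime import datetime


def time_sync_commands(now: datetime, ntp_server: str) -> list[str]:
    """Return the time-setup commands in the order the steps list them."""
    # CCyymmddhhmm: four-digit year, month, day, hour, minute.
    stamp = now.strftime("%Y%m%d%H%M")
    return [
        f"date {stamp}",
        "options timed.proto ntp",
        f"options timed.servers {ntp_server}",
        "options timed.enable on",
        "options cdpd.enable on",
    ]


# Hypothetical values: 15 August 2012 at 14:30 and a placeholder NTP server.
for line in time_sync_commands(datetime(2012, 8, 15, 14, 30), "192.0.2.10"):
    print(line)
```

The sketch only formats text; the commands are still entered on each controller as described in the steps.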

Create Data Aggregate aggr1

This step provides details for creating the data aggregate aggr1.

Note

In most cases, the following command finishes quickly, but depending on the state of each disk, it might be necessary to zero some or all of the disks in order to add them to the aggregate. This could take up to 60 minutes to complete.

Controller A

1.

Enter aggr create aggr1 -B 64 <# of disks for aggr1> to create aggr1 on the storage controller.

Controller B

1.

Enter aggr create aggr1 -B 64 <# of disks for aggr1> to create aggr1 on the storage controller.

Create an SNMP Requests Role and Assign SNMP Login Privileges

This step provides details for creating the SNMP request role and for assigning SNMP login privileges to it.

Controller A and Controller B

1.

Run the following command: useradmin role add <ntap SNMP request role> -a login-snmp.

Create an SNMP Management Group and Assign an SNMP Request Role

This step provides details for creating an SNMP management group and assigning an SNMP request role to it.

Controller A and Controller B

1.

Run the following command: useradmin group add <ntap SNMP managers> -r <ntap SNMP request role>.

Create an SNMP User and Assign It to an SNMP Management Group

This step provides details for creating an SNMP user and assigning it to an SNMP management group.

Controller A and Controller B

1.

Run the following command: useradmin user add <ntap SNMP user> -g <ntap SNMP managers>.

Note

After the user is created, the system prompts for a password. Enter the SNMP password.
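The three preceding sections depend on one another: the role must exist before the group that references it, and the group before the user. As an illustration only, the Python sketch below emits the commands in that order; the function name and the role, group, and user names are hypothetical stand-ins for the <ntap ...> site variables.

```python
def snmp_account_commands(role: str, group: str, user: str) -> list[str]:
    """Return the useradmin commands in dependency order: role, group, user."""
    return [
        f"useradmin role add {role} -a login-snmp",
        f"useradmin group add {group} -r {role}",
        f"useradmin user add {user} -g {group}",
    ]


# Hypothetical names; use the values recorded for your site.
for line in snmp_account_commands("snmp_requests", "snmp_managers", "snmp_user"):
    print(line)
```

The password prompt that follows the last command is interactive and is not covered by this sketch.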

Set Up SNMP v1 Communities on Storage Controllers

These steps provide details for setting up SNMP v1 communities on the storage controllers so that OnCommand System Manager can be used.

Controller A and Controller B

1.

Run the following command: snmp community delete all.

2.

Run the following command: snmp community add ro <ntap SNMP community>.

Set Up SNMP Contact Information for Each Storage Controller

This step provides details for setting SNMP contact information for each of the storage controllers.

Controller A and Controller B

1.

Run the following command: snmp contact <ntap admin email address>.

Set SNMP Location Information for Each Storage Controller

This step provides details for setting SNMP location information for each of the storage controllers.

Controller A and Controller B

1.

Run the following command: snmp location <ntap SNMP site name>.

Reinitialize SNMP on Storage Controllers

This step provides details for reinitializing SNMP on the storage controllers.

Controller A and Controller B

1.

Run the following command: snmp init 1.

Initialize NDMP on the Storage Controllers

This step provides details for initializing NDMP.

Controller A and Controller B

1.

Run the following command: ndmpd on.

Enable Flash Cache

This step provides details for enabling the NetApp Flash Cache module, if installed.

Controller A and Controller B

1.

Enter the following command to enable Flash Cache on each controller: options flexscale.enable on.

Add VLAN Interfaces

The following steps provide details for adding VLAN interfaces on the storage controllers.

Controller A

1.

Run the following command: vlan create vif0 <NFS VLAN ID>.

2.

Run the following command: wrfile -a /etc/rc vlan create vif0 <NFS VLAN ID>.

3.

Run the following command: ifconfig vif0-<NFS VLAN ID> <Controller A NFS IP> netmask <NFS Netmask> mtusize 9000 partner vif0-<NFS VLAN ID>.

4.

Run the following command: wrfile -a /etc/rc ifconfig vif0-<NFS VLAN ID> <Controller A NFS IP> netmask <NFS Netmask> mtusize 9000 partner vif0-<NFS VLAN ID>.

5.

Run the following command to verify additions to the /etc/rc file: rdfile /etc/rc.

Controller B

1.

Run the following command: vlan create vif0 <NFS VLAN ID>.

2.

Run the following command: wrfile -a /etc/rc vlan create vif0 <NFS VLAN ID>.

3.

Run the following command: ifconfig vif0-<NFS VLAN ID> <Controller B NFS IP> netmask <NFS Netmask> mtusize 9000 partner vif0-<NFS VLAN ID>.

4.

Run the following command: wrfile -a /etc/rc ifconfig vif0-<NFS VLAN ID> <Controller B NFS IP> netmask <NFS Netmask> mtusize 9000 partner vif0-<NFS VLAN ID>.

5.

Run the following command to verify additions to the /etc/rc file: rdfile /etc/rc.
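Each controller runs the same commands with only the NFS IP address changed, and every live command is paired with a wrfile -a /etc/rc line so that the setting persists across reboots. The Python sketch below generates that pairing from one set of inputs. The function name and the example VLAN ID, addresses, and netmask are hypothetical.

```python
def vlan_interface_commands(vlan_id: int, nfs_ip: str, netmask: str,
                            ifgrp: str = "vif0") -> list[str]:
    """Return the VLAN interface commands for one controller, in step order."""
    vlan_create = f"vlan create {ifgrp} {vlan_id}"
    vlan_if = f"{ifgrp}-{vlan_id}"  # name of the tagged interface, e.g. vif0-3170
    ifconfig = (f"ifconfig {vlan_if} {nfs_ip} netmask {netmask} "
                f"mtusize 9000 partner {vlan_if}")
    return [
        vlan_create,
        f"wrfile -a /etc/rc {vlan_create}",  # persist the VLAN creation
        ifconfig,
        f"wrfile -a /etc/rc {ifconfig}",     # persist the interface settings
        "rdfile /etc/rc",                    # verify the additions
    ]


# Hypothetical values for controller A and controller B.
for ip in ("192.168.170.11", "192.168.170.12"):
    print("\n".join(vlan_interface_commands(3170, ip, "255.255.255.0")))
```

Deriving the persisted line from the live command keeps the two from drifting apart, which is the main reason for generating them together.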

Add Infrastructure Volumes

The following steps describe adding volumes on the storage controller for SAN boot of the Cisco UCS hosts as well as virtual machine provisioning.

Note

In this reference architecture, controller A houses the boot LUNs for the VMware hypervisor in addition to the swap files, while controller B houses the first datastore for virtual machines.

Controller A

1.

Run the following command: vol create esxi_boot -s none aggr1 100g.

2.

Run the following command: sis on /vol/esxi_boot.

3.

Run the following command: vol create infra_swap -s none aggr1 100g.

4.

Run the following command: snap sched infra_swap 0 0 0.

5.

Run the following command: snap reserve infra_swap 0.

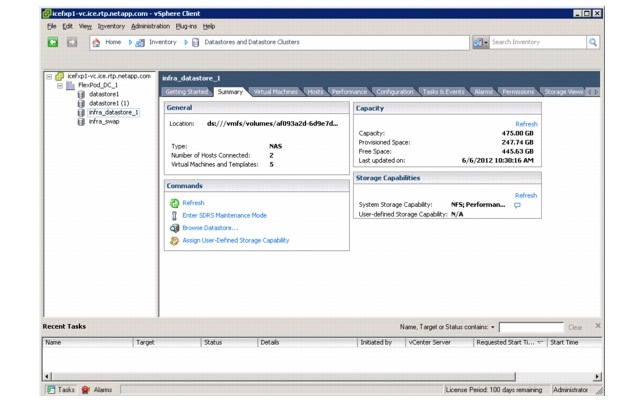

Controller B

1.

Run the following command: vol create infra_datastore_1 -s none aggr1 500g.

2.

Run the following command: sis on /vol/infra_datastore_1.
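The volume layout above can also be written down as data, which makes the difference between the volumes explicit: esxi_boot and infra_datastore_1 have deduplication turned on, while infra_swap has its Snapshot schedule and reserve set to zero. The Python sketch below is one way to express this; the Volume class and the volume_commands function are hypothetical helpers, while the volume names, sizes, and commands are taken from the steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Volume:
    name: str
    size: str
    dedupe: bool = False     # run "sis on" for the volume
    snapshots: bool = True   # False: zero the Snapshot schedule and reserve


def volume_commands(vol: Volume, aggregate: str = "aggr1") -> list[str]:
    """Return the commands that create and tune one infrastructure volume."""
    cmds = [f"vol create {vol.name} -s none {aggregate} {vol.size}"]
    if vol.dedupe:
        cmds.append(f"sis on /vol/{vol.name}")
    if not vol.snapshots:
        cmds.append(f"snap sched {vol.name} 0 0 0")
        cmds.append(f"snap reserve {vol.name} 0")
    return cmds


# Layout from this reference architecture, keyed by controller.
layout = {
    "Controller A": [Volume("esxi_boot", "100g", dedupe=True),
                     Volume("infra_swap", "100g", snapshots=False)],
    "Controller B": [Volume("infra_datastore_1", "500g", dedupe=True)],
}

for controller, volumes in layout.items():
    print(controller)
    for vol in volumes:
        print("\n".join(volume_commands(vol)))
```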

Export NFS Infrastructure Volumes to ESXi Servers

These steps provide details for setting up NFS exports of the infrastructure volumes to the VMware ESXi servers.

Controller A

1.

Run the following command: exportfs -p rw=<ESXi Host 1 NFS IP>:<ESXi Host 2 NFS IP>,root=<ESXi Host 1 NFS IP>:<ESXi Host 2 NFS IP>,nosuid /vol/infra_swap.

2.

Run the following command: exportfs. Verify that the NFS exports are set up correctly.

Controller B

1.

Run the following command: exportfs -p rw=<ESXi Host 1 NFS IP>:<ESXi Host 2 NFS IP>,root=<ESXi Host 1 NFS IP>:<ESXi Host 2 NFS IP>,nosuid /vol/infra_datastore_1.

2.

Run the following command: exportfs. Verify that the NFS exports are set up correctly.
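In the export commands above, the same colon-separated list of ESXi NFS addresses appears in both the rw= and root= options. The Python sketch below builds the command from a list of hosts so that the two lists cannot diverge; the function name and the example addresses are hypothetical.

```python
def exportfs_command(volume: str, esxi_nfs_ips: list[str]) -> str:
    """Build the persistent export command for one infrastructure volume."""
    if not esxi_nfs_ips:
        raise ValueError("at least one ESXi NFS IP address is required")
    hosts = ":".join(esxi_nfs_ips)  # host lists are colon-separated
    return f"exportfs -p rw={hosts},root={hosts},nosuid /vol/{volume}"


# Hypothetical NFS addresses for the two ESXi hosts.
esxi_hosts = ["192.168.170.21", "192.168.170.22"]
print(exportfs_command("infra_swap", esxi_hosts))         # controller A
print(exportfs_command("infra_datastore_1", esxi_hosts))  # controller B
```

Adding a third host later then only requires extending the list and rerunning the export on each controller.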

Cisco Unified Computing System Deployment Procedure

The following section provides a detailed procedure for configuring the Cisco Unified Computing System for use in a FlexPod environment. These steps should be followed precisely because a failure to do so could result in an improper configuration.

Note

The following sections document the steps necessary to provision Cisco UCS C-Series and B-Series servers as part of a FlexPod environment.

Perform Initial Setup of the Cisco UCS C-Series Blade Servers

These steps provide details for the initial setup of Cisco UCS C-Series M2 servers, which brings the server BIOS and CIMC software to release 1.4.3c or later. This procedure is not necessary for Cisco UCS C-Series M3 servers because they ship with a minimum of 1.4.3c firmware. It is also not necessary for Cisco UCS C-Series M2 servers that are already at release 1.4.3c or later. It is important to get the systems to a known state with the appropriate firmware package so that they can be discovered by Cisco UCS Manager.

Note

If the Cisco UCS C-Series servers are not part of the architecture to be deployed, this section may be skipped.

All Cisco UCS C-Series M2 Servers

1.

From a system connected to the Internet, download the latest Cisco UCS Host Upgrade Utility release for your C-Series servers from www.cisco.com. Navigate to Downloads Home > Products > Unified Computing and Servers > Cisco UCS C-Series Rack-Mount Standalone Server Software.

2.

After downloading the Host Upgrade Utility package, write the contents to a recordable CD or DVD.

3.

Connect a monitor and keyboard to the front console port of the server, power on the server, and insert the CD or DVD into the C-Series server.

4.

Monitor the system as it proceeds through Power On Self Test (POST).

5.

Press F8 to enter the CIMC Configuration Utility.

6.

Upon entering the CIMC Configuration Utility, select the box to return the CIMC to its factory defaults.

7.

Press F10 to save.

8.

Press F10 to confirm the configuration. The system resets the CIMC to its factory defaults and reboots automatically.

9.

Monitor the system as it proceeds through POST.

10.

Press F6 to enter the Boot Selection Menu.

11.

Select the SATA DVD Drive when prompted and the server will boot into the upgrade utility.

12.

Press Y in the C-Series Host Based Upgrade utility screen to acknowledge the Cisco EULA.

13.

Select option 8 to upgrade all of the upgradeable items installed.

14.

The system then begins updating the various components, a process that can take 10 to 15 minutes.

15.

If the system reports that the current version of the LOM is equal to the upgrade version and asks whether to continue, enter Y.

16.

Press any key to acknowledge completion of the updates.

17.

Select option 10 to reboot the server.

18.

Eject the upgrade media from the server.
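
Whether this procedure is needed depends on comparing the installed BIOS and CIMC level against 1.4.3c. The following is a minimal sketch of that comparison, assuming version strings in the dotted form used in this section (for example, 1.4.3c). The function names are hypothetical and are not part of any Cisco tool.

```python
import re

MINIMUM_CIMC_VERSION = "1.4.3c"  # minimum level named in this section


def parse_version(text):
    """Split a version such as '1.4.3c' into (1, 4, 3, 'c') for comparison."""
    match = re.fullmatch(r"(\d+)\.(\d+)\.(\d+)([a-z]*)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    major, minor, patch, suffix = match.groups()
    return int(major), int(minor), int(patch), suffix


def needs_upgrade(installed, minimum=MINIMUM_CIMC_VERSION):
    """Return True when the installed version is below the minimum."""
    # Assumption: a missing or alphabetically earlier letter suffix is older.
    return parse_version(installed) < parse_version(minimum)
```

A server for which `needs_upgrade` returns False would skip the Host Upgrade Utility steps above. The letter-suffix ordering is an assumption of this sketch and should be checked against the release notes.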

Perform Initial Setup of the Cisco UCS 6248 Fabric Interconnects

These steps provide details for initial setup of the Cisco UCS 6248 fabric interconnects.

Cisco UCS 6248 A

1.

Connect to the console port on the first Cisco UCS 6248 fabric interconnect.

2.

At the prompt to enter the configuration method, enter console to continue.

3.

If asked to either do a new setup or restore from backup, enter setup to continue.

4.

Enter y to continue to set up a new fabric interconnect.

5.

Enter y to enforce strong passwords.

6.

Enter the password for the admin user.

7.

Enter the same password again to confirm the password for the admin user.

8.

When asked if this fabric interconnect is part of a cluster, answer y to continue.

9.

Enter A for the switch fabric.

10.

Enter the cluster name for the system name.

11.

Enter the Mgmt0 IPv4 address.

12.

Enter the Mgmt0 IPv4 netmask.

13.

Enter the IPv4 address of the default gateway.

14.

Enter the cluster IPv4 address.

15.

To configure DNS, answer y.

16.

Enter the DNS IPv4 address.

17.

Answer y to set up the default domain name.

18.

Enter the default domain name.

19.

Review the settings that were printed to the console, and if they are correct, answer yes to save the configuration.

20.

Wait for the login prompt to make sure the configuration has been saved.

Cisco UCS 6248 B

1.

Connect to the console port on the second Cisco UCS 6248 fabric interconnect.

2.

When prompted to enter the configuration method, enter console to continue.

3.

The installer detects the presence of the partner fabric interconnect and adds this fabric interconnect to the cluster. Enter y to continue the installation.

4.

Enter the admin password for the first fabric interconnect.

5.

Enter the Mgmt0 IPv4 address.

6.

Answer yes to save the configuration.

7.

Wait for the login prompt to confirm that the configuration has been saved.
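
Before starting the console dialogs above, it can help to check the planned addressing. The following is a minimal sketch, assuming that the cluster address and both Mgmt0 addresses must sit in the subnet defined by the gateway and netmask. The function name and the sample addresses are hypothetical.

```python
import ipaddress


def check_management_addresses(netmask, gateway, cluster_ip, mgmt0_a, mgmt0_b):
    """Return a list of problems found in the planned addressing (empty if none)."""
    # Derive the management subnet from the gateway address and netmask.
    network = ipaddress.ip_network(f"{gateway}/{netmask}", strict=False)
    planned = {
        "cluster": cluster_ip,
        "Mgmt0 A": mgmt0_a,
        "Mgmt0 B": mgmt0_b,
    }
    problems = []
    for label, address in planned.items():
        if ipaddress.ip_address(address) not in network:
            problems.append(f"{label} address {address} is outside {network}")
    # The three planned addresses and the gateway must all be distinct.
    values = list(planned.values()) + [gateway]
    if len(set(values)) != len(values):
        problems.append("duplicate address in plan")
    return problems
```

An empty list means the plan is consistent under these assumptions. For example, `check_management_addresses("255.255.255.0", "192.0.2.1", "192.0.2.10", "192.0.2.11", "192.0.2.12")` reports no problems.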

Log into Cisco UCS Manager

Cisco UCS Manager

These steps provide details for logging into the Cisco UCS environment.

1.

Open a Web browser and navigate to the Cisco UCS 6248 fabric interconnect cluster address.

2.

Select the Launch UCS Manager link to download the Cisco UCS Manager software.

3.

If prompted to accept security certificates, accept as necessary.

4.

When prompted, enter admin for the username, enter the administrative password, and click Login to log in to the Cisco UCS Manager software.

Upgrade the Cisco UCS Manager Software to Version 2.0(4a)

This document assumes the use of the Cisco UCS Manager 2.0(4a). Refer to Upgrading Between Cisco UCS 2.0 Releases to upgrade the Cisco UCS Manager software and UCS 6248 Fabric Interconnect software to version 2.0(4a). Do not load the Cisco UCS C-Series version 2.0(4a) software bundle on the Fabric Interconnects.

Add a Block of IP Addresses for KVM Access

These steps provide details for creating a block of KVM IP addresses for server access in the Cisco UCS environment. This block of IP addresses should be in the same subnet as the management IP addresses for the Cisco UCS Manager.

Cisco UCS Manager

1.

Select the Admin tab at the top of the left window.

2.

Select All > Communication Management.

3.

Right-click Management IP Pool.

4.

Select Create Block of IP Addresses.

5.

Enter the starting IP address of the block and number of IPs needed as well as the subnet and gateway information.

6.

Click OK to create the IP block.

7.

Click OK in the message box.
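
The block entered in step 5 is defined by a starting address and a count, and it should fall inside the management subnet. The following is a minimal sketch of that check. The function name and the sample addresses are hypothetical, and the exclusion of the network, broadcast, and gateway addresses is an assumption of this sketch.

```python
import ipaddress


def kvm_block(first_ip, size, netmask, gateway):
    """Return the addresses in the block, or raise ValueError if it does not fit."""
    network = ipaddress.ip_network(f"{gateway}/{netmask}", strict=False)
    start = ipaddress.ip_address(first_ip)
    # The block is the starting address plus the next (size - 1) addresses.
    block = [start + offset for offset in range(size)]
    for address in block:
        if address not in network:
            raise ValueError(f"{address} is outside the management subnet {network}")
        if address in (network.network_address, network.broadcast_address):
            raise ValueError(f"{address} is not a usable host address")
        if address == ipaddress.ip_address(gateway):
            raise ValueError(f"{address} collides with the gateway")
    return block
```

Calling `kvm_block("192.0.2.50", 4, "255.255.255.0", "192.0.2.1")` returns the four addresses from 192.0.2.50 through 192.0.2.53, which could then be entered in the Create Block of IP Addresses dialog.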

Synchronize Cisco Unified Computing System to NTP

These steps provide details for synchronizing the Cisco Unified Computing System environment to the NTP server.

Cisco UCS Manager

1.

Select the Admin tab at the top of the left window.

2.

Select All > Timezone Management.

3.

In the right pane, select the appropriate timezone in the Timezone drop-down menu.

4.

Click Save Changes and then OK.

5.

Click Add NTP Server.

6.

Input the NTP server IP and click OK.

7.

Click OK.

Configure Unified Ports

These steps provide details for modifying an unconfigured Ethernet port into an FC uplink port in the Cisco UCS environment.

Note

Modification of the unified ports leads to a reboot of the fabric interconnect in question. This reboot can take up to 10 minutes.

Cisco UCS Manager

1.

Navigate to the Equipment tab in the left pane.

2.

Select Fabric Interconnect A.

3.

In the right pane, click the General tab.

4.

Select Configure Unified Ports.

5.

Select Yes to launch the wizard.

6.

Use the slider tool and move one position to the left to configure the last two ports (31 and 32) as FC uplink ports.

7.

Ports 31 and 32 now display the "B" indicator, which shows that they have been reconfigured as FC uplink ports.

8.

Click Finish.

9.

Click OK.

10.

The Cisco UCS Manager GUI will close as the primary fabric interconnect reboots.

11.

Upon successful reboot, open a Web browser, navigate to the Cisco UCS 6248 fabric interconnect cluster address, and launch the Cisco UCS Manager.

12.

When prompted, enter admin for the username, enter the administrative password, and click Login to log in to the Cisco UCS Manager software.

13.

Navigate to the Equipment tab in the left pane.

14.

Select Fabric Interconnect B.

15.

In the right pane, click the General tab.

16.

Select Configure Unified Ports.

17.

Select Yes to launch the wizard.

18.

Use the slider tool and move one position to the left to configure the last two ports (31 and 32) as FC uplink ports.

19.

Ports 31 and 32 now display the "B" indicator, which shows that they have been reconfigured as FC uplink ports.

20.

Click Finish.

21.

Click OK.
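
The slider behavior described in the steps above can be sketched as a small calculation. This is only an illustration, assuming a fixed module of 32 ports (inferred from ports 31 and 32 being the last two) and that each slider position converts two ports starting from the highest-numbered end. The function name and the upper bound on the slider are hypothetical.

```python
FIXED_MODULE_PORTS = 32  # assumed port count of the fixed module
PORTS_PER_POSITION = 2   # one slider position converts two ports, per the steps above


def fc_ports(positions_left):
    """Return the port numbers that become FC after moving the slider left."""
    # Assumption: the slider may move as far as converting every port.
    if not 0 <= positions_left <= FIXED_MODULE_PORTS // PORTS_PER_POSITION:
        raise ValueError("slider position out of range")
    first_fc = FIXED_MODULE_PORTS - positions_left * PORTS_PER_POSITION + 1
    return list(range(first_fc, FIXED_MODULE_PORTS + 1))
```

With one position, as in this procedure, `fc_ports(1)` yields ports 31 and 32. The remaining lower-numbered ports stay Ethernet.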

Edit the Chassis Discovery Policy

These steps provide details for modifying the chassis discovery policy. Setting the discovery policy will simplify the addition of B-Series UCS Chassis and additional fabric extenders for further C-Series connectivity.

Cisco UCS Manager

1.

Navigate to the Equipment tab in the left pane and select Equipment in the list on the left.

2.

In the right pane, click the Policies tab.

3.

Under Global Policies, change the Chassis Discovery Policy to 2-link, or to the value that matches the number of uplink ports cabled between each chassis or FEX and the fabric interconnects.

4.

Leave the Link Grouping Preference set to None.

5.

Click Save Changes in the bottom right corner.

6.

Click OK.

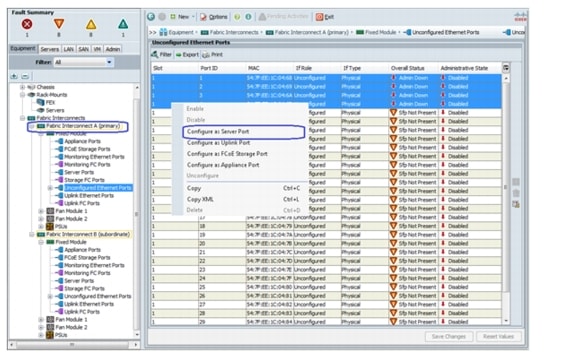

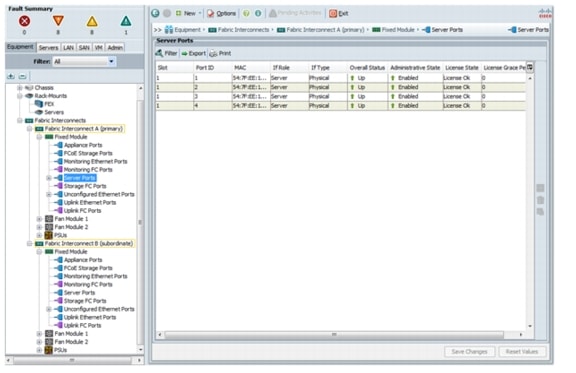

Enable Server and Uplink Ports

These steps provide details for enabling server and uplink ports.

Cisco UCS Manager

1.

Select the Equipment tab on the top left of the window.

2.

Select Equipment > Fabric Interconnects > Fabric Interconnect A (primary) > Fixed Module.

3.

Expand the Unconfigured Ethernet Ports section.

4.

Select the ports that are connected to the Chassis or Cisco 2232 FEX (2 per FEX), right-click them, and select Configure as Server Port.

5.

A prompt displays asking if this is what you want to do. Click Yes, then OK to continue.

6.

Select ports 19 and 20 that are connected to the Cisco Nexus 5548 switches, right-click them, and select Configure as Uplink Port.

7.

A prompt displays asking if this is what you want to do. Click Yes, then OK to continue.

8.

Select Equipment > Fabric Interconnects > Fabric Interconnect B (subordinate) > Fixed Module.

9.

Expand the Unconfigured Ethernet Ports section.

10.

Select the ports that are connected to the Chassis or Cisco 2232 FEX (2 per FEX), right-click them, and select Configure as Server Port.

11.

A prompt displays asking if this is what you want to do. Click Yes, then OK to continue.

12.

Select ports 19 and 20 that are connected to the Cisco Nexus 5548 switches, right-click them, and select Configure as Uplink Port.

13.

A prompt displays asking if this is what you want to do. Click Yes, then OK to continue.

14.

If you are using the 2208 or 2204 FEX or the external 2232 FEX, navigate to each device by selecting the chassis under Equipment > Chassis or the FEX under Equipment > Rack-Mounts > FEX > <FEX #>, select the Connectivity Policy tab in the right pane, and change the Admin States to Port Channel.

15.

Click Save Changes and then OK.

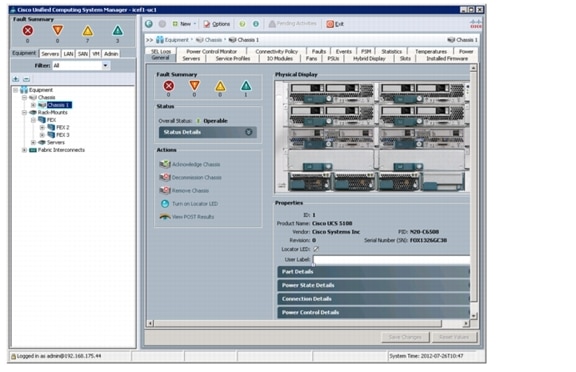
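
Because each fabric interconnect port takes exactly one role, a per-fabric port plan can be checked for conflicts before the ports are configured. The following is a minimal sketch. Uplink ports 19 and 20 and FC uplink ports 31 and 32 come from this document, while the server port numbers, the 32-port limit, and the function name are assumptions made for illustration.

```python
FIXED_MODULE_PORTS = 32  # assumed port count, inferred from ports 31 and 32 being last


def validate_port_roles(server_ports, uplink_ports=(19, 20), fc_ports=(31, 32)):
    """Return a list of problems in a per-fabric port plan (empty if none)."""
    roles = {
        "server": set(server_ports),
        "uplink": set(uplink_ports),
        "FC uplink": set(fc_ports),
    }
    problems = []
    names = list(roles)
    # Compare every pair of roles and report ports claimed by both.
    for index, first in enumerate(names):
        for second in names[index + 1:]:
            shared = sorted(roles[first] & roles[second])
            if shared:
                problems.append(f"ports {shared} are planned as both {first} and {second}")
    # Report any port number that does not exist on the fixed module.
    for name, ports in roles.items():
        for port in sorted(ports):
            if not 1 <= port <= FIXED_MODULE_PORTS:
                problems.append(f"{name} port {port} is outside 1-{FIXED_MODULE_PORTS}")
    return problems
```

The same check would be run once for Fabric Interconnect A and once for Fabric Interconnect B, since the steps above configure the same roles on both.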

Acknowledge Cisco UCS Chassis and FEX

These steps provide details for acknowledging all UCS Chassis and External 2232 FEX Modules.

Cisco UCS Manager

1.

Select the Equipment tab on the top left of the window.

2.

Expand Chassis and select each Chassis listed.

3.

For each Chassis, select Acknowledge Chassis.

4.

Click Yes and OK to complete acknowledging the Chassis.

5.

If you have C-Series servers in your configuration, expand Rack Mounts and FEX.

6.

For each FEX listed, select it and select Acknowledge Fex.

7.

Click Yes and OK to complete acknowledging the FEX.

Create Uplink PortChannels to the Cisco Nexus 5548 Switches

These steps provide details for configuring the necessary PortChannels out of the Cisco UCS environment.

Cisco UCS Manager

1.

Select the LAN tab on the left of the window.

Note

Two PortChannels are created, one from fabric A to both Cisco Nexus 5548 switches and one from fabric B to both Cisco Nexus 5548 switches.

2.

Under LAN Cloud, expand the Fabric A tree.

3.

Right-click Port Channels.

4.

Select Create Port Channel.

5.

Enter 13 as the unique ID of the PortChannel.

6.

Enter vPC-13-N5548 as the name of the PortChannel.

7.

Click Next.

8.

Select the port with slot ID: 1 and port: 19 and also the port with slot ID: 1 and port 20 to be added to the PortChannel.

9.

Click >> to add the ports to the Port Channel.

10.

Click Finish to create the Port Channel.

11.

Select the check box for Show navigator for Port-Channel 13 (Fabric A).

12.

Click OK to continue.

13.

Under Actions, select Enable Port Channel.

14.

In the pop-up box, click Yes, then OK to enable.

15.

Click OK to close the Navigator.

16.

Under LAN Cloud, expand the Fabric B tree.

17.

Right-click Port Channels.

18.

Select Create Port Channel.

19.

Enter 14 as the unique ID of the Port Channel.

20.

Enter vPC-14-N5548 as the name of the Port Channel.

21.

Click Next.

22.

Select the port with slot ID: 1 and port: 19 and also the port with slot ID: 1 and port 20 to be added to the Port Channel.

23.

Click >> to add the ports to the Port Channel.

24.

Click Finish to create the Port Channel.

25.

Select the check box for Show navigator for Port-Channel 14 (Fabric B).

26.

Click OK to continue.

27.

Under Actions, select Enable Port Channel.

28.

In the pop-up box, click Yes, then OK to enable.

29.

Click OK to close the Navigator.
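
The two PortChannels created above follow a regular pattern, which can be recorded as data for review before the values are entered in the GUI. The following is a minimal sketch. The IDs, names, and member ports come from the steps above, while the class, the function, and the rule that IDs are unique across both fabrics are assumptions of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortChannel:
    fabric: str
    channel_id: int
    members: tuple  # (slot ID, port) pairs

    @property
    def name(self):
        # Naming pattern taken from the steps above: vPC-<ID>-N5548
        return f"vPC-{self.channel_id}-N5548"


PLAN = (
    PortChannel("A", 13, ((1, 19), (1, 20))),
    PortChannel("B", 14, ((1, 19), (1, 20))),
)


def check_plan(plan):
    """Raise ValueError if an ID repeats or a fabric has more than one channel."""
    ids = [pc.channel_id for pc in plan]
    fabrics = [pc.fabric for pc in plan]
    if len(set(ids)) != len(ids):
        raise ValueError("port channel IDs must be unique")
    if len(set(fabrics)) != len(fabrics):
        raise ValueError("one uplink port channel per fabric is expected")
    return [(pc.fabric, pc.channel_id, pc.name) for pc in plan]
```

Calling `check_plan(PLAN)` returns one tuple per fabric with the ID and name to enter in the Create Port Channel wizard.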

Create an Organization

These steps provide details for configuring an organization in the Cisco UCS environment. Organizations are used to organize and restrict access to various groups within the IT organization, thereby enabling multi-tenancy of the compute resources. This document does not assume the use of Organizations; however, the necessary steps are included below.

Cisco UCS Manager

1.

From the New... menu at the top of the window, select Create Organization.

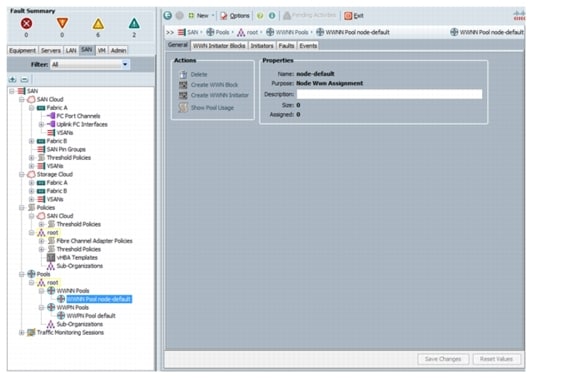

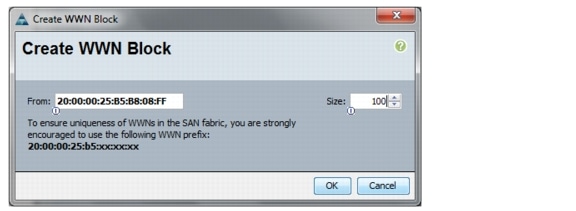

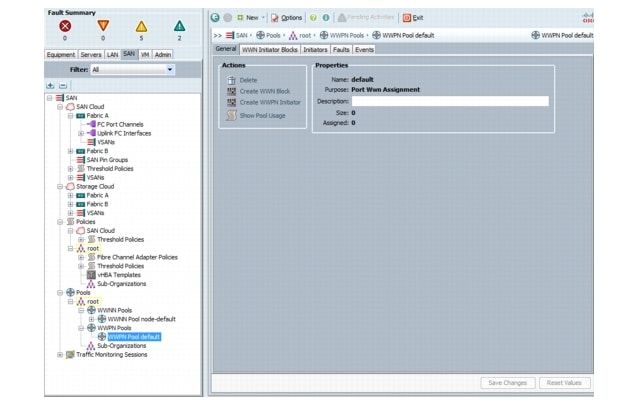

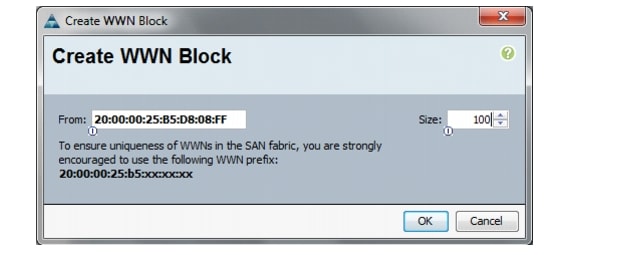

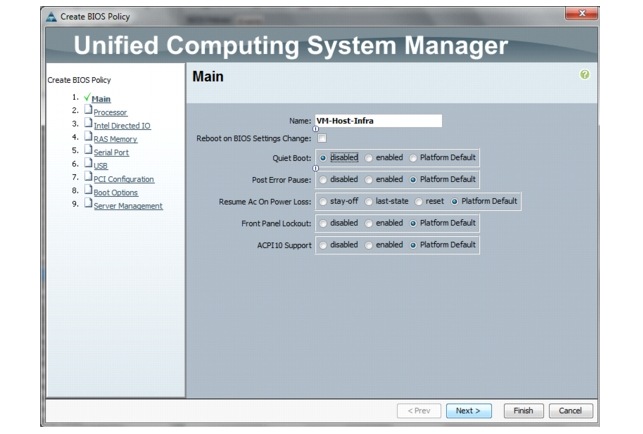

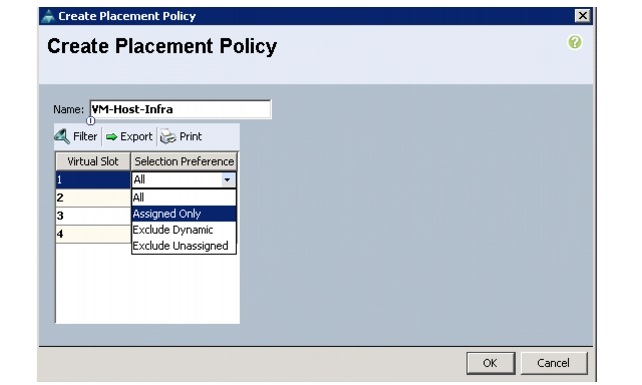

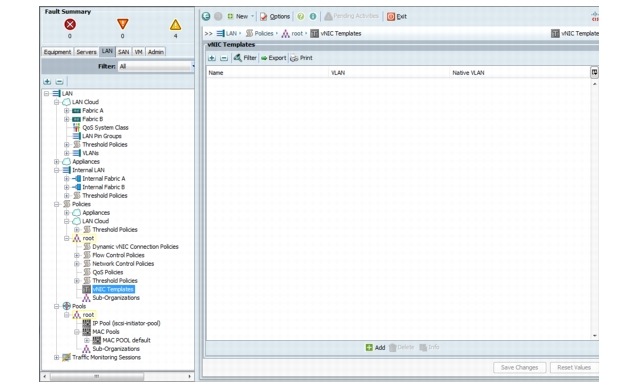

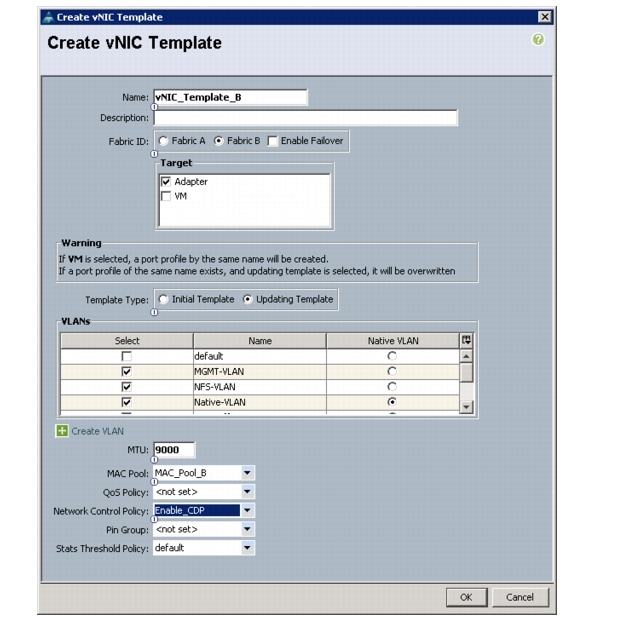

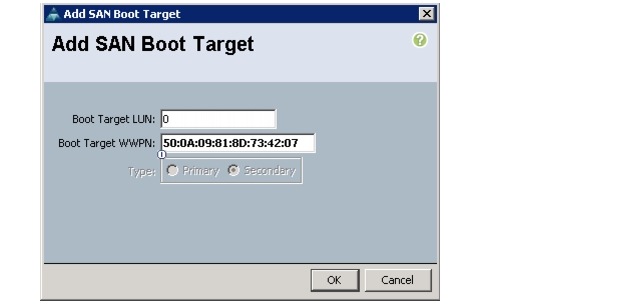

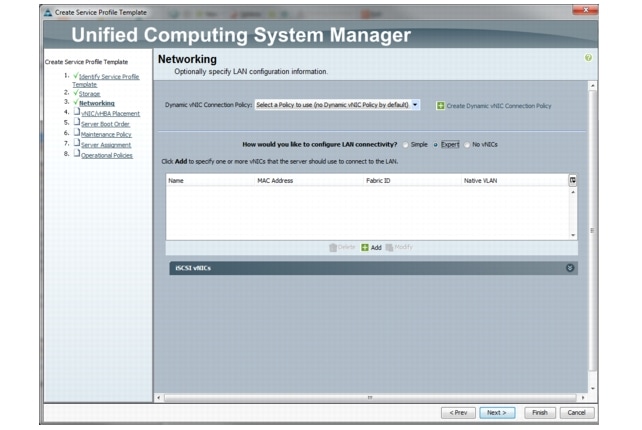

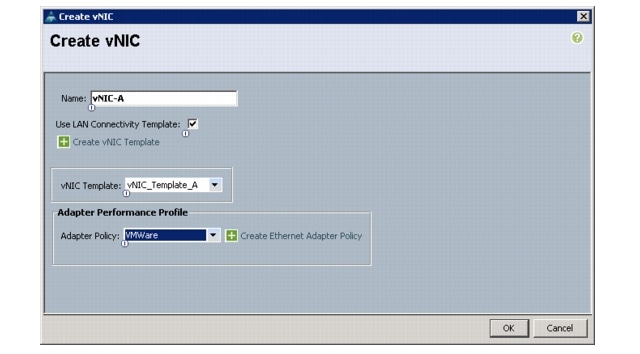

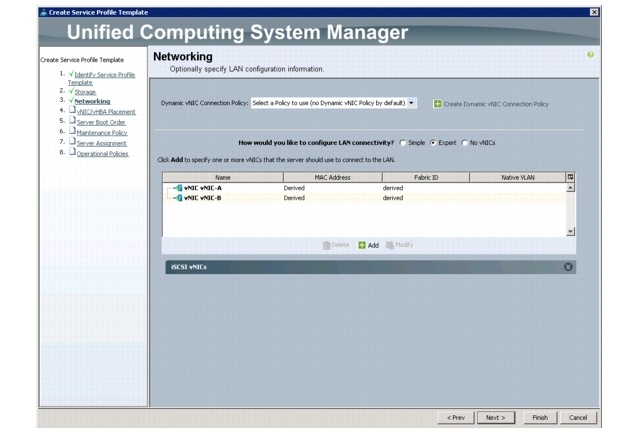

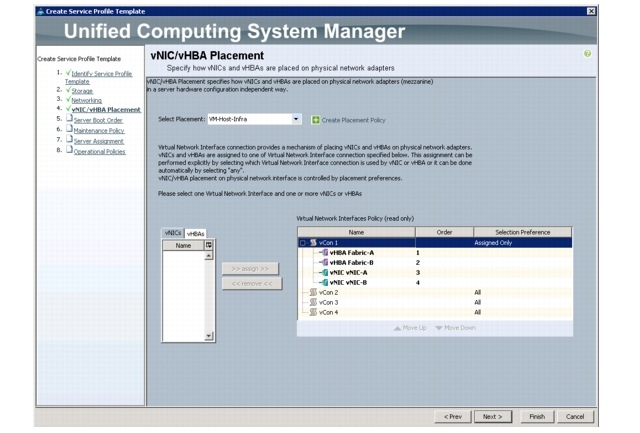

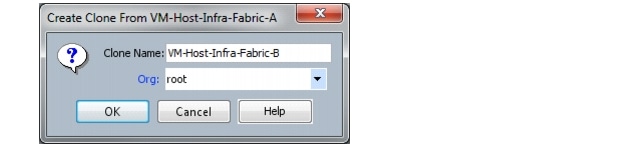

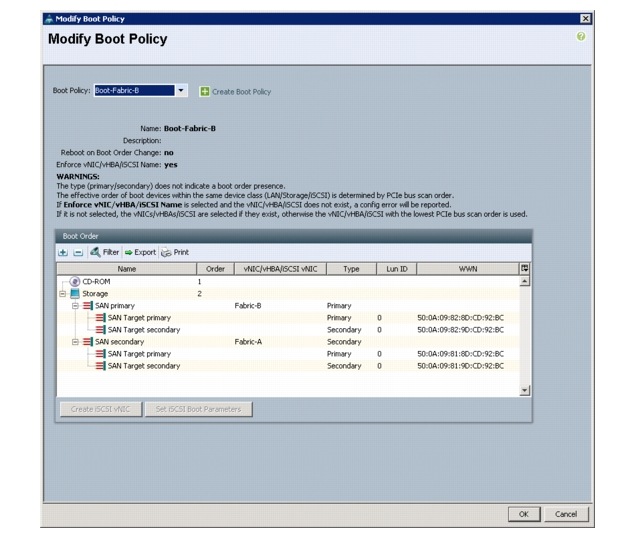

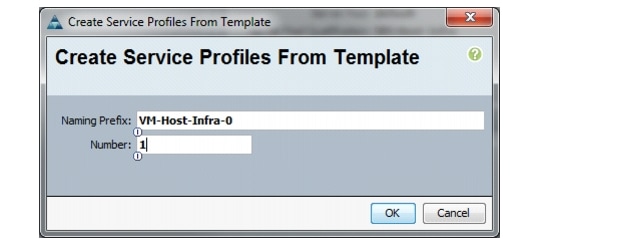

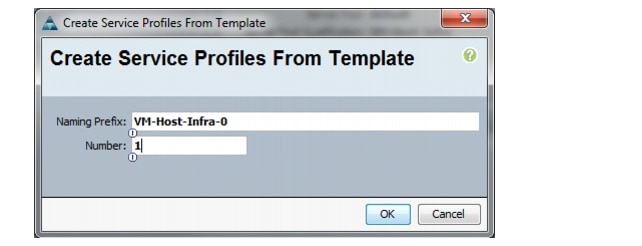

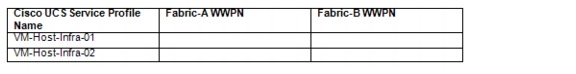

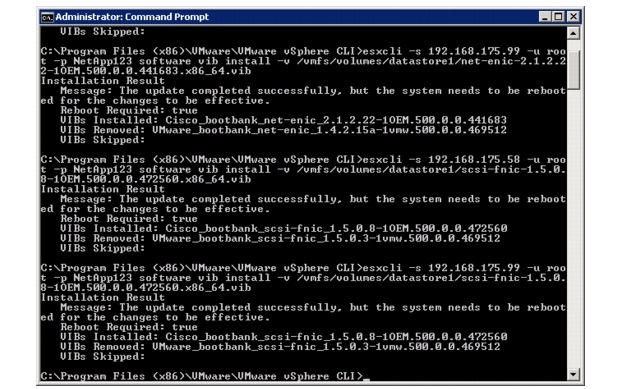

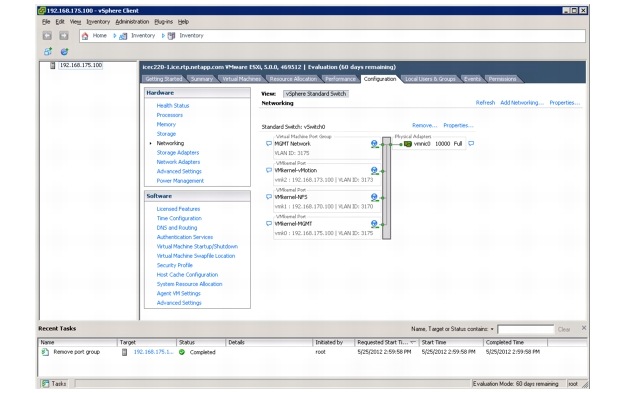

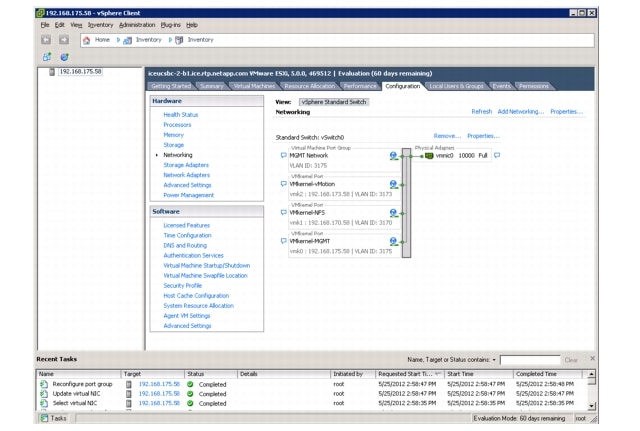

2.