Cisco Video Surveillance Deployment Guide for B- and C-Series Platforms

November 2012

This guide describes the key requirements and instructions for deploying a virtualized Cisco® Video Surveillance Manager (VSM) on the Cisco Unified Computing System™ (UCS) B- and C-Series platforms.

Contents

This document includes the following sections:

Installing and Configuring the VSM Software, Release 6.3.2

Configuring Network Switches for the VSM VM

Introduction

This guide describes the key requirements and instructions for deploying a virtualized VSM on the Cisco UCS B- and C-Series platforms. This guide also describes installation and configuration guidelines for VMware vSphere Hypervisor and the VSM virtual machine (VM), as well as best practice recommendations.

Audience

This guide is intended for use by Cisco System Engineers, Physical Security Advanced Technology Provider (ATP) partners, and technical field staff who develop and implement Cisco Video Surveillance and UCS Servers for data center and branch office solutions.

A successful implementation also requires additional knowledge in the following areas:

• Cisco UCS B- and C-Series installation and management

• Cisco Video Surveillance Manager (Version 6.3.2) installation and configuration

Scope

This guide contains detailed instructions on how to install the VSM on the UCS (see deployment scope in Figure 1).

Figure 1 Deployment Scope

Note

This guide does not describe the configuration and operation of these products. For a full description of these products, see the "More Information" section.

System Overview

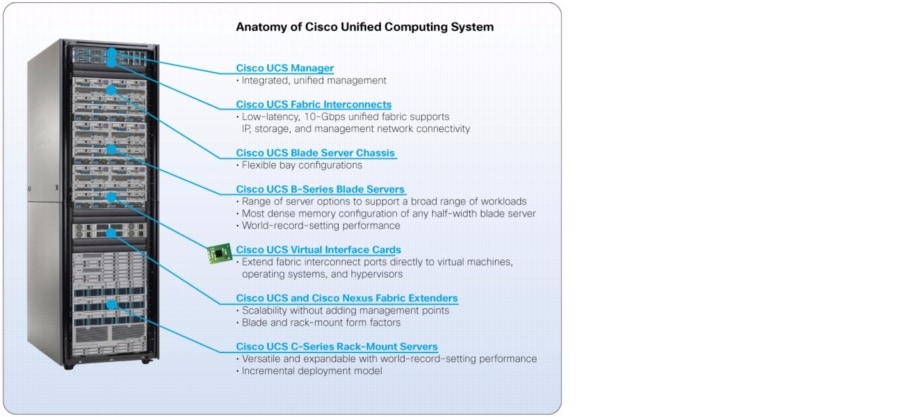

The Cisco UCS fuses access layer networking and servers. This high-performance, next-generation server system provides a data center with a high degree of workload agility and scalability.

The Cisco Unified Computing System Manager (UCSM) presents a new paradigm for server management (see Figure 2). The most relevant attributes of UCSM include the following:

• Foundation for stateless/utility computing model

• Policy-based management

• Full inventory, auto-discovery, and device management

• Simple association of logical server or application images to a physical server or blades

Figure 2 Anatomy of the Cisco UCS

The hardware (see Figure 3) and software components support the Cisco unified fabric, which runs multiple types of data center traffic over a single, converged network adapter.

Figure 3 UCS B-Series Chassis, B-Series Blades, C-Series Rack-Mount Servers, and Fabric Interconnects

Figure 4 illustrates the overall, logical topology of the networking and video surveillance components, including a UCS B-Series Chassis containing the B-Series Server Blades that run the VSM and the Video Surveillance Operations Manager (VSOM), various IP cameras, an external switch, and the operator workstations that run the VSOM client.

Figure 4 Logical Network Topology

Note

This deployment guide has been validated to operate within the specifications outlined in the "Cisco Video Surveillance Manager for UCS Express" data sheet (http://www.cisco.com/en/US/products/ps10265/index.html). Refer to this data sheet for the latest guidelines, including the maximum number of cameras, maximum input/output (I/O), and maximum raw storage.

Installing and Configuring the VSM Software, Release 6.3.2

This section describes the installation procedure, including the configuration of Hypervisor services and guest clients, and deploying and verifying the VSM VM.

Assumptions

In the following configuration examples, it is assumed that:

• UCS B-Series Blades or UCS C-Series rack-mount servers are installed with ESXi 5.0 and configured with the required IP addresses for the Management Network.

• The VMware vCenter Server is installed and used to manage the hypervisors running on the UCS servers.

• Fiber Channel-based Storage Area Network (SAN) storage is used for UCS B-Series servers.

• Onboard redundant array of independent disks (RAID)-based storage is used for UCS C-Series servers.

Pre-Installation Requirements

Table 1 outlines the pre-installation requirements for the vSphere Client.

To install the VSM software, Release 6.3.2:

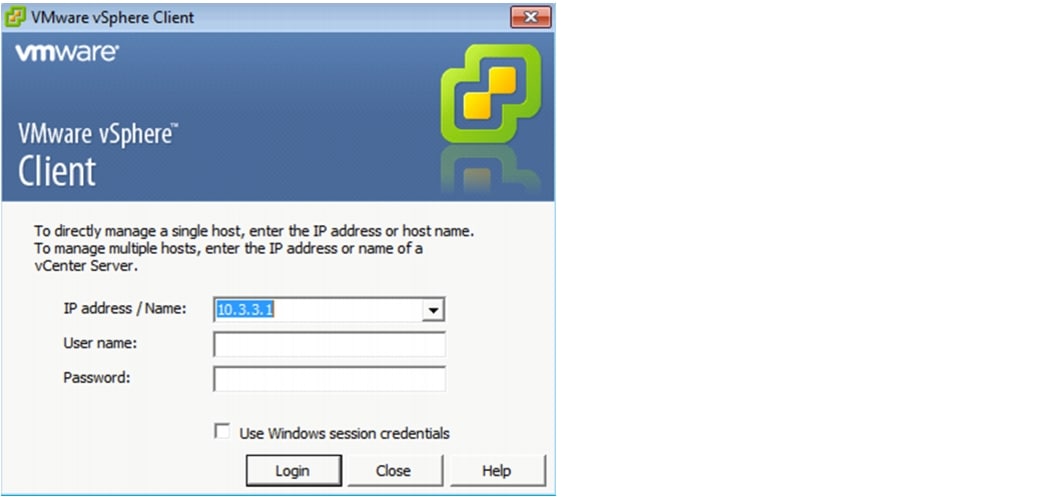

Step 1

Launch the vSphere client and enter the vCenter Server's IP address and credentials to access the vCenter server (see Figure 5).

Figure 5 VMware vSphere Client Login Page

Step 2

Download the VSM 6.3.2 Open Virtualization Format (OVF) template from the Cisco web page or insert a USB drive with the VSM template and copy the template to the same computer on which the vSphere client was previously installed.

The OVF Template is approximately 2.5 GB in size. Once deployed, the VM requires 20 GB of space (not including the space for the video partitions).

The vSphere Client main page displays (see Figure 6).

Figure 6 Deploying the OVF Template

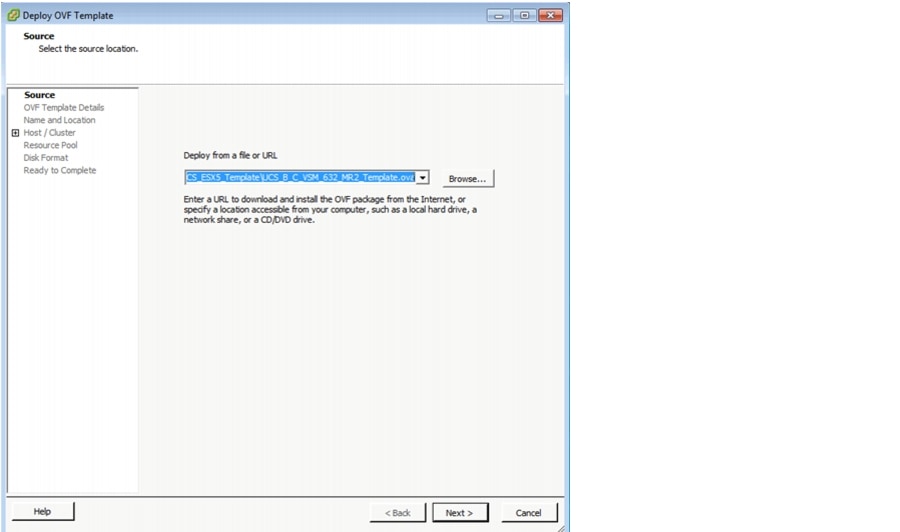

Step 3

Click File > Deploy OVF Template to launch the Deploy OVF Template screen (see Figure 7).

Figure 7 Deploying the OVF Template from a File or URL

Step 4

Click Browse and either enter a URL to download, or specify a location and select the VSM template.

Step 5

Complete the remaining steps by selecting all of the default options.

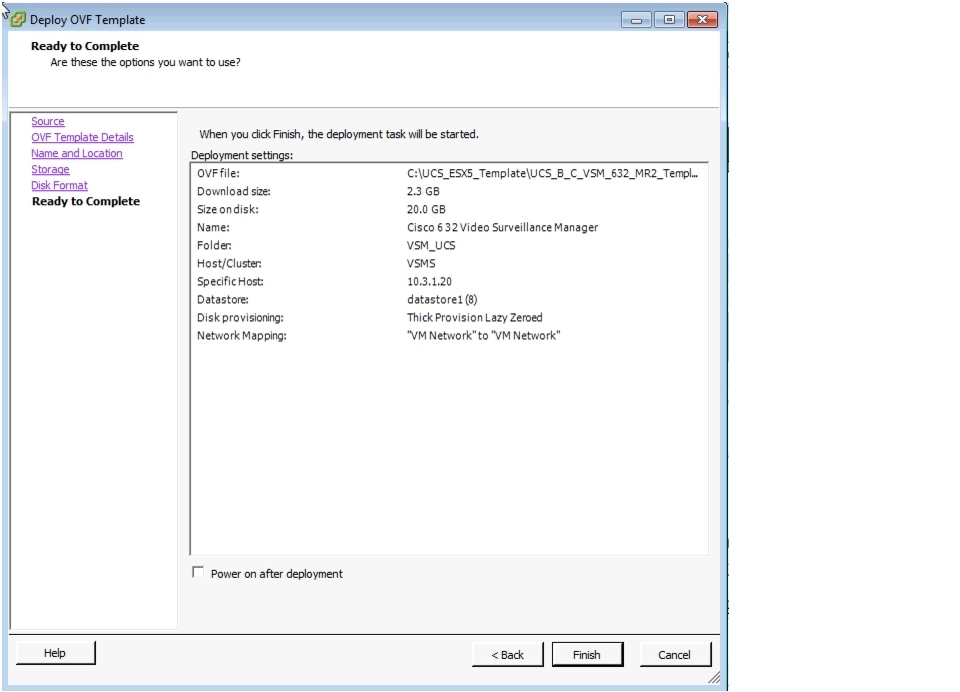

Figure 8 displays the result of those selected deployment settings.

Figure 8 Ready to Complete—Deployment Settings

Step 6

Click Finish to deploy the VSM template.

For more information about OVF Templates, see http://www.vmware.com/technical-resources/interfaces/ovf.html.

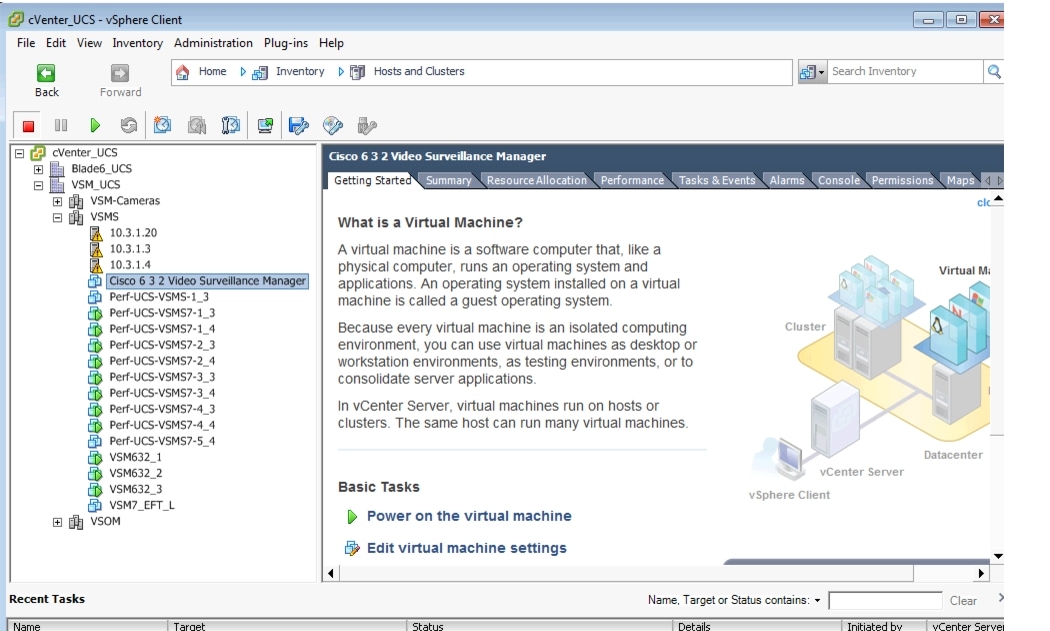

Once the VSM template is successfully deployed, the VSM VM displays under the host entry in the Inventory tree (see Figure 9, left pane).

Note

The VSM VM size is 20 GB.

Figure 9 VSM VM Console in the vSphere Client

Creating New Media Hard Disks

The hard disk size depends on the SAN logical unit number (LUN) size for the B-Series or the RAID array for the C-Series UCS servers.

Fiber Channel-based SAN Storage

The following guidelines apply to Fiber Channel-based SAN storage (B-Series blades and C-Series rack-mount servers):

• The guest OS is SUSE Linux Enterprise Server (SLES) 10 Service Pack 1 (SP1) 32-bit; hence, the maximum size of each disk that can be added is limited to 16 TB.

• To overcome the VMware limitation of 2 TB per virtual machine disk (VMDK), the SAN LUNs are attached to the VM as a Raw Device Mapping (RDM).

Internal Storage on C-Series Disks

The following guidelines apply to internal storage on C-Series disks:

• The onboard C-Series disks are subject to the VMware VMDK limitation of 2 TB each.

• Depending on the available storage (number of hard disks and RAID configuration), multiple disks of 2 TB (or less) must be added to the VMs on the UCS C-Series servers.

For more information about Virtual Machine File Systems (VMFS) and RDMs, see http://pubs.vmware.com/vi301/san_cfg/wwhelp/wwhimpl/common/html/wwhelp.htm?context=san_cfg&file=esx_san_cfg_esx_and_san.4.13.html.
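As a rough planning aid for the 2 TB per-VMDK guideline above, the number of virtual disks needed for a given amount of internal storage can be estimated with ceiling division. The following is a minimal sketch, not part of the VSM software: the function name vmdk_count is hypothetical, sizes are given in GB, and 1 TB is treated as 1024 GB (an assumption).

```shell
# Hypothetical helper: estimate how many virtual disks are needed to present
# a given amount of storage when each virtual disk is capped in size.
# Arguments: total size in GB, optional per-disk cap in GB (default 2048,
# that is, 2 TB treated as 2048 GB, which is an assumption).
vmdk_count() {
    total_gb=$1
    max_gb=${2:-2048}
    # Ceiling division with integer arithmetic.
    echo $(( (total_gb + max_gb - 1) / max_gb ))
}
```

For example, vmdk_count 7168 reports that 7 TB of usable RAID capacity would need four virtual disks under these assumptions. Actual usable capacity depends on the RAID configuration and on VMFS overhead, so treat the result as an estimate only.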

To add a second hard disk for the VSM VM:

Step 1

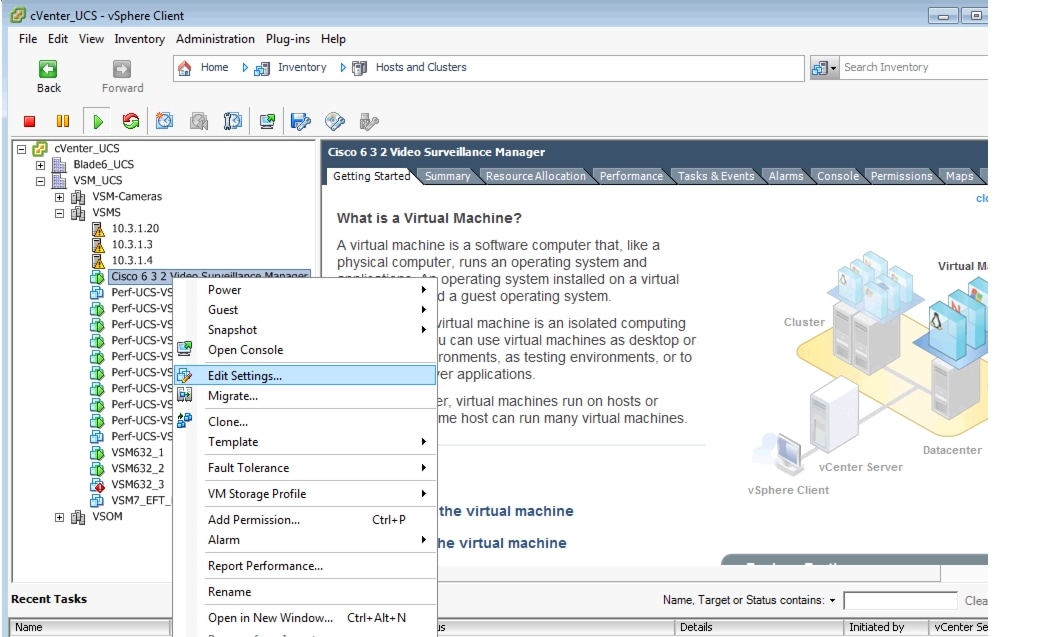

Launch the vSphere client and connect to the vCenter server (see Figure 5 and Figure 10).

Figure 10 vCenter UCS, vSphere Client—Edit Settings

Step 2

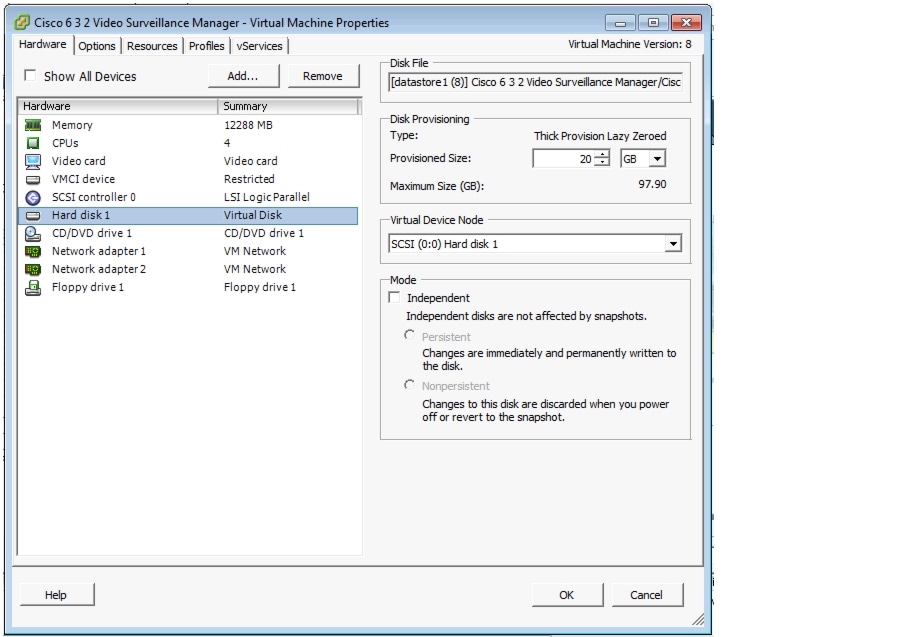

In the left pane (Inventory tree), right-click the VSM VM's name and select Edit Settings to open the Hardware tab of the Virtual Machine Properties screen (see Figure 11).

Figure 11 Virtual Machine Properties—Hardware Tab Settings

Step 3

Select Hard disk and note the Disk Provisioning sizes (Maximum Size and the Provisioned Size).

Step 4

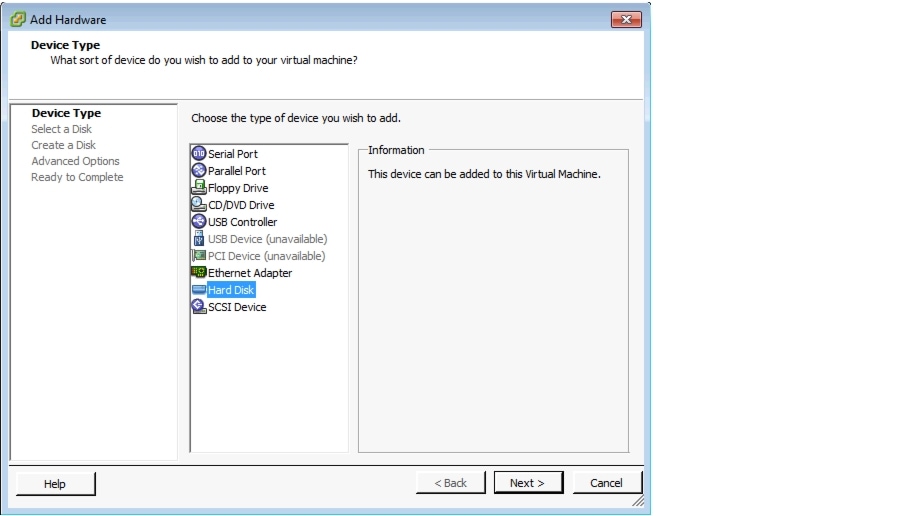

Click Add to display the Add Hardware screen (see Figure 12).

Figure 12 Selecting a Hard Disk

Step 5

From the device type list, select Hard Disk and click Next to display the Select a Disk option (see Figure 13).

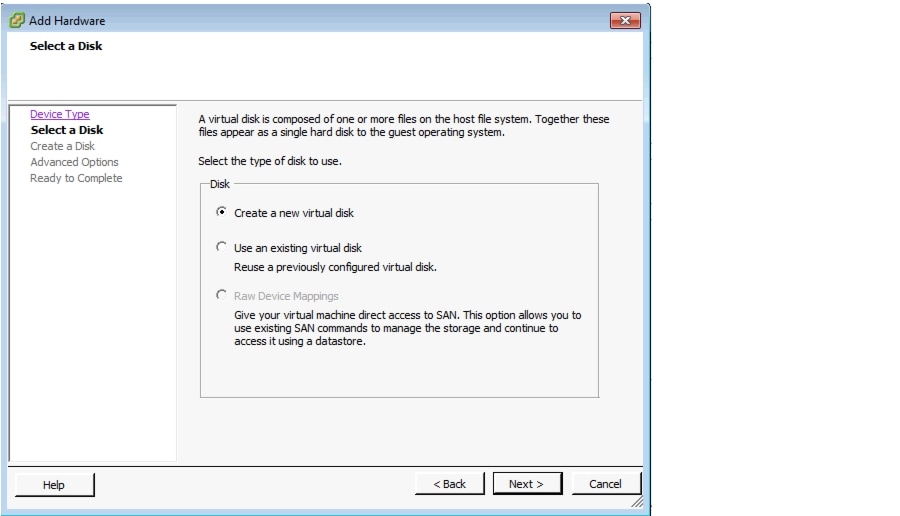

Figure 13 Disk Options available for UCS C-Series Internal RAID-based Storage

Step 6

Select the Create a new virtual disk radio button and click Next to display the Raw Device Mappings option (see Figure 14).

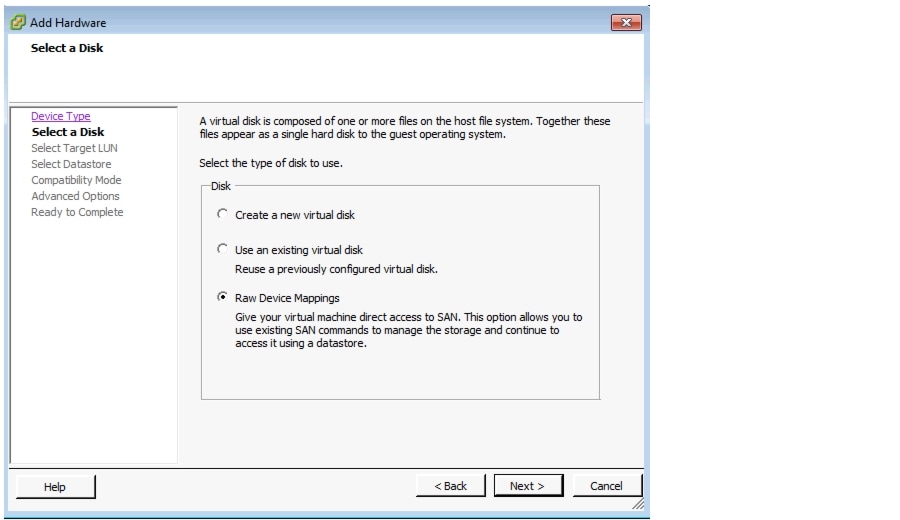

Figure 14 Disk Options Available for UCS B-Series Fiber Channel-based SAN Storage

Step 7

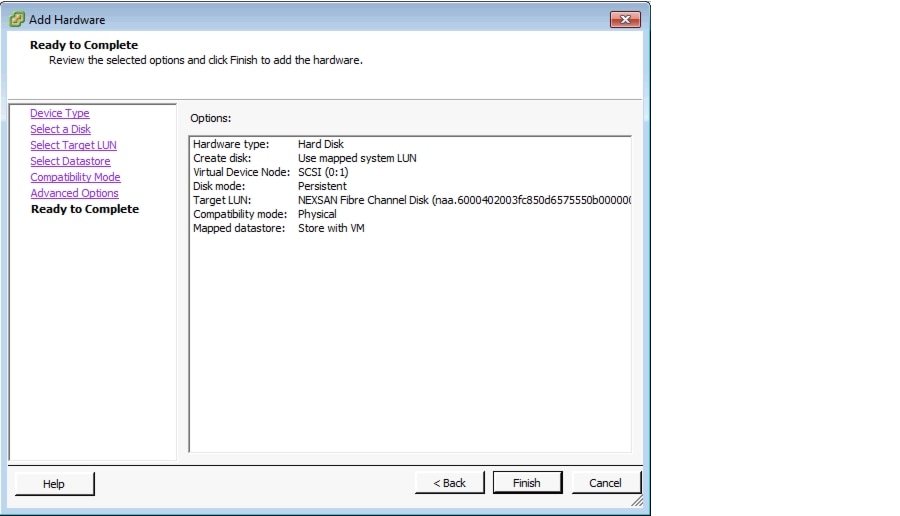

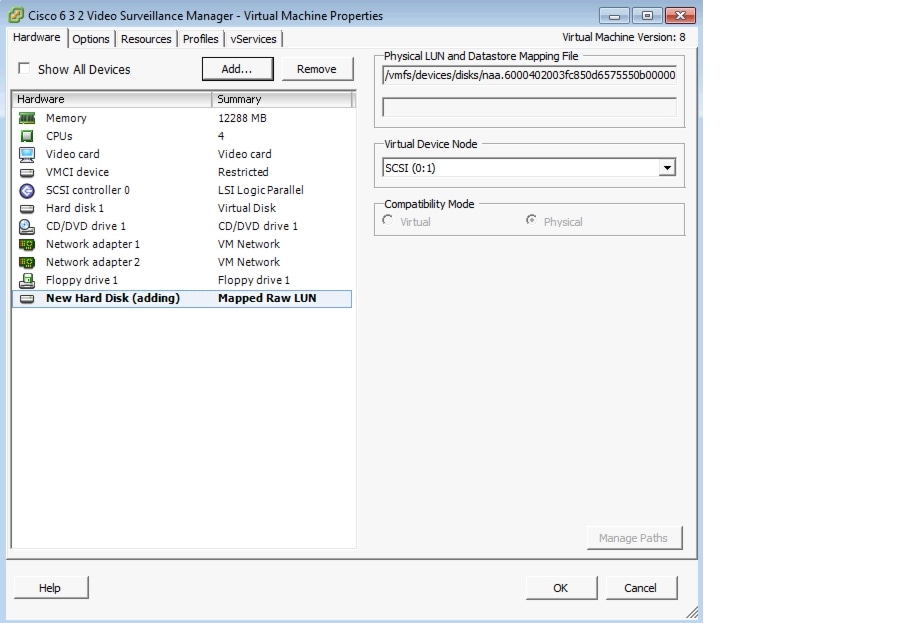

Select the Raw Device Mappings radio button and click Next to display the summary options (see Figure 15).

Figure 15 New Hard Disk Summary Options

Step 8

Click Finish to return to the Virtual Machine Properties screen and view the newly created hard disk (see Figure 16).

Figure 16 VM Properties—Add New Hard Disk to the VSM VM

Step 9

Click OK to complete this procedure.

Configuring Network Switches for the VSM VM

To set up and change the networking configuration for the VSM VM to operate in the customer environment:

Step 1

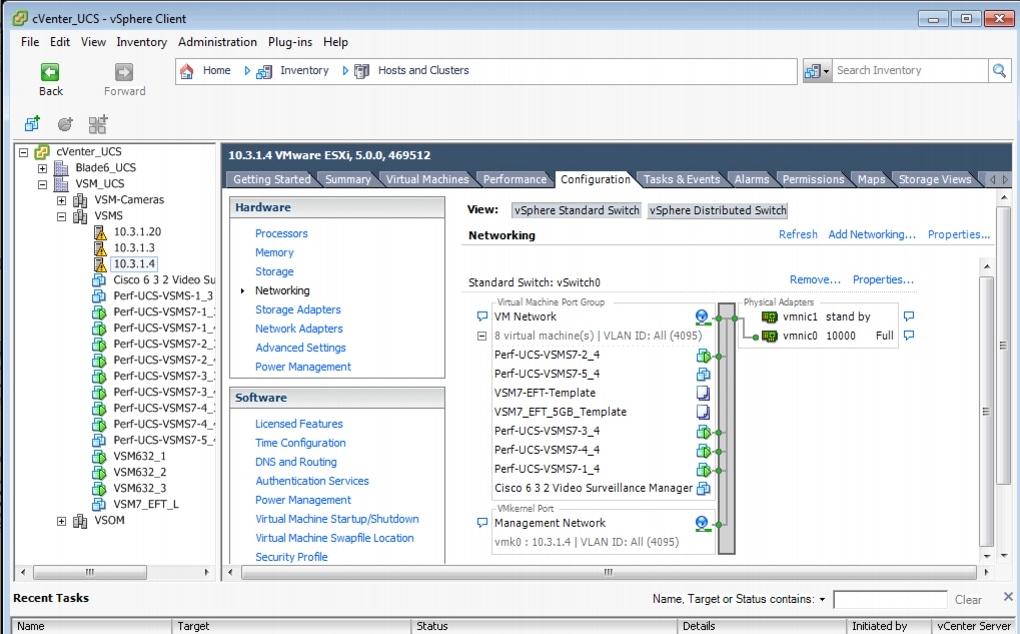

Launch the vSphere client and connect to the vCenter server (see Figure 5 and Figure 17).

Figure 17 VM Switch Networking Properties—vSphere Client

Step 2

In the left pane (Inventory tree), click the hypervisor's name > click the Configuration tab, and in the Hardware area, click Networking.

The default Virtual Switch: vSwitch 0 displays.

Step 3

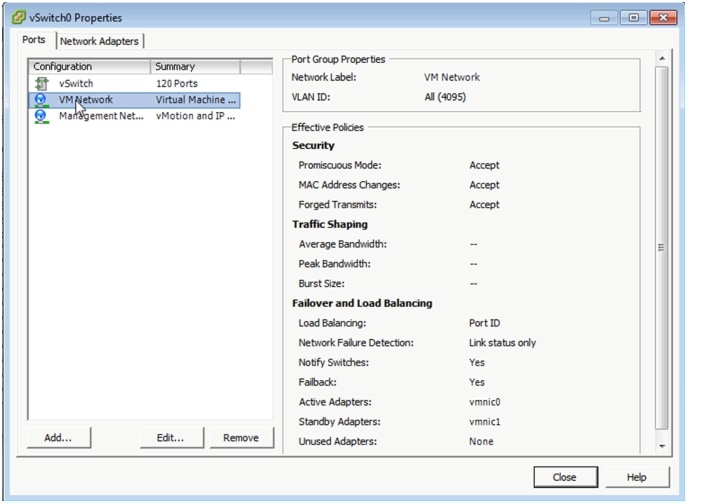

Click the Properties link to configure the virtual switch's properties (see Figure 18).

Figure 18 VM Switch Networking Properties—vSphere Client

Step 4

Select VM Network and click Edit.

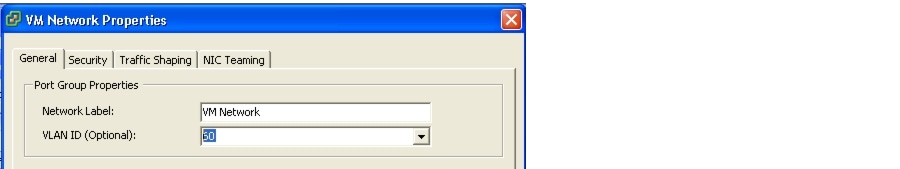

The VM Network Properties screen displays (see Figure 19).

Figure 19 VM Network Properties—vSphere Client

Step 5

Optional. Change the VLAN ID to the VSM VLAN ID (for example, 60).

Note

By default, the virtual LAN (VLAN) ID (ALL, 4095) is set on all the virtual interfaces.

Step 6

Click OK to complete the network configuration.

For more information about configuring network switches for VLAN tagging in VMware, see http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1266.

For information about VLAN tagging, see http://pubs.vmware.com/vsphere-50/index.jsp?topic=%2Fcom.vmware.vsphere.networking.doc_50%2FGUID-7225A28C-DAAB-4E90-AE8C-795A755FBE27.html.

For information about VMware vSphere documentation, see http://www.vmware.com/support/pubs/vsphere-esxi-vcenter-server-pubs.html.
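The VLAN change in Step 5 can also be made from the ESXi command line instead of the vSphere Client. The sketch below is an assumption-laden illustration rather than a procedure validated by this guide: the helper name vlan_command is hypothetical, the port group is assumed to be the default VM Network, and the helper only prints the esxcli command so that it can be reviewed before it is run on the host.

```shell
# Hypothetical helper: print (not run) the esxcli command that would set the
# VLAN ID on a standard vSwitch port group. The port group name defaults to
# "VM Network"; 60 is only the example VLAN ID used in Step 5.
vlan_command() {
    vlan_id=$1
    portgroup=${2:-VM Network}
    # Reject anything that is not a whole number.
    case $vlan_id in
        ''|*[!0-9]*) echo "invalid VLAN ID: $vlan_id" >&2; return 1 ;;
    esac
    # VLAN IDs on a port group range from 0 to 4095.
    if [ "$vlan_id" -gt 4095 ]; then
        echo "invalid VLAN ID: $vlan_id" >&2
        return 1
    fi
    echo "esxcli network vswitch standard portgroup set --portgroup-name=\"$portgroup\" --vlan-id=$vlan_id"
}
```

Running vlan_command 60 prints the command for the example VLAN ID. On the host, esxcli network vswitch standard portgroup list shows the VLAN ID currently assigned to each port group.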

Configuring NTP

Note

We recommend that you use the Network Time Protocol (NTP) source to maintain the correct time on the server. Configuring NTP should be done before configuring the video. The clock should be set to use Coordinated Universal Time (UTC) and the appropriate time zone for the server.

To set up the NTP configuration for the VM host:

Step 1

Launch the vSphere client and connect to the vCenter server (see Figure 5).

Step 2

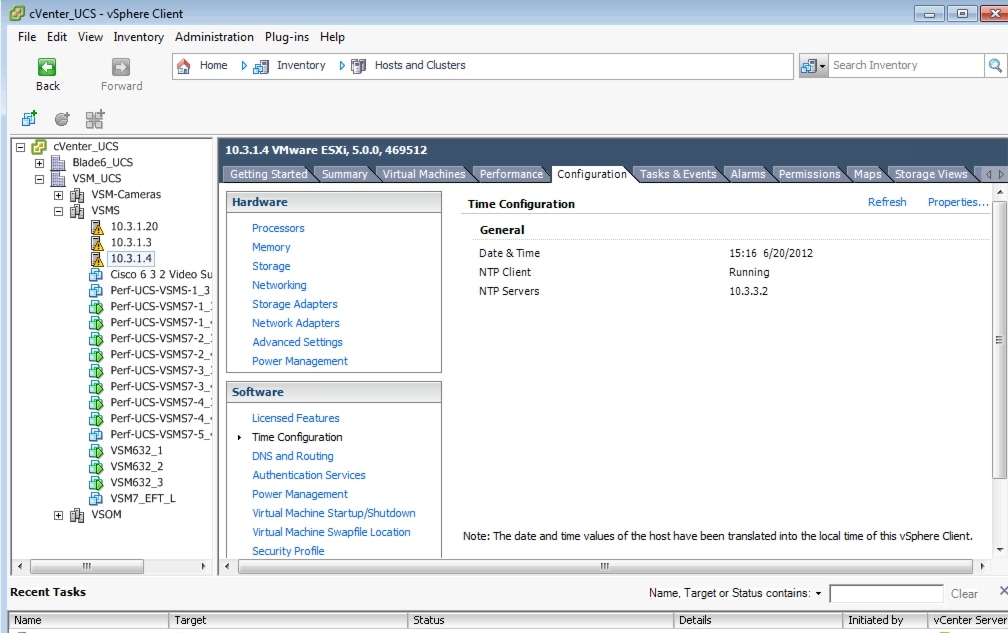

In the left pane (Inventory tree), click the hypervisor's name > click the Configuration tab, and in the Software area, click Time Configuration (see Figure 20).

Figure 20 Time Configuration Settings

Step 3

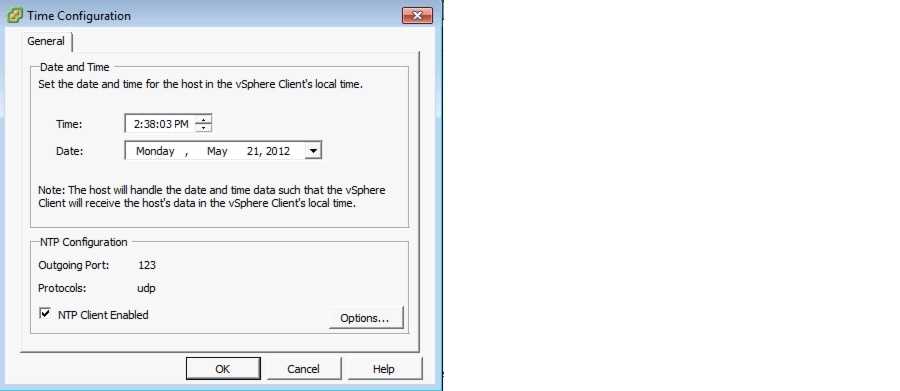

Click the Properties link (top, right corner) to configure the NTP settings (see Figure 21).

Figure 21 Time Configuration Properties

Step 4

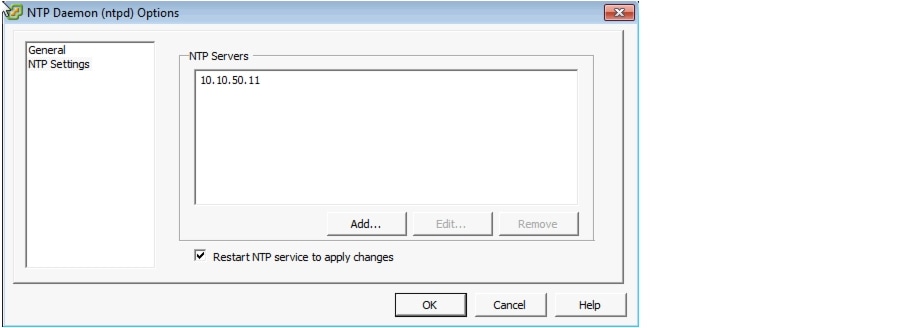

Check the NTP Client Enabled check box and click Options to add the NTP server address (see Figure 22).

Figure 22 Adding the NTP Server Address

Step 5

Click OK to complete the NTP configuration for the hypervisor.

Note

To set up the NTP configuration on the VSM VM running SUSE, use the Yet Another Setup Tool (YaST) to configure the NTP source and server time.
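After NTP is configured on the VSM VM, synchronization can be spot-checked from a terminal. The following sketch assumes that the ntpq utility is available on the guest (this guide does not state that it is); the helper name ntp_synced is hypothetical. It reads the output of ntpq -p and looks for a peer line that begins with an asterisk, which ntpq uses to mark the currently selected time source.

```shell
# Hypothetical helper: read `ntpq -p` output on stdin and succeed only if one
# peer is marked with a leading "*", which ntpq uses for the selected source.
ntp_synced() {
    grep -q '^\*'
}
```

A typical use would be ntpq -p | ntp_synced && echo "NTP source selected". A newly started NTP service can take several minutes to select a source, so a failure immediately after configuration is not necessarily an error.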

Powering On the VSM

To power on the VSM:

Step 1

Launch the vSphere client and connect to the vCenter server (see Figure 5).

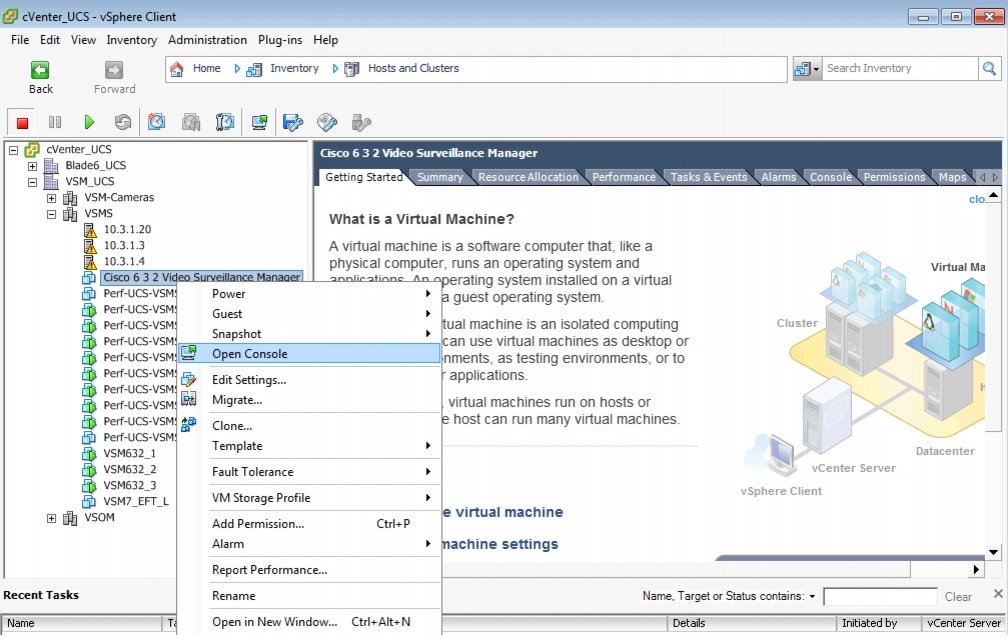

Step 2

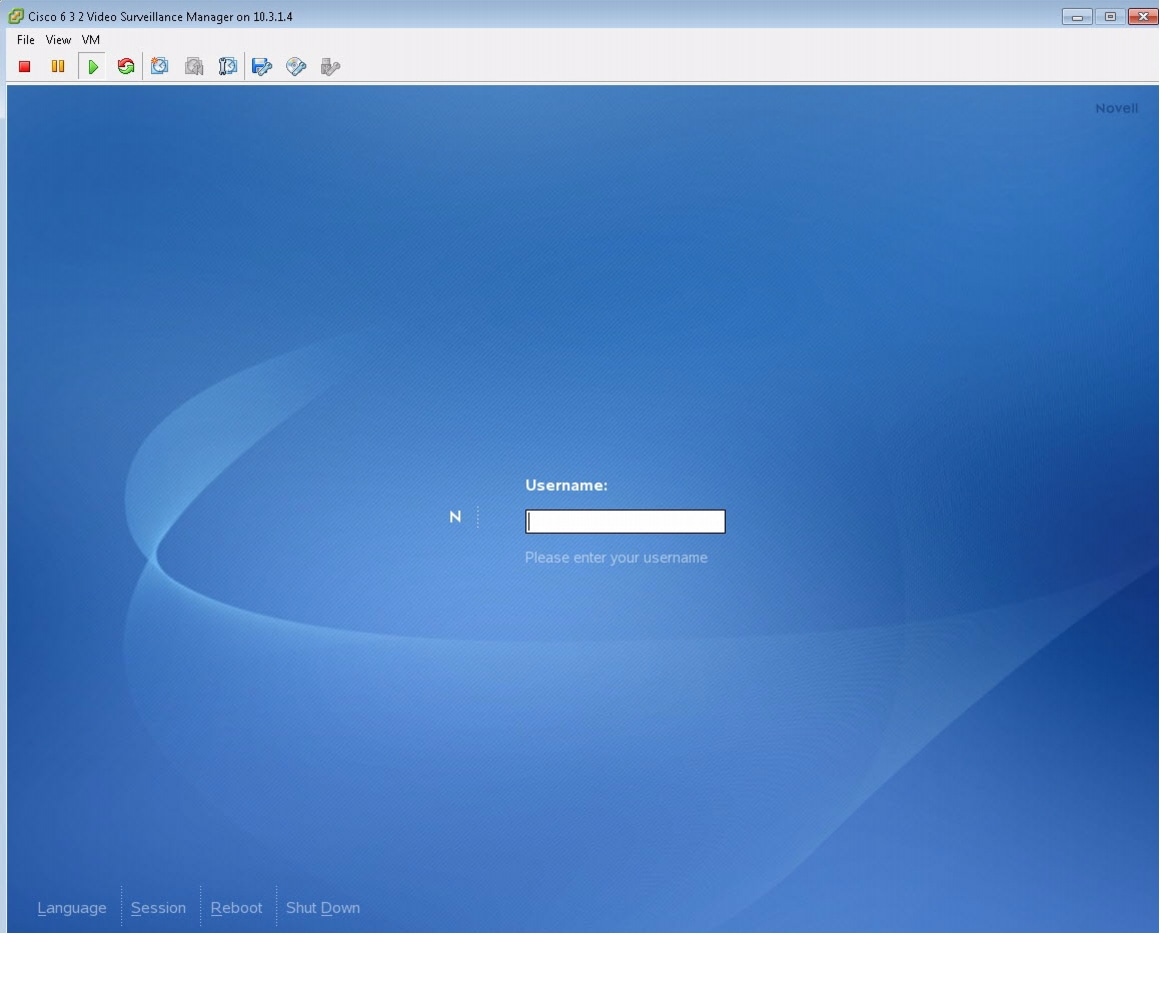

In the left pane (Inventory tree) of the vCenter_UCS - vSphere Client window, right-click the VSM VM name, and select Open Console (see Figure 23) to launch the VM console (see Figure 24).

Figure 23 Opening the VM Console

Figure 24 Launching the VM Console—Cisco 6.3.2 Video Surveillance Manager

Step 3

On the console toolbar, click the green arrow to power on the VM.

Configuring the VSM

This section describes how to add a media partition to the VSM VM.

For other relevant configuration steps for VSM 6.3.2, see http://www.cisco.com/en/US/products/ps9152/prod_installation_guides_list.html.

Preparing Video Repositories

Video that is recorded by the VSMS is stored in repositories on storage volumes that are dedicated for recording video by the VSMS. The repositories must be separate partitions from the operating system (OS) partitions.

Each repository has a mount point to specify the path through which the files are accessed. The common convention for naming repositories is /media#, with /media0 used for a repository on the operating system volume, and /media1 through /mediaN used for additional storage volumes.

Repository Greater Than 2 TiB

To create partitions greater than 2 TiB, the volume must use a globally unique identifier (GUID) partition table (GPT) and must be a different storage volume from the OS volume.

Note

The GPT partition table does not work on the boot volume.

Caution

The following procedure erases the GPT partition table on the specified volume, which deletes all data on the volume.

Step 1

Log on to the VSMS server and create a GPT partition table on the volume:

linux:~ # parted /dev/<device> mklabel gpt

a.

Replace <device> with the volume device name, such as sdb for /dev/sdb.

b.

To verify the device name, open YaST, navigate to System > Partitioner, and check the device name listed for the SAN partition.

c.

Verify that the volume is using the correct partition type:

linux:~ # parted /dev/sdb print
Disk geometry for /dev/sdb: 0kB - 10TB
Disk label type: gpt
Number  Start  End  Size  File system  Name  Flags
Information: Do not forget to update /etc/fstab, if necessary.

d.

Ensure that the disk label type is set to gpt.

Step 2

After creating the GPT, use the following standard methods to partition the volume and format the partitions.

a.

Open the VSM VM console and log on to the VSM with either your specific authentication or the standard default username of root and the secur4u password.

b.

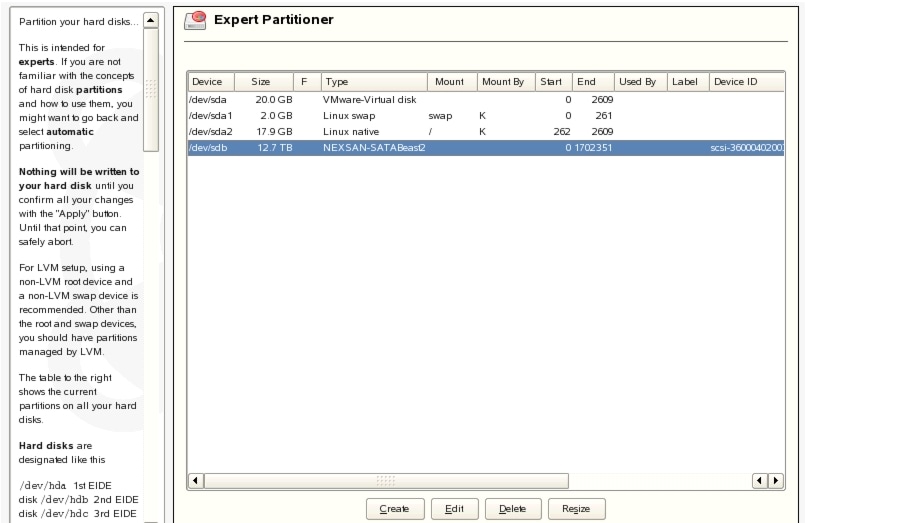

Launch the YaST partitioner utility to add the new media partition (see Figure 25).

Figure 25 Creating a New Media Partition in YaST

c.

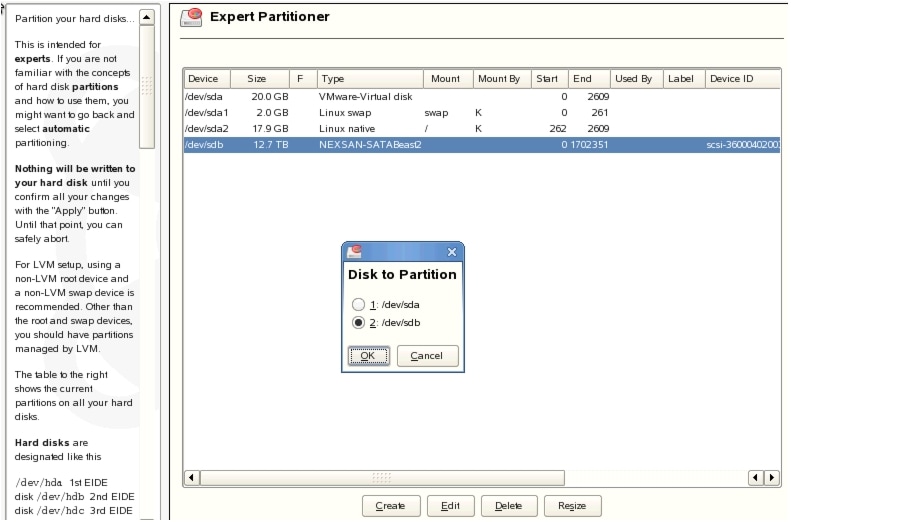

Select a device and click Create to display the Disk to Partition screen (see Figure 26).

Figure 26 Selecting the Disk Device to Partition in YaST

d.

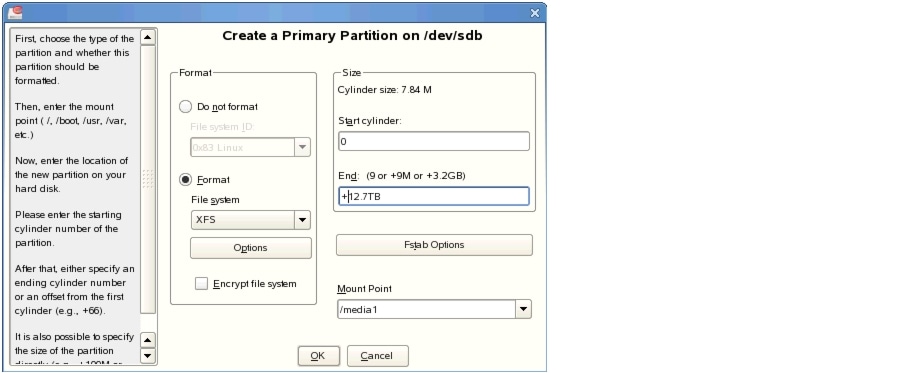

Select the /dev/sdb option and click OK to display the Create a Primary Partition on /dev/sdb screen (see Figure 27).

Figure 27 Create a Primary Partition on /dev/sdb

e.

Using the XFS Format, choose the partition Size, name the Mount Point in YaST, and click OK.

f.

After successfully creating the partition, change the partition ownership to the nobody account and group with the chown nobody:nobody /media1 command.

Step 3

Reboot the VSM VM: log out and click Reboot.

Step 4

After the VM reboots, from the browser, open the Video Surveillance Management Console (VSMC) screen and configure the Media Server options to set the /media1 partition for storing archives and video clip data.
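Two results of the procedure above lend themselves to a quick scripted check: the gpt disk label from Step 1 and the nobody:nobody ownership set in Step 2. The helpers below are a minimal sketch with hypothetical names (is_gpt_label, owner_of). The first assumes that parted prints a "Disk label type" line in the format shown in Step 1; other parted versions may word this line differently.

```shell
# Hypothetical helpers for checking the results of the procedure above.

# Read `parted <device> print` output on stdin; succeed only if the
# "Disk label type" line reports gpt (the format shown in Step 1).
is_gpt_label() {
    grep -qi '^Disk label type:[[:space:]]*gpt[[:space:]]*$'
}

# Print the owner and group of a path as "user:group", taken from the
# third and fourth fields of `ls -ld`.
owner_of() {
    ls -ld "$1" | awk '{ print $3 ":" $4 }'
}
```

For example, parted /dev/sdb print | is_gpt_label && echo "gpt label found" confirms the label, and [ "$(owner_of /media1)" = "nobody:nobody" ] || echo "ownership not set" flags a mount point whose ownership was not changed. Here /dev/sdb and /media1 are the example names used in this section.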

Table 2 lists the available storage options.

Table 2 Storage Options

• SLES 10 SP1 32-bit allows for a maximum partition size of 16 TB.

• There can be multiple media partitions (media1 ....mediaN) based on the retention period for the video recordings of the cameras being hosted on the particular VM.

• The virtual disk sizes are limited to 2 TB. For more information, see http://blogs.vmware.com/vsphere/2011/07/new-vsphere-50-storage-features-part-1-vmfs-5.html.

• Multiple 2 TB virtual disks can be added to the VM, based on the retention period for the video recordings of the cameras being hosted on the particular VM.

Note

Finish all of the remaining VSM administration and configuration tasks. For the VSM Installation and Upgrade Guides, see http://www.cisco.com/en/US/docs/security/physical_security/video_surveillance/network/vsm/6_3/install_upgrade/vsm_install_upgrade.html.

Testing Network Connectivity

To verify that network connectivity is working properly between endpoints, including IP cameras and VSOM operator workstations:

Step 1

From a terminal window on the VSM VM, ping the gateway, cameras, and VSOM client PCs to ensure proper connectivity.

Step 2

Log on to the VSM server and ensure that all Cisco services are running and operational.

Step 3

Navigate to the VSMC page and from the Status Console, verify the status of the Cisco services.
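The ping check in Step 1 can be repeated for several endpoints with a short loop. The following is a minimal sketch: the function name check_hosts and the PROBE variable are hypothetical, and the addresses passed to it (gateway, cameras, VSOM client PCs) must come from your own deployment.

```shell
# Hypothetical helper: probe each address and report whether it answered.
# PROBE defaults to two ICMP echo requests; it can be overridden, for
# example when ping is not permitted in the environment.
check_hosts() {
    failed=0
    for host in "$@"; do
        if ${PROBE:-ping -c 2} "$host" >/dev/null 2>&1; then
            echo "OK   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    # Non-zero exit status if any address did not answer.
    return $failed
}
```

Call it with the addresses to test, for example check_hosts followed by the gateway address and each camera address. The function returns a non-zero status if any endpoint fails to respond, which makes it usable in a larger verification script.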

More Information

For more information about Cisco-related products, see the following resources:

Cisco Physical Security and Building Systems:

http://www.cisco.com/go/physec/

Cisco Video Surveillance Media Server Software Install and Upgrade Guides:

http://www.cisco.com/en/US/products/ps9152/prod_installation_guides_list.html

Cisco Unified Computing and Servers:

http://www.cisco.com/en/US/products/ps10265/index.html

Installing and Configuring VMware Tools:

http://www.vmware.com/pdf/vmware-tools-installation-configuration.pdf

VMware ESXi Configuration Guides:

http://www.vmware.com/support/pubs/vsphere-esxi-vcenter-server-pubs.html

Cisco UCS Site Preparation Guide

http://www.cisco.com/en/US/docs/unified_computing/ucs/hw/site_prep/guide/ucs_site_prep.html

Cisco UCS Manager GUI Configuration Guide

• Release 2.0

• Release 1.4

Cisco UCS 6100 Series Fabric Interconnect Hardware Installation Guide

http://www.cisco.com/en/US/docs/unified_computing/ucs/hw/switch/install/ucs6100_install.html

Cisco UCS 5108 Server Chassis Installation Guide

http://www.cisco.com/en/US/docs/unified_computing/ucs/hw/chassis/install/ucs5108_install.html

Cisco UCS C-Series Rack Servers Installation and Upgrade Guides

http://www.cisco.com/en/US/products/ps10493/prod_installation_guides_list.html

Cisco UCS Servers RAID Guide

http://www.cisco.com/en/US/docs/unified_computing/ucs/c/sw/raid/configuration/guide/RAID_GUIDE.html

Cisco UCS B-Series Blade Servers VMware Installation Guide

http://www.cisco.com/en/US/docs/unified_computing/ucs/sw/b/os/vmware/install/bseries-vmware-install.html

Cisco UCS Manager Configuration Guides

http://www.cisco.com/en/US/products/ps10281/products_installation_and_configuration_guides_list.html

For more information about configuring network switches for VLAN tagging in VMware, see http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1266.

For information about VLAN tagging, see http://pubs.vmware.com/vsphere-50/index.jsp?topic=%2Fcom.vmware.vsphere.networking.doc_50%2FGUID-7225A28C-DAAB-4E90-AE8C-795A755FBE27.html.

For more information about VMware vSphere documentation, see http://www.vmware.com/support/pubs/vsphere-esxi-vcenter-server-pubs.html.
